Compare commits

369 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

3492b07d9a | ||

|

|

d0b582048f | ||

|

|

a82eb7b01f | ||

|

|

cd08afcbeb | ||

|

|

331942a4ed | ||

|

|

4e387fa943 | ||

|

|

15484363d6 | ||

|

|

50b7b74480 | ||

|

|

adb53c63dd | ||

|

|

bdc3a32e96 | ||

|

|

65f716182b | ||

|

|

6ef72e2550 | ||

|

|

60f51ad7d5 | ||

|

|

a09dc2cbd8 | ||

|

|

825d07aa54 | ||

|

|

f46882c778 | ||

|

|

663fa08cc1 | ||

|

|

19e625d38e | ||

|

|

edcff9cd15 | ||

|

|

e0fc5ecb39 | ||

|

|

4ac6629969 | ||

|

|

68d8dad7c8 | ||

|

|

4ab9ceafc1 | ||

|

|

352ed898d4 | ||

|

|

e091d6a50d | ||

|

|

c651ef00c9 | ||

|

|

4b17788a77 | ||

|

|

db673dddd9 | ||

|

|

88ad457e87 | ||

|

|

126b68559e | ||

|

|

2cd3fe47e6 | ||

|

|

15eb7cce55 | ||

|

|

13f923aabf | ||

|

|

0ffb112063 | ||

|

|

b4ea6af110 | ||

|

|

611c8f7374 | ||

|

|

1cc73f37e7 | ||

|

|

ca37fc0eb5 | ||

|

|

5380624da9 | ||

|

|

aaece0bd44 | ||

|

|

de7cc17f5d | ||

|

|

66efa39d27 | ||

|

|

ff7c0a105d | ||

|

|

7b29253df4 | ||

|

|

7ef63b341e | ||

|

|

e7bfaa4f1a | ||

|

|

3a9a408941 | ||

|

|

3e43963daa | ||

|

|

69a6e260f5 | ||

|

|

664e7ad555 | ||

|

|

ee4a009a06 | ||

|

|

36dfd4dd35 | ||

|

|

dbf36082b2 | ||

|

|

3a1018cff6 | ||

|

|

fc10745a1a | ||

|

|

347cfd06de | ||

|

|

ec759ce467 | ||

|

|

f211e0fe31 | ||

|

|

c91a128b65 | ||

|

|

6a080f3032 | ||

|

|

b2c12c1131 | ||

|

|

b945b37089 | ||

|

|

9a5529a0aa | ||

|

|

025785389d | ||

|

|

48d9a0dede | ||

|

|

fbdf38e990 | ||

|

|

ef5bf70386 | ||

|

|

274c1469b4 | ||

|

|

960d506360 | ||

|

|

79a6421178 | ||

|

|

8b5c004860 | ||

|

|

f54768772e | ||

|

|

b9075dc6f9 | ||

|

|

107596ad54 | ||

|

|

3c6a2b1508 | ||

|

|

f996cba354 | ||

|

|

bdd864fbdd | ||

|

|

ca074ef13f | ||

|

|

ddd3a8251e | ||

|

|

f5b862dc1b | ||

|

|

d45d475f61 | ||

|

|

d0f72ea3fa | ||

|

|

5ed5d1e5b6 | ||

|

|

311b14026e | ||

|

|

67cd722b54 | ||

|

|

7f6247eb7b | ||

|

|

f3fd515521 | ||

|

|

9db5dd0d7f | ||

|

|

d07925d79d | ||

|

|

662d0f31ff | ||

|

|

2c5ad0bf8f | ||

|

|

0ea76b986a | ||

|

|

3c4253c336 | ||

|

|

77ba28e91c | ||

|

|

6399e7586c | ||

|

|

1caa62adc8 | ||

|

|

8fa558f124 | ||

|

|

191228633b | ||

|

|

8a981f935a | ||

|

|

a8ea9adbcc | ||

|

|

685d94c44b | ||

|

|

153ed1b044 | ||

|

|

7788f3a1ba | ||

|

|

cd99225f9b | ||

|

|

33ba3b8d4a | ||

|

|

d222dd1069 | ||

|

|

39fd3d46ba | ||

|

|

419c1804b6 | ||

|

|

ae0351ddad | ||

|

|

941be15762 | ||

|

|

578ebcf6ed | ||

|

|

27ab4b08f9 | ||

|

|

428b2208ba | ||

|

|

438c553d60 | ||

|

|

90cb293182 | ||

|

|

1f9f93ebe4 | ||

|

|

f5b97fbb74 | ||

|

|

ce79244126 | ||

|

|

3af5d767d8 | ||

|

|

3ce3efd2f2 | ||

|

|

8108edea31 | ||

|

|

5d80087ab3 | ||

|

|

6593be584d | ||

|

|

a0f63f858f | ||

|

|

49914f3bd5 | ||

|

|

71988a8b98 | ||

|

|

d65be6ef58 | ||

|

|

38c40d02e7 | ||

|

|

9e071b9d60 | ||

|

|

b4ae060122 | ||

|

|

436656e81b | ||

|

|

d7e111b7d4 | ||

|

|

4b6126dd1a | ||

|

|

89faa70196 | ||

|

|

6ed9d4a1db | ||

|

|

9d0e38c2e1 | ||

|

|

8b758fd616 | ||

|

|

14369d8be3 | ||

|

|

7b4153113e | ||

|

|

7d340c5e61 | ||

|

|

337c94376d | ||

|

|

0ef1d0b2f1 | ||

|

|

5cf67bd4e0 | ||

|

|

f22be17852 | ||

|

|

48e79d5dd4 | ||

|

|

59f5a0654a | ||

|

|

6da2e11683 | ||

|

|

802c087a4b | ||

|

|

ed2048e9f3 | ||

|

|

437b1d30c0 | ||

|

|

ba1788cbc5 | ||

|

|

773094a20d | ||

|

|

5aa39106a0 | ||

|

|

a9167801ba | ||

|

|

62f4a6cb96 | ||

|

|

ea2b41e96e | ||

|

|

d28ce650e9 | ||

|

|

1bfcdba499 | ||

|

|

e48faa9144 | ||

|

|

33fbe99561 | ||

|

|

989925b484 | ||

|

|

7dd66559e7 | ||

|

|

2ef1c5608e | ||

|

|

b5932e8905 | ||

|

|

37999d3250 | ||

|

|

83985ae482 | ||

|

|

3adfcc837e | ||

|

|

c720fee3ab | ||

|

|

881387e522 | ||

|

|

d9f3378e29 | ||

|

|

ba87620225 | ||

|

|

1cd0c49872 | ||

|

|

12ac96deeb | ||

|

|

17e6f35785 | ||

|

|

bd115633a3 | ||

|

|

86ea172380 | ||

|

|

d87bbbbc1e | ||

|

|

6196f69f4d | ||

|

|

be31bcf22f | ||

|

|

cba2135c69 | ||

|

|

2e52573499 | ||

|

|

b2ce1ed1fb | ||

|

|

77a485af74 | ||

|

|

d8b847a973 | ||

|

|

e80a3d3232 | ||

|

|

780ba82385 | ||

|

|

6ba69dce0a | ||

|

|

3c7a561db8 | ||

|

|

49c942bea0 | ||

|

|

bf1ca293dc | ||

|

|

62b906d30b | ||

|

|

65bf048189 | ||

|

|

a498ed8200 | ||

|

|

9f12bbcd98 | ||

|

|

fcd520787d | ||

|

|

e2417e4e40 | ||

|

|

70a2cbf1c6 | ||

|

|

fa0c6af6aa | ||

|

|

4f1abd0c8d | ||

|

|

41e839aa36 | ||

|

|

2fd1593ad2 | ||

|

|

27b601c5aa | ||

|

|

5fc69134e3 | ||

|

|

9adc0698bb | ||

|

|

119c2ff464 | ||

|

|

f3a4201c7d | ||

|

|

8b6aa73df0 | ||

|

|

1d4dfb0883 | ||

|

|

eab7f126a6 | ||

|

|

fe7547d83e | ||

|

|

7d0df82861 | ||

|

|

7f0cd27591 | ||

|

|

e094c2ae14 | ||

|

|

a5d438257f | ||

|

|

d8cb8f1064 | ||

|

|

a8d8bb2d6f | ||

|

|

a76ea5917c | ||

|

|

b0b6198ec8 | ||

|

|

eda97f35d2 | ||

|

|

2b6507d35a | ||

|

|

f7c4d5aa0b | ||

|

|

74f07cffa6 | ||

|

|

79c8ff0af8 | ||

|

|

ac544eea4b | ||

|

|

231a32331b | ||

|

|

104e8ef050 | ||

|

|

296015faff | ||

|

|

9a9964c968 | ||

|

|

0d05d86e32 | ||

|

|

9680ca98f2 | ||

|

|

42b850ca52 | ||

|

|

3f5c22d863 | ||

|

|

535a92e871 | ||

|

|

3411a6a981 | ||

|

|

b5adee271c | ||

|

|

e2abcd1323 | ||

|

|

25fbe7ecb6 | ||

|

|

6befee79c2 | ||

|

|

f09c5a60f1 | ||

|

|

52e89ff509 | ||

|

|

35e20406ef | ||

|

|

c6e96ff1bb | ||

|

|

793ab524b0 | ||

|

|

5a479d0187 | ||

|

|

a23e4f1d2a | ||

|

|

bd35a3f61c | ||

|

|

197e987d5f | ||

|

|

7f29beb639 | ||

|

|

1140af8dc7 | ||

|

|

a2688c3910 | ||

|

|

75b27ab3f3 | ||

|

|

59d3f55fb2 | ||

|

|

f34739f334 | ||

|

|

90c71ec18f | ||

|

|

395234d7c8 | ||

|

|

e322ba0065 | ||

|

|

6db8b96f72 | ||

|

|

44d7e96e96 | ||

|

|

1662479c8d | ||

|

|

2e351fcf0d | ||

|

|

5d81876d07 | ||

|

|

c81e6989ec | ||

|

|

4d61a896c3 | ||

|

|

d148933ab3 | ||

|

|

04a56a3591 | ||

|

|

4a354e74d4 | ||

|

|

1e3e6427d5 | ||

|

|

38826108c8 | ||

|

|

4c4752f907 | ||

|

|

94dcd6c94d | ||

|

|

eabef3db30 | ||

|

|

6750f10ffa | ||

|

|

56cb888cbf | ||

|

|

b3e7fb3417 | ||

|

|

2c6e1baca2 | ||

|

|

c8358929d1 | ||

|

|

1dc7677dfb | ||

|

|

8e699a7543 | ||

|

|

cbbabdfac0 | ||

|

|

9d92de234c | ||

|

|

ba65975fb5 | ||

|

|

ef423b2078 | ||

|

|

f451b4e36c | ||

|

|

0856e13ee6 | ||

|

|

87b9fa8ca7 | ||

|

|

5b43d3d314 | ||

|

|

ac4972dd8d | ||

|

|

8a8f68af5d | ||

|

|

c669dc0c4b | ||

|

|

863a5466cc | ||

|

|

e2347c84e3 | ||

|

|

e0e673f565 | ||

|

|

30cbf2a741 | ||

|

|

f58de3801c | ||

|

|

7c6b88d4c1 | ||

|

|

0c0ebaecd5 | ||

|

|

1925f99118 | ||

|

|

6f2a22a1cc | ||

|

|

ee04082cd7 | ||

|

|

efd901ac3a | ||

|

|

e565789ae8 | ||

|

|

d3953004f6 | ||

|

|

df1d9e3011 | ||

|

|

631c55fa6e | ||

|

|

29cdd43288 | ||

|

|

9b79af9fcd | ||

|

|

2c9c1adb47 | ||

|

|

5dfb5808c4 | ||

|

|

bb0175aebf | ||

|

|

adaf4c99c0 | ||

|

|

bed6ed09d5 | ||

|

|

4ff67a85ce | ||

|

|

702f4fcd14 | ||

|

|

8a03ae153d | ||

|

|

434c6149ab | ||

|

|

97fc4a90ae | ||

|

|

217ef06930 | ||

|

|

71057946e6 | ||

|

|

a74ad52c72 | ||

|

|

12d26874f8 | ||

|

|

27de9ce151 | ||

|

|

9e7cd5a8c5 | ||

|

|

38cb487b64 | ||

|

|

05ca266c5e | ||

|

|

5cc26de645 | ||

|

|

2b9a195fa3 | ||

|

|

4454749eec | ||

|

|

b435a03fab | ||

|

|

7c166e2b40 | ||

|

|

f7a7963dcf | ||

|

|

9c77c0d69c | ||

|

|

e8a9555346 | ||

|

|

59751dd007 | ||

|

|

9c4d4d16b6 | ||

|

|

0e3d1b3e8f | ||

|

|

f119b78940 | ||

|

|

456d914c35 | ||

|

|

737507b0fe | ||

|

|

4bcf82d295 | ||

|

|

e9cd7afc8a | ||

|

|

0830abd51d | ||

|

|

5b296e01b3 | ||

|

|

3fd039afd1 | ||

|

|

5904348ba5 | ||

|

|

1a98e93723 | ||

|

|

c9685fbd13 | ||

|

|

dc347e273d | ||

|

|

8170916897 | ||

|

|

71cd4e0cb7 | ||

|

|

0109788ccc | ||

|

|

1649dea468 | ||

|

|

b8a7ea8534 | ||

|

|

afe4d59d5a | ||

|

|

0f2697df23 | ||

|

|

05664fa648 | ||

|

|

3b2564f34b | ||

|

|

dd0cf2d588 | ||

|

|

7c66f23c6a | ||

|

|

a9f034de1a | ||

|

|

6ad2dca57a | ||

|

|

e8353c110b | ||

|

|

dbf26ddf53 | ||

|

|

acc72d207f | ||

|

|

a784f83464 | ||

|

|

07d8355363 | ||

|

|

f7a439274e | ||

|

|

bd6d446cb8 | ||

|

|

385d0e0549 | ||

|

|

02236374d8 |

22

.circleci/config.yml

Normal file

@@ -0,0 +1,22 @@

version: 2.1
jobs:
  e2e-testing:
    machine: true
    steps:
      - checkout
      - run: test/e2e-kind.sh
      - run: test/e2e-istio.sh
      - run: test/e2e-build.sh
      - run: test/e2e-tests.sh

workflows:
  version: 2
  build-and-test:
    jobs:
      - e2e-testing:
          filters:
            branches:
              ignore:
                - /gh-pages.*/
                - /docs-.*/
                - /release-.*/

|

||||

@@ -6,3 +6,6 @@ coverage:

|

||||

threshold: 50

|

||||

base: auto

|

||||

patch: off

|

||||

|

||||

comment:

|

||||

require_changes: yes

|

||||

1

.github/CODEOWNERS

vendored

Normal file

@@ -0,0 +1 @@

|

||||

* @stefanprodan

|

||||

17

.github/main.workflow

vendored

Normal file

@@ -0,0 +1,17 @@

workflow "Publish Helm charts" {
  on = "push"
  resolves = ["helm-push"]
}

action "helm-lint" {
  uses = "stefanprodan/gh-actions/helm@master"
  args = ["lint charts/*"]
}

action "helm-push" {
  needs = ["helm-lint"]
  uses = "stefanprodan/gh-actions/helm-gh-pages@master"
  args = ["charts/*","https://flagger.app"]
  secrets = ["GITHUB_TOKEN"]
}

4

.gitignore

vendored

@@ -11,3 +11,7 @@

|

||||

# Output of the go coverage tool, specifically when used with LiteIDE

|

||||

*.out

|

||||

.DS_Store

|

||||

|

||||

bin/

|

||||

artifacts/gcloud/

|

||||

.idea

|

||||

@@ -1,7 +1,7 @@

|

||||

builds:

|

||||

- main: ./cmd/flagger

|

||||

binary: flagger

|

||||

ldflags: -s -w -X github.com/stefanprodan/flagger/pkg/version.REVISION={{.Commit}}

|

||||

ldflags: -s -w -X github.com/weaveworks/flagger/pkg/version.REVISION={{.Commit}}

|

||||

goos:

|

||||

- linux

|

||||

goarch:

|

||||

|

||||

29

.travis.yml

@@ -1,8 +1,13 @@

|

||||

sudo: required

|

||||

language: go

|

||||

|

||||

branches:

|

||||

except:

|

||||

- /gh-pages.*/

|

||||

- /docs-.*/

|

||||

|

||||

go:

|

||||

- 1.11.x

|

||||

- 1.12.x

|

||||

|

||||

services:

|

||||

- docker

|

||||

@@ -13,26 +18,26 @@ addons:

|

||||

- docker-ce

|

||||

|

||||

script:

|

||||

- set -e

|

||||

- make test-fmt

|

||||

- make test-codegen

|

||||

- go test -race -coverprofile=coverage.txt -covermode=atomic ./pkg/controller/

|

||||

- make build

|

||||

- make test-fmt

|

||||

- make test-codegen

|

||||

- go test -race -coverprofile=coverage.txt -covermode=atomic $(go list ./pkg/...)

|

||||

- make build

|

||||

|

||||

after_success:

|

||||

- if [ -z "$DOCKER_USER" ]; then

|

||||

echo "PR build, skipping image push";

|

||||

else

|

||||

docker tag stefanprodan/flagger:latest quay.io/stefanprodan/flagger:${TRAVIS_COMMIT};

|

||||

echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin quay.io;

|

||||

docker push quay.io/stefanprodan/flagger:${TRAVIS_COMMIT};

|

||||

BRANCH_COMMIT=${TRAVIS_BRANCH}-$(echo ${TRAVIS_COMMIT} | head -c7);

|

||||

docker tag weaveworks/flagger:latest weaveworks/flagger:${BRANCH_COMMIT};

|

||||

echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin;

|

||||

docker push weaveworks/flagger:${BRANCH_COMMIT};

|

||||

fi

|

||||

- if [ -z "$TRAVIS_TAG" ]; then

|

||||

echo "Not a release, skipping image push";

|

||||

else

|

||||

docker tag stefanprodan/flagger:latest quay.io/stefanprodan/flagger:${TRAVIS_TAG};

|

||||

echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin quay.io;

|

||||

docker push quay.io/stefanprodan/flagger:$TRAVIS_TAG;

|

||||

docker tag weaveworks/flagger:latest weaveworks/flagger:${TRAVIS_TAG};

|

||||

echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin;

|

||||

docker push weaveworks/flagger:$TRAVIS_TAG;

|

||||

fi

|

||||

- bash <(curl -s https://codecov.io/bash)

|

||||

- rm coverage.txt

|

||||

|

||||

229

CHANGELOG.md

Normal file

@@ -0,0 +1,229 @@

|

||||

# Changelog

|

||||

|

||||

All notable changes to this project are documented in this file.

|

||||

|

||||

## 0.11.0 (2019-04-17)

|

||||

|

||||

Adds pre/post rollout [webhooks](https://docs.flagger.app/how-it-works#webhooks)

|

||||

|

||||

#### Features

|

||||

|

||||

- Add `pre-rollout` and `post-rollout` webhook types [#147](https://github.com/weaveworks/flagger/pull/147)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Unify App Mesh and Istio builtin metric checks [#146](https://github.com/weaveworks/flagger/pull/146)

|

||||

- Make the pod selector label configurable [#148](https://github.com/weaveworks/flagger/pull/148)

|

||||

|

||||

#### Breaking changes

|

||||

|

||||

- Set default `mesh` Istio gateway only if no gateway is specified [#141](https://github.com/weaveworks/flagger/pull/141)

|

||||

|

||||

## 0.10.0 (2019-03-27)

|

||||

|

||||

Adds support for App Mesh

|

||||

|

||||

#### Features

|

||||

|

||||

- AWS App Mesh integration

|

||||

[#107](https://github.com/weaveworks/flagger/pull/107)

|

||||

[#123](https://github.com/weaveworks/flagger/pull/123)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Reconcile Kubernetes ClusterIP services [#122](https://github.com/weaveworks/flagger/pull/122)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Preserve pod labels on canary promotion [#105](https://github.com/weaveworks/flagger/pull/105)

|

||||

- Fix canary status Prometheus metric [#121](https://github.com/weaveworks/flagger/pull/121)

|

||||

|

||||

## 0.9.0 (2019-03-11)

|

||||

|

||||

Allows A/B testing scenarios where instead of weighted routing, the traffic is split between the

|

||||

primary and canary based on HTTP headers or cookies.

|

||||

|

||||

#### Features

|

||||

|

||||

- A/B testing - canary with session affinity [#88](https://github.com/weaveworks/flagger/pull/88)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Update the analysis interval when the custom resource changes [#91](https://github.com/weaveworks/flagger/pull/91)

|

||||

|

||||

## 0.8.0 (2019-03-06)

|

||||

|

||||

Adds support for CORS policy and HTTP request headers manipulation

|

||||

|

||||

#### Features

|

||||

|

||||

- CORS policy support [#83](https://github.com/weaveworks/flagger/pull/83)

|

||||

- Allow headers to be appended to HTTP requests [#82](https://github.com/weaveworks/flagger/pull/82)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Refactor the routing management

|

||||

[#72](https://github.com/weaveworks/flagger/pull/72)

|

||||

[#80](https://github.com/weaveworks/flagger/pull/80)

|

||||

- Fine-grained RBAC [#73](https://github.com/weaveworks/flagger/pull/73)

|

||||

- Add option to limit Flagger to a single namespace [#78](https://github.com/weaveworks/flagger/pull/78)

|

||||

|

||||

## 0.7.0 (2019-02-28)

|

||||

|

||||

Adds support for custom metric checks, HTTP timeouts and HTTP retries

|

||||

|

||||

#### Features

|

||||

|

||||

- Allow custom promql queries in the canary analysis spec [#60](https://github.com/weaveworks/flagger/pull/60)

|

||||

- Add HTTP timeout and retries to canary service spec [#62](https://github.com/weaveworks/flagger/pull/62)

|

||||

|

||||

## 0.6.0 (2019-02-25)

|

||||

|

||||

Allows for [HTTPMatchRequests](https://istio.io/docs/reference/config/istio.networking.v1alpha3/#HTTPMatchRequest)

|

||||

and [HTTPRewrite](https://istio.io/docs/reference/config/istio.networking.v1alpha3/#HTTPRewrite)

|

||||

to be customized in the service spec of the canary custom resource.

|

||||

|

||||

#### Features

|

||||

|

||||

- Add HTTP match conditions and URI rewrite to the canary service spec [#55](https://github.com/weaveworks/flagger/pull/55)

|

||||

- Update virtual service when the canary service spec changes

|

||||

[#54](https://github.com/weaveworks/flagger/pull/54)

|

||||

[#51](https://github.com/weaveworks/flagger/pull/51)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Run e2e testing on [Kubernetes Kind](https://github.com/kubernetes-sigs/kind) for canary promotion

|

||||

[#53](https://github.com/weaveworks/flagger/pull/53)

|

||||

|

||||

## 0.5.1 (2019-02-14)

|

||||

|

||||

Allows skipping the analysis phase to ship changes directly to production

|

||||

|

||||

#### Features

|

||||

|

||||

- Add option to skip the canary analysis [#46](https://github.com/weaveworks/flagger/pull/46)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Reject deployment if the pod label selector doesn't match `app: <DEPLOYMENT_NAME>` [#43](https://github.com/weaveworks/flagger/pull/43)

|

||||

|

||||

## 0.5.0 (2019-01-30)

|

||||

|

||||

Track changes in ConfigMaps and Secrets [#37](https://github.com/weaveworks/flagger/pull/37)

|

||||

|

||||

#### Features

|

||||

|

||||

- Promote configmaps and secrets changes from canary to primary

|

||||

- Detect changes in configmaps and/or secrets and (re)start canary analysis

|

||||

- Add configs checksum to Canary CRD status

|

||||

- Create primary configmaps and secrets at bootstrap

|

||||

- Scan canary volumes and containers for configmaps and secrets

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Copy deployment labels from canary to primary at bootstrap and promotion

|

||||

|

||||

## 0.4.1 (2019-01-24)

|

||||

|

||||

Load testing webhook [#35](https://github.com/weaveworks/flagger/pull/35)

|

||||

|

||||

#### Features

|

||||

|

||||

- Add the load tester chart to Flagger Helm repository

|

||||

- Implement a load test runner based on [rakyll/hey](https://github.com/rakyll/hey)

|

||||

- Log warning when no values are found for Istio metric due to lack of traffic

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Run wekbooks before the metrics checks to avoid failures when using a load tester

|

||||

|

||||

## 0.4.0 (2019-01-18)

|

||||

|

||||

Restart canary analysis if revision changes [#31](https://github.com/weaveworks/flagger/pull/31)

|

||||

|

||||

#### Breaking changes

|

||||

|

||||

- Drop support for Kubernetes 1.10

|

||||

|

||||

#### Features

|

||||

|

||||

- Detect changes during canary analysis and reset advancement

|

||||

- Add status and additional printer columns to CRD

|

||||

- Add canary name and namespace to controller structured logs

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Allow canary name to be different to the target name

|

||||

- Check if multiple canaries have the same target and log error

|

||||

- Use deep copy when updating Kubernetes objects

|

||||

- Skip readiness checks if canary analysis has finished

|

||||

|

||||

## 0.3.0 (2019-01-11)

|

||||

|

||||

Configurable canary analysis duration [#20](https://github.com/weaveworks/flagger/pull/20)

|

||||

|

||||

#### Breaking changes

|

||||

|

||||

- Helm chart: flag `controlLoopInterval` has been removed

|

||||

|

||||

#### Features

|

||||

|

||||

- CRD: canaries.flagger.app v1alpha3

|

||||

- Schedule canary analysis independently based on `canaryAnalysis.interval`

|

||||

- Add analysis interval to Canary CRD (defaults to one minute)

|

||||

- Make autoscaler (HPA) reference optional

|

||||

|

||||

## 0.2.0 (2019-01-04)

|

||||

|

||||

Webhooks [#18](https://github.com/weaveworks/flagger/pull/18)

|

||||

|

||||

#### Features

|

||||

|

||||

- CRD: canaries.flagger.app v1alpha2

|

||||

- Implement canary external checks based on webhooks HTTP POST calls

|

||||

- Add webhooks to Canary CRD

|

||||

- Move docs to gitbook [docs.flagger.app](https://docs.flagger.app)

|

||||

|

||||

## 0.1.2 (2018-12-06)

|

||||

|

||||

Improve Slack notifications [#14](https://github.com/weaveworks/flagger/pull/14)

|

||||

|

||||

#### Features

|

||||

|

||||

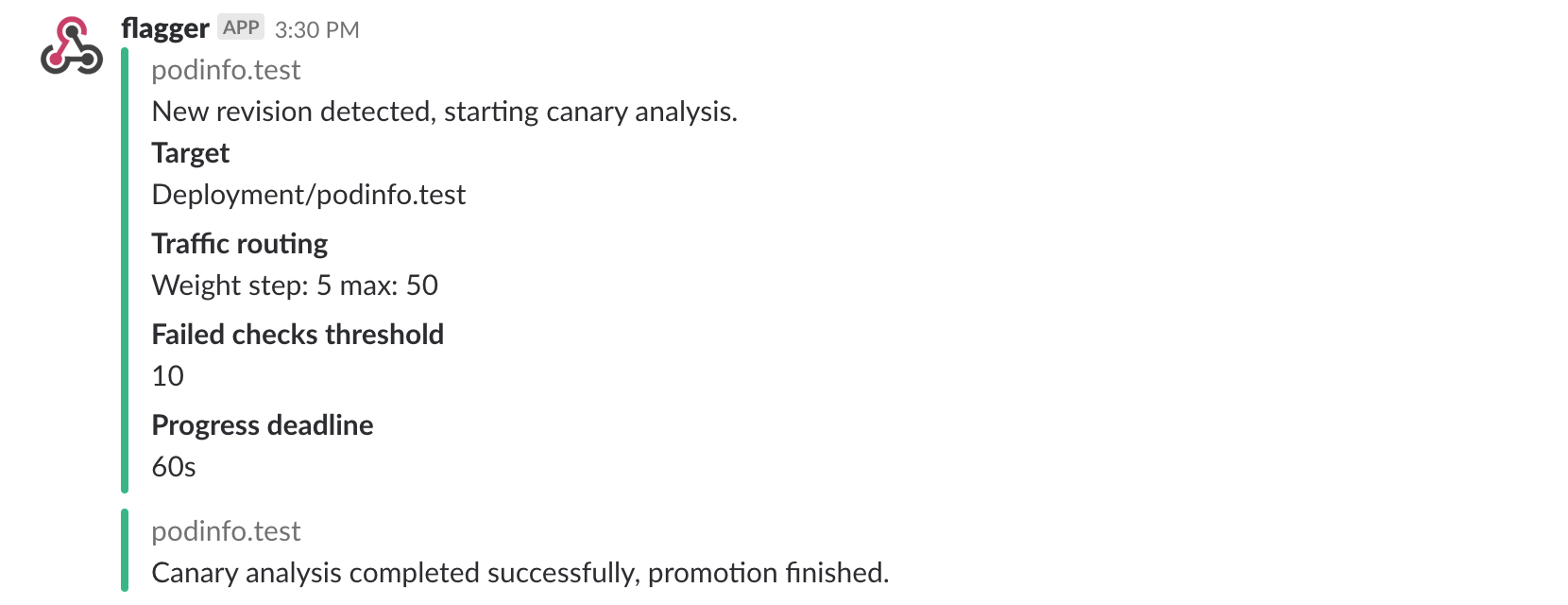

- Add canary analysis metadata to init and start Slack messages

|

||||

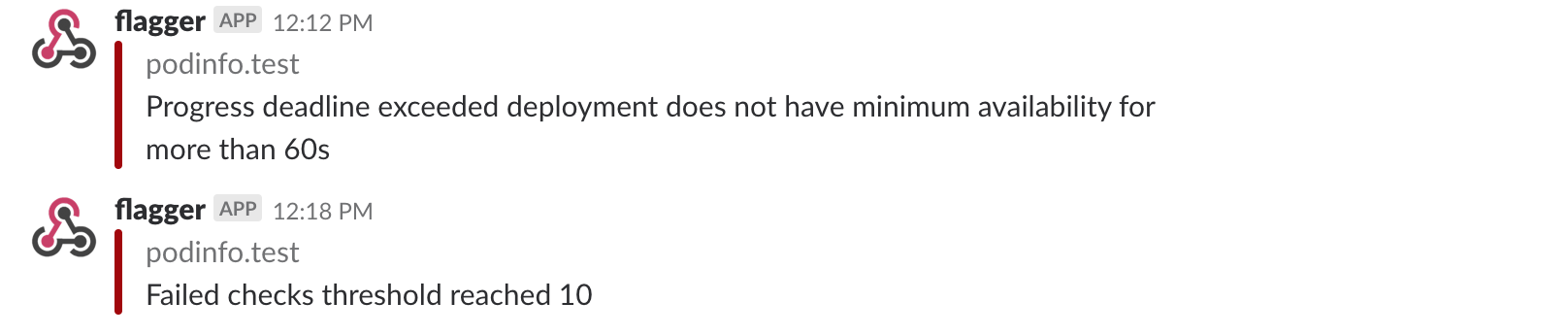

- Add rollback reason to failed canary Slack messages

|

||||

|

||||

## 0.1.1 (2018-11-28)

|

||||

|

||||

Canary progress deadline [#10](https://github.com/weaveworks/flagger/pull/10)

|

||||

|

||||

#### Features

|

||||

|

||||

- Rollback canary based on the deployment progress deadline check

|

||||

- Add progress deadline to Canary CRD (defaults to 10 minutes)

|

||||

|

||||

## 0.1.0 (2018-11-25)

|

||||

|

||||

First stable release

|

||||

|

||||

#### Features

|

||||

|

||||

- CRD: canaries.flagger.app v1alpha1

|

||||

- Notifications: post canary events to Slack

|

||||

- Instrumentation: expose Prometheus metrics for canary status and traffic weight percentage

|

||||

- Autoscaling: add HPA reference to CRD and create primary HPA at bootstrap

|

||||

- Bootstrap: create primary deployment, ClusterIP services and Istio virtual service based on CRD spec

|

||||

|

||||

|

||||

## 0.0.1 (2018-10-07)

|

||||

|

||||

Initial semver release

|

||||

|

||||

#### Features

|

||||

|

||||

- Implement canary rollback based on failed checks threshold

|

||||

- Scale up the deployment when canary revision changes

|

||||

- Add OpenAPI v3 schema validation to Canary CRD

|

||||

- Use CRD status for canary state persistence

|

||||

- Add Helm charts for Flagger and Grafana

|

||||

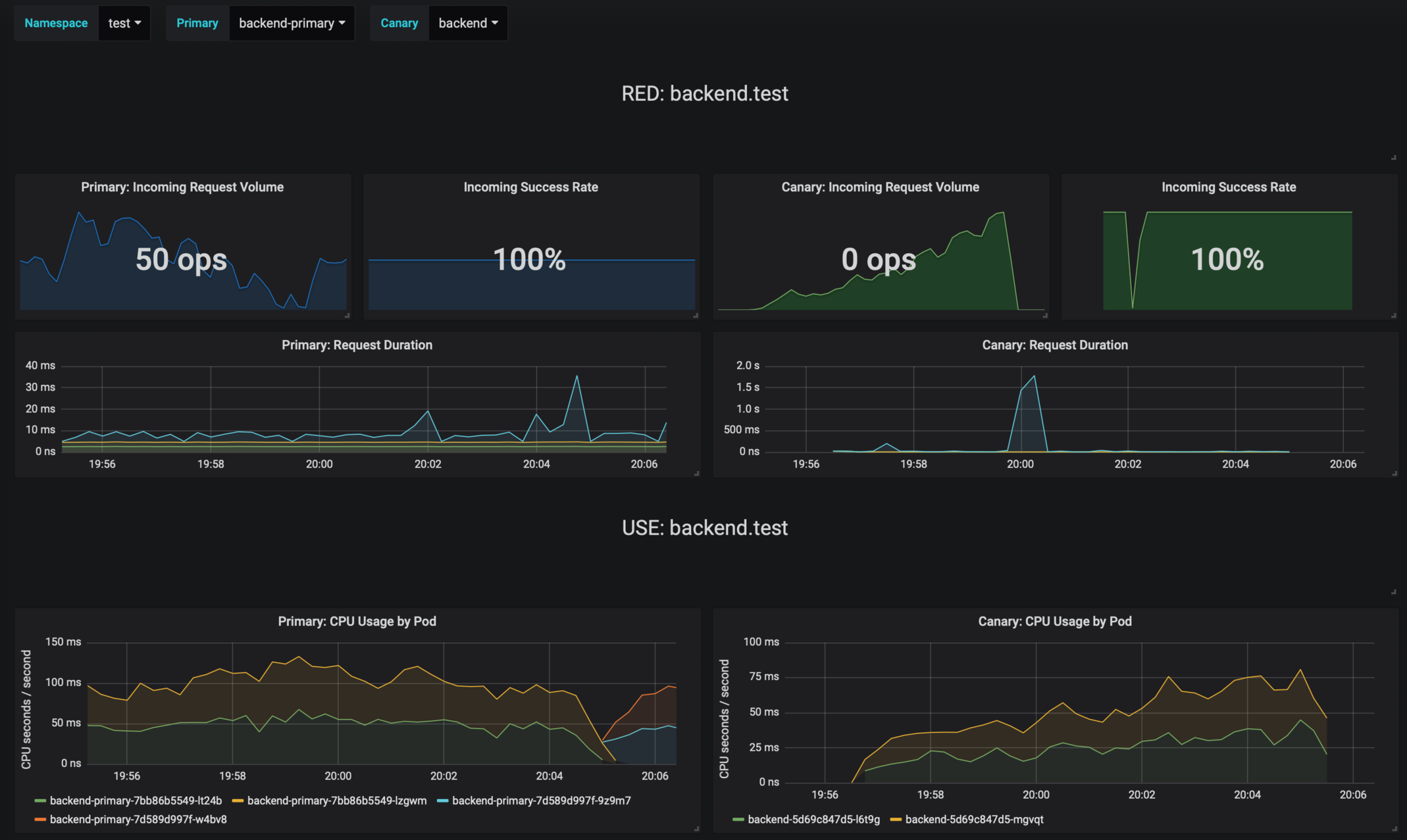

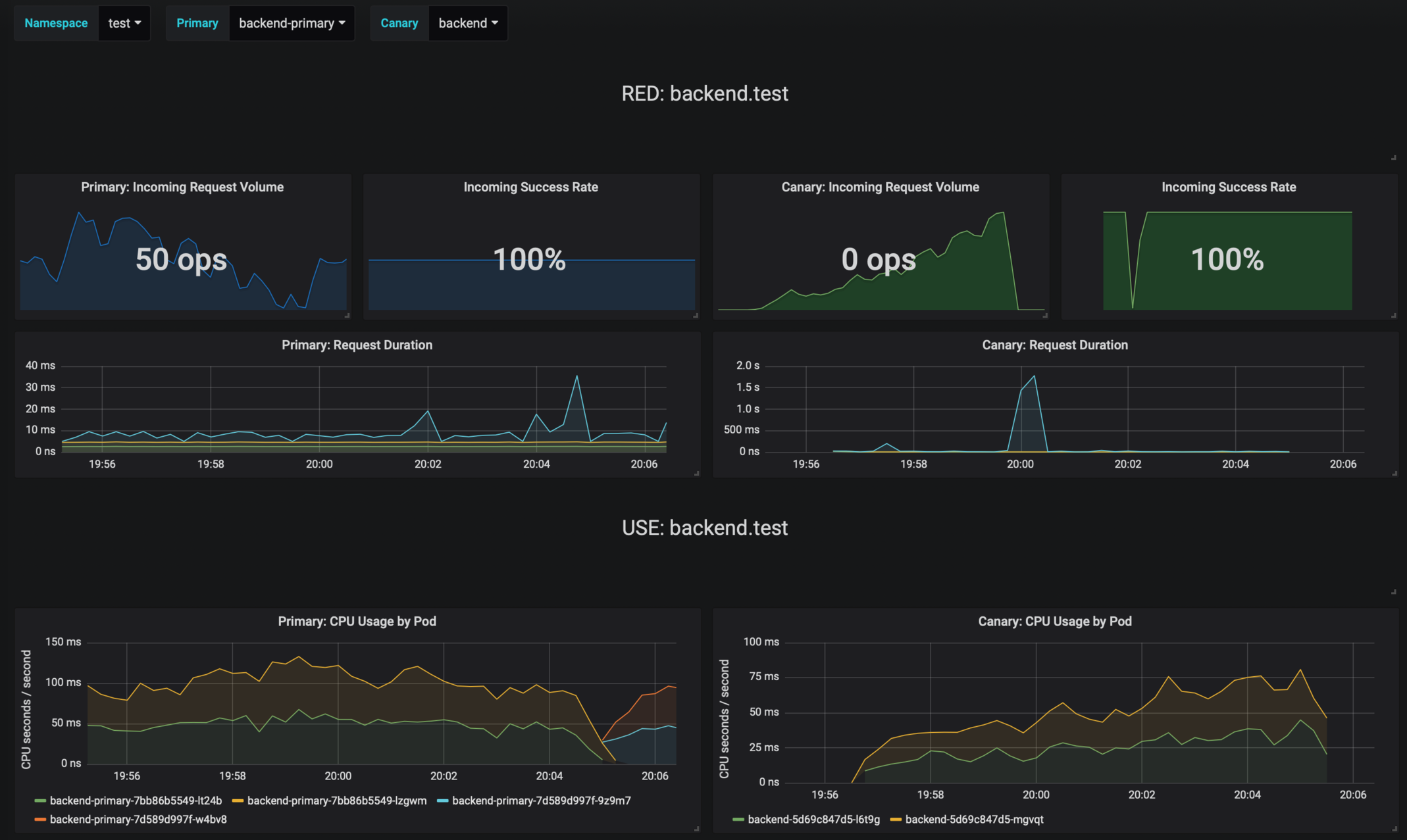

- Add canary analysis Grafana dashboard

|

||||

12

Dockerfile

@@ -1,17 +1,17 @@

|

||||

FROM golang:1.11

|

||||

FROM golang:1.12

|

||||

|

||||

RUN mkdir -p /go/src/github.com/stefanprodan/flagger/

|

||||

RUN mkdir -p /go/src/github.com/weaveworks/flagger/

|

||||

|

||||

WORKDIR /go/src/github.com/stefanprodan/flagger

|

||||

WORKDIR /go/src/github.com/weaveworks/flagger

|

||||

|

||||

COPY . .

|

||||

|

||||

RUN GIT_COMMIT=$(git rev-list -1 HEAD) && \

|

||||

CGO_ENABLED=0 GOOS=linux go build -ldflags "-s -w \

|

||||

-X github.com/stefanprodan/flagger/pkg/version.REVISION=${GIT_COMMIT}" \

|

||||

-X github.com/weaveworks/flagger/pkg/version.REVISION=${GIT_COMMIT}" \

|

||||

-a -installsuffix cgo -o flagger ./cmd/flagger/*

|

||||

|

||||

FROM alpine:3.8

|

||||

FROM alpine:3.9

|

||||

|

||||

RUN addgroup -S flagger \

|

||||

&& adduser -S -g flagger flagger \

|

||||

@@ -19,7 +19,7 @@ RUN addgroup -S flagger \

|

||||

|

||||

WORKDIR /home/flagger

|

||||

|

||||

COPY --from=0 /go/src/github.com/stefanprodan/flagger/flagger .

|

||||

COPY --from=0 /go/src/github.com/weaveworks/flagger/flagger .

|

||||

|

||||

RUN chown -R flagger:flagger ./

|

||||

|

||||

|

||||

30

Dockerfile.loadtester

Normal file

@@ -0,0 +1,30 @@

FROM golang:1.12 AS builder

RUN mkdir -p /go/src/github.com/weaveworks/flagger/

WORKDIR /go/src/github.com/weaveworks/flagger

COPY . .

RUN go test -race ./pkg/loadtester/

RUN CGO_ENABLED=0 GOOS=linux go build -a -installsuffix cgo -o loadtester ./cmd/loadtester/*

FROM bats/bats:v1.1.0

RUN addgroup -S app \
    && adduser -S -g app app \
    && apk --no-cache add ca-certificates curl jq

WORKDIR /home/app

RUN curl -sSLo hey "https://storage.googleapis.com/jblabs/dist/hey_linux_v0.1.2" && \
    chmod +x hey && mv hey /usr/local/bin/hey

COPY --from=builder /go/src/github.com/weaveworks/flagger/loadtester .

RUN chown -R app:app ./

USER app

ENTRYPOINT ["./loadtester"]

169

Gopkg.lock

generated

@@ -2,12 +2,12 @@

|

||||

|

||||

|

||||

[[projects]]

|

||||

digest = "1:5c3894b2aa4d6bead0ceeea6831b305d62879c871780e7b76296ded1b004bc57"

|

||||

digest = "1:4d6f036ea3fe636bcb2e89850bcdc62a771354e157cd51b8b22a2de8562bf663"

|

||||

name = "cloud.google.com/go"

|

||||

packages = ["compute/metadata"]

|

||||

pruneopts = "NUT"

|

||||

revision = "97efc2c9ffd9fe8ef47f7f3203dc60bbca547374"

|

||||

version = "v0.28.0"

|

||||

revision = "c9474f2f8deb81759839474b6bd1726bbfe1c1c4"

|

||||

version = "v0.36.0"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

@@ -34,15 +34,15 @@

|

||||

version = "v1.0.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:8679b8a64f3613e9749c5640c3535c83399b8e69f67ce54d91dc73f6d77373af"

|

||||

digest = "1:a1b2a5e38f79688ee8250942d5fa960525fceb1024c855c7bc76fa77b0f3cca2"

|

||||

name = "github.com/gogo/protobuf"

|

||||

packages = [

|

||||

"proto",

|

||||

"sortkeys",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "636bf0302bc95575d69441b25a2603156ffdddf1"

|

||||

version = "v1.1.1"

|

||||

revision = "ba06b47c162d49f2af050fb4c75bcbc86a159d5c"

|

||||

version = "v1.2.1"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

@@ -55,14 +55,14 @@

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:3fb07f8e222402962fa190eb060608b34eddfb64562a18e2167df2de0ece85d8"

|

||||

digest = "1:b7cb6054d3dff43b38ad2e92492f220f57ae6087ee797dca298139776749ace8"

|

||||

name = "github.com/golang/groupcache"

|

||||

packages = ["lru"]

|

||||

pruneopts = "NUT"

|

||||

revision = "24b0969c4cb722950103eed87108c8d291a8df00"

|

||||

revision = "5b532d6fd5efaf7fa130d4e859a2fde0fc3a9e1b"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:63ccdfbd20f7ccd2399d0647a7d100b122f79c13bb83da9660b1598396fd9f62"

|

||||

digest = "1:2d0636a8c490d2272dd725db26f74a537111b99b9dbdda0d8b98febe63702aa4"

|

||||

name = "github.com/golang/protobuf"

|

||||

packages = [

|

||||

"proto",

|

||||

@@ -72,8 +72,8 @@

|

||||

"ptypes/timestamp",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "aa810b61a9c79d51363740d207bb46cf8e620ed5"

|

||||

version = "v1.2.0"

|

||||

revision = "c823c79ea1570fb5ff454033735a8e68575d1d0f"

|

||||

version = "v1.3.0"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

@@ -119,33 +119,41 @@

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:7fdf3223c7372d1ced0b98bf53457c5e89d89aecbad9a77ba9fcc6e01f9e5621"

|

||||

digest = "1:a86d65bc23eea505cd9139178e4d889733928fe165c7a008f41eaab039edf9df"

|

||||

name = "github.com/gregjones/httpcache"

|

||||

packages = [

|

||||

".",

|

||||

"diskcache",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "9cad4c3443a7200dd6400aef47183728de563a38"

|

||||

revision = "3befbb6ad0cc97d4c25d851e9528915809e1a22f"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:b42cde0e1f3c816dd57f57f7bbcf05ca40263ad96f168714c130c611fc0856a6"

|

||||

digest = "1:7313d6b9095eb86581402557bbf3871620cf82adf41853c5b9bee04b894290c7"

|

||||

name = "github.com/h2non/parth"

|

||||

packages = ["."]

|

||||

pruneopts = "NUT"

|

||||

revision = "b4df798d65426f8c8ab5ca5f9987aec5575d26c9"

|

||||

version = "v2.0.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:52094d0f8bdf831d1a2401e9b6fee5795fdc0b2a2d1f8bb1980834c289e79129"

|

||||

name = "github.com/hashicorp/golang-lru"

|

||||

packages = [

|

||||

".",

|

||||

"simplelru",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "20f1fb78b0740ba8c3cb143a61e86ba5c8669768"

|

||||

version = "v0.5.0"

|

||||

revision = "7087cb70de9f7a8bc0a10c375cb0d2280a8edf9c"

|

||||

version = "v0.5.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:9a52adf44086cead3b384e5d0dbf7a1c1cce65e67552ee3383a8561c42a18cd3"

|

||||

digest = "1:aaa38889f11896ee3644d77e17dc7764cc47f5f3d3b488268df2af2b52541c5f"

|

||||

name = "github.com/imdario/mergo"

|

||||

packages = ["."]

|

||||

pruneopts = "NUT"

|

||||

revision = "9f23e2d6bd2a77f959b2bf6acdbefd708a83a4a4"

|

||||

version = "v0.3.6"

|

||||

revision = "7c29201646fa3de8506f701213473dd407f19646"

|

||||

version = "v0.3.7"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

@@ -162,32 +170,6 @@

|

||||

pruneopts = "NUT"

|

||||

revision = "f2b4162afba35581b6d4a50d3b8f34e33c144682"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:03a74b0d86021c8269b52b7c908eb9bb3852ff590b363dad0a807cf58cec2f89"

|

||||

name = "github.com/knative/pkg"

|

||||

packages = [

|

||||

"apis",

|

||||

"apis/duck",

|

||||

"apis/duck/v1alpha1",

|

||||

"apis/istio",

|

||||

"apis/istio/authentication",

|

||||

"apis/istio/authentication/v1alpha1",

|

||||

"apis/istio/common/v1alpha1",

|

||||

"apis/istio/v1alpha3",

|

||||

"client/clientset/versioned",

|

||||

"client/clientset/versioned/fake",

|

||||

"client/clientset/versioned/scheme",

|

||||

"client/clientset/versioned/typed/authentication/v1alpha1",

|

||||

"client/clientset/versioned/typed/authentication/v1alpha1/fake",

|

||||

"client/clientset/versioned/typed/duck/v1alpha1",

|

||||

"client/clientset/versioned/typed/duck/v1alpha1/fake",

|

||||

"client/clientset/versioned/typed/istio/v1alpha3",

|

||||

"client/clientset/versioned/typed/istio/v1alpha3/fake",

|

||||

"signals",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "c15d7c8f2220a7578b33504df6edefa948c845ae"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:5985ef4caf91ece5d54817c11ea25f182697534f8ae6521eadcd628c142ac4b6"

|

||||

name = "github.com/matttproud/golang_protobuf_extensions"

|

||||

@@ -245,11 +227,10 @@

|

||||

name = "github.com/prometheus/client_model"

|

||||

packages = ["go"]

|

||||

pruneopts = "NUT"

|

||||

revision = "5c3871d89910bfb32f5fcab2aa4b9ec68e65a99f"

|

||||

revision = "fd36f4220a901265f90734c3183c5f0c91daa0b8"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:fad5a35eea6a1a33d6c8f949fbc146f24275ca809ece854248187683f52cc30b"

|

||||

digest = "1:4e776079b966091d3e6e12ed2aaf728bea5cd1175ef88bb654e03adbf5d4f5d3"

|

||||

name = "github.com/prometheus/common"

|

||||

packages = [

|

||||

"expfmt",

|

||||

@@ -257,28 +238,30 @@

|

||||

"model",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "c7de2306084e37d54b8be01f3541a8464345e9a5"

|

||||

revision = "cfeb6f9992ffa54aaa4f2170ade4067ee478b250"

|

||||

version = "v0.2.0"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:26a2f5e891cc4d2321f18a0caa84c8e788663c17bed6a487f3cbe2c4295292d0"

|

||||

digest = "1:0a2e604afa3cbf53a1ddade2f240ee8472eded98856dd8c7cfbfea392ddbbfc7"

|

||||

name = "github.com/prometheus/procfs"

|

||||

packages = [

|

||||

".",

|

||||

"internal/util",

|

||||

"iostats",

|

||||

"nfs",

|

||||

"xfs",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "418d78d0b9a7b7de3a6bbc8a23def624cc977bb2"

|

||||

revision = "bbced9601137e764853b2fad7ec3e2dc4c504e02"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:e3707aeaccd2adc89eba6c062fec72116fe1fc1ba71097da85b4d8ae1668a675"

|

||||

digest = "1:9d8420bbf131d1618bde6530af37c3799340d3762cc47210c1d9532a4c3a2779"

|

||||

name = "github.com/spf13/pflag"

|

||||

packages = ["."]

|

||||

pruneopts = "NUT"

|

||||

revision = "9a97c102cda95a86cec2345a6f09f55a939babf5"

|

||||

version = "v1.0.2"

|

||||

revision = "298182f68c66c05229eb03ac171abe6e309ee79a"

|

||||

version = "v1.0.3"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:22f696cee54865fb8e9ff91df7b633f6b8f22037a8015253c6b6a71ca82219c7"

|

||||

@@ -313,15 +296,15 @@

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:3f3a05ae0b95893d90b9b3b5afdb79a9b3d96e4e36e099d841ae602e4aca0da8"

|

||||

digest = "1:058e9504b9a79bfe86092974d05bb3298d2aa0c312d266d43148de289a5065d9"

|

||||

name = "golang.org/x/crypto"

|

||||

packages = ["ssh/terminal"]

|

||||

pruneopts = "NUT"

|

||||

revision = "0e37d006457bf46f9e6692014ba72ef82c33022c"

|

||||

revision = "8dd112bcdc25174059e45e07517d9fc663123347"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:1400b8e87c2c9bd486ea1a13155f59f8f02d385761206df05c0b7db007a53b2c"

|

||||

digest = "1:e3477b53a5c2fb71a7c9688e9b3d58be702807a5a88def8b9a327259d46e4979"

|

||||

name = "golang.org/x/net"

|

||||

packages = [

|

||||

"context",

|

||||

@@ -332,11 +315,11 @@

|

||||

"idna",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "26e67e76b6c3f6ce91f7c52def5af501b4e0f3a2"

|

||||

revision = "16b79f2e4e95ea23b2bf9903c9809ff7b013ce85"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:bc2b221d465bb28ce46e8d472ecdc424b9a9b541bd61d8c311c5f29c8dd75b1b"

|

||||

digest = "1:17ee74a4d9b6078611784b873cdbfe91892d2c73052c430724e66fcc015b6c7b"

|

||||

name = "golang.org/x/oauth2"

|

||||

packages = [

|

||||

".",

|

||||

@@ -346,18 +329,18 @@

|

||||

"jwt",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "d2e6202438beef2727060aa7cabdd924d92ebfd9"

|

||||

revision = "e64efc72b421e893cbf63f17ba2221e7d6d0b0f3"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:44261e94b6095310a2df925fd68632d399a00eb153b52566a7b3697f7c70638c"

|

||||

digest = "1:a0d91ab4d23badd4e64e115c6e6ba7dd56bd3cde5d287845822fb2599ac10236"

|

||||

name = "golang.org/x/sys"

|

||||

packages = [

|

||||

"unix",

|

||||

"windows",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "1561086e645b2809fb9f8a1e2a38160bf8d53bf4"

|

||||

revision = "30e92a19ae4a77dde818b8c3d41d51e4850cba12"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:e7071ed636b5422cc51c0e3a6cebc229d6c9fffc528814b519a980641422d619"

|

||||

@@ -384,26 +367,35 @@

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:c9e7a4b4d47c0ed205d257648b0e5b0440880cb728506e318f8ac7cd36270bc4"

|

||||

digest = "1:9fdc2b55e8e0fafe4b41884091e51e77344f7dc511c5acedcfd98200003bff90"

|

||||

name = "golang.org/x/time"

|

||||

packages = ["rate"]

|

||||

pruneopts = "NUT"

|

||||

revision = "fbb02b2291d28baffd63558aa44b4b56f178d650"

|

||||

revision = "85acf8d2951cb2a3bde7632f9ff273ef0379bcbd"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:45751dc3302c90ea55913674261b2d74286b05cdd8e3ae9606e02e4e77f4353f"

|

||||

digest = "1:e46d8e20161401a9cf8765dfa428494a3492a0b56fe114156b7da792bf41ba78"

|

||||

name = "golang.org/x/tools"

|

||||

packages = [

|

||||

"go/ast/astutil",

|

||||

"go/gcexportdata",

|

||||

"go/internal/cgo",

|

||||

"go/internal/gcimporter",

|

||||

"go/internal/packagesdriver",

|

||||

"go/packages",

|

||||

"go/types/typeutil",

|

||||

"imports",

|

||||

"internal/fastwalk",

|

||||

"internal/gopathwalk",

|

||||

"internal/module",

|

||||

"internal/semver",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "90fa682c2a6e6a37b3a1364ce2fe1d5e41af9d6d"

|

||||

revision = "f8c04913dfb7b2339a756441456bdbe0af6eb508"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:e2da54c7866453ac5831c61c7ec5d887f39328cac088c806553303bff4048e6f"

|

||||

digest = "1:d395d49d784dd3a11938a3e85091b6570664aa90ff2767a626565c6c130fa7e9"

|

||||

name = "google.golang.org/appengine"

|

||||

packages = [

|

||||

".",

|

||||

@@ -418,8 +410,16 @@

|

||||

"urlfetch",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "ae0ab99deb4dc413a2b4bd6c8bdd0eb67f1e4d06"

|

||||

version = "v1.2.0"

|

||||

revision = "e9657d882bb81064595ca3b56cbe2546bbabf7b1"

|

||||

version = "v1.4.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:fe9eb931d7b59027c4a3467f7edc16cc8552dac5328039bec05045143c18e1ce"

|

||||

name = "gopkg.in/h2non/gock.v1"

|

||||

packages = ["."]

|

||||

pruneopts = "NUT"

|

||||

revision = "ba88c4862a27596539531ce469478a91bc5a0511"

|

||||

version = "v1.0.14"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:2d1fbdc6777e5408cabeb02bf336305e724b925ff4546ded0fa8715a7267922a"

|

||||

@@ -430,12 +430,12 @@

|

||||

version = "v0.9.1"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:7c95b35057a0ff2e19f707173cc1a947fa43a6eb5c4d300d196ece0334046082"

|

||||

digest = "1:18108594151654e9e696b27b181b953f9a90b16bf14d253dd1b397b025a1487f"

|

||||

name = "gopkg.in/yaml.v2"

|

||||

packages = ["."]

|

||||

pruneopts = "NUT"

|

||||

revision = "5420a8b6744d3b0345ab293f6fcba19c978f1183"

|

||||

version = "v2.2.1"

|

||||

revision = "51d6538a90f86fe93ac480b35f37b2be17fef232"

|

||||

version = "v2.2.2"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:8960ef753a87391086a307122d23cd5007cee93c28189437e4f1b6ed72bffc50"

|

||||

@@ -476,10 +476,9 @@

|

||||

version = "kubernetes-1.11.0"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:4b0d523ee389c762d02febbcfa0734c4530ebe87abe925db18f05422adcb33e8"

|

||||

digest = "1:83b01e3d6f85c4e911de84febd69a2d3ece614c5a4a518fbc2b5d59000645980"

|

||||

name = "k8s.io/apimachinery"

|

||||

packages = [

|

||||

"pkg/api/equality",

|

||||

"pkg/api/errors",

|

||||

"pkg/api/meta",

|

||||

"pkg/api/resource",

|

||||

@@ -659,7 +658,7 @@

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:5249c83f0fb9e277b2d28c19eca814feac7ef05dc762e4deaf0a2e4b1a7c5df3"

|

||||

digest = "1:61024ed77a53ac618effed55043bf6a9afbdeb64136bd6a5b0c992d4c0363766"

|

||||

name = "k8s.io/gengo"

|

||||

packages = [

|

||||

"args",

|

||||

@@ -672,15 +671,23 @@

|

||||

"types",

|

||||

]

|

||||

pruneopts = "NUT"

|

||||

revision = "4242d8e6c5dba56827bb7bcf14ad11cda38f3991"

|

||||

revision = "0689ccc1d7d65d9dd1bedcc3b0b1ed7df91ba266"

|

||||

|

||||

[[projects]]

|

||||

digest = "1:c263611800c3a97991dbcf9d3bc4de390f6224aaa8ca0a7226a9d734f65a416a"

|

||||

name = "k8s.io/klog"

|

||||

packages = ["."]

|

||||

pruneopts = "NUT"

|

||||

revision = "71442cd4037d612096940ceb0f3fec3f7fff66e0"

|

||||

version = "v0.2.0"

|

||||

|

||||

[[projects]]

|

||||

branch = "master"

|

||||

digest = "1:a2c842a1e0aed96fd732b535514556323a6f5edfded3b63e5e0ab1bce188aa54"

|

||||

digest = "1:03a96603922fc1f6895ae083e1e16d943b55ef0656b56965351bd87e7d90485f"

|

||||

name = "k8s.io/kube-openapi"

|

||||

packages = ["pkg/util/proto"]

|

||||

pruneopts = "NUT"

|

||||

revision = "e3762e86a74c878ffed47484592986685639c2cd"

|

||||

revision = "b3a7cee44a305be0a69e1b9ac03018307287e1b0"

|

||||

|

||||

[solve-meta]

|

||||

analyzer-name = "dep"

|

||||

@@ -689,13 +696,11 @@

|

||||

"github.com/google/go-cmp/cmp",

|

||||

"github.com/google/go-cmp/cmp/cmpopts",

|

||||

"github.com/istio/glog",

|

||||

"github.com/knative/pkg/apis/istio/v1alpha3",

|

||||

"github.com/knative/pkg/client/clientset/versioned",

|

||||

"github.com/knative/pkg/client/clientset/versioned/fake",

|

||||

"github.com/knative/pkg/signals",

|

||||

"github.com/prometheus/client_golang/prometheus",

|

||||

"github.com/prometheus/client_golang/prometheus/promhttp",

|

||||

"go.uber.org/zap",

|

||||

"go.uber.org/zap/zapcore",

|

||||

"gopkg.in/h2non/gock.v1",

|

||||

"k8s.io/api/apps/v1",

|

||||

"k8s.io/api/autoscaling/v1",

|

||||

"k8s.io/api/autoscaling/v2beta1",

|

||||

|

||||

@@ -11,6 +11,10 @@ required = [

|

||||

name = "go.uber.org/zap"

|

||||

version = "v1.9.1"

|

||||

|

||||

[[constraint]]

|

||||

name = "gopkg.in/h2non/gock.v1"

|

||||

version = "v1.0.14"

|

||||

|

||||

[[override]]

|

||||

name = "gopkg.in/yaml.v2"

|

||||

version = "v2.2.1"

|

||||

@@ -45,10 +49,6 @@ required = [

|

||||

name = "github.com/google/go-cmp"

|

||||

version = "v0.2.0"

|

||||

|

||||

[[constraint]]

|

||||

name = "github.com/knative/pkg"

|

||||

revision = "c15d7c8f2220a7578b33504df6edefa948c845ae"

|

||||

|

||||

[[override]]

|

||||

name = "github.com/golang/glog"

|

||||

source = "github.com/istio/glog"

|

||||

|

||||

5

MAINTAINERS

Normal file

@@ -0,0 +1,5 @@

|

||||

The maintainers are generally available in Slack at

|

||||

https://weave-community.slack.com/messages/flagger/ (obtain an invitation

|

||||

at https://slack.weave.works/).

|

||||

|

||||

Stefan Prodan, Weaveworks <stefan@weave.works> (Slack: @stefan Twitter: @stefanprodan)

|

||||

25

Makefile

@@ -3,18 +3,26 @@ VERSION?=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | t

|

||||

VERSION_MINOR:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"' | rev | cut -d'.' -f2- | rev)

|

||||

PATCH:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"' | awk -F. '{print $$NF}')

|

||||

SOURCE_DIRS = cmd pkg/apis pkg/controller pkg/server pkg/logging pkg/version

|

||||

LT_VERSION?=$(shell grep 'VERSION' cmd/loadtester/main.go | awk '{ print $$4 }' | tr -d '"' | head -n1)
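The version variables above are derived with shell pipelines. As a rough illustration of what the `awk '{ print $$4 }' | tr -d '"'`, `rev | cut -d'.' -f2- | rev` and `awk -F. '{print $$NF}'` steps compute, here is a minimal Python sketch. The `var VERSION = "0.9.0"` line format and both helper names are assumptions made for illustration. The sketch also assumes the version contains at least one dot.

```python
def version_from_line(line: str) -> str:
    """Mimic `awk '{ print $4 }' | tr -d '"'`: take the 4th field and drop quotes."""
    # Hypothetical input format, e.g.: var VERSION = "0.9.0"
    return line.split()[3].replace('"', "")


def split_version(version: str):
    """Return (minor_prefix, patch) for a dotted version string.

    minor_prefix mirrors `rev | cut -d'.' -f2- | rev` (everything before the
    last dot); patch mirrors `awk -F. '{print $NF}'` (the last field).
    """
    minor_prefix, _, patch = version.rpartition(".")
    return minor_prefix, patch


if __name__ == "__main__":
    version = version_from_line('var VERSION = "0.9.0"')
    print(version, split_version(version))
```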

|

||||

|

||||

run:

|

||||

go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info \

|

||||

-metrics-server=https://prometheus.iowa.weavedx.com \

|

||||

-metrics-server=https://prometheus.istio.weavedx.com \

|

||||

-slack-url=https://hooks.slack.com/services/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 \

|

||||

-slack-channel="devops-alerts"

|

||||

|

||||

run-appmesh:

|

||||

go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=appmesh \

|

||||

-metrics-server=http://acfc235624ca911e9a94c02c4171f346-1585187926.us-west-2.elb.amazonaws.com:9090 \

|

||||

-slack-url=https://hooks.slack.com/services/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 \

|

||||

-slack-channel="devops-alerts"

|

||||

|

||||

build:

|

||||

docker build -t stefanprodan/flagger:$(TAG) . -f Dockerfile

|

||||

docker build -t weaveworks/flagger:$(TAG) . -f Dockerfile

|

||||

|

||||

push:

|

||||

docker tag stefanprodan/flagger:$(TAG) quay.io/stefanprodan/flagger:$(VERSION)

|

||||

docker push quay.io/stefanprodan/flagger:$(VERSION)

|

||||

docker tag weaveworks/flagger:$(TAG) quay.io/weaveworks/flagger:$(VERSION)

|

||||

docker push quay.io/weaveworks/flagger:$(VERSION)

|

||||

|

||||

fmt:

|

||||

gofmt -l -s -w $(SOURCE_DIRS)

|

||||

@@ -29,9 +37,9 @@ test: test-fmt test-codegen

|

||||

go test ./...

|

||||

|

||||

helm-package:

|

||||

cd charts/ && helm package flagger/ && helm package grafana/

|

||||

cd charts/ && helm package ./*

|

||||

mv charts/*.tgz docs/

|

||||

helm repo index docs --url https://stefanprodan.github.io/flagger --merge ./docs/index.yaml

|

||||

helm repo index docs --url https://weaveworks.github.io/flagger --merge ./docs/index.yaml

|

||||

|

||||

helm-up:

|

||||

helm upgrade --install flagger ./charts/flagger --namespace=istio-system --set crd.create=false

|

||||

@@ -44,6 +52,7 @@ version-set:

|

||||

sed -i '' "s/flagger:$$current/flagger:$$next/g" artifacts/flagger/deployment.yaml && \

|

||||

sed -i '' "s/tag: $$current/tag: $$next/g" charts/flagger/values.yaml && \

|

||||

sed -i '' "s/appVersion: $$current/appVersion: $$next/g" charts/flagger/Chart.yaml && \

|

||||

sed -i '' "s/version: $$current/version: $$next/g" charts/flagger/Chart.yaml && \

|

||||

echo "Version $$next set in code, deployment and charts"

|

||||

|

||||

version-up:

|

||||

@@ -77,3 +86,7 @@ reset-test:

|

||||

kubectl delete -f ./artifacts/namespaces

|

||||

kubectl apply -f ./artifacts/namespaces

|

||||

kubectl apply -f ./artifacts/canaries

|

||||

|

||||

loadtester-push:

|

||||

docker build -t weaveworks/flagger-loadtester:$(LT_VERSION) . -f Dockerfile.loadtester

|

||||

docker push weaveworks/flagger-loadtester:$(LT_VERSION)

|

||||

464

README.md

@@ -1,75 +1,56 @@

|

||||

# flagger

|

||||

|

||||

[](https://travis-ci.org/stefanprodan/flagger)

|

||||

[](https://goreportcard.com/report/github.com/stefanprodan/flagger)

|

||||

[](https://codecov.io/gh/stefanprodan/flagger)

|

||||

[](https://github.com/stefanprodan/flagger/blob/master/LICENSE)

|

||||

[](https://github.com/stefanprodan/flagger/releases)

|

||||

[](https://travis-ci.org/weaveworks/flagger)

|

||||

[](https://goreportcard.com/report/github.com/weaveworks/flagger)

|

||||

[](https://codecov.io/gh/weaveworks/flagger)

|

||||

[](https://github.com/weaveworks/flagger/blob/master/LICENSE)

|

||||

[](https://github.com/weaveworks/flagger/releases)

|

||||

|

||||

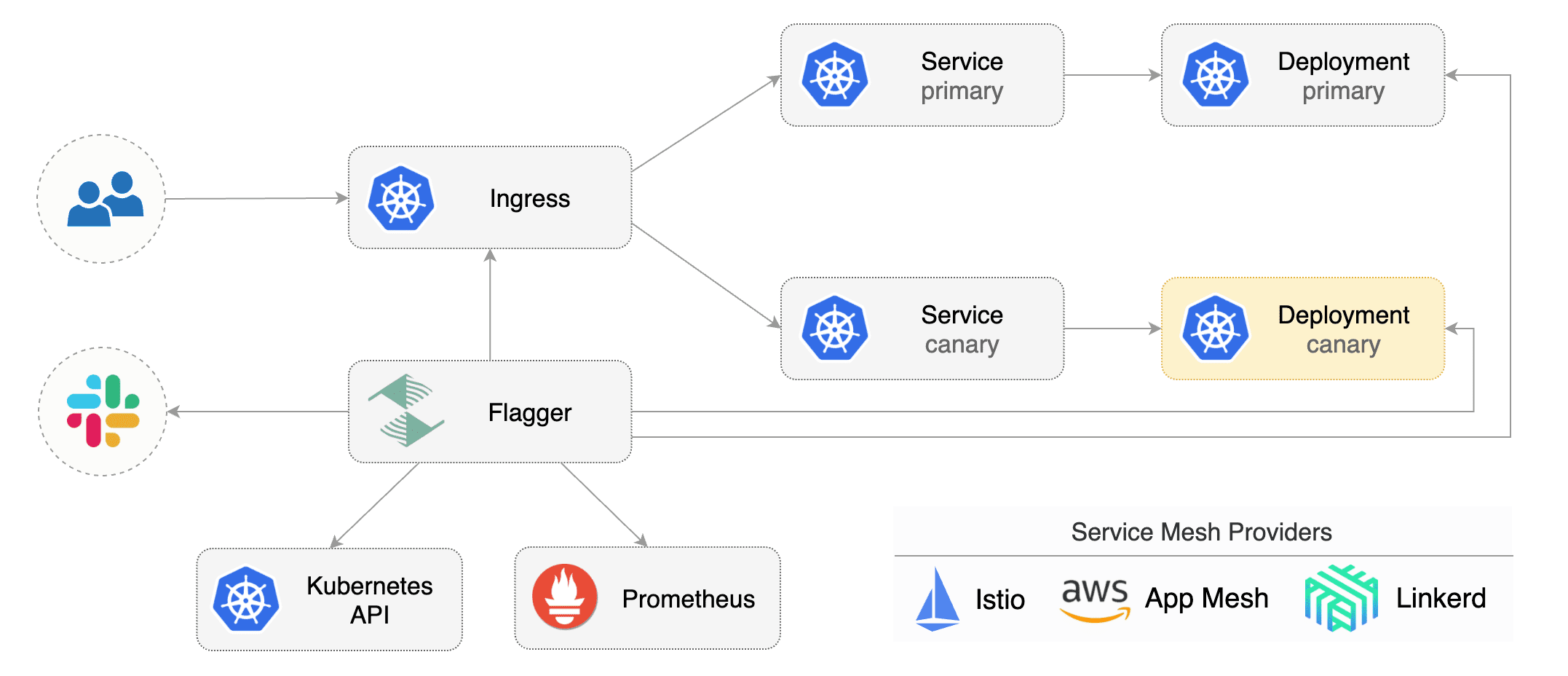

Flagger is a Kubernetes operator that automates the promotion of canary deployments

|

||||

using Istio routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

The canary analysis can be extended with webhooks for running integration tests,

|

||||

using Istio or App Mesh routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

The canary analysis can be extended with webhooks for running acceptance tests,

|

||||

load tests or any other custom validation.

|

||||

|

||||

### Install

|

||||

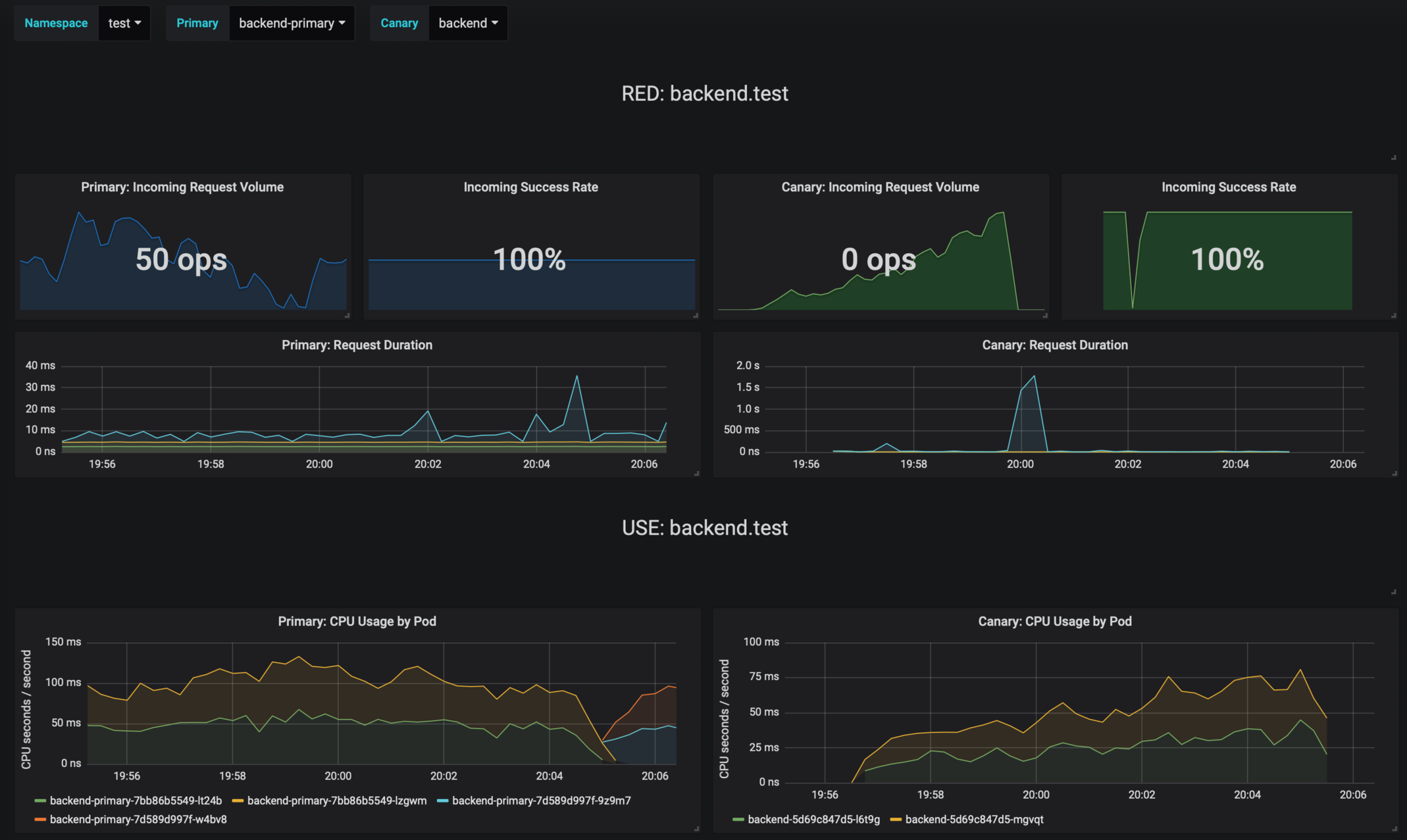

Flagger implements a control loop that gradually shifts traffic to the canary while measuring key performance

|

||||

indicators like HTTP requests success rate, requests average duration and pods health.

|

||||

Based on analysis of the KPIs a canary is promoted or aborted, and the analysis result is published to Slack.

|

||||

|

||||

Before installing Flagger make sure you have Istio set up with Prometheus enabled.

|

||||

If you are new to Istio you can follow my [Istio service mesh walk-through](https://github.com/stefanprodan/istio-gke).

|

||||

|

||||

|

||||

Deploy Flagger in the `istio-system` namespace using Helm:

|

||||

## Documentation

|

||||

|

||||

```bash

|

||||

# add the Helm repository

|

||||

helm repo add flagger https://flagger.app

|

||||

Flagger documentation can be found at [docs.flagger.app](https://docs.flagger.app)

|

||||

|

||||

# install or upgrade

|

||||

helm upgrade -i flagger flagger/flagger \

|

||||

--namespace=istio-system \

|

||||

--set metricsServer=http://prometheus.istio-system:9090

|

||||

```

|

||||

* Install
    * [Flagger install on Kubernetes](https://docs.flagger.app/install/flagger-install-on-kubernetes)
    * [Flagger install on GKE Istio](https://docs.flagger.app/install/flagger-install-on-google-cloud)
    * [Flagger install on EKS App Mesh](https://docs.flagger.app/install/flagger-install-on-eks-appmesh)
* How it works
    * [Canary custom resource](https://docs.flagger.app/how-it-works#canary-custom-resource)
    * [Routing](https://docs.flagger.app/how-it-works#istio-routing)
    * [Canary deployment stages](https://docs.flagger.app/how-it-works#canary-deployment)
    * [Canary analysis](https://docs.flagger.app/how-it-works#canary-analysis)
    * [HTTP metrics](https://docs.flagger.app/how-it-works#http-metrics)
    * [Custom metrics](https://docs.flagger.app/how-it-works#custom-metrics)
    * [Webhooks](https://docs.flagger.app/how-it-works#webhooks)
    * [Load testing](https://docs.flagger.app/how-it-works#load-testing)
* Usage
    * [Istio canary deployments](https://docs.flagger.app/usage/progressive-delivery)
    * [Istio A/B testing](https://docs.flagger.app/usage/ab-testing)
    * [App Mesh canary deployments](https://docs.flagger.app/usage/appmesh-progressive-delivery)
    * [Monitoring](https://docs.flagger.app/usage/monitoring)
    * [Alerting](https://docs.flagger.app/usage/alerting)
* Tutorials
    * [Canary deployments with Helm charts and Weave Flux](https://docs.flagger.app/tutorials/canary-helm-gitops)

|

||||

|

||||

Flagger is compatible with Kubernetes >1.11.0 and Istio >1.0.0.

|

||||

## Canary CRD

|

||||

|

||||

### Usage

|

||||

Flagger takes a Kubernetes deployment and optionally a horizontal pod autoscaler (HPA),

|

||||

then creates a series of objects (Kubernetes deployments, ClusterIP services and Istio or App Mesh virtual services).

|

||||

These objects expose the application on the mesh and drive the canary analysis and promotion.

|

||||

|

||||

Flagger takes a Kubernetes deployment and creates a series of objects

|

||||

(Kubernetes [deployments](https://kubernetes.io/docs/concepts/workloads/controllers/deployment/),

|

||||

ClusterIP [services](https://kubernetes.io/docs/concepts/services-networking/service/) and

|

||||

Istio [virtual services](https://istio.io/docs/reference/config/istio.networking.v1alpha3/#VirtualService))

|

||||

to drive the canary analysis and promotion.

|

||||

|

||||

|

||||

|

||||

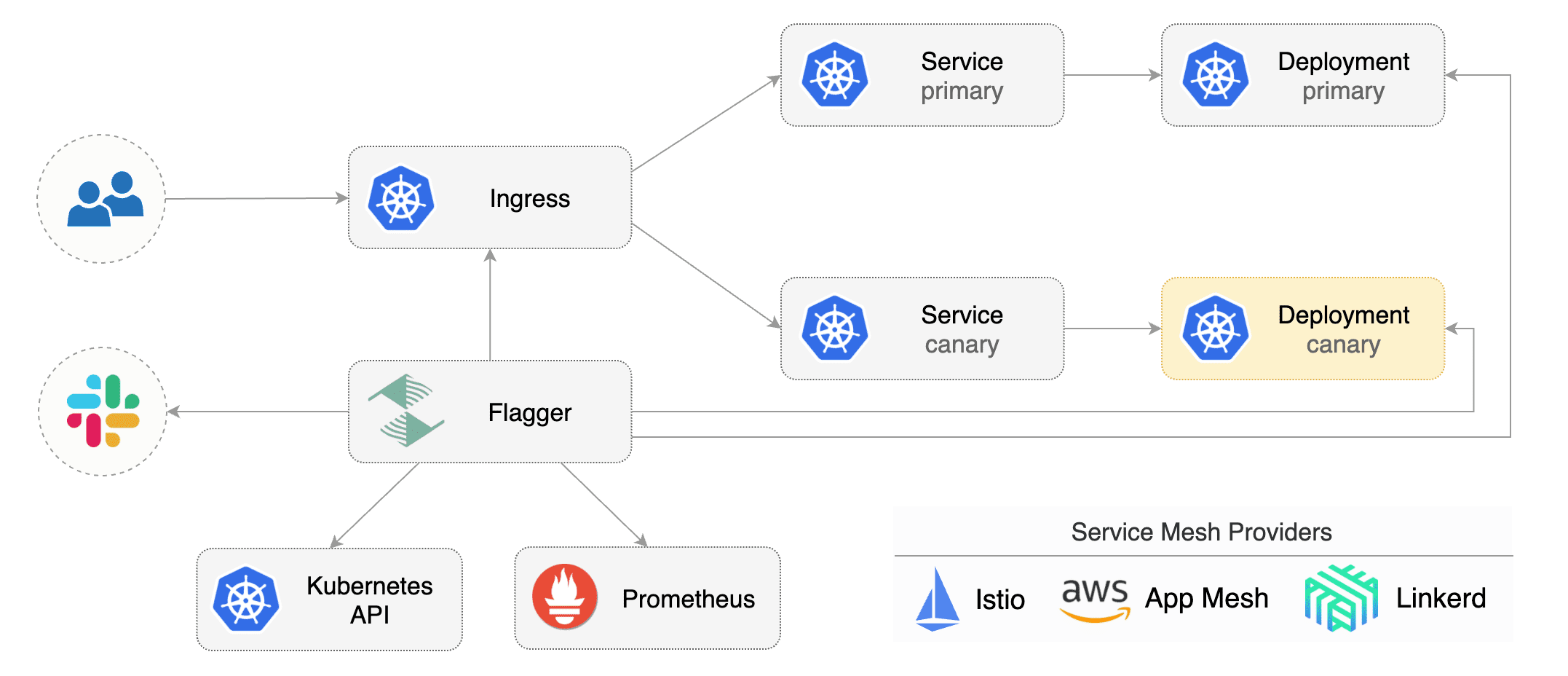

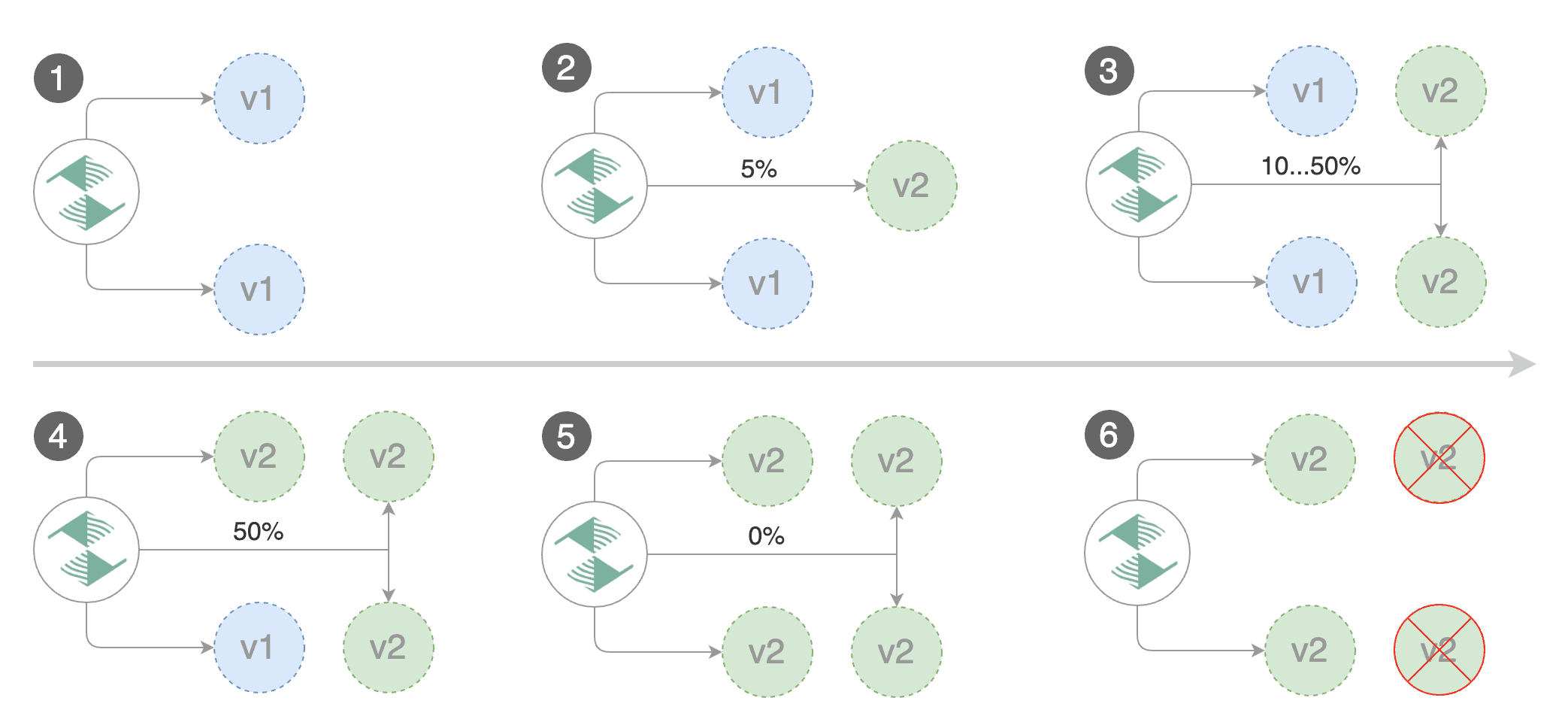

Gated canary promotion stages:

|

||||

|

||||

* scan for canary deployments
* check Istio virtual service routes are mapped to primary and canary ClusterIP services
* check primary and canary deployments status
    * halt advancement if a rolling update is underway
    * halt advancement if pods are unhealthy
* increase canary traffic weight percentage from 0% to 5% (step weight)
* check canary HTTP request success rate and latency
    * halt advancement if any metric is under the specified threshold
    * increment the failed checks counter
* check if the number of failed checks reached the threshold
    * route all traffic to primary
    * scale to zero the canary deployment and mark it as failed
    * wait for the canary deployment to be updated (revision bump) and start over
* increase canary traffic weight by 5% (step weight) till it reaches 50% (max weight)
    * halt advancement while canary request success rate is under the threshold
    * halt advancement while canary request duration P99 is over the threshold
    * halt advancement if the primary or canary deployment becomes unhealthy
    * halt advancement while canary deployment is being scaled up/down by HPA
* promote canary to primary
    * copy canary deployment spec template over primary
* wait for primary rolling update to finish
    * halt advancement if pods are unhealthy
* route all traffic to primary
* scale to zero the canary deployment
* mark rollout as finished
* wait for the canary deployment to be updated (revision bump) and start over

|

||||

|

||||

You can change the canary analysis _max weight_ and _step weight_ percentages in Flagger's custom resource.
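To make the interplay of _step weight_, _max weight_ and the failed checks threshold concrete, the promotion stages can be approximated by a small simulation. This is only a sketch under stated assumptions, not Flagger's implementation: `simulate_canary`, its parameters and the `check` callback are hypothetical names, and deployment health, HPA scaling and the primary rolling update are left out.

```python
from typing import Callable, List, Tuple


def simulate_canary(
    check: Callable[[int], bool],
    step_weight: int = 5,
    max_weight: int = 50,
    failure_threshold: int = 10,
) -> Tuple[str, List[int]]:
    """Walk the canary weight up in steps until it is promoted or failed.

    `check(weight)` stands in for the metric checks (success rate, latency)
    run at the given canary traffic weight. It is a hypothetical callback.
    """
    weight = step_weight      # first shift: 0% -> step weight
    failed_checks = 0
    history: List[int] = []   # canary weight observed at each analysis run

    while True:
        history.append(weight)
        if check(weight):
            if weight >= max_weight:
                return "promoted", history
            weight = min(weight + step_weight, max_weight)
        else:
            # Halt advancement and count the failure.
            failed_checks += 1
            if failed_checks >= failure_threshold:
                # All traffic would go back to the primary at this point.
                return "failed", history


if __name__ == "__main__":
    print(simulate_canary(lambda w: True))
    print(simulate_canary(lambda w: w < 15, failure_threshold=2))
```

With checks that always pass, the weight climbs in 5% steps and the canary is promoted once it has passed at 50%. With checks that start failing at 15%, the weight stays put until the failure threshold is reached.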

|

||||

Flagger keeps track of ConfigMaps and Secrets referenced by a Kubernetes Deployment and triggers a canary analysis if any of those objects change.

|

||||

When promoting a workload in production, both code (container images) and configuration (config maps and secrets) are being synchronised.
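One common way to notice that a referenced ConfigMap or Secret has changed is to compare content checksums between reconciliation runs. The sketch below illustrates that general idea only. It is an assumption, not a description of how Flagger tracks these objects, and `config_checksum` and `changed_configs` are hypothetical helpers.

```python
import hashlib
import json
from typing import Dict, List


def config_checksum(data: Dict[str, str]) -> str:
    """Stable SHA-256 checksum of a ConfigMap/Secret-like key/value mapping."""
    payload = json.dumps(data, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def changed_configs(
    previous: Dict[str, str], current: Dict[str, Dict[str, str]]
) -> List[str]:
    """Names of objects whose checksum differs from the last recorded one.

    `previous` maps object name -> last seen checksum.
    `current` maps object name -> current key/value data.
    """
    return sorted(
        name
        for name, data in current.items()
        if previous.get(name) != config_checksum(data)
    )


if __name__ == "__main__":
    seen = {"podinfo-config": config_checksum({"color": "blue"})}
    print(changed_configs(seen, {"podinfo-config": {"color": "green"}}))
```

A non-empty result would be the signal to start a new canary analysis in such a scheme.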

|

||||

|

||||

For a deployment named _podinfo_, a canary promotion can be defined using Flagger's custom resource:

|

||||

|

||||

@@ -99,9 +80,31 @@ spec:

|

||||

# Istio gateways (optional)

|

||||

gateways:

|

||||

- public-gateway.istio-system.svc.cluster.local

|

||||

- mesh

|

||||

# Istio virtual service host names (optional)

|

||||

hosts:

|

||||

- podinfo.example.com

|

||||

# HTTP match conditions (optional)

|

||||

match:

|

||||

- uri:

|

||||

prefix: /

|

||||

# HTTP rewrite (optional)

|

||||

rewrite:

|

||||

uri: /

|

||||

# Envoy timeout and retry policy (optional)

|

||||

headers:

|

||||

request:

|

||||

add:

|

||||

x-envoy-upstream-rq-timeout-ms: "15000"

|

||||

x-envoy-max-retries: "10"

|

||||

x-envoy-retry-on: "gateway-error,connect-failure,refused-stream"

|

||||

# cross-origin resource sharing policy (optional)

|

||||

corsPolicy:

|

||||

allowOrigin:

|

||||

- example.com

|

||||

# promote the canary without analysing it (default false)

|

||||

skipAnalysis: false

|

||||

# define the canary analysis timing and KPIs

|

||||

canaryAnalysis:

|

||||

# schedule interval (default 60s)

|

||||

interval: 1m

|

||||

@@ -115,6 +118,7 @@ spec:

|

||||

stepWeight: 5

|

||||

# Istio Prometheus checks

|

||||

metrics:

|

||||

# builtin Istio checks

|

||||

- name: istio_requests_total

|

||||

# minimum req success rate (non 5xx responses)

|

||||

# percentage (0-100)

|

||||

@@ -125,320 +129,66 @@ spec:

|

||||

# milliseconds

|

||||

threshold: 500

|

||||

interval: 30s

|

||||

# custom check

|

||||

- name: "kafka lag"

|

||||

threshold: 100

|

||||

query: |

|

||||

avg_over_time(

|

||||

kafka_consumergroup_lag{

|

||||

consumergroup=~"podinfo-consumer-.*",

|

||||

topic="podinfo"

|

||||

}[1m]

|

||||

)

|

||||

# external checks (optional)

|

||||

webhooks:

|

||||

- name: integration-tests

|

||||

url: http://podinfo.test:9898/echo

|

||||

timeout: 1m

|

||||

- name: load-test

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 5s

|

||||

metadata:

|

||||

test: "all"

|

||||

token: "16688eb5e9f289f1991c"

|

||||

cmd: "hey -z 1m -q 10 -c 2 http://podinfo.test:9898/"

|

||||

```

|

||||

|

||||

The canary analysis uses the following PromQL queries:

|

||||

|

||||

_HTTP requests success rate percentage_

|

||||

For more details on how the canary analysis and promotion work, please [read the docs](https://docs.flagger.app/how-it-works).

|

||||

|

||||

```sql

|

||||

sum(

|

||||

rate(

|

||||

istio_requests_total{

|

||||

reporter="destination",

|

||||

destination_workload_namespace=~"$namespace",

|

||||

destination_workload=~"$workload",

|

||||

response_code!~"5.*"

|

||||

}[$interval]

|

||||

)

|

||||

)

|

||||

/

|

||||

sum(

|

||||

rate(

|

||||

istio_requests_total{

|

||||

reporter="destination",

|

||||

destination_workload_namespace=~"$namespace",

|

||||

destination_workload=~"$workload"

|

||||

}[$interval]

|

||||

)

|

||||

)

|

||||

```
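The success rate query divides the rate of non-5xx requests by the rate of all requests. The same arithmetic can be sketched on plain request counts taken over one interval. This is a minimal illustration that assumes the counts are already grouped by response code. The `success_rate` helper is hypothetical, and scaling by 100 to get a percentage for the 0-100 threshold is an assumption, since the query itself yields a ratio.

```python
import re
from typing import Dict


def success_rate(requests_by_code: Dict[str, int]) -> float:
    """Percentage of requests whose response code does not match `5.*`.

    PromQL regex matchers are fully anchored, so `response_code!~"5.*"`
    corresponds to a full match here.
    """
    total = sum(requests_by_code.values())
    if total == 0:
        raise ValueError("no requests observed in the interval")
    non_5xx = sum(
        count
        for code, count in requests_by_code.items()
        if not re.fullmatch(r"5.*", code)
    )
    return 100.0 * non_5xx / total


if __name__ == "__main__":
    print(success_rate({"200": 90, "404": 5, "503": 5}))
```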

|

||||

## Features

|

||||

|

||||

_HTTP requests milliseconds duration P99_

|

||||

| Feature | Istio | App Mesh |
| -------------------------------------------- | ------------------ | ------------------ |
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: |
| A/B testing (headers and cookies filters) | :heavy_check_mark: | :heavy_minus_sign: |
| Load testing | :heavy_check_mark: | :heavy_check_mark: |
| Webhooks (custom acceptance tests) | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (Envoy metric) | :heavy_check_mark: | :heavy_check_mark: |

|

||||