Compare commits

175 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | fcd520787d | | |
| | e2417e4e40 | | |
| | 70a2cbf1c6 | | |
| | fa0c6af6aa | | |
| | 4f1abd0c8d | | |
| | 41e839aa36 | | |
| | 2fd1593ad2 | | |
| | 27b601c5aa | | |
| | 5fc69134e3 | | |
| | 9adc0698bb | | |
| | 119c2ff464 | | |
| | f3a4201c7d | | |
| | 8b6aa73df0 | | |
| | 1d4dfb0883 | | |
| | eab7f126a6 | | |
| | fe7547d83e | | |
| | 7d0df82861 | | |
| | 7f0cd27591 | | |
| | e094c2ae14 | | |
| | a5d438257f | | |
| | d8cb8f1064 | | |
| | a8d8bb2d6f | | |
| | a76ea5917c | | |
| | b0b6198ec8 | | |
| | eda97f35d2 | | |
| | 2b6507d35a | | |
| | f7c4d5aa0b | | |
| | 74f07cffa6 | | |
| | 79c8ff0af8 | | |
| | ac544eea4b | | |
| | 231a32331b | | |
| | 104e8ef050 | | |
| | 296015faff | | |
| | 9a9964c968 | | |
| | 0d05d86e32 | | |
| | 9680ca98f2 | | |
| | 42b850ca52 | | |
| | 3f5c22d863 | | |
| | 535a92e871 | | |
| | 3411a6a981 | | |
| | b5adee271c | | |
| | e2abcd1323 | | |
| | 25fbe7ecb6 | | |
| | 6befee79c2 | | |
| | f09c5a60f1 | | |
| | 52e89ff509 | | |
| | 35e20406ef | | |
| | c6e96ff1bb | | |
| | 793ab524b0 | | |
| | 5a479d0187 | | |
| | a23e4f1d2a | | |
| | bd35a3f61c | | |
| | 197e987d5f | | |
| | 7f29beb639 | | |
| | 1140af8dc7 | | |
| | a2688c3910 | | |
| | 75b27ab3f3 | | |
| | 59d3f55fb2 | | |
| | f34739f334 | | |
| | 90c71ec18f | | |
| | 395234d7c8 | | |
| | e322ba0065 | | |
| | 6db8b96f72 | | |
| | 44d7e96e96 | | |
| | 1662479c8d | | |
| | 2e351fcf0d | | |
| | 5d81876d07 | | |
| | c81e6989ec | | |
| | 4d61a896c3 | | |
| | d148933ab3 | | |
| | 04a56a3591 | | |
| | 4a354e74d4 | | |
| | 1e3e6427d5 | | |
| | 38826108c8 | | |
| | 4c4752f907 | | |
| | 94dcd6c94d | | |
| | eabef3db30 | | |
| | 6750f10ffa | | |
| | 56cb888cbf | | |
| | b3e7fb3417 | | |
| | 2c6e1baca2 | | |
| | c8358929d1 | | |
| | 1dc7677dfb | | |
| | 8e699a7543 | | |
| | cbbabdfac0 | | |
| | 9d92de234c | | |
| | ba65975fb5 | | |
| | ef423b2078 | | |
| | f451b4e36c | | |
| | 0856e13ee6 | | |
| | 87b9fa8ca7 | | |
| | 5b43d3d314 | | |
| | ac4972dd8d | | |
| | 8a8f68af5d | | |
| | c669dc0c4b | | |
| | 863a5466cc | | |
| | e2347c84e3 | | |
| | e0e673f565 | | |
| | 30cbf2a741 | | |
| | f58de3801c | | |
| | 7c6b88d4c1 | | |
| | 0c0ebaecd5 | | |
| | 1925f99118 | | |
| | 6f2a22a1cc | | |
| | ee04082cd7 | | |
| | efd901ac3a | | |
| | e565789ae8 | | |
| | d3953004f6 | | |
| | df1d9e3011 | | |
| | 631c55fa6e | | |
| | 29cdd43288 | | |
| | 9b79af9fcd | | |
| | 2c9c1adb47 | | |
| | 5dfb5808c4 | | |
| | bb0175aebf | | |
| | adaf4c99c0 | | |
| | bed6ed09d5 | | |
| | 4ff67a85ce | | |
| | 702f4fcd14 | | |
| | 8a03ae153d | | |
| | 434c6149ab | | |
| | 97fc4a90ae | | |
| | 217ef06930 | | |
| | 71057946e6 | | |
| | a74ad52c72 | | |
| | 12d26874f8 | | |
| | 27de9ce151 | | |
| | 9e7cd5a8c5 | | |
| | 38cb487b64 | | |
| | 05ca266c5e | | |
| | 5cc26de645 | | |
| | 2b9a195fa3 | | |
| | 4454749eec | | |
| | b435a03fab | | |
| | 7c166e2b40 | | |
| | f7a7963dcf | | |
| | 9c77c0d69c | | |
| | e8a9555346 | | |
| | 59751dd007 | | |
| | 9c4d4d16b6 | | |
| | 0e3d1b3e8f | | |
| | f119b78940 | | |
| | 456d914c35 | | |
| | 737507b0fe | | |
| | 4bcf82d295 | | |
| | e9cd7afc8a | | |
| | 0830abd51d | | |
| | 5b296e01b3 | | |
| | 3fd039afd1 | | |
| | 5904348ba5 | | |
| | 1a98e93723 | | |
| | c9685fbd13 | | |
| | dc347e273d | | |
| | 8170916897 | | |
| | 71cd4e0cb7 | | |
| | 0109788ccc | | |
| | 1649dea468 | | |
| | b8a7ea8534 | | |
| | afe4d59d5a | | |
| | 0f2697df23 | | |
| | 05664fa648 | | |
| | 3b2564f34b | | |
| | dd0cf2d588 | | |
| | 7c66f23c6a | | |
| | a9f034de1a | | |
| | 6ad2dca57a | | |
| | e8353c110b | | |
| | dbf26ddf53 | | |
| | acc72d207f | | |
| | a784f83464 | | |
| | 07d8355363 | | |
| | f7a439274e | | |
| | bd6d446cb8 | | |
| | 385d0e0549 | | |
| | 02236374d8 | | |

`.circleci/config.yml` (Normal file, 22 changed lines)

```diff
@@ -0,0 +1,22 @@
version: 2.1
jobs:
  e2e-testing:
    machine: true
    steps:
      - checkout
      - run: test/e2e-kind.sh
      - run: test/e2e-istio.sh
      - run: test/e2e-build.sh
      - run: test/e2e-tests.sh

workflows:
  version: 2
  build-and-test:
    jobs:
      - e2e-testing:
          filters:
            branches:
              ignore:
                - gh-pages
                - /docs-.*/
                - /release-.*/
@@ -6,3 +6,6 @@ coverage:
threshold: 50
base: auto
patch: off

comment:
  require_changes: yes
```
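The `filters.branches.ignore` list in `.circleci/config.yml` mixes a literal branch name (`gh-pages`) with slash-delimited patterns (`/docs-.*/`, `/release-.*/`). As we understand CircleCI's filter rules, slash-delimited entries are regular expressions that must match the whole branch name, and all other entries must match exactly. The Go sketch below models that rule to show which branches would skip the `e2e-testing` job. `isIgnored` is a hypothetical helper and is not part of CircleCI or Flagger.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// ignorePatterns mirrors filters.branches.ignore in .circleci/config.yml.
var ignorePatterns = []string{"gh-pages", "/docs-.*/", "/release-.*/"}

// isIgnored reports whether branch matches any ignore entry. Entries wrapped
// in slashes are treated as regular expressions anchored to the full branch
// name (our assumption about CircleCI's behaviour). Any other entry must
// match exactly. MustCompile panics on an invalid pattern.
func isIgnored(branch string, patterns []string) bool {
	for _, p := range patterns {
		if len(p) >= 2 && strings.HasPrefix(p, "/") && strings.HasSuffix(p, "/") {
			re := regexp.MustCompile("^(?:" + p[1:len(p)-1] + ")$")
			if re.MatchString(branch) {
				return true
			}
			continue
		}
		if p == branch {
			return true
		}
	}
	return false
}

func main() {
	for _, b := range []string{"master", "gh-pages", "docs-update", "release-0.8.0", "feature/docs-x"} {
		fmt.Printf("%-16s ignored=%v\n", b, isIgnored(b, ignorePatterns))
	}
}
```

Anchoring the pattern is the key design choice here. Without `^...$`, `/docs-.*/` would also skip a branch such as `feature/docs-x`.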

`.github/main.workflow` (vendored, Normal file, 17 changed lines)

```diff
@@ -0,0 +1,17 @@
workflow "Publish Helm charts" {
  on = "push"
  resolves = ["helm-push"]
}

action "helm-lint" {
  uses = "stefanprodan/gh-actions/helm@master"
  args = ["lint charts/*"]
}

action "helm-push" {
  needs = ["helm-lint"]
  uses = "stefanprodan/gh-actions/helm-gh-pages@master"
  args = ["charts/*","https://flagger.app"]
  secrets = ["GITHUB_TOKEN"]
}
```

`.gitignore` (vendored, 4 changed lines)

```diff
@@ -11,3 +11,7 @@
# Output of the go coverage tool, specifically when used with LiteIDE
*.out
.DS_Store

bin/
artifacts/gcloud/
.idea
```

`.travis.yml` (20 changed lines)

```diff
@@ -12,20 +12,26 @@ addons:
packages:
- docker-ce

#before_script:
# - go get -u sigs.k8s.io/kind
# - curl https://raw.githubusercontent.com/kubernetes/helm/master/scripts/get | bash
# - curl -LO https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/linux/amd64/kubectl && chmod +x kubectl && sudo mv kubectl /usr/local/bin/

script:
- set -e
- make test-fmt
- make test-codegen
- go test -race -coverprofile=coverage.txt -covermode=atomic ./pkg/controller/
- make build
- set -e
- make test-fmt
- make test-codegen
- go test -race -coverprofile=coverage.txt -covermode=atomic $(go list ./pkg/...)
- make build

after_success:
- if [ -z "$DOCKER_USER" ]; then
echo "PR build, skipping image push";
else
docker tag stefanprodan/flagger:latest quay.io/stefanprodan/flagger:${TRAVIS_COMMIT};
BRANCH_COMMIT=${TRAVIS_BRANCH}-$(echo ${TRAVIS_COMMIT} | head -c7);
docker tag stefanprodan/flagger:latest quay.io/stefanprodan/flagger:${BRANCH_COMMIT};
echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin quay.io;
docker push quay.io/stefanprodan/flagger:${TRAVIS_COMMIT};
docker push quay.io/stefanprodan/flagger:${BRANCH_COMMIT};
fi
- if [ -z "$TRAVIS_TAG" ]; then
echo "Not a release, skipping image push";
```
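The `after_success` step tags each non-PR build twice. One tag is the full `TRAVIS_COMMIT` SHA. The other is `<branch>-<first 7 characters of the SHA>`, produced by `head -c7`. Below is a minimal Go sketch of that naming scheme. `imageTags` is a hypothetical helper; the repository path is copied from the script.

```go
package main

import (
	"fmt"
	"os"
)

// imageRepo is the registry path used by the after_success step.
const imageRepo = "quay.io/stefanprodan/flagger"

// imageTags returns the two tags pushed for a non-PR build: the full commit
// SHA, and "<branch>-<first 7 chars of the SHA>", mirroring `head -c7`.
func imageTags(branch, commit string) []string {
	short := commit
	if len(short) > 7 {
		short = short[:7]
	}
	return []string{
		fmt.Sprintf("%s:%s", imageRepo, commit),
		fmt.Sprintf("%s:%s-%s", imageRepo, branch, short),
	}
}

func main() {
	// Like the script, treat an empty DOCKER_USER as a PR build and push nothing.
	if os.Getenv("DOCKER_USER") == "" {
		fmt.Println("PR build, skipping image push")
		return
	}
	for _, tag := range imageTags(os.Getenv("TRAVIS_BRANCH"), os.Getenv("TRAVIS_COMMIT")) {
		fmt.Println(tag)
	}
}
```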

`CHANGELOG.md` (Normal file, 180 changed lines)

```diff
@@ -0,0 +1,180 @@
# Changelog

All notable changes to this project are documented in this file.

## 0.8.0 (2019-03-06)

Adds support for CORS policy and HTTP request headers manipulation

#### Features

- CORS policy support [#83](https://github.com/stefanprodan/flagger/pull/83)
- Allow headers to be appended to HTTP requests [#82](https://github.com/stefanprodan/flagger/pull/82)

#### Improvements

- Refactor the routing management
[#72](https://github.com/stefanprodan/flagger/pull/72)
[#80](https://github.com/stefanprodan/flagger/pull/80)
- Fine-grained RBAC [#73](https://github.com/stefanprodan/flagger/pull/73)
- Add option to limit Flagger to a single namespace [#78](https://github.com/stefanprodan/flagger/pull/78)

## 0.7.0 (2019-02-28)

Adds support for custom metric checks, HTTP timeouts and HTTP retries

#### Features

- Allow custom promql queries in the canary analysis spec [#60](https://github.com/stefanprodan/flagger/pull/60)
- Add HTTP timeout and retries to canary service spec [#62](https://github.com/stefanprodan/flagger/pull/62)

## 0.6.0 (2019-02-25)

Allows for [HTTPMatchRequests](https://istio.io/docs/reference/config/istio.networking.v1alpha3/#HTTPMatchRequest)
and [HTTPRewrite](https://istio.io/docs/reference/config/istio.networking.v1alpha3/#HTTPRewrite)
to be customized in the service spec of the canary custom resource.

#### Features

- Add HTTP match conditions and URI rewrite to the canary service spec [#55](https://github.com/stefanprodan/flagger/pull/55)
- Update virtual service when the canary service spec changes
[#54](https://github.com/stefanprodan/flagger/pull/54)
[#51](https://github.com/stefanprodan/flagger/pull/51)

#### Improvements

- Run e2e testing on [Kubernetes Kind](https://github.com/kubernetes-sigs/kind) for canary promotion
[#53](https://github.com/stefanprodan/flagger/pull/53)

## 0.5.1 (2019-02-14)

Allows skipping the analysis phase to ship changes directly to production

#### Features

- Add option to skip the canary analysis [#46](https://github.com/stefanprodan/flagger/pull/46)

#### Fixes

- Reject deployment if the pod label selector doesn't match `app: <DEPLOYMENT_NAME>` [#43](https://github.com/stefanprodan/flagger/pull/43)

## 0.5.0 (2019-01-30)

Track changes in ConfigMaps and Secrets [#37](https://github.com/stefanprodan/flagger/pull/37)

#### Features

- Promote configmaps and secrets changes from canary to primary
- Detect changes in configmaps and/or secrets and (re)start canary analysis
- Add configs checksum to Canary CRD status
- Create primary configmaps and secrets at bootstrap
- Scan canary volumes and containers for configmaps and secrets

#### Fixes

- Copy deployment labels from canary to primary at bootstrap and promotion
```
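Release 0.5.0 adds a configs checksum to the Canary status, so that a change in a ConfigMap or Secret can (re)start the analysis. The Go sketch below shows one way to compute such a checksum deterministically. It is an illustration, not Flagger's implementation; the `configChecksum` helper and the `kind/name` keys are assumptions.

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"fmt"
	"sort"
)

// configChecksum hashes the data of every referenced ConfigMap/Secret in a
// stable order, so the result changes only when a name, key or value changes.
// configs maps a hypothetical "kind/name" identifier to that object's data.
func configChecksum(configs map[string]map[string]string) string {
	names := make([]string, 0, len(configs))
	for name := range configs {
		names = append(names, name)
	}
	sort.Strings(names) // Go map iteration order is random; sort for stability.

	h := sha256.New()
	for _, name := range names {
		data := configs[name]
		keys := make([]string, 0, len(data))
		for k := range data {
			keys = append(keys, k)
		}
		sort.Strings(keys)
		fmt.Fprintf(h, "%s\n", name)
		for _, k := range keys {
			// Length-prefix each value so multi-line values cannot be
			// confused with the separators written around them.
			fmt.Fprintf(h, "%s=%d:%s\n", k, len(data[k]), data[k])
		}
	}
	return hex.EncodeToString(h.Sum(nil))
}

func main() {
	// "stored" stands in for the checksum persisted in the Canary status.
	stored := configChecksum(map[string]map[string]string{
		"configmap/podinfo-config": {"LOG_LEVEL": "info"},
	})
	current := configChecksum(map[string]map[string]string{
		"configmap/podinfo-config": {"LOG_LEVEL": "debug"},
	})
	if current != stored {
		fmt.Println("config changed, restarting canary analysis")
	}
}
```

Comparing a single digest keeps the status small. The trade-off is that the digest alone cannot say which object changed.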

`CHANGELOG.md` (continued)

```diff
## 0.4.1 (2019-01-24)

Load testing webhook [#35](https://github.com/stefanprodan/flagger/pull/35)

#### Features

- Add the load tester chart to Flagger Helm repository
- Implement a load test runner based on [rakyll/hey](https://github.com/rakyll/hey)
- Log warning when no values are found for Istio metric due to lack of traffic

#### Fixes

- Run webhooks before the metrics checks to avoid failures when using a load tester

## 0.4.0 (2019-01-18)

Restart canary analysis if revision changes [#31](https://github.com/stefanprodan/flagger/pull/31)

#### Breaking changes

- Drop support for Kubernetes 1.10

#### Features

- Detect changes during canary analysis and reset advancement
- Add status and additional printer columns to CRD
- Add canary name and namespace to controller structured logs

#### Fixes

- Allow canary name to be different to the target name
- Check if multiple canaries have the same target and log error
- Use deep copy when updating Kubernetes objects
- Skip readiness checks if canary analysis has finished

## 0.3.0 (2019-01-11)

Configurable canary analysis duration [#20](https://github.com/stefanprodan/flagger/pull/20)

#### Breaking changes

- Helm chart: flag `controlLoopInterval` has been removed

#### Features

- CRD: canaries.flagger.app v1alpha3
- Schedule canary analysis independently based on `canaryAnalysis.interval`
- Add analysis interval to Canary CRD (defaults to one minute)
- Make autoscaler (HPA) reference optional

## 0.2.0 (2019-01-04)

Webhooks [#18](https://github.com/stefanprodan/flagger/pull/18)

#### Features

- CRD: canaries.flagger.app v1alpha2
- Implement canary external checks based on webhooks HTTP POST calls
- Add webhooks to Canary CRD
- Move docs to gitbook [docs.flagger.app](https://docs.flagger.app)

## 0.1.2 (2018-12-06)

Improve Slack notifications [#14](https://github.com/stefanprodan/flagger/pull/14)

#### Features

- Add canary analysis metadata to init and start Slack messages
- Add rollback reason to failed canary Slack messages

## 0.1.1 (2018-11-28)

Canary progress deadline [#10](https://github.com/stefanprodan/flagger/pull/10)

#### Features

- Rollback canary based on the deployment progress deadline check
- Add progress deadline to Canary CRD (defaults to 10 minutes)

## 0.1.0 (2018-11-25)

First stable release

#### Features

- CRD: canaries.flagger.app v1alpha1
- Notifications: post canary events to Slack
- Instrumentation: expose Prometheus metrics for canary status and traffic weight percentage
- Autoscaling: add HPA reference to CRD and create primary HPA at bootstrap
- Bootstrap: create primary deployment, ClusterIP services and Istio virtual service based on CRD spec


## 0.0.1 (2018-10-07)

Initial semver release

#### Features

- Implement canary rollback based on failed checks threshold
- Scale up the deployment when canary revision changes
- Add OpenAPI v3 schema validation to Canary CRD
- Use CRD status for canary state persistence
- Add Helm charts for Flagger and Grafana
- Add canary analysis Grafana dashboard
```
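Two changelog entries describe the core analysis loop. Release 0.1.0 exposes the canary's traffic weight percentage as a metric, and release 0.0.1 rolls the canary back once failed checks reach a threshold. The Go sketch below models one analysis run as a pure state transition. Several details are assumptions made for illustration, not the Canary CRD schema or Flagger's code:

- the field names `StepWeight`, `MaxWeight` and `Threshold`;
- the phase names;
- the rule that the canary is promoted on the first passing run after it reaches the maximum weight.

```go
package main

import "fmt"

// analysisConfig holds hypothetical knobs for this sketch; they are not
// field names taken from the Canary CRD.
type analysisConfig struct {
	StepWeight int // percentage points shifted to the canary per passing run
	MaxWeight  int // canary weight after which the canary is promoted
	Threshold  int // failed checks that trigger a rollback
}

type phase string

const (
	progressing phase = "progressing"
	promoted    phase = "promoted"
	rolledBack  phase = "rolled-back"
)

type canaryState struct {
	CanaryWeight int
	FailedChecks int
	Phase        phase
}

// PrimaryWeight is the share of traffic still routed to the primary.
func (s canaryState) PrimaryWeight() int { return 100 - s.CanaryWeight }

// advance applies the result of one analysis run to the state.
func advance(s canaryState, cfg analysisConfig, checksPassed bool) canaryState {
	if s.Phase != progressing {
		return s // analysis already finished
	}
	if !checksPassed {
		s.FailedChecks++
		if s.FailedChecks >= cfg.Threshold {
			s.CanaryWeight = 0 // route all traffic back to the primary
			s.Phase = rolledBack
		}
		return s
	}
	if s.CanaryWeight >= cfg.MaxWeight {
		// In this sketch, promotion means the primary would take over the
		// canary's spec and receive all traffic again.
		s.CanaryWeight = 0
		s.Phase = promoted
		return s
	}
	s.CanaryWeight += cfg.StepWeight
	if s.CanaryWeight > cfg.MaxWeight {
		s.CanaryWeight = cfg.MaxWeight
	}
	return s
}

func main() {
	cfg := analysisConfig{StepWeight: 10, MaxWeight: 50, Threshold: 2}
	s := canaryState{Phase: progressing}
	for _, ok := range []bool{true, true, false, true, true, true, true} {
		s = advance(s, cfg, ok)
		fmt.Printf("canary=%d%% primary=%d%% failed=%d phase=%s\n",
			s.CanaryWeight, s.PrimaryWeight(), s.FailedChecks, s.Phase)
	}
}
```

Keeping `advance` free of I/O makes the promotion and rollback rules easy to test. The metric queries and routing updates would live in the caller.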

`Dockerfile.loadtester` (Normal file, 44 changed lines)

```diff
@@ -0,0 +1,44 @@
FROM golang:1.11 AS hey-builder

RUN mkdir -p /go/src/github.com/rakyll/hey/

WORKDIR /go/src/github.com/rakyll/hey

ADD https://github.com/rakyll/hey/archive/v0.1.1.tar.gz .

RUN tar xzf v0.1.1.tar.gz --strip 1

RUN go get ./...

RUN CGO_ENABLED=0 GOOS=linux GOARCH=amd64 \
    go install -ldflags '-w -extldflags "-static"' \
    /go/src/github.com/rakyll/hey

FROM golang:1.11 AS builder

RUN mkdir -p /go/src/github.com/stefanprodan/flagger/

WORKDIR /go/src/github.com/stefanprodan/flagger

COPY . .

RUN go test -race ./pkg/loadtester/

RUN CGO_ENABLED=0 GOOS=linux go build -a -installsuffix cgo -o loadtester ./cmd/loadtester/*

FROM alpine:3.8

RUN addgroup -S app \
    && adduser -S -g app app \
    && apk --no-cache add ca-certificates curl

WORKDIR /home/app

COPY --from=hey-builder /go/bin/hey /usr/local/bin/hey
COPY --from=builder /go/src/github.com/stefanprodan/flagger/loadtester .

RUN chown -R app:app ./

USER app

ENTRYPOINT ["./loadtester"]
```

`Gopkg.lock` (generated, 152 changed lines)

```diff
@@ -2,12 +2,12 @@


[[projects]]
  digest = "1:5c3894b2aa4d6bead0ceeea6831b305d62879c871780e7b76296ded1b004bc57"
  digest = "1:4d6f036ea3fe636bcb2e89850bcdc62a771354e157cd51b8b22a2de8562bf663"
  name = "cloud.google.com/go"
  packages = ["compute/metadata"]
  pruneopts = "NUT"
  revision = "97efc2c9ffd9fe8ef47f7f3203dc60bbca547374"
  version = "v0.28.0"
  revision = "c9474f2f8deb81759839474b6bd1726bbfe1c1c4"
  version = "v0.36.0"

[[projects]]
  branch = "master"
@@ -34,15 +34,15 @@
  version = "v1.0.0"

[[projects]]
  digest = "1:8679b8a64f3613e9749c5640c3535c83399b8e69f67ce54d91dc73f6d77373af"
  digest = "1:a1b2a5e38f79688ee8250942d5fa960525fceb1024c855c7bc76fa77b0f3cca2"
  name = "github.com/gogo/protobuf"
  packages = [
    "proto",
    "sortkeys",
  ]
  pruneopts = "NUT"
  revision = "636bf0302bc95575d69441b25a2603156ffdddf1"
  version = "v1.1.1"
  revision = "ba06b47c162d49f2af050fb4c75bcbc86a159d5c"
  version = "v1.2.1"

[[projects]]
  branch = "master"
@@ -55,14 +55,14 @@

[[projects]]
  branch = "master"
  digest = "1:3fb07f8e222402962fa190eb060608b34eddfb64562a18e2167df2de0ece85d8"
  digest = "1:b7cb6054d3dff43b38ad2e92492f220f57ae6087ee797dca298139776749ace8"
  name = "github.com/golang/groupcache"
  packages = ["lru"]
  pruneopts = "NUT"
  revision = "24b0969c4cb722950103eed87108c8d291a8df00"
  revision = "5b532d6fd5efaf7fa130d4e859a2fde0fc3a9e1b"

[[projects]]
  digest = "1:63ccdfbd20f7ccd2399d0647a7d100b122f79c13bb83da9660b1598396fd9f62"
  digest = "1:2d0636a8c490d2272dd725db26f74a537111b99b9dbdda0d8b98febe63702aa4"
  name = "github.com/golang/protobuf"
  packages = [
    "proto",
@@ -72,8 +72,8 @@
    "ptypes/timestamp",
  ]
  pruneopts = "NUT"
  revision = "aa810b61a9c79d51363740d207bb46cf8e620ed5"
  version = "v1.2.0"
  revision = "c823c79ea1570fb5ff454033735a8e68575d1d0f"
  version = "v1.3.0"

[[projects]]
  branch = "master"
@@ -119,33 +119,33 @@

[[projects]]
  branch = "master"
  digest = "1:7fdf3223c7372d1ced0b98bf53457c5e89d89aecbad9a77ba9fcc6e01f9e5621"
  digest = "1:a86d65bc23eea505cd9139178e4d889733928fe165c7a008f41eaab039edf9df"
  name = "github.com/gregjones/httpcache"
  packages = [
    ".",
    "diskcache",
  ]
  pruneopts = "NUT"
  revision = "9cad4c3443a7200dd6400aef47183728de563a38"
  revision = "3befbb6ad0cc97d4c25d851e9528915809e1a22f"

[[projects]]
  digest = "1:b42cde0e1f3c816dd57f57f7bbcf05ca40263ad96f168714c130c611fc0856a6"
  digest = "1:52094d0f8bdf831d1a2401e9b6fee5795fdc0b2a2d1f8bb1980834c289e79129"
  name = "github.com/hashicorp/golang-lru"
  packages = [
    ".",
    "simplelru",
  ]
  pruneopts = "NUT"
  revision = "20f1fb78b0740ba8c3cb143a61e86ba5c8669768"
  version = "v0.5.0"
  revision = "7087cb70de9f7a8bc0a10c375cb0d2280a8edf9c"
  version = "v0.5.1"

[[projects]]
  digest = "1:9a52adf44086cead3b384e5d0dbf7a1c1cce65e67552ee3383a8561c42a18cd3"
  digest = "1:aaa38889f11896ee3644d77e17dc7764cc47f5f3d3b488268df2af2b52541c5f"
  name = "github.com/imdario/mergo"
  packages = ["."]
  pruneopts = "NUT"
  revision = "9f23e2d6bd2a77f959b2bf6acdbefd708a83a4a4"
  version = "v0.3.6"
  revision = "7c29201646fa3de8506f701213473dd407f19646"
  version = "v0.3.7"

[[projects]]
  branch = "master"
@@ -162,32 +162,6 @@
  pruneopts = "NUT"
  revision = "f2b4162afba35581b6d4a50d3b8f34e33c144682"

[[projects]]
  digest = "1:03a74b0d86021c8269b52b7c908eb9bb3852ff590b363dad0a807cf58cec2f89"
  name = "github.com/knative/pkg"
  packages = [
    "apis",
    "apis/duck",
    "apis/duck/v1alpha1",
    "apis/istio",
    "apis/istio/authentication",
    "apis/istio/authentication/v1alpha1",
    "apis/istio/common/v1alpha1",
    "apis/istio/v1alpha3",
    "client/clientset/versioned",
    "client/clientset/versioned/fake",
    "client/clientset/versioned/scheme",
    "client/clientset/versioned/typed/authentication/v1alpha1",
    "client/clientset/versioned/typed/authentication/v1alpha1/fake",
    "client/clientset/versioned/typed/duck/v1alpha1",
    "client/clientset/versioned/typed/duck/v1alpha1/fake",
    "client/clientset/versioned/typed/istio/v1alpha3",
    "client/clientset/versioned/typed/istio/v1alpha3/fake",
    "signals",
  ]
  pruneopts = "NUT"
  revision = "c15d7c8f2220a7578b33504df6edefa948c845ae"

[[projects]]
  digest = "1:5985ef4caf91ece5d54817c11ea25f182697534f8ae6521eadcd628c142ac4b6"
  name = "github.com/matttproud/golang_protobuf_extensions"
@@ -245,11 +219,10 @@
  name = "github.com/prometheus/client_model"
  packages = ["go"]
  pruneopts = "NUT"
  revision = "5c3871d89910bfb32f5fcab2aa4b9ec68e65a99f"
  revision = "fd36f4220a901265f90734c3183c5f0c91daa0b8"

[[projects]]
  branch = "master"
  digest = "1:fad5a35eea6a1a33d6c8f949fbc146f24275ca809ece854248187683f52cc30b"
  digest = "1:4e776079b966091d3e6e12ed2aaf728bea5cd1175ef88bb654e03adbf5d4f5d3"
  name = "github.com/prometheus/common"
  packages = [
    "expfmt",
@@ -257,28 +230,30 @@
    "model",
  ]
  pruneopts = "NUT"
  revision = "c7de2306084e37d54b8be01f3541a8464345e9a5"
  revision = "cfeb6f9992ffa54aaa4f2170ade4067ee478b250"
  version = "v0.2.0"

[[projects]]
  branch = "master"
  digest = "1:26a2f5e891cc4d2321f18a0caa84c8e788663c17bed6a487f3cbe2c4295292d0"
  digest = "1:0a2e604afa3cbf53a1ddade2f240ee8472eded98856dd8c7cfbfea392ddbbfc7"
  name = "github.com/prometheus/procfs"
  packages = [
    ".",
    "internal/util",
    "iostats",
    "nfs",
    "xfs",
  ]
  pruneopts = "NUT"
  revision = "418d78d0b9a7b7de3a6bbc8a23def624cc977bb2"
  revision = "bbced9601137e764853b2fad7ec3e2dc4c504e02"

[[projects]]
  digest = "1:e3707aeaccd2adc89eba6c062fec72116fe1fc1ba71097da85b4d8ae1668a675"
  digest = "1:9d8420bbf131d1618bde6530af37c3799340d3762cc47210c1d9532a4c3a2779"
  name = "github.com/spf13/pflag"
  packages = ["."]
  pruneopts = "NUT"
  revision = "9a97c102cda95a86cec2345a6f09f55a939babf5"
  version = "v1.0.2"
  revision = "298182f68c66c05229eb03ac171abe6e309ee79a"
  version = "v1.0.3"

[[projects]]
  digest = "1:22f696cee54865fb8e9ff91df7b633f6b8f22037a8015253c6b6a71ca82219c7"
@@ -313,15 +288,15 @@

[[projects]]
  branch = "master"
  digest = "1:3f3a05ae0b95893d90b9b3b5afdb79a9b3d96e4e36e099d841ae602e4aca0da8"
  digest = "1:058e9504b9a79bfe86092974d05bb3298d2aa0c312d266d43148de289a5065d9"
  name = "golang.org/x/crypto"
  packages = ["ssh/terminal"]
  pruneopts = "NUT"
  revision = "0e37d006457bf46f9e6692014ba72ef82c33022c"
  revision = "8dd112bcdc25174059e45e07517d9fc663123347"

[[projects]]
  branch = "master"
  digest = "1:1400b8e87c2c9bd486ea1a13155f59f8f02d385761206df05c0b7db007a53b2c"
  digest = "1:e3477b53a5c2fb71a7c9688e9b3d58be702807a5a88def8b9a327259d46e4979"
  name = "golang.org/x/net"
  packages = [
    "context",
@@ -332,11 +307,11 @@
    "idna",
  ]
  pruneopts = "NUT"
  revision = "26e67e76b6c3f6ce91f7c52def5af501b4e0f3a2"
  revision = "16b79f2e4e95ea23b2bf9903c9809ff7b013ce85"

[[projects]]
  branch = "master"
  digest = "1:bc2b221d465bb28ce46e8d472ecdc424b9a9b541bd61d8c311c5f29c8dd75b1b"
  digest = "1:17ee74a4d9b6078611784b873cdbfe91892d2c73052c430724e66fcc015b6c7b"
  name = "golang.org/x/oauth2"
  packages = [
    ".",
@@ -346,18 +321,18 @@
    "jwt",
  ]
  pruneopts = "NUT"
  revision = "d2e6202438beef2727060aa7cabdd924d92ebfd9"
  revision = "e64efc72b421e893cbf63f17ba2221e7d6d0b0f3"

[[projects]]
  branch = "master"
  digest = "1:44261e94b6095310a2df925fd68632d399a00eb153b52566a7b3697f7c70638c"
  digest = "1:a0d91ab4d23badd4e64e115c6e6ba7dd56bd3cde5d287845822fb2599ac10236"
  name = "golang.org/x/sys"
  packages = [
    "unix",
    "windows",
  ]
  pruneopts = "NUT"
  revision = "1561086e645b2809fb9f8a1e2a38160bf8d53bf4"
  revision = "30e92a19ae4a77dde818b8c3d41d51e4850cba12"

[[projects]]
  digest = "1:e7071ed636b5422cc51c0e3a6cebc229d6c9fffc528814b519a980641422d619"
@@ -384,26 +359,35 @@

[[projects]]
  branch = "master"
  digest = "1:c9e7a4b4d47c0ed205d257648b0e5b0440880cb728506e318f8ac7cd36270bc4"
  digest = "1:9fdc2b55e8e0fafe4b41884091e51e77344f7dc511c5acedcfd98200003bff90"
  name = "golang.org/x/time"
  packages = ["rate"]
  pruneopts = "NUT"
  revision = "fbb02b2291d28baffd63558aa44b4b56f178d650"
  revision = "85acf8d2951cb2a3bde7632f9ff273ef0379bcbd"

[[projects]]
  branch = "master"
  digest = "1:45751dc3302c90ea55913674261b2d74286b05cdd8e3ae9606e02e4e77f4353f"
  digest = "1:e46d8e20161401a9cf8765dfa428494a3492a0b56fe114156b7da792bf41ba78"
  name = "golang.org/x/tools"
  packages = [
    "go/ast/astutil",
    "go/gcexportdata",
    "go/internal/cgo",
    "go/internal/gcimporter",
    "go/internal/packagesdriver",
    "go/packages",
    "go/types/typeutil",
    "imports",
    "internal/fastwalk",
    "internal/gopathwalk",
    "internal/module",
    "internal/semver",
  ]
  pruneopts = "NUT"
  revision = "90fa682c2a6e6a37b3a1364ce2fe1d5e41af9d6d"
  revision = "f8c04913dfb7b2339a756441456bdbe0af6eb508"

[[projects]]
  digest = "1:e2da54c7866453ac5831c61c7ec5d887f39328cac088c806553303bff4048e6f"
  digest = "1:d395d49d784dd3a11938a3e85091b6570664aa90ff2767a626565c6c130fa7e9"
  name = "google.golang.org/appengine"
  packages = [
    ".",
@@ -418,8 +402,8 @@
    "urlfetch",
  ]
  pruneopts = "NUT"
  revision = "ae0ab99deb4dc413a2b4bd6c8bdd0eb67f1e4d06"
  version = "v1.2.0"
  revision = "e9657d882bb81064595ca3b56cbe2546bbabf7b1"
  version = "v1.4.0"

[[projects]]
  digest = "1:2d1fbdc6777e5408cabeb02bf336305e724b925ff4546ded0fa8715a7267922a"
@@ -430,12 +414,12 @@
  version = "v0.9.1"

[[projects]]
  digest = "1:7c95b35057a0ff2e19f707173cc1a947fa43a6eb5c4d300d196ece0334046082"
  digest = "1:18108594151654e9e696b27b181b953f9a90b16bf14d253dd1b397b025a1487f"
  name = "gopkg.in/yaml.v2"
  packages = ["."]
  pruneopts = "NUT"
  revision = "5420a8b6744d3b0345ab293f6fcba19c978f1183"
  version = "v2.2.1"
  revision = "51d6538a90f86fe93ac480b35f37b2be17fef232"
  version = "v2.2.2"

[[projects]]
  digest = "1:8960ef753a87391086a307122d23cd5007cee93c28189437e4f1b6ed72bffc50"
@@ -476,10 +460,9 @@
  version = "kubernetes-1.11.0"

[[projects]]
  digest = "1:4b0d523ee389c762d02febbcfa0734c4530ebe87abe925db18f05422adcb33e8"
  digest = "1:83b01e3d6f85c4e911de84febd69a2d3ece614c5a4a518fbc2b5d59000645980"
  name = "k8s.io/apimachinery"
  packages = [
    "pkg/api/equality",
    "pkg/api/errors",
    "pkg/api/meta",
    "pkg/api/resource",
@@ -659,7 +642,7 @@

[[projects]]
  branch = "master"
  digest = "1:5249c83f0fb9e277b2d28c19eca814feac7ef05dc762e4deaf0a2e4b1a7c5df3"
  digest = "1:61024ed77a53ac618effed55043bf6a9afbdeb64136bd6a5b0c992d4c0363766"
  name = "k8s.io/gengo"
  packages = [
    "args",
@@ -672,15 +655,23 @@
    "types",
  ]
  pruneopts = "NUT"
  revision = "4242d8e6c5dba56827bb7bcf14ad11cda38f3991"
  revision = "0689ccc1d7d65d9dd1bedcc3b0b1ed7df91ba266"

[[projects]]
  digest = "1:c263611800c3a97991dbcf9d3bc4de390f6224aaa8ca0a7226a9d734f65a416a"
  name = "k8s.io/klog"
  packages = ["."]
  pruneopts = "NUT"
  revision = "71442cd4037d612096940ceb0f3fec3f7fff66e0"
  version = "v0.2.0"

[[projects]]
  branch = "master"
  digest = "1:a2c842a1e0aed96fd732b535514556323a6f5edfded3b63e5e0ab1bce188aa54"
  digest = "1:03a96603922fc1f6895ae083e1e16d943b55ef0656b56965351bd87e7d90485f"
  name = "k8s.io/kube-openapi"
  packages = ["pkg/util/proto"]
  pruneopts = "NUT"
  revision = "e3762e86a74c878ffed47484592986685639c2cd"
  revision = "b3a7cee44a305be0a69e1b9ac03018307287e1b0"

[solve-meta]
  analyzer-name = "dep"
@@ -689,10 +680,7 @@
    "github.com/google/go-cmp/cmp",
    "github.com/google/go-cmp/cmp/cmpopts",
    "github.com/istio/glog",
    "github.com/knative/pkg/apis/istio/v1alpha3",
    "github.com/knative/pkg/client/clientset/versioned",
    "github.com/knative/pkg/client/clientset/versioned/fake",
    "github.com/knative/pkg/signals",
    "github.com/prometheus/client_golang/prometheus",
    "github.com/prometheus/client_golang/prometheus/promhttp",
    "go.uber.org/zap",
    "go.uber.org/zap/zapcore",

@@ -45,10 +45,6 @@ required = [
  name = "github.com/google/go-cmp"
  version = "v0.2.0"

[[constraint]]
  name = "github.com/knative/pkg"
  revision = "c15d7c8f2220a7578b33504df6edefa948c845ae"

[[override]]
  name = "github.com/golang/glog"
  source = "github.com/istio/glog"
```

`Makefile` (11 changed lines)

```diff
@@ -3,9 +3,11 @@ VERSION?=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | t
VERSION_MINOR:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"' | rev | cut -d'.' -f2- | rev)
PATCH:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"' | awk -F. '{print $$NF}')
SOURCE_DIRS = cmd pkg/apis pkg/controller pkg/server pkg/logging pkg/version
LT_VERSION?=$(shell grep 'VERSION' cmd/loadtester/main.go | awk '{ print $$4 }' | tr -d '"' | head -n1)

run:
	go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info \
	-metrics-server=https://prometheus.iowa.weavedx.com \
	-metrics-server=https://prometheus.istio.weavedx.com \
	-slack-url=https://hooks.slack.com/services/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 \
	-slack-channel="devops-alerts"

@@ -29,7 +31,7 @@ test: test-fmt test-codegen
	go test ./...

helm-package:
	cd charts/ && helm package flagger/ && helm package grafana/
	cd charts/ && helm package ./*
	mv charts/*.tgz docs/
	helm repo index docs --url https://stefanprodan.github.io/flagger --merge ./docs/index.yaml

@@ -44,6 +46,7 @@ version-set:
	sed -i '' "s/flagger:$$current/flagger:$$next/g" artifacts/flagger/deployment.yaml && \
	sed -i '' "s/tag: $$current/tag: $$next/g" charts/flagger/values.yaml && \
	sed -i '' "s/appVersion: $$current/appVersion: $$next/g" charts/flagger/Chart.yaml && \
	sed -i '' "s/version: $$current/version: $$next/g" charts/flagger/Chart.yaml && \
	echo "Version $$next set in code, deployment and charts"

version-up:
@@ -77,3 +80,7 @@ reset-test:
	kubectl delete -f ./artifacts/namespaces
	kubectl apply -f ./artifacts/namespaces
	kubectl apply -f ./artifacts/canaries

loadtester-push:
	docker build -t quay.io/stefanprodan/flagger-loadtester:$(LT_VERSION) . -f Dockerfile.loadtester
	docker push quay.io/stefanprodan/flagger-loadtester:$(LT_VERSION)
```

424

README.md

@@ -8,9 +8,38 @@

|

||||

|

||||

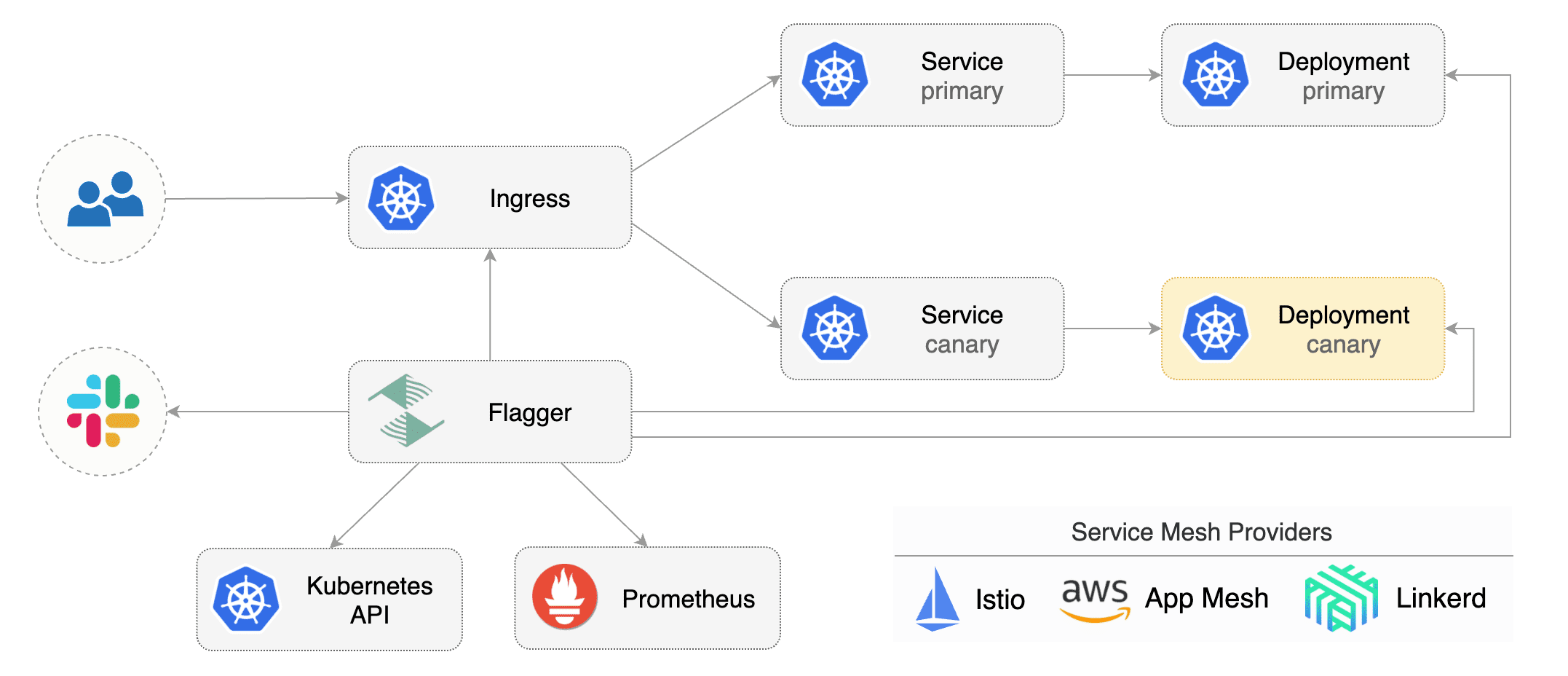

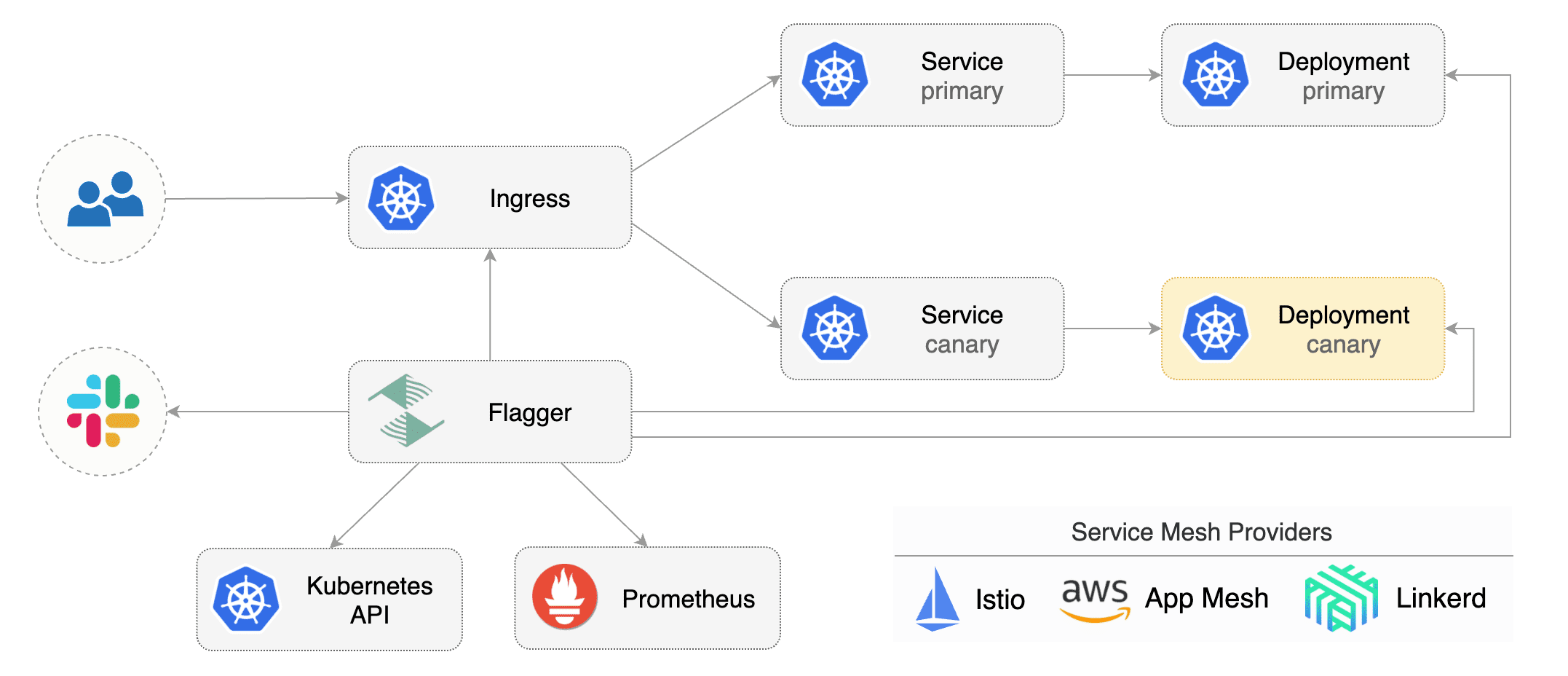

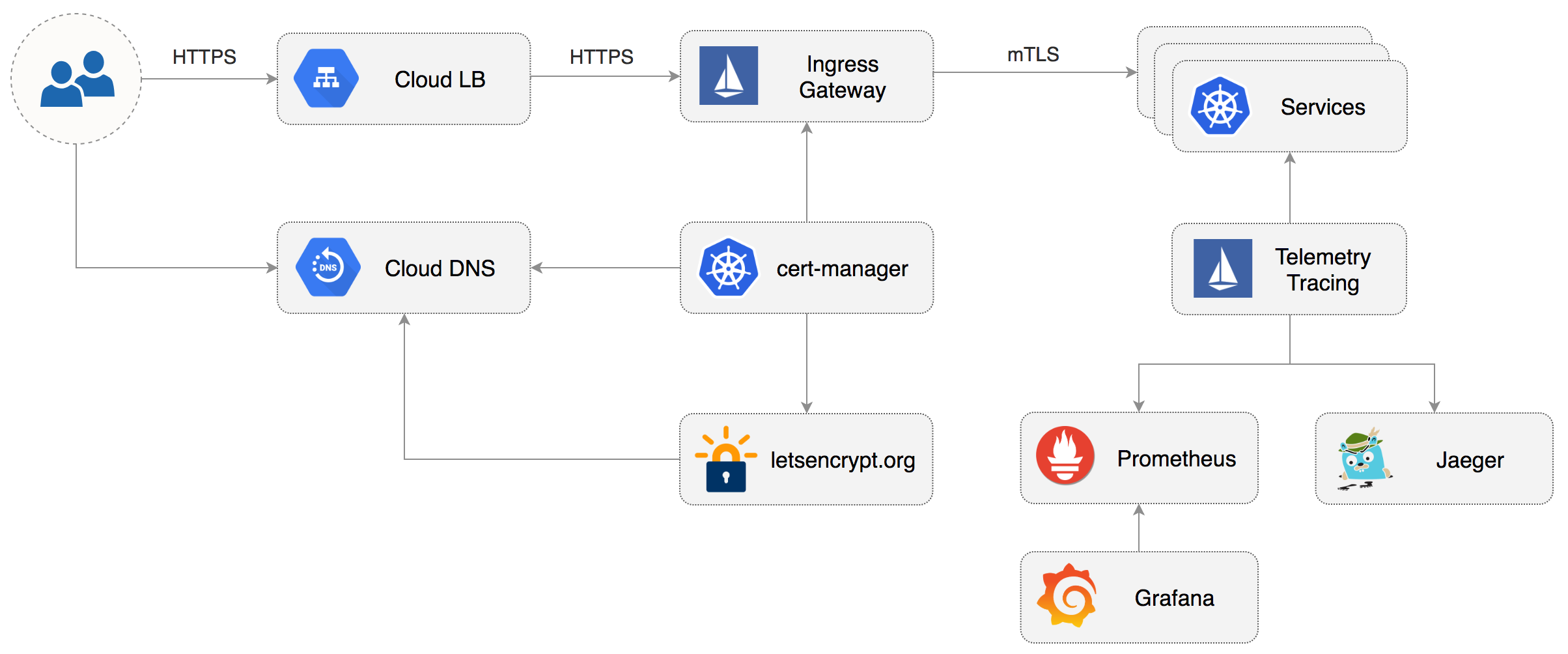

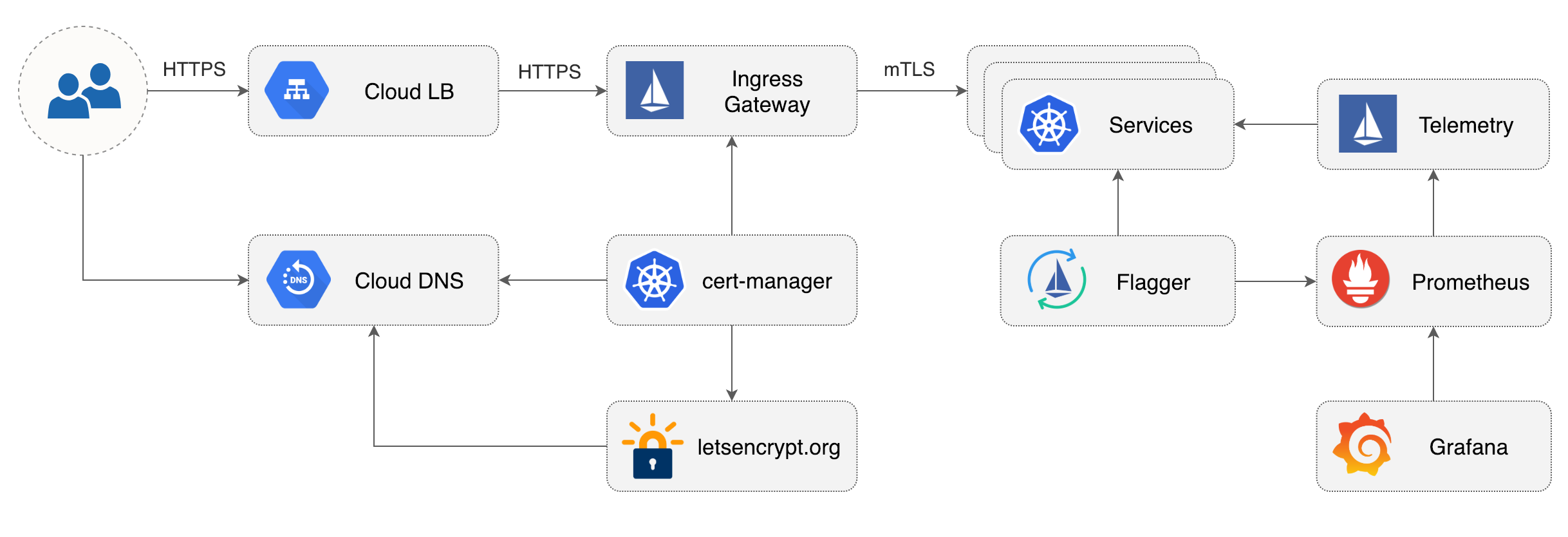

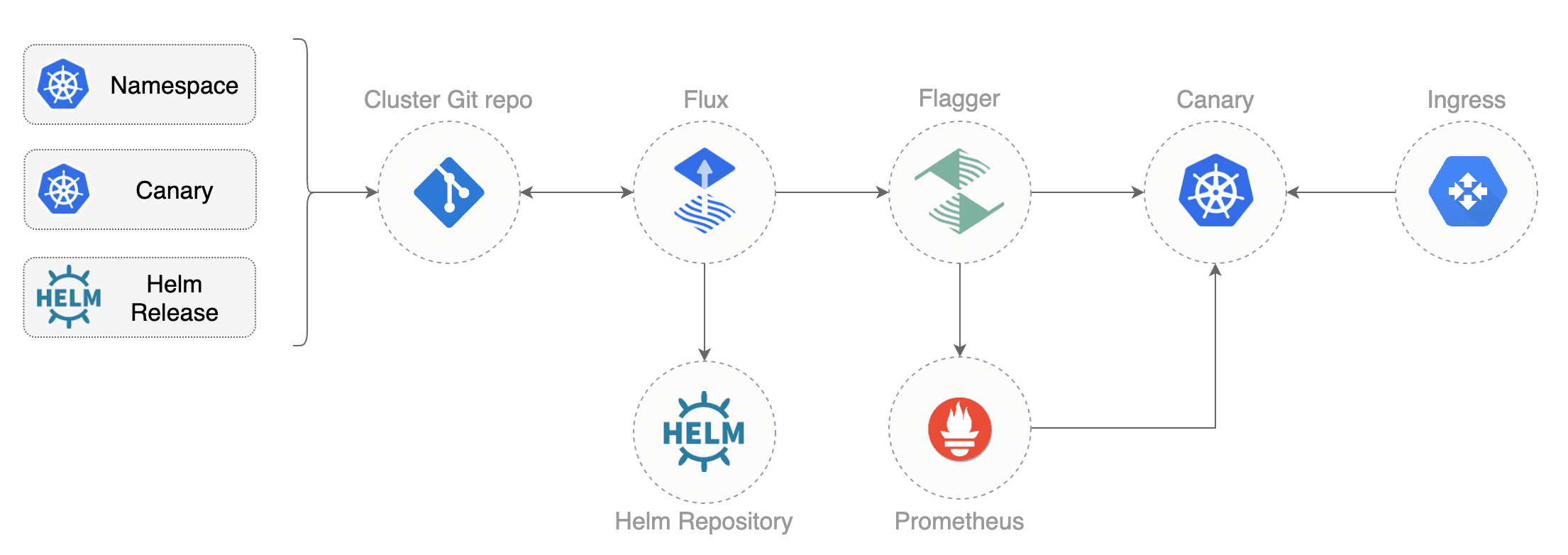

Flagger is a Kubernetes operator that automates the promotion of canary deployments

|

||||

using Istio routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

The canary analysis can be extended with webhooks for running integration tests,

|

||||

The canary analysis can be extended with webhooks for running acceptance tests,

|

||||

load tests or any other custom validation.

|

||||

|

||||

Flagger implements a control loop that gradually shifts traffic to the canary while measuring key performance

|

||||

indicators like HTTP requests success rate, requests average duration and pods health.

|

||||

Based on analysis of the KPIs a canary is promoted or aborted, and the analysis result is published to Slack.

|

||||

|

||||

|

||||

|

||||

### Documentation

|

||||

|

||||

Flagger documentation can be found at [docs.flagger.app](https://docs.flagger.app)

|

||||

|

||||

* Install

|

||||

* [Flagger install on Kubernetes](https://docs.flagger.app/install/flagger-install-on-kubernetes)

|

||||

* [Flagger install on GKE](https://docs.flagger.app/install/flagger-install-on-google-cloud)

|

||||

* How it works

|

||||

* [Canary custom resource](https://docs.flagger.app/how-it-works#canary-custom-resource)

|

||||

* [Routing](https://docs.flagger.app/how-it-works#istio-routing)

|

||||

* [Canary deployment stages](https://docs.flagger.app/how-it-works#canary-deployment)

|

||||

* [Canary analysis](https://docs.flagger.app/how-it-works#canary-analysis)

|

||||

* [HTTP metrics](https://docs.flagger.app/how-it-works#http-metrics)

|

||||

* [Custom metrics](https://docs.flagger.app/how-it-works#custom-metrics)

|

||||

* [Webhooks](https://docs.flagger.app/how-it-works#webhooks)

|

||||

* [Load testing](https://docs.flagger.app/how-it-works#load-testing)

|

||||

* Usage

|

||||

* [Canary promotions and rollbacks](https://docs.flagger.app/usage/progressive-delivery)

|

||||

* [Monitoring](https://docs.flagger.app/usage/monitoring)

|

||||

* [Alerting](https://docs.flagger.app/usage/alerting)

|

||||

* Tutorials

|

||||

* [Canary deployments with Helm charts and Weave Flux](https://docs.flagger.app/tutorials/canary-helm-gitops)

|

||||

|

||||

### Install

|

||||

|

||||

Before installing Flagger make sure you have Istio setup up with Prometheus enabled.

|

||||

@@ -30,46 +59,14 @@ helm upgrade -i flagger flagger/flagger \

|

||||

|

||||

Flagger is compatible with Kubernetes >1.11.0 and Istio >1.0.0.

|

||||

|

||||

### Usage

|

||||

### Canary CRD

|

||||

|

||||

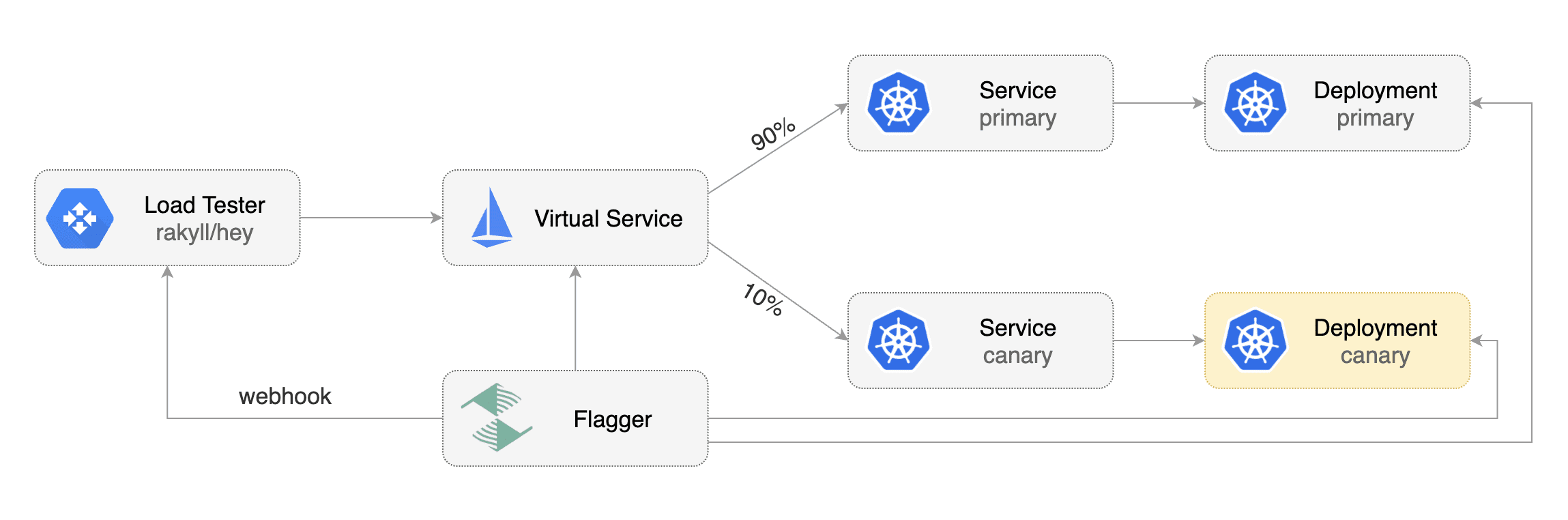

Flagger takes a Kubernetes deployment and creates a series of objects

|

||||

(Kubernetes [deployments](https://kubernetes.io/docs/concepts/workloads/controllers/deployment/),

|

||||

ClusterIP [services](https://kubernetes.io/docs/concepts/services-networking/service/) and

|

||||

Istio [virtual services](https://istio.io/docs/reference/config/istio.networking.v1alpha3/#VirtualService))

|

||||

to drive the canary analysis and promotion.

|

||||

Flagger takes a Kubernetes deployment and optionally a horizontal pod autoscaler (HPA),

|

||||

then creates a series of objects (Kubernetes deployments, ClusterIP services and Istio virtual services).

|

||||

These objects expose the application on the mesh and drive the canary analysis and promotion.

|

||||

|

||||

|

||||

|

||||

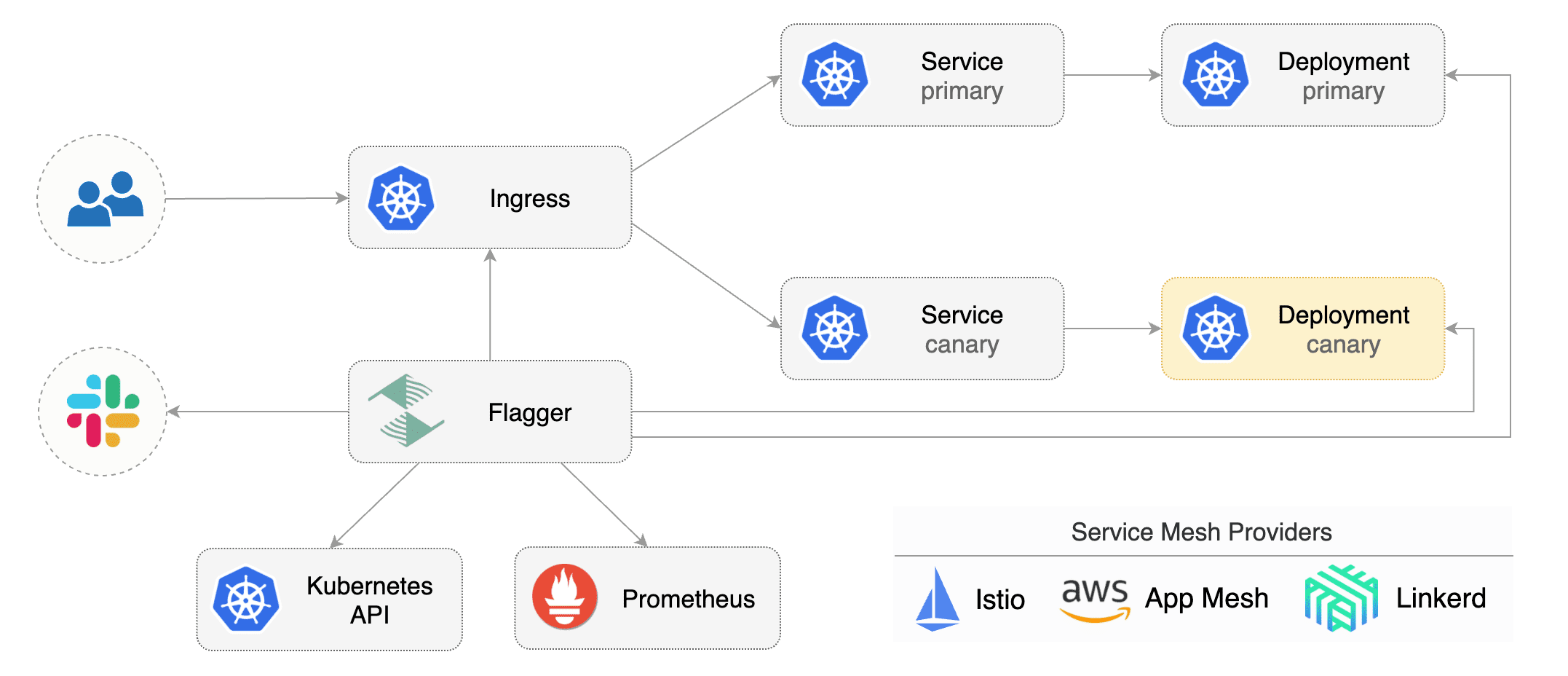

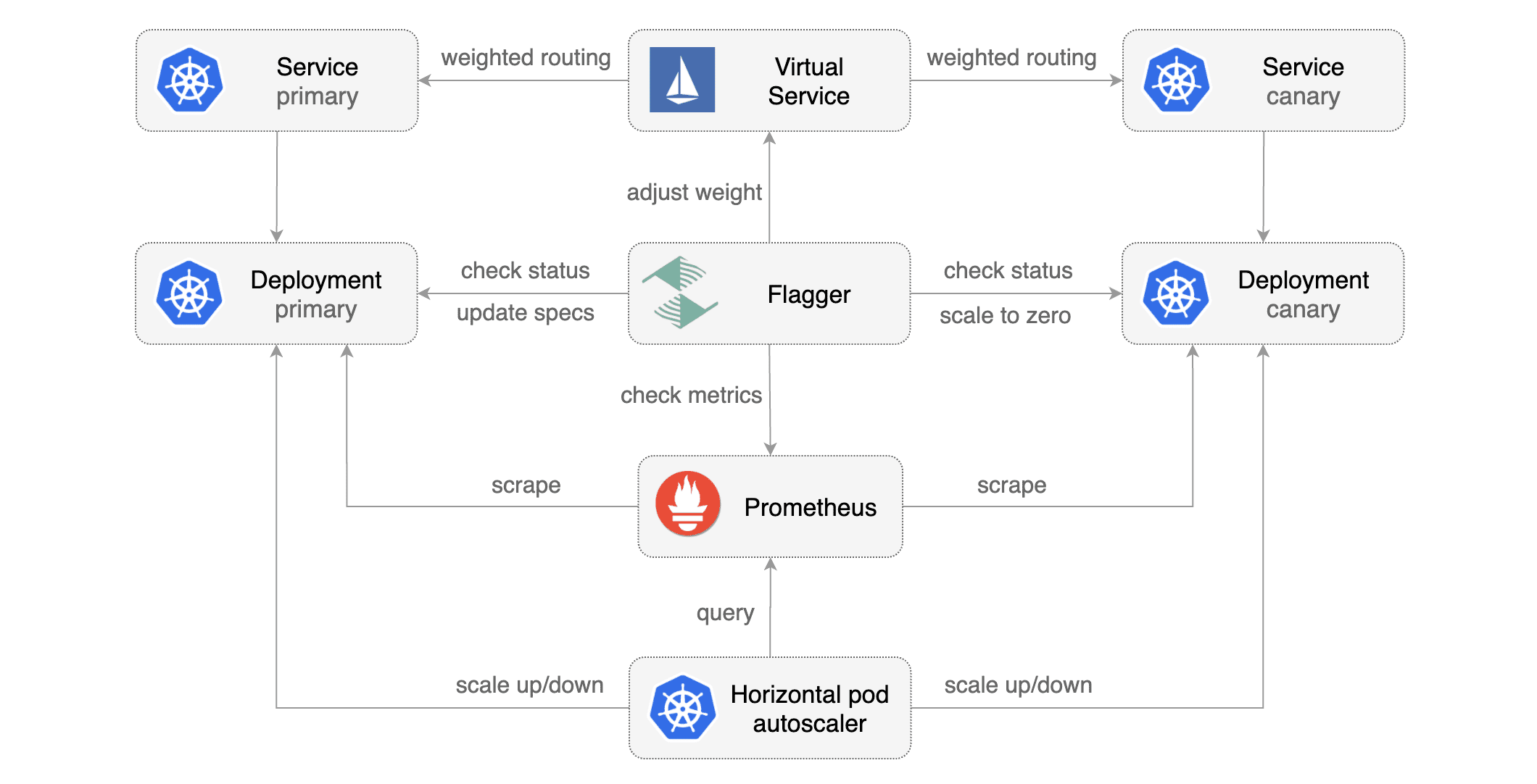

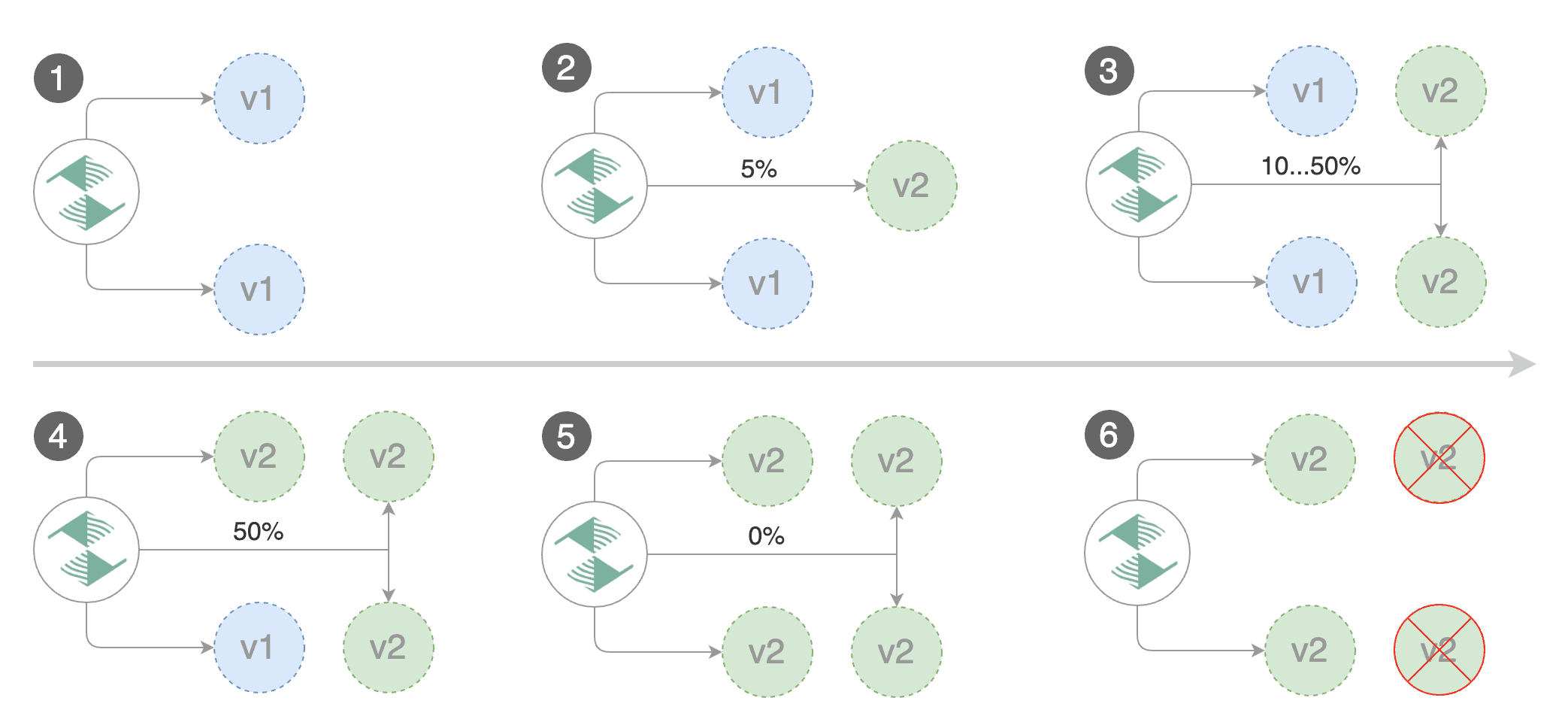

Gated canary promotion stages:

|

||||

|

||||

* scan for canary deployments

|

||||

* check Istio virtual service routes are mapped to primary and canary ClusterIP services

|

||||

* check primary and canary deployments status

|

||||

* halt advancement if a rolling update is underway

|

||||

* halt advancement if pods are unhealthy

|

||||

* increase canary traffic weight percentage from 0% to 5% (step weight)

|

||||

* check canary HTTP request success rate and latency

|

||||

* halt advancement if any metric is under the specified threshold

|

||||

* increment the failed checks counter

|

||||

* check if the number of failed checks reached the threshold

|

||||

* route all traffic to primary

|

||||

* scale to zero the canary deployment and mark it as failed

|

||||

* wait for the canary deployment to be updated (revision bump) and start over

|

||||

* increase canary traffic weight by 5% (step weight) till it reaches 50% (max weight)

|

||||

* halt advancement while canary request success rate is under the threshold

|

||||

* halt advancement while canary request duration P99 is over the threshold

|

||||

* halt advancement if the primary or canary deployment becomes unhealthy

|

||||

* halt advancement while canary deployment is being scaled up/down by HPA

|

||||

* promote canary to primary

|

||||

* copy canary deployment spec template over primary

|

||||

* wait for primary rolling update to finish

|

||||

* halt advancement if pods are unhealthy

|

||||

* route all traffic to primary

|

||||

* scale to zero the canary deployment

|

||||

* mark rollout as finished

|

||||

* wait for the canary deployment to be updated (revision bump) and start over
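The gated stages above amount to a small state machine that is evaluated once per analysis `interval`. The Go sketch below models only the weight and failed-check bookkeeping. It uses this README's example values: step weight 5, max weight 50, and a threshold of 10 failed checks. The `Canary` type and `advance` method are hypothetical. They leave out the Kubernetes and Istio calls, pod health checks and HPA handling that the real controller performs.

```go
package main

import "fmt"

// Canary is a hypothetical, stripped-down view of the analysis state that
// is carried from one schedule interval to the next.
type Canary struct {
	StepWeight   int    // traffic percentage added after each passing interval
	MaxWeight    int    // canary weight at which the canary gets promoted
	Threshold    int    // failed checks tolerated before rolling back
	Weight       int    // current canary traffic weight (0-100)
	FailedChecks int    // failed checks counted so far
	Phase        string // "Progressing", "Succeeded" or "Failed"
}

// advance runs one analysis interval. checksPass reports whether all
// metric and webhook checks passed during the interval.
func (c *Canary) advance(checksPass bool) {
	if c.Phase != "Progressing" {
		return // wait for a new revision to start over
	}
	if !checksPass {
		// halt advancement and count the failure
		c.FailedChecks++
		if c.FailedChecks >= c.Threshold {
			// route all traffic back to the primary and mark the canary as failed
			c.Weight = 0
			c.Phase = "Failed"
		}
		return
	}
	if c.Weight >= c.MaxWeight {
		// promote: copy the canary spec over the primary and route all traffic to it
		c.Weight = 0
		c.Phase = "Succeeded"
		return
	}
	c.Weight += c.StepWeight
	if c.Weight > c.MaxWeight {
		c.Weight = c.MaxWeight
	}
}

func main() {
	c := &Canary{StepWeight: 5, MaxWeight: 50, Threshold: 10, Phase: "Progressing"}
	for c.Phase == "Progressing" {
		c.advance(true) // assume every check passes
		fmt.Println("canary weight", c.Weight, "phase", c.Phase)
	}
}
```

With all checks passing, the weight climbs from 5 to 50 in ten intervals and the eleventh interval promotes the canary. A failing interval leaves the weight unchanged and only increments the counter.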

You can change the canary analysis _max weight_ and _step weight_ percentages in Flagger's custom resource.
Flagger keeps track of ConfigMaps and Secrets referenced by a Kubernetes Deployment and triggers a canary analysis if any of those objects change.
When promoting a workload in production, both code (container images) and configuration (config maps and secrets) are synchronised.
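One way to detect such configuration changes is to fingerprint the data of every referenced ConfigMap and Secret. A new analysis then starts whenever the fingerprint differs from the last one recorded. The sketch below is an assumption about how this could work, not Flagger's implementation. `configChecksum` is a hypothetical helper, and the inputs are plain string maps rather than Kubernetes objects (Secret data would be bytes in practice).

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"fmt"
	"sort"
)

// configChecksum fingerprints the data of the referenced ConfigMaps and
// Secrets. Names and keys are sorted so the result does not depend on Go's
// random map iteration order, and every field is length-prefixed so that
// different inputs cannot produce the same byte stream.
func configChecksum(objects map[string]map[string]string) string {
	names := make([]string, 0, len(objects))
	for name := range objects {
		names = append(names, name)
	}
	sort.Strings(names)

	h := sha256.New()
	for _, name := range names {
		data := objects[name]
		keys := make([]string, 0, len(data))
		for k := range data {
			keys = append(keys, k)
		}
		sort.Strings(keys)
		// object header: name and number of entries
		fmt.Fprintf(h, "%d:%s%d;", len(name), name, len(keys))
		for _, k := range keys {
			fmt.Fprintf(h, "%d:%s%d:%s", len(k), k, len(data[k]), data[k])
		}
	}
	return hex.EncodeToString(h.Sum(nil))
}

func main() {
	// hypothetical objects referenced by the podinfo deployment
	last := configChecksum(map[string]map[string]string{
		"configmap/podinfo-config": {"LOG_LEVEL": "info"},
		"secret/podinfo-secret":    {"token": "s3cr3t"},
	})
	current := configChecksum(map[string]map[string]string{
		"configmap/podinfo-config": {"LOG_LEVEL": "debug"},
		"secret/podinfo-secret":    {"token": "s3cr3t"},
	})
	if current != last {
		fmt.Println("configuration changed: start a new canary analysis")
	}
}
```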

For a deployment named _podinfo_, a canary promotion can be defined using Flagger's custom resource:

@@ -102,6 +99,27 @@ spec:
# Istio virtual service host names (optional)
hosts:
- podinfo.example.com
# HTTP match conditions (optional)
match:
- uri:
prefix: /
# HTTP rewrite (optional)
rewrite:
uri: /
# Envoy timeout and retry policy (optional)
headers:
request:
add:
x-envoy-upstream-rq-timeout-ms: "15000"
x-envoy-max-retries: "10"
x-envoy-retry-on: "gateway-error,connect-failure,refused-stream"
# cross-origin resource sharing policy (optional)
corsPolicy:
allowOrigin:
- example.com
# promote the canary without analysing it (default false)
skipAnalysis: false
# define the canary analysis timing and KPIs
canaryAnalysis:
# schedule interval (default 60s)
interval: 1m
@@ -115,6 +133,7 @@ spec:
stepWeight: 5
# Istio Prometheus checks
metrics:
# builtin Istio checks
- name: istio_requests_total
# minimum req success rate (non 5xx responses)
# percentage (0-100)
@@ -125,312 +144,32 @@ spec:
# milliseconds
threshold: 500
interval: 30s
# custom check
- name: "kafka lag"
threshold: 100
query: |
avg_over_time(
kafka_consumergroup_lag{
consumergroup=~"podinfo-consumer-.*",
topic="podinfo"
}[1m]
)
# external checks (optional)
webhooks:
- name: integration-tests
url: http://podinfo.test:9898/echo
timeout: 1m
- name: load-test
url: http://flagger-loadtester.test/
timeout: 5s
metadata:
test: "all"
token: "16688eb5e9f289f1991c"
cmd: "hey -z 1m -q 10 -c 2 http://podinfo.test:9898/"
```

The canary analysis uses the following PromQL queries:

_HTTP requests success rate percentage_

```sql
sum(
    rate(
        istio_requests_total{
          reporter="destination",
          destination_workload_namespace=~"$namespace",
          destination_workload=~"$workload",
          response_code!~"5.*"
        }[$interval]
    )
)
/
sum(
    rate(
        istio_requests_total{
          reporter="destination",
          destination_workload_namespace=~"$namespace",
          destination_workload=~"$workload"
        }[$interval]
    )
)
```
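The success-rate query divides the rate of non-5xx responses by the rate of all responses. As written it yields a ratio between 0 and 1. This sketch scales that ratio to a percentage before comparing it with the `threshold` (99) from the canary spec. `successRate` is a hypothetical helper and the request rates are made-up inputs.

```go
package main

import "fmt"

// successRate converts the two request rates selected by the query
// (non-5xx responses and all responses) into a percentage. ok is false
// when there was no traffic, since the division has no meaningful result.
func successRate(non5xxRate, totalRate float64) (pct float64, ok bool) {
	if totalRate == 0 {
		return 0, false
	}
	return non5xxRate / totalRate * 100, true
}

func main() {
	// hypothetical rates: 19.5 req/s without a 5xx status out of 20 req/s
	pct, ok := successRate(19.5, 20)
	threshold := 99.0 // minimum success rate from the canary spec
	fmt.Printf("success rate %.2f%% (data: %v), check passed: %v\n", pct, ok, ok && pct >= threshold)
}
```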

_HTTP requests milliseconds duration P99_

```sql
histogram_quantile(0.99,
  sum(
    irate(
      istio_request_duration_seconds_bucket{
        reporter="destination",
        destination_workload=~"$workload",
        destination_workload_namespace=~"$namespace"
      }[$interval]
    )
  ) by (le)
)
```

The canary analysis can be extended with webhooks.
Flagger will call each webhook (HTTP POST) and determine from the response status code whether the canary is failing: a 2xx response means the check passed.

Webhook payload:

```json
{
  "name": "podinfo",
  "namespace": "test",
  "metadata": {
    "test": "all",
    "token": "16688eb5e9f289f1991c"
  }
}
```

|

||||

|

||||

### Automated canary analysis, promotions and rollbacks

|

||||

|

||||

Create a test namespace with Istio sidecar injection enabled:

|

||||

|

||||

```bash

|

||||

export REPO=https://raw.githubusercontent.com/stefanprodan/flagger/master

|

||||

|

||||

kubectl apply -f ${REPO}/artifacts/namespaces/test.yaml

|

||||

```

|

||||

|

||||

Create a deployment and a horizontal pod autoscaler:

|

||||

|

||||

```bash

|

||||

kubectl apply -f ${REPO}/artifacts/canaries/deployment.yaml

|

||||

kubectl apply -f ${REPO}/artifacts/canaries/hpa.yaml

|

||||

```

|

||||

|

||||

Create a canary promotion custom resource (replace the Istio gateway and the internet domain with your own):

|

||||

|

||||

```bash

|

||||

kubectl apply -f ${REPO}/artifacts/canaries/canary.yaml

|

||||

```

|

||||

|

||||

After a couple of seconds Flagger will create the canary objects:

|

||||

|

||||

```bash

|

||||

# applied

|

||||

deployment.apps/podinfo

|

||||

horizontalpodautoscaler.autoscaling/podinfo

|

||||

canary.flagger.app/podinfo

|

||||

# generated

|

||||

deployment.apps/podinfo-primary

|

||||

horizontalpodautoscaler.autoscaling/podinfo-primary

|

||||

service/podinfo

|

||||

service/podinfo-canary

|

||||

service/podinfo-primary

|

||||

virtualservice.networking.istio.io/podinfo

|

||||

```

|

||||

|

||||

|

||||

|

||||

Trigger a canary deployment by updating the container image:

|

||||

|

||||

```bash

|

||||

kubectl -n test set image deployment/podinfo \

|

||||

podinfod=quay.io/stefanprodan/podinfo:1.2.1

|

||||

```

|

||||

|

||||

Flagger detects that the deployment revision changed and starts a new canary analysis:

|

||||

|

||||

```

|

||||

kubectl -n test describe canary/podinfo

|

||||

|

||||

Status:

|

||||

Canary Weight: 0

|

||||

Failed Checks: 0

|

||||

Last Transition Time: 2019-01-16T13:47:16Z

|

||||

Phase: Succeeded

|

||||

Events:

|

||||

Type Reason Age From Message

|

||||

---- ------ ---- ---- -------

|

||||

Normal Synced 3m flagger New revision detected podinfo.test

|

||||

Normal Synced 3m flagger Scaling up podinfo.test

|

||||

Warning Synced 3m flagger Waiting for podinfo.test rollout to finish: 0 of 1 updated replicas are available

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 5

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 10

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 15

|

||||

Normal Synced 2m flagger Advance podinfo.test canary weight 20

|

||||

Normal Synced 2m flagger Advance podinfo.test canary weight 25

|

||||

Normal Synced 1m flagger Advance podinfo.test canary weight 30

|

||||

Normal Synced 1m flagger Advance podinfo.test canary weight 35

|

||||

Normal Synced 55s flagger Advance podinfo.test canary weight 40

|

||||

Normal Synced 45s flagger Advance podinfo.test canary weight 45

|

||||

Normal Synced 35s flagger Advance podinfo.test canary weight 50

|

||||

Normal Synced 25s flagger Copying podinfo.test template spec to podinfo-primary.test

|

||||

Warning Synced 15s flagger Waiting for podinfo-primary.test rollout to finish: 1 of 2 updated replicas are available

|

||||

Normal Synced 5s flagger Promotion completed! Scaling down podinfo.test

|

||||

```

|

||||

|

||||

You can monitor all canaries with:

|

||||

|

||||

```bash

|

||||

watch kubectl get canaries --all-namespaces

|

||||

|

||||

NAMESPACE NAME STATUS WEIGHT LASTTRANSITIONTIME

|

||||

test podinfo Progressing 5 2019-01-16T14:05:07Z

|

||||

```

|

||||

|

||||

During the canary analysis you can generate HTTP 500 errors and high latency to test if Flagger pauses the rollout.

|

||||

|

||||

Create a tester pod and exec into it:

|

||||

|

||||

```bash

|

||||

kubectl -n test run tester --image=quay.io/stefanprodan/podinfo:1.2.1 -- ./podinfo --port=9898

|

||||

kubectl -n test exec -it tester-xx-xx sh

|

||||

```

|

||||

|

||||

Generate HTTP 500 errors:

|

||||

|

||||

```bash

|

||||

watch curl http://podinfo-canary:9898/status/500

|

||||

```

|

||||

|

||||

Generate latency:

|

||||

|

||||

```bash

|

||||

watch curl http://podinfo-canary:9898/delay/1

|

||||

```

|

||||

|

||||

When the number of failed checks reaches the canary analysis threshold, the traffic is routed back to the primary,

|

||||

the canary is scaled to zero and the rollout is marked as failed.

|

||||

|

||||

```

|

||||

kubectl -n test describe canary/podinfo

|

||||

|

||||

Status:

|

||||

Canary Weight: 0

|

||||

Failed Checks: 10

|

||||

Last Transition Time: 2019-01-16T13:47:16Z

|

||||

Phase: Failed

|

||||

Events:

|

||||

Type Reason Age From Message

|

||||

---- ------ ---- ---- -------

|

||||

Normal Synced 3m flagger Starting canary deployment for podinfo.test

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 5

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 10

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 15

|

||||

Normal Synced 3m flagger Halt podinfo.test advancement success rate 69.17% < 99%

|

||||

Normal Synced 2m flagger Halt podinfo.test advancement success rate 61.39% < 99%

|

||||

Normal Synced 2m flagger Halt podinfo.test advancement success rate 55.06% < 99%

|

||||

Normal Synced 2m flagger Halt podinfo.test advancement success rate 47.00% < 99%

|

||||

Normal Synced 2m flagger (combined from similar events): Halt podinfo.test advancement success rate 38.08% < 99%

|

||||

Warning Synced 1m flagger Rolling back podinfo.test failed checks threshold reached 10

|

||||

Warning Synced 1m flagger Canary failed! Scaling down podinfo.test

|

||||

```

|

||||

|

||||

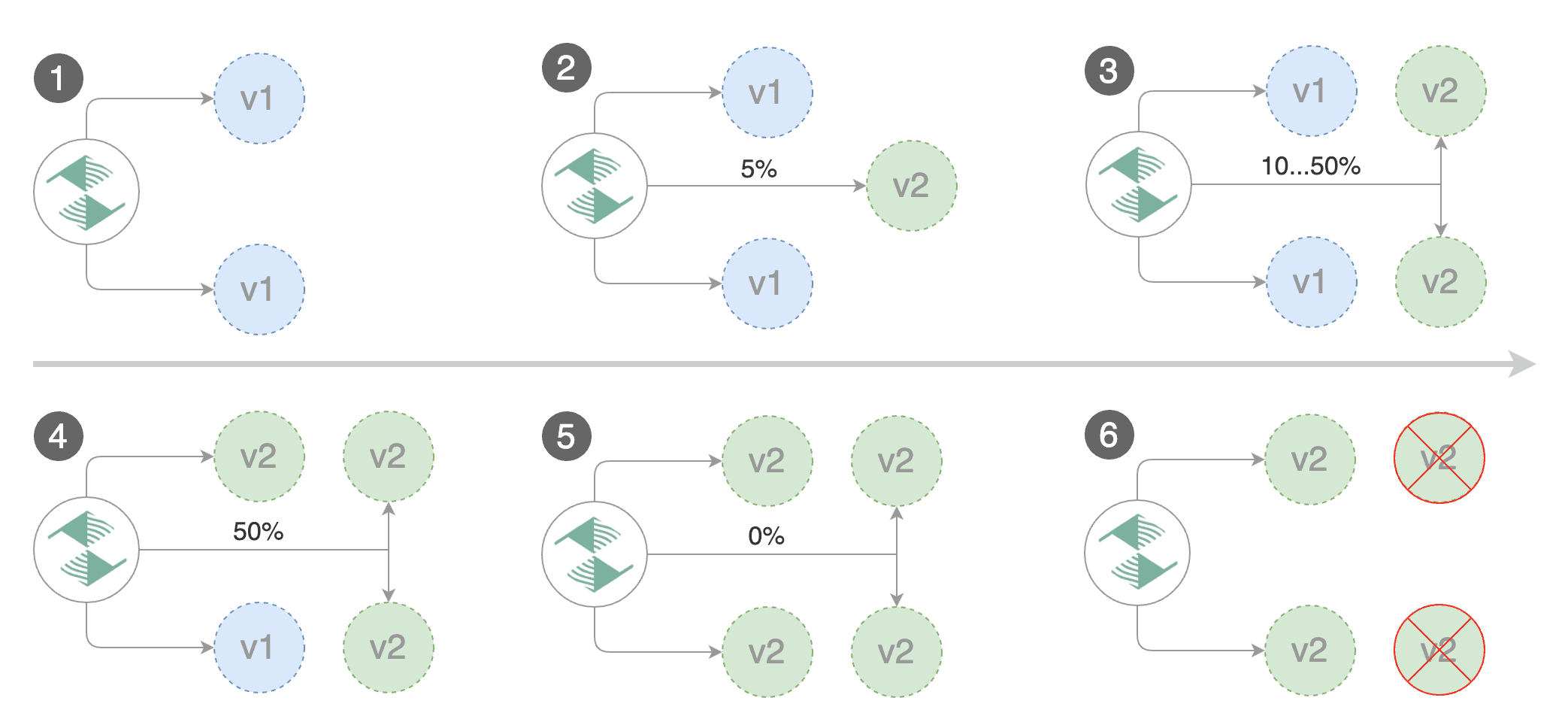

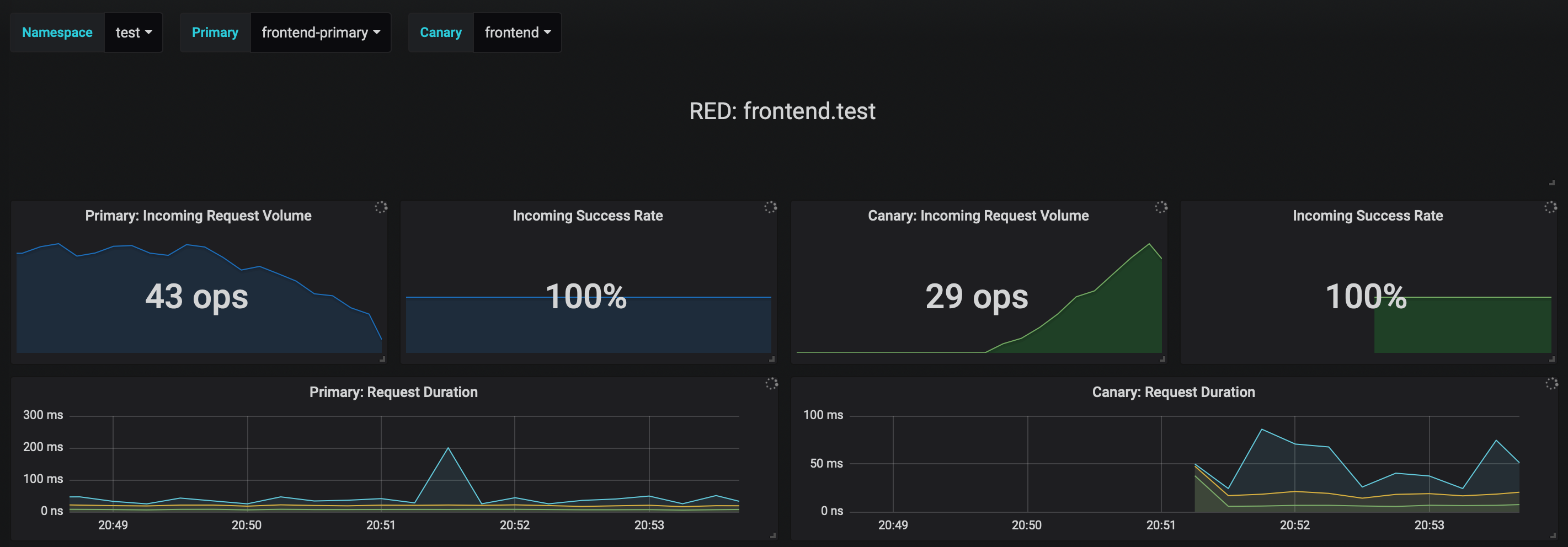

### Monitoring

|

||||

|

||||

Flagger comes with a Grafana dashboard made for canary analysis.

|

||||

|

||||

Install Grafana with Helm:

|

||||

|

||||

```bash

|

||||

helm upgrade -i flagger-grafana flagger/grafana \

|

||||

--namespace=istio-system \

|

||||

--set url=http://prometheus.istio-system:9090

|

||||

```

|

||||

|

||||

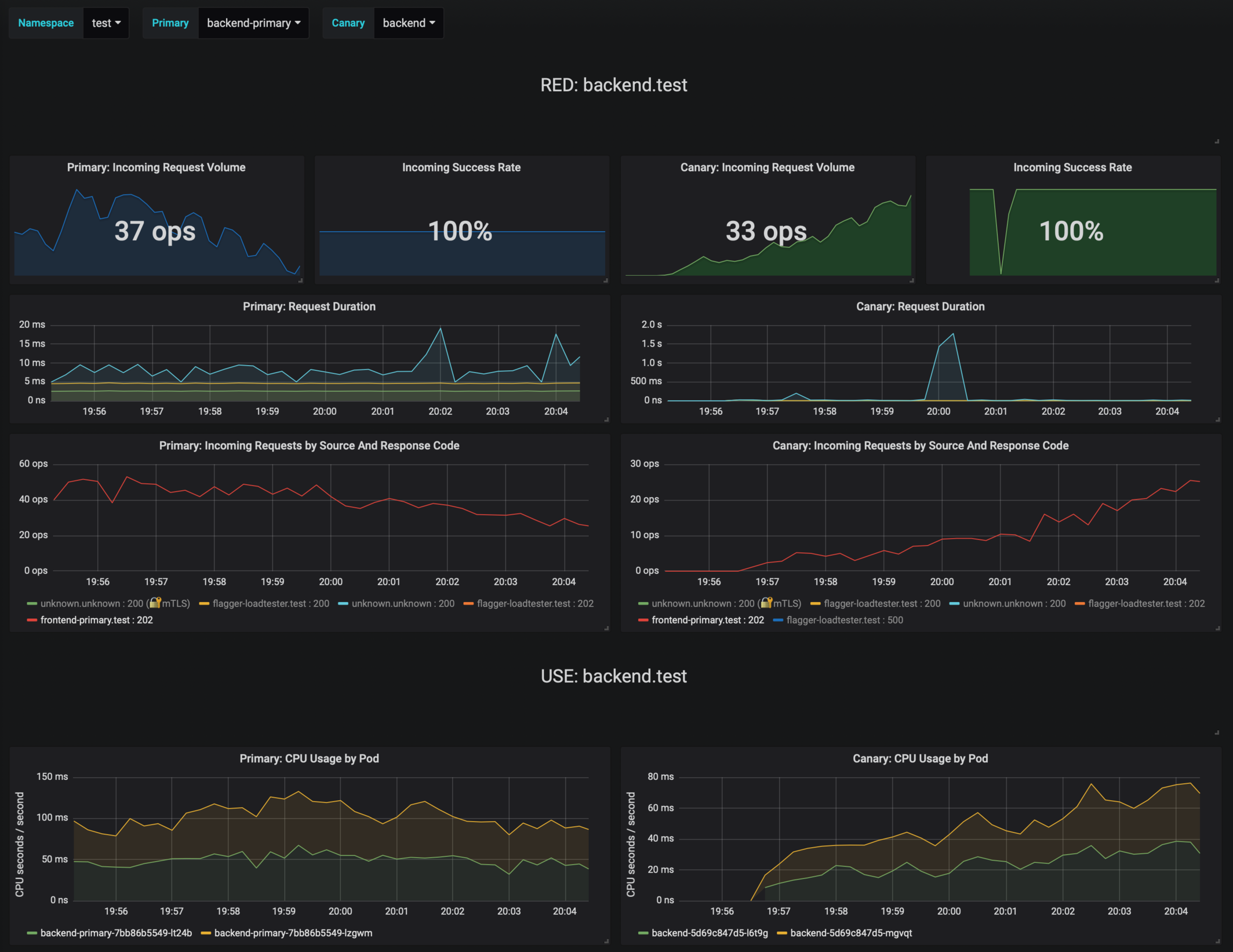

The dashboard shows the RED and USE metrics for the primary and canary workloads:

|

||||

|

||||

|

||||

|

||||

The canary errors and latency spikes have been recorded as Kubernetes events and logged by Flagger in json format:

|

||||

|

||||

```

|

||||

kubectl -n istio-system logs deployment/flagger --tail=100 | jq .msg

|

||||

|

||||

Starting canary deployment for podinfo.test

|

||||

Advance podinfo.test canary weight 5

|

||||

Advance podinfo.test canary weight 10

|

||||

Advance podinfo.test canary weight 15

|

||||

Advance podinfo.test canary weight 20

|

||||

Advance podinfo.test canary weight 25

|

||||

Advance podinfo.test canary weight 30

|

||||

Advance podinfo.test canary weight 35

|

||||

Halt podinfo.test advancement success rate 98.69% < 99%

|

||||

Advance podinfo.test canary weight 40

|

||||

Halt podinfo.test advancement request duration 1.515s > 500ms

|

||||

Advance podinfo.test canary weight 45

|

||||

Advance podinfo.test canary weight 50

|

||||

Copying podinfo.test template spec to podinfo-primary.test

|

||||

Halt podinfo-primary.test advancement waiting for rollout to finish: 1 old replicas are pending termination

|

||||

Scaling down podinfo.test

|

||||

Promotion completed! podinfo.test

|

||||

```

|

||||

|

||||

Flagger exposes Prometheus metrics that can be used to determine the canary analysis status and the destination weight values:

|

||||

|

||||

```bash

|

||||

# Canaries total gauge

|

||||

flagger_canary_total{namespace="test"} 1

|

||||

|

||||

# Canary promotion last known status gauge

|

||||

# 0 - running, 1 - successful, 2 - failed

|

||||

flagger_canary_status{name="podinfo" namespace="test"} 1

|

||||

|

||||

# Canary traffic weight gauge

|

||||

flagger_canary_weight{workload="podinfo-primary" namespace="test"} 95

|

||||

flagger_canary_weight{workload="podinfo" namespace="test"} 5

|

||||

|

||||

# Seconds spent performing canary analysis histogram

|

||||

flagger_canary_duration_seconds_bucket{name="podinfo",namespace="test",le="10"} 6

|

||||

flagger_canary_duration_seconds_bucket{name="podinfo",namespace="test",le="+Inf"} 6

|

||||

flagger_canary_duration_seconds_sum{name="podinfo",namespace="test"} 17.3561329

|

||||

flagger_canary_duration_seconds_count{name="podinfo",namespace="test"} 6

|

||||

```

|

||||

|

||||

### Alerting

|

||||

|

||||

Flagger can be configured to send Slack notifications:

|

||||

|

||||

```bash

|

||||

helm upgrade -i flagger flagger/flagger \

|

||||

--namespace=istio-system \

|

||||

--set slack.url=https://hooks.slack.com/services/YOUR/SLACK/WEBHOOK \

|

||||

--set slack.channel=general \

|

||||

--set slack.user=flagger

|

||||

```

|

||||

|

||||

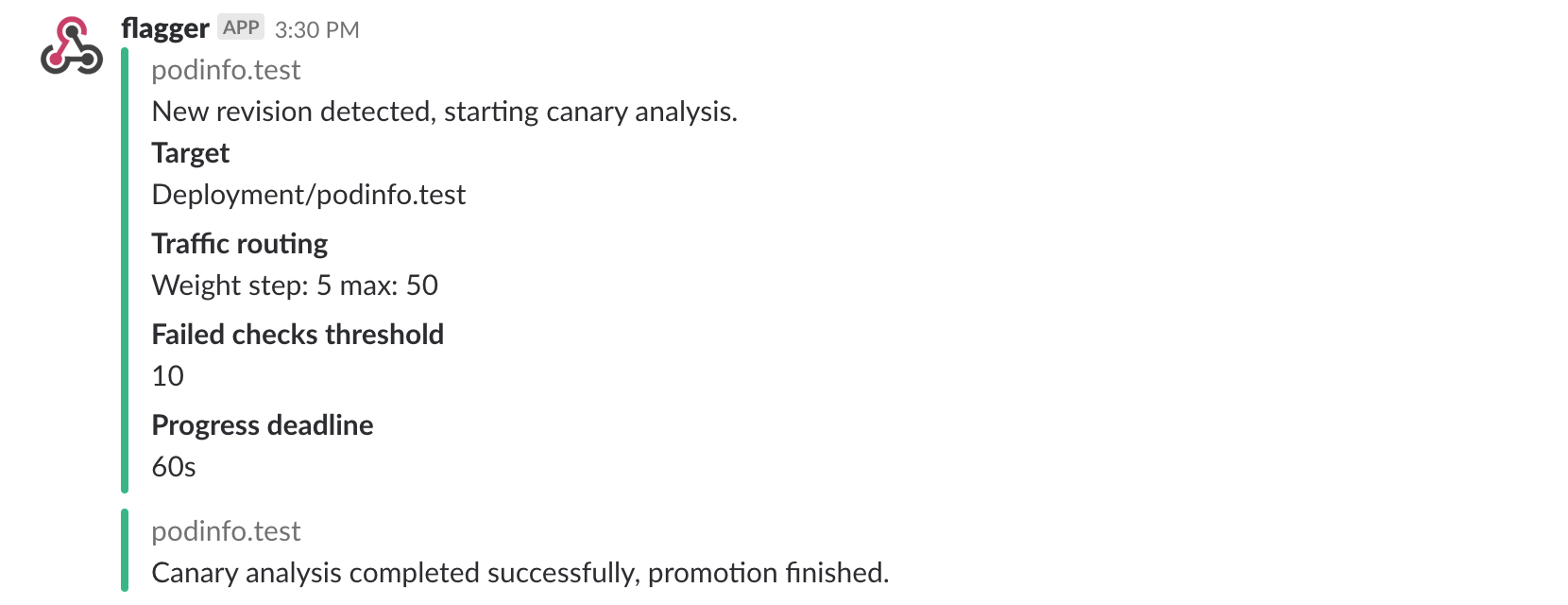

Once configured with a Slack incoming webhook, Flagger will post messages when a canary deployment has been initialized,

|

||||

when a new revision has been detected and if the canary analysis failed or succeeded.

|

||||

|

||||

|

||||

|

||||

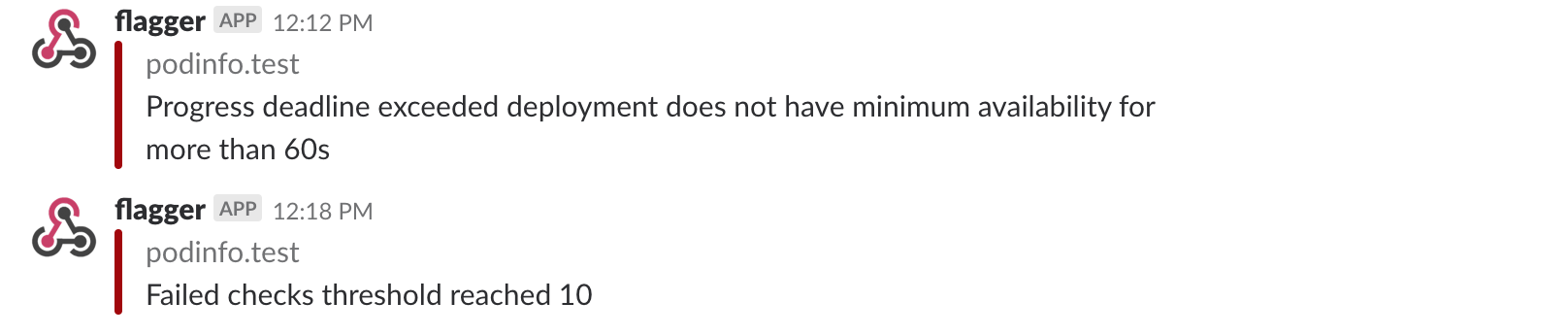

A canary deployment will be rolled back if the progress deadline exceeded or if the analysis

|

||||

reached the maximum number of failed checks:

|

||||

|

||||

|

||||

|

||||

Besides Slack, you can use Alertmanager to trigger alerts when a canary deployment failed:

|

||||

|

||||

```yaml

|

||||

- alert: canary_rollback

|

||||

expr: flagger_canary_status > 1

|

||||

for: 1m

|

||||

labels:

|

||||

severity: warning

|

||||

annotations:

|

||||

summary: "Canary failed"

|

||||

description: "Workload {{ $labels.name }} namespace {{ $labels.namespace }}"

|

||||

```

|

||||

For more details on how the canary analysis and promotion works please [read the docs](https://docs.flagger.app/how-it-works).

|

||||

|

||||

### Roadmap

|

||||

|

||||

* Extend the validation mechanism to support other metrics than HTTP success rate and latency

|

||||

* Add A/B testing capabilities using fixed routing based on HTTP headers and cookies match conditions

|

||||

* Integrate with other service mesh technologies like AWS AppMesh and Linkerd v2

|

||||

* Add support for comparing the canary metrics to the primary ones and do the validation based on the derivation between the two

|

||||

* Extend the canary analysis and promotion to other types than Kubernetes deployments such as Flux Helm releases or OpenFaaS functions

|

||||

|

||||

### Contributing

|

||||

|

||||

@@ -442,3 +181,16 @@ When submitting bug reports please include as much details as possible:

|

||||

* which Kubernetes/Istio version

|

||||

* what configuration (canary, virtual service and workloads definitions)

|

||||

* what happened (Flagger, Istio Pilot and Proxy logs)

|

||||

|

||||

### Getting Help

|

||||

|

||||

If you have any questions about Flagger and progressive delivery:

|

||||

|

||||

* Read the Flagger [docs](https://docs.flagger.app).

|

||||

* Invite yourself to the [Weave community slack](https://slack.weave.works/)

|

||||

and join the [#flagger](https://weave-community.slack.com/messages/flagger/) channel.

|

||||

* Join the [Weave User Group](https://www.meetup.com/pro/Weave/) and get invited to online talks,

|

||||

hands-on training and meetups in your area.

|

||||

* File an [issue](https://github.com/stefanprodan/flagger/issues/new).

|

||||

|

||||

Your feedback is always welcome!

|

||||

|

||||

@@ -25,7 +25,23 @@ spec:
- public-gateway.istio-system.svc.cluster.local
# Istio virtual service host names (optional)
hosts:
- app.iowa.weavedx.com
- app.istio.weavedx.com
# HTTP match conditions (optional)
match:
- uri:
prefix: /
# HTTP rewrite (optional)
rewrite:
uri: /
# Envoy timeout and retry policy (optional)
headers:
request:
add:
x-envoy-upstream-rq-timeout-ms: "15000"
x-envoy-max-retries: "10"
x-envoy-retry-on: "gateway-error,connect-failure,refused-stream"
# promote the canary without analysing it (default false)
skipAnalysis: false
canaryAnalysis:
# schedule interval (default 60s)
interval: 10s
@@ -51,9 +67,8 @@ spec:
interval: 30s
# external checks (optional)
webhooks:
- name: integration-tests
url: https://httpbin.org/post
timeout: 1m
- name: load-test
url: http://flagger-loadtester.test/
timeout: 5s
metadata:
test: "all"
token: "16688eb5e9f289f1991c"
cmd: "hey -z 1m -q 10 -c 2 http://podinfo.test:9898/"

@@ -25,7 +25,7 @@ spec:
spec:
containers:
- name: podinfod
image: quay.io/stefanprodan/podinfo:1.3.0
image: quay.io/stefanprodan/podinfo:1.4.0
imagePullPolicy: IfNotPresent
ports:
- containerPort: 9898

6

artifacts/cluster/namespaces/test.yaml