mirror of

https://github.com/fluxcd/flagger.git

synced 2026-04-15 06:57:34 +00:00

Add canary rollback scenario

@@ -6,16 +6,16 @@ description: Flagger is an Istio progressive delivery Kubernetes operator

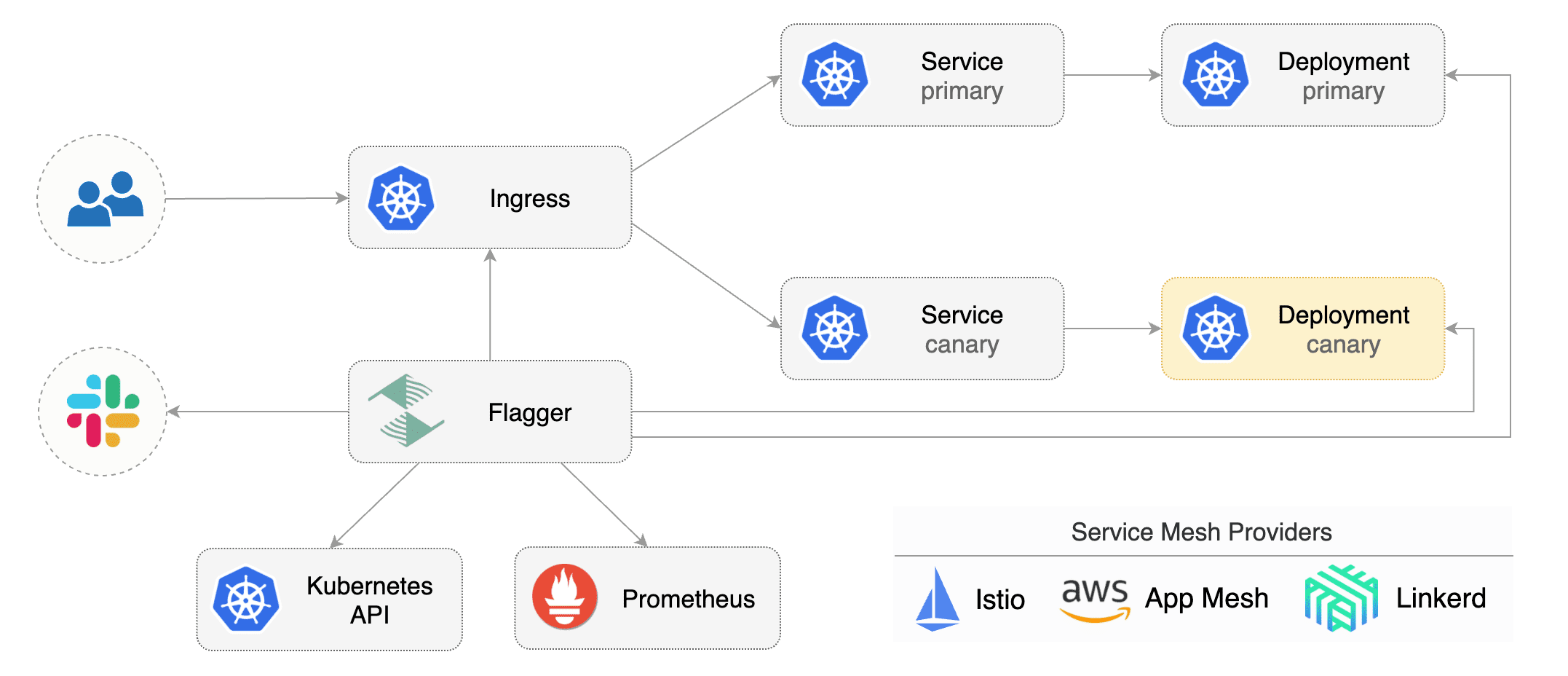

[Flagger](https://github.com/stefanprodan/flagger) is a **Kubernetes** operator that automates the promotion of canary

deployments using **Istio** routing for traffic shifting and **Prometheus** metrics for canary analysis.

The canary analysis can be extended with webhooks for running integration tests,

load tests or any other custom validation.

The canary analysis can be extended with webhooks for running

system integration/acceptance tests, load tests, or any other custom validation.

Flagger implements a control loop that gradually shifts traffic to the canary while measuring key performance

indicators like HTTP requests success rate, requests average duration and pods health.

Based on the **KPIs** analysis a canary is promoted or aborted and the analysis result is published to **Slack**.

Based on analysis of the **KPIs** a canary is promoted or aborted, and the analysis result is published to **Slack**.
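The control loop described here can be sketched roughly as follows. This is a minimal illustration in Python, not Flagger's actual implementation: the names `Kpi` and `run_canary_analysis`, the parameters `step_weight`, `max_weight` and `max_failed_checks`, and all default threshold values are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass
class Kpi:
    """One sample of the key performance indicators measured for the canary."""
    success_rate: float  # percentage of successful HTTP requests, 0..100
    duration_ms: float   # average request duration in milliseconds


def run_canary_analysis(
    samples: Iterable[Kpi],
    step_weight: int = 5,            # assumed: traffic weight added per healthy check
    max_weight: int = 50,            # assumed: weight at which the canary is promoted
    min_success_rate: float = 99.0,  # assumed threshold
    max_duration_ms: float = 500.0,  # assumed threshold
    max_failed_checks: int = 3,      # assumed: failed checks tolerated before aborting
) -> Tuple[str, List[str]]:
    """Shift traffic step by step while the KPIs stay within their thresholds."""
    weight = 0
    failed = 0
    events: List[str] = []
    for kpi in samples:
        healthy = (
            kpi.success_rate >= min_success_rate
            and kpi.duration_ms <= max_duration_ms
        )
        if not healthy:
            # Keep the current weight and count the failed check.
            failed += 1
            events.append(f"halt at weight {weight}")
            if failed >= max_failed_checks:
                events.append("rollback: route all traffic to primary")
                return "aborted", events
            continue
        weight += step_weight
        events.append(f"advance to weight {weight}")
        if weight >= max_weight:
            events.append("promote canary")
            return "promoted", events
    return "in progress", events
```

Feeding the function ten healthy samples walks the weight from 5 to 50 and ends in a promotion, while three samples outside the thresholds end in an abort.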

Flagger can be configured with Kubernetes custom resources \(canaries.flagger.app kind\) and is compatible with

Flagger can be configured with Kubernetes custom resources and is compatible with

any CI/CD solutions made for Kubernetes. Since Flagger is declarative and reacts to Kubernetes events,

it can be used in **GitOps** pipelines together with Weave Flux or JenkinsX.

@@ -16,5 +16,4 @@

# Tutorials

* [Canary Deployments with Helm](tutorials/canary-helm-chart.md)

* [Canary Deployments with Helm charts](tutorials/canary-helm-gitops.md)

@@ -1,10 +1,11 @@

# Canary Deployments with Helm charts

This guide shows you how to package a web app into a Helm chart and trigger a canary deployment on upgrade.

This guide shows you how to package a web app into a Helm chart, trigger canary deployments on Helm upgrade

and automate the chart release process with Weave Flux.

### Packaging

You'll be using the [podinfo](https://github.com/stefanprodan/flagger/tree/master/charts/podinfo) chart.

You'll be using the [podinfo](https://github.com/stefanprodan/k8s-podinfo) chart.

This chart packages a web app made with Go, its configuration, a horizontal pod autoscaler (HPA)

and the canary configuration file.

@@ -21,6 +22,8 @@ and the canary configuration file.

└── values.yaml

```

You can find the chart source [here](https://github.com/stefanprodan/flagger/tree/master/charts/podinfo).

### Install

Create a test namespace with Istio sidecar injection enabled:

@@ -50,7 +53,9 @@ helm upgrade -i frontend flagger/podinfo \

--set canary.istioIngress.host=frontend.istio.example.com

```

After a couple of seconds Flagger will create the canary objects:

Flagger takes a Kubernetes deployment and a horizontal pod autoscaler (HPA),

then creates a series of objects (Kubernetes deployments, ClusterIP services and Istio virtual services).

These objects expose the application on the mesh and drive the canary analysis and promotion.

```bash

# generated by Helm

@@ -74,7 +79,7 @@ Flagger will route all traffic to the primary pods and scale to zero the `fronte

Open your browser and navigate to the frontend URL:

Now let's install the `backend` release without exposing it outside the mesh:

@@ -99,7 +104,7 @@ frontend Initialized 0 2019-02-12T17:50:50Z

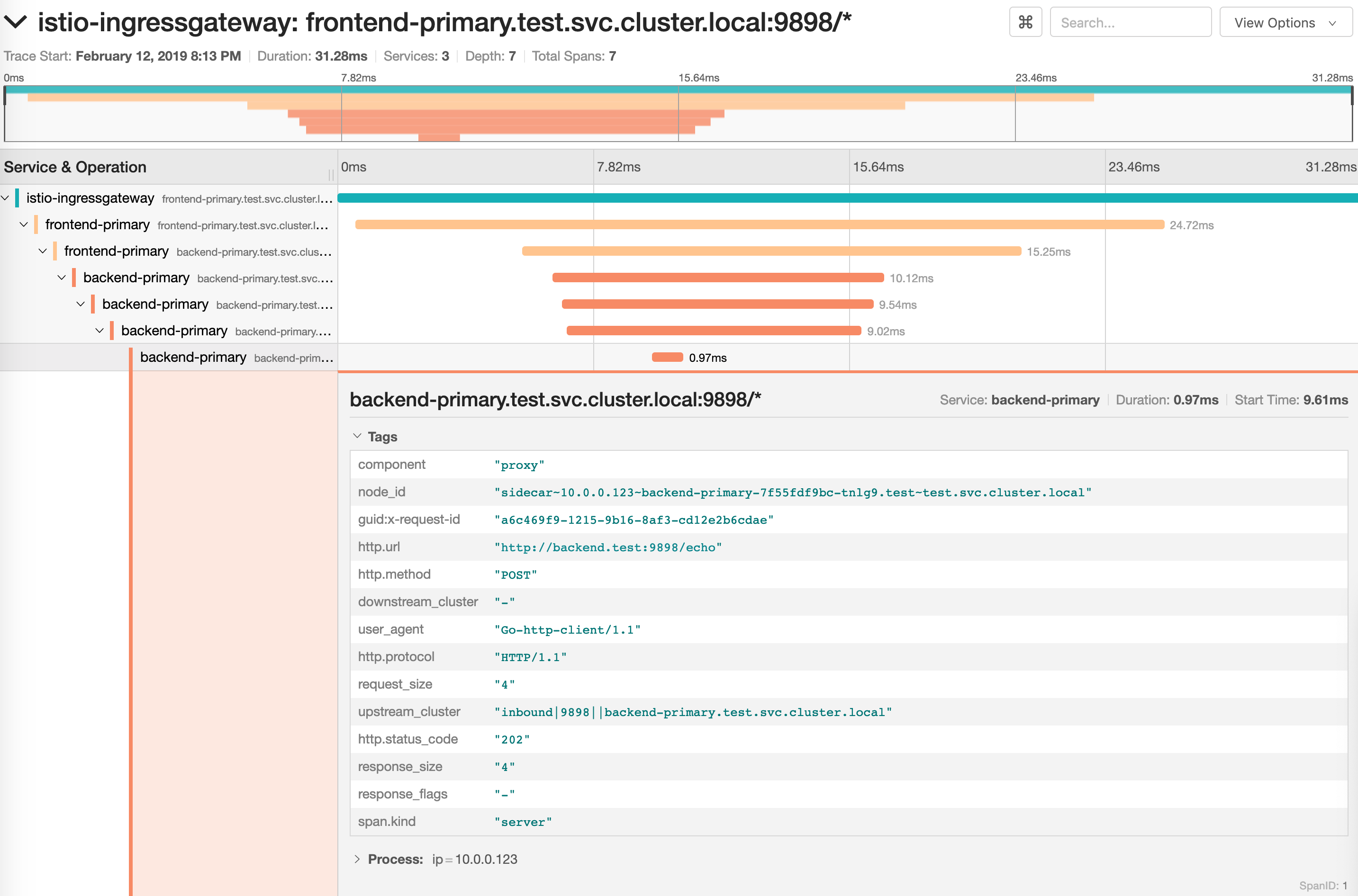

Click on the ping button in the `frontend` UI to trigger an HTTP POST request

that will reach the `backend` app:

We'll use the `/echo` endpoint (same as the one the ping button calls)

to generate load on both apps during a canary deployment.

@@ -203,8 +208,8 @@ Advance backend.test canary weight 45

Halt backend.test advancement request duration 2.415s > 500ms

Halt backend.test advancement request duration 2.42s > 500ms

Advance backend.test canary weight 50

Copying backend.test template spec to backend-primary.test

ConfigMap backend-primary synced

Copying backend.test template spec to backend-primary.test

Promotion completed! Scaling down backend.test

```

@@ -223,7 +228,6 @@ frontend Failed 0 2019-02-12T19:47:20Z

If you've enabled the Slack notifications, you'll receive an alert with the reason why the `backend` promotion failed.

### GitOps automation

Instead of using Helm CLI from a CI tool to perform the install and upgrade, you could use a Git based approach.

@@ -242,6 +246,8 @@ Create a git repository with the following content:

└── loadtester.yaml

```

You can find the git source [here](https://github.com/stefanprodan/flagger/tree/master/artifacts/cluster).

Define the `frontend` release using Flux `HelmRelease` custom resource:

```yaml

@@ -278,7 +284,7 @@ In the `chart` section I've defined the release source by specifying the Helm re

In the `values` section I've overwritten the defaults set in values.yaml.

With the `flux.weave.works` annotations I instruct Flux to automate this release.

When a image tag in the sem ver range of `1.4.0 - 1.4.99` is pushed to Quay,

When an image tag in the sem ver range of `1.4.0 - 1.4.99` is pushed to Quay,

Flux will upgrade the Helm release and from there Flagger will pick up the change and start a canary deployment.
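To make the tag filter concrete, here is a small Python sketch of what "in the range `1.4.0 - 1.4.99`" means for an image tag. It does not reproduce how Flux evaluates its filters; the helper names `parse_tag` and `in_range` are hypothetical, and the sketch assumes plain `MAJOR.MINOR.PATCH` tags without pre-release or build suffixes.

```python
import re
from typing import Optional, Tuple

Version = Tuple[int, int, int]

# Assumption: tags are plain MAJOR.MINOR.PATCH with ASCII digits only.
_TAG_PATTERN = re.compile(r"(\d+)\.(\d+)\.(\d+)", re.ASCII)


def parse_tag(tag: str) -> Optional[Version]:
    """Return (major, minor, patch) for a plain version tag, or None otherwise."""
    match = _TAG_PATTERN.fullmatch(tag)
    if match is None:
        return None
    major, minor, patch = (int(part) for part in match.groups())
    return (major, minor, patch)


def in_range(tag: str, low: str = "1.4.0", high: str = "1.4.99") -> bool:
    """Check whether a tag falls inside the inclusive range [low, high]."""
    version = parse_tag(tag)
    lower, upper = parse_tag(low), parse_tag(high)
    if version is None or lower is None or upper is None:
        return False
    # Tuples compare element by element, which gives numeric version ordering.
    return lower <= version <= upper
```

Under these assumptions a patch release such as `1.4.2` is inside the range and would trigger an upgrade, while `1.5.0` or a non-version tag such as `latest` would not.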

A CI/CD pipeline for the frontend release could look like this:

@@ -296,3 +302,14 @@ A CI/CD pipeline for the frontend release could look like this:

If the canary fails, fix the bug and do another patch release, e.g. `1.4.2`, and the whole process will run again.

There are several reasons why a canary deployment can fail:

* the container image can't be downloaded

* the deployment replica set is stuck for more than ten minutes (e.g. due to a container crash loop)

* the webhooks (acceptance tests, load tests, etc.) are returning a non-2xx response

* the HTTP success rate (non 5xx responses) metric drops under the threshold

* the HTTP average duration metric goes over the threshold

* the Istio telemetry service is unable to collect traffic metrics

* the metrics server (Prometheus) can't be reached
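The list above can be read as a series of checks over the state of a canary. The Python sketch below illustrates that reading only; it is not Flagger's code. The `CanaryStatus` fields, the `failure_reasons` function and the default thresholds are all hypothetical, and only the ten-minute deadline comes from the list itself.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CanaryStatus:
    """Hypothetical snapshot of everything the checks in the list look at."""
    image_pulled: bool = True
    seconds_without_progress: int = 0
    webhook_status_codes: List[int] = field(default_factory=list)
    metrics_server_reachable: bool = True
    success_rate: Optional[float] = None  # None: no traffic metrics collected
    duration_ms: Optional[float] = None   # None: no traffic metrics collected


def failure_reasons(
    status: CanaryStatus,
    min_success_rate: float = 99.0,   # assumed threshold
    max_duration_ms: float = 500.0,   # assumed threshold
    progress_deadline_s: int = 600,   # ten minutes, as stated in the list
) -> List[str]:
    """Return every reason from the list that applies to this snapshot."""
    reasons: List[str] = []
    if not status.image_pulled:
        reasons.append("container image can't be downloaded")
    if status.seconds_without_progress > progress_deadline_s:
        reasons.append("deployment stuck for more than ten minutes")
    if any(not 200 <= code <= 299 for code in status.webhook_status_codes):
        reasons.append("webhook returned a non-2xx response")
    if not status.metrics_server_reachable:
        reasons.append("metrics server can't be reached")
    elif status.success_rate is None or status.duration_ms is None:
        reasons.append("no traffic metrics collected")
    else:
        if status.success_rate < min_success_rate:
            reasons.append("HTTP success rate under the threshold")
        if status.duration_ms > max_duration_ms:
            reasons.append("HTTP average duration over the threshold")
    return reasons
```

An empty result means none of the listed conditions applies to the snapshot; any non-empty result corresponds to a failed check.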
