Compare commits

439 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | e7fc72e6b5 | | |
| | 4203232b05 | | |
| | a06aa05201 | | |
| | 8e582e9b73 | | |
| | 0e9fe8a446 | | |
| | 27b4bcc648 | | |
| | 614b7c74c4 | | |
| | 5901129ec6 | | |
| | ded14345b4 | | |
| | dd272c6870 | | |
| | b31c7c6230 | | |
| | b0297213c3 | | |
| | d0fba2d111 | | |
| | 9924cc2152 | | |
| | 008a74f86c | | |
| | 4ca110292f | | |
| | 55b4c19670 | | |
| | 8349dd1cda | | |
| | 402fb66b2a | | |
| | f991274b97 | | |
| | 0d94a49b6a | | |
| | 7c14225442 | | |
| | 2af0a050bc | | |
| | 582f8d6abd | | |
| | eeea3123ac | | |
| | 51fe43e169 | | |
| | 6e6b127092 | | |
| | c9bacdfe05 | | |
| | f56a69770c | | |
| | 0196124c9f | | |
| | 63756d9d5f | | |
| | 8e346960ac | | |
| | 1b485b3459 | | |
| | ee05108279 | | |
| | dfaa039c9c | | |
| | 46579d2ee6 | | |
| | f372523fb8 | | |
| | 5e434df6ea | | |
| | d6c5bdd241 | | |
| | cdcd97244c | | |
| | 60c4bba263 | | |
| | 2b73bc5e38 | | |
| | 03652dc631 | | |
| | 00155aff37 | | |
| | 206c3e6d7a | | |
| | 8345fea812 | | |
| | c11dba1e05 | | |
| | 7d4c3c5814 | | |
| | 9b36794c9d | | |
| | 1f34c656e9 | | |
| | 9982dc9c83 | | |
| | 780f3d2ab9 | | |
| | 1cb09890fb | | |
| | faae6a7c3b | | |
| | d4250f3248 | | |
| | a8ee477b62 | | |
| | 673b6102a7 | | |
| | 316de42a2c | | |
| | dfb4b35e6c | | |
| | 61ab596d1b | | |
| | 3345692751 | | |
| | dff9287c75 | | |
| | b5fb7cdae5 | | |
| | 2e79817437 | | |
| | 5f439adc36 | | |
| | 45df96ff3c | | |
| | 98ee150364 | | |
| | d328a2146a | | |
| | 4513f2e8be | | |
| | 095fef1de6 | | |
| | 754f02a30f | | |
| | 01a4e7f6a8 | | |
| | 6bba84422d | | |
| | 26190d0c6a | | |
| | 2d9098e43c | | |
| | 7581b396b2 | | |
| | 67a6366906 | | |
| | 5605fab740 | | |
| | b76d0001ed | | |
| | 625eed0840 | | |
| | 37f9151de3 | | |
| | 20af98e4dc | | |
| | 76800d0ed0 | | |
| | 3103bde7f7 | | |
| | 298d8c2d65 | | |
| | 5cdacf81e3 | | |
| | 2141d88ce1 | | |
| | e8a2d4be2e | | |
| | 9a9baadf0e | | |
| | a21e53fa31 | | |
| | 61f8aea7d8 | | |
| | e384b03d49 | | |
| | 0c60cf39f8 | | |
| | 268fa9999f | | |
| | ff7d4e747c | | |
| | 121fc57aa6 | | |
| | 991fa1cfc8 | | |
| | fb2961715d | | |
| | 74c1c2f1ef | | |
| | 4da6c1b6e4 | | |
| | fff03b170f | | |
| | 434acbb71b | | |
| | 01962c32cd | | |
| | 6b0856a054 | | |
| | 708dbd6bbc | | |
| | e3801cbff6 | | |
| | fc68635098 | | |
| | 6706ca5d65 | | |
| | 44c2fd57c5 | | |
| | a9aab3e3ac | | |
| | 6478d0b6cf | | |
| | 958af18dc0 | | |
| | 54b8257c60 | | |
| | e86f62744e | | |
| | 0734773993 | | |
| | 888cc667f1 | | |
| | 053d0da617 | | |
| | 7a4e0bc80c | | |
| | 7b7306584f | | |
| | d6027af632 | | |
| | 761746af21 | | |
| | 510a6eaaed | | |
| | 655df36913 | | |
| | 2e079ba7a1 | | |
| | 9df6bfbb5e | | |
| | 2ff86fa56e | | |
| | 1b2e0481b9 | | |
| | fe96af64e9 | | |
| | 77d8e4e4d3 | | |
| | 800b0475ee | | |
| | b58e13809c | | |
| | 9845578cdd | | |
| | 96ccfa54fb | | |
| | b8a64c79be | | |
| | 4a4c261a88 | | |
| | 8282f86d9c | | |
| | 2b6966d8e3 | | |
| | c667c947ad | | |
| | 105b28bf42 | | |
| | 37a1ff5c99 | | |
| | d19a070faf | | |
| | d908355ab3 | | |
| | a6d86f2e81 | | |
| | 9d856a4f96 | | |
| | a7112fafb0 | | |
| | 93f9e51280 | | |
| | 65e9a402cf | | |
| | f7513b33a6 | | |
| | 0b3fa517d3 | | |
| | 507075920c | | |
| | a212f032a6 | | |
| | eb8755249f | | |
| | 73bb2a9fa2 | | |
| | 5d3ffa8c90 | | |
| | 87f143f5fd | | |
| | f56b6dd6a7 | | |
| | 5e40340f9c | | |
| | 2456737df7 | | |
| | 1191d708de | | |
| | 4d26971fc7 | | |
| | 0421b32834 | | |
| | 360dd63e49 | | |
| | f1670dbe6a | | |
| | e7ad5c0381 | | |
| | 2cfe2a105a | | |
| | bc83cee503 | | |
| | 5091d3573c | | |
| | ffe5dd91c5 | | |
| | d76b560967 | | |
| | f062ef3a57 | | |
| | 5fc1baf4df | | |
| | 777b77b69e | | |
| | 5d221e781a | | |
| | ddab72cd59 | | |
| | 87d0b33327 | | |
| | 225a9015bb | | |
| | c0b60b1497 | | |
| | 0463c19825 | | |
| | 8e70aa90c1 | | |
| | 0a418eb88a | | |
| | 040dbb8d03 | | |
| | 64f2288bdd | | |
| | 8008562a33 | | |
| | a39652724d | | |
| | 691c3c4f36 | | |
| | f6fa5e3891 | | |
| | a305a0b705 | | |
| | dfe619e2ea | | |
| | 2b3d425b70 | | |
| | 6e55fea413 | | |
| | b6a08b6615 | | |
| | eaa6906516 | | |
| | 62a7a92f2a | | |
| | 3aeb0945c5 | | |
| | e8c85efeae | | |
| | 6651f6452b | | |
| | 0ca48d77be | | |
| | a9e0e018e3 | | |
| | 122d11f445 | | |
| | b03555858c | | |
| | dcc5a40441 | | |
| | 8c949f59de | | |
| | e8d91a0375 | | |
| | fae9aa664d | | |
| | c31e9e5a96 | | |
| | 99fff98274 | | |
| | 11d84bf35d | | |
| | e56ba480c7 | | |
| | b9f0517c5d | | |
| | 6e66f02585 | | |
| | 5922e96044 | | |
| | f36e7e414a | | |
| | 606754d4a5 | | |
| | a3847e64df | | |
| | 7a3f9f2e73 | | |
| | 2e4e8b0bf9 | | |
| | 951fe80115 | | |
| | c0a8149acb | | |
| | 80b75b227d | | |
| | dff7de09f2 | | |
| | b3bbadfccf | | |
| | fc676e3cb7 | | |
| | 860c82dff9 | | |
| | 4829f5af7f | | |
| | c463b6b231 | | |
| | b2ca0c4c16 | | |
| | 69875cb3dc | | |
| | 9e33a116d4 | | |
| | dab3d53b65 | | |
| | e3f8bff6fc | | |
| | 0648d81d34 | | |
| | ece5c4401e | | |
| | bfc64c7cf1 | | |
| | 0a2c134ece | | |
| | 8bea9253c3 | | |
| | e1dacc3983 | | |
| | 0c6a7355e7 | | |
| | 83046282c3 | | |
| | 65c9817295 | | |
| | e4905d3d35 | | |
| | 6bc0670a7a | | |
| | 95ff6adc19 | | |
| | 7ee51c7def | | |
| | dfa065b745 | | |
| | e3b03debde | | |
| | ef759305cb | | |
| | ad65497d4e | | |
| | 163f5292b0 | | |
| | e07a82d024 | | |
| | 046245a8b5 | | |
| | aa6a180bcc | | |
| | c4d28e14fc | | |
| | bc4bdcdc1c | | |
| | be22ff9951 | | |
| | f204fe53f4 | | |
| | 28e7e89047 | | |
| | 75d49304f3 | | |
| | 04cbacb6e0 | | |
| | c46c7b9e21 | | |
| | 919dafa567 | | |
| | dfdcfed26e | | |
| | a0a4d4cfc5 | | |
| | 970a589fd3 | | |
| | 56d2c0952a | | |
| | 4871be0345 | | |
| | e3e112e279 | | |
| | d2cbd40d89 | | |
| | 3786a49f00 | | |
| | ff4aa62061 | | |
| | 9b6cfdeef7 | | |
| | 9d89e0c83f | | |
| | 559cbd0d36 | | |
| | caea00e47f | | |
| | b26542f38d | | |
| | bbab7ce855 | | |
| | afa2d079f6 | | |
| | 108bf9ca65 | | |
| | 438f952128 | | |
| | 3e84799644 | | |
| | d6e80bac7f | | |
| | 9b3b24bddf | | |
| | 5c831ae482 | | |
| | 78233fafd3 | | |
| | 73c3e07859 | | |
| | 10c61daee4 | | |
| | b1bb9fa114 | | |
| | a7f4b6d2ae | | |
| | b937c4ea8d | | |
| | e577311b64 | | |
| | b847345308 | | |
| | 85e683446f | | |
| | 4f49aa5760 | | |
| | 8ca9cf24bb | | |
| | 61d0216c21 | | |
| | ba4a2406ba | | |
| | c2974416b4 | | |
| | 48fac4e876 | | |
| | f0add9a67c | | |
| | 20f9df01c2 | | |
| | 514e850072 | | |
| | 61fe78a982 | | |
| | c4b066c845 | | |
| | d24a23f3bd | | |
| | 22045982e2 | | |
| | f496f1e18f | | |
| | 2e802432c4 | | |
| | a2f747e16f | | |
| | 982338e162 | | |
| | 03fe4775dd | | |
| | def7d9bde0 | | |
| | a58a7cbeeb | | |
| | 82ca66c23b | | |
| | 92c971c0d7 | | |
| | 30c4faf72b | | |
| | 85ee7d17cf | | |
| | a6d278ae91 | | |
| | ad8d02f701 | | |
| | 00fa5542f7 | | |
| | 9ed2719d19 | | |
| | 8a809baf35 | | |
| | ff90c42fa7 | | |
| | d651e8fe48 | | |
| | bc613905e9 | | |
| | e3321118e5 | | |
| | 31f526cbd6 | | |
| | 493554178f | | |
| | 004b1cc7dd | | |
| | 767602592c | | |
| | 34676acaf5 | | |
| | 491ab7affa | | |
| | b522bbd903 | | |
| | dd3bc28806 | | |
| | 764e7e275d | | |
| | 931c051153 | | |
| | 3da86fe118 | | |
| | 93f37a3022 | | |
| | 77b3d861e6 | | |
| | ce0e16ffe8 | | |
| | fb9709ae78 | | |
| | 191c3868ab | | |
| | d076f0859e | | |
| | df24ba86d0 | | |
| | 3996bcfa67 | | |
| | 9e8a4ad384 | | |
| | 26ee668612 | | |
| | e3c102e7f8 | | |
| | ba60b127ea | | |
| | 74c69dc07e | | |
| | 0687d89178 | | |
| | 7a454c005f | | |
| | 2ce4f3a93e | | |
| | 7baaaebdd4 | | |
| | 608c7f7a31 | | |
| | 1a0daa8678 | | |
| | ed0d25af97 | | |
| | 720d04aba1 | | |
| | 901648393a | | |
| | b5acd817fc | | |
| | 2586fc6ef0 | | |
| | 62e0eb6395 | | |
| | 768b0490e2 | | |
| | 852454fa2c | | |
| | 970b67d6f6 | | |
| | ea0eddff82 | | |
| | 0d4d2ac37b | | |
| | d0591916a4 | | |
| | 6a8aef8675 | | |
| | a894a7a0ce | | |
| | 0bbe724b8c | | |
| | bea22c0259 | | |
| | 6363580120 | | |
| | cbdc7ef2d3 | | |
| | 0959406609 | | |
| | cf41f9a478 | | |
| | 6fe6a41e3e | | |
| | 91cd2648d9 | | |
| | 240591a6b8 | | |
| | 2973822113 | | |
| | a6b2b1246c | | |
| | c74456411d | | |
| | 31b3fcf906 | | |
| | 767be5b6a8 | | |
| | 48834cd8d1 | | |
| | f4bb0ea9c2 | | |
| | cf25a9a8a5 | | |
| | 4f0ad7a067 | | |
| | c0fe461a9f | | |
| | 1911143514 | | |
| | 9b67b360d0 | | |
| | 991e01efd2 | | |
| | 83b8ae46c9 | | |
| | c3b7aee063 | | |
| | 66d662c085 | | |
| | 4d5876fb76 | | |
| | 7ca2558a81 | | |
| | 8957994c1a | | |
| | 0147aea69b | | |
| | b5f73d66ec | | |
| | 6800181594 | | |
| | 6f5f80a085 | | |
| | fd23a2f98f | | |
| | 63cb8a5ba5 | | |
| | 4a9e3182c6 | | |
| | 5cbc3df7b5 | | |
| | dcadc2303f | | |
| | cf5f364ed2 | | |
| | e45ace5d9b | | |
| | 6e7421b0d8 | | |
| | 647d02890f | | |
| | 7e72d23b60 | | |
| | 9fada306f0 | | |
| | 8d1cc83405 | | |
| | 1979bc59d0 | | |
| | bf7ebc9708 | | |
| | dc3cde88d2 | | |
| | 98beb1011e | | |
| | 8c59e9d2b4 | | |
| | 9a87d47f45 | | |
| | f25023ed1b | | |
| | 806b233d58 | | |
| | 677ee8d639 | | |
| | 61ac8d7a8c | | |
| | 278680b248 | | |
| | 5e4a58a1c1 | | |
| | 757b5ca22e | | |
| | 6d1da5bb45 | | |
| | 9ca79d147d | | |
| | 37fcfe15bb | | |
| | a9c7466359 | | |
| | 91a3f2c9a7 | | |
| | 9aa341d088 | | |
| | c9e09fa8eb | | |
| | e6257b7531 | | |
| | aee027c91c | | |
| | c106796751 | | |
| | 42bd600482 | | |
| | 47ad81be5b | | |
| | 88c450e3bd | | |
| | 2ebedd185c | | |

@@ -1,86 +1,247 @@

version: 2.1
jobs:

  build-binary:
    docker:
      - image: circleci/golang:1.13
    working_directory: ~/build
    steps:
      - checkout
      - restore_cache:
          keys:
            - go-mod-v3-{{ checksum "go.sum" }}
      - run:
          name: Run go mod download
          command: go mod download
      - run:
          name: Run go fmt
          command: make test-fmt
      - run:
          name: Build Flagger
          command: |
            CGO_ENABLED=0 GOOS=linux go build \
              -ldflags "-s -w -X github.com/weaveworks/flagger/pkg/version.REVISION=${CIRCLE_SHA1}" \
              -a -installsuffix cgo -o bin/flagger ./cmd/flagger/*.go
      - run:
          name: Build Flagger load tester
          command: |
            CGO_ENABLED=0 GOOS=linux go build \
              -a -installsuffix cgo -o bin/loadtester ./cmd/loadtester/*.go
      - run:
          name: Run unit tests
          command: |
            go test -race -coverprofile=coverage.txt -covermode=atomic $(go list ./pkg/...)
            bash <(curl -s https://codecov.io/bash)
      - run:
          name: Verify code gen
          command: make test-codegen
      - save_cache:
          key: go-mod-v3-{{ checksum "go.sum" }}
          paths:
            - "/go/pkg/mod/"
      - persist_to_workspace:
          root: bin
          paths:
            - flagger
            - loadtester

  push-container:
    docker:
      - image: circleci/golang:1.13
    steps:
      - checkout
      - setup_remote_docker:
          docker_layer_caching: true
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/container-push.sh

  push-binary:
    docker:
      - image: circleci/golang:1.13
    working_directory: ~/build
    steps:
      - checkout
      - setup_remote_docker:
          docker_layer_caching: true
      - restore_cache:
          keys:
            - go-mod-v3-{{ checksum "go.sum" }}
      - run: test/goreleaser.sh

  e2e-istio-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-istio.sh
      - run: test/e2e-build.sh
      - run: test/e2e-tests.sh

  e2e-kubernetes-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-kubernetes.sh
      - run: test/e2e-kubernetes-tests.sh

  e2e-smi-istio-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-istio.sh
      - run: test/e2e-smi-istio-build.sh
      - run: test/e2e-tests.sh canary

  e2e-supergloo-testing:
    machine: true
    steps:
      - checkout
      - run: test/e2e-kind.sh
      - run: test/e2e-supergloo.sh
      - run: test/e2e-build.sh supergloo:test.supergloo-system
      - run: test/e2e-smi-istio.sh
      - run: test/e2e-tests.sh canary

  e2e-gloo-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-gloo.sh
      - run: test/e2e-gloo-build.sh
      - run: test/e2e-gloo-tests.sh

  e2e-nginx-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-nginx.sh
      - run: test/e2e-nginx-build.sh
      - run: test/e2e-nginx-tests.sh
      - run: test/e2e-nginx-cleanup.sh
      - run: test/e2e-nginx-custom-annotations.sh
      - run: test/e2e-nginx-tests.sh

  e2e-linkerd-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-linkerd.sh
      - run: test/e2e-linkerd-tests.sh

  push-helm-charts:
    docker:
      - image: circleci/golang:1.13
    steps:
      - checkout
      - run:
          name: Install kubectl
          command: sudo curl -L https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/linux/amd64/kubectl -o /usr/local/bin/kubectl && sudo chmod +x /usr/local/bin/kubectl
      - run:
          name: Install helm
          command: sudo curl -L https://storage.googleapis.com/kubernetes-helm/helm-v2.14.2-linux-amd64.tar.gz | tar xz && sudo mv linux-amd64/helm /bin/helm && sudo rm -rf linux-amd64
      - run:
          name: Initialize helm
          command: helm init --client-only --kubeconfig=$HOME/.kube/kubeconfig
      - run:
          name: Lint charts
          command: |
            helm lint ./charts/*
      - run:
          name: Package charts
          command: |
            mkdir $HOME/charts
            helm package ./charts/* --destination $HOME/charts
      - run:
          name: Publish charts
          command: |
            if echo "${CIRCLE_TAG}" | grep -Eq "[0-9]+(\.[0-9]+)*(-[a-z]+)?$"; then
              REPOSITORY="https://weaveworksbot:${GITHUB_TOKEN}@github.com/weaveworks/flagger.git"
              git config user.email weaveworksbot@users.noreply.github.com
              git config user.name weaveworksbot
              git remote set-url origin ${REPOSITORY}
              git checkout gh-pages
              mv -f $HOME/charts/*.tgz .
              helm repo index . --url https://flagger.app
              git add .
              git commit -m "Publish Helm charts v${CIRCLE_TAG}"
              git push origin gh-pages
            else
              echo "Not a release! Skip charts publish"
            fi

workflows:
  version: 2
  build-and-test:
  build-test-push:
    jobs:
      - build-binary:
          filters:
            branches:
              ignore:
                - gh-pages
      - e2e-istio-testing:
          filters:
            branches:
              ignore:
                - /gh-pages.*/
                - /docs-.*/
                - /release-.*/
      - e2e-smi-istio-testing:
          filters:
            branches:
              ignore:
                - /gh-pages.*/
                - /docs-.*/
                - /release-.*/
      - e2e-supergloo-testing:
          filters:
            branches:
              ignore:
                - /gh-pages.*/
                - /docs-.*/
                - /release-.*/
      - e2e-nginx-testing:
          filters:
            branches:
              ignore:
                - /gh-pages.*/
                - /docs-.*/
                - /release-.*/
          requires:
            - build-binary
      - e2e-kubernetes-testing:
          requires:
            - build-binary
      - e2e-gloo-testing:
          requires:
            - build-binary
      - e2e-nginx-testing:
          requires:
            - build-binary
      - e2e-linkerd-testing:
          requires:
            - build-binary
      - push-container:
          requires:
            - build-binary
            - e2e-istio-testing
            - e2e-kubernetes-testing
            - e2e-gloo-testing
            - e2e-nginx-testing
            - e2e-linkerd-testing

  release:
    jobs:
      - build-binary:
          filters:
            branches:
              ignore:
                - /gh-pages.*/
                - /docs-.*/
                - /release-.*/
              ignore: /.*/
            tags:
              ignore: /^chart.*/
      - push-container:
          requires:
            - build-binary
          filters:
            branches:
              ignore: /.*/
            tags:
              ignore: /^chart.*/
      - push-binary:
          requires:
            - push-container
          filters:
            branches:
              ignore: /.*/
            tags:
              ignore: /^chart.*/
      - push-helm-charts:
          requires:
            - push-container
          filters:
            branches:
              ignore: /.*/
            tags:
              ignore: /^chart.*/
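Review note on the `Publish charts` step in the CircleCI configuration above: the step decides whether to publish by piping `${CIRCLE_TAG}` through `grep -Eq` with an extended regular expression. The sketch below reuses that exact pattern to show which tag strings it accepts. The helper name `is_release_tag` and the sample tags are assumptions made for illustration; they do not appear in the repository.

```shell
#!/usr/bin/env bash
# Sketch only: is_release_tag is a hypothetical helper wrapping the same
# grep -E pattern that the "Publish charts" step applies to CIRCLE_TAG.
is_release_tag() {
  echo "$1" | grep -Eq "[0-9]+(\.[0-9]+)*(-[a-z]+)?$"
}

# Sample tags are illustrative; the empty string stands for a build without a tag.
for tag in "0.20.2" "v0.20.2" "0.21.0-beta" "chart-test" ""; do
  if is_release_tag "$tag"; then
    echo "publish: '$tag'"
  else
    echo "skip:    '$tag'"
  fi
done
```

The pattern is anchored only at the end of the string, so a prefixed tag such as `v0.20.2` is accepted as well as `0.20.2`, while an empty or digit-free tag is skipped. Tags starting with `chart` never reach this step in the `release` workflow because of the `ignore: /^chart.*/` tag filter.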

2

.gitignore

vendored

@@ -13,5 +13,7 @@

.DS_Store

bin/
_tmp/

artifacts/gcloud/
.idea

@@ -8,7 +8,11 @@ builds:

      - amd64
    env:
      - CGO_ENABLED=0
archive:
  name_template: "{{ .Binary }}_{{ .Version }}_{{ .Os }}_{{ .Arch }}"
  files:
    - none*
archives:
  - name_template: "{{ .Binary }}_{{ .Version }}_{{ .Os }}_{{ .Arch }}"
    files:
      - none*
changelog:
  filters:
    exclude:
      - '^CircleCI'

50

.travis.yml

@@ -1,50 +0,0 @@

sudo: required
language: go

branches:
  except:
    - /gh-pages.*/
    - /docs-.*/

go:
  - 1.12.x

services:
  - docker

addons:
  apt:
    packages:
      - docker-ce

script:
  - make test-fmt
  - make test-codegen
  - go test -race -coverprofile=coverage.txt -covermode=atomic $(go list ./pkg/...)
  - make build

after_success:
  - if [ -z "$DOCKER_USER" ]; then
      echo "PR build, skipping image push";
    else
      BRANCH_COMMIT=${TRAVIS_BRANCH}-$(echo ${TRAVIS_COMMIT} | head -c7);
      docker tag weaveworks/flagger:latest weaveworks/flagger:${BRANCH_COMMIT};
      echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin;
      docker push weaveworks/flagger:${BRANCH_COMMIT};
    fi
  - if [ -z "$TRAVIS_TAG" ]; then
      echo "Not a release, skipping image push";
    else
      docker tag weaveworks/flagger:latest weaveworks/flagger:${TRAVIS_TAG};
      echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin;
      docker push weaveworks/flagger:$TRAVIS_TAG;
    fi
  - bash <(curl -s https://codecov.io/bash)
  - rm coverage.txt

deploy:
  - provider: script
    skip_cleanup: true
    script: curl -sL http://git.io/goreleaser | bash
    on:
      tags: true

204

CHANGELOG.md

@@ -2,6 +2,210 @@

All notable changes to this project are documented in this file.

## 0.20.2 (2019-11-07)

Adds support for exposing canaries outside the cluster using App Mesh Gateway annotations

#### Improvements

- Expose canaries on public domains with App Mesh Gateway [#358](https://github.com/weaveworks/flagger/pull/358)

#### Fixes

- Use the specified replicas when scaling up the canary [#363](https://github.com/weaveworks/flagger/pull/363)

## 0.20.1 (2019-11-03)

Fixes PromQL execution and updates the load testing tools

#### Improvements

- Update load tester Helm tools [#8349dd1](https://github.com/weaveworks/flagger/commit/8349dd1cda59a741c7bed9a0f67c0fc0fbff4635)
- e2e testing: update providers [#346](https://github.com/weaveworks/flagger/pull/346)

#### Fixes

- Fix Prometheus query escape [#353](https://github.com/weaveworks/flagger/pull/353)
- Updating hey release link [#350](https://github.com/weaveworks/flagger/pull/350)

## 0.20.0 (2019-10-21)

Adds support for [A/B Testing](https://docs.flagger.app/usage/progressive-delivery#traffic-mirroring) and retry policies when using App Mesh

#### Features

- Implement App Mesh A/B testing based on HTTP headers match conditions [#340](https://github.com/weaveworks/flagger/pull/340)
- Implement App Mesh HTTP retry policy [#338](https://github.com/weaveworks/flagger/pull/338)
- Implement metrics server override [#342](https://github.com/weaveworks/flagger/pull/342)

#### Improvements

- Add the app/name label to services and primary deployment [#333](https://github.com/weaveworks/flagger/pull/333)
- Allow setting Slack and Teams URLs with env vars [#334](https://github.com/weaveworks/flagger/pull/334)
- Refactor Gloo integration [#344](https://github.com/weaveworks/flagger/pull/344)

#### Fixes

- Generate unique names for App Mesh virtual routers and routes [#336](https://github.com/weaveworks/flagger/pull/336)

## 0.19.0 (2019-10-08)

Adds support for canary and blue/green [traffic mirroring](https://docs.flagger.app/usage/progressive-delivery#traffic-mirroring)

#### Features

- Add traffic mirroring for Istio service mesh [#311](https://github.com/weaveworks/flagger/pull/311)
- Implement canary service target port [#327](https://github.com/weaveworks/flagger/pull/327)

#### Improvements

- Allow gRPC protocol for App Mesh [#325](https://github.com/weaveworks/flagger/pull/325)
- Enforce blue/green when using Kubernetes networking [#326](https://github.com/weaveworks/flagger/pull/326)

#### Fixes

- Fix port discovery diff [#324](https://github.com/weaveworks/flagger/pull/324)
- Helm chart: Enable Prometheus scraping of Flagger metrics [#2141d88](https://github.com/weaveworks/flagger/commit/2141d88ce1cc6be220dab34171c215a334ecde24)

## 0.18.6 (2019-10-03)

Adds support for App Mesh conformance tests and latency metric checks

#### Improvements

- Add support for acceptance testing when using App Mesh [#322](https://github.com/weaveworks/flagger/pull/322)
- Add Kustomize installer for App Mesh [#310](https://github.com/weaveworks/flagger/pull/310)
- Update Linkerd to v2.5.0 and Prometheus to v2.12.0 [#323](https://github.com/weaveworks/flagger/pull/323)

#### Fixes

- Fix Slack/Teams notification fields mapping [#318](https://github.com/weaveworks/flagger/pull/318)

## 0.18.5 (2019-10-02)

Adds support for [confirm-promotion](https://docs.flagger.app/how-it-works#webhooks) webhooks and blue/green deployments when using a service mesh

#### Features

- Implement confirm-promotion hook [#307](https://github.com/weaveworks/flagger/pull/307)
- Implement B/G for service mesh providers [#305](https://github.com/weaveworks/flagger/pull/305)

#### Improvements

- Canary promotion improvements to avoid dropping in-flight requests [#310](https://github.com/weaveworks/flagger/pull/310)
- Update end-to-end tests to Kubernetes v1.15.3 and Istio 1.3.0 [#306](https://github.com/weaveworks/flagger/pull/306)

#### Fixes

- Skip primary check for App Mesh [#315](https://github.com/weaveworks/flagger/pull/315)

## 0.18.4 (2019-09-08)

Adds support for NGINX custom annotations and Helm v3 acceptance testing

#### Features

- Add annotations prefix for NGINX ingresses [#293](https://github.com/weaveworks/flagger/pull/293)
- Add wide columns in CRD [#289](https://github.com/weaveworks/flagger/pull/289)
- loadtester: implement Helm v3 test command [#296](https://github.com/weaveworks/flagger/pull/296)
- loadtester: add gRPC health check to load tester image [#295](https://github.com/weaveworks/flagger/pull/295)

#### Fixes

- loadtester: fix tests error logging [#286](https://github.com/weaveworks/flagger/pull/286)

## 0.18.3 (2019-08-22)

Adds support for tillerless helm tests and protobuf health checking

#### Features

- loadtester: add support for tillerless helm [#280](https://github.com/weaveworks/flagger/pull/280)
- loadtester: add support for protobuf health checking [#280](https://github.com/weaveworks/flagger/pull/280)

#### Improvements

- Set HTTP listeners for AppMesh virtual routers [#272](https://github.com/weaveworks/flagger/pull/272)

#### Fixes

- Add missing fields to CRD validation spec [#271](https://github.com/weaveworks/flagger/pull/271)
- Fix App Mesh backends validation in CRD [#281](https://github.com/weaveworks/flagger/pull/281)

## 0.18.2 (2019-08-05)

Fixes multi-port support for Istio

#### Fixes

- Fix port discovery for multiple port services [#267](https://github.com/weaveworks/flagger/pull/267)

#### Improvements

- Update e2e testing to Istio v1.2.3, Gloo v0.18.8 and NGINX ingress chart v1.12.1 [#268](https://github.com/weaveworks/flagger/pull/268)

## 0.18.1 (2019-07-30)

Fixes Blue/Green style deployments for Kubernetes and Linkerd providers

#### Fixes

- Fix Blue/Green metrics provider and add e2e tests [#261](https://github.com/weaveworks/flagger/pull/261)

## 0.18.0 (2019-07-29)

Adds support for [manual gating](https://docs.flagger.app/how-it-works#manual-gating) and pausing/resuming an ongoing analysis

#### Features

- Implement confirm rollout gate, hook and API [#251](https://github.com/weaveworks/flagger/pull/251)

#### Improvements

- Refactor canary change detection and status [#240](https://github.com/weaveworks/flagger/pull/240)
- Implement finalising state [#257](https://github.com/weaveworks/flagger/pull/257)
- Add gRPC load testing tool [#248](https://github.com/weaveworks/flagger/pull/248)

#### Breaking changes

- Due to the status sub-resource changes in [#240](https://github.com/weaveworks/flagger/pull/240), when upgrading Flagger the canaries' status phase will be reset to `Initialized`
- Upgrading Flagger with Helm will fail due to Helm's poor support of CRDs, see [workaround](https://github.com/weaveworks/flagger/issues/223)

## 0.17.0 (2019-07-08)

Adds support for Linkerd (SMI Traffic Split API), MS Teams notifications and HA mode with leader election

#### Features

- Add Linkerd support [#230](https://github.com/weaveworks/flagger/pull/230)
- Implement MS Teams notifications [#235](https://github.com/weaveworks/flagger/pull/235)
- Implement leader election [#236](https://github.com/weaveworks/flagger/pull/236)

#### Improvements

- Add [Kustomize](https://docs.flagger.app/install/flagger-install-on-kubernetes#install-flagger-with-kustomize) installer [#232](https://github.com/weaveworks/flagger/pull/232)
- Add Pod Security Policy to Helm chart [#234](https://github.com/weaveworks/flagger/pull/234)

## 0.16.0 (2019-06-23)

Adds support for running [Blue/Green deployments](https://docs.flagger.app/usage/blue-green) without a service mesh or ingress controller

#### Features

- Allow blue/green deployments without a service mesh provider [#211](https://github.com/weaveworks/flagger/pull/211)
- Add the service mesh provider to the canary spec [#217](https://github.com/weaveworks/flagger/pull/217)
- Allow multi-port services and implement port discovery [#207](https://github.com/weaveworks/flagger/pull/207)

#### Improvements

- Add [FAQ page](https://docs.flagger.app/faq) to docs website
- Switch to go modules in CI [#218](https://github.com/weaveworks/flagger/pull/218)
- Update e2e testing to Kubernetes Kind 0.3.0 and Istio 1.2.0

#### Fixes

- Update the primary HPA on canary promotion [#216](https://github.com/weaveworks/flagger/pull/216)

## 0.15.0 (2019-06-12)

Adds support for customising the Istio [traffic policy](https://docs.flagger.app/how-it-works#istio-routing) in the canary service spec

19

Dockerfile

@@ -1,19 +1,4 @@

FROM golang:1.12

RUN mkdir -p /go/src/github.com/weaveworks/flagger/

WORKDIR /go/src/github.com/weaveworks/flagger

COPY . .

#RUN GO111MODULE=on go mod download

RUN GIT_COMMIT=$(git rev-list -1 HEAD) && \
    GO111MODULE=on CGO_ENABLED=0 GOOS=linux go build -mod=vendor -ldflags "-s -w \
    -X github.com/weaveworks/flagger/pkg/version.REVISION=${GIT_COMMIT}" \
    -a -installsuffix cgo -o flagger ./cmd/flagger/*

FROM alpine:3.9
FROM alpine:3.10

RUN addgroup -S flagger \
    && adduser -S -g flagger flagger \

@@ -21,7 +6,7 @@ RUN addgroup -S flagger \

|

||||

|

||||

WORKDIR /home/flagger

|

||||

|

||||

COPY --from=0 /go/src/github.com/weaveworks/flagger/flagger .

|

||||

COPY /bin/flagger .

|

||||

|

||||

RUN chown -R flagger:flagger ./

|

||||

|

||||

|

||||

@@ -1,15 +1,3 @@
FROM golang:1.12 AS builder

RUN mkdir -p /go/src/github.com/weaveworks/flagger/

WORKDIR /go/src/github.com/weaveworks/flagger

COPY . .

RUN go test -race ./pkg/loadtester/

RUN CGO_ENABLED=0 GOOS=linux go build -a -installsuffix cgo -o loadtester ./cmd/loadtester/*

FROM bats/bats:v1.1.0

RUN addgroup -S app \
@@ -18,17 +6,39 @@ RUN addgroup -S app \

WORKDIR /home/app

RUN curl -sSLo hey "https://storage.googleapis.com/jblabs/dist/hey_linux_v0.1.2" && \
RUN curl -sSLo hey "https://storage.googleapis.com/hey-release/hey_linux_amd64" && \
    chmod +x hey && mv hey /usr/local/bin/hey

RUN curl -sSL "https://get.helm.sh/helm-v2.12.3-linux-amd64.tar.gz" | tar xvz && \
# verify hey works
RUN hey -n 1 -c 1 https://flagger.app > /dev/null && echo $? | grep 0

RUN curl -sSL "https://get.helm.sh/helm-v2.15.1-linux-amd64.tar.gz" | tar xvz && \
    chmod +x linux-amd64/helm && mv linux-amd64/helm /usr/local/bin/helm && \
    chmod +x linux-amd64/tiller && mv linux-amd64/tiller /usr/local/bin/tiller && \
    rm -rf linux-amd64

COPY --from=builder /go/src/github.com/weaveworks/flagger/loadtester .
RUN curl -sSL "https://get.helm.sh/helm-v3.0.0-rc.2-linux-amd64.tar.gz" | tar xvz && \
    chmod +x linux-amd64/helm && mv linux-amd64/helm /usr/local/bin/helmv3 && \
    rm -rf linux-amd64

RUN GRPC_HEALTH_PROBE_VERSION=v0.3.1 && \
    wget -qO /usr/local/bin/grpc_health_probe https://github.com/grpc-ecosystem/grpc-health-probe/releases/download/${GRPC_HEALTH_PROBE_VERSION}/grpc_health_probe-linux-amd64 && \
    chmod +x /usr/local/bin/grpc_health_probe

RUN curl -sSL "https://github.com/bojand/ghz/releases/download/v0.39.0/ghz_0.39.0_Linux_x86_64.tar.gz" | tar xz -C /tmp && \
    mv /tmp/ghz /usr/local/bin && chmod +x /usr/local/bin/ghz && rm -rf /tmp/ghz-web

ADD https://raw.githubusercontent.com/grpc/grpc-proto/master/grpc/health/v1/health.proto /tmp/ghz/health.proto

RUN ls /tmp

COPY ./bin/loadtester .

RUN chown -R app:app ./

USER app

ENTRYPOINT ["./loadtester"]
RUN curl -sSL "https://github.com/rimusz/helm-tiller/archive/v0.9.3.tar.gz" | tar xvz && \
    helm init --client-only && helm plugin install helm-tiller-0.9.3 && helm plugin list

ENTRYPOINT ["./loadtester"]

54

Makefile

@@ -2,41 +2,40 @@ TAG?=latest
VERSION?=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"')
VERSION_MINOR:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"' | rev | cut -d'.' -f2- | rev)
PATCH:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"' | awk -F. '{print $$NF}')
SOURCE_DIRS = cmd pkg/apis pkg/controller pkg/server pkg/logging pkg/version
SOURCE_DIRS = cmd pkg/apis pkg/controller pkg/server pkg/canary pkg/metrics pkg/router pkg/notifier
LT_VERSION?=$(shell grep 'VERSION' cmd/loadtester/main.go | awk '{ print $$4 }' | tr -d '"' | head -n1)
TS=$(shell date +%Y-%m-%d_%H-%M-%S)

run:
	go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=istio -namespace=test \
	-metrics-server=https://prometheus.istio.weavedx.com \
	-slack-url=https://hooks.slack.com/services/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 \
	-slack-channel="devops-alerts"
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=istio -namespace=test-istio \
	-metrics-server=https://prometheus.istio.flagger.dev

run-appmesh:
	go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=appmesh \
	-metrics-server=http://acfc235624ca911e9a94c02c4171f346-1585187926.us-west-2.elb.amazonaws.com:9090 \
	-slack-url=https://hooks.slack.com/services/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 \
	-slack-channel="devops-alerts"
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=appmesh \
	-metrics-server=http://acfc235624ca911e9a94c02c4171f346-1585187926.us-west-2.elb.amazonaws.com:9090

run-nginx:
	go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=nginx -namespace=nginx \
	-metrics-server=http://prometheus-weave.istio.weavedx.com \
	-slack-url=https://hooks.slack.com/services/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 \
	-slack-channel="devops-alerts"
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=nginx -namespace=nginx \
	-metrics-server=http://prometheus-weave.istio.weavedx.com

run-smi:
	go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=smi:istio -namespace=smi \
	-metrics-server=https://prometheus.istio.weavedx.com \
	-slack-url=https://hooks.slack.com/services/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 \
	-slack-channel="devops-alerts"
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=smi:istio -namespace=smi \
	-metrics-server=https://prometheus.istio.weavedx.com

run-gloo:
	go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=gloo -namespace=gloo \
	-metrics-server=https://prometheus.istio.weavedx.com \
	-slack-url=https://hooks.slack.com/services/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 \
	-slack-channel="devops-alerts"
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=gloo -namespace=gloo \
	-metrics-server=https://prometheus.istio.weavedx.com

run-nop:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=none -namespace=bg \
	-metrics-server=https://prometheus.istio.weavedx.com

run-linkerd:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=linkerd -namespace=dev \
	-metrics-server=https://prometheus.linkerd.flagger.dev

build:
	GIT_COMMIT=$$(git rev-list -1 HEAD) && GO111MODULE=on CGO_ENABLED=0 GOOS=linux go build -ldflags "-s -w -X github.com/weaveworks/flagger/pkg/version.REVISION=$${GIT_COMMIT}" -a -installsuffix cgo -o ./bin/flagger ./cmd/flagger/*
	docker build -t weaveworks/flagger:$(TAG) . -f Dockerfile

push:
@@ -57,8 +56,9 @@ test: test-fmt test-codegen

helm-package:
	cd charts/ && helm package ./*
	mv charts/*.tgz docs/
	helm repo index docs --url https://weaveworks.github.io/flagger --merge ./docs/index.yaml
	mv charts/*.tgz bin/
	curl -s https://raw.githubusercontent.com/weaveworks/flagger/gh-pages/index.yaml > ./bin/index.yaml
	helm repo index bin --url https://flagger.app --merge ./bin/index.yaml

helm-up:
	helm upgrade --install flagger ./charts/flagger --namespace=istio-system --set crd.create=false
@@ -72,7 +72,8 @@ version-set:
	sed -i '' "s/tag: $$current/tag: $$next/g" charts/flagger/values.yaml && \
	sed -i '' "s/appVersion: $$current/appVersion: $$next/g" charts/flagger/Chart.yaml && \
	sed -i '' "s/version: $$current/version: $$next/g" charts/flagger/Chart.yaml && \
	echo "Version $$next set in code, deployment and charts"
	sed -i '' "s/newTag: $$current/newTag: $$next/g" kustomize/base/flagger/kustomization.yaml && \
	echo "Version $$next set in code, deployment, chart and kustomize"

version-up:
	@next="$(VERSION_MINOR).$$(($(PATCH) + 1))" && \
@@ -106,11 +107,14 @@ reset-test:
	kubectl apply -f ./artifacts/namespaces
	kubectl apply -f ./artifacts/canaries

loadtester-run:
loadtester-run: loadtester-build
	docker build -t weaveworks/flagger-loadtester:$(LT_VERSION) . -f Dockerfile.loadtester
	docker rm -f tester || true
	docker run -dp 8888:9090 --name tester weaveworks/flagger-loadtester:$(LT_VERSION)

loadtester-build:
	GO111MODULE=on CGO_ENABLED=0 GOOS=linux go build -a -installsuffix cgo -o ./bin/loadtester ./cmd/loadtester/*

loadtester-push:
	docker build -t weaveworks/flagger-loadtester:$(LT_VERSION) . -f Dockerfile.loadtester
	docker push weaveworks/flagger-loadtester:$(LT_VERSION)
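
The `VERSION_MINOR` and `PATCH` variables at the top of this Makefile split the version string so that `version-up` can increment the patch component. The following is a minimal shell sketch of that arithmetic, assuming a plain `MAJOR.MINOR.PATCH` string; the function name `bump_patch` is illustrative and is not part of the Makefile.

```shell
#!/bin/sh
# Sketch of the version arithmetic behind the version-up target.
# bump_patch is a hypothetical helper; it expects MAJOR.MINOR.PATCH input.
bump_patch() {
    version="$1"
    # Everything before the last dot, e.g. 0.16
    # (same rev | cut | rev pipeline as VERSION_MINOR).
    minor=$(printf '%s\n' "$version" | rev | cut -d'.' -f2- | rev)
    # The last dot-separated field, e.g. 0 (same awk call as PATCH).
    patch=$(printf '%s\n' "$version" | awk -F. '{print $NF}')
    printf '%s.%s\n' "$minor" "$((patch + 1))"
}

bump_patch 0.16.0
```

Reversing the string before `cut` is what lets the pipeline drop only the last field regardless of how many dots precede it.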


68

README.md

@@ -1,19 +1,19 @@

|

||||

# flagger

|

||||

|

||||

[](https://travis-ci.org/weaveworks/flagger)

|

||||

[](https://circleci.com/gh/weaveworks/flagger)

|

||||

[](https://goreportcard.com/report/github.com/weaveworks/flagger)

|

||||

[](https://codecov.io/gh/weaveworks/flagger)

|

||||

[](https://github.com/weaveworks/flagger/blob/master/LICENSE)

|

||||

[](https://github.com/weaveworks/flagger/releases)

|

||||

|

||||

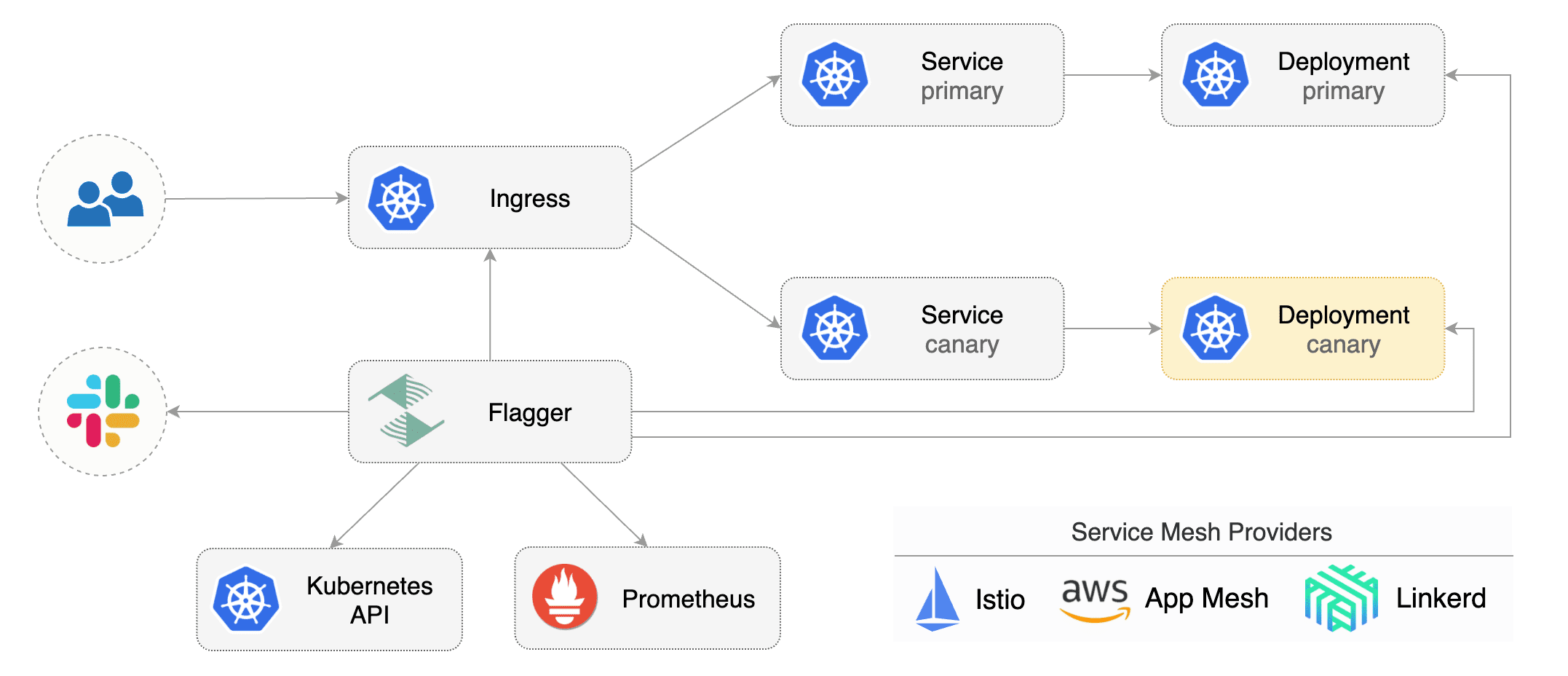

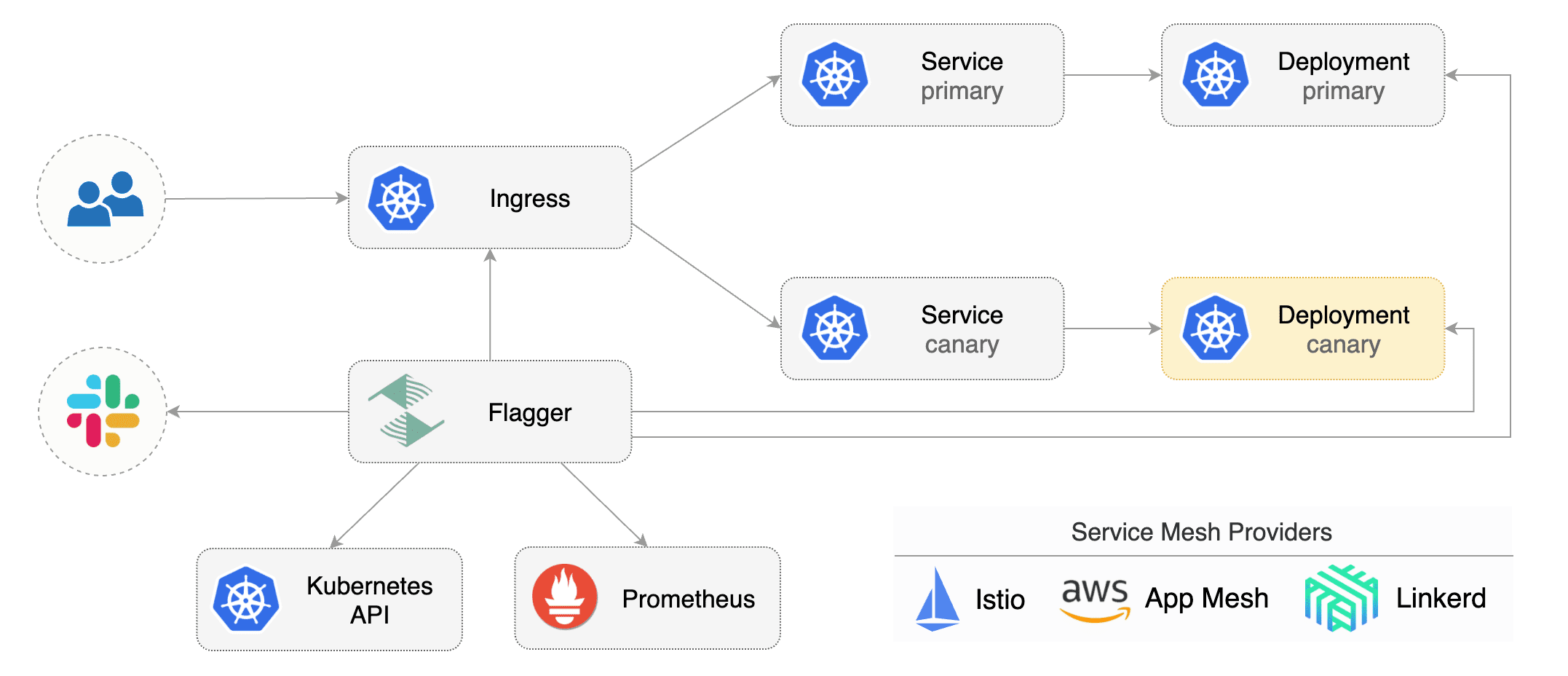

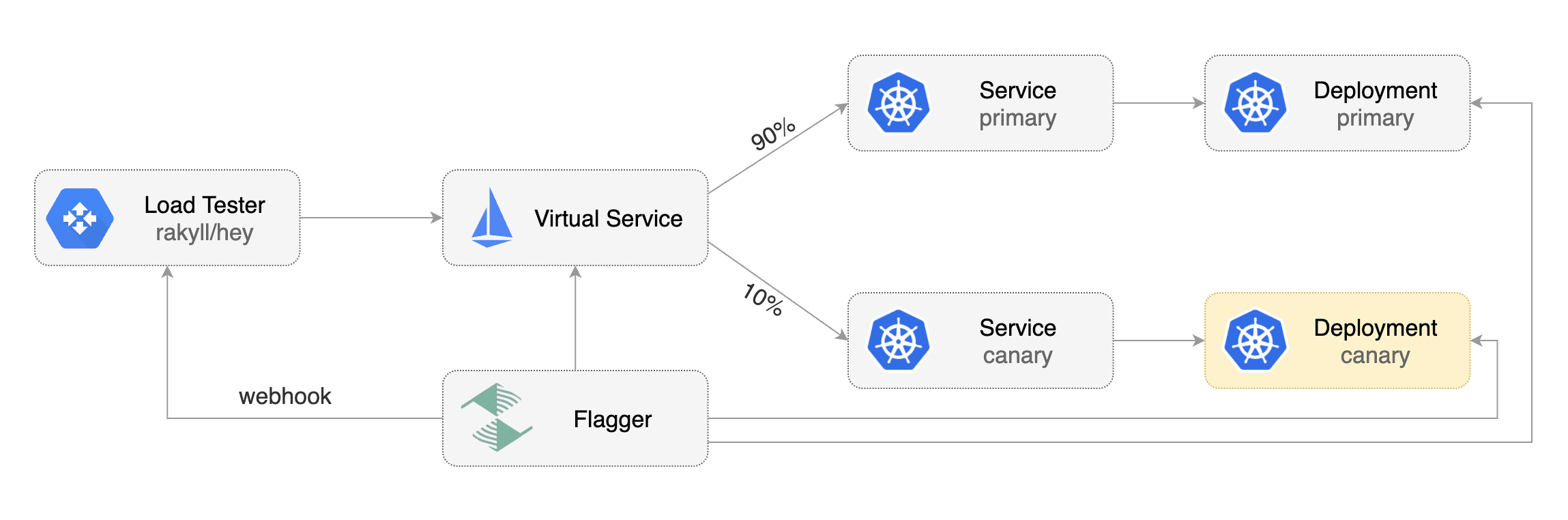

Flagger is a Kubernetes operator that automates the promotion of canary deployments

|

||||

using Istio, App Mesh, NGINX or Gloo routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

using Istio, Linkerd, App Mesh, NGINX or Gloo routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

The canary analysis can be extended with webhooks for running acceptance tests,

|

||||

load tests or any other custom validation.

|

||||

|

||||

Flagger implements a control loop that gradually shifts traffic to the canary while measuring key performance

|

||||

indicators like HTTP requests success rate, requests average duration and pods health.

|

||||

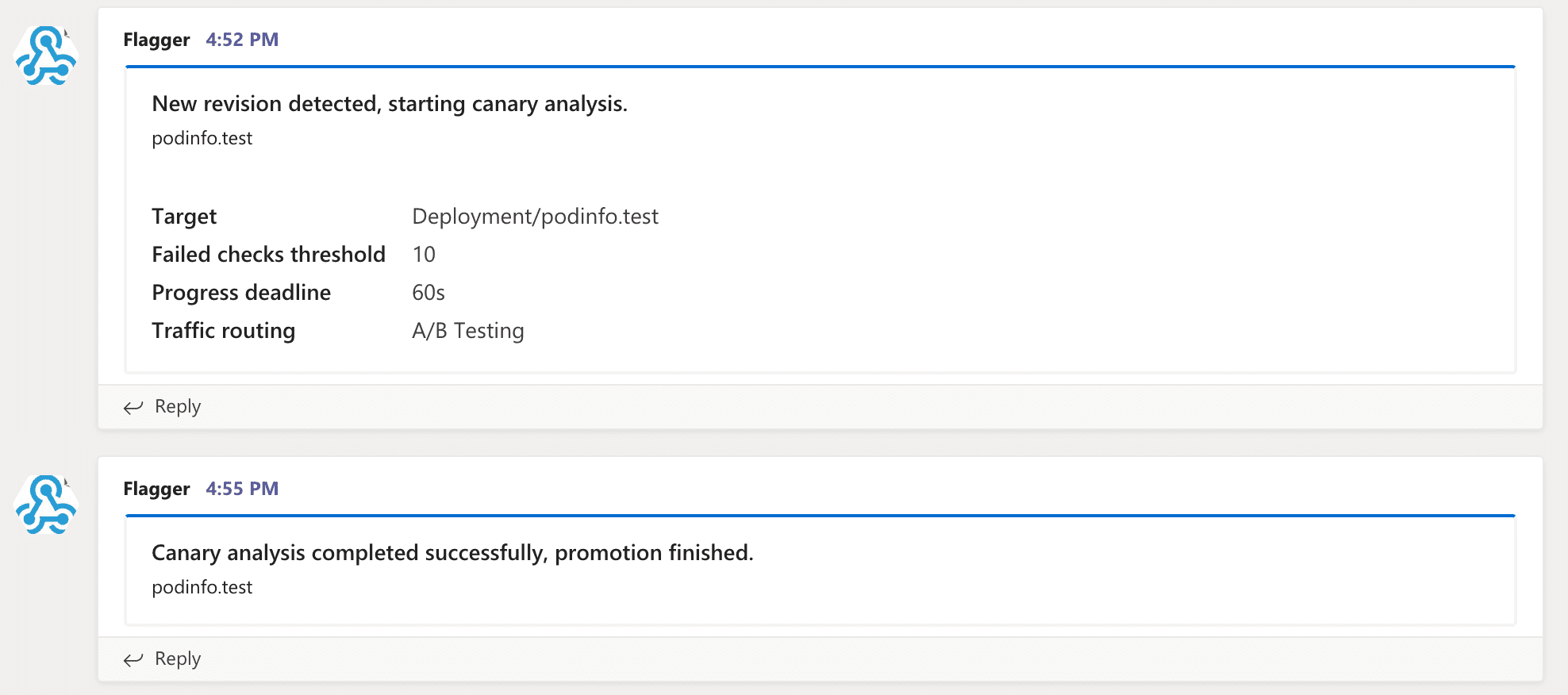

Based on analysis of the KPIs a canary is promoted or aborted, and the analysis result is published to Slack.

|

||||

Based on analysis of the KPIs a canary is promoted or aborted, and the analysis result is published to Slack or MS Teams.
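
The control loop described in this README text can be pictured with a small shell simulation. This is an illustration only, not Flagger's implementation: the function name `run_canary`, the step and maximum weights, and the failure limit are assumptions, and the success rates are passed in by hand instead of being queried from Prometheus.

```shell
#!/bin/sh
# Hypothetical simulation of a canary control loop.
# usage: run_canary STEP MAX_WEIGHT MIN_SUCCESS MAX_FAILURES RATE...
# Each RATE is the success rate (integer percent) observed in one interval.
run_canary() {
    step=$1; max_weight=$2; min_success=$3; max_failures=$4
    shift 4
    weight=0
    failures=0
    for rate in "$@"; do
        if [ "$rate" -lt "$min_success" ]; then
            # Failed check: hold the current weight and count the failure.
            failures=$((failures + 1))
            if [ "$failures" -ge "$max_failures" ]; then
                echo "aborted at weight $weight"
                return 1
            fi
        else
            # Passed check: shift more traffic to the canary.
            weight=$((weight + step))
            if [ "$weight" -ge "$max_weight" ]; then
                echo "promoted at weight $weight"
                return 0
            fi
        fi
    done
    echo "in progress at weight $weight"
}

run_canary 10 50 99 2 100 100 99 100 100
```

In this sketch a failed check freezes the traffic weight rather than rolling it back, so a single bad interval delays promotion without aborting it.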


@@ -35,12 +35,15 @@ Flagger documentation can be found at [docs.flagger.app](https://docs.flagger.ap
    * [Custom metrics](https://docs.flagger.app/how-it-works#custom-metrics)
    * [Webhooks](https://docs.flagger.app/how-it-works#webhooks)
    * [Load testing](https://docs.flagger.app/how-it-works#load-testing)
    * [Manual gating](https://docs.flagger.app/how-it-works#manual-gating)
    * [FAQ](https://docs.flagger.app/faq)
* Usage
    * [Istio canary deployments](https://docs.flagger.app/usage/progressive-delivery)
    * [Istio A/B testing](https://docs.flagger.app/usage/ab-testing)
    * [Linkerd canary deployments](https://docs.flagger.app/usage/linkerd-progressive-delivery)
    * [App Mesh canary deployments](https://docs.flagger.app/usage/appmesh-progressive-delivery)
    * [NGINX ingress controller canary deployments](https://docs.flagger.app/usage/nginx-progressive-delivery)
    * [Gloo Canary Deployments](https://docs.flagger.app/usage/gloo-progressive-delivery)
    * [Gloo ingress controller canary deployments](https://docs.flagger.app/usage/gloo-progressive-delivery)
    * [Blue/Green deployments](https://docs.flagger.app/usage/blue-green)
    * [Monitoring](https://docs.flagger.app/usage/monitoring)
    * [Alerting](https://docs.flagger.app/usage/alerting)
* Tutorials
@@ -64,6 +67,9 @@ metadata:
  name: podinfo
  namespace: test
spec:
  # service mesh provider (optional)
  # can be: kubernetes, istio, linkerd, appmesh, nginx, gloo, supergloo
  provider: istio
  # deployment reference
  targetRef:
    apiVersion: apps/v1
@@ -78,14 +84,12 @@ spec:
    kind: HorizontalPodAutoscaler
    name: podinfo
  service:
    # container port
    # ClusterIP port number
    port: 9898
    # Istio gateways (optional)
    gateways:
    - public-gateway.istio-system.svc.cluster.local
    # Istio virtual service host names (optional)
    hosts:
    - podinfo.example.com
    # container port name or number (optional)
    targetPort: 9898
    # port name can be http or grpc (default http)
    portName: http
    # HTTP match conditions (optional)
    match:
      - uri:
@@ -93,10 +97,6 @@ spec:
    # HTTP rewrite (optional)
    rewrite:
      uri: /
    # cross-origin resource sharing policy (optional)
    corsPolicy:
      allowOrigin:
      - example.com
    # request timeout (optional)
    timeout: 5s
  # promote the canary without analysing it (default false)
@@ -136,7 +136,7 @@ spec:
            topic="podinfo"
          }[1m]
        )
    # external checks (optional)
    # testing (optional)
    webhooks:
      - name: load-test
        url: http://flagger-loadtester.test/
@@ -149,31 +149,21 @@ For more details on how the canary analysis and promotion works please [read the

## Features

| Service Mesh Feature | Istio | App Mesh | SuperGloo |
| -------------------------------------------- | ------------------ | ------------------ |------------------ |
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| A/B testing (headers and cookies filters) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |
| Load testing | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Webhooks (acceptance testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: |
| Custom promql checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Ingress gateway (CORS, retries and timeouts) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: |

| Ingress Controller Feature | NGINX | Gloo |
| -------------------------------------------- | ------------------ | ------------------ |
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: |
| A/B testing (headers and cookies filters) | :heavy_check_mark: | :heavy_minus_sign: |
| Load testing | :heavy_check_mark: | :heavy_check_mark: |
| Webhooks (acceptance testing) | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (L7 metric) | :heavy_minus_sign: | :heavy_check_mark: |
| Request duration check (L7 metric) | :heavy_minus_sign: | :heavy_check_mark: |
| Custom promql checks | :heavy_check_mark: | :heavy_check_mark: |

| Feature | Istio | Linkerd | App Mesh | NGINX | Gloo | Kubernetes CNI |
| -------------------------------------------- | ------------------ | ------------------ |------------------ |------------------ |------------------ |------------------ |
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |
| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |
| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |
| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |
| Custom promql checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Traffic policy, CORS, retries and timeouts | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: |

## Roadmap

* Integrate with other service mesh technologies like Linkerd v2
* Integrate with other ingress controllers like Contour, HAProxy, ALB
* Add support for comparing the canary metrics to the primary ones and do the validation based on the derivation between the two

## Contributing

@@ -1,67 +0,0 @@
apiVersion: apps/v1
kind: Deployment
metadata:
  name: abtest
  namespace: test
  labels:
    app: abtest
spec:
  minReadySeconds: 5
  revisionHistoryLimit: 5
  progressDeadlineSeconds: 60
  strategy:
    rollingUpdate:
      maxUnavailable: 0
    type: RollingUpdate
  selector:
    matchLabels:
      app: abtest
  template:
    metadata:
      annotations:
        prometheus.io/scrape: "true"
      labels:
        app: abtest
    spec:
      containers:
      - name: podinfod
        image: quay.io/stefanprodan/podinfo:1.4.0
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 9898
          name: http
          protocol: TCP
        command:
        - ./podinfo
        - --port=9898
        - --level=info
        - --random-delay=false
        - --random-error=false
        env:
        - name: PODINFO_UI_COLOR
          value: blue
        livenessProbe:
          exec:
            command:
            - podcli
            - check
            - http
            - localhost:9898/healthz
          initialDelaySeconds: 5
          timeoutSeconds: 5
        readinessProbe:
          exec:
            command:
            - podcli
            - check
            - http
            - localhost:9898/readyz
          initialDelaySeconds: 5
          timeoutSeconds: 5
        resources:
          limits:
            cpu: 2000m
            memory: 512Mi
          requests:
            cpu: 100m
            memory: 64Mi

@@ -1,19 +0,0 @@
apiVersion: autoscaling/v2beta1
kind: HorizontalPodAutoscaler
metadata:
  name: abtest
  namespace: test
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: abtest
  minReplicas: 2
  maxReplicas: 4
  metrics:
  - type: Resource
    resource:
      name: cpu
      # scale up if usage is above
      # 99% of the requested CPU (100m)
      targetAverageUtilization: 99
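
The comment in this manifest says the autoscaler scales up once average CPU usage exceeds 99% of the requested 100m. Kubernetes documents the core calculation as `desiredReplicas = ceil(currentReplicas * currentUtilization / targetUtilization)`. The sketch below applies that formula with the `minReplicas: 2` and `maxReplicas: 4` bounds from the manifest. The function name `desired_replicas` is illustrative, and the controller's tolerance band and stabilization behaviour are deliberately left out.

```shell
#!/bin/sh
# Hypothetical helper: approximate the HPA replica calculation with integer math.
# usage: desired_replicas CURRENT_REPLICAS CURRENT_UTILIZATION_PERCENT
desired_replicas() {
    current=$1; util=$2
    target=99; min=2; max=4   # values taken from the abtest HPA manifest
    # Integer ceiling of current * util / target.
    desired=$(( (current * util + target - 1) / target ))
    # Clamp the result to the configured replica bounds.
    if [ "$desired" -lt "$min" ]; then desired=$min; fi
    if [ "$desired" -gt "$max" ]; then desired=$max; fi
    echo "$desired"
}

desired_replicas 2 150
```

With two replicas running at 150% of their CPU request, the formula asks for ceil(300 / 99) = 4 replicas, which is also the configured maximum.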


@@ -20,8 +20,16 @@ spec:
  service:
    # container port
    port: 9898
    # container port name (optional)
    # can be http or grpc
    portName: http
    # App Mesh reference
    meshName: global
    # App Mesh retry policy (optional)
    retries:
      attempts: 3
      perTryTimeout: 1s
      retryOn: "gateway-error,client-error,stream-error"
  # define the canary analysis timing and KPIs
  canaryAnalysis:
    # schedule interval (default 60s)
@@ -41,8 +49,20 @@ spec:
      # percentage (0-100)
      threshold: 99
      interval: 1m
    # external checks (optional)
    - name: request-duration
      # maximum req duration P99
      # milliseconds
      threshold: 500
      interval: 30s
    # testing (optional)
    webhooks:
      - name: acceptance-test
        type: pre-rollout
        url: http://flagger-loadtester.test/
        timeout: 30s
        metadata:
          type: bash
          cmd: "curl -sd 'test' http://podinfo-canary.test:9898/token | grep token"
      - name: load-test
        url: http://flagger-loadtester.test/
        timeout: 5s

@@ -25,7 +25,7 @@ spec:
    spec:
      containers:
      - name: podinfod
        image: quay.io/stefanprodan/podinfo:1.4.0
        image: stefanprodan/podinfo:3.1.0
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 9898

@@ -13,7 +13,7 @@ data:
- address:
    socket_address:
      address: 0.0.0.0
      port_value: 80
      port_value: 8080
  filter_chains:
  - filters:
    - name: envoy.http_connection_manager
@@ -48,11 +48,15 @@ data:
connect_timeout: 0.30s
type: strict_dns
lb_policy: round_robin
http2_protocol_options: {}
hosts:
- socket_address:
    address: podinfo.test
    port_value: 9898
load_assignment:
  cluster_name: podinfo
  endpoints:
  - lb_endpoints: