Compare commits

326 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

c63554c534 | ||

|

|

be8ed8a696 | ||

|

|

98530d9968 | ||

|

|

38adc513a6 | ||

|

|

eb12e3bde1 | ||

|

|

8b2839d36e | ||

|

|

f0fa2aa6bb | ||

|

|

33528b073f | ||

|

|

cf8783ea37 | ||

|

|

00355635f8 | ||

|

|

aa485f4bf1 | ||

|

|

273b05fb24 | ||

|

|

e470474d6f | ||

|

|

ddfd2fe2ec | ||

|

|

7533d0ae99 | ||

|

|

04ec7f0388 | ||

|

|

419000cc13 | ||

|

|

0dc8edb437 | ||

|

|

0759b6531b | ||

|

|

d8f984de7d | ||

|

|

82e490a875 | ||

|

|

c6dffd9d3e | ||

|

|

8ee3d5835a | ||

|

|

1209d7e42b | ||

|

|

cdc05ba506 | ||

|

|

a6fae0195f | ||

|

|

11375b6890 | ||

|

|

3811470ebf | ||

|

|

e2b08eb4dc | ||

|

|

38d3ca1022 | ||

|

|

df459c5fe6 | ||

|

|

d1d9c0e2a9 | ||

|

|

c1b1d7d448 | ||

|

|

e6b5ee2042 | ||

|

|

0170fc6166 | ||

|

|

4cc2ada2a2 | ||

|

|

a5d3e4f6a6 | ||

|

|

7c92b33886 | ||

|

|

0f0b9414ae | ||

|

|

6fbb67ee8c | ||

|

|

6634f1a9ae | ||

|

|

8da8138f77 | ||

|

|

588f4c477b | ||

|

|

fda1775d3a | ||

|

|

fc71d53c71 | ||

|

|

ab2a320659 | ||

|

|

7f50f81ac7 | ||

|

|

c36a13ccff | ||

|

|

47de726345 | ||

|

|

7a4fdbddc0 | ||

|

|

0dc6f33550 | ||

|

|

b2436eb0df | ||

|

|

cc673159d7 | ||

|

|

17c310d66d | ||

|

|

e7357c4e07 | ||

|

|

c44de2d7c3 | ||

|

|

d82b2c219a | ||

|

|

35c8957a55 | ||

|

|

8555f8250a | ||

|

|

8137a25b13 | ||

|

|

2db5573c0e | ||

|

|

1e382203b8 | ||

|

|

873903a4cb | ||

|

|

e5b8afc085 | ||

|

|

ded658fed9 | ||

|

|

88d8858900 | ||

|

|

737c185aa6 | ||

|

|

0006a68740 | ||

|

|

4db91f7062 | ||

|

|

b8c23967b7 | ||

|

|

2019d048a4 | ||

|

|

fe0a4eb20c | ||

|

|

a35b0e8639 | ||

|

|

4c0843f92a | ||

|

|

867c1af897 | ||

|

|

100308289f | ||

|

|

3d4739760d | ||

|

|

9f321dd685 | ||

|

|

ba6078f235 | ||

|

|

cd2f1a24bd | ||

|

|

b87a81b798 | ||

|

|

0f9dd61786 | ||

|

|

4869a9f3ae | ||

|

|

cd6f36302d | ||

|

|

e5fdc7a57d | ||

|

|

834a601311 | ||

|

|

a2784c533e | ||

|

|

8e3ee3439c | ||

|

|

f9d40cfe1b | ||

|

|

b26b49fac2 | ||

|

|

f68d647fd0 | ||

|

|

deb3fb01a2 | ||

|

|

3accd23a19 | ||

|

|

6a66113560 | ||

|

|

6a7f7415fa | ||

|

|

4654f2cba9 | ||

|

|

17557dc206 | ||

|

|

39a3898234 | ||

|

|

9372cf9f18 | ||

|

|

41e427d645 | ||

|

|

84ff6f7e89 | ||

|

|

a286625ad9 | ||

|

|

d7999e6627 | ||

|

|

41481c1ca8 | ||

|

|

e926486b0f | ||

|

|

3ad55c9750 | ||

|

|

06c4151ec4 | ||

|

|

51605d9e04 | ||

|

|

438b558809 | ||

|

|

293c44b2a5 | ||

|

|

55de241f48 | ||

|

|

fda1d32dec | ||

|

|

b8d5295690 | ||

|

|

fdc8dd8795 | ||

|

|

99b3775926 | ||

|

|

f0f44c9d35 | ||

|

|

d7b878f980 | ||

|

|

d8c8b90a95 | ||

|

|

8be3d82ae9 | ||

|

|

fd778be943 | ||

|

|

51936bdc89 | ||

|

|

2935fad54d | ||

|

|

c9257bdb99 | ||

|

|

90a6ace666 | ||

|

|

5eb2b5211c | ||

|

|

2ed9ddcdf8 | ||

|

|

92f4be79ac | ||

|

|

593f450093 | ||

|

|

d20efe4509 | ||

|

|

fcac992e71 | ||

|

|

d59663810c | ||

|

|

37abdbb774 | ||

|

|

a5909682cd | ||

|

|

927b4324ae | ||

|

|

1ae72dafca | ||

|

|

392865ec59 | ||

|

|

3f1af1ec85 | ||

|

|

487432d873 | ||

|

|

a1ff44454a | ||

|

|

05b0557430 | ||

|

|

975b84a2eb | ||

|

|

3e845f1a29 | ||

|

|

49a266c207 | ||

|

|

7284e55eb0 | ||

|

|

b82fd5e5a5 | ||

|

|

4b084cf685 | ||

|

|

e6c740d917 | ||

|

|

cb93a9a158 | ||

|

|

3ea65ea1ad | ||

|

|

70d91bb399 | ||

|

|

f5fa18d7ca | ||

|

|

cb24c74769 | ||

|

|

775dc91ede | ||

|

|

ffef8ba4d9 | ||

|

|

ef2df0d7bc | ||

|

|

f0bf5219d4 | ||

|

|

c8a1165db2 | ||

|

|

e9c3518ce7 | ||

|

|

a5ea6587c0 | ||

|

|

2792835d66 | ||

|

|

16867db1b4 | ||

|

|

164bbb884d | ||

|

|

873141b9ca | ||

|

|

4a66a28c01 | ||

|

|

c886c1db62 | ||

|

|

f2d121a13b | ||

|

|

f255938780 | ||

|

|

aa17367de7 | ||

|

|

d5df6df661 | ||

|

|

ec6004963c | ||

|

|

61d272dbbc | ||

|

|

6be97b3fc7 | ||

|

|

6f95275051 | ||

|

|

49829fc80f | ||

|

|

9112c5a064 | ||

|

|

73c4833697 | ||

|

|

f3ca6266ab | ||

|

|

03acb869b7 | ||

|

|

8470d728f9 | ||

|

|

71965b199b | ||

|

|

fd47d385c2 | ||

|

|

dfdd8cffd7 | ||

|

|

138c42ec3a | ||

|

|

e53723c9c2 | ||

|

|

03be6a58ac | ||

|

|

864f362f7d | ||

|

|

bfb329b2f3 | ||

|

|

4ce65be0c6 | ||

|

|

6ab598d71b | ||

|

|

06b8ab4096 | ||

|

|

596bb6487c | ||

|

|

fa33a3e4bb | ||

|

|

abecb891dd | ||

|

|

fa14cebbf4 | ||

|

|

bde24d28b7 | ||

|

|

c0b400eb7c | ||

|

|

4772c91835 | ||

|

|

6da8d919b3 | ||

|

|

10b5504446 | ||

|

|

76b73a6319 | ||

|

|

0bebfbbb23 | ||

|

|

8cb147920b | ||

|

|

5cb343d89f | ||

|

|

67f34f1b29 | ||

|

|

349d781085 | ||

|

|

d7524414ce | ||

|

|

2e6cccaa90 | ||

|

|

565b99e210 | ||

|

|

5eb37b8f76 | ||

|

|

41cb713367 | ||

|

|

b29fe1f13b | ||

|

|

339780dcc4 | ||

|

|

56c77085bf | ||

|

|

118cac5941 | ||

|

|

7027f18394 | ||

|

|

99a85205f0 | ||

|

|

4b8d8718c2 | ||

|

|

0c4dd94e38 | ||

|

|

52cec59ca3 | ||

|

|

2c1ba42bcc | ||

|

|

6d204b52ce | ||

|

|

55c3745ef8 | ||

|

|

c876f879de | ||

|

|

f2beab1fdc | ||

|

|

85af1abb26 | ||

|

|

4f54901d08 | ||

|

|

98b10866bf | ||

|

|

ccf4dc55e9 | ||

|

|

8ce90e1814 | ||

|

|

2b6047d124 | ||

|

|

05e832ed55 | ||

|

|

45fddab9a9 | ||

|

|

34e9c0da6b | ||

|

|

9891375c20 | ||

|

|

377f145a3f | ||

|

|

6c7fff080f | ||

|

|

b1a168c5f2 | ||

|

|

d15df9ae88 | ||

|

|

c7d93d9ca7 | ||

|

|

0ae4ccede1 | ||

|

|

c6aa66ab94 | ||

|

|

2e10d8bf05 | ||

|

|

f64295bcee | ||

|

|

3fe8119e0c | ||

|

|

b6880213ce | ||

|

|

aca6b2b558 | ||

|

|

aa33af25fc | ||

|

|

aa3a93da98 | ||

|

|

b42db67d85 | ||

|

|

0d2163cd94 | ||

|

|

371e177ff3 | ||

|

|

e62668ab48 | ||

|

|

005e3928e7 | ||

|

|

0d5b2a2277 | ||

|

|

bc8cfa91ee | ||

|

|

63b217faee | ||

|

|

376bf194b3 | ||

|

|

a69e9abf3c | ||

|

|

c22529bbd0 | ||

|

|

6fd8498f6d | ||

|

|

39cce0196f | ||

|

|

4e39e5608c | ||

|

|

ba4d16fd76 | ||

|

|

b9f14ee57a | ||

|

|

a3f791be17 | ||

|

|

41497c73f4 | ||

|

|

2e1b3fc8de | ||

|

|

44cf4d08e9 | ||

|

|

ca07b47523 | ||

|

|

8fceafc017 | ||

|

|

47dcf6a7b9 | ||

|

|

c63ec2d95d | ||

|

|

f4aeb98744 | ||

|

|

e6aefb8f4b | ||

|

|

6cf1f35eca | ||

|

|

cff742d7c4 | ||

|

|

67f8f414bf | ||

|

|

ecf73e967a | ||

|

|

7f8986a06d | ||

|

|

ec6aab2c8d | ||

|

|

b8625d5e1e | ||

|

|

0fa4654034 | ||

|

|

6349dbf5c0 | ||

|

|

c8cec8e18b | ||

|

|

70114e3fd3 | ||

|

|

cd75c5fa25 | ||

|

|

1535f7aa41 | ||

|

|

90abb7ba5b | ||

|

|

e6739711b0 | ||

|

|

333780e78b | ||

|

|

38777801de | ||

|

|

3750ed850c | ||

|

|

fda53fbf80 | ||

|

|

c8a472c01b | ||

|

|

ccd64a3df9 | ||

|

|

2ea13cec88 | ||

|

|

5afc800b11 | ||

|

|

1fb898ac22 | ||

|

|

73b7fc1cfc | ||

|

|

b25ff35e5b | ||

|

|

4fe4053cdd | ||

|

|

ed70160583 | ||

|

|

bb00f8cabd | ||

|

|

7bef999c41 | ||

|

|

a2774d92da | ||

|

|

be9b03d99b | ||

|

|

b4af9e5f32 | ||

|

|

3ba2762805 | ||

|

|

2884a80d31 | ||

|

|

54266acfb1 | ||

|

|

9cb44815c4 | ||

|

|

27b2616330 | ||

|

|

8ed729cd54 | ||

|

|

34f7bca33c | ||

|

|

fee442ffe0 | ||

|

|

eb890ef174 | ||

|

|

24c61df388 | ||

|

|

bfb3331457 | ||

|

|

7fc6f8a04d | ||

|

|

3c37020260 | ||

|

|

d05b684dbe | ||

|

|

da978254b1 | ||

|

|

0cfeceb3c9 | ||

|

|

814aee8f4f | ||

|

|

48bfb062d8 | ||

|

|

08be31f022 |

@@ -1,296 +0,0 @@

|

||||

version: 2.1

|

||||

jobs:

|

||||

|

||||

build-binary:

|

||||

docker:

|

||||

- image: circleci/golang:1.14

|

||||

working_directory: ~/build

|

||||

steps:

|

||||

- checkout

|

||||

- restore_cache:

|

||||

keys:

|

||||

- go-mod-v3-{{ checksum "go.sum" }}

|

||||

- run:

|

||||

name: Run go mod download

|

||||

command: go mod download

|

||||

- run:

|

||||

name: Check code formatting

|

||||

command: go install golang.org/x/tools/cmd/goimports && make test-fmt

|

||||

- run:

|

||||

name: Build Flagger

|

||||

command: |

|

||||

CGO_ENABLED=0 GOOS=linux go build \

|

||||

-ldflags "-s -w -X github.com/weaveworks/flagger/pkg/version.REVISION=${CIRCLE_SHA1}" \

|

||||

-a -installsuffix cgo -o bin/flagger ./cmd/flagger/*.go

|

||||

- run:

|

||||

name: Build Flagger load tester

|

||||

command: |

|

||||

CGO_ENABLED=0 GOOS=linux go build \

|

||||

-a -installsuffix cgo -o bin/loadtester ./cmd/loadtester/*.go

|

||||

- run:

|

||||

name: Run unit tests

|

||||

command: |

|

||||

go test -race -coverprofile=coverage.txt -covermode=atomic $(go list ./pkg/...)

|

||||

bash <(curl -s https://codecov.io/bash)

|

||||

- run:

|

||||

name: Verify code gen

|

||||

command: make test-codegen

|

||||

- save_cache:

|

||||

key: go-mod-v3-{{ checksum "go.sum" }}

|

||||

paths:

|

||||

- "/go/pkg/mod/"

|

||||

- persist_to_workspace:

|

||||

root: bin

|

||||

paths:

|

||||

- flagger

|

||||

- loadtester

|

||||

|

||||

push-container:

|

||||

docker:

|

||||

- image: circleci/golang:1.14

|

||||

steps:

|

||||

- checkout

|

||||

- setup_remote_docker:

|

||||

docker_layer_caching: true

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/container-push.sh

|

||||

|

||||

push-binary:

|

||||

docker:

|

||||

- image: circleci/golang:1.14

|

||||

working_directory: ~/build

|

||||

steps:

|

||||

- checkout

|

||||

- setup_remote_docker:

|

||||

docker_layer_caching: true

|

||||

- restore_cache:

|

||||

keys:

|

||||

- go-mod-v3-{{ checksum "go.sum" }}

|

||||

- run: make release-notes

|

||||

- run: github-release-notes -org weaveworks -repo flagger -since-latest-release -include-author > /tmp/release.txt

|

||||

- run: test/goreleaser.sh

|

||||

|

||||

e2e-kubernetes-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh v1.18.2

|

||||

- run: test/e2e-kubernetes.sh

|

||||

- run: test/e2e-kubernetes-tests-deployment.sh

|

||||

- run: test/e2e-kubernetes-cleanup.sh

|

||||

- run: test/e2e-kubernetes-tests-daemonset.sh

|

||||

|

||||

e2e-istio-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh v1.18.2

|

||||

- run: test/e2e-istio.sh

|

||||

- run: test/e2e-istio-dependencies.sh

|

||||

- run: test/e2e-istio-tests.sh

|

||||

- run: test/e2e-istio-tests-skip-analysis.sh

|

||||

- run: test/e2e-kubernetes-cleanup.sh

|

||||

- run: test/e2e-istio-dependencies.sh

|

||||

- run: test/e2e-istio-tests-delegate.sh

|

||||

|

||||

e2e-gloo-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh

|

||||

- run: test/e2e-gloo.sh

|

||||

- run: test/e2e-gloo-tests.sh

|

||||

|

||||

e2e-nginx-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh

|

||||

- run: test/e2e-nginx.sh

|

||||

- run: test/e2e-nginx-tests.sh

|

||||

- run: test/e2e-nginx-cleanup.sh

|

||||

- run: test/e2e-nginx-custom-annotations.sh

|

||||

- run: test/e2e-nginx-tests.sh

|

||||

|

||||

e2e-linkerd-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh

|

||||

- run: test/e2e-linkerd.sh

|

||||

- run: test/e2e-linkerd-tests.sh

|

||||

|

||||

e2e-contour-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh

|

||||

- run: test/e2e-contour.sh

|

||||

- run: test/e2e-contour-tests.sh

|

||||

|

||||

e2e-skipper-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh

|

||||

- run: test/e2e-skipper.sh

|

||||

- run: test/e2e-skipper-tests.sh

|

||||

- run: test/e2e-skipper-cleanup.sh

|

||||

|

||||

e2e-traefik-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh

|

||||

- run: test/e2e-traefik.sh

|

||||

- run: test/e2e-traefik-tests.sh

|

||||

- run: test/e2e-skipper-cleanup.sh

|

||||

|

||||

push-helm-charts:

|

||||

docker:

|

||||

- image: circleci/golang:1.14

|

||||

steps:

|

||||

- checkout

|

||||

- run:

|

||||

name: Install kubectl

|

||||

command: sudo curl -L https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/linux/amd64/kubectl -o /usr/local/bin/kubectl && sudo chmod +x /usr/local/bin/kubectl

|

||||

- run:

|

||||

name: Install helm

|

||||

command: sudo curl -L https://storage.googleapis.com/kubernetes-helm/helm-v2.14.2-linux-amd64.tar.gz | tar xz && sudo mv linux-amd64/helm /bin/helm && sudo rm -rf linux-amd64

|

||||

- run:

|

||||

name: Initialize helm

|

||||

command: helm init --client-only --kubeconfig=$HOME/.kube/kubeconfig

|

||||

- run:

|

||||

name: Lint charts

|

||||

command: |

|

||||

helm lint ./charts/*

|

||||

- run:

|

||||

name: Package charts

|

||||

command: |

|

||||

mkdir $HOME/charts

|

||||

helm package ./charts/* --destination $HOME/charts

|

||||

- run:

|

||||

name: Publish charts

|

||||

command: |

|

||||

if echo "${CIRCLE_TAG}" | grep v; then

|

||||

REPOSITORY="https://weaveworksbot:${GITHUB_TOKEN}@github.com/weaveworks/flagger.git"

|

||||

git config user.email weaveworksbot@users.noreply.github.com

|

||||

git config user.name weaveworksbot

|

||||

git remote set-url origin ${REPOSITORY}

|

||||

git checkout gh-pages

|

||||

mv -f $HOME/charts/*.tgz .

|

||||

helm repo index . --url https://flagger.app

|

||||

git add .

|

||||

git commit -m "Publish Helm charts v${CIRCLE_TAG}"

|

||||

git push origin gh-pages

|

||||

else

|

||||

echo "Not a release! Skip charts publish"

|

||||

fi

|

||||

|

||||

workflows:

|

||||

version: 2

|

||||

build-test-push:

|

||||

jobs:

|

||||

- build-binary:

|

||||

filters:

|

||||

branches:

|

||||

ignore:

|

||||

- gh-pages

|

||||

- /^user-.*/

|

||||

- e2e-kubernetes-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- e2e-istio-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- e2e-gloo-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- e2e-nginx-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- e2e-linkerd-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- e2e-contour-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- e2e-skipper-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- e2e-traefik-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- push-container:

|

||||

requires:

|

||||

- build-binary

|

||||

- e2e-kubernetes-testing

|

||||

- e2e-istio-testing

|

||||

- e2e-gloo-testing

|

||||

- e2e-nginx-testing

|

||||

- e2e-linkerd-testing

|

||||

- e2e-skipper-testing

|

||||

- e2e-traefik-testing

|

||||

filters:

|

||||

branches:

|

||||

only:

|

||||

- master

|

||||

|

||||

release:

|

||||

jobs:

|

||||

- build-binary:

|

||||

filters:

|

||||

branches:

|

||||

ignore: /.*/

|

||||

tags:

|

||||

ignore: /^chart.*/

|

||||

- push-container:

|

||||

requires:

|

||||

- build-binary

|

||||

filters:

|

||||

branches:

|

||||

ignore: /.*/

|

||||

tags:

|

||||

ignore: /^chart.*/

|

||||

- push-binary:

|

||||

requires:

|

||||

- push-container

|

||||

filters:

|

||||

branches:

|

||||

ignore: /.*/

|

||||

tags:

|

||||

ignore: /^chart.*/

|

||||

- push-helm-charts:

|

||||

requires:

|

||||

- push-container

|

||||

filters:

|

||||

branches:

|

||||

ignore: /.*/

|

||||

tags:

|

||||

ignore: /^chart.*/

|

||||

50

.cosign/README.md

Normal file

@@ -0,0 +1,50 @@

|

||||

# Flagger signed releases

|

||||

|

||||

Flagger releases published to GitHub Container Registry as multi-arch container images

|

||||

are signed using [cosign](https://github.com/sigstore/cosign).

|

||||

|

||||

## Verify Flagger images

|

||||

|

||||

Install the [cosign](https://github.com/sigstore/cosign) CLI:

|

||||

|

||||

```sh

|

||||

brew install sigstore/tap/cosign

|

||||

```

|

||||

|

||||

Verify a Flagger release with cosign CLI:

|

||||

|

||||

```sh

|

||||

cosign verify -key https://raw.githubusercontent.com/fluxcd/flagger/main/cosign/cosign.pub \

|

||||

ghcr.io/fluxcd/flagger:1.13.0

|

||||

```

|

||||

|

||||

Verify Flagger images before they get pulled on your Kubernetes clusters with [Kyverno](https://github.com/kyverno/kyverno/):

|

||||

|

||||

```yaml

|

||||

apiVersion: kyverno.io/v1

|

||||

kind: ClusterPolicy

|

||||

metadata:

|

||||

name: verify-flagger-image

|

||||

annotations:

|

||||

policies.kyverno.io/title: Verify Flagger Image

|

||||

policies.kyverno.io/category: Cosign

|

||||

policies.kyverno.io/severity: medium

|

||||

policies.kyverno.io/subject: Pod

|

||||

policies.kyverno.io/minversion: 1.4.2

|

||||

spec:

|

||||

validationFailureAction: enforce

|

||||

background: false

|

||||

rules:

|

||||

- name: verify-image

|

||||

match:

|

||||

resources:

|

||||

kinds:

|

||||

- Pod

|

||||

verifyImages:

|

||||

- image: "ghcr.io/fluxcd/flagger:*"

|

||||

key: |-

|

||||

-----BEGIN PUBLIC KEY-----

|

||||

MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEST+BqQ1XZhhVYx0YWQjdUJYIG5Lt

|

||||

iz2+UxRIqmKBqNmce2T+l45qyqOs99qfD7gLNGmkVZ4vtJ9bM7FxChFczg==

|

||||

-----END PUBLIC KEY-----

|

||||

```

|

||||

11

.cosign/cosign.key

Normal file

@@ -0,0 +1,11 @@

|

||||

-----BEGIN ENCRYPTED COSIGN PRIVATE KEY-----

|

||||

eyJrZGYiOnsibmFtZSI6InNjcnlwdCIsInBhcmFtcyI6eyJOIjozMjc2OCwiciI6

|

||||

OCwicCI6MX0sInNhbHQiOiIvK1MwbTNrU3pGMFFXdVVYQkFoY2gvTDc3NVJBSy9O

|

||||

cnkzUC9iMkxBZGF3PSJ9LCJjaXBoZXIiOnsibmFtZSI6Im5hY2wvc2VjcmV0Ym94

|

||||

Iiwibm9uY2UiOiJBNEFYL2IyU1BsMDBuY3JUNk45QkNOb0VLZTZLZEluRCJ9LCJj

|

||||

aXBoZXJ0ZXh0IjoiZ054UlJweXpraWtRMUVaRldsSnEvQXVUWTl0Vis2enBlWkIy

|

||||

dUFHREMzOVhUQlAwaWY5YStaZTE1V0NTT2FQZ01XQmtSZWhrQVVjQ3dZOGF2WTZa

|

||||

eFhZWWE3T1B4eFdidHJuSUVZM2hwZUk1M1dVQVZ6SXEzQjl0N0ZmV1JlVGsxdFlo

|

||||

b3hwQmxUSHY4U0c2azdPYk1aQnJleitzSGRWclF6YUdMdG12V1FOMTNZazRNb25i

|

||||

ZUpRSUJpUXFQTFg5NzFhSUlxU0dxYVhCanc9PSJ9

|

||||

-----END ENCRYPTED COSIGN PRIVATE KEY-----

|

||||

4

.cosign/cosign.pub

Normal file

@@ -0,0 +1,4 @@

|

||||

-----BEGIN PUBLIC KEY-----

|

||||

MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEST+BqQ1XZhhVYx0YWQjdUJYIG5Lt

|

||||

iz2+UxRIqmKBqNmce2T+l45qyqOs99qfD7gLNGmkVZ4vtJ9bM7FxChFczg==

|

||||

-----END PUBLIC KEY-----

|

||||

@@ -13,3 +13,4 @@ redirects:

|

||||

usage/skipper-progressive-delivery: tutorials/skipper-progressive-delivery.md

|

||||

usage/crossover-progressive-delivery: tutorials/crossover-progressive-delivery.md

|

||||

usage/traefik-progressive-delivery: tutorials/traefik-progressive-delivery.md

|

||||

usage/osm-progressive-delivery: tutorials/osm-progressive-delivery.md

|

||||

|

||||

29

.github/ISSUE_TEMPLATE/bug_report.md

vendored

Normal file

@@ -0,0 +1,29 @@

|

||||

---

|

||||

name: Bug report

|

||||

about: Create a report to help us improve this project

|

||||

title: ''

|

||||

assignees: ''

|

||||

|

||||

---

|

||||

|

||||

### Describe the bug

|

||||

|

||||

A clear and concise description of what the bug is.

|

||||

Please provide the Canary definition and Flagger logs.

|

||||

|

||||

### To Reproduce

|

||||

|

||||

<!--

|

||||

Steps to reproduce the behaviour

|

||||

-->

|

||||

|

||||

### Expected behavior

|

||||

|

||||

A clear and concise description of what you expected to happen.

|

||||

|

||||

### Additional context

|

||||

|

||||

- Flagger version:

|

||||

- Kubernetes version:

|

||||

- Service Mesh provider:

|

||||

- Ingress provider:

|

||||

2

.github/ISSUE_TEMPLATE/config.yml

vendored

Normal file

@@ -0,0 +1,2 @@

|

||||

blank_issues_enabled: true

|

||||

|

||||

19

.github/ISSUE_TEMPLATE/feature_request.md

vendored

Normal file

@@ -0,0 +1,19 @@

|

||||

---

|

||||

name: Feature Request

|

||||

about: I have a suggestion (and may want to implement it 🙂)!

|

||||

title: ''

|

||||

assignees: ''

|

||||

|

||||

---

|

||||

|

||||

## Describe the feature

|

||||

|

||||

What problem are you trying to solve?

|

||||

|

||||

### Proposed solution

|

||||

|

||||

What do you want to happen? Add any considered drawbacks.

|

||||

|

||||

### Any alternatives you've considered?

|

||||

|

||||

Is there another way to solve this problem that isn't as good a solution?

|

||||

17

.github/_main.workflow

vendored

@@ -1,17 +0,0 @@

|

||||

workflow "Publish Helm charts" {

|

||||

on = "push"

|

||||

resolves = ["helm-push"]

|

||||

}

|

||||

|

||||

action "helm-lint" {

|

||||

uses = "stefanprodan/gh-actions/helm@master"

|

||||

args = ["lint charts/*"]

|

||||

}

|

||||

|

||||

action "helm-push" {

|

||||

needs = ["helm-lint"]

|

||||

uses = "stefanprodan/gh-actions/helm-gh-pages@master"

|

||||

args = ["charts/*","https://flagger.app"]

|

||||

secrets = ["GITHUB_TOKEN"]

|

||||

}

|

||||

|

||||

49

.github/workflows/build.yaml

vendored

Normal file

@@ -0,0 +1,49 @@

|

||||

name: build

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

pull_request:

|

||||

branches:

|

||||

- main

|

||||

push:

|

||||

branches:

|

||||

- main

|

||||

|

||||

jobs:

|

||||

container:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v2

|

||||

- name: Restore Go cache

|

||||

uses: actions/cache@v1

|

||||

with:

|

||||

path: ~/go/pkg/mod

|

||||

key: ${{ runner.os }}-go-${{ hashFiles('**/go.sum') }}

|

||||

restore-keys: |

|

||||

${{ runner.os }}-go-

|

||||

- name: Setup Go

|

||||

uses: actions/setup-go@v2

|

||||

with:

|

||||

go-version: 1.16.x

|

||||

- name: Download modules

|

||||

run: |

|

||||

go mod download

|

||||

go install golang.org/x/tools/cmd/goimports

|

||||

- name: Run linters

|

||||

run: make test-fmt test-codegen

|

||||

- name: Run tests

|

||||

run: go test -race -coverprofile=coverage.txt -covermode=atomic $(go list ./pkg/...)

|

||||

- name: Check if working tree is dirty

|

||||

run: |

|

||||

if [[ $(git diff --stat) != '' ]]; then

|

||||

git --no-pager diff

|

||||

echo 'run make test and commit changes'

|

||||

exit 1

|

||||

fi

|

||||

- name: Upload coverage to Codecov

|

||||

uses: codecov/codecov-action@v1

|

||||

with:

|

||||

file: ./coverage.txt

|

||||

- name: Build container image

|

||||

run: docker build -t test/flagger:latest .

|

||||

41

.github/workflows/e2e.yaml

vendored

Normal file

@@ -0,0 +1,41 @@

|

||||

name: e2e

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

pull_request:

|

||||

branches:

|

||||

- main

|

||||

push:

|

||||

branches:

|

||||

- main

|

||||

|

||||

jobs:

|

||||

kind:

|

||||

runs-on: ubuntu-latest

|

||||

strategy:

|

||||

matrix:

|

||||

provider:

|

||||

- istio

|

||||

- linkerd

|

||||

- contour

|

||||

- nginx

|

||||

- traefik

|

||||

- gloo

|

||||

- skipper

|

||||

- osm

|

||||

- kubernetes

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v2

|

||||

- name: Setup Kubernetes

|

||||

uses: engineerd/setup-kind@v0.5.0

|

||||

with:

|

||||

version: "v0.11.0"

|

||||

image: kindest/node:v1.21.1@sha256:fae9a58f17f18f06aeac9772ca8b5ac680ebbed985e266f711d936e91d113bad

|

||||

- name: Build container image

|

||||

run: |

|

||||

docker build -t test/flagger:latest .

|

||||

kind load docker-image test/flagger:latest

|

||||

- name: Run tests

|

||||

run: |

|

||||

./test/${{ matrix['provider'] }}/run.sh

|

||||

84

.github/workflows/release.yml

vendored

Normal file

@@ -0,0 +1,84 @@

|

||||

name: release

|

||||

on:

|

||||

push:

|

||||

tags:

|

||||

- 'v*'

|

||||

|

||||

jobs:

|

||||

build-push:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

- uses: sigstore/cosign-installer@main

|

||||

- name: Prepare

|

||||

id: prep

|

||||

run: |

|

||||

VERSION=$(grep 'VERSION' pkg/version/version.go | awk '{ print $4 }' | tr -d '"')

|

||||

CHANGELOG="https://github.com/fluxcd/flagger/blob/main/CHANGELOG.md#$(echo $VERSION | tr -d '.')"

|

||||

echo ::set-output name=BUILD_DATE::$(date -u +'%Y-%m-%dT%H:%M:%SZ')

|

||||

echo ::set-output name=VERSION::${VERSION}

|

||||

echo ::set-output name=CHANGELOG::${CHANGELOG}

|

||||

- name: Setup QEMU

|

||||

uses: docker/setup-qemu-action@v1

|

||||

with:

|

||||

platforms: all

|

||||

- name: Setup Docker Buildx

|

||||

id: buildx

|

||||

uses: docker/setup-buildx-action@v1

|

||||

with:

|

||||

buildkitd-flags: "--debug"

|

||||

- name: Login to GitHub Container Registry

|

||||

uses: docker/login-action@v1

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: fluxcdbot

|

||||

password: ${{ secrets.GHCR_TOKEN }}

|

||||

- name: Publish image

|

||||

uses: docker/build-push-action@v2

|

||||

with:

|

||||

push: true

|

||||

builder: ${{ steps.buildx.outputs.name }}

|

||||

context: .

|

||||

file: ./Dockerfile

|

||||

platforms: linux/amd64,linux/arm64,linux/arm/v7

|

||||

build-args: |

|

||||

REVISON=${{ github.sha }}

|

||||

tags: |

|

||||

ghcr.io/fluxcd/flagger:${{ steps.prep.outputs.VERSION }}

|

||||

labels: |

|

||||

org.opencontainers.image.title=${{ github.event.repository.name }}

|

||||

org.opencontainers.image.description=${{ github.event.repository.description }}

|

||||

org.opencontainers.image.url=${{ github.event.repository.html_url }}

|

||||

org.opencontainers.image.source=${{ github.event.repository.html_url }}

|

||||

org.opencontainers.image.revision=${{ github.sha }}

|

||||

org.opencontainers.image.version=${{ steps.prep.outputs.VERSION }}

|

||||

org.opencontainers.image.created=${{ steps.prep.outputs.BUILD_DATE }}

|

||||

- name: Sign image

|

||||

run: |

|

||||

echo -n "${{secrets.COSIGN_PASSWORD}}" | \

|

||||

cosign sign -key ./.cosign/cosign.key -a git_sha=$GITHUB_SHA \

|

||||

ghcr.io/fluxcd/flagger:${{ steps.prep.outputs.VERSION }}

|

||||

- name: Check images

|

||||

run: |

|

||||

docker buildx imagetools inspect ghcr.io/fluxcd/flagger:${{ steps.prep.outputs.VERSION }}

|

||||

- name: Verifiy image signature

|

||||

run: |

|

||||

cosign verify -key ./.cosign/cosign.pub \

|

||||

ghcr.io/fluxcd/flagger:${{ steps.prep.outputs.VERSION }}

|

||||

- name: Publish Helm charts

|

||||

uses: stefanprodan/helm-gh-pages@v1.3.0

|

||||

with:

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

charts_url: https://flagger.app

|

||||

linting: off

|

||||

- name: Create release

|

||||

uses: actions/create-release@latest

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

with:

|

||||

tag_name: ${{ github.ref }}

|

||||

release_name: ${{ github.ref }}

|

||||

draft: false

|

||||

prerelease: false

|

||||

body: |

|

||||

[CHANGELOG](${{ steps.prep.outputs.CHANGELOG }})

|

||||

37

.github/workflows/scan.yml

vendored

Normal file

@@ -0,0 +1,37 @@

|

||||

name: scan

|

||||

|

||||

on:

|

||||

push:

|

||||

branches: [ main ]

|

||||

pull_request:

|

||||

branches: [ main ]

|

||||

schedule:

|

||||

- cron: '18 10 * * 3'

|

||||

|

||||

jobs:

|

||||

fossa:

|

||||

name: FOSSA

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

- name: Run FOSSA scan and upload build data

|

||||

uses: fossa-contrib/fossa-action@v1

|

||||

with:

|

||||

# FOSSA Push-Only API Token

|

||||

fossa-api-key: 5ee8bf422db1471e0bcf2bcb289185de

|

||||

github-token: ${{ github.token }}

|

||||

|

||||

codeql:

|

||||

name: CodeQL

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v2

|

||||

- name: Initialize CodeQL

|

||||

uses: github/codeql-action/init@v1

|

||||

with:

|

||||

languages: go

|

||||

- name: Autobuild

|

||||

uses: github/codeql-action/autobuild@v1

|

||||

- name: Perform CodeQL Analysis

|

||||

uses: github/codeql-action/analyze@v1

|

||||

1

.gitignore

vendored

@@ -20,3 +20,4 @@ artifacts/gcloud/

|

||||

Makefile.dev

|

||||

|

||||

vendor

|

||||

coverage.txt

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

builds:

|

||||

- main: ./cmd/flagger

|

||||

binary: flagger

|

||||

ldflags: -s -w -X github.com/weaveworks/flagger/pkg/version.REVISION={{.Commit}}

|

||||

ldflags: -s -w -X github.com/fluxcd/flagger/pkg/version.REVISION={{.Commit}}

|

||||

goos:

|

||||

- linux

|

||||

goarch:

|

||||

@@ -12,7 +12,3 @@ archives:

|

||||

- name_template: "{{ .Binary }}_{{ .Version }}_{{ .Os }}_{{ .Arch }}"

|

||||

files:

|

||||

- none*

|

||||

changelog:

|

||||

filters:

|

||||

exclude:

|

||||

- '^CircleCI'

|

||||

|

||||

751

CHANGELOG.md

3

CODE_OF_CONDUCT.md

Normal file

@@ -0,0 +1,3 @@

|

||||

## Code of Conduct

|

||||

|

||||

Flagger follows the [CNCF Code of Conduct](https://github.com/cncf/foundation/blob/master/code-of-conduct.md).

|

||||

@@ -14,10 +14,28 @@ Origin (DCO). This document was created by the Linux Kernel community and is a

|

||||

simple statement that you, as a contributor, have the legal right to make the

|

||||

contribution.

|

||||

|

||||

## Chat

|

||||

We require all commits to be signed. By signing off with your signature, you

|

||||

certify that you wrote the patch or otherwise have the right to contribute the

|

||||

material by the rules of the [DCO](DCO):

|

||||

|

||||

`Signed-off-by: Jane Doe <jane.doe@example.com>`

|

||||

|

||||

The signature must contain your real name

|

||||

(sorry, no pseudonyms or anonymous contributions)

|

||||

If your `user.name` and `user.email` are configured in your Git config,

|

||||

you can sign your commit automatically with `git commit -s`.

|

||||

|

||||

## Communications

|

||||

|

||||

The project uses Slack: To join the conversation, simply join the

|

||||

[Weave community](https://slack.weave.works/) Slack workspace #flagger channel.

|

||||

[CNCF](https://slack.cncf.io/) Slack workspace and use the

|

||||

[#flagger](https://cloud-native.slack.com/messages/flagger/) channel.

|

||||

|

||||

The developers use a mailing list to discuss development as well.

|

||||

Simply subscribe to [flux-dev on cncf.io](https://lists.cncf.io/g/cncf-flux-dev)

|

||||

to join the conversation (this will also add an invitation to your

|

||||

Google calendar for our [Flux

|

||||

meeting](https://docs.google.com/document/d/1l_M0om0qUEN_NNiGgpqJ2tvsF2iioHkaARDeh6b70B0/edit#)).

|

||||

|

||||

## Getting Started

|

||||

|

||||

@@ -69,4 +87,3 @@ For Flagger we prefer the following rules for good commit messages:

|

||||

The [following article](https://chris.beams.io/posts/git-commit/#seven-rules)

|

||||

has some more helpful advice on documenting your work.

|

||||

|

||||

This doc is adapted from [FluxCD](https://github.com/fluxcd/flux/blob/master/CONTRIBUTING.md).

|

||||

|

||||

36

DCO

Normal file

@@ -0,0 +1,36 @@

|

||||

Developer Certificate of Origin

|

||||

Version 1.1

|

||||

|

||||

Copyright (C) 2004, 2006 The Linux Foundation and its contributors.

|

||||

660 York Street, Suite 102,

|

||||

San Francisco, CA 94110 USA

|

||||

|

||||

Everyone is permitted to copy and distribute verbatim copies of this

|

||||

license document, but changing it is not allowed.

|

||||

|

||||

|

||||

Developer's Certificate of Origin 1.1

|

||||

|

||||

By making a contribution to this project, I certify that:

|

||||

|

||||

(a) The contribution was created in whole or in part by me and I

|

||||

have the right to submit it under the open source license

|

||||

indicated in the file; or

|

||||

|

||||

(b) The contribution is based upon previous work that, to the best

|

||||

of my knowledge, is covered under an appropriate open source

|

||||

license and I have the right under that license to submit that

|

||||

work with modifications, whether created in whole or in part

|

||||

by me, under the same open source license (unless I am

|

||||

permitted to submit under a different license), as indicated

|

||||

in the file; or

|

||||

|

||||

(c) The contribution was provided directly to me by some other

|

||||

person who certified (a), (b) or (c) and I have not modified

|

||||

it.

|

||||

|

||||

(d) I understand and agree that this project and the contribution

|

||||

are public and that a record of the contribution (including all

|

||||

personal information I submit with it, including my sign-off) is

|

||||

maintained indefinitely and may be redistributed consistent with

|

||||

this project or the open source license(s) involved.

|

||||

27

Dockerfile

@@ -1,9 +1,32 @@

|

||||

FROM alpine:3.12

|

||||

FROM golang:1.16-alpine as builder

|

||||

|

||||

ARG TARGETPLATFORM

|

||||

ARG REVISON

|

||||

|

||||

WORKDIR /workspace

|

||||

|

||||

# copy modules manifests

|

||||

COPY go.mod go.mod

|

||||

COPY go.sum go.sum

|

||||

|

||||

# cache modules

|

||||

RUN go mod download

|

||||

|

||||

# copy source code

|

||||

COPY cmd/ cmd/

|

||||

COPY pkg/ pkg/

|

||||

|

||||

# build

|

||||

RUN CGO_ENABLED=0 go build \

|

||||

-ldflags "-s -w -X github.com/fluxcd/flagger/pkg/version.REVISION=${REVISON}" \

|

||||

-a -o flagger ./cmd/flagger

|

||||

|

||||

FROM alpine:3.13

|

||||

|

||||

RUN apk --no-cache add ca-certificates

|

||||

|

||||

USER nobody

|

||||

|

||||

COPY --chown=nobody:nobody /bin/flagger .

|

||||

COPY --from=builder --chown=nobody:nobody /workspace/flagger .

|

||||

|

||||

ENTRYPOINT ["./flagger"]

|

||||

|

||||

2

LICENSE

@@ -186,7 +186,7 @@

|

||||

same "printed page" as the copyright notice for easier

|

||||

identification within third-party archives.

|

||||

|

||||

Copyright 2018 Weaveworks. All rights reserved.

|

||||

Copyright [yyyy] [name of copyright owner]

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License");

|

||||

you may not use this file except in compliance with the License.

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

The maintainers are generally available in Slack at

|

||||

https://weave-community.slack.com/messages/flagger/ (obtain an invitation

|

||||

at https://slack.weave.works/).

|

||||

https://cloud-native.slack.com/messages/flagger/ (obtain an invitation

|

||||

at https://slack.cncf.io/).

|

||||

|

||||

Stefan Prodan, Weaveworks <stefan@weave.works> (Slack: @stefan Twitter: @stefanprodan)

|

||||

Takeshi Yoneda, DMM.com <cz.rk.t0415y.g@gmail.com> (Slack: @mathetake Twitter: @mathetake)

|

||||

|

||||

17

Makefile

@@ -3,14 +3,7 @@ VERSION?=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | t

|

||||

LT_VERSION?=$(shell grep 'VERSION' cmd/loadtester/main.go | awk '{ print $$4 }' | tr -d '"' | head -n1)

|

||||

|

||||

build:

|

||||

GIT_COMMIT=$$(git rev-list -1 HEAD) && CGO_ENABLED=0 GOOS=linux go build \

|

||||

-ldflags "-s -w -X github.com/weaveworks/flagger/pkg/version.REVISION=$${GIT_COMMIT}" \

|

||||

-a -installsuffix cgo -o ./bin/flagger ./cmd/flagger/*

|

||||

docker build -t weaveworks/flagger:$(TAG) . -f Dockerfile

|

||||

|

||||

push:

|

||||

docker tag weaveworks/flagger:$(TAG) weaveworks/flagger:$(VERSION)

|

||||

docker push weaveworks/flagger:$(VERSION)

|

||||

CGO_ENABLED=0 go build -a -o ./bin/flagger ./cmd/flagger

|

||||

|

||||

fmt:

|

||||

gofmt -l -s -w ./

|

||||

@@ -48,13 +41,9 @@ release:

|

||||

git tag "v$(VERSION)"

|

||||

git push origin "v$(VERSION)"

|

||||

|

||||

release-notes:

|

||||

cd /tmp && GH_REL_URL="https://github.com/buchanae/github-release-notes/releases/download/0.2.0/github-release-notes-linux-amd64-0.2.0.tar.gz" && \

|

||||

curl -sSL $${GH_REL_URL} | tar xz && sudo mv github-release-notes /usr/local/bin/

|

||||

|

||||

loadtester-build:

|

||||

CGO_ENABLED=0 GOOS=linux go build -a -installsuffix cgo -o ./bin/loadtester ./cmd/loadtester/*

|

||||

docker build -t weaveworks/flagger-loadtester:$(LT_VERSION) . -f Dockerfile.loadtester

|

||||

docker build -t ghcr.io/fluxcd/flagger-loadtester:$(LT_VERSION) . -f Dockerfile.loadtester

|

||||

|

||||

loadtester-push:

|

||||

docker push weaveworks/flagger-loadtester:$(LT_VERSION)

|

||||

docker push ghcr.io/fluxcd/flagger-loadtester:$(LT_VERSION)

|

||||

|

||||

80

README.md

@@ -1,21 +1,25 @@

|

||||

# flagger

|

||||

|

||||

[](https://circleci.com/gh/weaveworks/flagger)

|

||||

[](https://goreportcard.com/report/github.com/weaveworks/flagger)

|

||||

[](https://codecov.io/gh/weaveworks/flagger)

|

||||

[](https://github.com/weaveworks/flagger/blob/master/LICENSE)

|

||||

[](https://github.com/weaveworks/flagger/releases)

|

||||

[](https://bestpractices.coreinfrastructure.org/projects/4783)

|

||||

[](https://github.com/fluxcd/flagger/actions)

|

||||

[](https://goreportcard.com/report/github.com/fluxcd/flagger)

|

||||

[](https://github.com/fluxcd/flagger/blob/main/LICENSE)

|

||||

[](https://github.com/fluxcd/flagger/releases)

|

||||

|

||||

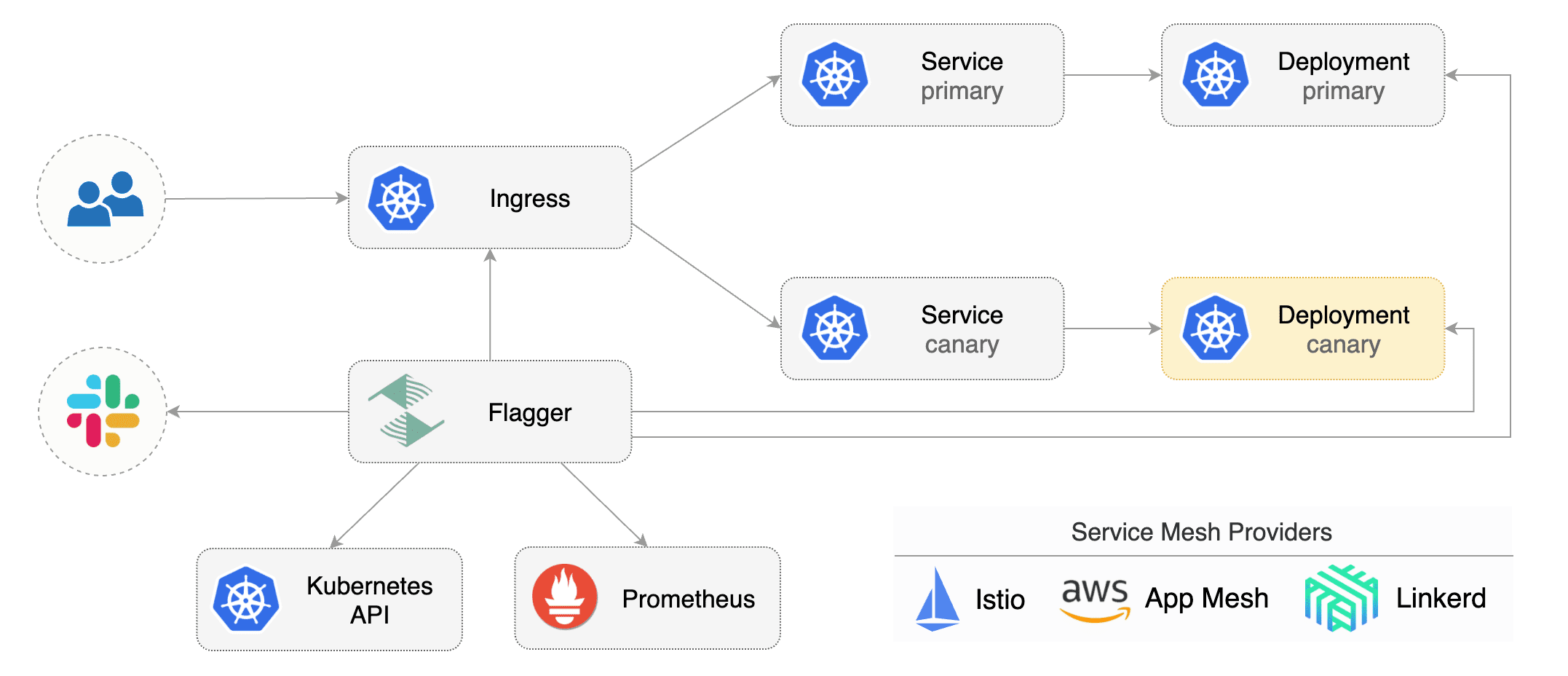

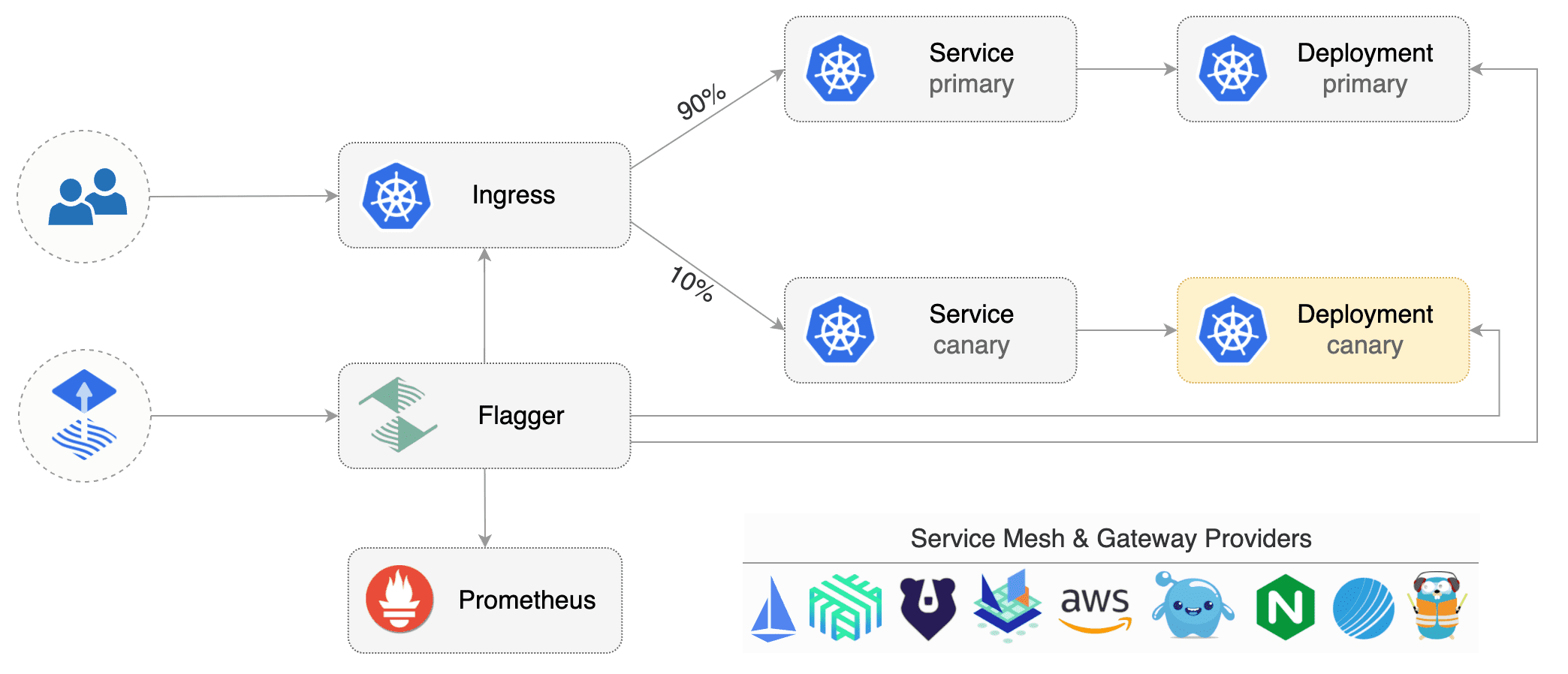

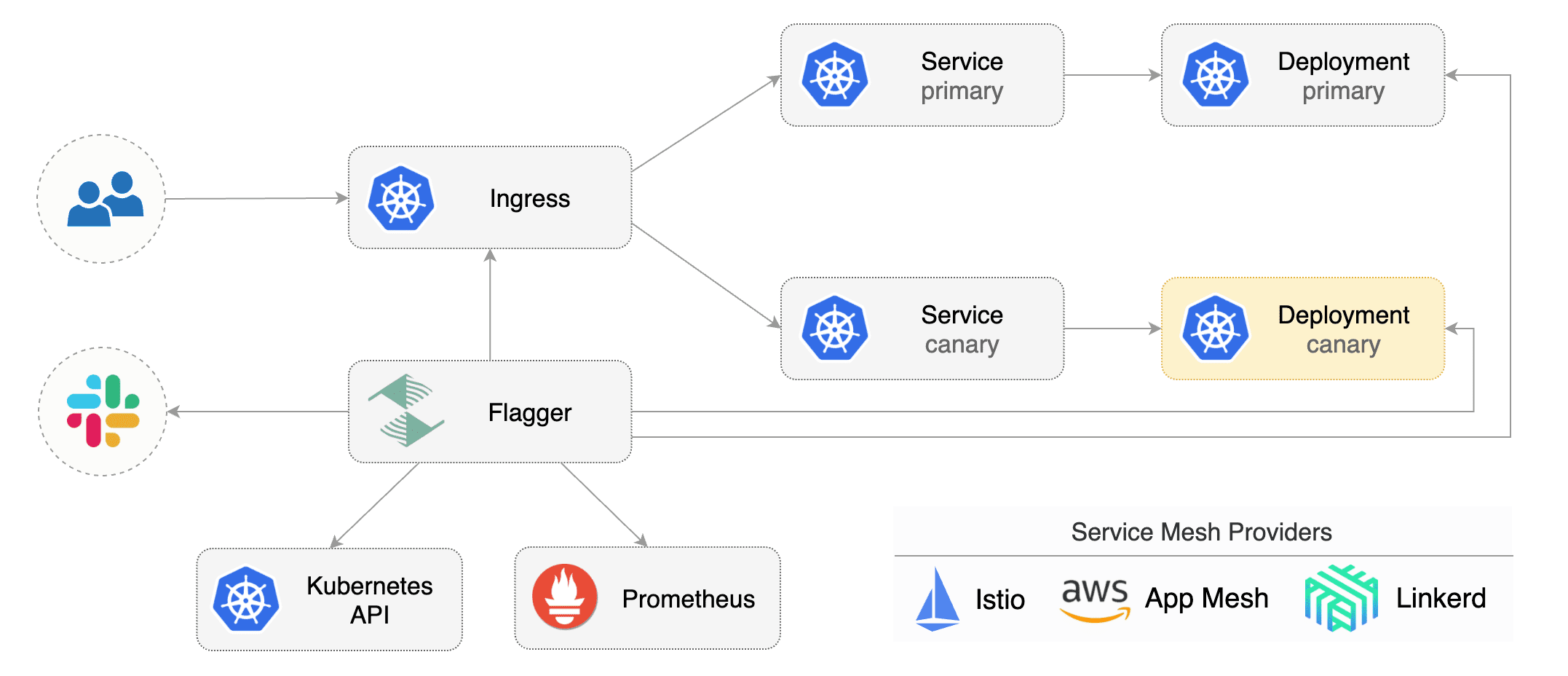

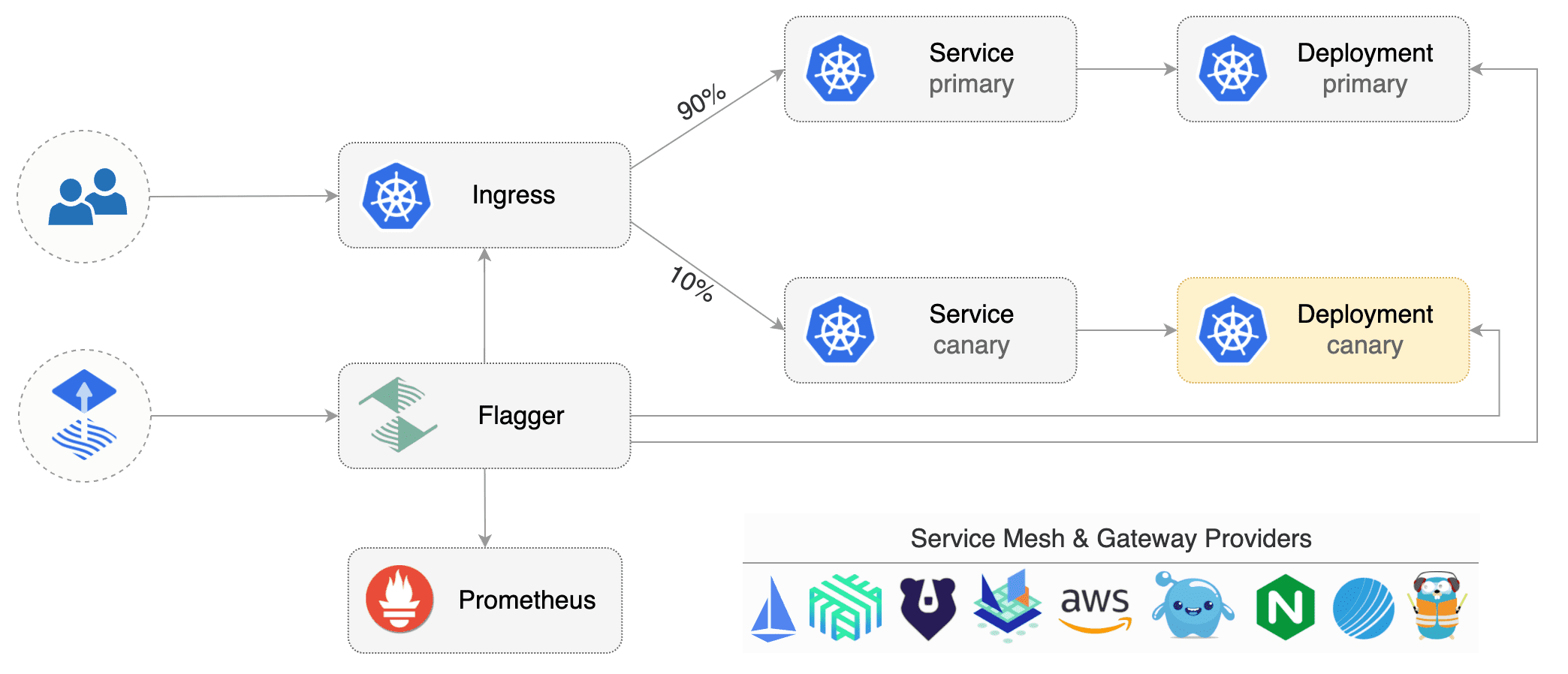

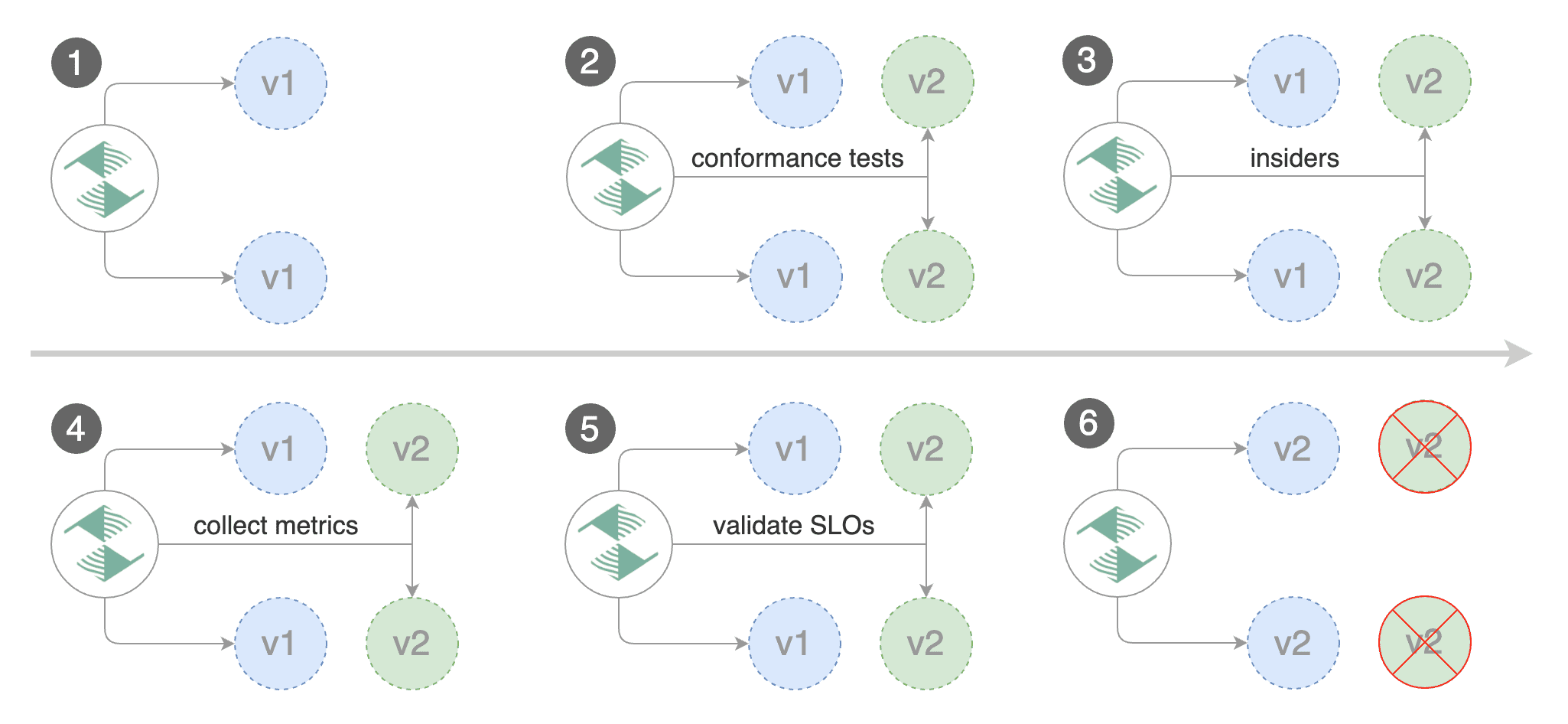

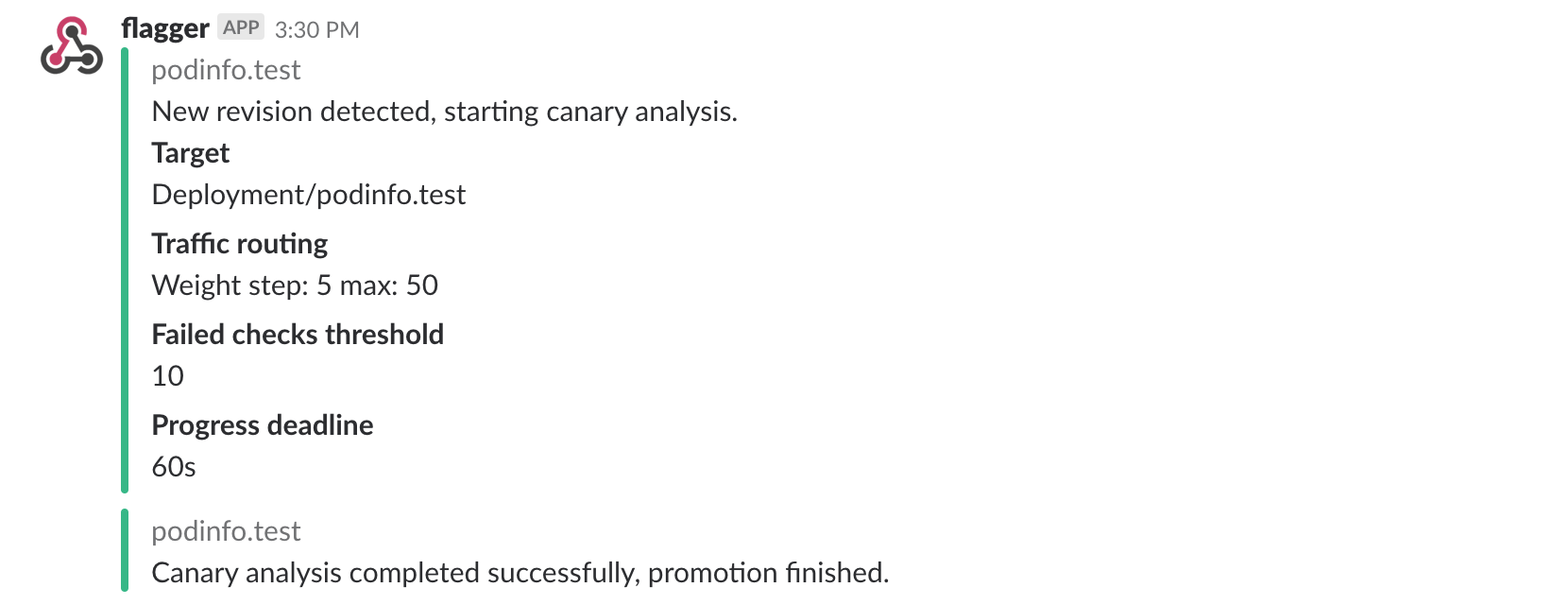

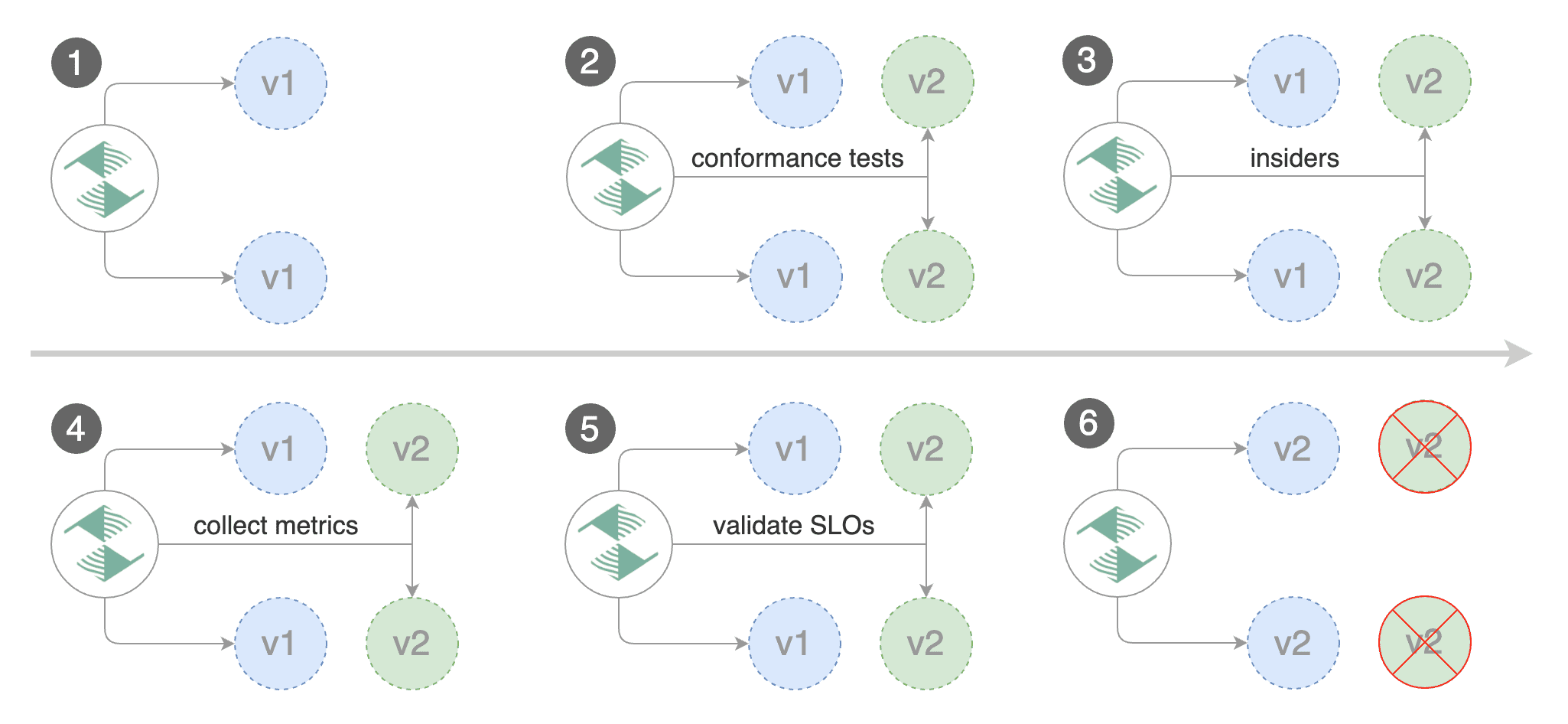

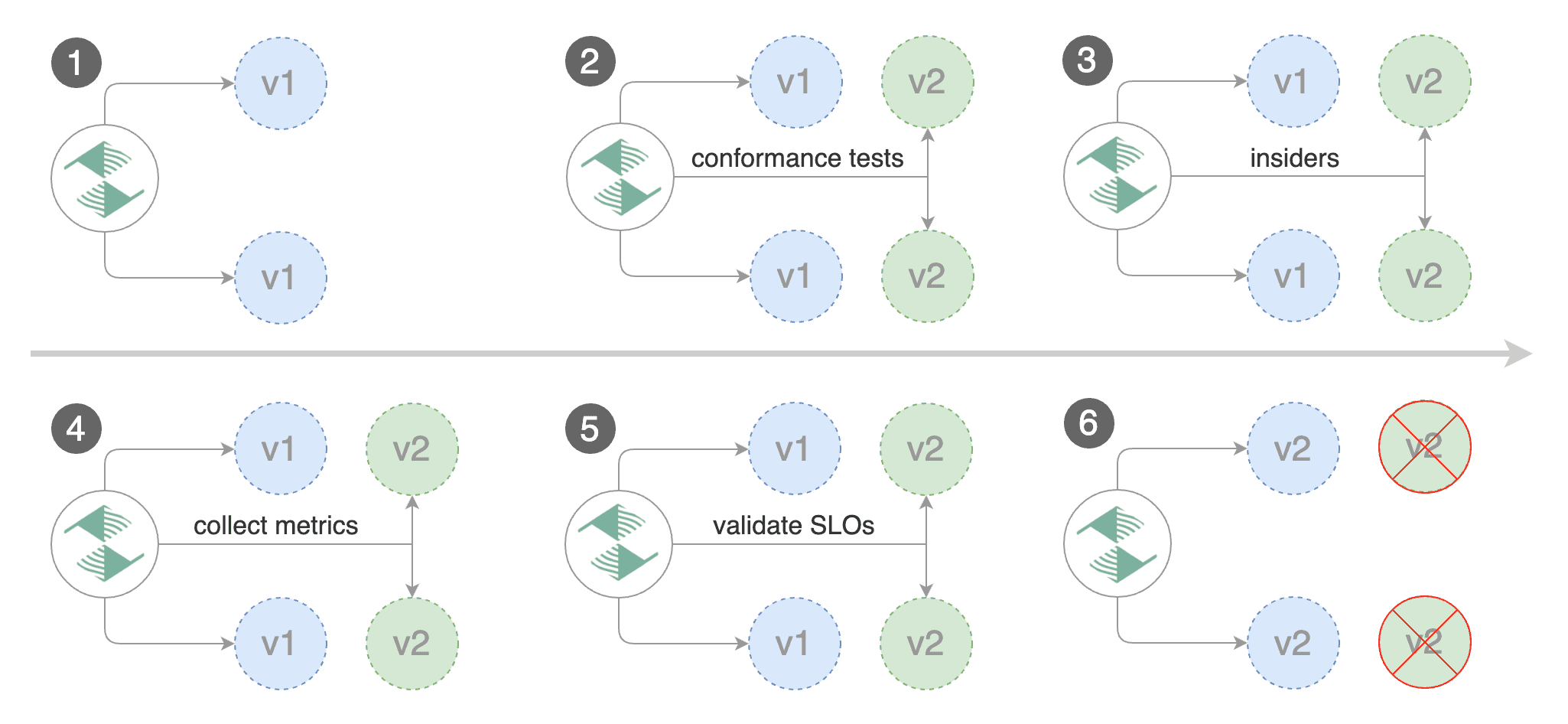

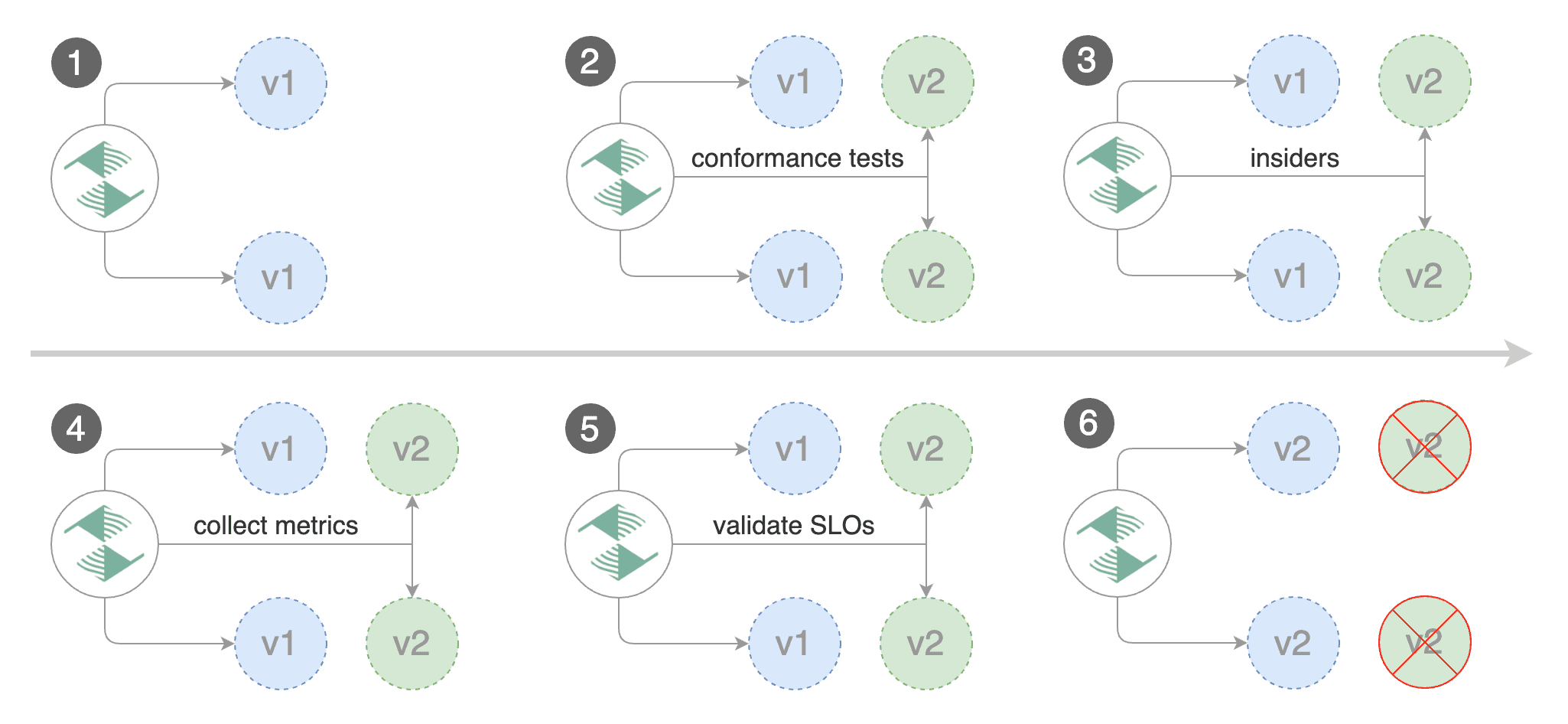

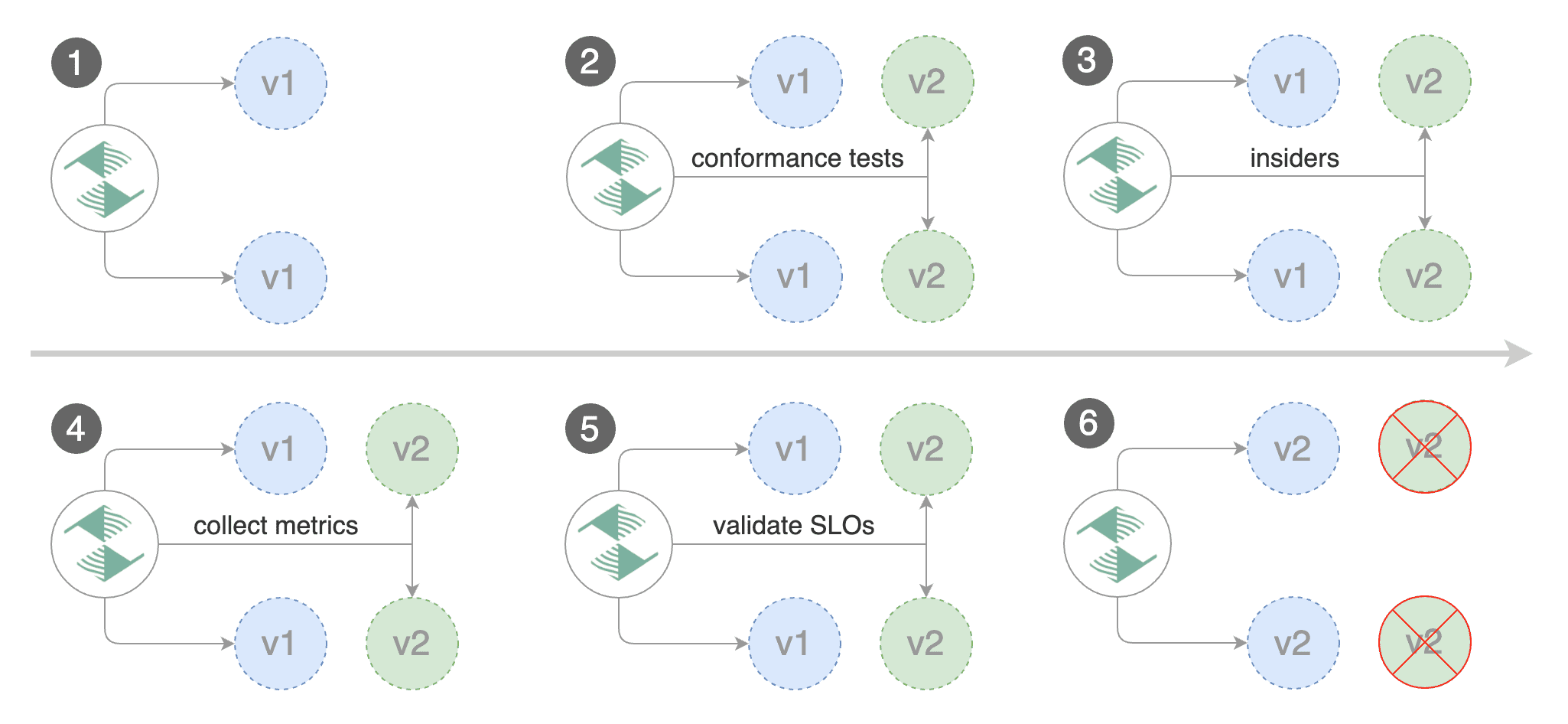

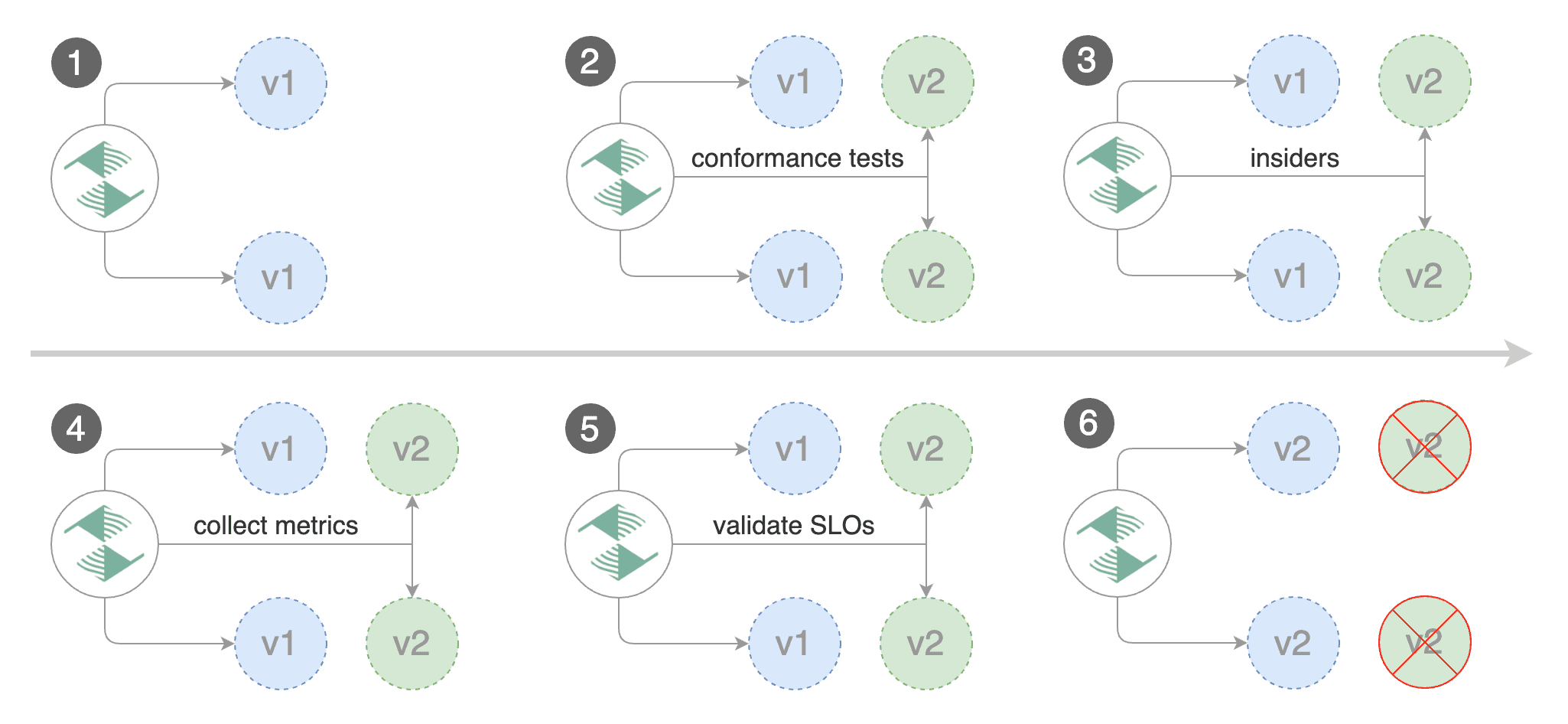

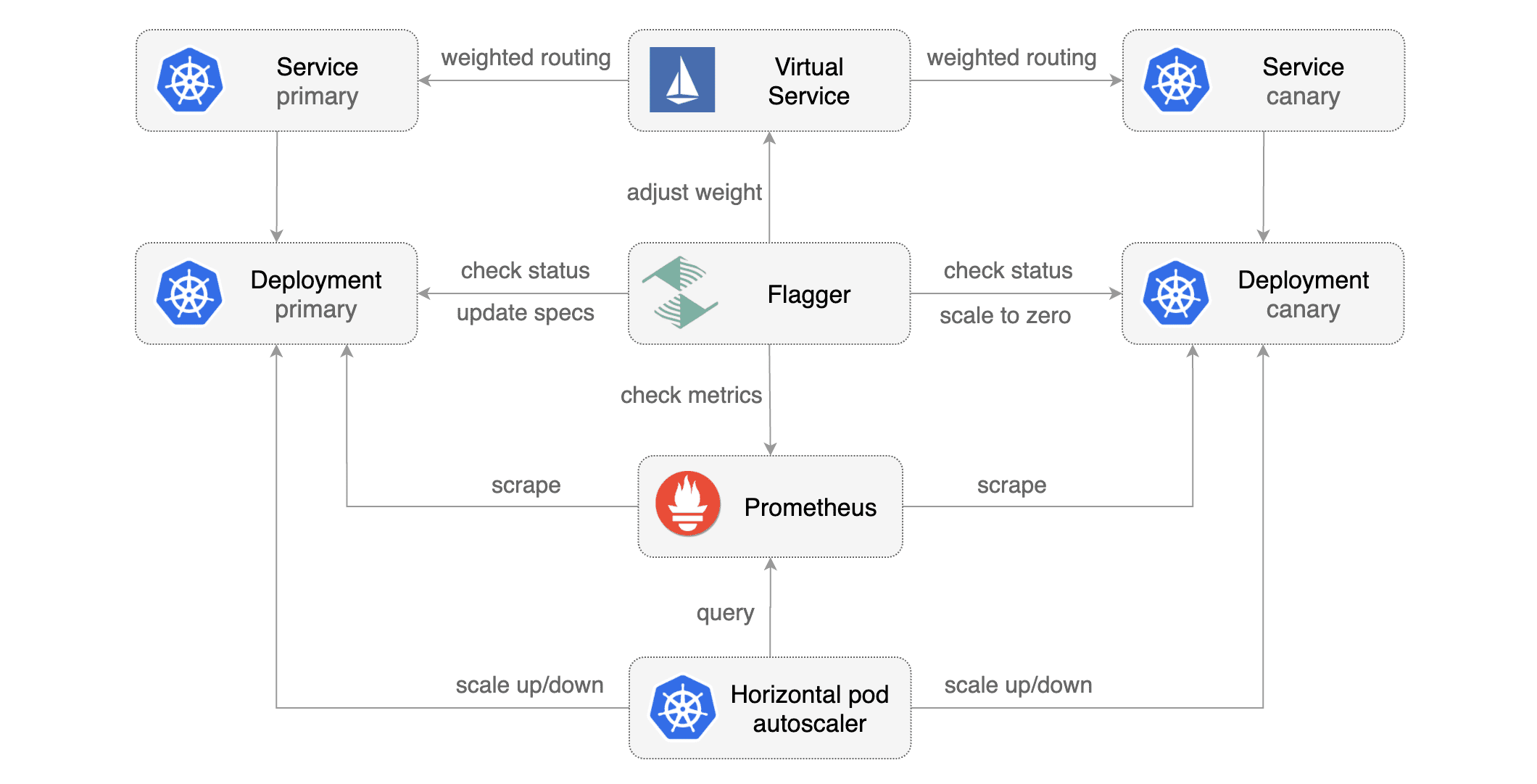

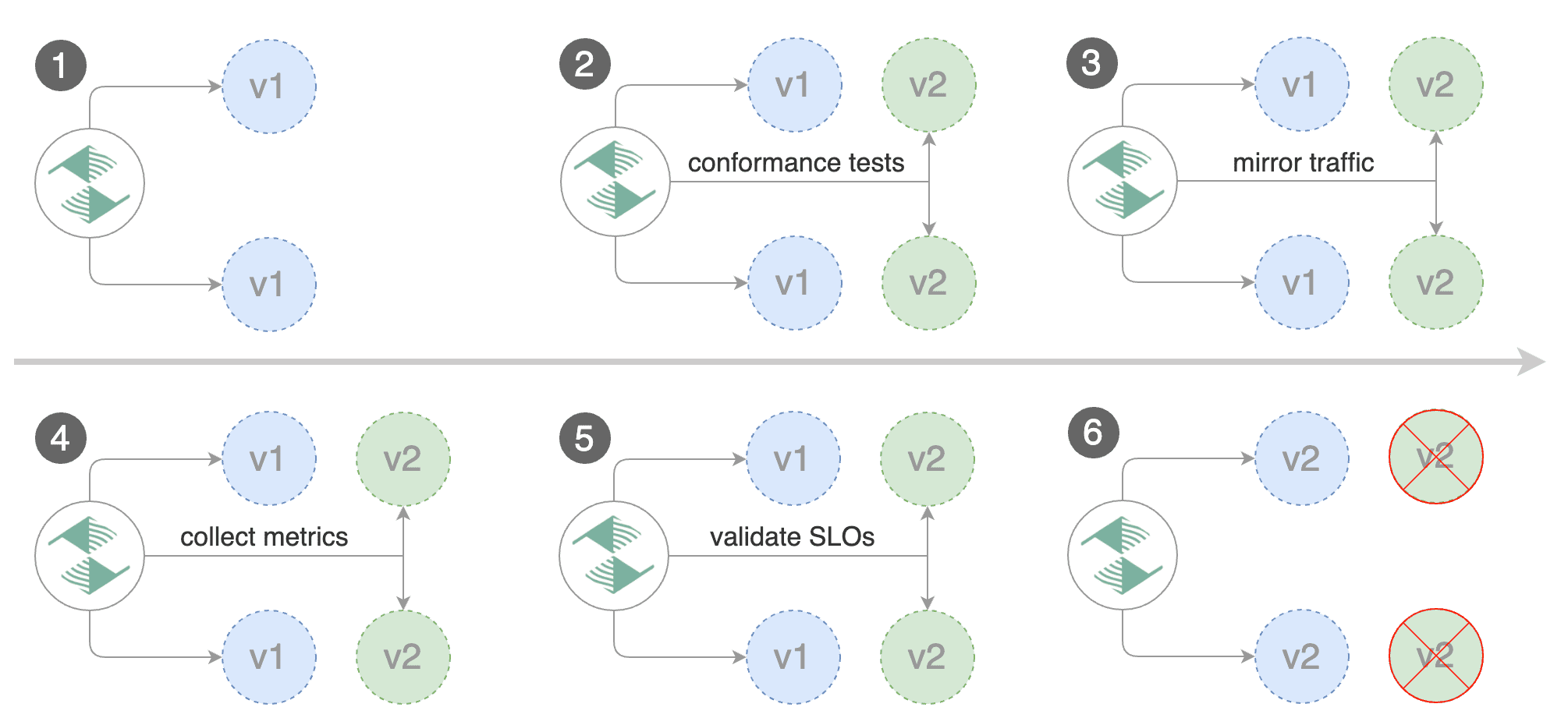

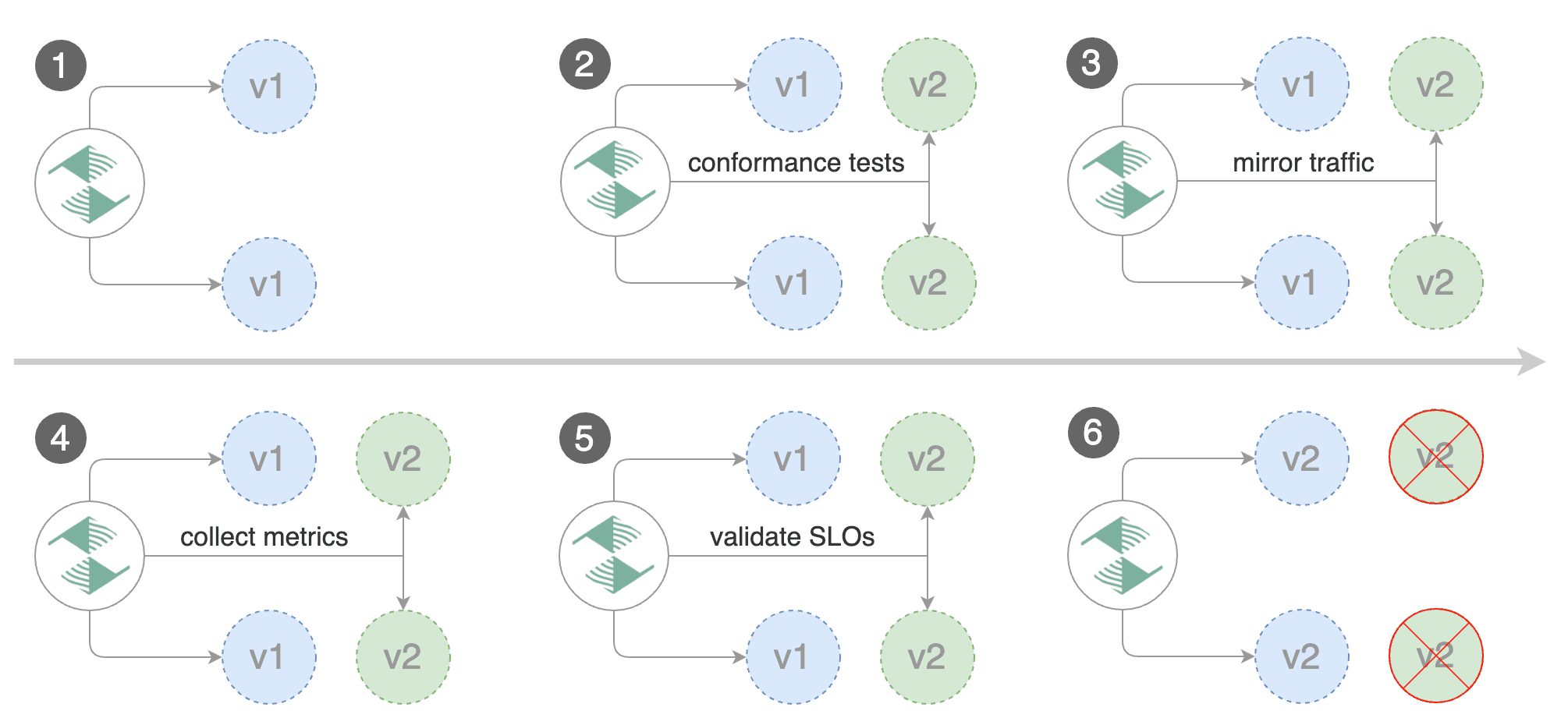

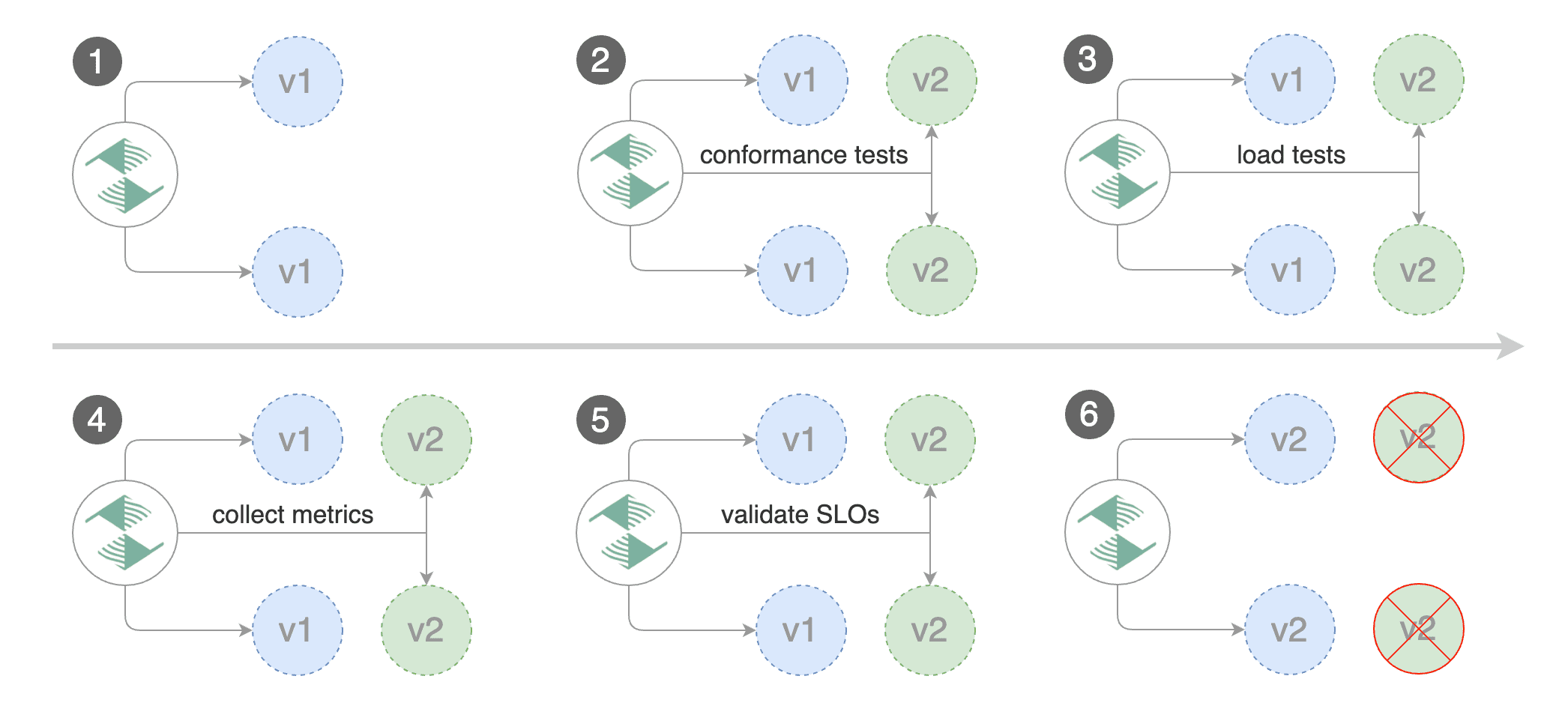

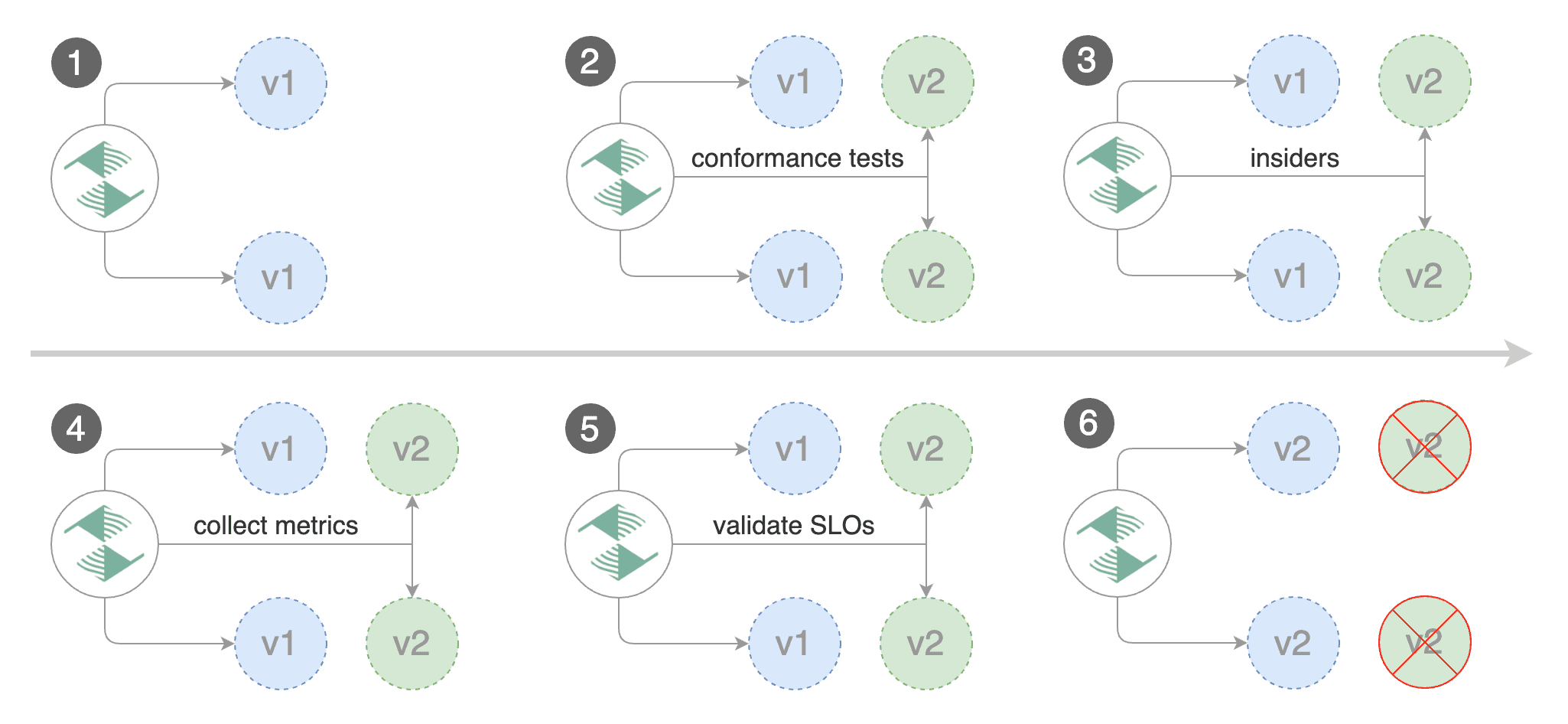

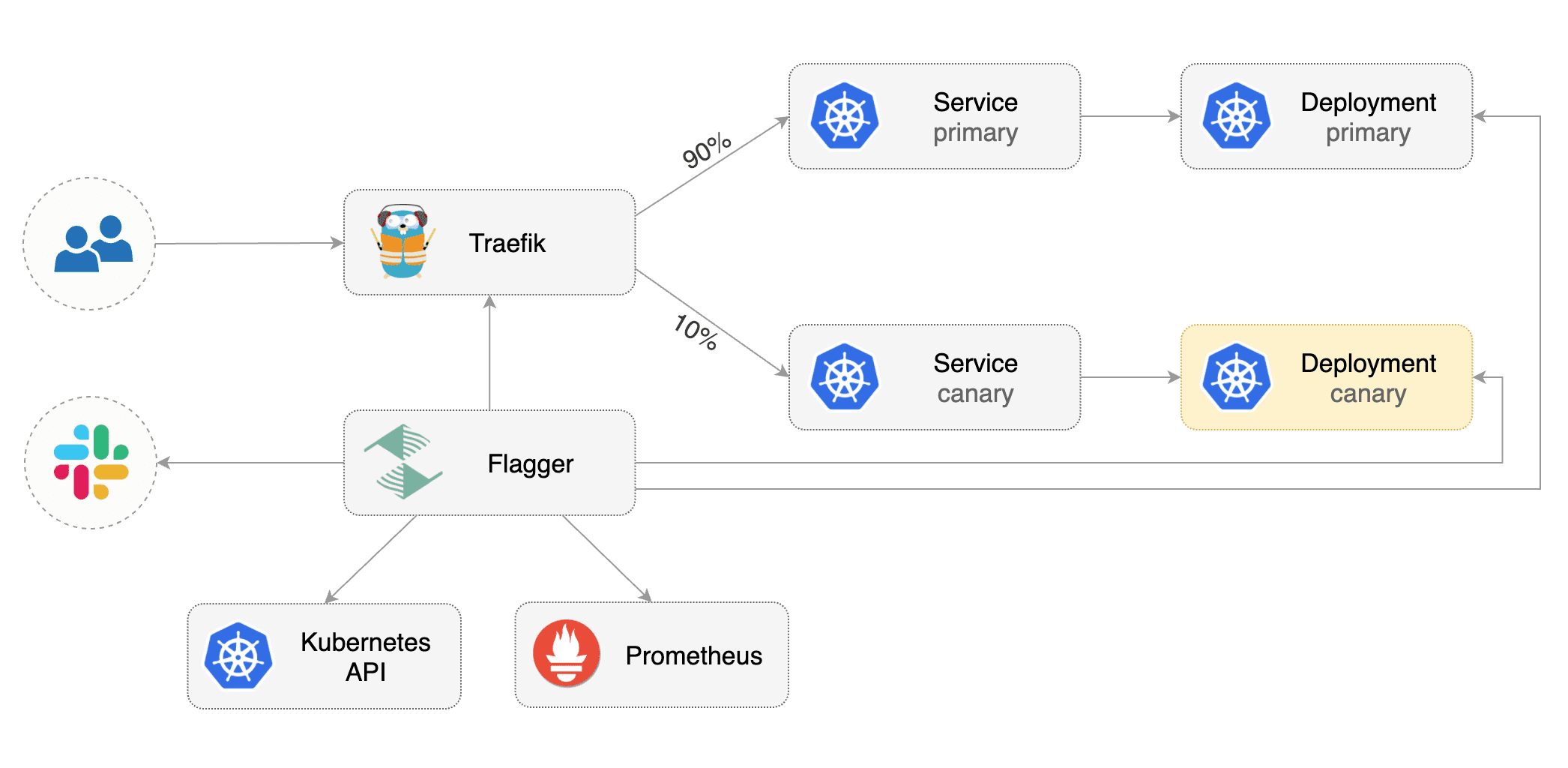

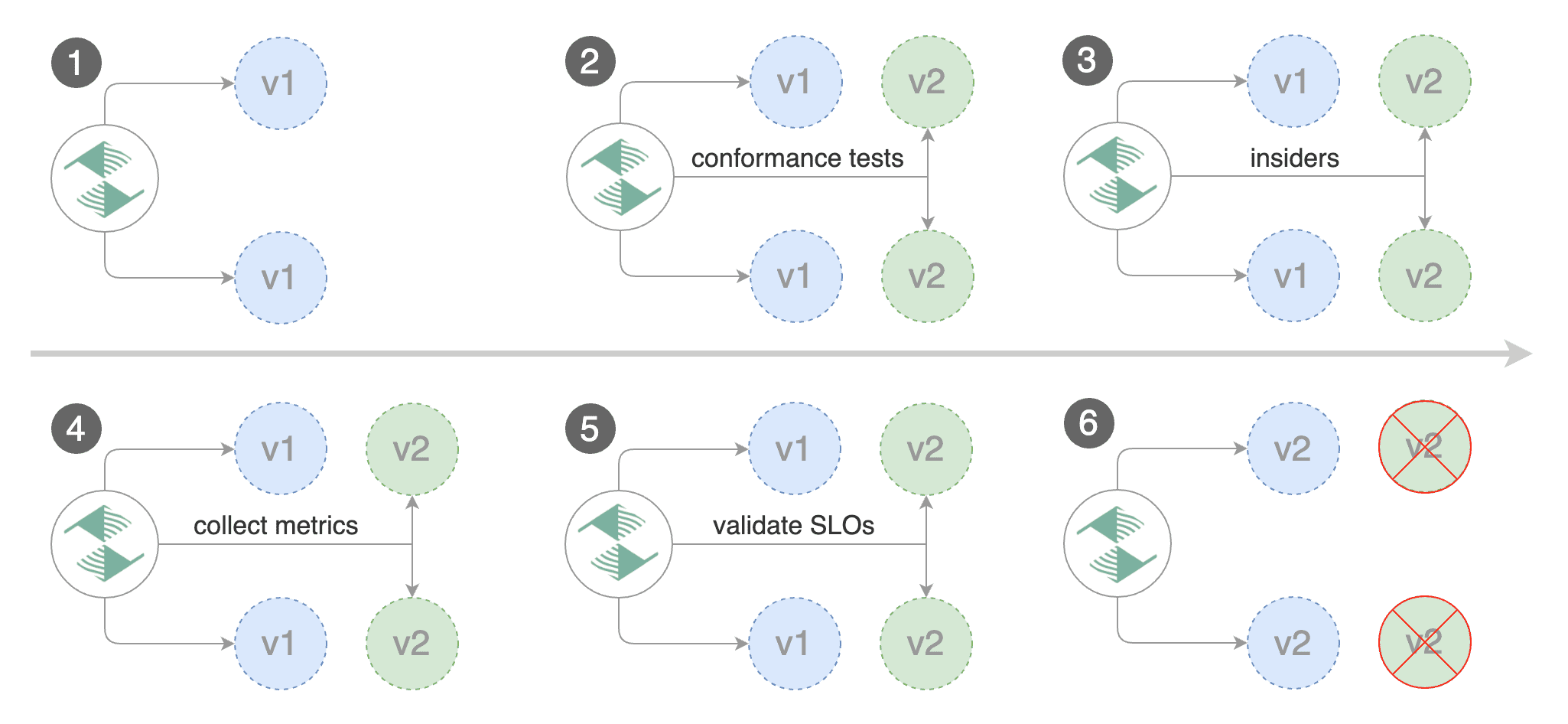

Flagger is a progressive delivery tool that automates the release process for applications running on Kubernetes.

|

||||

It reduces the risk of introducing a new software version in production

|

||||

by gradually shifting traffic to the new version while measuring metrics and running conformance tests.

|

||||

|

||||

|

||||

|

||||

|

||||

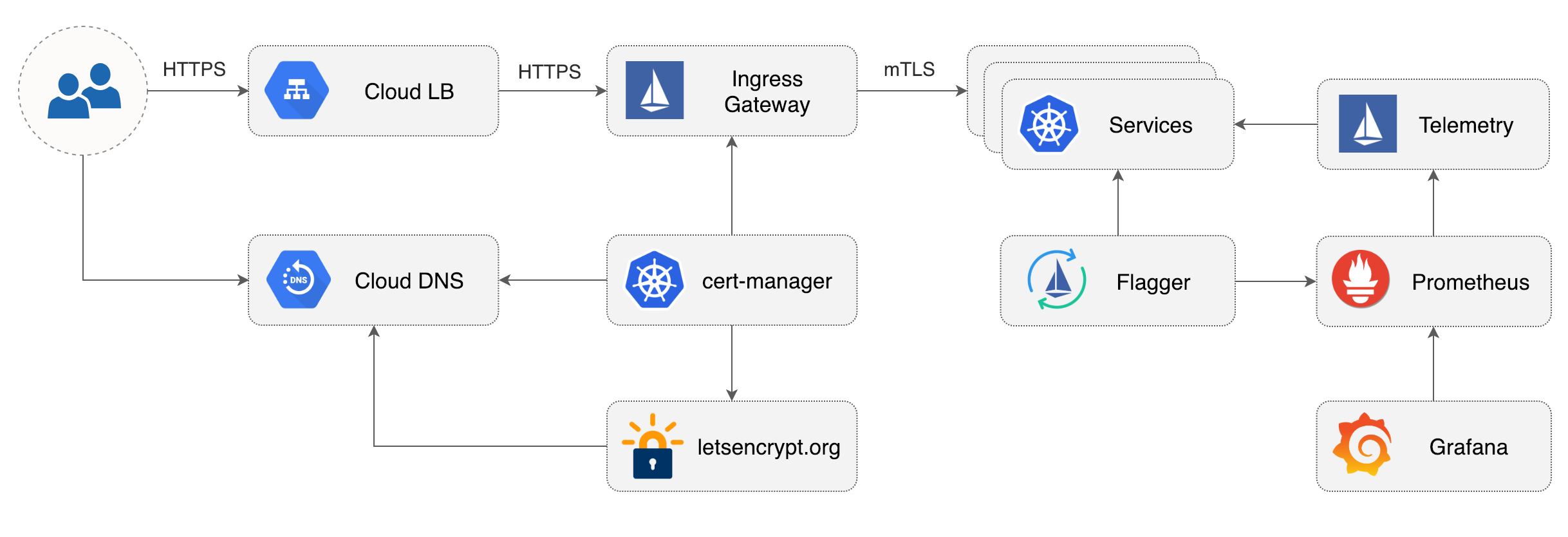

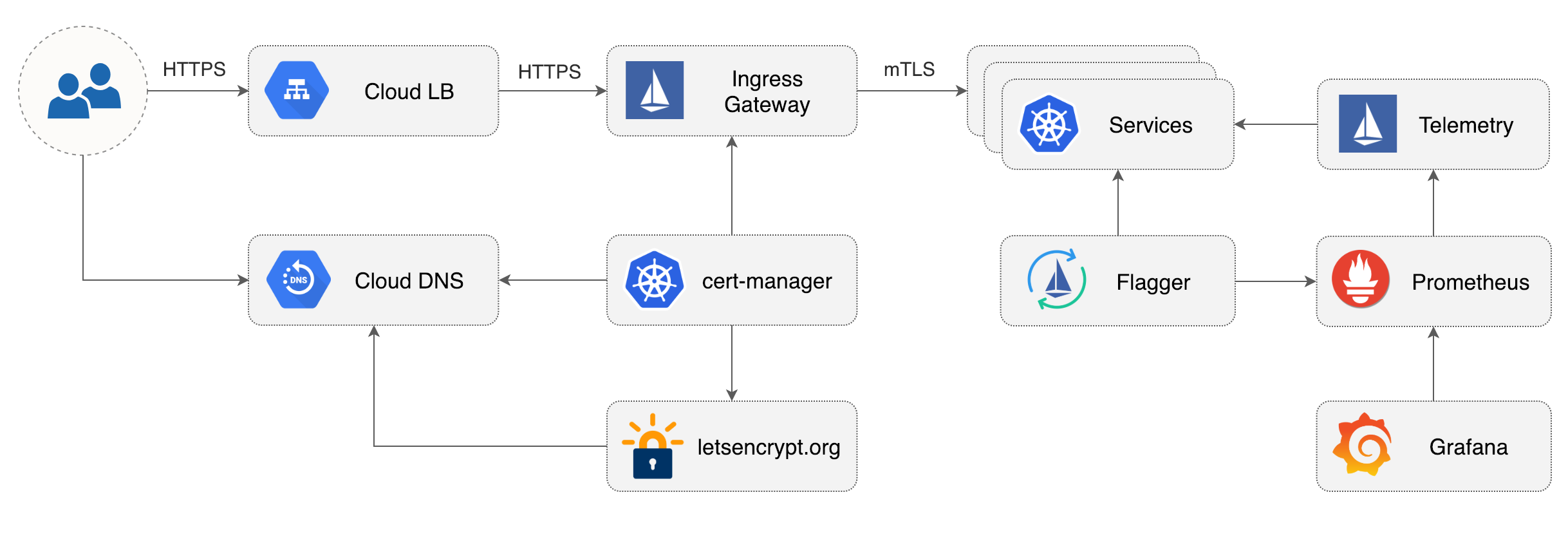

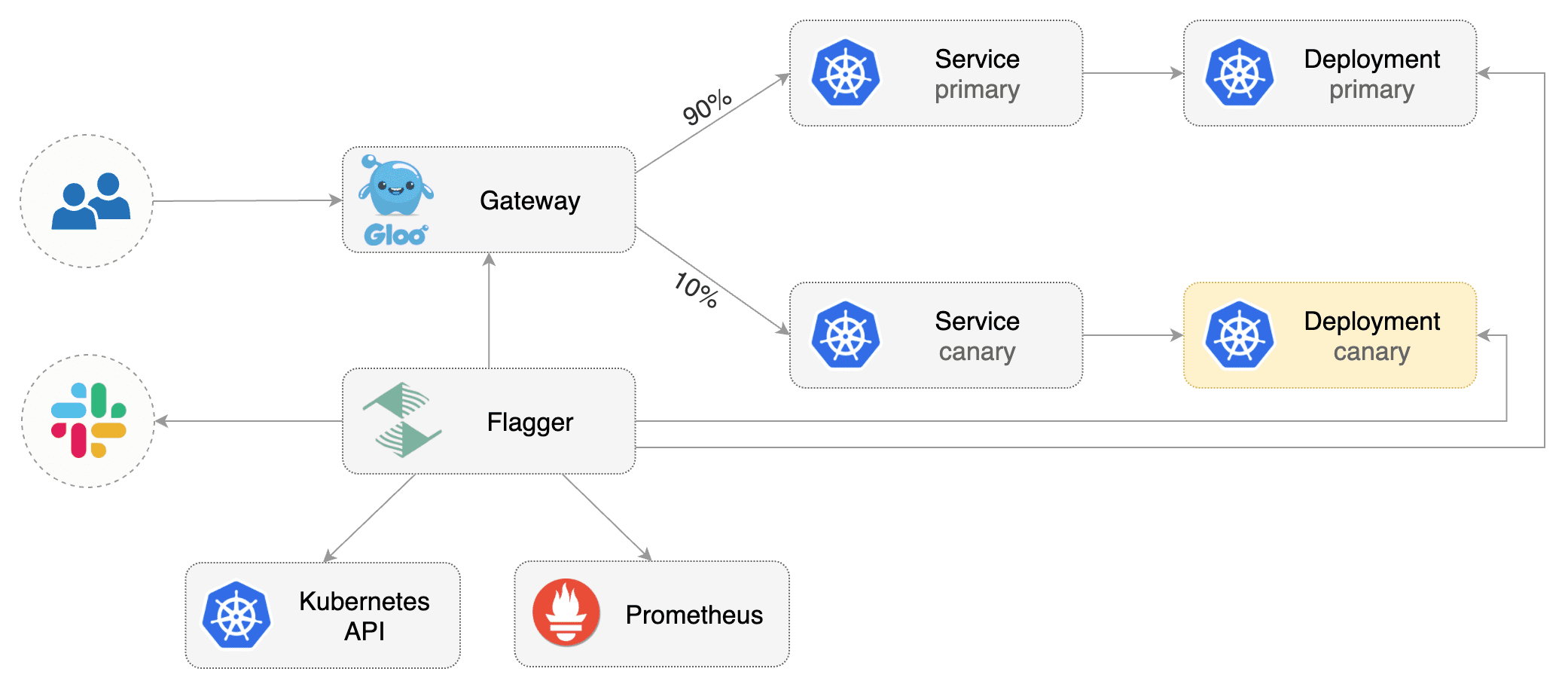

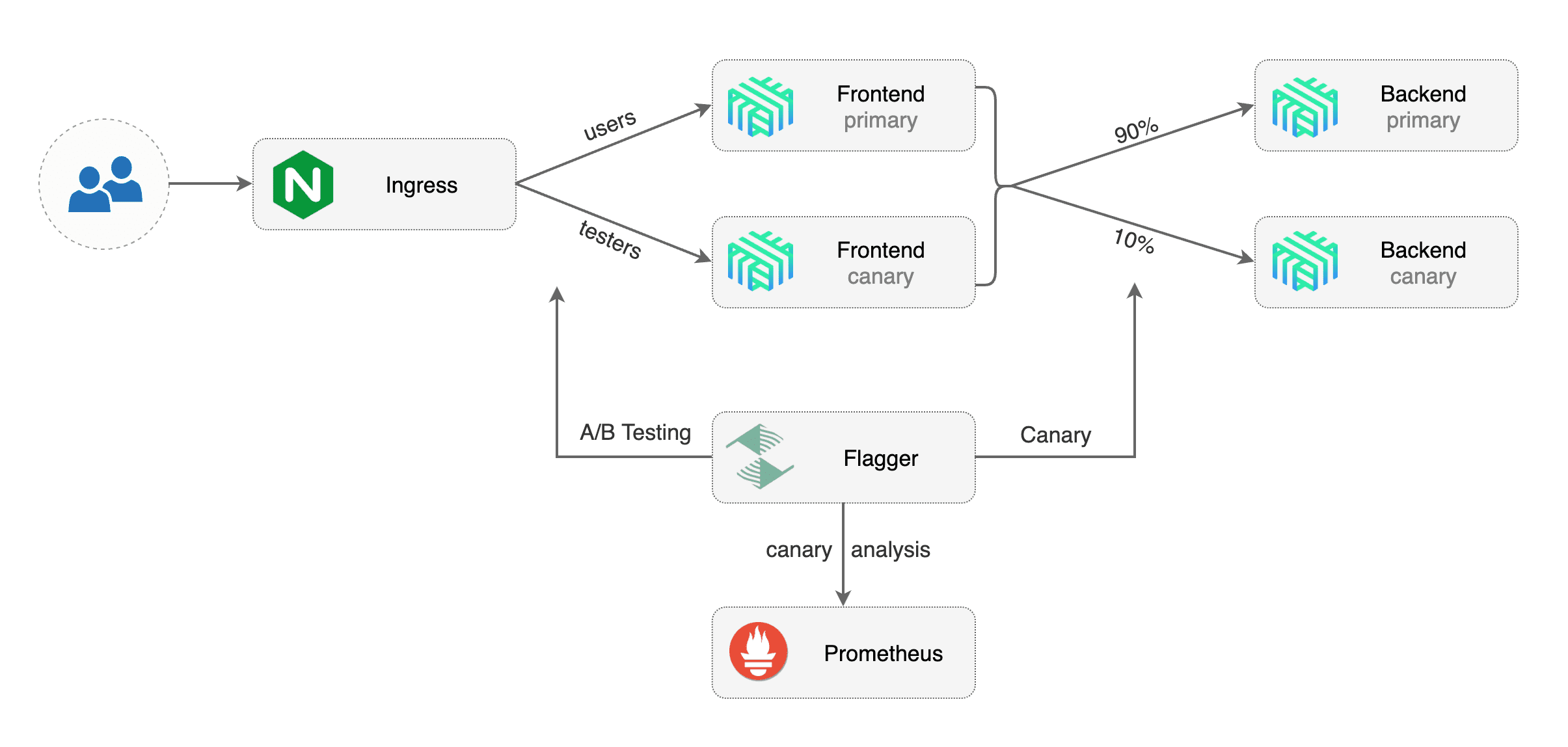

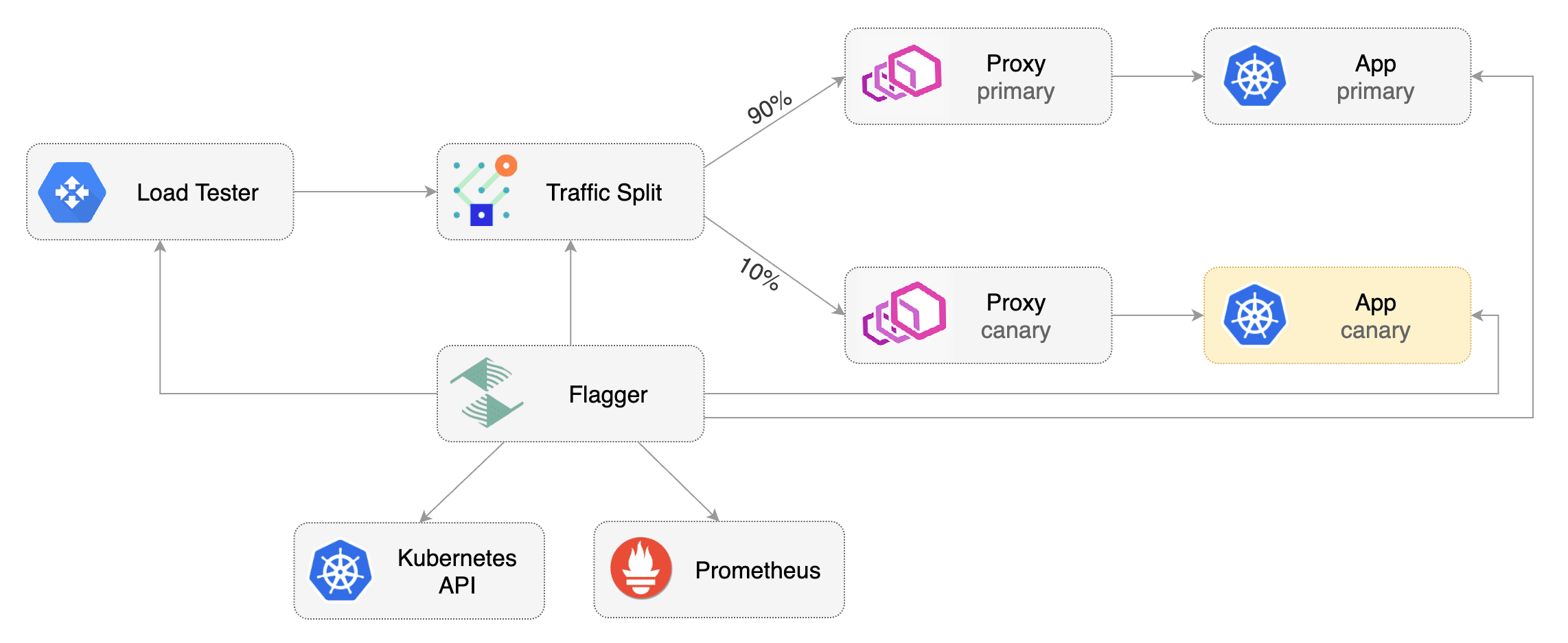

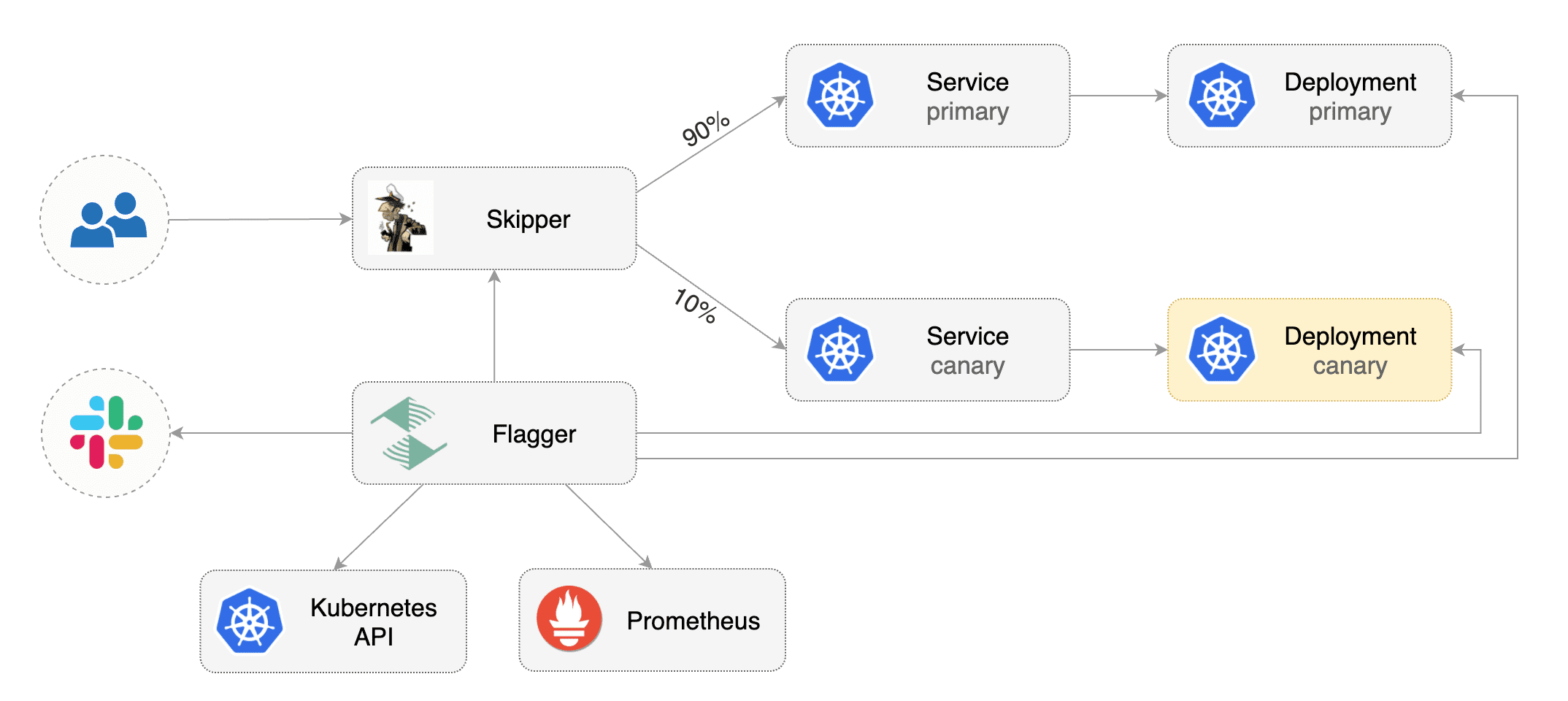

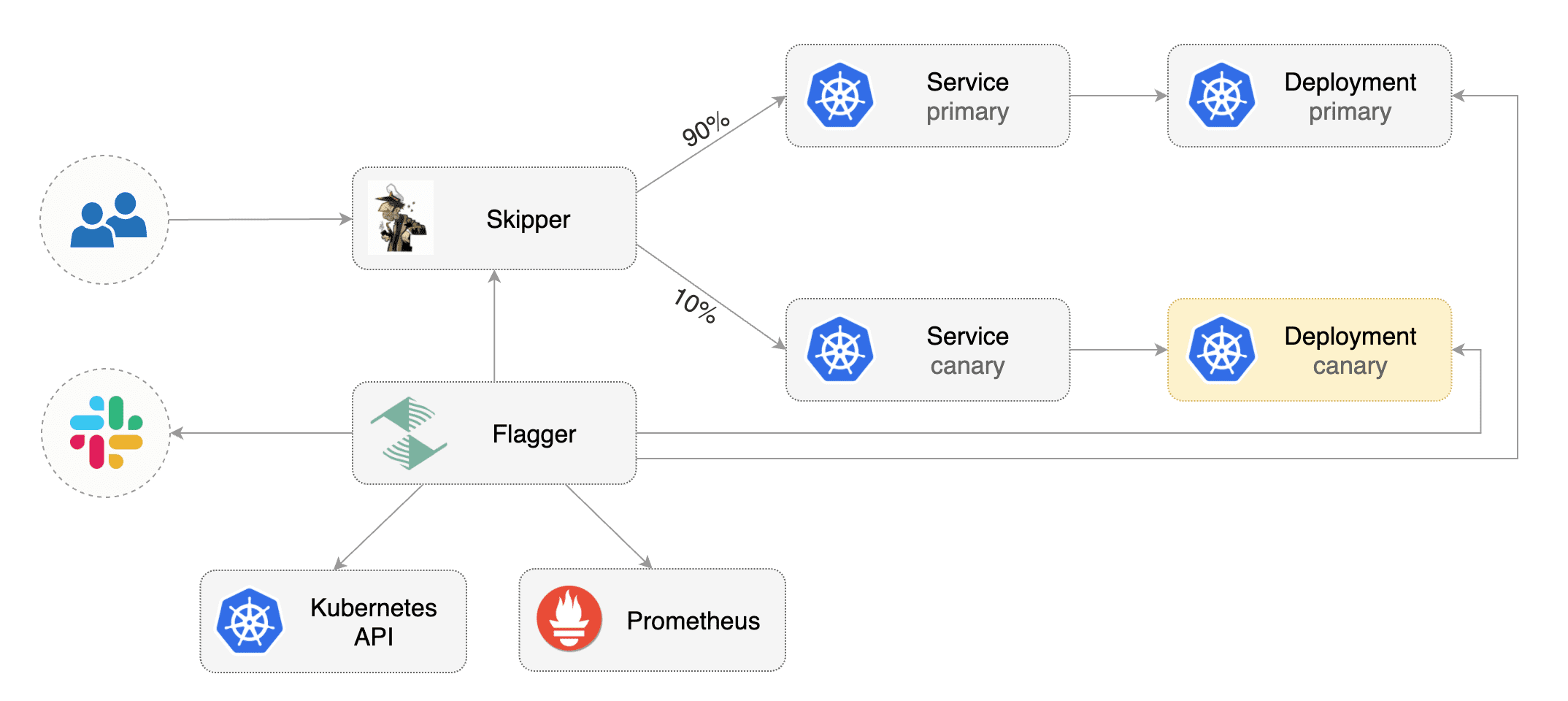

Flagger implements several deployment strategies (Canary releases, A/B testing, Blue/Green mirroring)

|

||||

using a service mesh (App Mesh, Istio, Linkerd) or an ingress controller (Contour, Gloo, NGINX, Skipper, Traefik) for traffic routing.

|

||||

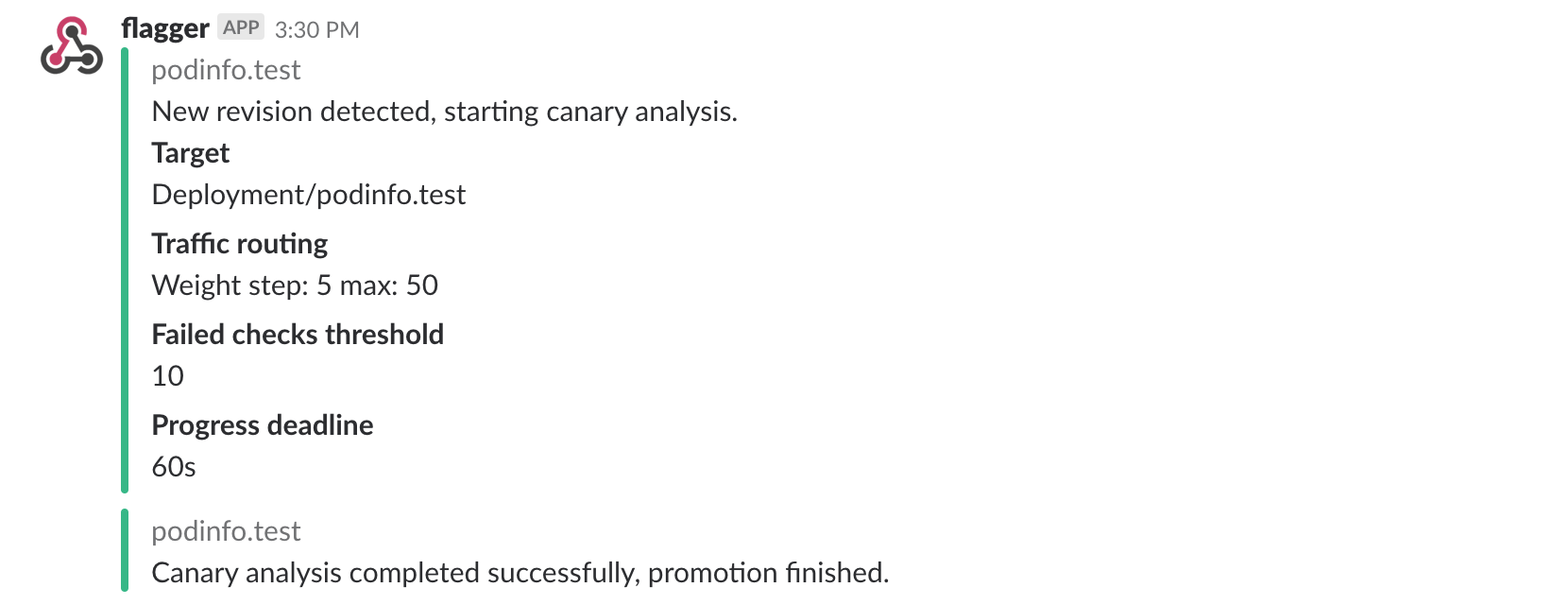

For release analysis, Flagger can query Prometheus, Datadog or CloudWatch

|

||||

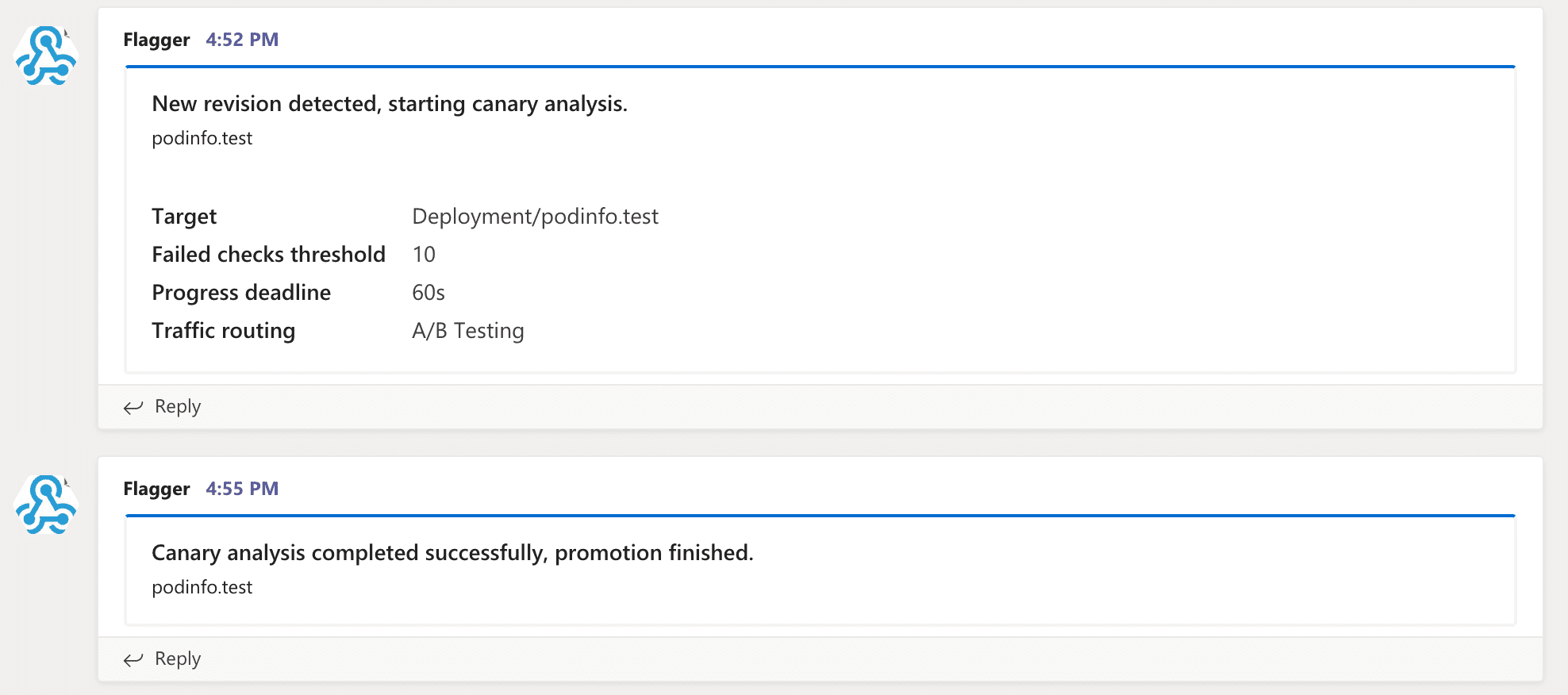

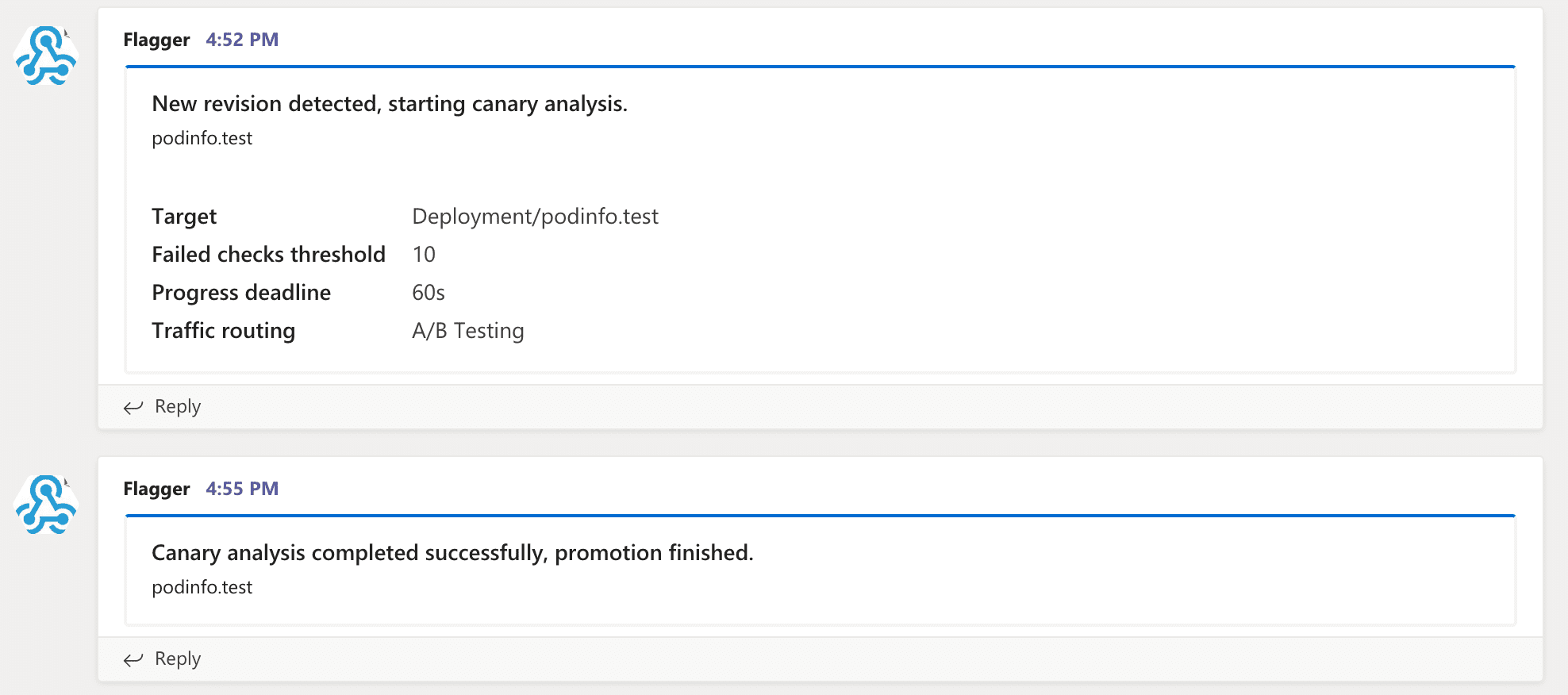

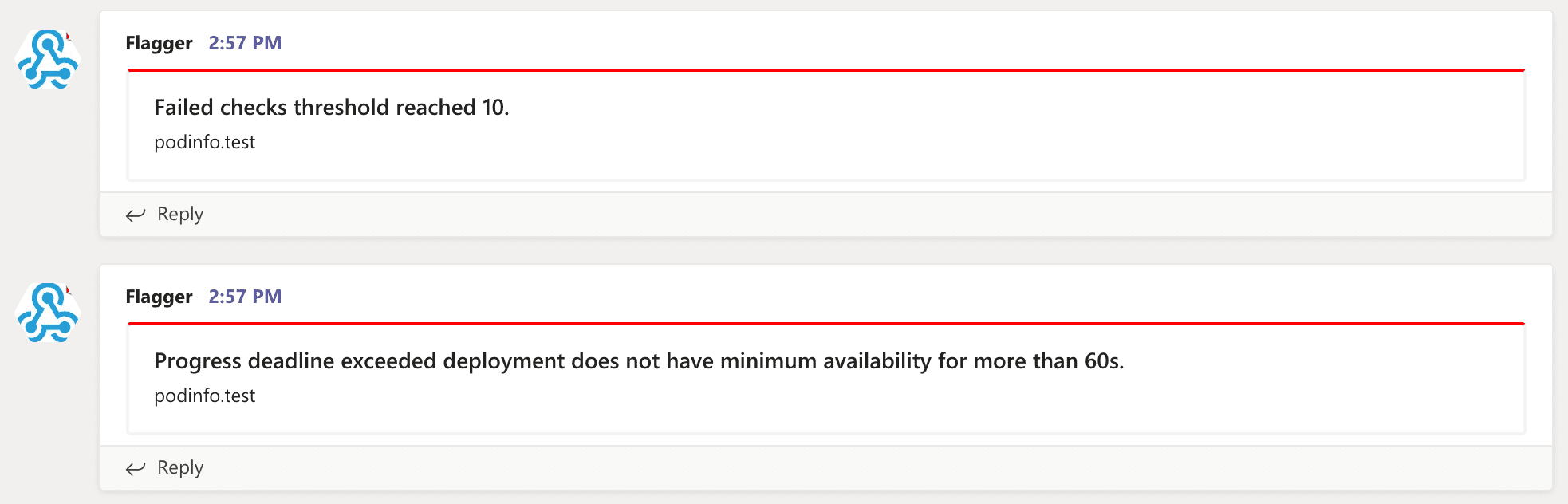

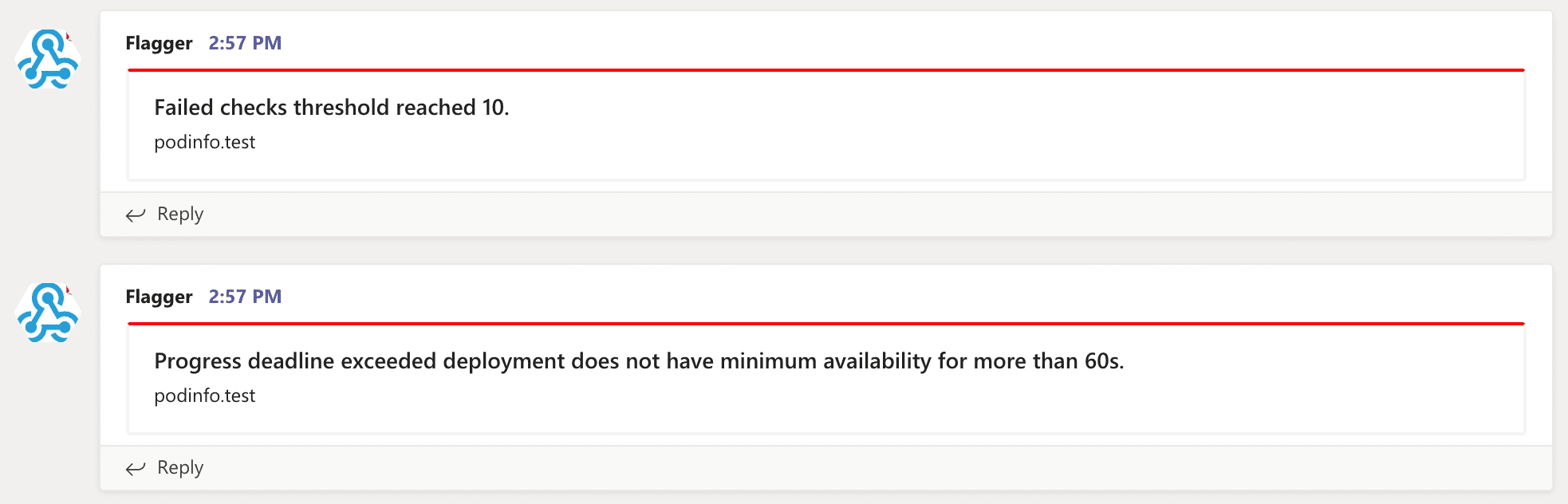

and for alerting it uses Slack, MS Teams, Discord and Rocket.

|

||||

using a service mesh (App Mesh, Istio, Linkerd, Open Service Mesh)

|

||||

or an ingress controller (Contour, Gloo, NGINX, Skipper, Traefik) for traffic routing.

|

||||

For release analysis, Flagger can query Prometheus, Datadog, New Relic or CloudWatch

|

||||

and for alerting it uses Slack, MS Teams, Discord, Rocket and Google Chat.

|

||||

|

||||

Flagger is a [Cloud Native Computing Foundation](https://cncf.io/) project

|

||||

and part of [Flux](https://fluxcd.io) family of GitOps tools.

|

||||

|

||||

### Documentation

|

||||

|

||||

@@ -39,21 +43,14 @@ Flagger documentation can be found at [docs.flagger.app](https://docs.flagger.ap

|

||||

* [NGINX Ingress](https://docs.flagger.app/tutorials/nginx-progressive-delivery)

|

||||

* [Skipper](https://docs.flagger.app/tutorials/skipper-progressive-delivery)

|

||||

* [Traefik](https://docs.flagger.app/tutorials/traefik-progressive-delivery)

|

||||

* [Open Service Mesh (OSM)](https://docs.flagger.app/tutorials/osm-progressive-delivery)

|

||||

* [Kubernetes Blue/Green](https://docs.flagger.app/tutorials/kubernetes-blue-green)

|

||||

|

||||

### Who is using Flagger

|

||||

|

||||

List of organizations using Flagger:

|

||||

**Our list of production users has moved to <https://fluxcd.io/adopters/#flagger>**.

|

||||

|

||||

* [Chick-fil-A](https://www.chick-fil-a.com)

|

||||

* [Capra Consulting](https://www.capraconsulting.no)

|

||||

* [DMM.com](https://dmm-corp.com)

|

||||

* [MediaMarktSaturn](https://www.mediamarktsaturn.com)

|

||||

* [Weaveworks](https://weave.works)

|

||||

* [Jumia Group](https://group.jumia.com)

|

||||

* [eLife](https://elifesciences.org/)

|

||||

|

||||

If you are using Flagger, please submit a PR to add your organization to the list!

|

||||

If you are using Flagger, please [submit a PR to add your organization](https://github.com/fluxcd/website/tree/main/adopters#readme) to the list!

|

||||

|

||||

### Canary CRD

|

||||

|

||||

@@ -74,7 +71,8 @@ metadata:

|

||||

namespace: test

|

||||

spec:

|

||||

# service mesh provider (optional)

|

||||

# can be: kubernetes, istio, linkerd, appmesh, nginx, skipper, contour, gloo, supergloo, traefik

|

||||

# can be: kubernetes, istio, linkerd, appmesh, nginx, skipper, contour, gloo, supergloo, traefik, osm

|

||||

# for SMI TrafficSplit can be: smi:v1alpha1, smi:v1alpha2, smi:v1alpha3

|

||||

provider: istio

|

||||

# deployment reference

|

||||

targetRef:

|

||||

@@ -185,24 +183,28 @@ For more details on how the canary analysis and promotion works please [read the

|

||||

|

||||

**Service Mesh**

|

||||

|

||||

| Feature | App Mesh | Istio | Linkerd | Kubernetes CNI |

|

||||

| ------------------------------------------ | ------------------ | ------------------ | ------------------ | ----------------- |

|

||||

| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Blue/Green deployments (traffic mirroring) | :heavy_minus_sign: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| Custom metric checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Feature | App Mesh | Istio | Linkerd | Open Service Mesh | SMI | Kubernetes CNI |

|

||||

| ------------------------------------------ | ------------------ | ------------------ | ------------------ | ------------------ | ------------------ | ------------------ |

|

||||

| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Blue/Green deployments (traffic mirroring) | :heavy_minus_sign: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| Custom metric checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

|

||||

For other SMI compatible service mesh solutions like Consul Connect or Nginx Service Mesh,

|

||||

[Prometheus MetricTemplates](https://docs.flagger.app/usage/metrics#prometheus) can be used to implement

|

||||

the request success rate and request duration checks.

|

||||

|

||||

**Ingress**

|

||||

|

||||

| Feature | Contour | Gloo | NGINX | Skipper | Traefik |

|

||||

| ------------------------------------------ | ------------------ | ------------------ | ------------------ | ------------------ | ------------------ |

|

||||

| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

@@ -212,18 +214,16 @@ For more details on how the canary analysis and promotion works please [read the

|

||||

|

||||

### Roadmap

|

||||

|

||||

#### [GitOps Toolkit](https://github.com/fluxcd/toolkit) compatibility

|

||||

#### [GitOps Toolkit](https://github.com/fluxcd/flux2) compatibility

|

||||

|

||||

* Migrate Flagger to Kubernetes controller-runtime and [kubebuilder](https://github.com/kubernetes-sigs/kubebuilder)

|

||||

* Make the Canary status compatible with [kstatus](https://github.com/kubernetes-sigs/cli-utils)

|

||||

* Make Flagger emit Kubernetes events compatible with Flux v2 notification API

|

||||

* Migrate CI to GitHub Actions and publish AMD64, ARM64 and ARMv7 container images

|

||||

* Integrate Flagger into Flux v2 as the progressive delivery component

|

||||

|

||||

#### Integrations

|

||||

|

||||

* Add support for Kubernetes [Ingress v2](https://github.com/kubernetes-sigs/service-apis)

|

||||

* Add support for SMI compatible service mesh solutions like Open Service Mesh and Consul Connect

|

||||

* Add support for ingress controllers like HAProxy and ALB

|

||||

* Add support for metrics providers like InfluxDB, Stackdriver, SignalFX

|

||||

|

||||

@@ -245,10 +245,10 @@ When submitting bug reports please include as much details as possible:

|

||||

If you have any questions about Flagger and progressive delivery:

|

||||

|

||||

* Read the Flagger [docs](https://docs.flagger.app).

|

||||

* Invite yourself to the [Weave community slack](https://slack.weave.works/)

|

||||

and join the [#flagger](https://weave-community.slack.com/messages/flagger/) channel.

|

||||

* Join the [Weave User Group](https://www.meetup.com/pro/Weave/) and get invited to online talks,

|

||||

hands-on training and meetups in your area.

|

||||

* File an [issue](https://github.com/weaveworks/flagger/issues/new).

|

||||

* Invite yourself to the [CNCF community slack](https://slack.cncf.io/)

|

||||

and join the [#flagger](https://cloud-native.slack.com/messages/flagger/) channel.

|

||||

* Check out the **[Flux events calendar](https://fluxcd.io/#calendar)**, both with upcoming talks, events and meetings you can attend.

|

||||

* Or view the **[Flux resources section](https://fluxcd.io/resources)** with past events videos you can watch.

|

||||

* File an [issue](https://github.com/fluxcd/flagger/issues/new).

|

||||

|

||||

Your feedback is always welcome!

|

||||

|

||||

42

artifacts/examples/osm-canary-steps.yaml

Normal file

@@ -0,0 +1,42 @@

|

||||

apiVersion: flagger.app/v1beta1

|

||||

kind: Canary

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

spec:

|

||||

provider: osm

|

||||

targetRef:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

progressDeadlineSeconds: 600

|

||||

service:

|

||||

port: 9898

|

||||

targetPort: 9898

|

||||

analysis:

|

||||

interval: 15s

|

||||

threshold: 10

|

||||

stepWeights: [5, 10, 15, 20, 25, 30, 35, 40, 45, 50, 55]

|

||||

metrics:

|

||||

- name: request-success-rate

|

||||

thresholdRange:

|

||||

min: 99

|

||||

interval: 1m

|

||||

- name: request-duration

|

||||

thresholdRange:

|

||||

max: 500

|

||||

interval: 30s

|

||||

webhooks:

|

||||

- name: acceptance-test

|

||||

type: pre-rollout

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 15s

|

||||

metadata:

|

||||

type: bash

|

||||

cmd: "curl -sd 'test' http://podinfo-canary.test:9898/token | grep token"

|

||||

- name: load-test

|

||||

type: rollout

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 5s

|

||||

metadata:

|

||||

cmd: "hey -z 1m -q 10 -c 2 http://podinfo-canary.test:9898/"

|

||||

43

artifacts/examples/osm-canary.yaml

Normal file

@@ -0,0 +1,43 @@

|

||||

apiVersion: flagger.app/v1beta1

|

||||

kind: Canary

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

spec:

|

||||

provider: osm

|

||||

targetRef:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

progressDeadlineSeconds: 600

|

||||

service:

|

||||

port: 9898

|

||||

targetPort: 9898

|

||||

analysis:

|

||||

interval: 15s

|

||||

threshold: 10

|

||||

maxWeight: 50

|

||||

stepWeight: 5

|

||||

metrics:

|

||||

- name: request-success-rate

|

||||

thresholdRange:

|

||||

min: 99

|

||||

interval: 1m

|

||||

- name: request-duration

|

||||

thresholdRange:

|

||||

max: 500

|

||||

interval: 30s

|

||||

webhooks:

|

||||

- name: acceptance-test

|

||||

type: pre-rollout

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 15s

|

||||

metadata:

|

||||

type: bash

|

||||

cmd: "curl -sd 'test' http://podinfo-canary.test:9898/token | grep token"

|

||||

- name: load-test

|

||||

type: rollout

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 5s

|

||||

metadata:

|

||||

cmd: "hey -z 1m -q 10 -c 2 http://podinfo-canary.test:9898/"

|

||||

@@ -6,7 +6,7 @@ metadata:

|

||||

labels:

|

||||

app: flagger

|

||||

---

|

||||

apiVersion: rbac.authorization.k8s.io/v1beta1

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

kind: ClusterRole

|

||||

metadata:

|

||||

name: flagger

|

||||

@@ -153,8 +153,19 @@ rules:

|

||||

resources:

|

||||

- upstreams

|

||||

- upstreams/finalizers

|

||||

- upstreamgroups

|

||||

- upstreamgroups/finalizers

|

||||

verbs:

|

||||

- get

|

||||

- list

|

||||

- watch

|

||||

- create

|

||||

- update

|

||||

- patch

|

||||

- delete

|

||||

- apiGroups:

|

||||

- gateway.solo.io

|

||||

resources:

|

||||

- routetables

|

||||

- routetables/finalizers

|

||||

verbs:

|

||||

- get

|

||||

- list

|

||||

@@ -181,7 +192,7 @@ rules:

|

||||

verbs:

|

||||

- get

|

||||

---

|

||||

apiVersion: rbac.authorization.k8s.io/v1beta1

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

kind: ClusterRoleBinding

|

||||

metadata:

|

||||

name: flagger

|

||||

|

||||

@@ -22,7 +22,7 @@ spec:

|

||||

serviceAccountName: flagger

|

||||

containers:

|

||||

- name: flagger

|

||||

image: weaveworks/flagger:1.4.0

|

||||

image: ghcr.io/fluxcd/flagger:1.13.0

|

||||

imagePullPolicy: IfNotPresent

|

||||

ports:

|

||||

- name: http

|

||||

|

||||

@@ -1,14 +1,14 @@

|

||||

apiVersion: v1

|

||||

name: flagger

|

||||

version: 1.4.0

|

||||

appVersion: 1.4.0

|

||||

kubeVersion: ">=1.11.0-0"

|

||||

version: 1.13.0

|

||||

appVersion: 1.13.0

|

||||

kubeVersion: ">=1.16.0-0"

|

||||

engine: gotpl

|

||||

description: Flagger is a progressive delivery operator for Kubernetes

|

||||

home: https://flagger.app

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/weaveworks.png

|

||||

icon: https://raw.githubusercontent.com/fluxcd/flagger/main/docs/logo/flagger-icon.png

|

||||

sources:

|

||||

- https://github.com/weaveworks/flagger

|

||||

- https://github.com/fluxcd/flagger

|

||||

maintainers:

|

||||

- name: stefanprodan

|

||||

url: https://github.com/stefanprodan

|

||||

@@ -21,5 +21,8 @@ keywords:

|

||||

- gloo

|

||||

- contour

|

||||

- nginx

|

||||

- traefik

|

||||

- osm

|

||||

- smi

|

||||

- gitops

|

||||

- canary

|

||||

|

||||

@@ -186,7 +186,7 @@

|

||||

same "printed page" as the copyright notice for easier

|

||||

identification within third-party archives.

|

||||

|

||||