mirror of

https://github.com/fluxcd/flagger.git

synced 2026-02-15 02:20:22 +00:00

Compare commits

79 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | db427b5e54 | | |
| | b49d63bdfe | | |
| | c84f7addff | | |
| | 5d72398925 | | |
| | 11d16468c9 | | |
| | 82b61d69b7 | | |
| | 824391321f | | |
| | a7c242e437 | | |
| | 1544610203 | | |
| | 14ca775ed9 | | |
| | f1d29f5951 | | |
| | ad0a66ffcc | | |
| | 4288fa261c | | |
| | a537637dc9 | | |
| | 851c6701b3 | | |
| | bb4591106a | | |
| | 7641190ecb | | |
| | 02b579f128 | | |
| | 9cf6b407f1 | | |
| | c3564176f8 | | |
| | ae9cf57fd5 | | |
| | ae63b01373 | | |
| | c066a9163b | | |
| | 38b04f2690 | | |
| | ee0e7b091a | | |
| | e922c3e9d9 | | |
| | 2c31a4bf90 | | |
| | 7332e6b173 | | |
| | 968d67a7c3 | | |
| | 266b957fc6 | | |
| | 357ef86c8b | | |
| | d75ade5e8c | | |
| | 806b95c8ce | | |
| | bf58cd763f | | |
| | 52856177e3 | | |
| | 58c3cebaac | | |
| | 1e5d05c3fc | | |
| | 020129bf5c | | |
| | 3ff0786e1f | | |
| | a60dc55dad | | |
| | ff6acae544 | | |
| | 09b5295c85 | | |
| | 9e423a6f71 | | |
| | 0ef05edf1e | | |
| | a59901aaa9 | | |
| | 53be3e07d2 | | |
| | 2eb2ae52cd | | |
| | 7bcc76eca0 | | |
| | 0d531e7bd1 | | |
| | 08851f83c7 | | |
| | 295f5d7b39 | | |
| | a828524957 | | |
| | 6661406b75 | | |
| | 8766523279 | | |
| | b02a6da614 | | |
| | 89d7cb1b04 | | |
| | 59d18de753 | | |
| | e1d8703a15 | | |
| | 1ba595bc6f | | |
| | 446a2b976c | | |
| | 9af6ade54d | | |
| | 3fbe62aa47 | | |
| | 4454c9b5b5 | | |
| | c2cf9bf4b1 | | |
| | 3afc7978bd | | |
| | 7a0ba8b477 | | |
| | 0eb21a98a5 | | |
| | 2876092912 | | |
| | 3dbfa34a53 | | |
| | 656f81787c | | |
| | 920d558fde | | |
| | 638a9f1c93 | | |
| | f1c3ee7a82 | | |
| | 878f106573 | | |
| | 945eded6bf | | |
| | f94f9c23d6 | | |
| | 527b73e8ef | | |
| | d4555c5919 | | |
| | 560bb93e3d | | |

@@ -92,6 +92,17 @@ jobs:

|

||||

- run: test/e2e-kubernetes.sh

|

||||

- run: test/e2e-kubernetes-tests.sh

|

||||

|

||||

e2e-kubernetes-svc-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh

|

||||

- run: test/e2e-kubernetes.sh

|

||||

- run: test/e2e-kubernetes-svc-tests.sh

|

||||

|

||||

e2e-smi-istio-testing:

|

||||

machine: true

|

||||

steps:

|

||||

@@ -139,6 +150,17 @@ jobs:

|

||||

- run: test/e2e-linkerd.sh

|

||||

- run: test/e2e-linkerd-tests.sh

|

||||

|

||||

e2e-contour-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- attach_workspace:

|

||||

at: /tmp/bin

|

||||

- run: test/container-build.sh

|

||||

- run: test/e2e-kind.sh

|

||||

- run: test/e2e-contour.sh

|

||||

- run: test/e2e-contour-tests.sh

|

||||

|

||||

push-helm-charts:

|

||||

docker:

|

||||

- image: circleci/golang:1.13

|

||||

@@ -204,6 +226,9 @@ workflows:

|

||||

- e2e-linkerd-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- e2e-contour-testing:

|

||||

requires:

|

||||

- build-binary

|

||||

- push-container:

|

||||

requires:

|

||||

- build-binary

|

||||

|

||||

37

CHANGELOG.md


@@ -2,6 +2,43 @@

All notable changes to this project are documented in this file.

## 0.21.0 (2020-01-06)

Adds support for Contour ingress controller

#### Features

- Add support for Contour ingress controller [#397](https://github.com/weaveworks/flagger/pull/397)
- Add support for Envoy managed by Crossover via SMI [#386](https://github.com/weaveworks/flagger/pull/386)
- Extend canary target ref to Kubernetes Service kind [#372](https://github.com/weaveworks/flagger/pull/372)

#### Improvements

- Add Prometheus operator PodMonitor template to Helm chart [#399](https://github.com/weaveworks/flagger/pull/399)
- Update e2e tests to Kubernetes v1.16 [#390](https://github.com/weaveworks/flagger/pull/390)

## 0.20.4 (2019-12-03)

Adds support for taking over a running deployment without disruption

#### Improvements

- Add initialization phase to Kubernetes router [#384](https://github.com/weaveworks/flagger/pull/384)
- Add canary controller interface and Kubernetes deployment kind implementation [#378](https://github.com/weaveworks/flagger/pull/378)

#### Fixes

- Skip primary check on skip analysis [#380](https://github.com/weaveworks/flagger/pull/380)

## 0.20.3 (2019-11-13)

Adds wrk to load tester tools and the App Mesh gateway chart to Flagger Helm repository

#### Improvements

- Add wrk to load tester tools [#368](https://github.com/weaveworks/flagger/pull/368)
- Add App Mesh gateway chart [#365](https://github.com/weaveworks/flagger/pull/365)

## 0.20.2 (2019-11-07)

Adds support for exposing canaries outside the cluster using App Mesh Gateway annotations


@@ -1,44 +1,64 @@

|

||||

FROM bats/bats:v1.1.0

|

||||

FROM alpine:3.10.3 as build

|

||||

|

||||

RUN addgroup -S app \

|

||||

&& adduser -S -g app app \

|

||||

&& apk --no-cache add ca-certificates curl jq

|

||||

|

||||

WORKDIR /home/app

|

||||

RUN apk --no-cache add alpine-sdk perl curl

|

||||

|

||||

RUN curl -sSLo hey "https://storage.googleapis.com/hey-release/hey_linux_amd64" && \

|

||||

chmod +x hey && mv hey /usr/local/bin/hey

|

||||

|

||||

# verify hey works

|

||||

RUN hey -n 1 -c 1 https://flagger.app > /dev/null && echo $? | grep 0

|

||||

|

||||

RUN curl -sSL "https://get.helm.sh/helm-v2.15.1-linux-amd64.tar.gz" | tar xvz && \

|

||||

RUN HELM2_VERSION=2.16.1 && \

|

||||

curl -sSL "https://get.helm.sh/helm-v${HELM2_VERSION}-linux-amd64.tar.gz" | tar xvz && \

|

||||

chmod +x linux-amd64/helm && mv linux-amd64/helm /usr/local/bin/helm && \

|

||||

chmod +x linux-amd64/tiller && mv linux-amd64/tiller /usr/local/bin/tiller && \

|

||||

rm -rf linux-amd64

|

||||

chmod +x linux-amd64/tiller && mv linux-amd64/tiller /usr/local/bin/tiller

|

||||

|

||||

RUN curl -sSL "https://get.helm.sh/helm-v3.0.0-rc.2-linux-amd64.tar.gz" | tar xvz && \

|

||||

chmod +x linux-amd64/helm && mv linux-amd64/helm /usr/local/bin/helmv3 && \

|

||||

rm -rf linux-amd64

|

||||

RUN HELM3_VERSION=3.0.1 && \

|

||||

curl -sSL "https://get.helm.sh/helm-v${HELM3_VERSION}-linux-amd64.tar.gz" | tar xvz && \

|

||||

chmod +x linux-amd64/helm && mv linux-amd64/helm /usr/local/bin/helmv3

|

||||

|

||||

RUN GRPC_HEALTH_PROBE_VERSION=v0.3.1 && \

|

||||

wget -qO /usr/local/bin/grpc_health_probe https://github.com/grpc-ecosystem/grpc-health-probe/releases/download/${GRPC_HEALTH_PROBE_VERSION}/grpc_health_probe-linux-amd64 && \

|

||||

chmod +x /usr/local/bin/grpc_health_probe

|

||||

|

||||

RUN curl -sSL "https://github.com/bojand/ghz/releases/download/v0.39.0/ghz_0.39.0_Linux_x86_64.tar.gz" | tar xz -C /tmp && \

|

||||

mv /tmp/ghz /usr/local/bin && chmod +x /usr/local/bin/ghz && rm -rf /tmp/ghz-web

|

||||

RUN GHZ_VERSION=0.39.0 && \

|

||||

curl -sSL "https://github.com/bojand/ghz/releases/download/v${GHZ_VERSION}/ghz_${GHZ_VERSION}_Linux_x86_64.tar.gz" | tar xz -C /tmp && \

|

||||

mv /tmp/ghz /usr/local/bin && chmod +x /usr/local/bin/ghz

|

||||

|

||||

RUN HELM_TILLER_VERSION=0.9.3 && \

|

||||

curl -sSL "https://github.com/rimusz/helm-tiller/archive/v${HELM_TILLER_VERSION}.tar.gz" | tar xz -C /tmp && \

|

||||

mv /tmp/helm-tiller-${HELM_TILLER_VERSION} /tmp/helm-tiller

|

||||

|

||||

RUN WRK_VERSION=4.0.2 && \

|

||||

cd /tmp && git clone -b ${WRK_VERSION} https://github.com/wg/wrk

|

||||

RUN cd /tmp/wrk && make

|

||||

|

||||

FROM bats/bats:v1.1.0

|

||||

|

||||

RUN addgroup -S app && \

|

||||

adduser -S -g app app && \

|

||||

apk --no-cache add ca-certificates curl jq libgcc

|

||||

|

||||

WORKDIR /home/app

|

||||

|

||||

COPY --from=build /usr/local/bin/hey /usr/local/bin/

|

||||

COPY --from=build /tmp/wrk/wrk /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/helm /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/tiller /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/ghz /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/helmv3 /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/grpc_health_probe /usr/local/bin/

|

||||

COPY --from=build /tmp/helm-tiller /tmp/helm-tiller

|

||||

ADD https://raw.githubusercontent.com/grpc/grpc-proto/master/grpc/health/v1/health.proto /tmp/ghz/health.proto

|

||||

|

||||

RUN ls /tmp

|

||||

|

||||

COPY ./bin/loadtester .

|

||||

|

||||

RUN chown -R app:app ./

|

||||

|

||||

USER app

|

||||

|

||||

RUN curl -sSL "https://github.com/rimusz/helm-tiller/archive/v0.9.3.tar.gz" | tar xvz && \

|

||||

helm init --client-only && helm plugin install helm-tiller-0.9.3 && helm plugin list

|

||||

# test load generator tools

|

||||

RUN hey -n 1 -c 1 https://flagger.app > /dev/null && echo $? | grep 0

|

||||

RUN wrk -d 1s -c 1 -t 1 https://flagger.app > /dev/null && echo $? | grep 0

|

||||

|

||||

# install Helm v2 plugins

|

||||

RUN helm init --client-only && helm plugin install /tmp/helm-tiller

|

||||

|

||||

ENTRYPOINT ["./loadtester"]

|

||||

|

||||

36

README.md


@@ -7,7 +7,7 @@

|

||||

[](https://github.com/weaveworks/flagger/releases)

|

||||

|

||||

Flagger is a Kubernetes operator that automates the promotion of canary deployments

|

||||

using Istio, Linkerd, App Mesh, NGINX or Gloo routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

using Istio, Linkerd, App Mesh, NGINX, Contour or Gloo routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

The canary analysis can be extended with webhooks for running acceptance tests,

|

||||

load tests or any other custom validation.

|

||||

|

||||

@@ -43,6 +43,8 @@ Flagger documentation can be found at [docs.flagger.app](https://docs.flagger.ap

|

||||

* [App Mesh canary deployments](https://docs.flagger.app/usage/appmesh-progressive-delivery)

|

||||

* [NGINX ingress controller canary deployments](https://docs.flagger.app/usage/nginx-progressive-delivery)

|

||||

* [Gloo ingress controller canary deployments](https://docs.flagger.app/usage/gloo-progressive-delivery)

|

||||

* [Contour canary deployments](https://docs.flagger.app/usage/contour-progressive-delivery)

|

||||

* [Crossover canary deployments](https://docs.flagger.app/usage/crossover-progressive-delivery)

|

||||

* [Blue/Green deployments](https://docs.flagger.app/usage/blue-green)

|

||||

* [Monitoring](https://docs.flagger.app/usage/monitoring)

|

||||

* [Alerting](https://docs.flagger.app/usage/alerting)

|

||||

@@ -68,7 +70,7 @@ metadata:

|

||||

namespace: test

|

||||

spec:

|

||||

# service mesh provider (optional)

|

||||

# can be: kubernetes, istio, linkerd, appmesh, nginx, gloo, supergloo

|

||||

# can be: kubernetes, istio, linkerd, appmesh, nginx, contour, gloo, supergloo

|

||||

provider: istio

|

||||

# deployment reference

|

||||

targetRef:

|

||||

@@ -149,21 +151,21 @@ For more details on how the canary analysis and promotion works please [read the

|

||||

|

||||

## Features

|

||||

|

||||

| Feature | Istio | Linkerd | App Mesh | NGINX | Gloo | Kubernetes CNI |

|

||||

| -------------------------------------------- | ------------------ | ------------------ |------------------ |------------------ |------------------ |------------------ |

|

||||

| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| Custom promql checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Traffic policy, CORS, retries and timeouts | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: |

|

||||

| Feature | Istio | Linkerd | App Mesh | NGINX | Gloo | Contour | CNI |

|

||||

| -------------------------------------------- | ------------------ | ------------------ |------------------ |------------------ |------------------ |------------------ |------------------ |

|

||||

| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

| Custom promql checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Traffic policy, CORS, retries and timeouts | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_minus_sign: |

|

||||

|

||||

## Roadmap

|

||||

|

||||

* Integrate with other ingress controllers like Contour, HAProxy, ALB

|

||||

* Integrate with other service mesh like Consul Connect and ingress controllers like HAProxy, ALB

|

||||

* Add support for comparing the canary metrics to the primary ones and do the validation based on the deviation between the two

|

||||

|

||||

## Contributing

|

||||

@@ -174,9 +176,9 @@ When submitting bug reports please include as much details as possible:

|

||||

|

||||

* which Flagger version

|

||||

* which Flagger CRD version

|

||||

* which Kubernetes/Istio version

|

||||

* what configuration (canary, virtual service and workloads definitions)

|

||||

* what happened (Flagger, Istio Pilot and Proxy logs)

|

||||

* which Kubernetes version

|

||||

* what configuration (canary, ingress and workloads definitions)

|

||||

* what happened (Flagger and Proxy logs)

|

||||

|

||||

## Getting Help

|

||||

|

||||

|

||||

@@ -81,6 +81,11 @@ rules:

|

||||

- virtualservices

|

||||

- gateways

|

||||

verbs: ["*"]

|

||||

- apiGroups:

|

||||

- projectcontour.io

|

||||

resources:

|

||||

- httpproxies

|

||||

verbs: ["*"]

|

||||

- nonResourceURLs:

|

||||

- /version

|

||||

verbs:

|

||||

|

||||

@@ -22,7 +22,7 @@ spec:

|

||||

serviceAccountName: flagger

|

||||

containers:

|

||||

- name: flagger

|

||||

image: weaveworks/flagger:0.20.2

|

||||

image: weaveworks/flagger:0.21.0

|

||||

imagePullPolicy: IfNotPresent

|

||||

ports:

|

||||

- name: http

|

||||

|

||||

@@ -17,7 +17,7 @@ spec:

|

||||

spec:

|

||||

containers:

|

||||

- name: loadtester

|

||||

image: weaveworks/flagger-loadtester:0.11.0

|

||||

image: weaveworks/flagger-loadtester:0.12.1

|

||||

imagePullPolicy: IfNotPresent

|

||||

ports:

|

||||

- name: http

|

||||

|

||||

21

charts/appmesh-gateway/.helmignore

Normal file


@@ -0,0 +1,21 @@

|

||||

# Patterns to ignore when building packages.

|

||||

# This supports shell glob matching, relative path matching, and

|

||||

# negation (prefixed with !). Only one pattern per line.

|

||||

.DS_Store

|

||||

# Common VCS dirs

|

||||

.git/

|

||||

.gitignore

|

||||

.bzr/

|

||||

.bzrignore

|

||||

.hg/

|

||||

.hgignore

|

||||

.svn/

|

||||

# Common backup files

|

||||

*.swp

|

||||

*.bak

|

||||

*.tmp

|

||||

*~

|

||||

# Various IDEs

|

||||

.project

|

||||

.idea/

|

||||

*.tmproj

|

||||

19

charts/appmesh-gateway/Chart.yaml

Normal file


@@ -0,0 +1,19 @@

|

||||

apiVersion: v1

|

||||

name: appmesh-gateway

|

||||

description: Flagger Gateway for AWS App Mesh is an edge L7 load balancer that exposes applications outside the mesh.

|

||||

version: 1.1.1

|

||||

appVersion: 1.1.0

|

||||

home: https://flagger.app

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/weaveworks.png

|

||||

sources:

|

||||

- https://github.com/stefanprodan/appmesh-gateway

|

||||

maintainers:

|

||||

- name: Stefan Prodan

|

||||

url: https://github.com/stefanprodan

|

||||

email: stefanprodan@users.noreply.github.com

|

||||

keywords:

|

||||

- flagger

|

||||

- appmesh

|

||||

- envoy

|

||||

- gateway

|

||||

- ingress

|

||||

87

charts/appmesh-gateway/README.md

Normal file


@@ -0,0 +1,87 @@

# Flagger Gateway for App Mesh

[Flagger Gateway for App Mesh](https://github.com/stefanprodan/appmesh-gateway) is an
Envoy-powered load balancer that exposes applications outside the mesh.
The gateway facilitates canary deployments and A/B testing for user-facing web applications running on AWS App Mesh.

## Prerequisites

* Kubernetes >= 1.13
* [App Mesh controller](https://github.com/aws/eks-charts/tree/master/stable/appmesh-controller) >= 0.2.0
* [App Mesh inject](https://github.com/aws/eks-charts/tree/master/stable/appmesh-inject) >= 0.2.0

## Installing the Chart

Add Flagger Helm repository:

```console
$ helm repo add flagger https://flagger.app
```

Create a namespace with App Mesh sidecar injection enabled:

```sh
kubectl create ns flagger-system
kubectl label namespace flagger-system appmesh.k8s.aws/sidecarInjectorWebhook=enabled
```

Install App Mesh Gateway for an existing mesh:

```sh
helm upgrade -i appmesh-gateway flagger/appmesh-gateway \
  --namespace flagger-system \
  --set mesh.name=global
```

Optionally you can create a mesh at install time:

```sh
helm upgrade -i appmesh-gateway flagger/appmesh-gateway \
  --namespace flagger-system \
  --set mesh.name=global \
  --set mesh.create=true
```

The [configuration](#configuration) section lists the parameters that can be configured during installation.

## Uninstalling the Chart

To uninstall/delete the `appmesh-gateway` deployment:

```console
helm delete --purge appmesh-gateway
```

The command removes all the Kubernetes components associated with the chart and deletes the release.

## Configuration

The following table lists the configurable parameters of the chart and their default values.

Parameter | Description | Default
--- | --- | ---
`service.type` | When set to LoadBalancer it creates an AWS NLB | `LoadBalancer`
`proxy.access_log_path` | to enable the access logs, set it to `/dev/stdout` | `/dev/null`
`proxy.image.repository` | image repository | `envoyproxy/envoy`
`proxy.image.tag` | image tag | `<VERSION>`
`proxy.image.pullPolicy` | image pull policy | `IfNotPresent`
`controller.image.repository` | image repository | `weaveworks/flagger-appmesh-gateway`
`controller.image.tag` | image tag | `<VERSION>`
`controller.image.pullPolicy` | image pull policy | `IfNotPresent`
`resources.requests/cpu` | pod CPU request | `100m`
`resources.requests/memory` | pod memory request | `128Mi`
`resources.limits/memory` | pod memory limit | `2Gi`
`nodeSelector` | node labels for pod assignment | `{}`
`tolerations` | list of node taints to tolerate | `[]`
`rbac.create` | if `true`, create and use RBAC resources | `true`
`rbac.pspEnabled` | If `true`, create and use a restricted pod security policy | `false`
`serviceAccount.create` | If `true`, create a new service account | `true`
`serviceAccount.name` | Service account to be used | None
`mesh.create` | If `true`, create mesh custom resource | `false`
`mesh.name` | The name of the mesh to use | `global`
`mesh.discovery` | The service discovery type to use, can be dns or cloudmap | `dns`
`hpa.enabled` | `true` if HPA resource should be created, metrics-server is required | `true`
`hpa.maxReplicas` | number of max replicas | `3`
`hpa.cpu` | average total CPU usage per pod (1-100) | `99`
`hpa.memory` | average memory usage per pod (100Mi-1Gi) | None
`discovery.optIn` | `true` if only services with the 'expose' annotation are discoverable | `true`

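As a rough illustration of how the chart parameters listed in the README can be combined, the sketch below composes a `helm upgrade -i` command line that overrides a few of them. The release name, chart reference and namespace come from the README; the particular overrides (`proxy.access_log_path`, `hpa.maxReplicas`) and the variable names are assumptions chosen for the example. The command is only printed, so it can be reviewed before being run against a cluster.

```sh
# Illustrative overrides; the parameter names come from the chart's parameter table.
MESH_NAME="global"
MAX_REPLICAS="5"

# Build the command as a single string so it can be inspected first.
# Inside double quotes, a backslash at end of line joins the lines.
install_cmd="helm upgrade -i appmesh-gateway flagger/appmesh-gateway \
--namespace flagger-system \
--set mesh.name=${MESH_NAME} \
--set proxy.access_log_path=/dev/stdout \
--set hpa.maxReplicas=${MAX_REPLICAS}"

# Print the command instead of executing it.
echo "${install_cmd}"
```

Once the printed command looks right, it can be pasted into a shell that has `helm` and cluster access configured.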

1

charts/appmesh-gateway/templates/NOTES.txt

Normal file


@@ -0,0 +1 @@

|

||||

App Mesh Gateway installed!

|

||||

56

charts/appmesh-gateway/templates/_helpers.tpl

Normal file


@@ -0,0 +1,56 @@

|

||||

{{/* vim: set filetype=mustache: */}}

|

||||

{{/*

|

||||

Expand the name of the chart.

|

||||

*/}}

|

||||

{{- define "appmesh-gateway.name" -}}

|

||||

{{- default .Chart.Name .Values.nameOverride | trunc 63 | trimSuffix "-" -}}

|

||||

{{- end -}}

|

||||

|

||||

{{/*

|

||||

Create a default fully qualified app name.

|

||||

We truncate at 63 chars because some Kubernetes name fields are limited to this (by the DNS naming spec).

|

||||

If release name contains chart name it will be used as a full name.

|

||||

*/}}

|

||||

{{- define "appmesh-gateway.fullname" -}}

|

||||

{{- if .Values.fullnameOverride -}}

|

||||

{{- .Values.fullnameOverride | trunc 63 | trimSuffix "-" -}}

|

||||

{{- else -}}

|

||||

{{- $name := default .Chart.Name .Values.nameOverride -}}

|

||||

{{- if contains $name .Release.Name -}}

|

||||

{{- .Release.Name | trunc 63 | trimSuffix "-" -}}

|

||||

{{- else -}}

|

||||

{{- printf "%s-%s" .Release.Name $name | trunc 63 | trimSuffix "-" -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

{{- end -}}

|

||||

|

||||

{{/*

|

||||

Create chart name and version as used by the chart label.

|

||||

*/}}

|

||||

{{- define "appmesh-gateway.chart" -}}

|

||||

{{- printf "%s-%s" .Chart.Name .Chart.Version | replace "+" "_" | trunc 63 | trimSuffix "-" -}}

|

||||

{{- end -}}

|

||||

|

||||

{{/*

|

||||

Common labels

|

||||

*/}}

|

||||

{{- define "appmesh-gateway.labels" -}}

|

||||

app.kubernetes.io/name: {{ include "appmesh-gateway.name" . }}

|

||||

helm.sh/chart: {{ include "appmesh-gateway.chart" . }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

{{- if .Chart.AppVersion }}

|

||||

app.kubernetes.io/version: {{ .Chart.AppVersion | quote }}

|

||||

{{- end }}

|

||||

app.kubernetes.io/managed-by: {{ .Release.Service }}

|

||||

{{- end -}}

|

||||

|

||||

{{/*

|

||||

Create the name of the service account to use

|

||||

*/}}

|

||||

{{- define "appmesh-gateway.serviceAccountName" -}}

|

||||

{{- if .Values.serviceAccount.create -}}

|

||||

{{ default (include "appmesh-gateway.fullname" .) .Values.serviceAccount.name }}

|

||||

{{- else -}}

|

||||

{{ default "default" .Values.serviceAccount.name }}

|

||||

{{- end -}}

|

||||

{{- end -}}
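The naming rules in the helpers above can be easier to follow outside of template syntax. The following is a minimal shell sketch of the `appmesh-gateway.fullname` and `appmesh-gateway.serviceAccountName` logic, not the chart's implementation: the function names (`trunc63`, `fullname`, `service_account_name`) and the positional arguments are hypothetical stand-ins for `.Release.Name`, `.Chart.Name` and the corresponding values.

```sh
# Keep the first 63 characters, then drop a single trailing dash if present
# (the equivalent of `trunc 63 | trimSuffix "-"` in the template).
trunc63() {
  s=$(printf '%s' "$1" | cut -c1-63)
  printf '%s\n' "${s%-}"
}

# fullname RELEASE CHART [NAME_OVERRIDE] [FULLNAME_OVERRIDE]
fullname() {
  release="$1"; chart="$2"; name_override="${3:-}"; fullname_override="${4:-}"
  # An explicit full name override wins.
  if [ -n "$fullname_override" ]; then
    trunc63 "$fullname_override"
    return
  fi
  # Fall back to the chart name when no name override is given.
  name="${name_override:-$chart}"
  case "$release" in
    *"$name"*) trunc63 "$release" ;;            # release already contains the name
    *)         trunc63 "${release}-${name}" ;;  # otherwise join release and name
  esac
}

# service_account_name CREATE SA_NAME FULLNAME
service_account_name() {
  if [ "$1" = "true" ]; then
    printf '%s\n' "${2:-$3}"        # default to the computed full name
  else
    printf '%s\n' "${2:-default}"   # default to the namespace's "default" account
  fi
}

fullname "appmesh-gateway" "appmesh-gateway"   # prints "appmesh-gateway"
fullname "edge" "appmesh-gateway"              # prints "edge-appmesh-gateway"
```

The 63-character cut matches the limit mentioned in the helper's comment for Kubernetes name fields.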

|

||||

8

charts/appmesh-gateway/templates/account.yaml

Normal file


@@ -0,0 +1,8 @@

|

||||

{{- if .Values.serviceAccount.create }}

|

||||

apiVersion: v1

|

||||

kind: ServiceAccount

|

||||

metadata:

|

||||

name: {{ template "appmesh-gateway.serviceAccountName" . }}

|

||||

labels:

|

||||

{{ include "appmesh-gateway.labels" . | indent 4 }}

|

||||

{{- end }}

|

||||

41

charts/appmesh-gateway/templates/config.yaml

Normal file


@@ -0,0 +1,41 @@

|

||||

apiVersion: v1

|

||||

kind: ConfigMap

|

||||

metadata:

|

||||

name: {{ template "appmesh-gateway.fullname" . }}

|

||||

labels:

|

||||

{{ include "appmesh-gateway.labels" . | indent 4 }}

|

||||

data:

|

||||

envoy.yaml: |-

|

||||

admin:

|

||||

access_log_path: {{ .Values.proxy.access_log_path }}

|

||||

address:

|

||||

socket_address:

|

||||

address: 0.0.0.0

|

||||

port_value: 8081

|

||||

|

||||

dynamic_resources:

|

||||

ads_config:

|

||||

api_type: GRPC

|

||||

grpc_services:

|

||||

- envoy_grpc:

|

||||

cluster_name: xds

|

||||

cds_config:

|

||||

ads: {}

|

||||

lds_config:

|

||||

ads: {}

|

||||

|

||||

static_resources:

|

||||

clusters:

|

||||

- name: xds

|

||||

connect_timeout: 0.50s

|

||||

type: static

|

||||

http2_protocol_options: {}

|

||||

load_assignment:

|

||||

cluster_name: xds

|

||||

endpoints:

|

||||

- lb_endpoints:

|

||||

- endpoint:

|

||||

address:

|

||||

socket_address:

|

||||

address: 127.0.0.1

|

||||

port_value: 18000

|

||||

144

charts/appmesh-gateway/templates/deployment.yaml

Normal file


@@ -0,0 +1,144 @@

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

metadata:

|

||||

name: {{ template "appmesh-gateway.fullname" . }}

|

||||

labels:

|

||||

{{ include "appmesh-gateway.labels" . | indent 4 }}

|

||||

spec:

|

||||

replicas: {{ .Values.replicaCount }}

|

||||

strategy:

|

||||

type: Recreate

|

||||

selector:

|

||||

matchLabels:

|

||||

app.kubernetes.io/name: {{ include "appmesh-gateway.name" . }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

template:

|

||||

metadata:

|

||||

labels:

|

||||

app.kubernetes.io/name: {{ include "appmesh-gateway.name" . }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

app.kubernetes.io/part-of: appmesh

|

||||

annotations:

|

||||

prometheus.io/scrape: "true"

|

||||

prometheus.io/path: "/stats/prometheus"

|

||||

prometheus.io/port: "8081"

|

||||

# exclude inbound traffic on port 8080

|

||||

appmesh.k8s.aws/ports: "444"

|

||||

# exclude egress traffic to xDS server and Kubernetes API

|

||||

appmesh.k8s.aws/egressIgnoredPorts: "18000,22,443"

|

||||

checksum/config: {{ include (print $.Template.BasePath "/config.yaml") . | sha256sum | quote }}

|

||||

spec:

|

||||

serviceAccountName: {{ include "appmesh-gateway.serviceAccountName" . }}

|

||||

terminationGracePeriodSeconds: 45

|

||||

affinity:

|

||||

podAntiAffinity:

|

||||

preferredDuringSchedulingIgnoredDuringExecution:

|

||||

- podAffinityTerm:

|

||||

labelSelector:

|

||||

matchLabels:

|

||||

app.kubernetes.io/name: {{ include "appmesh-gateway.name" . }}

|

||||

topologyKey: kubernetes.io/hostname

|

||||

weight: 100

|

||||

volumes:

|

||||

- name: appmesh-gateway-config

|

||||

configMap:

|

||||

name: {{ template "appmesh-gateway.fullname" . }}

|

||||

containers:

|

||||

- name: controller

|

||||

image: "{{ .Values.controller.image.repository }}:{{ .Values.controller.image.tag }}"

|

||||

imagePullPolicy: {{ .Values.controller.image.pullPolicy }}

|

||||

securityContext:

|

||||

readOnlyRootFilesystem: true

|

||||

runAsUser: 10001

|

||||

capabilities:

|

||||

drop:

|

||||

- ALL

|

||||

add:

|

||||

- NET_BIND_SERVICE

|

||||

command:

|

||||

- ./flagger-appmesh-gateway

|

||||

- --opt-in={{ .Values.discovery.optIn }}

|

||||

- --gateway-mesh={{ .Values.mesh.name }}

|

||||

- --gateway-name=$(POD_SERVICE_ACCOUNT)

|

||||

- --gateway-namespace=$(POD_NAMESPACE)

|

||||

env:

|

||||

- name: POD_SERVICE_ACCOUNT

|

||||

valueFrom:

|

||||

fieldRef:

|

||||

fieldPath: spec.serviceAccountName

|

||||

- name: POD_NAMESPACE

|

||||

valueFrom:

|

||||

fieldRef:

|

||||

fieldPath: metadata.namespace

|

||||

ports:

|

||||

- name: grpc

|

||||

containerPort: 18000

|

||||

protocol: TCP

|

||||

livenessProbe:

|

||||

initialDelaySeconds: 5

|

||||

tcpSocket:

|

||||

port: grpc

|

||||

readinessProbe:

|

||||

initialDelaySeconds: 5

|

||||

tcpSocket:

|

||||

port: grpc

|

||||

resources:

|

||||

limits:

|

||||

memory: 1Gi

|

||||

requests:

|

||||

cpu: 10m

|

||||

memory: 32Mi

|

||||

- name: proxy

|

||||

image: "{{ .Values.proxy.image.repository }}:{{ .Values.proxy.image.tag }}"

|

||||

imagePullPolicy: {{ .Values.proxy.image.pullPolicy }}

|

||||

securityContext:

|

||||

capabilities:

|

||||

drop:

|

||||

- ALL

|

||||

add:

|

||||

- NET_BIND_SERVICE

|

||||

args:

|

||||

- -c

|

||||

- /config/envoy.yaml

|

||||

- --service-cluster $(POD_NAMESPACE)

|

||||

- --service-node $(POD_NAME)

|

||||

- --log-level info

|

||||

- --base-id 1234

|

||||

ports:

|

||||

- name: admin

|

||||

containerPort: 8081

|

||||

protocol: TCP

|

||||

- name: http

|

||||

containerPort: 8080

|

||||

protocol: TCP

|

||||

livenessProbe:

|

||||

initialDelaySeconds: 5

|

||||

tcpSocket:

|

||||

port: admin

|

||||

readinessProbe:

|

||||

initialDelaySeconds: 5

|

||||

httpGet:

|

||||

path: /ready

|

||||

port: admin

|

||||

env:

|

||||

- name: POD_NAME

|

||||

valueFrom:

|

||||

fieldRef:

|

||||

fieldPath: metadata.name

|

||||

- name: POD_NAMESPACE

|

||||

valueFrom:

|

||||

fieldRef:

|

||||

fieldPath: metadata.namespace

|

||||

volumeMounts:

|

||||

- name: appmesh-gateway-config

|

||||

mountPath: /config

|

||||

resources:

|

||||

{{ toYaml .Values.resources | indent 12 }}

|

||||

{{- with .Values.nodeSelector }}

|

||||

nodeSelector:

|

||||

{{ toYaml . | indent 8 }}

|

||||

{{- end }}

|

||||

{{- with .Values.tolerations }}

|

||||

tolerations:

|

||||

{{ toYaml . | indent 8 }}

|

||||

{{- end }}

|

||||

28

charts/appmesh-gateway/templates/hpa.yaml

Normal file

@@ -0,0 +1,28 @@

{{- if .Values.hpa.enabled }}
apiVersion: autoscaling/v2beta1
kind: HorizontalPodAutoscaler
metadata:
  name: {{ template "appmesh-gateway.fullname" . }}
  labels:
{{ include "appmesh-gateway.labels" . | indent 4 }}
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: {{ template "appmesh-gateway.fullname" . }}
  minReplicas: {{ .Values.replicaCount }}
  maxReplicas: {{ .Values.hpa.maxReplicas }}
  metrics:
    {{- if .Values.hpa.cpu }}
    - type: Resource
      resource:
        name: cpu
        targetAverageUtilization: {{ .Values.hpa.cpu }}
    {{- end }}
    {{- if .Values.hpa.memory }}
    - type: Resource
      resource:
        name: memory
        targetAverageValue: {{ .Values.hpa.memory }}
    {{- end }}
{{- end }}

12

charts/appmesh-gateway/templates/mesh.yaml

Normal file

@@ -0,0 +1,12 @@

{{- if .Values.mesh.create }}
apiVersion: appmesh.k8s.aws/v1beta1
kind: Mesh
metadata:
  name: {{ .Values.mesh.name }}
  annotations:
    helm.sh/resource-policy: keep
  labels:
{{ include "appmesh-gateway.labels" . | indent 4 }}
spec:
  serviceDiscoveryType: {{ .Values.mesh.discovery }}
{{- end }}

57

charts/appmesh-gateway/templates/psp.yaml

Normal file

@@ -0,0 +1,57 @@

|

||||

{{- if .Values.rbac.pspEnabled }}

|

||||

apiVersion: policy/v1beta1

|

||||

kind: PodSecurityPolicy

|

||||

metadata:

|

||||

name: {{ template "appmesh-gateway.fullname" . }}

|

||||

labels:

|

||||

{{ include "appmesh-gateway.labels" . | indent 4 }}

|

||||

annotations:

|

||||

seccomp.security.alpha.kubernetes.io/allowedProfileNames: '*'

|

||||

spec:

|

||||

privileged: false

|

||||

hostIPC: false

|

||||

hostNetwork: false

|

||||

hostPID: false

|

||||

readOnlyRootFilesystem: false

|

||||

allowPrivilegeEscalation: false

|

||||

allowedCapabilities:

|

||||

- '*'

|

||||

fsGroup:

|

||||

rule: RunAsAny

|

||||

runAsUser:

|

||||

rule: RunAsAny

|

||||

seLinux:

|

||||

rule: RunAsAny

|

||||

supplementalGroups:

|

||||

rule: RunAsAny

|

||||

volumes:

|

||||

- '*'

|

||||

---

|

||||

kind: ClusterRole

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

metadata:

|

||||

name: {{ template "appmesh-gateway.fullname" . }}-psp

|

||||

labels:

|

||||

{{ include "appmesh-gateway.labels" . | indent 4 }}

|

||||

rules:

|

||||

- apiGroups: ['policy']

|

||||

resources: ['podsecuritypolicies']

|

||||

verbs: ['use']

|

||||

resourceNames:

|

||||

- {{ template "appmesh-gateway.fullname" . }}

|

||||

---

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

kind: RoleBinding

|

||||

metadata:

|

||||

name: {{ template "appmesh-gateway.fullname" . }}-psp

|

||||

labels:

|

||||

{{ include "appmesh-gateway.labels" . | indent 4 }}

|

||||

roleRef:

|

||||

apiGroup: rbac.authorization.k8s.io

|

||||

kind: ClusterRole

|

||||

name: {{ template "appmesh-gateway.fullname" . }}-psp

|

||||

subjects:

|

||||

- kind: ServiceAccount

|

||||

name: {{ template "appmesh-gateway.serviceAccountName" . }}

|

||||

namespace: {{ .Release.Namespace }}

|

||||

{{- end }}

|

||||

39

charts/appmesh-gateway/templates/rbac.yaml

Normal file

@@ -0,0 +1,39 @@

|

||||

{{- if .Values.rbac.create }}

|

||||

apiVersion: rbac.authorization.k8s.io/v1beta1

|

||||

kind: ClusterRole

|

||||

metadata:

|

||||

name: {{ template "appmesh-gateway.fullname" . }}

|

||||

labels:

|

||||

{{ include "appmesh-gateway.labels" . | indent 4 }}

|

||||

rules:

|

||||

- apiGroups:

|

||||

- ""

|

||||

resources:

|

||||

- services

|

||||

verbs: ["*"]

|

||||

- apiGroups:

|

||||

- appmesh.k8s.aws

|

||||

resources:

|

||||

- meshes

|

||||

- meshes/status

|

||||

- virtualnodes

|

||||

- virtualnodes/status

|

||||

- virtualservices

|

||||

- virtualservices/status

|

||||

verbs: ["*"]

|

||||

---

|

||||

apiVersion: rbac.authorization.k8s.io/v1beta1

|

||||

kind: ClusterRoleBinding

|

||||

metadata:

|

||||

name: {{ template "appmesh-gateway.fullname" . }}

|

||||

labels:

|

||||

{{ include "appmesh-gateway.labels" . | indent 4 }}

|

||||

roleRef:

|

||||

apiGroup: rbac.authorization.k8s.io

|

||||

kind: ClusterRole

|

||||

name: {{ template "appmesh-gateway.fullname" . }}

|

||||

subjects:

|

||||

- name: {{ template "appmesh-gateway.serviceAccountName" . }}

|

||||

namespace: {{ .Release.Namespace }}

|

||||

kind: ServiceAccount

|

||||

{{- end }}

|

||||

24

charts/appmesh-gateway/templates/service.yaml

Normal file

@@ -0,0 +1,24 @@

apiVersion: v1
kind: Service
metadata:
  name: {{ template "appmesh-gateway.fullname" . }}
  annotations:
    gateway.appmesh.k8s.aws/expose: "false"
    {{- if eq .Values.service.type "LoadBalancer" }}
    service.beta.kubernetes.io/aws-load-balancer-type: "nlb"
    {{- end }}
  labels:
{{ include "appmesh-gateway.labels" . | indent 4 }}
spec:
  type: {{ .Values.service.type }}
  {{- if eq .Values.service.type "LoadBalancer" }}
  externalTrafficPolicy: Local
  {{- end }}
  ports:
    - port: {{ .Values.service.port }}
      targetPort: http
      protocol: TCP
      name: http
  selector:
    app.kubernetes.io/name: {{ include "appmesh-gateway.name" . }}
    app.kubernetes.io/instance: {{ .Release.Name }}

69

charts/appmesh-gateway/values.yaml

Normal file

@@ -0,0 +1,69 @@

# Default values for appmesh-gateway.
# This is a YAML-formatted file.
# Declare variables to be passed into your templates.

replicaCount: 1
discovery:
  # discovery.optIn `true` if only services with the 'expose' annotation are discoverable
  optIn: true

proxy:
  access_log_path: /dev/null
  image:
    repository: docker.io/envoyproxy/envoy
    tag: v1.12.0
    pullPolicy: IfNotPresent

controller:
  image:
    repository: weaveworks/flagger-appmesh-gateway
    tag: v1.1.0
    pullPolicy: IfNotPresent

nameOverride: ""
fullnameOverride: ""

service:
  # service.type: When set to LoadBalancer it creates an AWS NLB
  type: LoadBalancer
  port: 80

hpa:
  # hpa.enabled `true` if HPA resource should be created, metrics-server is required
  enabled: true
  maxReplicas: 3
  # hpa.cpu average total CPU usage per pod (1-100)
  cpu: 99
  # hpa.memory average memory usage per pod (100Mi-1Gi)
  memory:

resources:
  limits:
    memory: 2Gi
  requests:
    cpu: 100m
    memory: 128Mi

nodeSelector: {}

tolerations: []

serviceAccount:
  # serviceAccount.create: Whether to create a service account or not
  create: true
  # serviceAccount.name: The name of the service account to create or use
  name: ""

rbac:
  # rbac.create: `true` if rbac resources should be created
  create: true
  # rbac.pspEnabled: `true` if PodSecurityPolicy resources should be created
  pspEnabled: false

mesh:
  # mesh.create: `true` if mesh resource should be created
  create: false
  # mesh.name: The name of the mesh to use
  name: "global"
  # mesh.discovery: The service discovery type to use, can be dns or cloudmap
  discovery: dns
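The `hpa.*` values above feed the `hpa.yaml` template: `hpa.cpu` becomes a `targetAverageUtilization` metric and `hpa.memory`, when set, becomes a `targetAverageValue` metric. A minimal override sketch follows, assuming it is saved to a hypothetical file such as `my-values.yaml` and passed to Helm with `-f`; the numbers are illustrative, not recommendations from this change.

```yaml
# my-values.yaml (hypothetical file name): overrides for charts/appmesh-gateway
replicaCount: 2        # rendered as minReplicas in hpa.yaml
hpa:
  enabled: true        # the HPA is only rendered when this is true; metrics-server is required
  maxReplicas: 5
  cpu: 80              # average CPU utilization per pod, in percent (1-100)
  memory: 512Mi        # average memory per pod; leave empty to skip the memory metric
```

Leaving `hpa.memory` empty, as in the chart defaults, should make the template skip the memory metric because of its `{{- if .Values.hpa.memory }}` guard.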

@@ -1,21 +1,23 @@

|

||||

apiVersion: v1

|

||||

name: flagger

|

||||

version: 0.20.2

|

||||

appVersion: 0.20.2

|

||||

version: 0.21.0

|

||||

appVersion: 0.21.0

|

||||

kubeVersion: ">=1.11.0-0"

|

||||

engine: gotpl

|

||||

description: Flagger is a Kubernetes operator that automates the promotion of canary deployments using Istio, Linkerd, App Mesh, Gloo or NGINX routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

home: https://docs.flagger.app

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/flagger-icon.png

|

||||

description: Flagger is a progressive delivery operator for Kubernetes

|

||||

home: https://flagger.app

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/weaveworks.png

|

||||

sources:

|

||||

- https://github.com/weaveworks/flagger

|

||||

- https://github.com/weaveworks/flagger

|

||||

maintainers:

|

||||

- name: stefanprodan

|

||||

url: https://github.com/stefanprodan

|

||||

email: stefanprodan@users.noreply.github.com

|

||||

- name: stefanprodan

|

||||

url: https://github.com/stefanprodan

|

||||

email: stefanprodan@users.noreply.github.com

|

||||

keywords:

|

||||

- canary

|

||||

- istio

|

||||

- appmesh

|

||||

- linkerd

|

||||

- gitops

|

||||

- flagger

|

||||

- istio

|

||||

- appmesh

|

||||

- linkerd

|

||||

- gloo

|

||||

- gitops

|

||||

- canary

|

||||

|

||||

@@ -1,7 +1,8 @@

|

||||

# Flagger

|

||||

|

||||

[Flagger](https://github.com/weaveworks/flagger) is a Kubernetes operator that automates the promotion of

|

||||

canary deployments using Istio, Linkerd, App Mesh, NGINX or Gloo routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

[Flagger](https://github.com/weaveworks/flagger) is a Kubernetes operator that automates the promotion of canary

|

||||

deployments using Istio, Linkerd, App Mesh, NGINX or Gloo routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

|

||||

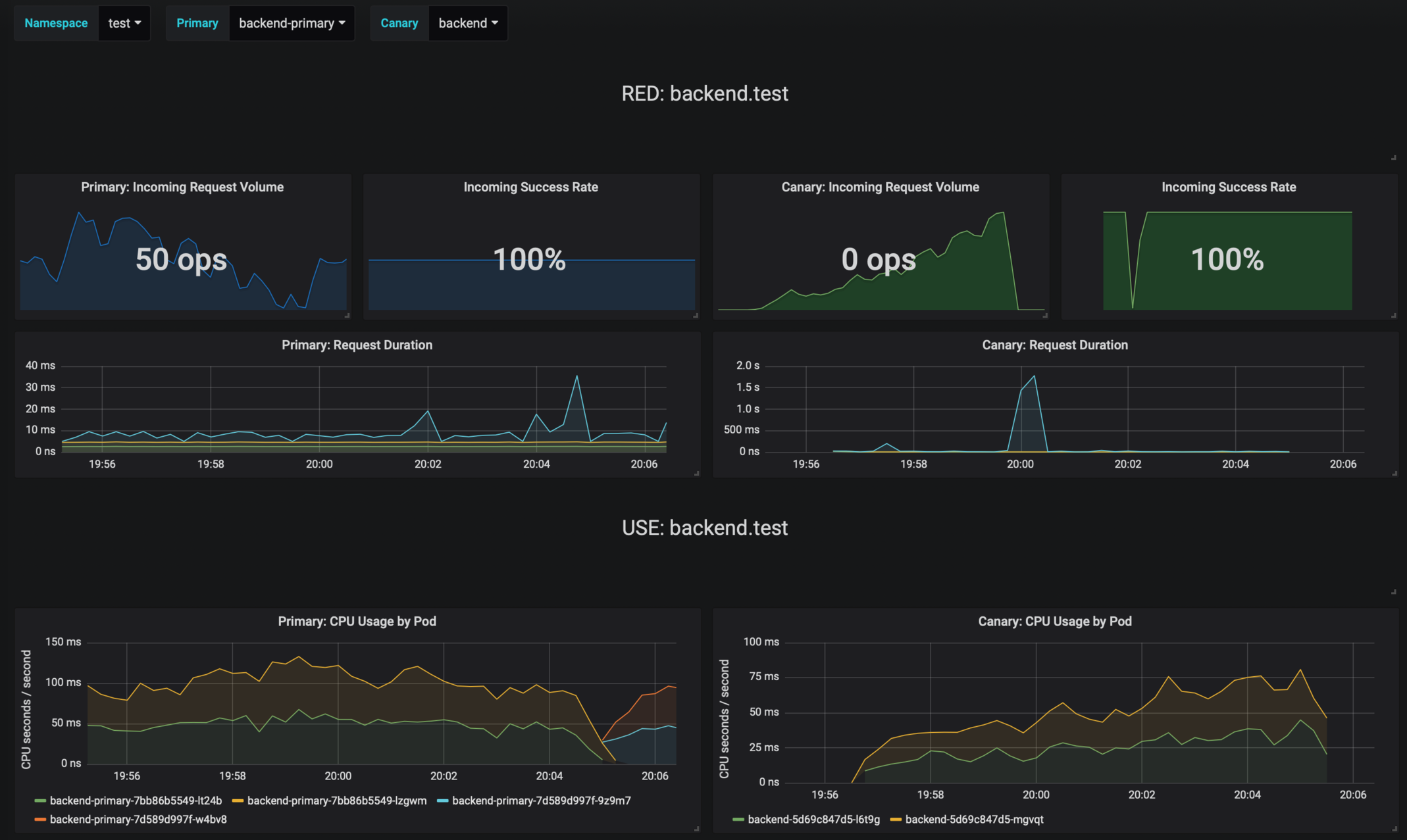

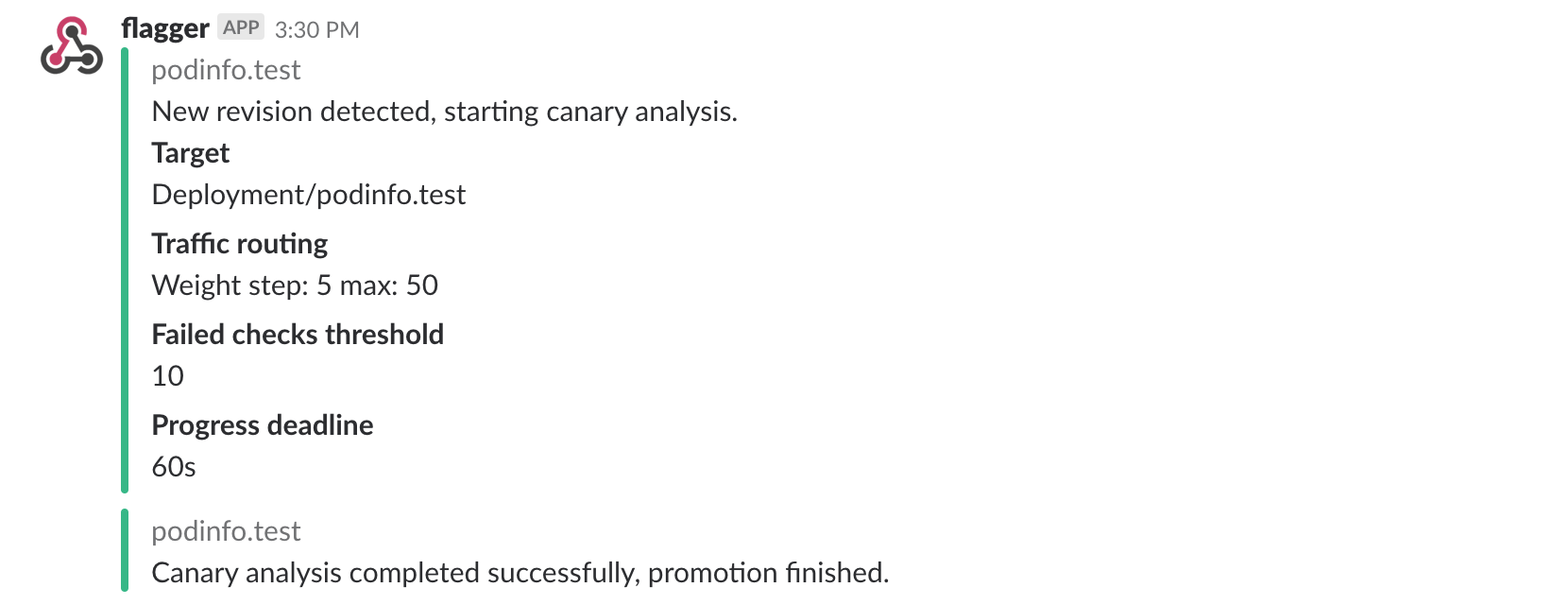

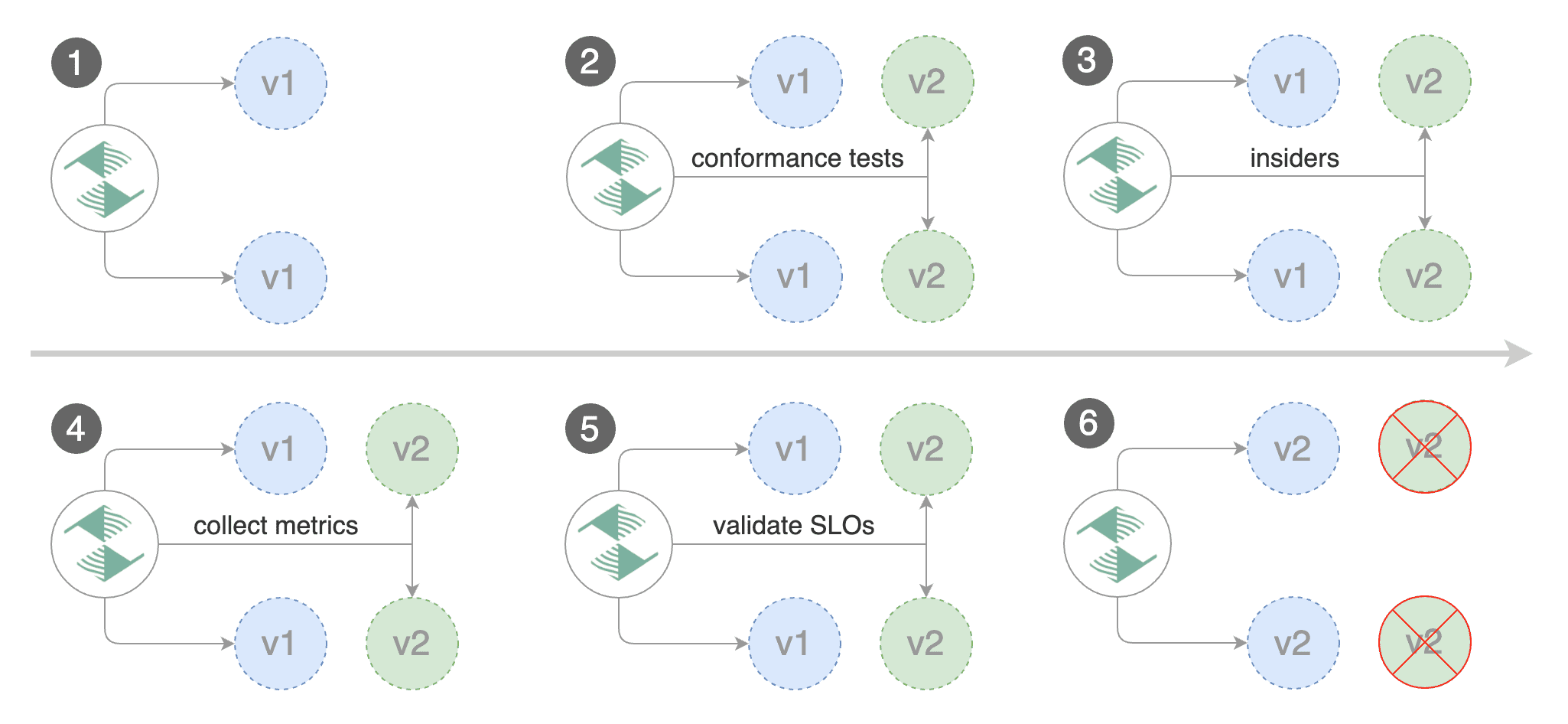

Flagger implements a control loop that gradually shifts traffic to the canary while measuring key performance indicators

|

||||

like HTTP requests success rate, requests average duration and pods health.

|

||||

Based on the KPIs analysis a canary is promoted or aborted and the analysis result is published to Slack or MS Teams.

|

||||
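The control loop described above is configured per workload through a Canary resource. What follows is a minimal sketch, assuming the `flagger.app/v1alpha3` Canary API in use around this release. The `podinfo` target, the `test` namespace, the port and every threshold are illustrative values and are not taken from this diff.

```yaml
apiVersion: flagger.app/v1alpha3
kind: Canary
metadata:
  name: podinfo            # illustrative name
  namespace: test          # illustrative namespace
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: podinfo
  service:
    port: 9898
  canaryAnalysis:
    interval: 1m           # how often the control loop evaluates the KPIs
    threshold: 5           # failed checks tolerated before the canary is aborted
    maxWeight: 50          # upper bound for traffic routed to the canary, in percent
    stepWeight: 10         # traffic increment per successful iteration, in percent
    metrics:
    - name: request-success-rate
      threshold: 99        # minimum success rate, in percent
      interval: 1m
    - name: request-duration
      threshold: 500       # maximum request duration, in milliseconds
      interval: 30s
```

With these settings the canary would receive traffic in 10% steps up to 50% before promotion, provided both metrics stay within their thresholds.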

@@ -45,6 +46,16 @@ $ helm upgrade -i flagger flagger/flagger \

|

||||

--set metricsServer=http://linkerd-prometheus:9090

|

||||

```

|

||||

|

||||

To install the chart with the release name `flagger` for AWS App Mesh:

|

||||

|

||||

```console

|

||||

$ helm upgrade -i flagger flagger/flagger \

|

||||

--namespace=appmesh-system \

|

||||

--set crd.create=false \

|

||||

--set meshProvider=appmesh \

|

||||

--set metricsServer=http://appmesh-prometheus:9090

|

||||

```

|

||||

|

||||

The [configuration](#configuration) section lists the parameters that can be configured during installation.

|

||||

|

||||

## Uninstalling the Chart

|

||||

@@ -73,6 +84,10 @@ Parameter | Description | Default

|

||||

`slack.channel` | Slack channel | None

|

||||

`slack.user` | Slack username | `flagger`

|

||||

`msteams.url` | Microsoft Teams incoming webhook | None

|

||||

`podMonitor.enabled` | if `true`, create a PodMonitor for [monitoring the metrics](https://docs.flagger.app/usage/monitoring#metrics) | `false`

|

||||

`podMonitor.namespace` | the namespace where the PodMonitor is created | the same namespace

|

||||

`podMonitor.interval` | interval at which metrics should be scraped | `15s`

|

||||

`podMonitor.additionalLabels` | additional labels to add to the PodMonitor | `{}`

|

||||

`leaderElection.enabled` | leader election must be enabled when running more than one replica | `false`

|

||||

`leaderElection.replicaCount` | number of replicas | `1`

|

||||

`ingressAnnotationsPrefix` | annotations prefix for ingresses | `custom.ingress.kubernetes.io`

|

||||

@@ -91,7 +106,7 @@ Specify each parameter using the `--set key=value[,key=value]` argument to `helm

|

||||

|

||||

```console

|

||||

$ helm upgrade -i flagger flagger/flagger \

|

||||

--namespace istio-system \

|

||||

--namespace flagger-system \

|

||||

--set crd.create=false \

|

||||

--set slack.url=https://hooks.slack.com/services/YOUR/SLACK/WEBHOOK \

|

||||

--set slack.channel=general

|

||||

|

||||

27

charts/flagger/templates/podmonitor.yaml

Normal file

@@ -0,0 +1,27 @@

{{- if .Values.podMonitor.enabled }}
apiVersion: monitoring.coreos.com/v1
kind: PodMonitor
metadata:
  labels:
    helm.sh/chart: {{ template "flagger.chart" . }}
    app.kubernetes.io/name: {{ template "flagger.name" . }}
    app.kubernetes.io/managed-by: {{ .Release.Service }}
    app.kubernetes.io/instance: {{ .Release.Name }}
    {{- range $k, $v := .Values.podMonitor.additionalLabels }}
    {{ $k }}: {{ $v | quote }}
    {{- end }}
  name: {{ include "flagger.fullname" . }}
  namespace: {{ .Values.podMonitor.namespace | default .Release.Namespace }}
spec:
  podMetricsEndpoints:
  - interval: {{ .Values.podMonitor.interval }}
    path: /metrics
    port: http
  namespaceSelector:
    matchNames:
    - {{ .Release.Namespace }}
  selector:
    matchLabels:
      app.kubernetes.io/name: {{ template "flagger.name" . }}
      app.kubernetes.io/instance: {{ .Release.Name }}
{{- end }}
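The PodMonitor template above is rendered only when `podMonitor.enabled` is true, and it needs the Prometheus Operator CRDs to be installed in the cluster. A minimal values sketch for turning it on is shown below. The `release: prometheus` label is a hypothetical example and should be replaced with whatever label your Prometheus instance selects PodMonitors by.

```yaml
# values override for charts/flagger (sketch)
podMonitor:
  enabled: true
  # left empty so the template falls back to the release namespace
  namespace:
  interval: 15s
  additionalLabels:
    release: prometheus   # hypothetical label, not part of this change
```

Each entry under `additionalLabels` is copied into `metadata.labels` of the PodMonitor by the `range` loop in the template, with the value quoted.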

@@ -77,6 +77,11 @@ rules:

|

||||

- virtualservices

|

||||

- gateways

|

||||

verbs: ["*"]

|

||||

- apiGroups:

|

||||

- projectcontour.io

|

||||

resources:

|

||||

- httpproxies

|

||||

verbs: ["*"]

|

||||

- nonResourceURLs:

|

||||

- /version

|

||||

verbs:

|

||||

|

||||

@@ -2,13 +2,14 @@

|

||||

|

||||

image:

|

||||

repository: weaveworks/flagger

|

||||

tag: 0.20.2

|

||||

tag: 0.21.0

|

||||

pullPolicy: IfNotPresent

|

||||

pullSecret:

|

||||

|

||||

podAnnotations:

|

||||

prometheus.io/scrape: "true"

|

||||

prometheus.io/port: "8080"

|

||||

appmesh.k8s.aws/sidecarInjectorWebhook: disabled

|

||||

|

||||

metricsServer: "http://prometheus:9090"

|

||||

|

||||

@@ -32,6 +33,12 @@ msteams:

|

||||

# MS Teams incoming webhook URL

|

||||

url:

|

||||

|

||||

podMonitor:

|

||||

enabled: false

|

||||

namespace:

|

||||

interval: 15s

|

||||

additionalLabels: {}

|

||||

|

||||

#env:

|

||||

#- name: SLACK_URL

|

||||

# valueFrom:

|

||||

|

||||

@@ -1,13 +1,20 @@

|

||||

apiVersion: v1

|

||||

name: grafana

|

||||

version: 1.3.0

|

||||

appVersion: 6.2.5

|

||||

version: 1.4.0

|

||||

appVersion: 6.5.1

|

||||

description: Grafana dashboards for monitoring Flagger canary deployments

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/flagger-icon.png

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/weaveworks.png

|

||||

home: https://flagger.app

|

||||

sources:

|

||||

- https://github.com/weaveworks/flagger

|

||||

- https://github.com/weaveworks/flagger

|

||||

maintainers:

|

||||

- name: stefanprodan

|

||||

url: https://github.com/stefanprodan

|

||||

email: stefanprodan@users.noreply.github.com

|

||||

- name: stefanprodan

|

||||

url: https://github.com/stefanprodan

|

||||

email: stefanprodan@users.noreply.github.com

|

||||

keywords:

|

||||

- flagger

|

||||

- grafana

|

||||

- canary

|

||||

- istio

|

||||

- appmesh

|

||||

|

||||

|

||||

@@ -1,13 +1,12 @@

|

||||

# Flagger Grafana

|

||||

|

||||

Grafana dashboards for monitoring progressive deployments powered by Istio, Prometheus and Flagger.

|

||||

Grafana dashboards for monitoring progressive deployments powered by Flagger and Prometheus.

|

||||

|

||||

|

||||

|

||||

## Prerequisites

|

||||

|

||||

* Kubernetes >= 1.11

|

||||

* Istio >= 1.0

|

||||

* Prometheus >= 2.6

|

||||

|

||||

## Installing the Chart

|

||||

@@ -18,14 +17,20 @@ Add Flagger Helm repository:

|

||||

helm repo add flagger https://flagger.app

|

||||

```

|

||||

|

||||

To install the chart with the release name `flagger-grafana`:

|

||||

To install the chart for Istio run:

|

||||

|

||||

```console

|

||||

helm upgrade -i flagger-grafana flagger/grafana \

|

||||

--namespace=istio-system \

|

||||

--set url=http://prometheus:9090 \

|

||||

--set user=admin \

|

||||

--set password=admin

|

||||

--set url=http://prometheus:9090

|

||||

```

|

||||

|

||||

To install the chart for AWS App Mesh run:

|

||||

|

||||

```console

|

||||

helm upgrade -i flagger-grafana flagger/grafana \

|

||||

--namespace=appmesh-system \

|

||||

--set url=http://appmesh-prometheus:9090

|

||||

```

|

||||

|

||||

The command deploys Grafana on the Kubernetes cluster in the default namespace.

|

||||

@@ -56,10 +61,7 @@ Parameter | Description | Default

|

||||

`affinity` | node/pod affinities | `node`

|

||||

`nodeSelector` | node labels for pod assignment | `{}`

|

||||

`service.type` | type of service | `ClusterIP`

|

||||

`url` | Prometheus URL, used when Weave Cloud token is empty | `http://prometheus:9090`

|

||||

`token` | Weave Cloud token | `none`

|

||||

`user` | Grafana admin username | `admin`

|

||||

`password` | Grafana admin password | `admin`

|

||||

`url` | Prometheus URL | `http://prometheus:9090`

|

||||

|

||||

Specify each parameter using the `--set key=value[,key=value]` argument to `helm install`. For example,

|

||||

|

||||

|

||||

1226

charts/grafana/dashboards/envoy.json

Normal file

File diff suppressed because it is too large


@@ -1,4 +1,4 @@

|

||||

apiVersion: apps/v1beta2

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

metadata:

|

||||

name: {{ template "grafana.fullname" . }}

|

||||

|

||||

@@ -6,7 +6,7 @@ replicaCount: 1

|

||||

|

||||

image:

|

||||

repository: grafana/grafana

|

||||

tag: 6.2.5

|

||||

tag: 6.5.1

|

||||

pullPolicy: IfNotPresent

|

||||

|

||||

podAnnotations: {}

|

||||

|

||||

@@ -1,12 +1,12 @@

|

||||

apiVersion: v1

|

||||

name: loadtester

|

||||

version: 0.11.0

|

||||

appVersion: 0.11.0

|

||||

version: 0.12.1

|

||||

appVersion: 0.12.1

|

||||

kubeVersion: ">=1.11.0-0"

|

||||

engine: gotpl

|

||||

description: Flagger's load testing services based on rakyll/hey and bojand/ghz that generates traffic during canary analysis when configured as a webhook.

|

||||

home: https://docs.flagger.app

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/flagger-icon.png

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/weaveworks.png

|

||||

sources:

|

||||

- https://github.com/weaveworks/flagger

|

||||

maintainers:

|

||||

@@ -14,8 +14,10 @@ maintainers:

|

||||

url: https://github.com/stefanprodan

|

||||

email: stefanprodan@users.noreply.github.com

|

||||

keywords:

|

||||

- canary

|

||||

- flagger

|

||||

- istio

|

||||

- appmesh

|

||||

- linkerd

|

||||

- gloo

|

||||

- gitops

|

||||

- load testing

|

||||

|

||||

@@ -2,7 +2,7 @@ replicaCount: 1

|

||||

|

||||

image:

|

||||

repository: weaveworks/flagger-loadtester

|

||||

tag: 0.11.0

|

||||

tag: 0.12.1

|

||||

pullPolicy: IfNotPresent

|

||||

|

||||

podAnnotations:

|

||||

|

||||

@@ -3,10 +3,12 @@ version: 3.1.0

|

||||

appVersion: 3.1.0

|

||||

name: podinfo

|

||||

engine: gotpl

|

||||

description: Flagger canary deployment demo chart

|

||||

home: https://flagger.app

|

||||

maintainers:

|

||||

- email: stefanprodan@users.noreply.github.com

|

||||

name: stefanprodan

|

||||

description: Flagger canary deployment demo application

|

||||

home: https://docs.flagger.app

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/weaveworks.png

|

||||

sources:

|

||||

- https://github.com/weaveworks/flagger

|

||||

- https://github.com/stefanprodan/podinfo

|

||||

maintainers:

|

||||

- name: stefanprodan

|

||||

url: https://github.com/stefanprodan

|

||||

email: stefanprodan@users.noreply.github.com

|

||||

|

||||

@@ -10,16 +10,6 @@ import (

|

||||

"time"

|

||||

|

||||

"github.com/Masterminds/semver"

|

||||

clientset "github.com/weaveworks/flagger/pkg/client/clientset/versioned"

|

||||

informers "github.com/weaveworks/flagger/pkg/client/informers/externalversions"

|

||||

"github.com/weaveworks/flagger/pkg/controller"

|

||||

"github.com/weaveworks/flagger/pkg/logger"

|

||||

"github.com/weaveworks/flagger/pkg/metrics"

|

||||

"github.com/weaveworks/flagger/pkg/notifier"

|

||||

"github.com/weaveworks/flagger/pkg/router"

|

||||

"github.com/weaveworks/flagger/pkg/server"

|

||||

"github.com/weaveworks/flagger/pkg/signals"

|

||||

"github.com/weaveworks/flagger/pkg/version"

|

||||

"go.uber.org/zap"

|

||||

"k8s.io/apimachinery/pkg/util/uuid"

|

||||

"k8s.io/client-go/kubernetes"

|

||||

@@ -30,6 +20,18 @@ import (

|

||||

"k8s.io/client-go/tools/leaderelection/resourcelock"

|

||||

"k8s.io/client-go/transport"

|

||||

_ "k8s.io/code-generator/cmd/client-gen/generators"

|

||||

|

||||

"github.com/weaveworks/flagger/pkg/canary"

|

||||

clientset "github.com/weaveworks/flagger/pkg/client/clientset/versioned"

|

||||

informers "github.com/weaveworks/flagger/pkg/client/informers/externalversions"

|

||||

"github.com/weaveworks/flagger/pkg/controller"

|

||||

"github.com/weaveworks/flagger/pkg/logger"

|

||||

"github.com/weaveworks/flagger/pkg/metrics"

|

||||

"github.com/weaveworks/flagger/pkg/notifier"

|

||||

"github.com/weaveworks/flagger/pkg/router"

|

||||

"github.com/weaveworks/flagger/pkg/server"

|

||||

"github.com/weaveworks/flagger/pkg/signals"

|

||||

"github.com/weaveworks/flagger/pkg/version"

|

||||

)

|

||||

|

||||

var (

|

||||

@@ -159,7 +161,7 @@ func main() {

|

||||

logger.Infof("Watching namespace %s", namespace)

|

||||

}

|

||||

|

||||

observerFactory, err := metrics.NewFactory(metricsServer, meshProvider, 5*time.Second)

|

||||

observerFactory, err := metrics.NewFactory(metricsServer, 5*time.Second)

|

||||

if err != nil {

|

||||

logger.Fatalf("Error building prometheus client: %s", err.Error())

|

||||

}

|

||||

@@ -178,6 +180,12 @@ func main() {

|

||||

go server.ListenAndServe(port, 3*time.Second, logger, stopCh)

|

||||

|

||||

routerFactory := router.NewFactory(cfg, kubeClient, flaggerClient, ingressAnnotationsPrefix, logger, meshClient)

|

||||

configTracker := canary.ConfigTracker{

|

||||

Logger: logger,

|

||||

KubeClient: kubeClient,

|

||||

FlaggerClient: flaggerClient,

|

||||

}

|

||||

canaryFactory := canary.NewFactory(kubeClient, flaggerClient, configTracker, labels, logger)

|

||||

|

||||

c := controller.NewController(

|

||||

kubeClient,

|

||||

@@ -187,11 +195,11 @@ func main() {

|

||||

controlLoopInterval,

|

||||

logger,

|

||||

notifierClient,

|

||||

canaryFactory,

|

||||

routerFactory,

|

||||

observerFactory,