Mirror of https://github.com/fluxcd/flagger.git (synced 2026-02-17 11:29:59 +00:00)

Compare commits

44 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 1902884b56 | | |
| | 98d2805267 | | |
| | 24a74d3589 | | |
| | 15463456ec | | |
| | 752eceed4b | | |
| | eadce34d6f | | |
| | 11ccf34bbc | | |
| | e308678ed5 | | |
| | cbe72f0aa2 | | |
| | bc84e1c154 | | |
| | 344bd45a0e | | |
| | 72014f736f | | |
| | 0a2949b6ad | | |
| | 2ff695ecfe | | |
| | 8d0b54e059 | | |
| | 121a65fad0 | | |
| | ecaa203091 | | |
| | 6d0e3c6468 | | |
| | c933476fff | | |
| | 1335210cf5 | | |
| | 9d12794600 | | |
| | d57fc7d03e | | |
| | 1f9f6fb55a | | |
| | 948df55de3 | | |
| | 8914f26754 | | |
| | 79b3370892 | | |
| | a233b99f0b | | |
| | 0d94c01678 | | |
| | 00151e92fe | | |
| | f7db0210ea | | |
| | cf3ba35fb9 | | |
| | 177dc824e3 | | |
| | 5f544b90d6 | | |
| | 921ac00383 | | |
| | 7df7218978 | | |
| | e4c6903a01 | | |
| | 027342dc72 | | |
| | e17a747785 | | |
| | e477b37bd0 | | |
| | ad25068375 | | |
| | c92230c109 | | |
| | 9e082d9ee3 | | |
| | cfd610ac55 | | |
| | 82067f13bf | | |

@@ -1,6 +1,6 @@

|

||||

version: 2.1

|

||||

jobs:

|

||||

e2e-testing:

|

||||

e2e-istio-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

@@ -18,11 +18,20 @@ jobs:

|

||||

- run: test/e2e-build.sh supergloo:test.supergloo-system

|

||||

- run: test/e2e-tests.sh canary

|

||||

|

||||

e2e-nginx-testing:

|

||||

machine: true

|

||||

steps:

|

||||

- checkout

|

||||

- run: test/e2e-kind.sh

|

||||

- run: test/e2e-nginx.sh

|

||||

- run: test/e2e-nginx-build.sh

|

||||

- run: test/e2e-nginx-tests.sh

|

||||

|

||||

workflows:

|

||||

version: 2

|

||||

build-and-test:

|

||||

jobs:

|

||||

- e2e-testing:

|

||||

- e2e-istio-testing:

|

||||

filters:

|

||||

branches:

|

||||

ignore:

|

||||

@@ -36,3 +45,10 @@ workflows:

|

||||

- /gh-pages.*/

|

||||

- /docs-.*/

|

||||

- /release-.*/

|

||||

- e2e-nginx-testing:

|

||||

filters:

|

||||

branches:

|

||||

ignore:

|

||||

- /gh-pages.*/

|

||||

- /docs-.*/

|

||||

- /release-.*/

|

||||

33

CHANGELOG.md


@@ -2,6 +2,39 @@

All notable changes to this project are documented in this file.

## 0.13.2 (2019-04-11)

Fixes for Jenkins X deployments (prevent the jx GC from removing the primary instance)

#### Fixes

- Do not copy labels from canary to primary deployment [#178](https://github.com/weaveworks/flagger/pull/178)

#### Improvements

- Add NGINX ingress controller e2e and unit tests [#176](https://github.com/weaveworks/flagger/pull/176)

## 0.13.1 (2019-04-09)

Fixes for custom metrics checks and NGINX Prometheus queries

#### Fixes

- Fix promql queries for custom checks and NGINX [#174](https://github.com/weaveworks/flagger/pull/174)

## 0.13.0 (2019-04-08)

Adds support for [NGINX](https://docs.flagger.app/usage/nginx-progressive-delivery) ingress controller

#### Features

- Add support for nginx ingress controller (weighted traffic and A/B testing) [#170](https://github.com/weaveworks/flagger/pull/170)
- Add Prometheus add-on to Flagger Helm chart for App Mesh and NGINX [79b3370](https://github.com/weaveworks/flagger/pull/170/commits/79b337089294a92961bc8446fd185b38c50a32df)

#### Fixes

- Fix duplicate hosts Istio error when using wildcards [#162](https://github.com/weaveworks/flagger/pull/162)

## 0.12.0 (2019-04-29)

Adds support for [SuperGloo](https://docs.flagger.app/install/flagger-install-with-supergloo)

6

Makefile


@@ -18,6 +18,12 @@ run-appmesh:

|

||||

-slack-url=https://hooks.slack.com/services/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 \

|

||||

-slack-channel="devops-alerts"

|

||||

|

||||

run-nginx:

|

||||

go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=nginx -namespace=nginx \

|

||||

-metrics-server=http://prometheus-weave.istio.weavedx.com \

|

||||

-slack-url=https://hooks.slack.com/services/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 \

|

||||

-slack-channel="devops-alerts"

|

||||

|

||||

build:

|

||||

docker build -t weaveworks/flagger:$(TAG) . -f Dockerfile

|

||||

|

||||

|

||||

23

README.md


@@ -7,7 +7,7 @@

|

||||

[](https://github.com/weaveworks/flagger/releases)

|

||||

|

||||

Flagger is a Kubernetes operator that automates the promotion of canary deployments

|

||||

using Istio or App Mesh routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

using Istio, App Mesh or NGINX routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

The canary analysis can be extended with webhooks for running acceptance tests,

|

||||

load tests or any other custom validation.

|

||||

|

||||

@@ -39,6 +39,7 @@ Flagger documentation can be found at [docs.flagger.app](https://docs.flagger.ap

|

||||

* [Istio canary deployments](https://docs.flagger.app/usage/progressive-delivery)

|

||||

* [Istio A/B testing](https://docs.flagger.app/usage/ab-testing)

|

||||

* [App Mesh canary deployments](https://docs.flagger.app/usage/appmesh-progressive-delivery)

|

||||

* [NGINX ingress controller canary deployments](https://docs.flagger.app/usage/nginx-progressive-delivery)

|

||||

* [Monitoring](https://docs.flagger.app/usage/monitoring)

|

||||

* [Alerting](https://docs.flagger.app/usage/alerting)

|

||||

* Tutorials

|

||||

@@ -153,16 +154,16 @@ For more details on how the canary analysis and promotion works please [read the

|

||||

|

||||

## Features

|

||||

|

||||

| Feature | Istio | App Mesh | SuperGloo |
| -------------------------------------------- | ------------------ | ------------------ |------------------ |
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| A/B testing (headers and cookies filters) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |
| Load testing | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Webhooks (custom acceptance tests) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (Envoy metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request duration check (Envoy metric) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: |
| Custom promql checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Ingress gateway (CORS, retries and timeouts) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: |

|

||||

| Feature | Istio | App Mesh | SuperGloo | NGINX Ingress |
| -------------------------------------------- | ------------------ | ------------------ |------------------ |------------------ |
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| A/B testing (headers and cookies filters) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_check_mark: |
| Load testing | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Webhooks (custom acceptance tests) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_check_mark: |
| Custom promql checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Ingress gateway (CORS, retries and timeouts) | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

|

||||

## Roadmap

|

||||

|

||||

|

||||

@@ -31,6 +31,12 @@ rules:

|

||||

resources:

|

||||

- horizontalpodautoscalers

|

||||

verbs: ["*"]

|

||||

- apiGroups:

|

||||

- "extensions"

|

||||

resources:

|

||||

- ingresses

|

||||

- ingresses/status

|

||||

verbs: ["*"]

|

||||

- apiGroups:

|

||||

- flagger.app

|

||||

resources:

|

||||

|

||||

@@ -69,6 +69,18 @@ spec:

|

||||

type: string

|

||||

name:

|

||||

type: string

|

||||

ingressRef:

|

||||

anyOf:

|

||||

- type: string

|

||||

- type: object

|

||||

required: ['apiVersion', 'kind', 'name']

|

||||

properties:

|

||||

apiVersion:

|

||||

type: string

|

||||

kind:

|

||||

type: string

|

||||

name:

|

||||

type: string

|

||||

service:

|

||||

type: object

|

||||

required: ['port']

|

||||

|

||||

@@ -22,7 +22,7 @@ spec:

|

||||

serviceAccountName: flagger

|

||||

containers:

|

||||

- name: flagger

|

||||

image: weaveworks/flagger:0.12.0

|

||||

image: weaveworks/flagger:0.13.2

|

||||

imagePullPolicy: IfNotPresent

|

||||

ports:

|

||||

- name: http

|

||||

|

||||

68

artifacts/nginx/canary.yaml

Normal file


@@ -0,0 +1,68 @@

|

||||

apiVersion: flagger.app/v1alpha3

|

||||

kind: Canary

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

spec:

|

||||

# deployment reference

|

||||

targetRef:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

# ingress reference

|

||||

ingressRef:

|

||||

apiVersion: extensions/v1beta1

|

||||

kind: Ingress

|

||||

name: podinfo

|

||||

# HPA reference (optional)

|

||||

autoscalerRef:

|

||||

apiVersion: autoscaling/v2beta1

|

||||

kind: HorizontalPodAutoscaler

|

||||

name: podinfo

|

||||

# the maximum time in seconds for the canary deployment

|

||||

# to make progress before it is rolled back (default 600s)

|

||||

progressDeadlineSeconds: 60

|

||||

service:

|

||||

# container port

|

||||

port: 9898

|

||||

canaryAnalysis:

|

||||

# schedule interval (default 60s)

|

||||

interval: 10s

|

||||

# max number of failed metric checks before rollback

|

||||

threshold: 10

|

||||

# max traffic percentage routed to canary

|

||||

# percentage (0-100)

|

||||

maxWeight: 50

|

||||

# canary increment step

|

||||

# percentage (0-100)

|

||||

stepWeight: 5

|

||||

# NGINX Prometheus checks

|

||||

metrics:

|

||||

- name: request-success-rate

|

||||

# minimum req success rate (non 5xx responses)

|

||||

# percentage (0-100)

|

||||

threshold: 99

|

||||

interval: 1m

|

||||

- name: "latency"

|

||||

threshold: 0.5

|

||||

interval: 1m

|

||||

query: |

|

||||

histogram_quantile(0.99,

|

||||

sum(

|

||||

rate(

|

||||

http_request_duration_seconds_bucket{

|

||||

kubernetes_namespace="test",

|

||||

kubernetes_pod_name=~"podinfo-[0-9a-zA-Z]+(-[0-9a-zA-Z]+)"

|

||||

}[1m]

|

||||

)

|

||||

) by (le)

|

||||

)

|

||||

# external checks (optional)

|

||||

webhooks:

|

||||

- name: load-test

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 5s

|

||||

metadata:

|

||||

type: cmd

|

||||

cmd: "hey -z 1m -q 10 -c 2 http://app.example.com/"

|

||||

logCmdOutput: "true"
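The `interval`, `stepWeight`, `maxWeight` and `threshold` values in the canary spec above together bound how long a rollout can take. The following is a rough sketch, assuming the canary weight advances by `stepWeight` once per `interval` until it reaches `maxWeight`, and that a rollback happens after `threshold` failed checks. The function names are hypothetical and are not part of Flagger.

```go
package main

import "fmt"

// minPromotionSeconds estimates the shortest time needed to move the canary
// weight from 0 to maxWeight in increments of stepWeight, assuming one step
// per analysis interval (an assumption, not Flagger's actual scheduler code).
func minPromotionSeconds(intervalSeconds, maxWeight, stepWeight int) int {
	if stepWeight <= 0 {
		return 0 // guard against division by zero for invalid input
	}
	steps := maxWeight / stepWeight
	return steps * intervalSeconds
}

// rollbackSeconds estimates how long failing checks run before a rollback,
// assuming one check per interval and a rollback after threshold failures.
func rollbackSeconds(intervalSeconds, threshold int) int {
	return threshold * intervalSeconds
}

func main() {
	// Values taken from artifacts/nginx/canary.yaml:
	// interval: 10s, maxWeight: 50, stepWeight: 5, threshold: 10
	fmt.Println("minimum promotion time (s):", minPromotionSeconds(10, 50, 5))
	fmt.Println("time until rollback (s):", rollbackSeconds(10, 10))
}
```

With the values from this manifest, both estimates come out at 100 seconds, which is why the example uses a short 10s interval for testing.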

|

||||

69

artifacts/nginx/deployment.yaml

Normal file


@@ -0,0 +1,69 @@

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

labels:

|

||||

app: podinfo

|

||||

spec:

|

||||

replicas: 1

|

||||

strategy:

|

||||

rollingUpdate:

|

||||

maxUnavailable: 0

|

||||

type: RollingUpdate

|

||||

selector:

|

||||

matchLabels:

|

||||

app: podinfo

|

||||

template:

|

||||

metadata:

|

||||

annotations:

|

||||

prometheus.io/scrape: "true"

|

||||

labels:

|

||||

app: podinfo

|

||||

spec:

|

||||

containers:

|

||||

- name: podinfod

|

||||

image: quay.io/stefanprodan/podinfo:1.4.0

|

||||

imagePullPolicy: IfNotPresent

|

||||

ports:

|

||||

- containerPort: 9898

|

||||

name: http

|

||||

protocol: TCP

|

||||

command:

|

||||

- ./podinfo

|

||||

- --port=9898

|

||||

- --level=info

|

||||

- --random-delay=false

|

||||

- --random-error=false

|

||||

env:

|

||||

- name: PODINFO_UI_COLOR

|

||||

value: green

|

||||

livenessProbe:

|

||||

exec:

|

||||

command:

|

||||

- podcli

|

||||

- check

|

||||

- http

|

||||

- localhost:9898/healthz

|

||||

failureThreshold: 3

|

||||

periodSeconds: 10

|

||||

successThreshold: 1

|

||||

timeoutSeconds: 2

|

||||

readinessProbe:

|

||||

exec:

|

||||

command:

|

||||

- podcli

|

||||

- check

|

||||

- http

|

||||

- localhost:9898/readyz

|

||||

failureThreshold: 3

|

||||

periodSeconds: 3

|

||||

successThreshold: 1

|

||||

timeoutSeconds: 2

|

||||

resources:

|

||||

limits:

|

||||

cpu: 1000m

|

||||

memory: 256Mi

|

||||

requests:

|

||||

cpu: 100m

|

||||

memory: 16Mi

|

||||

19

artifacts/nginx/hpa.yaml

Normal file


@@ -0,0 +1,19 @@

|

||||

apiVersion: autoscaling/v2beta1

|

||||

kind: HorizontalPodAutoscaler

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

spec:

|

||||

scaleTargetRef:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

minReplicas: 2

|

||||

maxReplicas: 4

|

||||

metrics:

|

||||

- type: Resource

|

||||

resource:

|

||||

name: cpu

|

||||

# scale up if usage is above

|

||||

# 99% of the requested CPU (100m)

|

||||

targetAverageUtilization: 99
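To make the effect of `targetAverageUtilization: 99` with `minReplicas: 2` and `maxReplicas: 4` concrete, here is a minimal sketch of the replica calculation described in the Kubernetes HPA documentation: the desired count is the current count scaled by the ratio of observed to target utilization, rounded up, then clamped to the configured bounds. The sketch ignores the controller's tolerance band and stabilization behavior, and the function name is hypothetical.

```go
package main

import (
	"fmt"
	"math"
)

// desiredReplicas applies the documented HPA ratio formula:
// ceil(currentReplicas * currentUtilization / targetUtilization),
// clamped to [minReplicas, maxReplicas]. Tolerance and stabilization
// windows used by the real controller are deliberately left out.
func desiredReplicas(current int, currentUtil, targetUtil float64, minReplicas, maxReplicas int) int {
	desired := int(math.Ceil(float64(current) * currentUtil / targetUtil))
	if desired < minReplicas {
		return minReplicas
	}
	if desired > maxReplicas {
		return maxReplicas
	}
	return desired
}

func main() {
	// Two replicas averaging 150% of the requested CPU against a 99% target.
	fmt.Println(desiredReplicas(2, 150, 99, 2, 4))
	// Two replicas averaging 50%: the result is held at minReplicas.
	fmt.Println(desiredReplicas(2, 50, 99, 2, 4))
}
```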

|

||||

17

artifacts/nginx/ingress.yaml

Normal file


@@ -0,0 +1,17 @@

|

||||

apiVersion: extensions/v1beta1

|

||||

kind: Ingress

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

labels:

|

||||

app: podinfo

|

||||

annotations:

|

||||

kubernetes.io/ingress.class: "nginx"

|

||||

spec:

|

||||

rules:

|

||||

- host: app.example.com

|

||||

http:

|

||||

paths:

|

||||

- backend:

|

||||

serviceName: podinfo

|

||||

servicePort: 9898

|

||||

@@ -1,10 +1,10 @@

|

||||

apiVersion: v1

|

||||

name: flagger

|

||||

version: 0.12.0

|

||||

appVersion: 0.12.0

|

||||

version: 0.13.2

|

||||

appVersion: 0.13.2

|

||||

kubeVersion: ">=1.11.0-0"

|

||||

engine: gotpl

|

||||

description: Flagger is a Kubernetes operator that automates the promotion of canary deployments using Istio routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

description: Flagger is a Kubernetes operator that automates the promotion of canary deployments using Istio, App Mesh or NGINX routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

home: https://docs.flagger.app

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/flagger-icon.png

|

||||

sources:

|

||||

|

||||

@@ -45,7 +45,7 @@ The following tables lists the configurable parameters of the Flagger chart and

|

||||

|

||||

Parameter | Description | Default

|

||||

--- | --- | ---

|

||||

`image.repository` | image repository | `quay.io/stefanprodan/flagger`

|

||||

`image.repository` | image repository | `weaveworks/flagger`

|

||||

`image.tag` | image tag | `<VERSION>`

|

||||

`image.pullPolicy` | image pull policy | `IfNotPresent`

|

||||

`metricsServer` | Prometheus URL | `http://prometheus.istio-system:9090`

|

||||

|

||||

@@ -70,6 +70,18 @@ spec:

|

||||

type: string

|

||||

name:

|

||||

type: string

|

||||

ingressRef:

|

||||

anyOf:

|

||||

- type: string

|

||||

- type: object

|

||||

required: ['apiVersion', 'kind', 'name']

|

||||

properties:

|

||||

apiVersion:

|

||||

type: string

|

||||

kind:

|

||||

type: string

|

||||

name:

|

||||

type: string

|

||||

service:

|

||||

type: object

|

||||

required: ['port']

|

||||

|

||||

@@ -38,7 +38,11 @@ spec:

|

||||

{{- if .Values.meshProvider }}

|

||||

- -mesh-provider={{ .Values.meshProvider }}

|

||||

{{- end }}

|

||||

{{- if .Values.prometheus.install }}

|

||||

- -metrics-server=http://{{ template "flagger.fullname" . }}-prometheus:9090

|

||||

{{- else }}

|

||||

- -metrics-server={{ .Values.metricsServer }}

|

||||

{{- end }}

|

||||

{{- if .Values.namespace }}

|

||||

- -namespace={{ .Values.namespace }}

|

||||

{{- end }}
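The conditional added in this hunk picks the bundled Prometheus service when `prometheus.install` is true and falls back to `metricsServer` otherwise. The sketch below reproduces that branch with Go's `text/template` package. It is a simplification: Helm's `{{ template "flagger.fullname" . }}` helper is replaced by a hypothetical `.Fullname` field, and the release name `flagger` is an assumed example value.

```go
package main

import (
	"fmt"
	"strings"
	"text/template"
)

// argsTmpl mirrors the metrics-server branch of the deployment template.
// .Fullname stands in for Helm's "flagger.fullname" helper (an assumption).
const argsTmpl = `{{- if .Values.prometheus.install }}
- -metrics-server=http://{{ .Fullname }}-prometheus:9090
{{- else }}
- -metrics-server={{ .Values.metricsServer }}
{{- end }}`

// renderArgs renders the template for the given values and returns the
// resulting container argument line.
func renderArgs(fullname string, install bool, metricsServer string) (string, error) {
	t, err := template.New("args").Parse(argsTmpl)
	if err != nil {
		return "", err
	}
	data := map[string]interface{}{
		"Fullname": fullname,
		"Values": map[string]interface{}{
			"metricsServer": metricsServer,
			"prometheus":    map[string]interface{}{"install": install},
		},
	}
	var sb strings.Builder
	if err := t.Execute(&sb, data); err != nil {
		return "", err
	}
	return strings.TrimSpace(sb.String()), nil
}

func main() {
	for _, install := range []bool{true, false} {
		out, err := renderArgs("flagger", install, "http://prometheus:9090")
		if err != nil {
			panic(err)
		}
		fmt.Printf("install=%v -> %s\n", install, out)
	}
}
```

Rendering with `helm template` against the real chart remains the authoritative check; this only illustrates which branch wins for each value.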

|

||||

|

||||

292

charts/flagger/templates/prometheus.yaml

Normal file


@@ -0,0 +1,292 @@

|

||||

{{- if .Values.prometheus.install }}

|

||||

apiVersion: rbac.authorization.k8s.io/v1beta1

|

||||

kind: ClusterRole

|

||||

metadata:

|

||||

name: {{ template "flagger.fullname" . }}-prometheus

|

||||

labels:

|

||||

helm.sh/chart: {{ template "flagger.chart" . }}

|

||||

app.kubernetes.io/name: {{ template "flagger.name" . }}

|

||||

app.kubernetes.io/managed-by: {{ .Release.Service }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

rules:

|

||||

- apiGroups: [""]

|

||||

resources:

|

||||

- nodes

|

||||

- services

|

||||

- endpoints

|

||||

- pods

|

||||

- nodes/proxy

|

||||

verbs: ["get", "list", "watch"]

|

||||

- apiGroups: [""]

|

||||

resources:

|

||||

- configmaps

|

||||

verbs: ["get"]

|

||||

- nonResourceURLs: ["/metrics"]

|

||||

verbs: ["get"]

|

||||

---

|

||||

apiVersion: rbac.authorization.k8s.io/v1beta1

|

||||

kind: ClusterRoleBinding

|

||||

metadata:

|

||||

name: {{ template "flagger.fullname" . }}-prometheus

|

||||

labels:

|

||||

helm.sh/chart: {{ template "flagger.chart" . }}

|

||||

app.kubernetes.io/name: {{ template "flagger.name" . }}

|

||||

app.kubernetes.io/managed-by: {{ .Release.Service }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

roleRef:

|

||||

apiGroup: rbac.authorization.k8s.io

|

||||

kind: ClusterRole

|

||||

name: {{ template "flagger.fullname" . }}-prometheus

|

||||

subjects:

|

||||

- kind: ServiceAccount

|

||||

name: {{ template "flagger.serviceAccountName" . }}-prometheus

|

||||

namespace: {{ .Release.Namespace }}

|

||||

---

|

||||

apiVersion: v1

|

||||

kind: ServiceAccount

|

||||

metadata:

|

||||

name: {{ template "flagger.serviceAccountName" . }}-prometheus

|

||||

namespace: {{ .Release.Namespace }}

|

||||

labels:

|

||||

helm.sh/chart: {{ template "flagger.chart" . }}

|

||||

app.kubernetes.io/name: {{ template "flagger.name" . }}

|

||||

app.kubernetes.io/managed-by: {{ .Release.Service }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

---

|

||||

apiVersion: v1

|

||||

kind: ConfigMap

|

||||

metadata:

|

||||

name: {{ template "flagger.fullname" . }}-prometheus

|

||||

namespace: {{ .Release.Namespace }}

|

||||

labels:

|

||||

helm.sh/chart: {{ template "flagger.chart" . }}

|

||||

app.kubernetes.io/name: {{ template "flagger.name" . }}

|

||||

app.kubernetes.io/managed-by: {{ .Release.Service }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

data:

|

||||

prometheus.yml: |-

|

||||

global:

|

||||

scrape_interval: 5s

|

||||

scrape_configs:

|

||||

|

||||

# Scrape config for AppMesh Envoy sidecar

|

||||

- job_name: 'appmesh-envoy'

|

||||

metrics_path: /stats/prometheus

|

||||

kubernetes_sd_configs:

|

||||

- role: pod

|

||||

|

||||

relabel_configs:

|

||||

- source_labels: [__meta_kubernetes_pod_container_name]

|

||||

action: keep

|

||||

regex: '^envoy$'

|

||||

- source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]

|

||||

action: replace

|

||||

regex: ([^:]+)(?::\d+)?;(\d+)

|

||||

replacement: ${1}:9901

|

||||

target_label: __address__

|

||||

- action: labelmap

|

||||

regex: __meta_kubernetes_pod_label_(.+)

|

||||

- source_labels: [__meta_kubernetes_namespace]

|

||||

action: replace

|

||||

target_label: kubernetes_namespace

|

||||

- source_labels: [__meta_kubernetes_pod_name]

|

||||

action: replace

|

||||

target_label: kubernetes_pod_name

|

||||

|

||||

# Exclude high cardinality metrics

|

||||

metric_relabel_configs:

|

||||

- source_labels: [ cluster_name ]

|

||||

regex: '(outbound|inbound|prometheus_stats).*'

|

||||

action: drop

|

||||

- source_labels: [ tcp_prefix ]

|

||||

regex: '(outbound|inbound|prometheus_stats).*'

|

||||

action: drop

|

||||

- source_labels: [ listener_address ]

|

||||

regex: '(.+)'

|

||||

action: drop

|

||||

- source_labels: [ http_conn_manager_listener_prefix ]

|

||||

regex: '(.+)'

|

||||

action: drop

|

||||

- source_labels: [ http_conn_manager_prefix ]

|

||||

regex: '(.+)'

|

||||

action: drop

|

||||

- source_labels: [ __name__ ]

|

||||

regex: 'envoy_tls.*'

|

||||

action: drop

|

||||

- source_labels: [ __name__ ]

|

||||

regex: 'envoy_tcp_downstream.*'

|

||||

action: drop

|

||||

- source_labels: [ __name__ ]

|

||||

regex: 'envoy_http_(stats|admin).*'

|

||||

action: drop

|

||||

- source_labels: [ __name__ ]

|

||||

regex: 'envoy_cluster_(lb|retry|bind|internal|max|original).*'

|

||||

action: drop

|

||||

|

||||

# Scrape config for API servers

|

||||

- job_name: 'kubernetes-apiservers'

|

||||

kubernetes_sd_configs:

|

||||

- role: endpoints

|

||||

namespaces:

|

||||

names:

|

||||

- default

|

||||

scheme: https

|

||||

tls_config:

|

||||

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

|

||||

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

|

||||

relabel_configs:

|

||||

- source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]

|

||||

action: keep

|

||||

regex: kubernetes;https

|

||||

|

||||

# Scrape config for nodes

|

||||

- job_name: 'kubernetes-nodes'

|

||||

scheme: https

|

||||

tls_config:

|

||||

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

|

||||

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

|

||||

kubernetes_sd_configs:

|

||||

- role: node

|

||||

relabel_configs:

|

||||

- action: labelmap

|

||||

regex: __meta_kubernetes_node_label_(.+)

|

||||

- target_label: __address__

|

||||

replacement: kubernetes.default.svc:443

|

||||

- source_labels: [__meta_kubernetes_node_name]

|

||||

regex: (.+)

|

||||

target_label: __metrics_path__

|

||||

replacement: /api/v1/nodes/${1}/proxy/metrics

|

||||

|

||||

# scrape config for cAdvisor

|

||||

- job_name: 'kubernetes-cadvisor'

|

||||

scheme: https

|

||||

tls_config:

|

||||

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

|

||||

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

|

||||

kubernetes_sd_configs:

|

||||

- role: node

|

||||

relabel_configs:

|

||||

- action: labelmap

|

||||

regex: __meta_kubernetes_node_label_(.+)

|

||||

- target_label: __address__

|

||||

replacement: kubernetes.default.svc:443

|

||||

- source_labels: [__meta_kubernetes_node_name]

|

||||

regex: (.+)

|

||||

target_label: __metrics_path__

|

||||

replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

|

||||

|

||||

# scrape config for pods

|

||||

- job_name: kubernetes-pods

|

||||

kubernetes_sd_configs:

|

||||

- role: pod

|

||||

relabel_configs:

|

||||

- action: keep

|

||||

regex: true

|

||||

source_labels:

|

||||

- __meta_kubernetes_pod_annotation_prometheus_io_scrape

|

||||

- source_labels: [ __address__ ]

|

||||

regex: '.*9901.*'

|

||||

action: drop

|

||||

- action: replace

|

||||

regex: (.+)

|

||||

source_labels:

|

||||

- __meta_kubernetes_pod_annotation_prometheus_io_path

|

||||

target_label: __metrics_path__

|

||||

- action: replace

|

||||

regex: ([^:]+)(?::\d+)?;(\d+)

|

||||

replacement: $1:$2

|

||||

source_labels:

|

||||

- __address__

|

||||

- __meta_kubernetes_pod_annotation_prometheus_io_port

|

||||

target_label: __address__

|

||||

- action: labelmap

|

||||

regex: __meta_kubernetes_pod_label_(.+)

|

||||

- action: replace

|

||||

source_labels:

|

||||

- __meta_kubernetes_namespace

|

||||

target_label: kubernetes_namespace

|

||||

- action: replace

|

||||

source_labels:

|

||||

- __meta_kubernetes_pod_name

|

||||

target_label: kubernetes_pod_name

|

||||

---

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

metadata:

|

||||

name: {{ template "flagger.fullname" . }}-prometheus

|

||||

namespace: {{ .Release.Namespace }}

|

||||

labels:

|

||||

helm.sh/chart: {{ template "flagger.chart" . }}

|

||||

app.kubernetes.io/name: {{ template "flagger.name" . }}

|

||||

app.kubernetes.io/managed-by: {{ .Release.Service }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

spec:

|

||||

replicas: 1

|

||||

selector:

|

||||

matchLabels:

|

||||

app.kubernetes.io/name: {{ template "flagger.name" . }}-prometheus

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

template:

|

||||

metadata:

|

||||

labels:

|

||||

app.kubernetes.io/name: {{ template "flagger.name" . }}-prometheus

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

annotations:

|

||||

appmesh.k8s.aws/sidecarInjectorWebhook: disabled

|

||||

sidecar.istio.io/inject: "false"

|

||||

spec:

|

||||

serviceAccountName: {{ template "flagger.serviceAccountName" . }}-prometheus

|

||||

containers:

|

||||

- name: prometheus

|

||||

image: "docker.io/prom/prometheus:v2.7.1"

|

||||

imagePullPolicy: IfNotPresent

|

||||

args:

|

||||

- '--storage.tsdb.retention=6h'

|

||||

- '--config.file=/etc/prometheus/prometheus.yml'

|

||||

ports:

|

||||

- containerPort: 9090

|

||||

name: http

|

||||

livenessProbe:

|

||||

httpGet:

|

||||

path: /-/healthy

|

||||

port: 9090

|

||||

readinessProbe:

|

||||

httpGet:

|

||||

path: /-/ready

|

||||

port: 9090

|

||||

resources:

|

||||

requests:

|

||||

cpu: 10m

|

||||

memory: 128Mi

|

||||

volumeMounts:

|

||||

- name: config-volume

|

||||

mountPath: /etc/prometheus

|

||||

- name: data-volume

|

||||

mountPath: /prometheus/data

|

||||

|

||||

volumes:

|

||||

- name: config-volume

|

||||

configMap:

|

||||

name: {{ template "flagger.fullname" . }}-prometheus

|

||||

- name: data-volume

|

||||

emptyDir: {}

|

||||

---

|

||||

apiVersion: v1

|

||||

kind: Service

|

||||

metadata:

|

||||

name: {{ template "flagger.fullname" . }}-prometheus

|

||||

namespace: {{ .Release.Namespace }}

|

||||

labels:

|

||||

helm.sh/chart: {{ template "flagger.chart" . }}

|

||||

app.kubernetes.io/name: {{ template "flagger.name" . }}

|

||||

app.kubernetes.io/managed-by: {{ .Release.Service }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

spec:

|

||||

selector:

|

||||

app.kubernetes.io/name: {{ template "flagger.name" . }}-prometheus

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

ports:

|

||||

- name: http

|

||||

protocol: TCP

|

||||

port: 9090

|

||||

{{- end }}
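The `kubernetes-pods` job in the template above rewrites `__address__` using the regex `([^:]+)(?::\d+)?;(\d+)` and the replacement `$1:$2`. As a minimal sketch of what that rule does, the Go program below applies the same pattern to the two source labels joined with `;`, which is how Prometheus concatenates `source_labels` by default. The pattern is wrapped in `^(?:...)$` because Prometheus anchors relabel regexes; the function name and sample addresses are hypothetical.

```go
package main

import (
	"fmt"
	"regexp"
)

// addressRule is the relabel regex from the kubernetes-pods job, fully
// anchored the way Prometheus anchors relabel patterns.
var addressRule = regexp.MustCompile(`^(?:([^:]+)(?::\d+)?;(\d+))$`)

// rewriteAddress joins the pod address and the prometheus.io/port annotation
// with ";" and applies the replacement "$1:$2". When the pattern does not
// match (for example, no port annotation), the address is left unchanged,
// matching the behavior of a "replace" action.
func rewriteAddress(address, portAnnotation string) string {
	joined := address + ";" + portAnnotation
	if !addressRule.MatchString(joined) {
		return address
	}
	return addressRule.ReplaceAllString(joined, "${1}:${2}")
}

func main() {
	fmt.Println(rewriteAddress("10.0.0.5:8080", "9898")) // port replaced by the annotation
	fmt.Println(rewriteAddress("10.0.0.5", "9898"))      // port appended
	fmt.Println(rewriteAddress("10.0.0.5:8080", ""))     // no annotation: unchanged
}
```

The App Mesh Envoy job uses the same pattern but a fixed replacement of `${1}:9901`, so every matching target is scraped on the Envoy admin port.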

|

||||

@@ -27,6 +27,12 @@ rules:

|

||||

resources:

|

||||

- horizontalpodautoscalers

|

||||

verbs: ["*"]

|

||||

- apiGroups:

|

||||

- "extensions"

|

||||

resources:

|

||||

- ingresses

|

||||

- ingresses/status

|

||||

verbs: ["*"]

|

||||

- apiGroups:

|

||||

- flagger.app

|

||||

resources:

|

||||

|

||||

@@ -2,12 +2,12 @@

|

||||

|

||||

image:

|

||||

repository: weaveworks/flagger

|

||||

tag: 0.12.0

|

||||

tag: 0.13.2

|

||||

pullPolicy: IfNotPresent

|

||||

|

||||

metricsServer: "http://prometheus:9090"

|

||||

|

||||

# accepted values are istio or appmesh (defaults to istio)

|

||||

# accepted values are istio, appmesh, nginx or supergloo:mesh.namespace (defaults to istio)

|

||||

meshProvider: ""

|

||||

|

||||

# single namespace restriction

|

||||

@@ -49,3 +49,7 @@ nodeSelector: {}

|

||||

tolerations: []

|

||||

|

||||

affinity: {}

|

||||

|

||||

prometheus:

|

||||

# to be used with AppMesh or nginx ingress

|

||||

install: false

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

apiVersion: v1

|

||||

name: grafana

|

||||

version: 1.1.0

|

||||

version: 1.2.0

|

||||

appVersion: 5.4.3

|

||||

description: Grafana dashboards for monitoring Flagger canary deployments

|

||||

icon: https://raw.githubusercontent.com/weaveworks/flagger/master/docs/logo/flagger-icon.png

|

||||

|

||||

@@ -1614,9 +1614,9 @@

|

||||

"multi": false,

|

||||

"name": "primary",

|

||||

"options": [],

|

||||

"query": "query_result(sum(istio_requests_total{destination_workload_namespace=~\"$namespace\"}) by (destination_service_name))",

|

||||

"query": "query_result(sum(istio_requests_total{destination_workload_namespace=~\"$namespace\"}) by (destination_workload))",

|

||||

"refresh": 1,

|

||||

"regex": "/.*destination_service_name=\"([^\"]*).*/",

|

||||

"regex": "/.*destination_workload=\"([^\"]*).*/",

|

||||

"skipUrlSync": false,

|

||||

"sort": 1,

|

||||

"tagValuesQuery": "",

|

||||

@@ -1636,9 +1636,9 @@

|

||||

"multi": false,

|

||||

"name": "canary",

|

||||

"options": [],

|

||||

"query": "query_result(sum(istio_requests_total{destination_workload_namespace=~\"$namespace\"}) by (destination_service_name))",

|

||||

"query": "query_result(sum(istio_requests_total{destination_workload_namespace=~\"$namespace\"}) by (destination_workload))",

|

||||

"refresh": 1,

|

||||

"regex": "/.*destination_service_name=\"([^\"]*).*/",

|

||||

"regex": "/.*destination_workload=\"([^\"]*).*/",

|

||||

"skipUrlSync": false,

|

||||

"sort": 1,

|

||||

"tagValuesQuery": "",

|

||||

|

||||

@@ -99,7 +99,7 @@ func main() {

|

||||

|

||||

canaryInformer := flaggerInformerFactory.Flagger().V1alpha3().Canaries()

|

||||

|

||||

logger.Infof("Starting flagger version %s revision %s", version.VERSION, version.REVISION)

|

||||

logger.Infof("Starting flagger version %s revision %s mesh provider %s", version.VERSION, version.REVISION, meshProvider)

|

||||

|

||||

ver, err := kubeClient.Discovery().ServerVersion()

|

||||

if err != nil {

|

||||

|

||||

BIN

docs/diagrams/flagger-gitops-istio.png

Normal file


Binary file not shown.

Size after change: 35 KiB

BIN

docs/diagrams/flagger-nginx-overview.png

Normal file


Binary file not shown.

Size after change: 40 KiB

@@ -5,7 +5,7 @@ description: Flagger is a progressive delivery Kubernetes operator

|

||||

# Introduction

|

||||

|

||||

[Flagger](https://github.com/weaveworks/flagger) is a **Kubernetes** operator that automates the promotion of canary

|

||||

deployments using **Istio** or **App Mesh** routing for traffic shifting and **Prometheus** metrics for canary analysis.

|

||||

deployments using **Istio**, **App Mesh** or **NGINX** routing for traffic shifting and **Prometheus** metrics for canary analysis.

|

||||

The canary analysis can be extended with webhooks for running

|

||||

system integration/acceptance tests, load tests, or any other custom validation.

|

||||

|

||||

|

||||

@@ -15,6 +15,7 @@

|

||||

* [Istio Canary Deployments](usage/progressive-delivery.md)

|

||||

* [Istio A/B Testing](usage/ab-testing.md)

|

||||

* [App Mesh Canary Deployments](usage/appmesh-progressive-delivery.md)

|

||||

* [NGINX Canary Deployments](usage/nginx-progressive-delivery.md)

|

||||

* [Monitoring](usage/monitoring.md)

|

||||

* [Alerting](usage/alerting.md)

|

||||

|

||||

|

||||

@@ -689,7 +689,7 @@ webhooks:

|

||||

|

||||

When the canary analysis starts, Flagger will call the webhooks and the load tester will run the `hey` commands

|

||||

in the background, if they are not already running. This will ensure that during the

|

||||

analysis, the `podinfo.test` virtual service will receive a steady steam of GET and POST requests.

|

||||

analysis, the `podinfo.test` virtual service will receive a steady stream of GET and POST requests.

|

||||

|

||||

If your workload is exposed outside the mesh with the Istio Gateway and TLS you can point `hey` to the

|

||||

public URL and use HTTP2.

|

||||

|

||||

@@ -125,19 +125,6 @@ Status:

|

||||

Type: MeshActive

|

||||

```

|

||||

|

||||

### Install Prometheus

|

||||

|

||||

In order to collect the App Mesh metrics that Flagger needs to run the canary analysis,

|

||||

you'll need to set up a Prometheus instance to scrape the Envoy sidecars.

|

||||

|

||||

Deploy Prometheus in the `appmesh-system` namespace:

|

||||

|

||||

```bash

|

||||

REPO=https://raw.githubusercontent.com/weaveworks/flagger/master

|

||||

|

||||

kubectl apply -f ${REPO}/artifacts/eks/appmesh-prometheus.yaml

|

||||

```

|

||||

|

||||

### Install Flagger and Grafana

|

||||

|

||||

Add Flagger Helm repository:

|

||||

@@ -146,16 +133,17 @@ Add Flagger Helm repository:

|

||||

helm repo add flagger https://flagger.app

|

||||

```

|

||||

|

||||

Deploy Flagger in the _**appmesh-system**_ namespace:

|

||||

Deploy Flagger and Prometheus in the _**appmesh-system**_ namespace:

|

||||

|

||||

```bash

|

||||

helm upgrade -i flagger flagger/flagger \

|

||||

--namespace=appmesh-system \

|

||||

--set meshProvider=appmesh \

|

||||

--set metricsServer=http://prometheus.appmesh-system:9090

|

||||

--set prometheus.install=true

|

||||

```

|

||||

|

||||

You can install Flagger in any namespace as long as it can talk to the Istio Prometheus service on port 9090.

|

||||

In order to collect the App Mesh metrics that Flagger needs to run the canary analysis,

|

||||

you'll need to set up a Prometheus instance to scrape the Envoy sidecars.

|

||||

|

||||

You can enable **Slack** notifications with:

|

||||

|

||||

|

||||

@@ -2,37 +2,61 @@

|

||||

|

||||

This guide walks you through setting up Flagger on a Kubernetes cluster using [SuperGloo](https://github.com/solo-io/supergloo).

|

||||

|

||||

SuperGloo by [Solo.io](https://solo.io) is an opinionated abstraction layer that will simplify the installation, management, and operation of your service mesh.

|

||||

It supports running multiple ingress with multiple mesh (Istio, App Mesh, Consul Connect and Linkerd 2) in the same cluster.

|

||||

SuperGloo by [Solo.io](https://solo.io) is an opinionated abstraction layer that simplifies the installation, management, and operation of your service mesh.

|

||||

It supports running multiple ingresses with multiple meshes (Istio, App Mesh, Consul Connect and Linkerd 2) in the same cluster.

|

||||

|

||||

### Prerequisites

|

||||

|

||||

Flagger requires a Kubernetes cluster **v1.11** or newer with the following admission controllers enabled:

|

||||

|

||||

* MutatingAdmissionWebhook

|

||||

* ValidatingAdmissionWebhook

|

||||

* ValidatingAdmissionWebhook

|

||||

|

||||

### Install Istio with SuperGloo

|

||||

|

||||

Download SuperGloo CLI and add it to your path:

|

||||

#### Install SuperGloo command line interface helper

|

||||

|

||||

SuperGloo includes a command line helper (CLI) that makes operation of SuperGloo easier.

|

||||

The CLI is not required for SuperGloo to function correctly.

|

||||

|

||||

If you use the [Homebrew](https://brew.sh) package manager, run the following

|

||||

commands to install the SuperGloo CLI.

|

||||

|

||||

```bash

|

||||

brew tap solo-io/tap

|

||||

brew install solo-io/tap/supergloo

|

||||

```

|

||||

|

||||

Or you can download SuperGloo CLI and add it to your path:

|

||||

|

||||

```bash

|

||||

curl -sL https://run.solo.io/supergloo/install | sh

|

||||

export PATH=$HOME/.supergloo/bin:$PATH

|

||||

```

|

||||

|

||||

#### Install SuperGloo controller

|

||||

|

||||

Deploy the SuperGloo controller in the `supergloo-system` namespace:

|

||||

|

||||

```bash

|

||||

supergloo init

|

||||

```

|

||||

|

||||

This is equivalent to installing SuperGloo using its Helm chart:

|

||||

|

||||

```bash

|

||||

helm repo add supergloo http://storage.googleapis.com/supergloo-helm

|

||||

helm upgrade --install supergloo supergloo/supergloo --namespace supergloo-system

|

||||

```

|

||||

|

||||

#### Install Istio using SuperGloo

|

||||

|

||||

Create the `istio-system` namespace and install Istio with traffic management, telemetry and Prometheus enabled:

|

||||

|

||||

```bash

|

||||

ISTIO_VER="1.0.6"

|

||||

|

||||

kubectl create ns istio-system

|

||||

kubectl create namespace istio-system

|

||||

|

||||

supergloo install istio --name istio \

|

||||

--namespace=supergloo-system \

|

||||

@@ -40,9 +64,34 @@ supergloo install istio --name istio \

|

||||

--installation-namespace=istio-system \

|

||||

--mtls=false \

|

||||

--prometheus=true \

|

||||

--version ${ISTIO_VER}

|

||||

--version=${ISTIO_VER}

|

||||

```

|

||||

|

||||

This creates a Kubernetes custom resource (CR) like the following:

|

||||

|

||||

```yaml

|

||||

apiVersion: supergloo.solo.io/v1

|

||||

kind: Install

|

||||

metadata:

|

||||

name: istio

|

||||

namespace: supergloo-system

|

||||

spec:

|

||||

installationNamespace: istio-system

|

||||

mesh:

|

||||

installedMesh:

|

||||

name: istio

|

||||

namespace: supergloo-system

|

||||

istioMesh:

|

||||

enableAutoInject: true

|

||||

enableMtls: false

|

||||

installGrafana: false

|

||||

installJaeger: false

|

||||

installPrometheus: true

|

||||

istioVersion: 1.0.6

|

||||

```

|

||||

|

||||

#### Allow Flagger to manipulate SuperGloo

|

||||

|

||||

Create a cluster role binding so that Flagger can manipulate SuperGloo custom resources:

|

||||

|

||||

```bash

|

||||

@@ -54,8 +103,8 @@ kubectl create clusterrolebinding flagger-supergloo \

|

||||

Wait for the Istio control plane to become available:

|

||||

|

||||

```bash

|

||||

kubectl -n istio-system rollout status deployment/istio-sidecar-injector

|

||||

kubectl -n istio-system rollout status deployment/prometheus

|

||||

kubectl --namespace istio-system rollout status deployment/istio-sidecar-injector

|

||||

kubectl --namespace istio-system rollout status deployment/prometheus

|

||||

```

|

||||

|

||||

### Install Flagger

|

||||

@@ -106,9 +155,9 @@ You can access Grafana using port forwarding:

|

||||

kubectl -n istio-system port-forward svc/flagger-grafana 3000:80

|

||||

```

|

||||

|

||||

### Install Load Tester

|

||||

### Install Load Tester

|

||||

|

||||

Flagger comes with an optional load testing service that generates traffic

|

||||

Flagger comes with an optional load testing service that generates traffic

|

||||

during canary analysis when configured as a webhook.

|

||||

|

||||

Deploy the load test runner with Helm:

|

||||

|

||||

421

docs/gitbook/usage/nginx-progressive-delivery.md

Normal file

@@ -0,0 +1,421 @@

|

||||

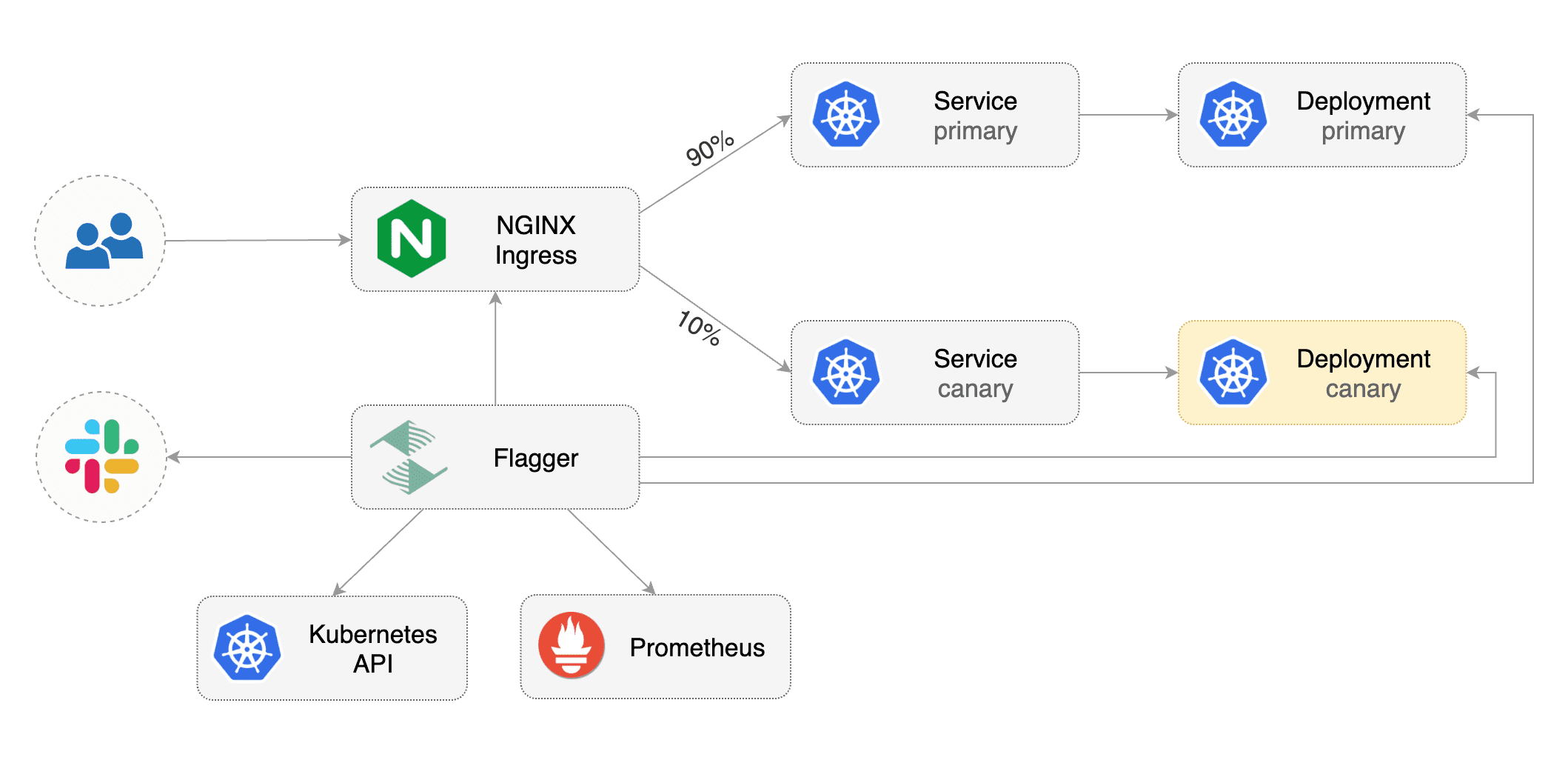

# NGINX Ingress Controller Canary Deployments

|

||||

|

||||

This guide shows you how to use the NGINX ingress controller and Flagger to automate canary deployments and A/B testing.

|

||||

|

||||

|

||||

|

||||

### Prerequisites

|

||||

|

||||

Flagger requires a Kubernetes cluster **v1.11** or newer and NGINX ingress **0.24** or newer.

|

||||

|

||||

Install NGINX with Helm:

|

||||

|

||||

```bash

|

||||

helm upgrade -i nginx-ingress stable/nginx-ingress \

|

||||

--namespace ingress-nginx \

|

||||

--set controller.stats.enabled=true \

|

||||

--set controller.metrics.enabled=true \

|

||||

--set controller.podAnnotations."prometheus\.io/scrape"=true \

|

||||

--set controller.podAnnotations."prometheus\.io/port"=10254

|

||||

```

|

||||

|

||||

Install Flagger and the Prometheus add-on in the same namespace as NGINX:

|

||||

|

||||

```bash

|

||||

helm repo add flagger https://flagger.app

|

||||

|

||||

helm upgrade -i flagger flagger/flagger \

|

||||

--namespace ingress-nginx \

|

||||

--set prometheus.install=true \

|

||||

--set meshProvider=nginx

|

||||

```

|

||||

|

||||

Optionally you can enable Slack notifications:

|

||||

|

||||

```bash

|

||||

helm upgrade -i flagger flagger/flagger \

|

||||

--reuse-values \

|

||||

--namespace ingress-nginx \

|

||||

--set slack.url=https://hooks.slack.com/services/YOUR/SLACK/WEBHOOK \

|

||||

--set slack.channel=general \

|

||||

--set slack.user=flagger

|

||||

```

|

||||

|

||||

### Bootstrap

|

||||

|

||||

Flagger takes a Kubernetes deployment and optionally a horizontal pod autoscaler (HPA),

|

||||

then creates a series of objects (Kubernetes deployments, ClusterIP services and canary ingress).

|

||||

These objects expose the application outside the cluster and drive the canary analysis and promotion.

|

||||

|

||||

Create a test namespace:

|

||||

|

||||

```bash

|

||||

kubectl create ns test

|

||||

```

|

||||

|

||||

Create a deployment and a horizontal pod autoscaler:

|

||||

|

||||

```bash

|

||||

REPO=https://raw.githubusercontent.com/weaveworks/flagger/master
kubectl apply -f ${REPO}/artifacts/nginx/deployment.yaml

|

||||

kubectl apply -f ${REPO}/artifacts/nginx/hpa.yaml

|

||||

```

|

||||

|

||||

Deploy the load testing service to generate traffic during the canary analysis:

|

||||

|

||||

```bash

|

||||

helm upgrade -i flagger-loadtester flagger/loadtester \

|

||||

--namespace=test

|

||||

```

|

||||

|

||||

Create an ingress definition (replace `app.example.com` with your own domain):

|

||||

|

||||

```yaml

|

||||

apiVersion: extensions/v1beta1

|

||||

kind: Ingress

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

labels:

|

||||

app: podinfo

|

||||

annotations:

|

||||

kubernetes.io/ingress.class: "nginx"

|

||||

spec:

|

||||

rules:

|

||||

- host: app.example.com

|

||||

http:

|

||||

paths:

|

||||

- backend:

|

||||

serviceName: podinfo

|

||||

servicePort: 9898

|

||||

```

|

||||

|

||||

Save the above resource as podinfo-ingress.yaml and then apply it:

|

||||

|

||||

```bash

|

||||

kubectl apply -f ./podinfo-ingress.yaml

|

||||

```

|

||||

|

||||

Create a canary custom resource (replace `app.example.com` with your own domain):

|

||||

|

||||

```yaml

|

||||

apiVersion: flagger.app/v1alpha3

|

||||

kind: Canary

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

spec:

|

||||

# deployment reference

|

||||

targetRef:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

# ingress reference

|

||||

ingressRef:

|

||||

apiVersion: extensions/v1beta1

|

||||

kind: Ingress

|

||||

name: podinfo

|

||||

# HPA reference (optional)

|

||||

autoscalerRef:

|

||||

apiVersion: autoscaling/v2beta1

|

||||

kind: HorizontalPodAutoscaler

|

||||

name: podinfo

|

||||

# the maximum time in seconds for the canary deployment

|

||||

# to make progress before it is rolled back (default 600s)

|

||||

progressDeadlineSeconds: 60

|

||||

service:

|

||||

# container port

|

||||

port: 9898

|

||||

canaryAnalysis:

|

||||

# schedule interval (default 60s)

|

||||

interval: 10s

|

||||

# max number of failed metric checks before rollback

|

||||

threshold: 10

|

||||

# max traffic percentage routed to canary

|

||||

# percentage (0-100)

|

||||

maxWeight: 50

|

||||

# canary increment step

|

||||

# percentage (0-100)

|

||||

stepWeight: 5

|

||||

# NGINX Prometheus checks

|

||||

metrics:

|

||||

- name: request-success-rate

|

||||

# minimum req success rate (non 5xx responses)

|

||||

# percentage (0-100)

|

||||

threshold: 99

|

||||

interval: 1m

|

||||

# load testing (optional)

|

||||

webhooks:

|

||||

- name: load-test

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 5s

|

||||

metadata:

|

||||

type: cmd

|

||||

cmd: "hey -z 1m -q 10 -c 2 http://app.example.com/"

|

||||

```

|

||||

|

||||

Save the above resource as podinfo-canary.yaml and then apply it:

|

||||

|

||||

```bash

|

||||

kubectl apply -f ./podinfo-canary.yaml

|

||||

```

|

||||

|

||||

After a couple of seconds Flagger will create the canary objects:

|

||||

|

||||

```bash

|

||||

# applied

|

||||

deployment.apps/podinfo

|

||||

horizontalpodautoscaler.autoscaling/podinfo

|

||||

ingresses.extensions/podinfo

|

||||

canary.flagger.app/podinfo

|

||||

|

||||

# generated

|

||||

deployment.apps/podinfo-primary

|

||||

horizontalpodautoscaler.autoscaling/podinfo-primary

|

||||

service/podinfo

|

||||

service/podinfo-canary

|

||||

service/podinfo-primary

|

||||

ingresses.extensions/podinfo-canary

|

||||

```

|

||||

|

||||

### Automated canary promotion

|

||||

|

||||

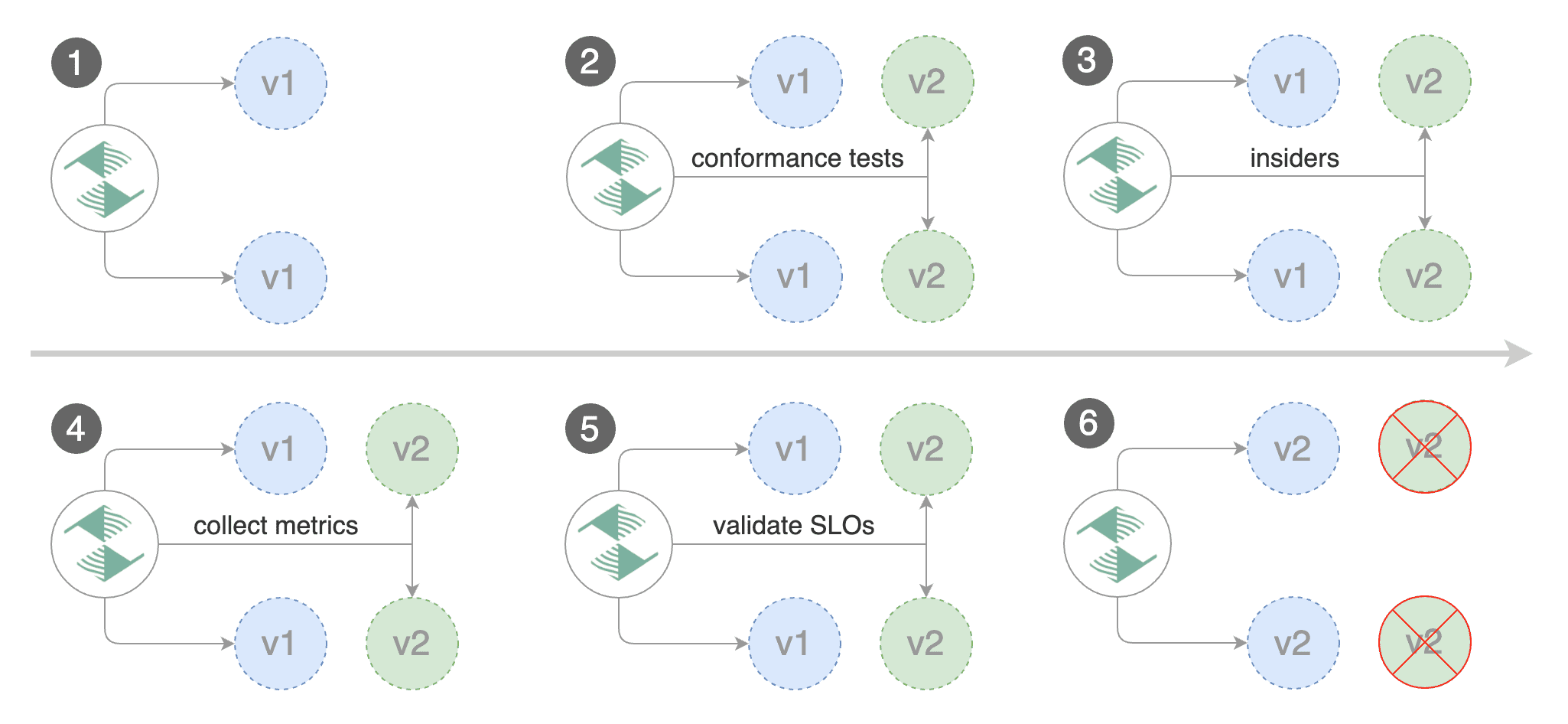

Flagger implements a control loop that gradually shifts traffic to the canary while measuring key performance indicators

|

||||

such as the HTTP request success rate, the average request duration and pod health.

|

||||

Based on analysis of the KPIs a canary is promoted or aborted, and the analysis result is published to Slack.
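As a rough illustration of the traffic shifting schedule, the sketch below lists the weights a canary passes through for a given `stepWeight` and `maxWeight`, and estimates the minimum time to promotion as `interval * (maxWeight / stepWeight)`. The helper names `canary_weights` and `min_promotion_seconds` are hypothetical and exist only for this example; they are not part of Flagger or kubectl.

```bash
# Illustrative only: these helpers are hypothetical and not part of Flagger.

# Print the traffic weights the canary passes through, one per analysis interval.
canary_weights() {
  local step="$1" max="$2" weight out=""
  weight="$step"
  while [ "$weight" -le "$max" ]; do
    out="${out:+$out }$weight"
    weight=$((weight + step))
  done
  echo "$out"
}

# Lower bound on the time to promotion: one interval per weight step.
min_promotion_seconds() {
  local interval="$1" step="$2" max="$3"
  echo $((interval * (max / step)))
}

canary_weights 5 50            # stepWeight: 5, maxWeight: 50
min_promotion_seconds 10 5 50  # interval: 10s
```

With the values from the canary resource above (`interval: 10s`, `stepWeight: 5`, `maxWeight: 50`) this prints ten weight steps and a lower bound of 100 seconds. Real rollouts take longer because Flagger also waits for the deployments to become ready.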

|

||||

|

||||

|

||||

|

||||

Trigger a canary deployment by updating the container image:

|

||||

|

||||

```bash

|

||||

kubectl -n test set image deployment/podinfo \

|

||||

podinfod=quay.io/stefanprodan/podinfo:1.4.1

|

||||

```

|

||||

|

||||

Flagger detects that the deployment revision changed and starts a new rollout:

|

||||

|

||||

```text

|

||||

kubectl -n test describe canary/podinfo

|

||||

|

||||

Status:

|

||||

Canary Weight: 0

|

||||

Failed Checks: 0

|

||||

Phase: Succeeded

|

||||

Events:

|

||||

Type Reason Age From Message

|

||||

---- ------ ---- ---- -------

|

||||

Normal Synced 3m flagger New revision detected podinfo.test

|

||||

Normal Synced 3m flagger Scaling up podinfo.test

|

||||

Warning Synced 3m flagger Waiting for podinfo.test rollout to finish: 0 of 1 updated replicas are available

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 5

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 10

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 15

|

||||

Normal Synced 2m flagger Advance podinfo.test canary weight 20

|

||||

Normal Synced 2m flagger Advance podinfo.test canary weight 25

|

||||

Normal Synced 1m flagger Advance podinfo.test canary weight 30

|

||||

Normal Synced 1m flagger Advance podinfo.test canary weight 35

|

||||

Normal Synced 55s flagger Advance podinfo.test canary weight 40

|

||||

Normal Synced 45s flagger Advance podinfo.test canary weight 45

|

||||

Normal Synced 35s flagger Advance podinfo.test canary weight 50

|

||||

Normal Synced 25s flagger Copying podinfo.test template spec to podinfo-primary.test

|

||||

Warning Synced 15s flagger Waiting for podinfo-primary.test rollout to finish: 1 of 2 updated replicas are available

|

||||

Normal Synced 5s flagger Promotion completed! Scaling down podinfo.test

|

||||

```

|

||||

|

||||

**Note** that if you apply new changes to the deployment during the canary analysis, Flagger will restart the analysis.

|

||||

|

||||

You can monitor all canaries with:

|

||||

|

||||

```bash

|

||||

watch kubectl get canaries --all-namespaces

|

||||

|

||||

NAMESPACE NAME STATUS WEIGHT LASTTRANSITIONTIME

|

||||

test podinfo Progressing 15 2019-05-06T14:05:07Z

|

||||

prod frontend Succeeded 0 2019-05-05T16:15:07Z

|

||||

prod backend Failed 0 2019-05-04T17:05:07Z

|

||||

```

|

||||

|

||||

### Automated rollback

|

||||

|

||||

During the canary analysis you can generate HTTP 500 errors to test if Flagger pauses and rolls back the faulty version.

|

||||

|

||||

Trigger another canary deployment:

|

||||

|

||||

```bash

|

||||

kubectl -n test set image deployment/podinfo \

|

||||

podinfod=quay.io/stefanprodan/podinfo:1.4.2

|

||||

```

|

||||

|

||||

Generate HTTP 500 errors:

|

||||

|

||||

```bash

|

||||

watch curl http://app.example.com/status/500

|

||||

```

|

||||

|

||||

When the number of failed checks reaches the canary analysis threshold, the traffic is routed back to the primary,

|

||||

the canary is scaled to zero and the rollout is marked as failed.

|

||||

|

||||

```text

|

||||

kubectl -n test describe canary/podinfo

|

||||

|

||||

Status:

|

||||

Canary Weight: 0

|

||||

Failed Checks: 10

|

||||

Phase: Failed

|

||||

Events:

|

||||

Type Reason Age From Message

|

||||

---- ------ ---- ---- -------

|

||||

Normal Synced 3m flagger Starting canary deployment for podinfo.test

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 5

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 10

|

||||

Normal Synced 3m flagger Advance podinfo.test canary weight 15

|

||||

Normal Synced 3m flagger Halt podinfo.test advancement success rate 69.17% < 99%

|

||||

Normal Synced 2m flagger Halt podinfo.test advancement success rate 61.39% < 99%

|

||||

Normal Synced 2m flagger Halt podinfo.test advancement success rate 55.06% < 99%

|

||||

Normal Synced 2m flagger Halt podinfo.test advancement success rate 47.00% < 99%

|

||||

Normal Synced 2m flagger (combined from similar events): Halt podinfo.test advancement success rate 38.08% < 99%

|

||||

Warning Synced 1m flagger Rolling back podinfo.test failed checks threshold reached 10

|

||||

Warning Synced 1m flagger Canary failed! Scaling down podinfo.test

|

||||

```
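The rollback decision can be pictured as a counter of failed checks compared against the analysis `threshold`. The sketch below is a simplified model under that assumption, not Flagger's actual implementation; `analysis_outcome` is a hypothetical helper that takes the minimum success rate, the threshold and a list of observed success rates.

```bash
# Hypothetical helper that mimics the failed-checks counter; it is not Flagger code.
# Usage: analysis_outcome <min_success_rate> <threshold> <observed_rate>...
analysis_outcome() {
  local min_rate="$1" threshold="$2" failed=0 rate
  shift 2
  for rate in "$@"; do
    # awk handles the floating point comparison that plain sh cannot do.
    if awk -v r="$rate" -v m="$min_rate" 'BEGIN { exit !(r < m) }'; then
      failed=$((failed + 1))
    fi
    if [ "$failed" -ge "$threshold" ]; then
      echo "rollback after $failed failed checks"
      return 0
    fi
  done
  echo "no rollback, $failed failed checks"
}

# Five bad samples are not enough to trip a threshold of 10 ...
analysis_outcome 99 10 69.17 61.39 55.06 47.00 38.08
# ... but they do trip a threshold of 3.
analysis_outcome 99 3 69.17 61.39 55.06 47.00 38.08
```

In this model the earliest possible rollback happens after `interval * threshold`, which is 100 seconds for the `interval: 10s` and `threshold: 10` used in this guide.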

|

||||

|

||||

### Custom metrics

|

||||

|

||||

The canary analysis can be extended with Prometheus queries.

|

||||

|

||||

The demo app is instrumented with Prometheus so you can create a custom check that will use the HTTP request duration

|

||||

histogram to validate the canary.

|

||||

|

||||

Edit the canary analysis and add the following metric:

|

||||

|

||||

```yaml

|

||||

canaryAnalysis:

|

||||

metrics:

|

||||

- name: "latency"

|

||||

threshold: 0.5

|

||||

interval: 1m

|

||||

query: |

|

||||

histogram_quantile(0.99,

|

||||

sum(

|

||||

rate(

|

||||

http_request_duration_seconds_bucket{

|

||||

kubernetes_namespace="test",

|

||||

kubernetes_pod_name=~"podinfo-[0-9a-zA-Z]+(-[0-9a-zA-Z]+)"

|

||||

}[1m]

|

||||

)

|

||||

) by (le)

|

||||

)

|

||||

```

|

||||

|

||||

The threshold is set to 500ms so if the 99th percentile of the request duration in the last minute

|

||||

goes over half a second then the analysis will fail and the canary will not be promoted.
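Before adding a custom check it can help to run the query by hand. The sketch below builds the same PromQL expression for an arbitrary namespace and workload name; `latency_query` is a hypothetical helper and `PROM_URL` is a placeholder for wherever your Prometheus instance is reachable, neither of which comes from Flagger. The commented `curl` line uses the standard Prometheus HTTP API endpoint `/api/v1/query`.

```bash
# Hypothetical helper: prints the p99 latency query for a namespace and workload.
latency_query() {
  local namespace="$1" name="$2"
  printf 'histogram_quantile(0.99, sum(rate(http_request_duration_seconds_bucket{kubernetes_namespace="%s",kubernetes_pod_name=~"%s-[0-9a-zA-Z]+(-[0-9a-zA-Z]+)"}[1m])) by (le))\n' \
    "$namespace" "$name"
}

# Print the query for the demo workload.
latency_query test podinfo

# To evaluate it, point PROM_URL (an assumption, adjust to your setup) at Prometheus:
# curl -sG "${PROM_URL}/api/v1/query" \
#   --data-urlencode "query=$(latency_query test podinfo)"
```

If the returned value is above `0.5`, the check would fail for that interval.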

|

||||

|

||||

Trigger a canary deployment by updating the container image:

|

||||

|

||||

```bash

|

||||

kubectl -n test set image deployment/podinfo \

|

||||

podinfod=quay.io/stefanprodan/podinfo:1.4.3

|

||||

```

|

||||

|

||||

Generate high response latency:

|

||||

|

||||

```bash

|

||||

watch curl http://app.example.com/delay/2

|

||||

```

|

||||

|

||||

Watch Flagger logs:

|

||||

|

||||

```

|

||||

kubectl -n ingress-nginx logs deployment/flagger -f | jq .msg

|

||||

|

||||

Starting canary deployment for podinfo.test

|

||||

Advance podinfo.test canary weight 5

|

||||

Advance podinfo.test canary weight 10

|

||||

Advance podinfo.test canary weight 15

|

||||

Halt podinfo.test advancement latency 1.20 > 0.5

|

||||

Halt podinfo.test advancement latency 1.45 > 0.5

|

||||

Halt podinfo.test advancement latency 1.60 > 0.5

|

||||

Halt podinfo.test advancement latency 1.69 > 0.5

|

||||

Halt podinfo.test advancement latency 1.70 > 0.5

|

||||

Rolling back podinfo.test failed checks threshold reached 5

|

||||

Canary failed! Scaling down podinfo.test

|

||||

```

|

||||

|

||||

If you have Slack configured, Flagger will send a notification with the reason why the canary failed.

|

||||

|

||||

### A/B Testing

|

||||

|

||||

Besides weighted routing, Flagger can be configured to route traffic to the canary based on HTTP match conditions.

|

||||

In an A/B testing scenario, you'll be using HTTP headers or cookies to target a certain segment of your users.

|

||||

This is particularly useful for frontend applications that require session affinity.

|

||||

|

||||

|

||||

|

||||

Edit the canary analysis, remove the max/step weight and add the match conditions and iterations:

|

||||

|

||||

```yaml

|

||||

canaryAnalysis:

|

||||

interval: 1m

|

||||

threshold: 10

|

||||

iterations: 10

|

||||

match:

|

||||

# curl -H 'X-Canary: insider' http://app.example.com

|

||||

- headers:

|

||||

x-canary:

|

||||

exact: "insider"

|

||||

# curl -b 'canary=always' http://app.example.com

|

||||

- headers:

|

||||

cookie:

|

||||

exact: "canary"

|

||||

metrics:

|

||||

- name: request-success-rate

|

||||

threshold: 99

|

||||

interval: 1m

|

||||

webhooks:

|

||||

- name: load-test

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 5s

|

||||

metadata:

|

||||

type: cmd

|

||||

cmd: "hey -z 1m -q 10 -c 2 -H 'Cookie: canary=always' http://app.example.com/"

|

||||

logCmdOutput: "true"

|

||||

```

|

||||

|

||||

The above configuration will run an analysis for ten minutes targeting users that have a `canary` cookie set to `always` or

|

||||

those that call the service using the `X-Canary: insider` header.
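The match conditions above can be read as a simple routing rule. The sketch below models that rule for illustration only; `route_request` is a hypothetical helper, and the real decision is made by the NGINX ingress controller, not by a shell function.

```bash
# Hypothetical helper: reports which backend a request would reach.
# Usage: route_request <X-Canary header value> <Cookie header value>
route_request() {
  local x_canary="$1" cookie="$2"
  if [ "$x_canary" = "insider" ]; then
    echo canary
    return 0
  fi
  # Wrap the cookie string in separators so canary=always matches as a whole pair.
  case "; $cookie;" in
    *"; canary=always;"*) echo canary ;;
    *) echo primary ;;
  esac
}

route_request "insider" ""                      # header match
route_request "" "session=abc; canary=always"   # cookie match
route_request "" "session=abc"                  # everyone else
```

Requests that match neither condition keep going to the primary, which is why the load test in the webhook sends the `Cookie: canary=always` header.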

|

||||

|

||||

Trigger a canary deployment by updating the container image:

|

||||

|

||||

```bash

|

||||

kubectl -n test set image deployment/podinfo \

|

||||

podinfod=quay.io/stefanprodan/podinfo:1.5.0

|

||||

```

|

||||

|

||||

Flagger detects that the deployment revision changed and starts the A/B testing:

|

||||

|

||||

```text

|

||||

kubectl -n test describe canary/podinfo

|

||||

|

||||

Status:

|

||||

Failed Checks: 0

|

||||

Phase: Succeeded

|

||||

Events:

|

||||

Type Reason Age From Message

|

||||

---- ------ ---- ---- -------

|

||||

Normal Synced 3m flagger New revision detected podinfo.test

|

||||

Normal Synced 3m flagger Scaling up podinfo.test

|

||||

Warning Synced 3m flagger Waiting for podinfo.test rollout to finish: 0 of 1 updated replicas are available

|

||||

Normal Synced 3m flagger Advance podinfo.test canary iteration 1/10

|

||||

Normal Synced 3m flagger Advance podinfo.test canary iteration 2/10

|

||||

Normal Synced 3m flagger Advance podinfo.test canary iteration 3/10

|

||||

Normal Synced 2m flagger Advance podinfo.test canary iteration 4/10

|

||||

Normal Synced 2m flagger Advance podinfo.test canary iteration 5/10

|

||||