mirror of

https://github.com/fluxcd/flagger.git

synced 2026-04-15 06:57:34 +00:00

Compare commits

44 Commits

0.1.0-beta

...

0.1.2

| Author | SHA1 | Date |
|---|---|---|
| | 71137ba3bb | |
| | 6372c7dfcc | |
| | 4584733f6f | |
| | 03408683c0 | |
| | 29137ae75b | |
| | 6bf85526d0 | |
| | 9f6a30f43e | |
| | 11bc0390c4 | |
| | 9a29ea69d7 | |
| | 2d8adbaca4 | |
| | f3904ea099 | |
| | 1b2b13e77f | |
| | 8878f15806 | |
| | 5977ff9bae | |
| | 11ef6bdf37 | |
| | 9c342e35be | |
| | c7e7785b06 | |
| | 4cb5ceb48b | |
| | 5a79402a73 | |
| | c24b11ff8b | |
| | 042d3c1a5b | |
| | f8821cf30b | |
| | 8c12cdb21d | |
| | 923799dce7 | |
| | ebc932fba5 | |
| | 3d8d30db47 | |
| | 1022c3438a | |
| | 9159855df2 | |
| | 7927ac0a5d | |
| | f438e9a4b2 | |
| | 4c70a330d4 | |
| | d8875a3da1 | |
| | 769aff57cb | |
| | 4138f37f9a | |
| | 583c9cc004 | |
| | c5930e6f70 | |
| | 423d9bbbb3 | |
| | 07771f500f | |
| | 65bd77c88f | |
| | 82bf63f89b | |
| | 7f735ead07 | |
| | 56ffd618d6 | |
| | 19cb34479e | |
| | 2d906f0b71 | |

8

.codecov.yml

Normal file


@@ -0,0 +1,8 @@
coverage:
  status:
    project:
      default:
        target: auto
        threshold: 50
        base: auto
    patch: off

19

.travis.yml


@@ -2,7 +2,7 @@ sudo: required

|

||||

language: go

|

||||

|

||||

go:

|

||||

- 1.10.x

|

||||

- 1.11.x

|

||||

|

||||

services:

|

||||

- docker

|

||||

@@ -21,19 +21,18 @@ script:

|

||||

|

||||

after_success:

|

||||

- if [ -z "$DOCKER_USER" ]; then

|

||||

echo "PR build, skipping Docker Hub push";

|

||||

echo "PR build, skipping image push";

|

||||

else

|

||||

docker tag stefanprodan/flagger:latest stefanprodan/flagger:${TRAVIS_COMMIT};

|

||||

echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin;

|

||||

docker push stefanprodan/flagger:${TRAVIS_COMMIT};

|

||||

docker tag stefanprodan/flagger:latest quay.io/stefanprodan/flagger:${TRAVIS_COMMIT};

|

||||

echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin quay.io;

|

||||

docker push quay.io/stefanprodan/flagger:${TRAVIS_COMMIT};

|

||||

fi

|

||||

- if [ -z "$TRAVIS_TAG" ]; then

|

||||

echo "Not a release, skipping Docker Hub push";

|

||||

echo "Not a release, skipping image push";

|

||||

else

|

||||

docker tag stefanprodan/flagger:latest stefanprodan/flagger:$TRAVIS_TAG;

|

||||

echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin;

|

||||

docker push stefanprodan/flagger:latest;

|

||||

docker push stefanprodan/flagger:$TRAVIS_TAG;

|

||||

docker tag stefanprodan/flagger:latest quay.io/stefanprodan/flagger:${TRAVIS_TAG};

|

||||

echo $DOCKER_PASS | docker login -u=$DOCKER_USER --password-stdin quay.io;

|

||||

docker push quay.io/stefanprodan/flagger:$TRAVIS_TAG;

|

||||

fi

|

||||

- bash <(curl -s https://codecov.io/bash)

|

||||

- rm coverage.txt

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

FROM golang:1.10

|

||||

FROM golang:1.11

|

||||

|

||||

RUN mkdir -p /go/src/github.com/stefanprodan/flagger/

|

||||

|

||||

|

||||

15

Makefile


@@ -4,13 +4,17 @@ VERSION_MINOR:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4

|

||||

PATCH:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"' | awk -F. '{print $$NF}')

|

||||

SOURCE_DIRS = cmd pkg/apis pkg/controller pkg/server pkg/logging pkg/version

|

||||

run:

|

||||

go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -metrics-server=https://prometheus.iowa.weavedx.com

|

||||

go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info \

|

||||

-metrics-server=https://prometheus.iowa.weavedx.com \

|

||||

-slack-url=https://hooks.slack.com/services/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 \

|

||||

-slack-channel="devops-alerts"

|

||||

|

||||

build:

|

||||

docker build -t stefanprodan/flagger:$(TAG) . -f Dockerfile

|

||||

|

||||

push:

|

||||

docker push stefanprodan/flagger:$(TAG)

|

||||

docker tag stefanprodan/flagger:$(TAG) quay.io/stefanprodan/flagger:$(VERSION)

|

||||

docker push quay.io/stefanprodan/flagger:$(VERSION)

|

||||

|

||||

fmt:

|

||||

gofmt -l -s -w $(SOURCE_DIRS)

|

||||

@@ -31,6 +35,7 @@ helm-package:

|

||||

|

||||

helm-up:

|

||||

helm upgrade --install flagger ./charts/flagger --namespace=istio-system --set crd.create=false

|

||||

helm upgrade --install flagger-grafana ./charts/grafana --namespace=istio-system

|

||||

|

||||

version-set:

|

||||

@next="$(TAG)" && \

|

||||

@@ -52,10 +57,10 @@ version-up:

|

||||

|

||||

dev-up: version-up

|

||||

@echo "Starting build/push/deploy pipeline for $(VERSION)"

|

||||

docker build -t stefanprodan/flagger:$(VERSION) . -f Dockerfile

|

||||

docker push stefanprodan/flagger:$(VERSION)

|

||||

docker build -t quay.io/stefanprodan/flagger:$(VERSION) . -f Dockerfile

|

||||

docker push quay.io/stefanprodan/flagger:$(VERSION)

|

||||

kubectl apply -f ./artifacts/flagger/crd.yaml

|

||||

helm upgrade --install flagger ./charts/flagger --namespace=istio-system --set crd.create=false

|

||||

helm upgrade -i flagger ./charts/flagger --namespace=istio-system --set crd.create=false

|

||||

|

||||

release:

|

||||

git tag $(VERSION)

|

||||

|

||||

44

README.md


@@ -8,8 +8,6 @@

|

||||

|

||||

Flagger is a Kubernetes operator that automates the promotion of canary deployments

|

||||

using Istio routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

The project is currently in experimental phase and it is expected that breaking changes

|

||||

to the API will be made in the upcoming releases.

|

||||

|

||||

### Install

|

||||

|

||||

@@ -86,6 +84,9 @@ spec:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

# the maximum time in seconds for the canary deployment
# to make progress before it is rolled back (default 600s)

|

||||

progressDeadlineSeconds: 60

|

||||

# hpa reference (optional)

|

||||

autoscalerRef:

|

||||

apiVersion: autoscaling/v2beta1

|

||||

@@ -351,13 +352,46 @@ flagger_canary_duration_seconds_sum{name="podinfo",namespace="test"} 17.3561329

|

||||

flagger_canary_duration_seconds_count{name="podinfo",namespace="test"} 6

|

||||

```

|

||||

|

||||

### Alerting

|

||||

|

||||

Flagger can be configured to send Slack notifications:

|

||||

|

||||

```bash
helm upgrade -i flagger flagger/flagger \
  --namespace=istio-system \
  --set slack.url=https://hooks.slack.com/services/YOUR/SLACK/WEBHOOK \
  --set slack.channel=general \
  --set slack.user=flagger
```

|

||||

|

||||

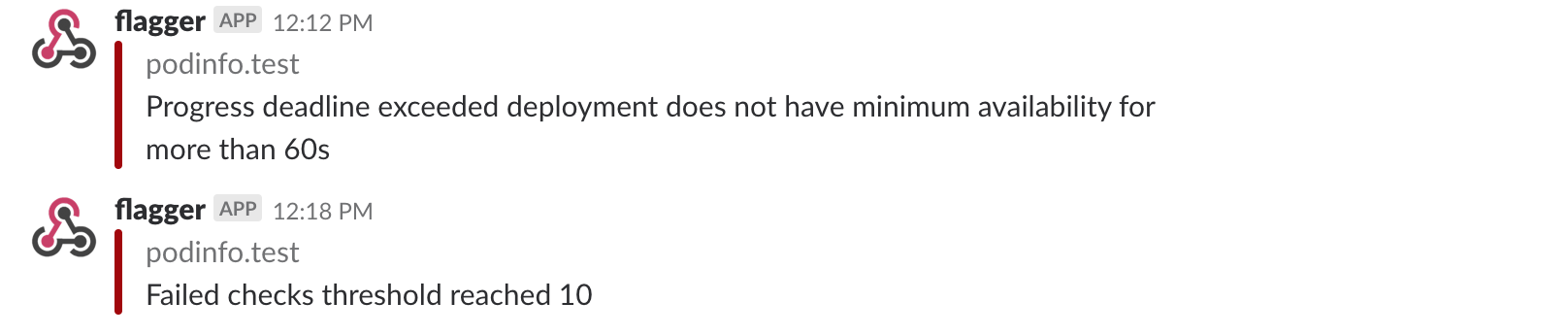

Once configured with a Slack incoming webhook, Flagger will post messages when a canary deployment has been initialized,
when a new revision has been detected, and when the canary analysis has failed or succeeded.

|

||||

|

||||

|

||||

|

||||

A canary deployment will be rolled back if the progress deadline is exceeded or if the analysis
reaches the maximum number of failed checks:

|

||||

|

||||

|

||||

|

||||

Besides Slack, you can use Alertmanager to trigger alerts when a canary deployment fails:

|

||||

|

||||

```yaml
- alert: canary_rollback
  expr: flagger_canary_status > 1
  for: 1m
  labels:
    severity: warning
  annotations:
    summary: "Canary failed"
    description: "Workload {{ $labels.name }} namespace {{ $labels.namespace }}"
```

|

||||

|

||||

### Roadmap

|

||||

|

||||

* Extend the canary analysis and promotion to other types than Kubernetes deployments such as Flux Helm releases or OpenFaaS functions

|

||||

* Extend the validation mechanism to support other metrics than HTTP success rate and latency

|

||||

* Add support for comparing the canary metrics to the primary ones and do the validation based on the deviation between the two

|

||||

* Alerting: trigger Alertmanager on successful or failed promotions

|

||||

* Reporting: publish canary analysis results to Slack/Jira/etc

|

||||

* Extend the canary analysis and promotion to other types than Kubernetes deployments such as Flux Helm releases or OpenFaaS functions

|

||||

|

||||

### Contributing

|

||||

|

||||

|

||||

@@ -9,6 +9,9 @@ spec:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

# the maximum time in seconds for the canary deployment
# to make progress before it is rolled back (default 600s)

|

||||

progressDeadlineSeconds: 60

|

||||

# HPA reference (optional)

|

||||

autoscalerRef:

|

||||

apiVersion: autoscaling/v2beta1

|

||||

@@ -22,7 +25,7 @@ spec:

|

||||

- public-gateway.istio-system.svc.cluster.local

|

||||

# Istio virtual service host names (optional)

|

||||

hosts:

|

||||

- podinfo.iowa.weavedx.com

|

||||

- app.iowa.weavedx.com

|

||||

canaryAnalysis:

|

||||

# max number of failed metric checks before rollback

|

||||

threshold: 10

|

||||

|

||||

@@ -6,6 +6,9 @@ metadata:

|

||||

labels:

|

||||

app: podinfo

|

||||

spec:

|

||||

minReadySeconds: 5

|

||||

revisionHistoryLimit: 5

|

||||

progressDeadlineSeconds: 60

|

||||

strategy:

|

||||

rollingUpdate:

|

||||

maxUnavailable: 0

|

||||

@@ -45,10 +48,7 @@ spec:

|

||||

- http

|

||||

- localhost:9898/healthz

|

||||

initialDelaySeconds: 5

|

||||

failureThreshold: 3

|

||||

periodSeconds: 10

|

||||

successThreshold: 1

|

||||

timeoutSeconds: 1

|

||||

timeoutSeconds: 5

|

||||

readinessProbe:

|

||||

exec:

|

||||

command:

|

||||

@@ -57,10 +57,7 @@ spec:

|

||||

- http

|

||||

- localhost:9898/readyz

|

||||

initialDelaySeconds: 5

|

||||

failureThreshold: 3

|

||||

periodSeconds: 3

|

||||

successThreshold: 1

|

||||

timeoutSeconds: 1

|

||||

timeoutSeconds: 5

|

||||

resources:

|

||||

limits:

|

||||

cpu: 2000m

|

||||

|

||||

@@ -23,6 +23,8 @@ spec:

|

||||

- service

|

||||

- canaryAnalysis

|

||||

properties:

|

||||

progressDeadlineSeconds:

|

||||

type: number

|

||||

targetRef:

|

||||

properties:

|

||||

apiVersion:

|

||||

|

||||

@@ -22,7 +22,7 @@ spec:

|

||||

serviceAccountName: flagger

|

||||

containers:

|

||||

- name: flagger

|

||||

image: stefanprodan/flagger:0.1.0-beta.6

|

||||

image: quay.io/stefanprodan/flagger:0.1.2

|

||||

imagePullPolicy: Always

|

||||

ports:

|

||||

- name: http

|

||||

@@ -41,6 +41,7 @@ spec:

|

||||

- --timeout=2

|

||||

- --spider

|

||||

- http://localhost:8080/healthz

|

||||

timeoutSeconds: 5

|

||||

readinessProbe:

|

||||

exec:

|

||||

command:

|

||||

@@ -50,6 +51,7 @@ spec:

|

||||

- --timeout=2

|

||||

- --spider

|

||||

- http://localhost:8080/healthz

|

||||

timeoutSeconds: 5

|

||||

resources:

|

||||

limits:

|

||||

memory: "512Mi"

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

apiVersion: v1

|

||||

name: flagger

|

||||

version: 0.1.0

|

||||

appVersion: 0.1.0-beta.6

|

||||

version: 0.1.2

|

||||

appVersion: 0.1.2

|

||||

description: Flagger is a Kubernetes operator that automates the promotion of canary deployments using Istio routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

home: https://github.com/stefanprodan/flagger

|

||||

|

||||

@@ -1,14 +1,20 @@

|

||||

# Flagger

|

||||

|

||||

Flagger is a Kubernetes operator that automates the promotion of canary deployments

|

||||

[Flagger](https://flagger.app) is a Kubernetes operator that automates the promotion of canary deployments

|

||||

using Istio routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

|

||||

## Installing the Chart

|

||||

|

||||

Add the Flagger Helm repository:

|

||||

|

||||

```console

|

||||

helm repo add flagger https://flagger.app

|

||||

```

|

||||

|

||||

To install the chart with the release name `flagger`:

|

||||

|

||||

```console

|

||||

$ helm upgrade --install flagger ./charts/flagger --namespace=istio-system

|

||||

$ helm install --name flagger --namespace istio-system flagger/flagger

|

||||

```

|

||||

|

||||

The command deploys Flagger on the Kubernetes cluster in the istio-system namespace.

|

||||

@@ -30,9 +36,16 @@ The following tables lists the configurable parameters of the Flagger chart and

|

||||

|

||||

Parameter | Description | Default
--- | --- | ---
`image.repository` | image repository | `stefanprodan/flagger`
`image.repository` | image repository | `quay.io/stefanprodan/flagger`
`image.tag` | image tag | `<VERSION>`
`image.pullPolicy` | image pull policy | `IfNotPresent`
`controlLoopInterval` | wait interval between checks | `10s`
`metricsServer` | Prometheus URL | `http://prometheus.istio-system:9090`
`slack.url` | Slack incoming webhook | None
`slack.channel` | Slack channel | None
`slack.user` | Slack username | `flagger`
`rbac.create` | if `true`, create and use RBAC resources | `true`
`crd.create` | if `true`, create Flagger's CRDs | `true`
`resources.requests/cpu` | pod CPU request | `10m`
`resources.requests/memory` | pod memory request | `32Mi`
`resources.limits/cpu` | pod CPU limit | `1000m`

|

||||

@@ -44,16 +57,16 @@ Parameter | Description | Default

|

||||

Specify each parameter using the `--set key=value[,key=value]` argument to `helm upgrade`. For example,

|

||||

|

||||

```console

|

||||

$ helm upgrade --install flagger ./charts/flagger \

|

||||

--namespace=istio-system \

|

||||

--set=image.tag=0.0.2

|

||||

$ helm upgrade -i flagger flagger/flagger \

|

||||

--namespace istio-system \

|

||||

--set controlLoopInterval=1m

|

||||

```

|

||||

|

||||

Alternatively, a YAML file that specifies the values for the above parameters can be provided while installing the chart. For example,

|

||||

|

||||

```console

|

||||

$ helm upgrade --install flagger ./charts/flagger \

|

||||

--namespace=istio-system \

|

||||

$ helm upgrade -i flagger flagger/flagger \

|

||||

--namespace istio-system \

|

||||

-f values.yaml

|

||||

```

|

||||

|

||||

|

||||

@@ -3,6 +3,8 @@ apiVersion: apiextensions.k8s.io/v1beta1

|

||||

kind: CustomResourceDefinition

|

||||

metadata:

|

||||

name: canaries.flagger.app

|

||||

annotations:

|

||||

"helm.sh/resource-policy": keep

|

||||

spec:

|

||||

group: flagger.app

|

||||

version: v1alpha1

|

||||

@@ -24,6 +26,8 @@ spec:

|

||||

- service

|

||||

- canaryAnalysis

|

||||

properties:

|

||||

progressDeadlineSeconds:

|

||||

type: number

|

||||

targetRef:

|

||||

properties:

|

||||

apiVersion:

|

||||

|

||||

@@ -37,24 +37,31 @@ spec:

|

||||

- -log-level=info

|

||||

- -control-loop-interval={{ .Values.controlLoopInterval }}

|

||||

- -metrics-server={{ .Values.metricsServer }}

|

||||

{{- if .Values.slack.url }}

|

||||

- -slack-url={{ .Values.slack.url }}

|

||||

- -slack-user={{ .Values.slack.user }}

|

||||

- -slack-channel={{ .Values.slack.channel }}

|

||||

{{- end }}

|

||||

livenessProbe:

|

||||

exec:

|

||||

command:

|

||||

- wget

|

||||

- --quiet

|

||||

- --tries=1

|

||||

- --timeout=2

|

||||

- --timeout=4

|

||||

- --spider

|

||||

- http://localhost:8080/healthz

|

||||

timeoutSeconds: 5

|

||||

readinessProbe:

|

||||

exec:

|

||||

command:

|

||||

- wget

|

||||

- --quiet

|

||||

- --tries=1

|

||||

- --timeout=2

|

||||

- --timeout=4

|

||||

- --spider

|

||||

- http://localhost:8080/healthz

|

||||

timeoutSeconds: 5

|

||||

resources:

|

||||

{{ toYaml .Values.resources | indent 12 }}

|

||||

{{- with .Values.nodeSelector }}

|

||||

|

||||

@@ -1,13 +1,19 @@

|

||||

# Default values for flagger.

|

||||

|

||||

image:

|

||||

repository: stefanprodan/flagger

|

||||

tag: 0.1.0-beta.6

|

||||

repository: quay.io/stefanprodan/flagger

|

||||

tag: 0.1.2

|

||||

pullPolicy: IfNotPresent

|

||||

|

||||

controlLoopInterval: "10s"

|

||||

metricsServer: "http://prometheus.istio-system.svc.cluster.local:9090"

|

||||

|

||||

slack:

|

||||

user: flagger

|

||||

channel:

|

||||

# incoming webhook https://api.slack.com/incoming-webhooks

|

||||

url:

|

||||

|

||||

crd:

|

||||

create: true

|

||||

|

||||

|

||||

@@ -6,12 +6,13 @@ import (

|

||||

"time"

|

||||

|

||||

_ "github.com/istio/glog"

|

||||

sharedclientset "github.com/knative/pkg/client/clientset/versioned"

|

||||

istioclientset "github.com/knative/pkg/client/clientset/versioned"

|

||||

"github.com/knative/pkg/signals"

|

||||

clientset "github.com/stefanprodan/flagger/pkg/client/clientset/versioned"

|

||||

informers "github.com/stefanprodan/flagger/pkg/client/informers/externalversions"

|

||||

"github.com/stefanprodan/flagger/pkg/controller"

|

||||

"github.com/stefanprodan/flagger/pkg/logging"

|

||||

"github.com/stefanprodan/flagger/pkg/notifier"

|

||||

"github.com/stefanprodan/flagger/pkg/server"

|

||||

"github.com/stefanprodan/flagger/pkg/version"

|

||||

"k8s.io/client-go/kubernetes"

|

||||

@@ -27,6 +28,9 @@ var (

|

||||

controlLoopInterval time.Duration

|

||||

logLevel string

|

||||

port string

|

||||

slackURL string

|

||||

slackUser string

|

||||

slackChannel string

|

||||

)

|

||||

|

||||

func init() {

|

||||

@@ -36,6 +40,9 @@ func init() {

|

||||

	flag.DurationVar(&controlLoopInterval, "control-loop-interval", 10*time.Second, "wait interval between rollouts")
	flag.StringVar(&logLevel, "log-level", "debug", "Log level can be: debug, info, warning, error.")
	flag.StringVar(&port, "port", "8080", "Port to listen on.")
	flag.StringVar(&slackURL, "slack-url", "", "Slack hook URL.")
	flag.StringVar(&slackUser, "slack-user", "flagger", "Slack user name.")
	flag.StringVar(&slackChannel, "slack-channel", "", "Slack channel.")
}
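The three Slack flags above can be exercised in isolation. The following is a minimal sketch, not Flagger's actual `main` package: the `slackConfig` type, `parseSlackFlags`, and `enabled` are hypothetical names introduced for illustration, and a dedicated `flag.FlagSet` is used instead of the global flag set so the parsing can be tested with arbitrary arguments. Only the flag names, defaults, and help strings come from the diff.

```go
package main

import (
	"flag"
	"fmt"
)

// slackConfig is a hypothetical holder for the three Slack flags shown in the diff.
type slackConfig struct {
	URL     string
	User    string
	Channel string
}

// parseSlackFlags registers the Slack flags on a private FlagSet and parses args.
// The flag names and defaults are copied from the diff; everything else is illustrative.
func parseSlackFlags(args []string) (slackConfig, error) {
	var cfg slackConfig
	fs := flag.NewFlagSet("flagger", flag.ContinueOnError)
	fs.StringVar(&cfg.URL, "slack-url", "", "Slack hook URL.")
	fs.StringVar(&cfg.User, "slack-user", "flagger", "Slack user name.")
	fs.StringVar(&cfg.Channel, "slack-channel", "", "Slack channel.")
	err := fs.Parse(args)
	return cfg, err
}

// enabled reports whether notifications should be set up, mirroring the
// `slackURL != ""` check in the diff.
func (c slackConfig) enabled() bool {
	return c.URL != ""
}

func main() {
	// example.invalid is a placeholder host, not a real webhook.
	cfg, err := parseSlackFlags([]string{
		"-slack-url=https://example.invalid/hook",
		"-slack-channel=general",
	})
	if err != nil {
		fmt.Println("flag error:", err)
		return
	}
	fmt.Println(cfg.User, cfg.Channel, cfg.enabled())
}
```

Because `slack-user` defaults to `flagger`, only the URL and channel need to be supplied; leaving the URL empty keeps notifications off.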

|

||||

|

||||

func main() {

|

||||

@@ -59,9 +66,9 @@ func main() {

|

||||

logger.Fatalf("Error building kubernetes clientset: %v", err)

|

||||

}

|

||||

|

||||

sharedClient, err := sharedclientset.NewForConfig(cfg)

|

||||

istioClient, err := istioclientset.NewForConfig(cfg)

|

||||

if err != nil {

|

||||

logger.Fatalf("Error building shared clientset: %v", err)

|

||||

logger.Fatalf("Error building istio clientset: %v", err)

|

||||

}

|

||||

|

||||

flaggerClient, err := clientset.NewForConfig(cfg)

|

||||

@@ -88,17 +95,28 @@ func main() {

|

||||

logger.Errorf("Metrics server %s unreachable %v", metricsServer, err)

|

||||

}

|

||||

|

||||

	var slack *notifier.Slack
	if slackURL != "" {
		slack, err = notifier.NewSlack(slackURL, slackUser, slackChannel)
		if err != nil {
			logger.Errorf("Notifier %v", err)
		} else {
			logger.Infof("Slack notifications enabled for channel %s", slack.Channel)
		}
	}

|

||||

|

||||

// start HTTP server

|

||||

go server.ListenAndServe(port, 3*time.Second, logger, stopCh)

|

||||

|

||||

c := controller.NewController(

|

||||

kubeClient,

|

||||

sharedClient,

|

||||

istioClient,

|

||||

flaggerClient,

|

||||

canaryInformer,

|

||||

controlLoopInterval,

|

||||

metricsServer,

|

||||

logger,

|

||||

slack,

|

||||

)

|

||||

|

||||

flaggerInformerFactory.Start(stopCh)

|

||||

|

||||

@@ -8,8 +8,6 @@

|

||||

|

||||

Flagger is a Kubernetes operator that automates the promotion of canary deployments

|

||||

using Istio routing for traffic shifting and Prometheus metrics for canary analysis.

|

||||

The project is currently in experimental phase and it is expected that breaking changes

|

||||

to the API will be made in the upcoming releases.

|

||||

|

||||

### Install

|

||||

|

||||

@@ -86,6 +84,9 @@ spec:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

# the maximum time in seconds for the canary deployment
# to make progress before it is rolled back (default 600s)

|

||||

progressDeadlineSeconds: 60

|

||||

# hpa reference (optional)

|

||||

autoscalerRef:

|

||||

apiVersion: autoscaling/v2beta1

|

||||

@@ -351,13 +352,46 @@ flagger_canary_duration_seconds_sum{name="podinfo",namespace="test"} 17.3561329

|

||||

flagger_canary_duration_seconds_count{name="podinfo",namespace="test"} 6

|

||||

```

|

||||

|

||||

### Alerting

|

||||

|

||||

Flagger can be configured to send Slack notifications:

|

||||

|

||||

```bash
helm upgrade -i flagger flagger/flagger \
  --namespace=istio-system \
  --set slack.url=https://hooks.slack.com/services/YOUR/SLACK/WEBHOOK \
  --set slack.channel=general \
  --set slack.user=flagger
```

|

||||

|

||||

Once configured with a Slack incoming webhook, Flagger will post messages when a canary deployment has been initialized,
when a new revision has been detected, and when the canary analysis has failed or succeeded.

|

||||

|

||||

|

||||

|

||||

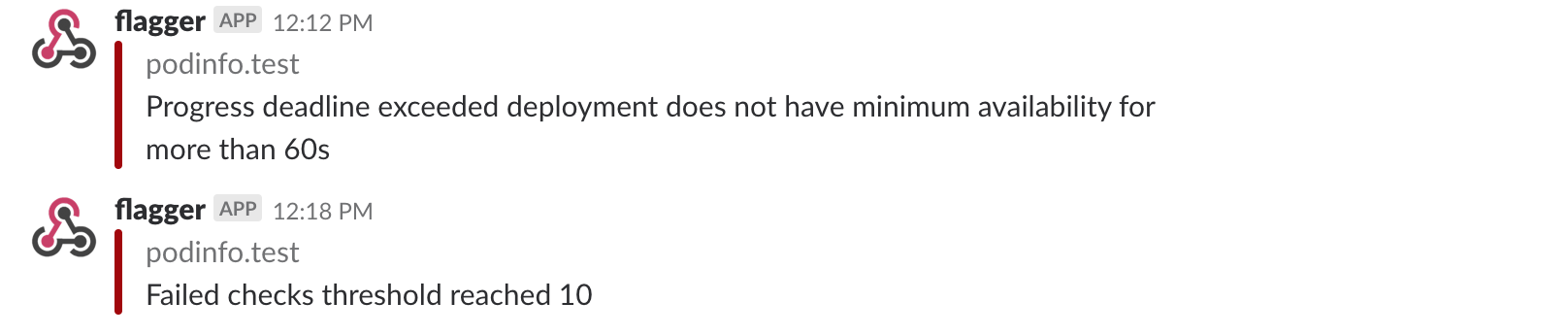

A canary deployment will be rolled back if the progress deadline is exceeded or if the analysis
reaches the maximum number of failed checks:

|

||||

|

||||

|

||||

|

||||

Besides Slack, you can use Alertmanager to trigger alerts when a canary deployment fails:

|

||||

|

||||

```yaml
- alert: canary_rollback
  expr: flagger_canary_status > 1
  for: 1m
  labels:
    severity: warning
  annotations:
    summary: "Canary failed"
    description: "Workload {{ $labels.name }} namespace {{ $labels.namespace }}"
```

|

||||

|

||||

### Roadmap

|

||||

|

||||

* Extend the canary analysis and promotion to other types than Kubernetes deployments such as Flux Helm releases or OpenFaaS functions

|

||||

* Extend the validation mechanism to support other metrics than HTTP success rate and latency

|

||||

* Add support for comparing the canary metrics to the primary ones and do the validation based on the deviation between the two

|

||||

* Alerting: trigger Alertmanager on successful or failed promotions

|

||||

* Reporting: publish canary analysis results to Slack/Jira/etc

|

||||

* Extend the canary analysis and promotion to other types than Kubernetes deployments such as Flux Helm releases or OpenFaaS functions

|

||||

|

||||

### Contributing

|

||||

|

||||

|

||||

@@ -4,7 +4,7 @@ remote_theme: errordeveloper/simple-project-homepage

|

||||

repository: stefanprodan/flagger

|

||||

by_weaveworks: true

|

||||

|

||||

url: "https://stefanprodan.github.io/flagger"

|

||||

url: "https://flagger.app"

|

||||

baseurl: "/"

|

||||

|

||||

twitter:

|

||||

@@ -15,7 +15,7 @@ author:

|

||||

# Set default og:image

|

||||

defaults:

|

||||

- scope: {path: ""}

|

||||

values: {image: "logo/logo-flagger.png"}

|

||||

values: {image: "diagrams/flagger-overview.png"}

|

||||

|

||||

# See: https://material.io/guidelines/style/color.html

|

||||

# Use color-name-value, like pink-200 or deep-purple-100

|

||||

@@ -51,14 +51,4 @@ plugins:

|

||||

|

||||

exclude:

|

||||

- CNAME

|

||||

- Dockerfile

|

||||

- Gopkg.lock

|

||||

- Gopkg.toml

|

||||

- LICENSE

|

||||

- Makefile

|

||||

- add-model.sh

|

||||

- build

|

||||

- cmd

|

||||

- pkg

|

||||

- tag_release.sh

|

||||

- vendor

|

||||

|

||||

|

||||

Binary file not shown.

BIN

docs/flagger-0.1.1.tgz

Normal file


Binary file not shown.

BIN

docs/flagger-0.1.2.tgz

Normal file


Binary file not shown.

Binary file not shown.

@@ -2,12 +2,36 @@ apiVersion: v1

|

||||

entries:

|

||||

flagger:

|

||||

- apiVersion: v1

|

||||

appVersion: 0.1.0-beta.6

|

||||

created: 2018-10-29T21:46:00.29473+02:00

|

||||

appVersion: 0.1.2

|

||||

created: 2018-12-06T13:57:50.322474+07:00

|

||||

description: Flagger is a Kubernetes operator that automates the promotion of

|

||||

canary deployments using Istio routing for traffic shifting and Prometheus metrics

|

||||

for canary analysis.

|

||||

digest: c17380b0f4e08a9b1f76a0e52d53677248c5756eff6a1fcd5629d3465dd1ad58

|

||||

digest: a52bf1bf797d60d3d92f46f84805edbd1ffb7d87504727266f08543532ff5e08

|

||||

home: https://github.com/stefanprodan/flagger

|

||||

name: flagger

|

||||

urls:

|

||||

- https://stefanprodan.github.io/flagger/flagger-0.1.2.tgz

|

||||

version: 0.1.2

|

||||

- apiVersion: v1

|

||||

appVersion: 0.1.1

|

||||

created: 2018-12-06T13:57:50.322115+07:00

|

||||

description: Flagger is a Kubernetes operator that automates the promotion of

|

||||

canary deployments using Istio routing for traffic shifting and Prometheus metrics

|

||||

for canary analysis.

|

||||

digest: 2bb8f72fcf63a5ba5ecbaa2ab0d0446f438ec93fbf3a598cd7de45e64d8f9628

|

||||

home: https://github.com/stefanprodan/flagger

|

||||

name: flagger

|

||||

urls:

|

||||

- https://stefanprodan.github.io/flagger/flagger-0.1.1.tgz

|

||||

version: 0.1.1

|

||||

- apiVersion: v1

|

||||

appVersion: 0.1.0

|

||||

created: 2018-12-06T13:57:50.321509+07:00

|

||||

description: Flagger is a Kubernetes operator that automates the promotion of

|

||||

canary deployments using Istio routing for traffic shifting and Prometheus metrics

|

||||

for canary analysis.

|

||||

digest: 03e05634149e13ddfddae6757266d65c271878a026c21c7d1429c16712bf3845

|

||||

home: https://github.com/stefanprodan/flagger

|

||||

name: flagger

|

||||

urls:

|

||||

@@ -16,13 +40,13 @@ entries:

|

||||

grafana:

|

||||

- apiVersion: v1

|

||||

appVersion: 5.3.1

|

||||

created: 2018-10-29T21:46:00.295247+02:00

|

||||

created: 2018-12-06T13:57:50.323051+07:00

|

||||

description: A Grafana Helm chart for monitoring progressive deployments powered

|

||||

by Istio and Flagger

|

||||

digest: 370aa2e6a0d4ab717f047658bdb02969b8f2a4d2e81c0bc96b90e3365229715f

|

||||

digest: 692b7c545214b652374249cc814d37decd8df4f915530e82dff4d9dfa25e8762

|

||||

home: https://github.com/stefanprodan/flagger

|

||||

name: grafana

|

||||

urls:

|

||||

- https://stefanprodan.github.io/flagger/grafana-0.1.0.tgz

|

||||

version: 0.1.0

|

||||

generated: 2018-10-29T21:46:00.293821+02:00

|

||||

generated: 2018-12-06T13:57:50.320726+07:00

|

||||

|

||||

Binary file not shown.

|

Before Width: | Height: | Size: 620 KiB After Width: | Height: | Size: 442 KiB |

BIN

docs/screens/slack-canary-failed.png

Normal file


Binary file not shown.

|

After Width: | Height: | Size: 78 KiB |

BIN

docs/screens/slack-canary-success.png

Normal file


Binary file not shown.

|

After Width: | Height: | Size: 113 KiB |

@@ -21,7 +21,10 @@ import (

|

||||

metav1 "k8s.io/apimachinery/pkg/apis/meta/v1"

|

||||

)

|

||||

|

||||

const CanaryKind = "Canary"
const (
	CanaryKind              = "Canary"
	ProgressDeadlineSeconds = 600
)

|

||||

|

||||

// +genclient

|

||||

// +k8s:deepcopy-gen:interfaces=k8s.io/apimachinery/pkg/runtime.Object

|

||||

@@ -48,6 +51,10 @@ type CanarySpec struct {

|

||||

|

||||

// metrics and thresholds

|

||||

CanaryAnalysis CanaryAnalysis `json:"canaryAnalysis"`

|

||||

|

||||

	// the maximum time in seconds for a canary deployment to make progress
	// before it is considered to be failed. Defaults to 600s.
	ProgressDeadlineSeconds *int32 `json:"progressDeadlineSeconds,omitempty"`

|

||||

}

|

||||

|

||||

// +k8s:deepcopy-gen:interfaces=k8s.io/apimachinery/pkg/runtime.Object

|

||||

@@ -60,11 +67,23 @@ type CanaryList struct {

|

||||

Items []Canary `json:"items"`

|

||||

}

|

||||

|

||||

// CanaryState used for status state op
type CanaryState string

const (
	CanaryRunning     CanaryState = "running"
	CanaryFinished    CanaryState = "finished"
	CanaryFailed      CanaryState = "failed"
	CanaryInitialized CanaryState = "initialized"
)

// CanaryStatus is used for state persistence (read-only)
type CanaryStatus struct {
	State          string `json:"state"`
	CanaryRevision string `json:"canaryRevision"`
	FailedChecks   int    `json:"failedChecks"`
	State          CanaryState `json:"state"`
	CanaryRevision string      `json:"canaryRevision"`
	FailedChecks   int         `json:"failedChecks"`
	// +optional
	LastTransitionTime metav1.Time `json:"lastTransitionTime,omitempty"`
}

|

||||

|

||||

// CanaryService is used to create ClusterIP services

|

||||

@@ -89,3 +108,11 @@ type CanaryMetric struct {

|

||||

Interval string `json:"interval"`

|

||||

Threshold int `json:"threshold"`

|

||||

}

|

||||

|

||||

func (c *Canary) GetProgressDeadlineSeconds() int {
	if c.Spec.ProgressDeadlineSeconds != nil {
		return int(*c.Spec.ProgressDeadlineSeconds)
	}

	return ProgressDeadlineSeconds
}
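The getter above relies on a pointer field to tell "not set" apart from an explicit value. A minimal standalone sketch of that pattern follows; `canarySpec` and `progressDeadline` are hypothetical names used only here, while the 600-second default and the `*int32` field type come from the diff.

```go
package main

import "fmt"

// defaultProgressDeadlineSeconds mirrors the ProgressDeadlineSeconds constant in the diff.
const defaultProgressDeadlineSeconds = 600

// canarySpec is a hypothetical stand-in for CanarySpec with only the relevant field.
type canarySpec struct {
	ProgressDeadlineSeconds *int32
}

// progressDeadline returns the configured deadline, or the default when the field is nil.
func progressDeadline(spec canarySpec) int {
	if spec.ProgressDeadlineSeconds != nil {
		return int(*spec.ProgressDeadlineSeconds)
	}
	return defaultProgressDeadlineSeconds
}

func main() {
	custom := int32(60)
	fmt.Println(progressDeadline(canarySpec{}))                                 // 600
	fmt.Println(progressDeadline(canarySpec{ProgressDeadlineSeconds: &custom})) // 60
}
```

With a plain `int32` field, an omitted value and an explicit `0` would be indistinguishable after decoding, which is why the optional field is a pointer tagged `omitempty`.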

|

||||

|

||||

@@ -30,7 +30,7 @@ func (in *Canary) DeepCopyInto(out *Canary) {

|

||||

out.TypeMeta = in.TypeMeta

|

||||

in.ObjectMeta.DeepCopyInto(&out.ObjectMeta)

|

||||

in.Spec.DeepCopyInto(&out.Spec)

|

||||

out.Status = in.Status

|

||||

in.Status.DeepCopyInto(&out.Status)

|

||||

return

|

||||

}

|

||||

|

||||

@@ -155,6 +155,11 @@ func (in *CanarySpec) DeepCopyInto(out *CanarySpec) {

|

||||

out.AutoscalerRef = in.AutoscalerRef

|

||||

in.Service.DeepCopyInto(&out.Service)

|

||||

in.CanaryAnalysis.DeepCopyInto(&out.CanaryAnalysis)

|

||||

if in.ProgressDeadlineSeconds != nil {

|

||||

in, out := &in.ProgressDeadlineSeconds, &out.ProgressDeadlineSeconds

|

||||

*out = new(int32)

|

||||

**out = **in

|

||||

}

|

||||

return

|

||||

}

|

||||

|

||||

@@ -171,6 +176,7 @@ func (in *CanarySpec) DeepCopy() *CanarySpec {

|

||||

// DeepCopyInto is an autogenerated deepcopy function, copying the receiver, writing into out. in must be non-nil.

|

||||

func (in *CanaryStatus) DeepCopyInto(out *CanaryStatus) {

|

||||

*out = *in

|

||||

in.LastTransitionTime.DeepCopyInto(&out.LastTransitionTime)

|

||||

return

|

||||

}

|

||||

|

||||

|

||||

@@ -12,6 +12,7 @@ import (

|

||||

flaggerscheme "github.com/stefanprodan/flagger/pkg/client/clientset/versioned/scheme"

|

||||

flaggerinformers "github.com/stefanprodan/flagger/pkg/client/informers/externalversions/flagger/v1alpha1"

|

||||

flaggerlisters "github.com/stefanprodan/flagger/pkg/client/listers/flagger/v1alpha1"

|

||||

"github.com/stefanprodan/flagger/pkg/notifier"

|

||||

"go.uber.org/zap"

|

||||

corev1 "k8s.io/api/core/v1"

|

||||

"k8s.io/apimachinery/pkg/api/errors"

|

||||

@@ -43,6 +44,7 @@ type Controller struct {

|

||||

router CanaryRouter

|

||||

observer CanaryObserver

|

||||

recorder CanaryRecorder

|

||||

notifier *notifier.Slack

|

||||

}

|

||||

|

||||

func NewController(

|

||||

@@ -53,6 +55,7 @@ func NewController(

|

||||

flaggerWindow time.Duration,

|

||||

metricServer string,

|

||||

logger *zap.SugaredLogger,

|

||||

notifier *notifier.Slack,

|

||||

|

||||

) *Controller {

|

||||

logger.Debug("Creating event broadcaster")

|

||||

@@ -100,6 +103,7 @@ func NewController(

|

||||

router: router,

|

||||

observer: observer,

|

||||

recorder: recorder,

|

||||

notifier: notifier,

|

||||

}

|

||||

|

||||

flaggerInformer.Informer().AddEventHandler(cache.ResourceEventHandlerFuncs{

|

||||

@@ -256,6 +260,41 @@ func (c *Controller) recordEventWarningf(r *flaggerv1.Canary, template string, a

|

||||

c.eventRecorder.Event(r, corev1.EventTypeWarning, "Synced", fmt.Sprintf(template, args...))

|

||||

}

|

||||

|

||||

func (c *Controller) sendNotification(cd *flaggerv1.Canary, message string, metadata bool, warn bool) {

|

||||

if c.notifier == nil {

|

||||

return

|

||||

}

|

||||

|

||||

var fields []notifier.SlackField

|

||||

|

||||

if metadata {

|

||||

fields = append(fields,

|

||||

notifier.SlackField{

|

||||

Title: "Target",

|

||||

Value: fmt.Sprintf("%s/%s.%s", cd.Spec.TargetRef.Kind, cd.Spec.TargetRef.Name, cd.Namespace),

|

||||

},

|

||||

notifier.SlackField{

|

||||

Title: "Traffic routing",

|

||||

Value: fmt.Sprintf("Weight step: %v max: %v",

|

||||

cd.Spec.CanaryAnalysis.StepWeight,

|

||||

cd.Spec.CanaryAnalysis.MaxWeight),

|

||||

},

|

||||

notifier.SlackField{

|

||||

Title: "Failed checks threshold",

|

||||

Value: fmt.Sprintf("%v", cd.Spec.CanaryAnalysis.Threshold),

|

||||

},

|

||||

notifier.SlackField{

|

||||

Title: "Progress deadline",

|

||||

Value: fmt.Sprintf("%vs", cd.GetProgressDeadlineSeconds()),

|

||||

},

|

||||

)

|

||||

}

|

||||

err := c.notifier.Post(cd.Name, cd.Namespace, message, fields, warn)

|

||||

if err != nil {

|

||||

c.logger.Error(err)

|

||||

}

|

||||

}

|

||||

|

||||

func int32p(i int32) *int32 {

|

||||

return &i

|

||||

}

|

||||

|

||||

@@ -4,6 +4,7 @@ import (

|

||||

"encoding/base64"

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"time"

|

||||

|

||||

"github.com/google/go-cmp/cmp"

|

||||

"github.com/google/go-cmp/cmp/cmpopts"

|

||||

@@ -48,6 +49,10 @@ func (c *CanaryDeployer) Promote(cd *flaggerv1.Canary) error {

|

||||

return fmt.Errorf("deployment %s.%s query error %v", primaryName, cd.Namespace, err)

|

||||

}

|

||||

|

||||

primary.Spec.ProgressDeadlineSeconds = canary.Spec.ProgressDeadlineSeconds

|

||||

primary.Spec.MinReadySeconds = canary.Spec.MinReadySeconds

|

||||

primary.Spec.RevisionHistoryLimit = canary.Spec.RevisionHistoryLimit

|

||||

primary.Spec.Strategy = canary.Spec.Strategy

|

||||

primary.Spec.Template.Spec = canary.Spec.Template.Spec

|

||||

_, err = c.kubeClient.AppsV1().Deployments(primary.Namespace).Update(primary)

|

||||

if err != nil {

|

||||

@@ -58,37 +63,58 @@ func (c *CanaryDeployer) Promote(cd *flaggerv1.Canary) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

// IsReady checks the primary and canary deployment status and returns an error if

|

||||

// the deployments are in the middle of a rolling update or if the pods are unhealthy

|

||||

func (c *CanaryDeployer) IsReady(cd *flaggerv1.Canary) error {

|

||||

canary, err := c.kubeClient.AppsV1().Deployments(cd.Namespace).Get(cd.Spec.TargetRef.Name, metav1.GetOptions{})

|

||||

if err != nil {

|

||||

if errors.IsNotFound(err) {

|

||||

return fmt.Errorf("deployment %s.%s not found", cd.Spec.TargetRef.Name, cd.Namespace)

|

||||

}

|

||||

return fmt.Errorf("deployment %s.%s query error %v", cd.Spec.TargetRef.Name, cd.Namespace, err)

|

||||

}

|

||||

if msg, healthy := c.getDeploymentStatus(canary); !healthy {

|

||||

return fmt.Errorf("Halt %s.%s advancement %s", cd.Name, cd.Namespace, msg)

|

||||

}

|

||||

|

||||

// IsPrimaryReady checks the primary deployment status and returns an error if

|

||||

// the deployment is in the middle of a rolling update or if the pods are unhealthy

|

||||

// it will return a non retriable error if the rolling update is stuck

|

||||

func (c *CanaryDeployer) IsPrimaryReady(cd *flaggerv1.Canary) (bool, error) {

|

||||

primaryName := fmt.Sprintf("%s-primary", cd.Spec.TargetRef.Name)

|

||||

primary, err := c.kubeClient.AppsV1().Deployments(cd.Namespace).Get(primaryName, metav1.GetOptions{})

|

||||

if err != nil {

|

||||

if errors.IsNotFound(err) {

|

||||

return fmt.Errorf("deployment %s.%s not found", primaryName, cd.Namespace)

|

||||

return true, fmt.Errorf("deployment %s.%s not found", primaryName, cd.Namespace)

|

||||

}

|

||||

return fmt.Errorf("deployment %s.%s query error %v", primaryName, cd.Namespace, err)

|

||||

return true, fmt.Errorf("deployment %s.%s query error %v", primaryName, cd.Namespace, err)

|

||||

}

|

||||

if msg, healthy := c.getDeploymentStatus(primary); !healthy {

|

||||

return fmt.Errorf("Halt %s.%s advancement %s", cd.Name, cd.Namespace, msg)

|

||||

|

||||

retriable, err := c.isDeploymentReady(primary, cd.GetProgressDeadlineSeconds())

|

||||

if err != nil {

|

||||

if retriable {

|

||||

return retriable, fmt.Errorf("Halt %s.%s advancement %s", cd.Name, cd.Namespace, err.Error())

|

||||

} else {

|

||||

return retriable, err

|

||||

}

|

||||

}

|

||||

|

||||

if primary.Spec.Replicas != nil && *primary.Spec.Replicas == 0 {

|

||||

return fmt.Errorf("halt %s.%s advancement %s",

|

||||

cd.Name, cd.Namespace, "primary deployment is scaled to zero")

|

||||

return true, fmt.Errorf("halt %s.%s advancement primary deployment is scaled to zero",

|

||||

cd.Name, cd.Namespace)

|

||||

}

|

||||

return nil

|

||||

return true, nil

|

||||

}
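Note on the scaled-to-zero check in IsPrimaryReady: `Spec.Replicas` is a `*int32`, and comparing two pointers with `==` compares addresses, not the values they point to. A pointer freshly returned by a helper such as `int32p` therefore never equals another pointer. The sketch below illustrates the difference; `isScaledToZero` is a hypothetical helper name, not part of the diff.

```go
package main

import "fmt"

// int32p mirrors the helper shown in the diff: it returns a pointer to a copy of i.
func int32p(i int32) *int32 {
	return &i
}

// isScaledToZero is a hypothetical helper that compares the pointed-to value
// instead of the pointer itself. A nil pointer is treated as "not zero".
func isScaledToZero(replicas *int32) bool {
	return replicas != nil && *replicas == 0
}

func main() {
	replicas := int32p(0)

	// Pointer comparison: two distinct allocations, so this is false
	// even though both point to the value 0.
	fmt.Println(replicas == int32p(0))

	// Value comparison through the helper.
	fmt.Println(isScaledToZero(replicas))
	fmt.Println(isScaledToZero(int32p(2)))
	fmt.Println(isScaledToZero(nil))
}
```

Dereferencing after a nil check keeps the comparison safe when the replica count is unset.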

|

||||

|

||||

// IsCanaryReady checks the canary deployment status and returns an error if

|

||||

// the deployment is in the middle of a rolling update or if the pods are unhealthy

|

||||

// it will return a non retriable error if the rolling update is stuck

|

||||

func (c *CanaryDeployer) IsCanaryReady(cd *flaggerv1.Canary) (bool, error) {

|

||||

canary, err := c.kubeClient.AppsV1().Deployments(cd.Namespace).Get(cd.Spec.TargetRef.Name, metav1.GetOptions{})

|

||||

if err != nil {

|

||||

if errors.IsNotFound(err) {

|

||||

return true, fmt.Errorf("deployment %s.%s not found", cd.Spec.TargetRef.Name, cd.Namespace)

|

||||

}

|

||||

return true, fmt.Errorf("deployment %s.%s query error %v", cd.Spec.TargetRef.Name, cd.Namespace, err)

|

||||

}

|

||||

|

||||

retriable, err := c.isDeploymentReady(canary, cd.GetProgressDeadlineSeconds())

|

||||

if err != nil {

|

||||

if retriable {

|

||||

return retriable, fmt.Errorf("Halt %s.%s advancement %s", cd.Name, cd.Namespace, err.Error())

|

||||

} else {

|

||||

return retriable, fmt.Errorf("deployment does not have minimum availability for more than %vs",

|

||||

cd.GetProgressDeadlineSeconds())

|

||||

}

|

||||

}

|

||||

|

||||

return true, nil

|

||||

}

|

||||

|

||||

// IsNewSpec returns true if the canary deployment pod spec has changed

|

||||

@@ -127,6 +153,7 @@ func (c *CanaryDeployer) IsNewSpec(cd *flaggerv1.Canary) (bool, error) {

|

||||

// SetFailedChecks updates the canary failed checks counter

|

||||

func (c *CanaryDeployer) SetFailedChecks(cd *flaggerv1.Canary, val int) error {

|

||||

cd.Status.FailedChecks = val

|

||||

cd.Status.LastTransitionTime = metav1.Now()

|

||||

cd, err := c.flaggerClient.FlaggerV1alpha1().Canaries(cd.Namespace).Update(cd)

|

||||

if err != nil {

|

||||

return fmt.Errorf("deployment %s.%s update error %v", cd.Spec.TargetRef.Name, cd.Namespace, err)

|

||||

@@ -135,8 +162,9 @@ func (c *CanaryDeployer) SetFailedChecks(cd *flaggerv1.Canary, val int) error {

|

||||

}

|

||||

|

||||

// SetState updates the canary status state

|

||||

func (c *CanaryDeployer) SetState(cd *flaggerv1.Canary, state string) error {

|

||||

func (c *CanaryDeployer) SetState(cd *flaggerv1.Canary, state flaggerv1.CanaryState) error {

|

||||

cd.Status.State = state

|

||||

cd.Status.LastTransitionTime = metav1.Now()

|

||||

cd, err := c.flaggerClient.FlaggerV1alpha1().Canaries(cd.Namespace).Update(cd)

|

||||

if err != nil {

|

||||

return fmt.Errorf("deployment %s.%s update error %v", cd.Spec.TargetRef.Name, cd.Namespace, err)

|

||||

@@ -163,6 +191,7 @@ func (c *CanaryDeployer) SyncStatus(cd *flaggerv1.Canary, status flaggerv1.Canar

|

||||

cd.Status.State = status.State

|

||||

cd.Status.FailedChecks = status.FailedChecks

|

||||

cd.Status.CanaryRevision = specEnc

|

||||

cd.Status.LastTransitionTime = metav1.Now()

|

||||

cd, err = c.flaggerClient.FlaggerV1alpha1().Canaries(cd.Namespace).Update(cd)

|

||||

if err != nil {

|

||||

return fmt.Errorf("deployment %s.%s update error %v", cd.Spec.TargetRef.Name, cd.Namespace, err)

|

||||

@@ -238,8 +267,11 @@ func (c *CanaryDeployer) createPrimaryDeployment(cd *flaggerv1.Canary) error {

|

||||

},

|

||||

},

|

||||

Spec: appsv1.DeploymentSpec{

|

||||

Replicas: canaryDep.Spec.Replicas,

|

||||

Strategy: canaryDep.Spec.Strategy,

|

||||

ProgressDeadlineSeconds: canaryDep.Spec.ProgressDeadlineSeconds,

|

||||

MinReadySeconds: canaryDep.Spec.MinReadySeconds,

|

||||

RevisionHistoryLimit: canaryDep.Spec.RevisionHistoryLimit,

|

||||

Replicas: canaryDep.Spec.Replicas,

|

||||

Strategy: canaryDep.Spec.Strategy,

|

||||

Selector: &metav1.LabelSelector{

|

||||

MatchLabels: map[string]string{

|

||||

"app": primaryName,

|

||||

@@ -314,26 +346,41 @@ func (c *CanaryDeployer) createPrimaryHpa(cd *flaggerv1.Canary) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

func (c *CanaryDeployer) getDeploymentStatus(deployment *appsv1.Deployment) (string, bool) {

|

||||

// isDeploymentReady determines if a deployment is ready by checking the status conditions

|

||||

// if a deployment has exceeded the progress deadline it returns a non retriable error

|

||||

func (c *CanaryDeployer) isDeploymentReady(deployment *appsv1.Deployment, deadline int) (bool, error) {

|

||||

retriable := true

|

||||

if deployment.Generation <= deployment.Status.ObservedGeneration {

|

||||

cond := c.getDeploymentCondition(deployment.Status, appsv1.DeploymentProgressing)

|

||||

if cond != nil && cond.Reason == "ProgressDeadlineExceeded" {

|

||||

return fmt.Sprintf("deployment %q exceeded its progress deadline", deployment.GetName()), false

|

||||

} else if deployment.Spec.Replicas != nil && deployment.Status.UpdatedReplicas < *deployment.Spec.Replicas {

|

||||

return fmt.Sprintf("waiting for rollout to finish: %d out of %d new replicas have been updated",

|

||||

deployment.Status.UpdatedReplicas, *deployment.Spec.Replicas), false

|

||||

} else if deployment.Status.Replicas > deployment.Status.UpdatedReplicas {

|

||||

return fmt.Sprintf("waiting for rollout to finish: %d old replicas are pending termination",

|

||||

deployment.Status.Replicas-deployment.Status.UpdatedReplicas), false

|

||||

} else if deployment.Status.AvailableReplicas < deployment.Status.UpdatedReplicas {

|

||||

return fmt.Sprintf("waiting for rollout to finish: %d of %d updated replicas are available",

|

||||

deployment.Status.AvailableReplicas, deployment.Status.UpdatedReplicas), false

|

||||

progress := c.getDeploymentCondition(deployment.Status, appsv1.DeploymentProgressing)

|

||||

if progress != nil {

|

||||

// Determine if the deployment is stuck by checking if there is a minimum replicas unavailable condition

|

||||

// and if the last update time exceeds the deadline

|

||||

available := c.getDeploymentCondition(deployment.Status, appsv1.DeploymentAvailable)

|

||||

if available != nil && available.Status == "False" && available.Reason == "MinimumReplicasUnavailable" {

|

||||

from := available.LastUpdateTime

|

||||

delta := time.Duration(deadline) * time.Second

|

||||

retriable = !from.Add(delta).Before(time.Now())

|

||||

}

|

||||

}

|

||||

|

||||

if progress != nil && progress.Reason == "ProgressDeadlineExceeded" {

|

||||

return false, fmt.Errorf("deployment %q exceeded its progress deadline", deployment.GetName())

|

||||

} else if deployment.Spec.Replicas != nil && deployment.Status.UpdatedReplicas < *deployment.Spec.Replicas {

|

||||

return retriable, fmt.Errorf("waiting for rollout to finish: %d out of %d new replicas have been updated",

|

||||

deployment.Status.UpdatedReplicas, *deployment.Spec.Replicas)

|

||||

} else if deployment.Status.Replicas > deployment.Status.UpdatedReplicas {

|

||||

return retriable, fmt.Errorf("waiting for rollout to finish: %d old replicas are pending termination",

|

||||

deployment.Status.Replicas-deployment.Status.UpdatedReplicas)

|

||||

} else if deployment.Status.AvailableReplicas < deployment.Status.UpdatedReplicas {

|

||||

return retriable, fmt.Errorf("waiting for rollout to finish: %d of %d updated replicas are available",

|

||||

deployment.Status.AvailableReplicas, deployment.Status.UpdatedReplicas)

|

||||

}

|

||||

|

||||

} else {

|

||||

return "waiting for rollout to finish: observed deployment generation less than desired generation", false

|

||||

return true, fmt.Errorf("waiting for rollout to finish: observed deployment generation less than desired generation")

|

||||

}

|

||||

|

||||

return "ready", true

|

||||

return true, nil

|

||||

}

|

||||

|

||||

func (c *CanaryDeployer) getDeploymentCondition(

|

||||

|

||||

@@ -162,7 +162,7 @@ func newTestHPA() *hpav2.HorizontalPodAutoscaler {

|

||||

{

|

||||

Type: "Resource",

|

||||

Resource: &hpav2.ResourceMetricSource{

|

||||

Name: "cpu",

|

||||

Name: "cpu",

|

||||

TargetAverageUtilization: int32p(99),

|

||||

},

|

||||

},

|

||||

@@ -319,7 +319,12 @@ func TestCanaryDeployer_IsReady(t *testing.T) {

|

||||

t.Fatal(err.Error())

|

||||

}

|

||||

|

||||

err = deployer.IsReady(canary)

|

||||

_, err = deployer.IsPrimaryReady(canary)

|

||||

if err != nil {

|

||||

t.Fatal(err.Error())

|

||||

}

|

||||

|

||||

_, err = deployer.IsCanaryReady(canary)

|

||||

if err != nil {

|

||||

t.Fatal(err.Error())

|

||||

}

|

||||

@@ -382,7 +387,7 @@ func TestCanaryDeployer_SetState(t *testing.T) {

|

||||

t.Fatal(err.Error())

|

||||

}

|

||||

|

||||

err = deployer.SetState(canary, "running")

|

||||

err = deployer.SetState(canary, v1alpha1.CanaryRunning)

|

||||

if err != nil {

|

||||

t.Fatal(err.Error())

|

||||

}

|

||||

@@ -392,8 +397,8 @@ func TestCanaryDeployer_SetState(t *testing.T) {

|

||||

t.Fatal(err.Error())

|

||||

}

|

||||

|

||||

if res.Status.State != "running" {

|

||||

t.Errorf("Got %v wanted %v", res.Status.State, "running")

|

||||

if res.Status.State != v1alpha1.CanaryRunning {

|

||||

t.Errorf("Got %v wanted %v", res.Status.State, v1alpha1.CanaryRunning)

|

||||

}

|

||||

}

|

||||

|

||||

@@ -419,7 +424,7 @@ func TestCanaryDeployer_SyncStatus(t *testing.T) {

|

||||

}

|

||||

|

||||

status := v1alpha1.CanaryStatus{

|

||||

State: "running",

|

||||

State: v1alpha1.CanaryRunning,

|

||||

FailedChecks: 2,

|

||||

}

|

||||

err = deployer.SyncStatus(canary, status)

|

||||

|

||||

@@ -73,9 +73,9 @@ func (cr *CanaryRecorder) SetTotal(namespace string, total int) {

|

||||

func (cr *CanaryRecorder) SetStatus(cd *flaggerv1.Canary) {

|

||||

status := 1

|

||||

switch cd.Status.State {

|

||||

case "running":

|

||||

case flaggerv1.CanaryRunning:

|

||||

status = 0

|

||||

case "failed":

|

||||

case flaggerv1.CanaryFailed:

|

||||

status = 2

|

||||

default:

|

||||

status = 1

|

||||

|

||||

@@ -56,8 +56,8 @@ func (c *Controller) advanceCanary(name string, namespace string) {

|

||||

maxWeight = cd.Spec.CanaryAnalysis.MaxWeight

|

||||

}

|

||||

|

||||

// check primary and canary deployments status

|

||||

if err := c.deployer.IsReady(cd); err != nil {

|

||||

// check primary deployment status

|

||||

if _, err := c.deployer.IsPrimaryReady(cd); err != nil {

|

||||

c.recordEventWarningf(cd, "%v", err)

|

||||

return

|

||||

}

|

||||

@@ -81,10 +81,30 @@ func (c *Controller) advanceCanary(name string, namespace string) {

|

||||

c.recorder.SetDuration(cd, time.Since(begin))

|

||||

}()

|

||||

|

||||

// check canary deployment status

|

||||

retriable, err := c.deployer.IsCanaryReady(cd)

|

||||

if err != nil && retriable {

|

||||

c.recordEventWarningf(cd, "%v", err)

|

||||

return

|

||||

}

|

||||

|

||||

// check if the number of failed checks reached the threshold

|

||||

if cd.Status.State == "running" && cd.Status.FailedChecks >= cd.Spec.CanaryAnalysis.Threshold {

|

||||

c.recordEventWarningf(cd, "Rolling back %s.%s failed checks threshold reached %v",

|

||||

cd.Name, cd.Namespace, cd.Status.FailedChecks)

|

||||

if cd.Status.State == flaggerv1.CanaryRunning &&

|

||||

(!retriable || cd.Status.FailedChecks >= cd.Spec.CanaryAnalysis.Threshold) {

|

||||

|

||||

if cd.Status.FailedChecks >= cd.Spec.CanaryAnalysis.Threshold {

|

||||

c.recordEventWarningf(cd, "Rolling back %s.%s failed checks threshold reached %v",

|

||||

cd.Name, cd.Namespace, cd.Status.FailedChecks)

|

||||

c.sendNotification(cd, fmt.Sprintf("Failed checks threshold reached %v", cd.Status.FailedChecks),

|

||||

false, true)

|

||||

}

|

||||

|

||||

if !retriable {

|

||||

c.recordEventWarningf(cd, "Rolling back %s.%s progress deadline exceeded %v",

|

||||

cd.Name, cd.Namespace, err)

|

||||

c.sendNotification(cd, fmt.Sprintf("Progress deadline exceeded %v", err),

|

||||

false, true)

|

||||

}

|

||||

|

||||

// route all traffic back to primary

|

||||

primaryRoute.Weight = 100

|

||||

@@ -96,7 +116,7 @@ func (c *Controller) advanceCanary(name string, namespace string) {

|

||||

|

||||

c.recorder.SetWeight(cd, primaryRoute.Weight, canaryRoute.Weight)

|

||||

c.recordEventWarningf(cd, "Canary failed! Scaling down %s.%s",

|

||||

cd.Spec.TargetRef.Name, cd.Namespace)

|

||||

cd.Name, cd.Namespace)

|

||||

|

||||

// shutdown canary

|

||||

if err := c.deployer.Scale(cd, 0); err != nil {

|

||||

@@ -105,10 +125,11 @@ func (c *Controller) advanceCanary(name string, namespace string) {

|

||||

}

|

||||

|

||||

// mark canary as failed

|

||||

if err := c.deployer.SetState(cd, "failed"); err != nil {

|

||||

if err := c.deployer.SyncStatus(cd, flaggerv1.CanaryStatus{State: flaggerv1.CanaryFailed}); err != nil {

|

||||

c.logger.Errorf("%v", err)

|

||||

return

|

||||

}

|

||||

|

||||

c.recorder.SetStatus(cd)

|

||||

return

|

||||

}
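Note on the rollback condition in advanceCanary: a running canary is rolled back either when the canary deployment reports a non-retriable error or when the failed checks counter reaches the threshold. The sketch below restates that predicate in isolation; `shouldRollback` and the local `CanaryState` type are stand-ins introduced for illustration, and the string value of `CanaryRunning` is assumed.

```go
package main

import "fmt"

// CanaryState is a local stand-in for flaggerv1.CanaryState.
type CanaryState string

// CanaryRunning is assumed to carry the value "running".
const CanaryRunning CanaryState = "running"

// shouldRollback is a hypothetical helper that mirrors the condition in the diff:
// only a running canary can be rolled back, and it is rolled back when the
// deployment is stuck (not retriable) or too many checks have failed.
func shouldRollback(state CanaryState, retriable bool, failedChecks, threshold int) bool {
	return state == CanaryRunning && (!retriable || failedChecks >= threshold)
}

func main() {
	// Threshold reached while the deployment is otherwise healthy.
	fmt.Println(shouldRollback(CanaryRunning, true, 10, 10))

	// Progress deadline exceeded before any check failed.
	fmt.Println(shouldRollback(CanaryRunning, false, 0, 10))

	// Healthy and below the threshold: keep advancing.
	fmt.Println(shouldRollback(CanaryRunning, true, 2, 10))
}
```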

|

||||

@@ -175,11 +196,13 @@ func (c *Controller) advanceCanary(name string, namespace string) {

|

||||

}

|

||||

|

||||

// update status

|

||||

if err := c.deployer.SetState(cd, "finished"); err != nil {

|

||||

if err := c.deployer.SetState(cd, flaggerv1.CanaryFinished); err != nil {

|

||||

c.recordEventWarningf(cd, "%v", err)

|

||||

return

|

||||

}

|

||||

c.recorder.SetStatus(cd)

|

||||

c.sendNotification(cd, "Canary analysis completed successfully, promotion finished.",

|

||||

false, false)

|

||||

}

|

||||

}

|

||||

|

||||

@@ -190,22 +213,26 @@ func (c *Controller) checkCanaryStatus(cd *flaggerv1.Canary, deployer CanaryDepl

|

||||

}

|

||||

|

||||

if cd.Status.State == "" {

|

||||

if err := deployer.SyncStatus(cd, flaggerv1.CanaryStatus{State: "initialized"}); err != nil {

|

||||

if err := deployer.SyncStatus(cd, flaggerv1.CanaryStatus{State: flaggerv1.CanaryInitialized}); err != nil {

|

||||

c.logger.Errorf("%v", err)

|

||||

return false

|

||||

}

|

||||

c.recorder.SetStatus(cd)

|

||||

c.recordEventInfof(cd, "Initialization done! %s.%s", cd.Name, cd.Namespace)

|

||||

c.sendNotification(cd, "New deployment detected, initialization completed.",

|

||||

true, false)

|

||||

return false

|

||||

}

|

||||

|

||||

if diff, err := deployer.IsNewSpec(cd); diff {

|

||||

c.recordEventInfof(cd, "New revision detected! Scaling up %s.%s", cd.Spec.TargetRef.Name, cd.Namespace)

|

||||

c.sendNotification(cd, "New revision detected, starting canary analysis.",

|

||||

true, false)

|

||||

if err = deployer.Scale(cd, 1); err != nil {

|

||||

c.recordEventErrorf(cd, "%v", err)

|

||||

return false

|

||||

}

|

||||

if err := deployer.SyncStatus(cd, flaggerv1.CanaryStatus{State: "running"}); err != nil {

|

||||

if err := deployer.SyncStatus(cd, flaggerv1.CanaryStatus{State: flaggerv1.CanaryRunning}); err != nil {

|

||||

c.logger.Errorf("%v", err)

|

||||

return false

|

||||

}

|

||||

|

||||

@@ -6,6 +6,7 @@ import (

|

||||

"time"

|

||||

|

||||

fakeIstio "github.com/knative/pkg/client/clientset/versioned/fake"

|

||||

"github.com/stefanprodan/flagger/pkg/apis/flagger/v1alpha1"

|

||||

fakeFlagger "github.com/stefanprodan/flagger/pkg/client/clientset/versioned/fake"

|

||||

informers "github.com/stefanprodan/flagger/pkg/client/informers/externalversions"

|

||||

"github.com/stefanprodan/flagger/pkg/logging"

|

||||

@@ -142,3 +143,71 @@ func TestScheduler_NewRevision(t *testing.T) {

|

||||

t.Errorf("Got canary replicas %v wanted %v", *c.Spec.Replicas, 1)

|

||||

}

|

||||

}

|

||||

|

||||

func TestScheduler_Rollback(t *testing.T) {

|

||||

canary := newTestCanary()

|

||||

dep := newTestDeployment()

|

||||

hpa := newTestHPA()

|

||||

|

||||

flaggerClient := fakeFlagger.NewSimpleClientset(canary)

|

||||

kubeClient := fake.NewSimpleClientset(dep, hpa)

|

||||

istioClient := fakeIstio.NewSimpleClientset()

|

||||

|

||||

logger, _ := logging.NewLogger("debug")

|

||||

deployer := CanaryDeployer{

|

||||

flaggerClient: flaggerClient,

|

||||

kubeClient: kubeClient,

|

||||

logger: logger,

|

||||

}

|

||||

router := CanaryRouter{

|

||||

flaggerClient: flaggerClient,

|

||||

kubeClient: kubeClient,

|

||||

istioClient: istioClient,

|

||||

logger: logger,

|

||||

}

|

||||

observer := CanaryObserver{

|

||||

metricsServer: "fake",

|

||||

}

|

||||

|

||||

flaggerInformerFactory := informers.NewSharedInformerFactory(flaggerClient, noResyncPeriodFunc())

|

||||

flaggerInformer := flaggerInformerFactory.Flagger().V1alpha1().Canaries()

|

||||

|

||||

ctrl := &Controller{

|

||||

kubeClient: kubeClient,

|

||||

istioClient: istioClient,

|

||||

flaggerClient: flaggerClient,

|

||||

flaggerLister: flaggerInformer.Lister(),

|

||||

flaggerSynced: flaggerInformer.Informer().HasSynced,

|

||||

workqueue: workqueue.NewNamedRateLimitingQueue(workqueue.DefaultControllerRateLimiter(), controllerAgentName),

|

||||

eventRecorder: &record.FakeRecorder{},

|

||||

logger: logger,

|

||||

canaries: new(sync.Map),

|

||||

flaggerWindow: time.Second,

|

||||

deployer: deployer,

|

||||

router: router,

|

||||

observer: observer,

|

||||

recorder: NewCanaryRecorder(false),

|

||||

}

|

||||

ctrl.flaggerSynced = alwaysReady

|

||||

|

||||

// init

|

||||

ctrl.advanceCanary("podinfo", "default")

|

||||

|

||||

// update failed checks to max

|

||||

err := deployer.SyncStatus(canary, v1alpha1.CanaryStatus{State: v1alpha1.CanaryRunning, FailedChecks: 11})

|

||||

if err != nil {

|

||||

t.Fatal(err.Error())

|

||||

}

|

||||

|

||||

// detect changes

|

||||

ctrl.advanceCanary("podinfo", "default")

|

||||

|

||||

c, err := flaggerClient.FlaggerV1alpha1().Canaries("default").Get("podinfo", metav1.GetOptions{})

|

||||

if err != nil {

|

||||

t.Fatal(err.Error())

|

||||

}

|

||||

|

||||

if c.Status.State != v1alpha1.CanaryFailed {

|

||||

t.Errorf("Got canary state %v wanted %v", c.Status.State, v1alpha1.CanaryFailed)

|

||||

}

|

||||

}

|

||||

|

||||

110

pkg/notifier/slack.go

Normal file

@@ -0,0 +1,110 @@

|

||||

package notifier

|

||||

|

||||

import (

|

||||

"bytes"

|

||||

"encoding/json"

|

||||

"errors"

|

||||

"fmt"

|

||||

"io/ioutil"

|

||||

"net/http"

|

||||

"net/url"

|

||||

)

|

||||

|

||||

// Slack holds the hook URL, username, channel and icon emoji

|

||||

type Slack struct {

|

||||

URL string

|

||||

Username string

|

||||

Channel string

|

||||

IconEmoji string

|

||||

}

|

||||

|

||||

// SlackPayload holds the channel and attachments

|

||||

type SlackPayload struct {

|

||||

Channel string `json:"channel"`

|

||||

Username string `json:"username"`

|

||||

IconUrl string `json:"icon_url"`

|

||||

IconEmoji string `json:"icon_emoji"`

|

||||

Text string `json:"text,omitempty"`

|

||||

Attachments []SlackAttachment `json:"attachments,omitempty"`

|

||||

}

|

||||

|

||||

// SlackAttachment holds the markdown message body

|

||||

type SlackAttachment struct {

|

||||

Color string `json:"color"`

|

||||

AuthorName string `json:"author_name"`

|

||||

Text string `json:"text"`

|

||||

MrkdwnIn []string `json:"mrkdwn_in"`

|

||||

Fields []SlackField `json:"fields"`

|

||||

}

|

||||

|

||||

type SlackField struct {

|

||||

Title string `json:"title"`

|

||||

Value string `json:"value"`

|