mirror of

https://github.com/fluxcd/flagger.git

synced 2026-04-15 06:57:34 +00:00

Compare commits

259 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

210e21176b | ||

|

|

0a0c3835d6 | ||

|

|

531893b279 | ||

|

|

e6bb47f920 | ||

|

|

307813a628 | ||

|

|

38fc6b567f | ||

|

|

17015b23bf | ||

|

|

c9e53dd069 | ||

|

|

e26a10b481 | ||

|

|

281d869f54 | ||

|

|

91126d102d | ||

|

|

ba4646cddb | ||

|

|

438877674a | ||

|

|

da451a0cf4 | ||

|

|

5e1d00d4d2 | ||

|

|

00d54d268c | ||

|

|

174e9fdc93 | ||

|

|

f7fd6cce8c | ||

|

|

5dc336d609 | ||

|

|

ae6a683f23 | ||

|

|

5acf189fbe | ||

|

|

090329d0c9 | ||

|

|

96fd359b99 | ||

|

|

519f343fcc | ||

|

|

5d2a7ba9e7 | ||

|

|

1664ca436e | ||

|

|

84ae65c763 | ||

|

|

6085753d84 | ||

|

|

da706be4aa | ||

|

|

65e3bcb1d8 | ||

|

|

582f6eec77 | ||

|

|

4200c0159d | ||

|

|

cf8fe94fca | ||

|

|

30d553c6f3 | ||

|

|

f8f6a994dd | ||

|

|

085639bbde | ||

|

|

3bfa7c974d | ||

|

|

d29e475277 | ||

|

|

b7ba3ab063 | ||

|

|

9796903c78 | ||

|

|

2f25fab560 | ||

|

|

215c859619 | ||

|

|

7071d42152 | ||

|

|

08b1e52278 | ||

|

|

801f801e02 | ||

|

|

af5634962f | ||

|

|

fe7615afb4 | ||

|

|

fc6bedda23 | ||

|

|

a7f997c092 | ||

|

|

121eb767cb | ||

|

|

cd3a1d8478 | ||

|

|

6f6af25467 | ||

|

|

a0f1638f6c | ||

|

|

fc13276f0e | ||

|

|

8a0b92db19 | ||

|

|

2f0d34adb2 | ||

|

|

617f416291 | ||

|

|

7a438ad323 | ||

|

|

5776f0b64b | ||

|

|

96d190a789 | ||

|

|

d2038699c0 | ||

|

|

cb3b5cba90 | ||

|

|

8c881ab758 | ||

|

|

caefaf73aa | ||

|

|

e8d7001f5e | ||

|

|

ae0f20a445 | ||

|

|

4ddc12185f | ||

|

|

e81627a96d | ||

|

|

47be2a25f2 | ||

|

|

6832a4ffde | ||

|

|

bd58a47862 | ||

|

|

613fb92a25 | ||

|

|

250d9f2836 | ||

|

|

0cab25e44c | ||

|

|

cbf6b462e4 | ||

|

|

8695660c58 | ||

|

|

1216990f52 | ||

|

|

204228bc8f | ||

|

|

ebc26e9ea0 | ||

|

|

3c03119d2d | ||

|

|

644049092f | ||

|

|

578f447728 | ||

|

|

3bf926e419 | ||

|

|

48ee4f8bd2 | ||

|

|

b4964a0535 | ||

|

|

47ff00e9b9 | ||

|

|

6ca99a5ddb | ||

|

|

30b5054692 | ||

|

|

edf7b90c11 | ||

|

|

7f0f97d14d | ||

|

|

b03b75cd7e | ||

|

|

f0d2e60a9a | ||

|

|

328f1d9ea2 | ||

|

|

a14013f393 | ||

|

|

aef1d7904d | ||

|

|

dc478188c1 | ||

|

|

9fa6e775c0 | ||

|

|

584350623b | ||

|

|

919959b32c | ||

|

|

ec54eedf93 | ||

|

|

f311797215 | ||

|

|

059b5d0f89 | ||

|

|

7542640494 | ||

|

|

52493f181a | ||

|

|

5d95143536 | ||

|

|

a2c5861ca5 | ||

|

|

fcc07f02b0 | ||

|

|

3f43526aac | ||

|

|

cd07da9137 | ||

|

|

30ab182b2e | ||

|

|

2ddd9587f7 | ||

|

|

50800857b6 | ||

|

|

8f50521435 | ||

|

|

45ecaa9084 | ||

|

|

9c7db58d87 | ||

|

|

6b11e9714b | ||

|

|

7f5a9ed34a | ||

|

|

bc9a231d26 | ||

|

|

0bb3815f73 | ||

|

|

944cc8ef62 | ||

|

|

e97334d7c1 | ||

|

|

2dacf08c30 | ||

|

|

6f6590774e | ||

|

|

fe5bb3fd26 | ||

|

|

c638edd346 | ||

|

|

c02477a245 | ||

|

|

da6da9c839 | ||

|

|

d83293776d | ||

|

|

d5994ac127 | ||

|

|

36584826bb | ||

|

|

7a6fccb70d | ||

|

|

ca1971c085 | ||

|

|

97eaecec48 | ||

|

|

01d47808a7 | ||

|

|

7d2f3dea7a | ||

|

|

bce1d02b3b | ||

|

|

9a993b131d | ||

|

|

636a1d7576 | ||

|

|

fb621ec465 | ||

|

|

00e993c686 | ||

|

|

374a55d8f5 | ||

|

|

1e88e2fa72 | ||

|

|

a2326198f6 | ||

|

|

a0031d626a | ||

|

|

a2b58d59ab | ||

|

|

e8b17406b7 | ||

|

|

5245045d84 | ||

|

|

b57d39369b | ||

|

|

db72fe3d97 | ||

|

|

3a2f688c56 | ||

|

|

13a2a5073f | ||

|

|

418853fd0c | ||

|

|

cfb68a6e56 | ||

|

|

88b13274d7 | ||

|

|

056ba675a7 | ||

|

|

873b74561c | ||

|

|

8b42ce374d | ||

|

|

4871003ff1 | ||

|

|

b4e7ad5575 | ||

|

|

1a246060e2 | ||

|

|

6a3d74c645 | ||

|

|

2073bd2027 | ||

|

|

c63554c534 | ||

|

|

be8ed8a696 | ||

|

|

98530d9968 | ||

|

|

38adc513a6 | ||

|

|

eb12e3bde1 | ||

|

|

8b2839d36e | ||

|

|

f0fa2aa6bb | ||

|

|

33528b073f | ||

|

|

cf8783ea37 | ||

|

|

00355635f8 | ||

|

|

aa485f4bf1 | ||

|

|

273b05fb24 | ||

|

|

e470474d6f | ||

|

|

ddfd2fe2ec | ||

|

|

7533d0ae99 | ||

|

|

04ec7f0388 | ||

|

|

419000cc13 | ||

|

|

0dc8edb437 | ||

|

|

0759b6531b | ||

|

|

d8f984de7d | ||

|

|

82e490a875 | ||

|

|

c6dffd9d3e | ||

|

|

8ee3d5835a | ||

|

|

1209d7e42b | ||

|

|

cdc05ba506 | ||

|

|

a6fae0195f | ||

|

|

11375b6890 | ||

|

|

3811470ebf | ||

|

|

e2b08eb4dc | ||

|

|

38d3ca1022 | ||

|

|

df459c5fe6 | ||

|

|

d1d9c0e2a9 | ||

|

|

c1b1d7d448 | ||

|

|

e6b5ee2042 | ||

|

|

0170fc6166 | ||

|

|

4cc2ada2a2 | ||

|

|

a5d3e4f6a6 | ||

|

|

7c92b33886 | ||

|

|

0f0b9414ae | ||

|

|

6fbb67ee8c | ||

|

|

6634f1a9ae | ||

|

|

8da8138f77 | ||

|

|

588f4c477b | ||

|

|

fda1775d3a | ||

|

|

fc71d53c71 | ||

|

|

ab2a320659 | ||

|

|

7f50f81ac7 | ||

|

|

c36a13ccff | ||

|

|

47de726345 | ||

|

|

7a4fdbddc0 | ||

|

|

0dc6f33550 | ||

|

|

b2436eb0df | ||

|

|

cc673159d7 | ||

|

|

17c310d66d | ||

|

|

e7357c4e07 | ||

|

|

c44de2d7c3 | ||

|

|

d82b2c219a | ||

|

|

35c8957a55 | ||

|

|

8555f8250a | ||

|

|

8137a25b13 | ||

|

|

2db5573c0e | ||

|

|

1e382203b8 | ||

|

|

873903a4cb | ||

|

|

e5b8afc085 | ||

|

|

ded658fed9 | ||

|

|

88d8858900 | ||

|

|

737c185aa6 | ||

|

|

0006a68740 | ||

|

|

4db91f7062 | ||

|

|

b8c23967b7 | ||

|

|

2019d048a4 | ||

|

|

fe0a4eb20c | ||

|

|

a35b0e8639 | ||

|

|

4c0843f92a | ||

|

|

867c1af897 | ||

|

|

100308289f | ||

|

|

3d4739760d | ||

|

|

9f321dd685 | ||

|

|

ba6078f235 | ||

|

|

cd2f1a24bd | ||

|

|

b87a81b798 | ||

|

|

0f9dd61786 | ||

|

|

4869a9f3ae | ||

|

|

cd6f36302d | ||

|

|

e5fdc7a57d | ||

|

|

834a601311 | ||

|

|

a2784c533e | ||

|

|

8e3ee3439c | ||

|

|

f9d40cfe1b | ||

|

|

b26b49fac2 | ||

|

|

f68d647fd0 | ||

|

|

deb3fb01a2 | ||

|

|

3accd23a19 | ||

|

|

6a66113560 | ||

|

|

6a7f7415fa | ||

|

|

4654f2cba9 | ||

|

|

17557dc206 |

50

.cosign/README.md

Normal file

@@ -0,0 +1,50 @@

|

||||

# Flagger signed releases

|

||||

|

||||

Flagger releases published to GitHub Container Registry as multi-arch container images

|

||||

are signed using [cosign](https://github.com/sigstore/cosign).

|

||||

|

||||

## Verify Flagger images

|

||||

|

||||

Install the [cosign](https://github.com/sigstore/cosign) CLI:

|

||||

|

||||

```sh

|

||||

brew install sigstore/tap/cosign

|

||||

```

|

||||

|

||||

Verify a Flagger release with cosign CLI:

|

||||

|

||||

```sh

|

||||

cosign verify -key https://raw.githubusercontent.com/fluxcd/flagger/main/.cosign/cosign.pub \

|

||||

ghcr.io/fluxcd/flagger:1.13.0

|

||||

```

|

||||
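Instead of fetching the key over HTTPS on every run, the public key can be pinned in a local file. The sketch below is an illustration, not part of the upstream README: the `verify_flagger_image` helper and the temporary key file are hypothetical, and the `--key` spelling of the flag is an assumption that depends on the installed cosign version (the command above uses `-key`).

```sh
set -eu

# Pin the public key published in .cosign/cosign.pub in a local file
# (a temporary file here; a real setup would keep it under version control).
KEY_FILE=$(mktemp)
cat > "$KEY_FILE" <<'EOF'
-----BEGIN PUBLIC KEY-----
MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEST+BqQ1XZhhVYx0YWQjdUJYIG5Lt
iz2+UxRIqmKBqNmce2T+l45qyqOs99qfD7gLNGmkVZ4vtJ9bM7FxChFczg==
-----END PUBLIC KEY-----
EOF

# Hypothetical helper: verify one Flagger image tag against the pinned key.
# Assumes a cosign release that accepts `--key`; older ones may need `-key`.
verify_flagger_image() {
  cosign verify --key "$KEY_FILE" "ghcr.io/fluxcd/flagger:$1"
}

# Example call (needs cosign and registry access), left commented out:
# verify_flagger_image 1.13.0
```

Pinning the key locally means a verification run does not depend on what the `main` branch serves at that moment.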

|

||||

Verify Flagger images before they get pulled on your Kubernetes clusters with [Kyverno](https://github.com/kyverno/kyverno/):

|

||||

|

||||

```yaml

|

||||

apiVersion: kyverno.io/v1

|

||||

kind: ClusterPolicy

|

||||

metadata:

|

||||

name: verify-flagger-image

|

||||

annotations:

|

||||

policies.kyverno.io/title: Verify Flagger Image

|

||||

policies.kyverno.io/category: Cosign

|

||||

policies.kyverno.io/severity: medium

|

||||

policies.kyverno.io/subject: Pod

|

||||

policies.kyverno.io/minversion: 1.4.2

|

||||

spec:

|

||||

validationFailureAction: enforce

|

||||

background: false

|

||||

rules:

|

||||

- name: verify-image

|

||||

match:

|

||||

resources:

|

||||

kinds:

|

||||

- Pod

|

||||

verifyImages:

|

||||

- image: "ghcr.io/fluxcd/flagger:*"

|

||||

key: |-

|

||||

-----BEGIN PUBLIC KEY-----

|

||||

MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEST+BqQ1XZhhVYx0YWQjdUJYIG5Lt

|

||||

iz2+UxRIqmKBqNmce2T+l45qyqOs99qfD7gLNGmkVZ4vtJ9bM7FxChFczg==

|

||||

-----END PUBLIC KEY-----

|

||||

```

|

||||

11

.cosign/cosign.key

Normal file

@@ -0,0 +1,11 @@

|

||||

-----BEGIN ENCRYPTED COSIGN PRIVATE KEY-----

|

||||

eyJrZGYiOnsibmFtZSI6InNjcnlwdCIsInBhcmFtcyI6eyJOIjozMjc2OCwiciI6

|

||||

OCwicCI6MX0sInNhbHQiOiIvK1MwbTNrU3pGMFFXdVVYQkFoY2gvTDc3NVJBSy9O

|

||||

cnkzUC9iMkxBZGF3PSJ9LCJjaXBoZXIiOnsibmFtZSI6Im5hY2wvc2VjcmV0Ym94

|

||||

Iiwibm9uY2UiOiJBNEFYL2IyU1BsMDBuY3JUNk45QkNOb0VLZTZLZEluRCJ9LCJj

|

||||

aXBoZXJ0ZXh0IjoiZ054UlJweXpraWtRMUVaRldsSnEvQXVUWTl0Vis2enBlWkIy

|

||||

dUFHREMzOVhUQlAwaWY5YStaZTE1V0NTT2FQZ01XQmtSZWhrQVVjQ3dZOGF2WTZa

|

||||

eFhZWWE3T1B4eFdidHJuSUVZM2hwZUk1M1dVQVZ6SXEzQjl0N0ZmV1JlVGsxdFlo

|

||||

b3hwQmxUSHY4U0c2azdPYk1aQnJleitzSGRWclF6YUdMdG12V1FOMTNZazRNb25i

|

||||

ZUpRSUJpUXFQTFg5NzFhSUlxU0dxYVhCanc9PSJ9

|

||||

-----END ENCRYPTED COSIGN PRIVATE KEY-----

|

||||

4

.cosign/cosign.pub

Normal file

@@ -0,0 +1,4 @@

|

||||

-----BEGIN PUBLIC KEY-----

|

||||

MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEST+BqQ1XZhhVYx0YWQjdUJYIG5Lt

|

||||

iz2+UxRIqmKBqNmce2T+l45qyqOs99qfD7gLNGmkVZ4vtJ9bM7FxChFczg==

|

||||

-----END PUBLIC KEY-----

|

||||

@@ -13,3 +13,6 @@ redirects:

|

||||

usage/skipper-progressive-delivery: tutorials/skipper-progressive-delivery.md

|

||||

usage/crossover-progressive-delivery: tutorials/crossover-progressive-delivery.md

|

||||

usage/traefik-progressive-delivery: tutorials/traefik-progressive-delivery.md

|

||||

usage/osm-progressive-delivery: tutorials/osm-progressive-delivery.md

|

||||

usage/kuma-progressive-delivery: tutorials/kuma-progressive-delivery.md

|

||||

usage/gatewayapi-progressive-delivery: tutorials/gatewayapi-progressive-delivery.md

|

||||

|

||||

5

.github/workflows/build.yaml

vendored

@@ -9,6 +9,9 @@ on:

|

||||

branches:

|

||||

- main

|

||||

|

||||

permissions:

|

||||

contents: read # for actions/checkout to fetch code

|

||||

|

||||

jobs:

|

||||

container:

|

||||

runs-on: ubuntu-latest

|

||||

@@ -25,7 +28,7 @@ jobs:

|

||||

- name: Setup Go

|

||||

uses: actions/setup-go@v2

|

||||

with:

|

||||

-go-version: 1.15.x
+go-version: 1.17.x

|

||||

- name: Download modules

|

||||

run: |

|

||||

go mod download

|

||||

|

||||

12

.github/workflows/e2e.yaml

vendored

@@ -9,25 +9,37 @@ on:

|

||||

branches:

|

||||

- main

|

||||

|

||||

permissions:

|

||||

contents: read # for actions/checkout to fetch code

|

||||

|

||||

jobs:

|

||||

kind:

|

||||

runs-on: ubuntu-latest

|

||||

strategy:

|

||||

fail-fast: false

|

||||

matrix:

|

||||

provider:

|

||||

# service mesh

|

||||

- istio

|

||||

- linkerd

|

||||

- osm

|

||||

- kuma

|

||||

# ingress controllers

|

||||

- contour

|

||||

- nginx

|

||||

- traefik

|

||||

- gloo

|

||||

- skipper

|

||||

- kubernetes

|

||||

- gatewayapi

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v2

|

||||

- name: Setup Kubernetes

|

||||

uses: engineerd/setup-kind@v0.5.0

|

||||

with:

|

||||

version: "v0.11.1"

|

||||

image: kindest/node:v1.21.1@sha256:fae9a58f17f18f06aeac9772ca8b5ac680ebbed985e266f711d936e91d113bad

|

||||

- name: Build container image

|

||||

run: |

|

||||

docker build -t test/flagger:latest .

|

||||

|

||||

18

.github/workflows/helm.yaml

vendored

Normal file

@@ -0,0 +1,18 @@

|

||||

name: helm

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

|

||||

permissions:

|

||||

contents: write # needed to push chart

|

||||

|

||||

jobs:

|

||||

build-push:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

- name: Publish Helm charts

|

||||

uses: stefanprodan/helm-gh-pages@v1.3.0

|

||||

with:

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

charts_url: https://flagger.app

|

||||

56

.github/workflows/push-ld.yml

vendored

Normal file

@@ -0,0 +1,56 @@

|

||||

name: push-ld

|

||||

on:

|

||||

workflow_dispatch:

|

||||

|

||||

env:

|

||||

IMAGE: "ghcr.io/fluxcd/flagger-loadtester"

|

||||

|

||||

permissions:

|

||||

contents: write # needed to write releases

|

||||

packages: write # needed for ghcr access

|

||||

|

||||

jobs:

|

||||

build-push:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

- name: Prepare

|

||||

id: prep

|

||||

run: |

|

||||

VERSION=$(grep 'VERSION' cmd/loadtester/main.go | head -1 | awk '{ print $4 }' | tr -d '"')

|

||||

echo ::set-output name=BUILD_DATE::$(date -u +'%Y-%m-%dT%H:%M:%SZ')

|

||||

echo ::set-output name=VERSION::${VERSION}

|

||||

- name: Setup QEMU

|

||||

uses: docker/setup-qemu-action@v1

|

||||

- name: Setup Docker Buildx

|

||||

id: buildx

|

||||

uses: docker/setup-buildx-action@v1

|

||||

- name: Login to GitHub Container Registry

|

||||

uses: docker/login-action@v1

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: fluxcdbot

|

||||

password: ${{ secrets.GHCR_TOKEN }}

|

||||

- name: Generate image meta

|

||||

id: meta

|

||||

uses: docker/metadata-action@v3

|

||||

with:

|

||||

images: |

|

||||

${{ env.IMAGE }}

|

||||

tags: |

|

||||

type=raw,value=${{ steps.prep.outputs.VERSION }}

|

||||

- name: Publish image

|

||||

uses: docker/build-push-action@v2

|

||||

with:

|

||||

push: true

|

||||

builder: ${{ steps.buildx.outputs.name }}

|

||||

context: .

|

||||

file: ./Dockerfile.loadtester

|

||||

platforms: linux/amd64,linux/arm64

|

||||

build-args: |

|

||||

REVISION=${{ github.sha }}

|

||||

tags: ${{ steps.meta.outputs.tags }}

|

||||

labels: ${{ steps.meta.outputs.labels }}

|

||||

- name: Check images

|

||||

run: |

|

||||

docker buildx imagetools inspect ${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||
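The `Prepare` step of `push-ld.yml` above derives the image tag from the Go source with a `grep | head -1 | awk | tr` pipeline. The sketch below replays that pipeline on a stand-in file to show why `head -1` matters. The file contents and the `0.21.0` value are assumptions made for illustration; the real `cmd/loadtester/main.go` is not shown in this diff.

```sh
set -eu

# Hypothetical stand-in for cmd/loadtester/main.go: VERSION is declared once
# and referenced again later, so grep returns more than one line.
src=$(mktemp)
cat > "$src" <<'EOF'
package main

var VERSION = "0.21.0"

func main() {
    log.Printf("Starting load tester v%s", VERSION)
}
EOF

# Number of lines that mention VERSION (declaration plus later uses).
ALL_MATCHES=$(grep 'VERSION' "$src" | wc -l | tr -d ' ')

# Same pipeline as the workflow: keep the first match, take the 4th
# whitespace-separated field, strip the double quotes.
VERSION=$(grep 'VERSION' "$src" | head -1 | awk '{ print $4 }' | tr -d '"')

# UTC build timestamp in the format used by the workflow.
BUILD_DATE=$(date -u +'%Y-%m-%dT%H:%M:%SZ')

echo "matches=$ALL_MATCHES version=$VERSION build_date=$BUILD_DATE"
rm -f "$src"
```

Without `head -1`, every matching line would contribute its own fourth field and `VERSION` would span several lines, which is not a usable image tag.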

61

.github/workflows/release.yml

vendored

@@ -4,34 +4,47 @@ on:

|

||||

tags:

|

||||

- 'v*'

|

||||

|

||||

permissions:

|

||||

contents: write # needed to write releases

|

||||

id-token: write # needed for keyless signing

|

||||

packages: write # needed for ghcr access

|

||||

|

||||

env:

|

||||

IMAGE: "ghcr.io/fluxcd/${{ github.event.repository.name }}"

|

||||

|

||||

jobs:

|

||||

build-push:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

- uses: sigstore/cosign-installer@main

|

||||

- name: Prepare

|

||||

id: prep

|

||||

run: |

|

||||

VERSION=$(grep 'VERSION' pkg/version/version.go | awk '{ print $4 }' | tr -d '"')

|

||||

CHANGELOG="https://github.com/fluxcd/flagger/blob/main/CHANGELOG.md#$(echo $VERSION | tr -d '.')"

|

||||

echo "[CHANGELOG](${CHANGELOG})" > notes.md

|

||||

echo ::set-output name=BUILD_DATE::$(date -u +'%Y-%m-%dT%H:%M:%SZ')

|

||||

echo ::set-output name=VERSION::${VERSION}

|

||||

echo ::set-output name=CHANGELOG::${CHANGELOG}

|

||||

- name: Setup QEMU

|

||||

uses: docker/setup-qemu-action@v1

|

||||

with:

|

||||

platforms: all

|

||||

- name: Setup Docker Buildx

|

||||

id: buildx

|

||||

uses: docker/setup-buildx-action@v1

|

||||

with:

|

||||

buildkitd-flags: "--debug"

|

||||

- name: Login to GitHub Container Registry

|

||||

uses: docker/login-action@v1

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: fluxcdbot

|

||||

password: ${{ secrets.GHCR_TOKEN }}

|

||||

- name: Generate image meta

|

||||

id: meta

|

||||

uses: docker/metadata-action@v3

|

||||

with:

|

||||

images: |

|

||||

${{ env.IMAGE }}

|

||||

tags: |

|

||||

type=raw,value=${{ steps.prep.outputs.VERSION }}

|

||||

- name: Publish image

|

||||

uses: docker/build-push-action@v2

|

||||

with:

|

||||

@@ -42,33 +55,31 @@ jobs:

|

||||

platforms: linux/amd64,linux/arm64,linux/arm/v7

|

||||

build-args: |

|

||||

REVISON=${{ github.sha }}

|

||||

tags: |

|

||||

ghcr.io/fluxcd/flagger:${{ steps.prep.outputs.VERSION }}

|

||||

labels: |

|

||||

org.opencontainers.image.title=${{ github.event.repository.name }}

|

||||

org.opencontainers.image.description=${{ github.event.repository.description }}

|

||||

org.opencontainers.image.url=${{ github.event.repository.html_url }}

|

||||

org.opencontainers.image.source=${{ github.event.repository.html_url }}

|

||||

org.opencontainers.image.revision=${{ github.sha }}

|

||||

org.opencontainers.image.version=${{ steps.prep.outputs.VERSION }}

|

||||

org.opencontainers.image.created=${{ steps.prep.outputs.BUILD_DATE }}

|

||||

tags: ${{ steps.meta.outputs.tags }}

|

||||

labels: ${{ steps.meta.outputs.labels }}

|

||||

- name: Sign image

|

||||

run: |

|

||||

echo -n "${{secrets.COSIGN_PASSWORD}}" | \

|

||||

cosign sign -key ./.cosign/cosign.key -a git_sha=$GITHUB_SHA \

|

||||

${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||

- name: Check images

|

||||

run: |

|

||||

docker buildx imagetools inspect ghcr.io/fluxcd/flagger:${{ steps.prep.outputs.VERSION }}

|

||||

docker buildx imagetools inspect ${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||

- name: Verify image signature

|

||||

run: |

|

||||

cosign verify -key ./.cosign/cosign.pub \

|

||||

${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||

- name: Publish Helm charts

|

||||

uses: stefanprodan/helm-gh-pages@v1.3.0

|

||||

with:

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

charts_url: https://flagger.app

|

||||

linting: off

|

||||

- name: Create release

|

||||

uses: actions/create-release@latest

|

||||

- uses: anchore/sbom-action/download-syft@v0

|

||||

- name: Create release and SBOM

|

||||

uses: goreleaser/goreleaser-action@v2

|

||||

with:

|

||||

version: latest

|

||||

args: release --release-notes=notes.md --rm-dist --skip-validate

|

||||

env:

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

with:

|

||||

tag_name: ${{ github.ref }}

|

||||

release_name: ${{ github.ref }}

|

||||

draft: false

|

||||

prerelease: false

|

||||

body: |

|

||||

[CHANGELOG](${{ steps.prep.outputs.CHANGELOG }})

|

||||

|

||||
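The `Prepare` step of `release.yml` above builds the release notes link by stripping the dots from the version, which matches how the `## 1.19.0` style headings in `CHANGELOG.md` are turned into anchors. A minimal sketch of that derivation follows; the `VERSION` value is a sample and the temporary file stands in for `notes.md`.

```sh
set -eu

# Sample value; in the workflow this comes from pkg/version/version.go.
VERSION="1.19.0"

# Drop the dots to get the heading anchor, e.g. 1.19.0 -> 1190.
ANCHOR=$(echo "$VERSION" | tr -d '.')
CHANGELOG="https://github.com/fluxcd/flagger/blob/main/CHANGELOG.md#${ANCHOR}"

# Write the one-line release notes file (a temp file stands in for notes.md).
notes=$(mktemp)
echo "[CHANGELOG](${CHANGELOG})" > "$notes"
NOTES_CONTENT=$(cat "$notes")

echo "$NOTES_CONTENT"
rm -f "$notes"
```

One property of this scheme is that the mapping is not injective: `1.19.0` and a hypothetical `1.1.90` would both produce `#1190`.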

4

.github/workflows/scan.yml

vendored

@@ -8,6 +8,10 @@ on:

|

||||

schedule:

|

||||

- cron: '18 10 * * 3'

|

||||

|

||||

permissions:

|

||||

contents: read # for actions/checkout to fetch code

|

||||

security-events: write # for codeQL to write security events

|

||||

|

||||

jobs:

|

||||

fossa:

|

||||

name: FOSSA

|

||||

|

||||

@@ -1,14 +1,17 @@

|

||||

project_name: flagger

|

||||

|

||||

builds:

|

||||

- main: ./cmd/flagger

|

||||

binary: flagger

|

||||

ldflags: -s -w -X github.com/fluxcd/flagger/pkg/version.REVISION={{.Commit}}

|

||||

goos:

|

||||

- linux

|

||||

goarch:

|

||||

- amd64

|

||||

env:

|

||||

- CGO_ENABLED=0

|

||||

archives:

|

||||

- name_template: "{{ .Binary }}_{{ .Version }}_{{ .Os }}_{{ .Arch }}"

|

||||

files:

|

||||

- none*

|

||||

- skip: true

|

||||

|

||||

release:

|

||||

prerelease: auto

|

||||

|

||||

source:

|

||||

enabled: true

|

||||

name_template: "{{ .ProjectName }}_{{ .Version }}_source_code"

|

||||

|

||||

sboms:

|

||||

- id: source

|

||||

artifacts: source

|

||||

documents:

|

||||

- "{{ .ProjectName }}_{{ .Version }}_sbom.spdx.json"

|

||||

|

||||

301

CHANGELOG.md

@@ -2,6 +2,307 @@

|

||||

|

||||

All notable changes to this project are documented in this file.

|

||||

|

||||

## 1.19.0

|

||||

|

||||

**Release date:** 2022-03-14

|

||||

|

||||

This release comes with support for Kubernetes [Gateway API](https://gateway-api.sigs.k8s.io/) v1alpha2.

|

||||

For more details see the [Gateway API Progressive Delivery tutorial](https://docs.flagger.app/tutorials/gatewayapi-progressive-delivery).

|

||||

|

||||

#### Features

|

||||

|

||||

- Add Gateway API as a provider

|

||||

[#1108](https://github.com/fluxcd/flagger/pull/1108)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Add arm64 support for loadtester

|

||||

[#1128](https://github.com/fluxcd/flagger/pull/1128)

|

||||

- Restrict source namespaces in flagger-loadtester

|

||||

[#1119](https://github.com/fluxcd/flagger/pull/1119)

|

||||

- Remove support for Helm v2 in loadtester

|

||||

[#1130](https://github.com/fluxcd/flagger/pull/1130)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Fix potential canary finalizer duplication

|

||||

[#1125](https://github.com/fluxcd/flagger/pull/1125)

|

||||

- Use the primary replicas when scaling up the canary (no hpa)

|

||||

[#1110](https://github.com/fluxcd/flagger/pull/1110)

|

||||

|

||||

## 1.18.0

|

||||

|

||||

**Release date:** 2022-02-14

|

||||

|

||||

This release comes with a new API field called `canaryReadyThreshold`

|

||||

that allows setting the percentage of pods that need to be available

|

||||

to consider the canary deployment as ready.

|

||||
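To make the percentage semantics concrete, the sketch below compares an available-pod count against a threshold expressed in percent. It is only an illustration of the idea: the `is_ready` helper is hypothetical, and the choice of which replica count Flagger compares against and how it rounds is an assumption, not taken from the Flagger source.

```sh
set -eu

# is_ready AVAILABLE DESIRED THRESHOLD_PERCENT
# Succeeds when available/desired >= threshold/100, using integer math only.
is_ready() {
  available=$1
  desired=$2
  threshold=$3
  [ $(( available * 100 )) -ge $(( desired * threshold )) ]
}

# 8 of 10 pods available meets an 80% threshold; 7 of 10 does not.
if is_ready 8 10 80; then R1=ready; else R1=waiting; fi
if is_ready 7 10 80; then R2=ready; else R2=waiting; fi

echo "8/10 at 80%: $R1"
echo "7/10 at 80%: $R2"
```

Cross-multiplying instead of dividing avoids the truncation that integer division would introduce in POSIX shell arithmetic.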

|

||||

Starting with this version, the canary deployment labels, annotations and

|

||||

replicas fields are copied to the primary deployment at promotion time.

|

||||

|

||||

#### Features

|

||||

|

||||

- Add field `spec.analysis.canaryReadyThreshold` for configuring canary threshold

|

||||

[#1102](https://github.com/fluxcd/flagger/pull/1102)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Update metadata during subsequent promote

|

||||

[#1092](https://github.com/fluxcd/flagger/pull/1092)

|

||||

- Set primary deployment `replicas` when autoscaler isn't used

|

||||

[#1106](https://github.com/fluxcd/flagger/pull/1106)

|

||||

- Update `matchLabels` for `TopologySpreadConstraints` in Deployments

|

||||

[#1041](https://github.com/fluxcd/flagger/pull/1041)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Send warning and error alerts correctly

|

||||

[#1105](https://github.com/fluxcd/flagger/pull/1105)

|

||||

- Fix for when Prometheus returns NaN

|

||||

[#1095](https://github.com/fluxcd/flagger/pull/1095)

|

||||

- docs: Fix typo ExternalDNS

|

||||

[#1103](https://github.com/fluxcd/flagger/pull/1103)

|

||||

|

||||

## 1.17.0

|

||||

|

||||

**Release date:** 2022-01-11

|

||||

|

||||

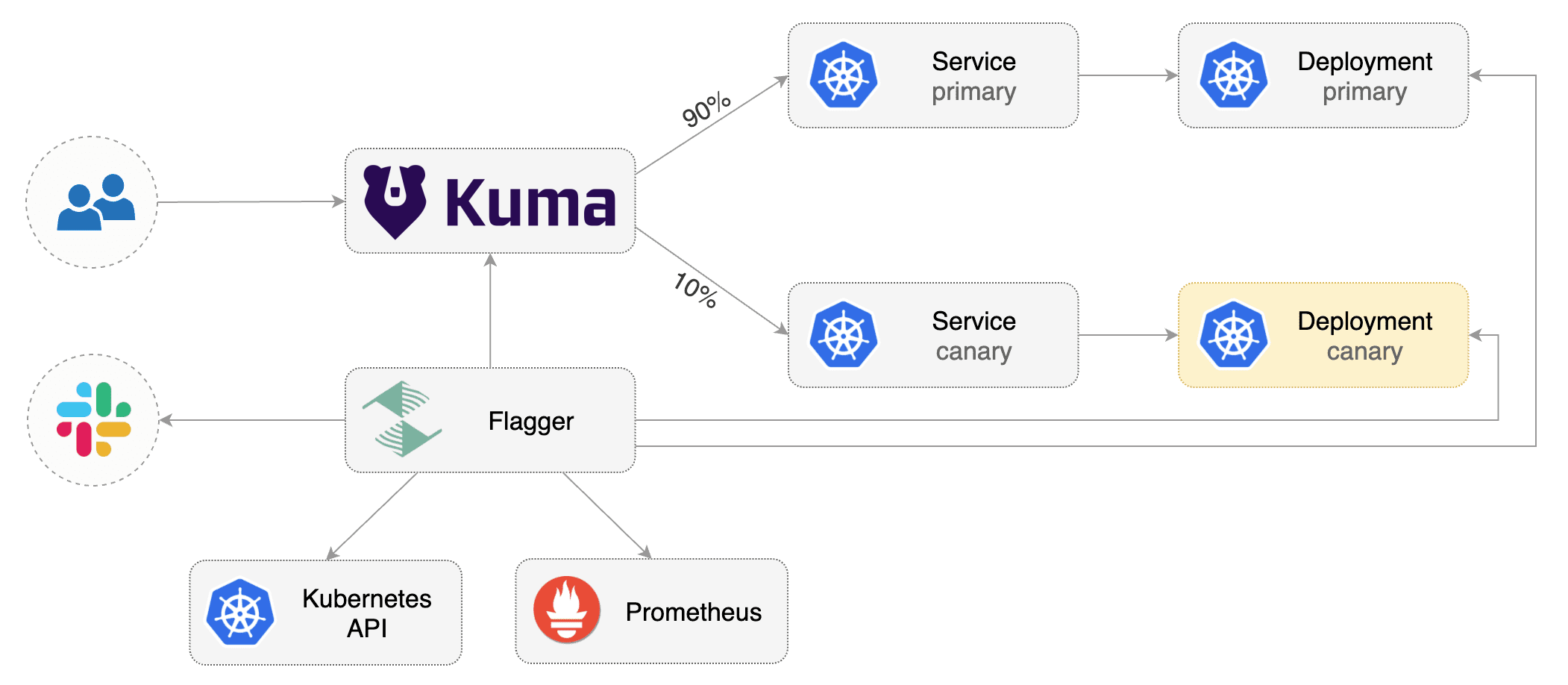

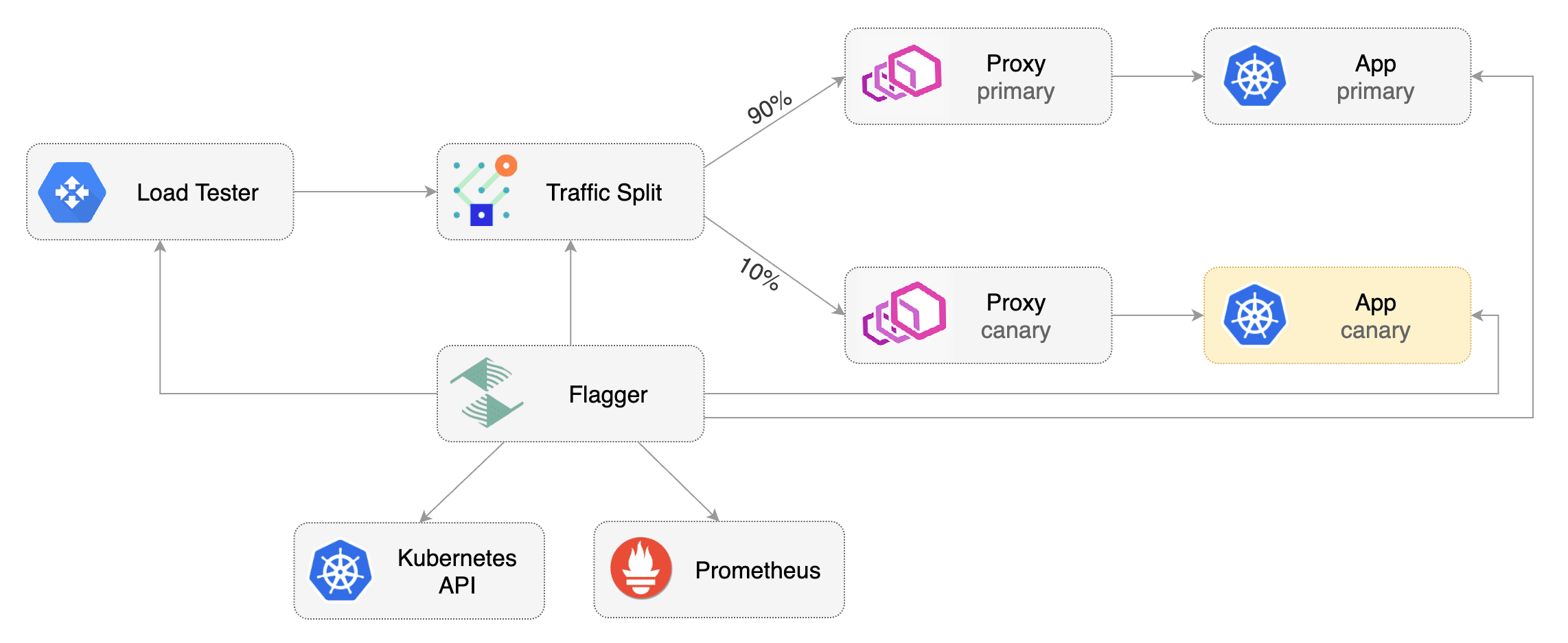

This release comes with support for [Kuma Service Mesh](https://kuma.io/).

|

||||

For more details see the [Kuma Progressive Delivery tutorial](https://docs.flagger.app/tutorials/kuma-progressive-delivery).

|

||||

|

||||

To differentiate alerts based on the cluster name, you can configure Flagger with the `-cluster-name=my-cluster`

|

||||

command flag, or with Helm `--set clusterName=my-cluster`.

|

||||

|

||||

#### Features

|

||||

|

||||

- Add kuma support for progressive traffic shifting canaries

|

||||

[#1085](https://github.com/fluxcd/flagger/pull/1085)

|

||||

[#1093](https://github.com/fluxcd/flagger/pull/1093)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Publish a Software Bill of Materials (SBOM)

|

||||

[#1094](https://github.com/fluxcd/flagger/pull/1094)

|

||||

- Add cluster name to flagger cmd args for alerting

|

||||

[#1041](https://github.com/fluxcd/flagger/pull/1041)

|

||||

|

||||

## 1.16.1

|

||||

|

||||

**Release date:** 2021-12-17

|

||||

|

||||

This release contains updates to Kubernetes packages (1.23.0), Alpine (3.15)

|

||||

and load tester components.

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Release loadtester v0.21.0

|

||||

[#1083](https://github.com/fluxcd/flagger/pull/1083)

|

||||

- Add loadtester image pull secrets to Helm chart

|

||||

[#1076](https://github.com/fluxcd/flagger/pull/1076)

|

||||

- Update libraries included in the load tester to newer versions

|

||||

[#1063](https://github.com/fluxcd/flagger/pull/1063)

|

||||

[#1080](https://github.com/fluxcd/flagger/pull/1080)

|

||||

- Update Kubernetes packages to v1.23.0

|

||||

[#1078](https://github.com/fluxcd/flagger/pull/1078)

|

||||

- Update Alpine to 3.15

|

||||

[#1081](https://github.com/fluxcd/flagger/pull/1081)

|

||||

- Update Go to v1.17

|

||||

[#1077](https://github.com/fluxcd/flagger/pull/1077)

|

||||

|

||||

## 1.16.0

|

||||

|

||||

**Release date:** 2021-11-22

|

||||

|

||||

This release comes with a new API field called `primaryReadyThreshold`

|

||||

that allows setting the percentage of pods that need to be available

|

||||

to consider the primary deployment as ready.

|

||||

|

||||

#### Features

|

||||

|

||||

- Allow configuring threshold for primary

|

||||

[#1048](https://github.com/fluxcd/flagger/pull/1048)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Append to list of ownerReferences for primary configmaps and secrets

|

||||

[#1052](https://github.com/fluxcd/flagger/pull/1052)

|

||||

- Prevent Flux from overriding Flagger managed objects

|

||||

[#1049](https://github.com/fluxcd/flagger/pull/1049)

|

||||

- Add warning in docs about ExternalDNS + Istio configuration

|

||||

[#1044](https://github.com/fluxcd/flagger/pull/1044)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Mark `CanaryMetric.Threshold` as omitempty

|

||||

[#1047](https://github.com/fluxcd/flagger/pull/1047)

|

||||

- Replace `ioutil` in testing of gchat

|

||||

[#1045](https://github.com/fluxcd/flagger/pull/1045)

|

||||

|

||||

## 1.15.0

|

||||

|

||||

**Release date:** 2021-10-28

|

||||

|

||||

This release comes with support for NGINX ingress canary metrics.

|

||||

The nginx-ingress minimum supported version is now v1.0.2.

|

||||

|

||||

Starting with this version, Flagger will use the `spec.service.apex.annotations`

|

||||

to annotate the generated apex VirtualService, TrafficSplit or HTTPProxy.

|

||||

|

||||

#### Features

|

||||

|

||||

- Use nginx controller canary metrics

|

||||

[#1023](https://github.com/fluxcd/flagger/pull/1023)

|

||||

- Add metadata annotations to generated apex objects

|

||||

[#1034](https://github.com/fluxcd/flagger/pull/1034)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Update load tester binaries (CVEs fix)

|

||||

[#1038](https://github.com/fluxcd/flagger/pull/1038)

|

||||

- Add podLabels to load tester Helm chart

|

||||

[#1036](https://github.com/fluxcd/flagger/pull/1036)

|

||||

|

||||

## 1.14.0

|

||||

|

||||

**Release date:** 2021-09-20

|

||||

|

||||

This release comes with support for extending the canary analysis with

|

||||

Dynatrace, InfluxDB and Google Cloud Monitoring (Stackdriver) metrics.

|

||||

|

||||

#### Features

|

||||

|

||||

- Add Stackdriver metric provider

|

||||

[#991](https://github.com/fluxcd/flagger/pull/991)

|

||||

- Add Influxdb metric provider

|

||||

[#1012](https://github.com/fluxcd/flagger/pull/1012)

|

||||

- Add Dynatrace metric provider

|

||||

[#1013](https://github.com/fluxcd/flagger/pull/1013)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Fix inline promql query

|

||||

[#1015](https://github.com/fluxcd/flagger/pull/1015)

|

||||

- Fix Istio load balancer settings mapping

|

||||

[#1016](https://github.com/fluxcd/flagger/pull/1016)

|

||||

|

||||

## 1.13.0

|

||||

|

||||

**Release date:** 2021-08-25

|

||||

|

||||

This release comes with support for [Open Service Mesh](https://openservicemesh.io).

|

||||

For more details see the [OSM Progressive Delivery tutorial](https://docs.flagger.app/tutorials/osm-progressive-delivery).

|

||||

|

||||

Starting with this version, Flagger container images are signed with

|

||||

[sigstore/cosign](https://github.com/sigstore/cosign), for more details see the

|

||||

[Flagger cosign docs](https://github.com/fluxcd/flagger/blob/main/.cosign/README.md).

|

||||

|

||||

#### Features

|

||||

|

||||

- Support OSM progressive traffic shifting in Flagger

|

||||

[#955](https://github.com/fluxcd/flagger/pull/955)

|

||||

[#977](https://github.com/fluxcd/flagger/pull/977)

|

||||

- Add support for Google Chat alerts

|

||||

[#953](https://github.com/fluxcd/flagger/pull/953)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Sign Flagger container images with cosign

|

||||

[#983](https://github.com/fluxcd/flagger/pull/983)

|

||||

- Update Gloo APIs and e2e tests to Gloo v1.8.9

|

||||

[#982](https://github.com/fluxcd/flagger/pull/982)

|

||||

- Update e2e tests to Istio v1.11, Contour v1.18, Linkerd v2.10.2 and NGINX v0.49.0

|

||||

[#979](https://github.com/fluxcd/flagger/pull/979)

|

||||

- Update e2e tests to Traefik 2.4.9

|

||||

[#960](https://github.com/fluxcd/flagger/pull/960)

|

||||

- Add support for volumes/volumeMounts in loadtester Helm chart

|

||||

[#975](https://github.com/fluxcd/flagger/pull/975)

|

||||

- Add extra podLabels options to Flagger Helm Chart

|

||||

[#966](https://github.com/fluxcd/flagger/pull/966)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Fix for the http client proxy overriding the default client

|

||||

[#943](https://github.com/fluxcd/flagger/pull/943)

|

||||

- Drop deprecated io/ioutil

|

||||

[#964](https://github.com/fluxcd/flagger/pull/964)

|

||||

- Remove problematic nulls from Grafana dashboard

|

||||

[#952](https://github.com/fluxcd/flagger/pull/952)

|

||||

|

||||

## 1.12.1

|

||||

|

||||

**Release date:** 2021-06-17

|

||||

|

||||

This release comes with a fix to Flagger when used with Flux v2.

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Update Go to v1.16 and Kubernetes packages to v1.21.1

|

||||

[#940](https://github.com/fluxcd/flagger/pull/940)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Remove the GitOps Toolkit metadata from generated objects

|

||||

[#939](https://github.com/fluxcd/flagger/pull/939)

|

||||

|

||||

## 1.12.0

|

||||

|

||||

**Release date:** 2021-06-16

|

||||

|

||||

This release comes with support for disabling the SSL certificate verification

|

||||

for the Prometheus and Graphite metric providers.

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Add `insecureSkipVerify` option for Prometheus and Graphite

|

||||

[#935](https://github.com/fluxcd/flagger/pull/935)

|

||||

- Copy labels from Gloo upstreams

|

||||

[#932](https://github.com/fluxcd/flagger/pull/932)

|

||||

- Improve language and correct typos in FAQs docs

|

||||

[#925](https://github.com/fluxcd/flagger/pull/925)

|

||||

- Remove Flux GC markers from generated objects

|

||||

[#936](https://github.com/fluxcd/flagger/pull/936)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Require SMI TrafficSplit Service and Weight

|

||||

[#878](https://github.com/fluxcd/flagger/pull/878)

|

||||

|

||||

## 1.11.0

|

||||

|

||||

**Release date:** 2021-06-01

|

||||

|

||||

**Breaking change:** the minimum supported version of Kubernetes is v1.19.0.

|

||||

|

||||

This release comes with support for Kubernetes Ingress `networking.k8s.io/v1`.

|

||||

The Ingress from `networking.k8s.io/v1beta1` is no longer supported,

|

||||

affected integrations: **NGINX** and **Skipper** ingress controllers.

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Upgrade Ingress to networking.k8s.io/v1

|

||||

[#917](https://github.com/fluxcd/flagger/pull/917)

|

||||

- Update Kubernetes manifests to rbac.authorization.k8s.io/v1

|

||||

[#920](https://github.com/fluxcd/flagger/pull/920)

|

||||

|

||||

## 1.10.0

|

||||

|

||||

**Release date:** 2021-05-28

|

||||

|

||||

This release comes with support for [Graphite](https://docs.flagger.app/usage/metrics#graphite) metric templates.

|

||||

|

||||

#### Features

|

||||

|

||||

- Add Graphite metrics provider

|

||||

[#915](https://github.com/fluxcd/flagger/pull/915)

|

||||

|

||||

#### Improvements

|

||||

|

||||

- ConfigTracker: Scan envFrom in init-containers

|

||||

[#914](https://github.com/fluxcd/flagger/pull/914)

|

||||

- e2e: Update Istio to v1.10 and Contour to v1.15

|

||||

[#914](https://github.com/fluxcd/flagger/pull/914)

|

||||

|

||||

## 1.9.0

|

||||

|

||||

**Release date:** 2021-05-14

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

FROM golang:1.15-alpine as builder

|

||||

FROM golang:1.17-alpine as builder

|

||||

|

||||

ARG TARGETPLATFORM

|

||||

ARG REVISON

|

||||

@@ -21,7 +21,7 @@ RUN CGO_ENABLED=0 go build \

|

||||

-ldflags "-s -w -X github.com/fluxcd/flagger/pkg/version.REVISION=${REVISON}" \

|

||||

-a -o flagger ./cmd/flagger

|

||||

|

||||

FROM alpine:3.13

|

||||

FROM alpine:3.15

|

||||

|

||||

RUN apk --no-cache add ca-certificates

|

||||

|

||||

|

||||

@@ -1,59 +1,59 @@

|

||||

FROM alpine:3.11 as build

|

||||

FROM golang:1.17-alpine as builder

|

||||

|

||||

RUN apk --no-cache add alpine-sdk perl curl

|

||||

ARG TARGETPLATFORM

|

||||

ARG TARGETARCH

|

||||

ARG REVISION

|

||||

|

||||

RUN curl -sSLo hey "https://storage.googleapis.com/hey-release/hey_linux_amd64" && \

|

||||

chmod +x hey && mv hey /usr/local/bin/hey

|

||||

RUN apk --no-cache add alpine-sdk perl curl bash tar

|

||||

|

||||

RUN HELM2_VERSION=2.16.8 && \

|

||||

curl -sSL "https://get.helm.sh/helm-v${HELM2_VERSION}-linux-amd64.tar.gz" | tar xvz && \

|

||||

chmod +x linux-amd64/helm && mv linux-amd64/helm /usr/local/bin/helm && \

|

||||

chmod +x linux-amd64/tiller && mv linux-amd64/tiller /usr/local/bin/tiller

|

||||

RUN HELM3_VERSION=3.7.2 && \

|

||||

curl -sSL "https://get.helm.sh/helm-v${HELM3_VERSION}-linux-${TARGETARCH}.tar.gz" | tar xvz && \

|

||||

chmod +x linux-${TARGETARCH}/helm && mv linux-${TARGETARCH}/helm /usr/local/bin/helm

|

||||

|

||||

RUN HELM3_VERSION=3.2.3 && \

|

||||

curl -sSL "https://get.helm.sh/helm-v${HELM3_VERSION}-linux-amd64.tar.gz" | tar xvz && \

|

||||

chmod +x linux-amd64/helm && mv linux-amd64/helm /usr/local/bin/helmv3

|

||||

|

||||

RUN GRPC_HEALTH_PROBE_VERSION=v0.3.1 && \

|

||||

wget -qO /usr/local/bin/grpc_health_probe https://github.com/grpc-ecosystem/grpc-health-probe/releases/download/${GRPC_HEALTH_PROBE_VERSION}/grpc_health_probe-linux-amd64 && \

|

||||

RUN GRPC_HEALTH_PROBE_VERSION=v0.4.6 && \

|

||||

wget -qO /usr/local/bin/grpc_health_probe https://github.com/grpc-ecosystem/grpc-health-probe/releases/download/${GRPC_HEALTH_PROBE_VERSION}/grpc_health_probe-linux-${TARGETARCH} && \

|

||||

chmod +x /usr/local/bin/grpc_health_probe

|

||||

|

||||

RUN GHZ_VERSION=0.39.0 && \

|

||||

curl -sSL "https://github.com/bojand/ghz/releases/download/v${GHZ_VERSION}/ghz_${GHZ_VERSION}_Linux_x86_64.tar.gz" | tar xz -C /tmp && \

|

||||

mv /tmp/ghz /usr/local/bin && chmod +x /usr/local/bin/ghz

|

||||

RUN GHZ_VERSION=0.105.0 && \

|

||||

curl -sSL "https://github.com/bojand/ghz/archive/refs/tags/v${GHZ_VERSION}.tar.gz" | tar xz -C /tmp && \

|

||||

cd /tmp/ghz-${GHZ_VERSION}/cmd/ghz && GOARCH=$TARGETARCH go build . && mv ghz /usr/local/bin && \

|

||||

chmod +x /usr/local/bin/ghz

|

||||

|

||||

RUN HELM_TILLER_VERSION=0.9.3 && \

|

||||

curl -sSL "https://github.com/rimusz/helm-tiller/archive/v${HELM_TILLER_VERSION}.tar.gz" | tar xz -C /tmp && \

|

||||

mv /tmp/helm-tiller-${HELM_TILLER_VERSION} /tmp/helm-tiller

|

||||

WORKDIR /workspace

|

||||

|

||||

RUN WRK_VERSION=4.0.2 && \

|

||||

cd /tmp && git clone -b ${WRK_VERSION} https://github.com/wg/wrk

|

||||

RUN cd /tmp/wrk && make

|

||||

# copy modules manifests

|

||||

COPY go.mod go.mod

|

||||

COPY go.sum go.sum

|

||||

|

||||

# cache modules

|

||||

RUN go mod download

|

||||

|

||||

# copy source code

|

||||

COPY cmd/ cmd/

|

||||

COPY pkg/ pkg/

|

||||

|

||||

# build

|

||||

RUN CGO_ENABLED=0 go build -o loadtester ./cmd/loadtester/*

|

||||

|

||||

FROM bash:5.0

|

||||

|

||||

ARG TARGETPLATFORM

|

||||

|

||||

RUN addgroup -S app && \

|

||||

adduser -S -g app app && \

|

||||

apk --no-cache add ca-certificates curl jq libgcc

|

||||

apk --no-cache add ca-certificates curl jq libgcc wrk hey

|

||||

|

||||

WORKDIR /home/app

|

||||

|

||||

COPY --from=bats/bats:v1.1.0 /opt/bats/ /opt/bats/

|

||||

RUN ln -s /opt/bats/bin/bats /usr/local/bin/

|

||||

|

||||

COPY --from=build /usr/local/bin/hey /usr/local/bin/

|

||||

COPY --from=build /tmp/wrk/wrk /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/helm /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/tiller /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/ghz /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/helmv3 /usr/local/bin/

|

||||

COPY --from=build /usr/local/bin/grpc_health_probe /usr/local/bin/

|

||||

COPY --from=build /tmp/helm-tiller /tmp/helm-tiller

|

||||

COPY --from=builder /usr/local/bin/helm /usr/local/bin/

|

||||

COPY --from=builder /usr/local/bin/ghz /usr/local/bin/

|

||||

COPY --from=builder /usr/local/bin/grpc_health_probe /usr/local/bin/

|

||||

|

||||

ADD https://raw.githubusercontent.com/grpc/grpc-proto/master/grpc/health/v1/health.proto /tmp/ghz/health.proto

|

||||

|

||||

COPY ./bin/loadtester .

|

||||

|

||||

RUN chown -R app:app ./

|

||||

RUN chown -R app:app /tmp/ghz

|

||||

|

||||

@@ -63,7 +63,6 @@ USER app

|

||||

RUN hey -n 1 -c 1 https://flagger.app > /dev/null && echo $? | grep 0

|

||||

RUN wrk -d 1s -c 1 -t 1 https://flagger.app > /dev/null && echo $? | grep 0

|

||||

|

||||

# install Helm v2 plugins

|

||||

RUN helm init --client-only && helm plugin install /tmp/helm-tiller

|

||||

COPY --from=builder --chown=app:app /workspace/loadtester .

|

||||

|

||||

ENTRYPOINT ["./loadtester"]

|

||||

|

||||

@@ -2,5 +2,7 @@ The maintainers are generally available in Slack at

|

||||

https://cloud-native.slack.com/messages/flagger/ (obtain an invitation

|

||||

at https://slack.cncf.io/).

|

||||

|

||||

Stefan Prodan, Weaveworks <stefan@weave.works> (Slack: @stefan Twitter: @stefanprodan)

|

||||

Takeshi Yoneda, DMM.com <cz.rk.t0415y.g@gmail.com> (Slack: @mathetake Twitter: @mathetake)

|

||||

In alphabetical order:

|

||||

|

||||

Stefan Prodan, Weaveworks <stefan@weave.works> (github: @stefanprodan, slack: stefanprodan)

|

||||

Takeshi Yoneda, Tetrate <takeshi@tetrate.io> (github: @mathetake, slack: mathetake)

|

||||

|

||||

14

Makefile

@@ -6,6 +6,7 @@ build:

|

||||

CGO_ENABLED=0 go build -a -o ./bin/flagger ./cmd/flagger

|

||||

|

||||

fmt:

|

||||

go mod tidy

|

||||

gofmt -l -s -w ./

|

||||

goimports -l -w ./

|

||||

|

||||

@@ -29,12 +30,12 @@ crd:

|

||||

version-set:

|

||||

@next="$(TAG)" && \

|

||||

current="$(VERSION)" && \

|

||||

sed -i '' "s/$$current/$$next/g" pkg/version/version.go && \

|

||||

sed -i '' "s/flagger:$$current/flagger:$$next/g" artifacts/flagger/deployment.yaml && \

|

||||

sed -i '' "s/tag: $$current/tag: $$next/g" charts/flagger/values.yaml && \

|

||||

sed -i '' "s/appVersion: $$current/appVersion: $$next/g" charts/flagger/Chart.yaml && \

|

||||

sed -i '' "s/version: $$current/version: $$next/g" charts/flagger/Chart.yaml && \

|

||||

sed -i '' "s/newTag: $$current/newTag: $$next/g" kustomize/base/flagger/kustomization.yaml && \

|

||||

sed -i "s/$$current/$$next/g" pkg/version/version.go && \

|

||||

sed -i "s/flagger:$$current/flagger:$$next/g" artifacts/flagger/deployment.yaml && \

|

||||

sed -i "s/tag: $$current/tag: $$next/g" charts/flagger/values.yaml && \

|

||||

sed -i "s/appVersion: $$current/appVersion: $$next/g" charts/flagger/Chart.yaml && \

|

||||

sed -i "s/version: $$current/version: $$next/g" charts/flagger/Chart.yaml && \

|

||||

sed -i "s/newTag: $$current/newTag: $$next/g" kustomize/base/flagger/kustomization.yaml && \

|

||||

echo "Version $$next set in code, deployment, chart and kustomize"

|

||||

|

||||

release:

|

||||

@@ -42,7 +43,6 @@ release:

|

||||

git push origin "v$(VERSION)"

|

||||

|

||||

loadtester-build:

|

||||

CGO_ENABLED=0 GOOS=linux go build -a -installsuffix cgo -o ./bin/loadtester ./cmd/loadtester/*

|

||||

docker build -t ghcr.io/fluxcd/flagger-loadtester:$(LT_VERSION) . -f Dockerfile.loadtester

|

||||

|

||||

loadtester-push:

|

||||

|

||||

79

README.md

@@ -1,4 +1,4 @@

|

||||

# flagger

|

||||

# flagger

|

||||

|

||||

[](https://bestpractices.coreinfrastructure.org/projects/4783)

|

||||

[](https://github.com/fluxcd/flagger/actions)

|

||||

@@ -13,10 +13,10 @@ by gradually shifting traffic to the new version while measuring metrics and run

|

||||

|

||||

|

||||

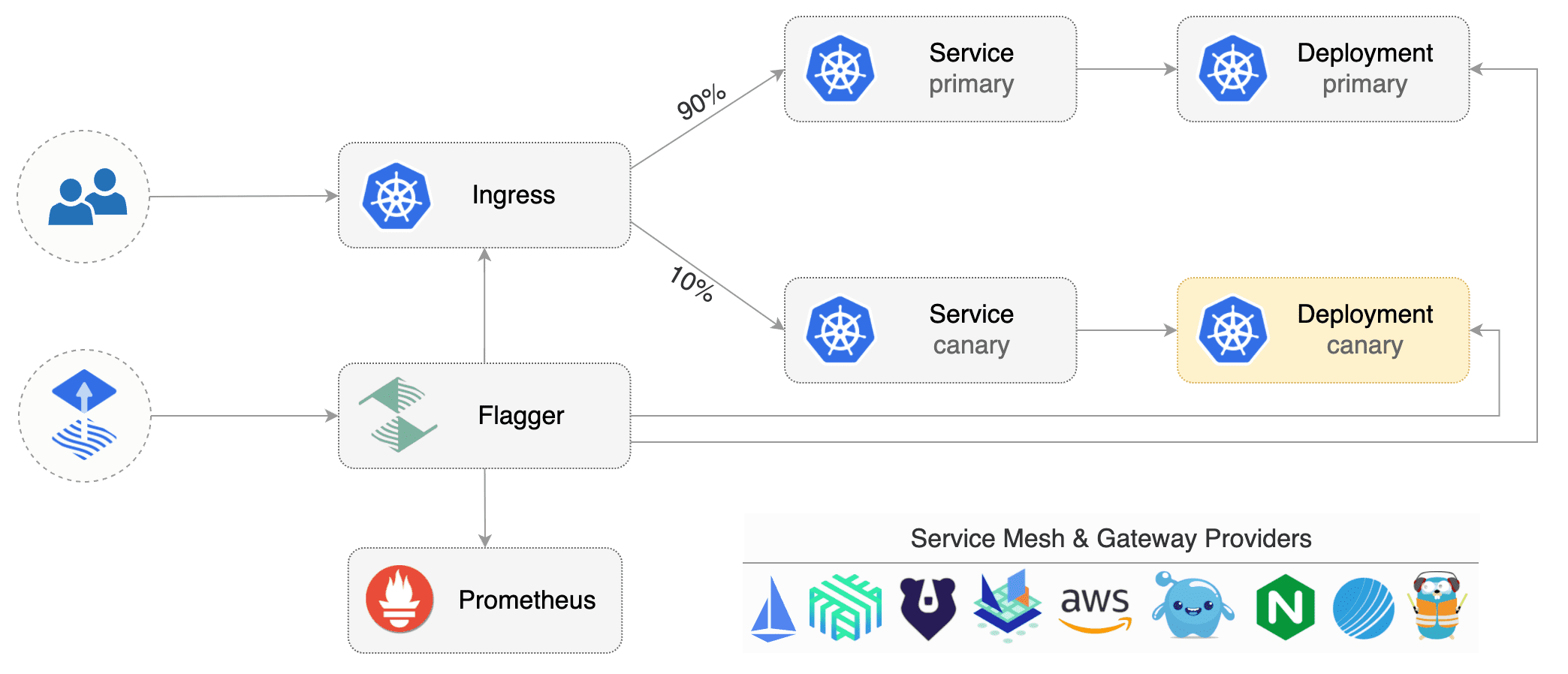

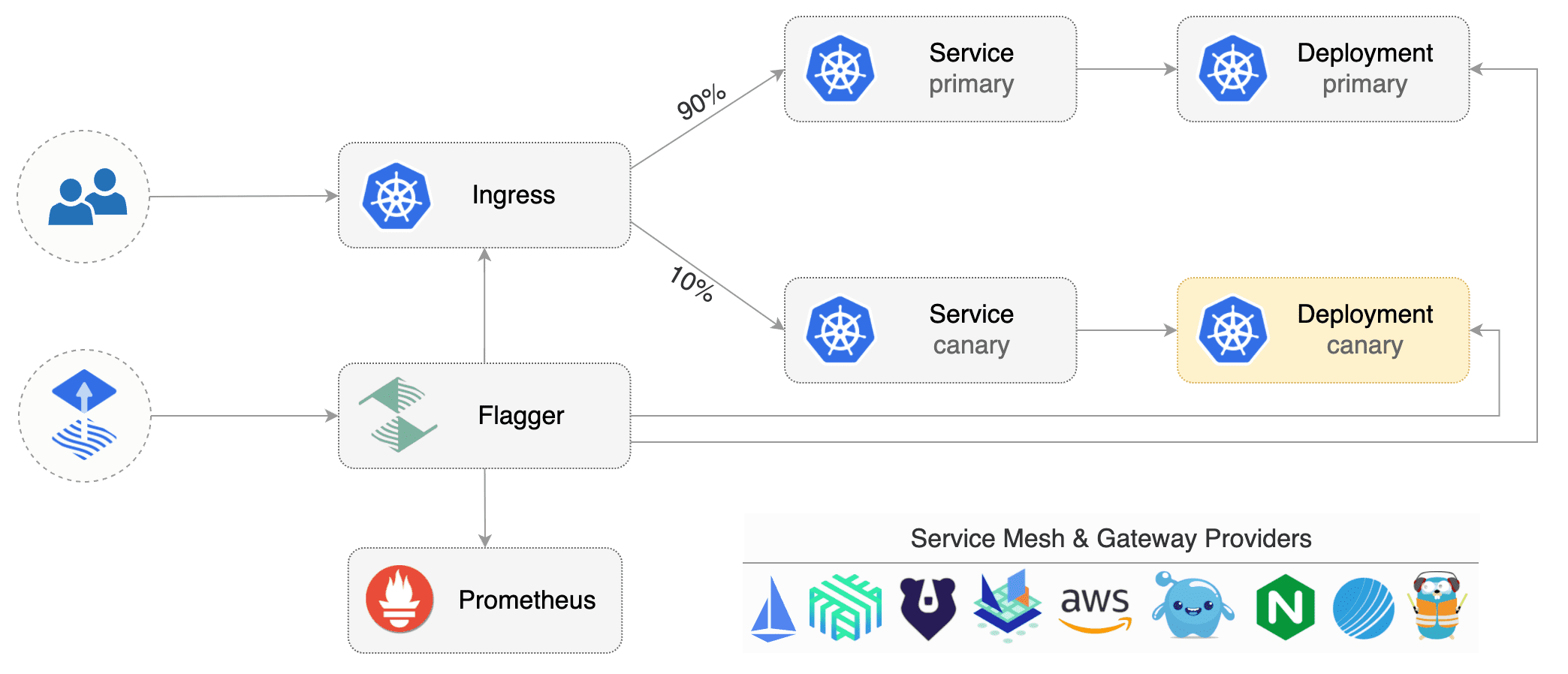

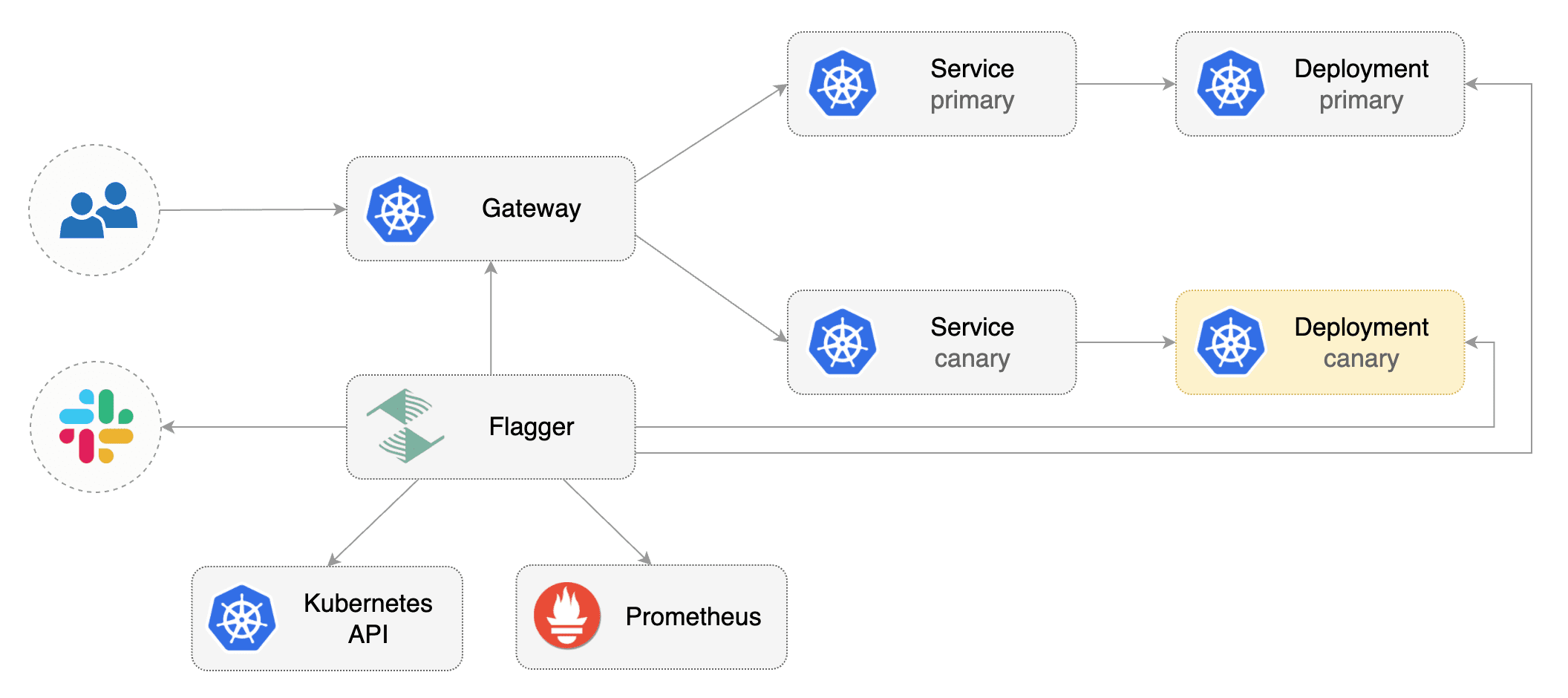

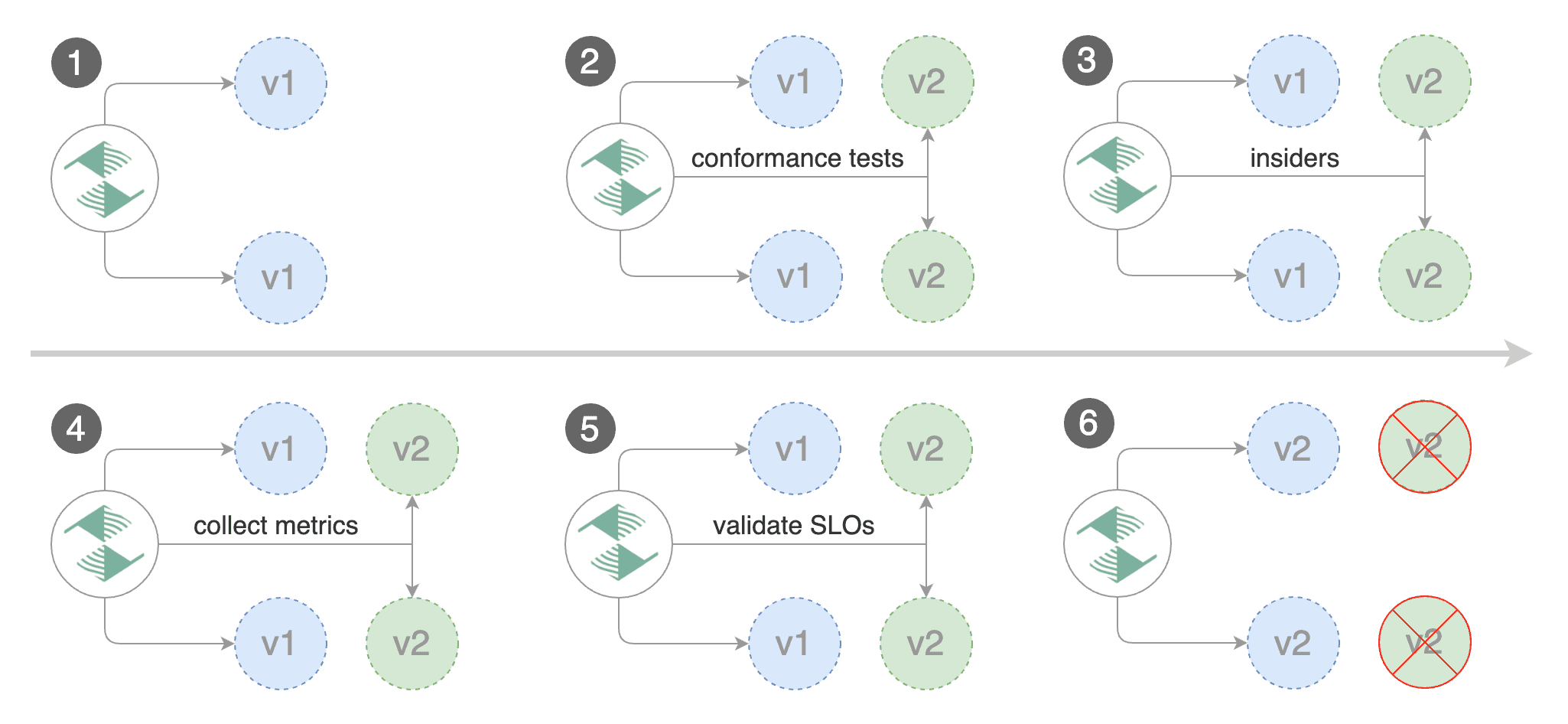

Flagger implements several deployment strategies (Canary releases, A/B testing, Blue/Green mirroring)

|

||||

using a service mesh (App Mesh, Istio, Linkerd)

|

||||

using a service mesh (App Mesh, Istio, Linkerd, Open Service Mesh, Kuma)

|

||||

or an ingress controller (Contour, Gloo, NGINX, Skipper, Traefik) for traffic routing.

|

||||

For release analysis, Flagger can query Prometheus, Datadog, New Relic or CloudWatch

|

||||

and for alerting it uses Slack, MS Teams, Discord and Rocket.

|

||||

For release analysis, Flagger can query Prometheus, Datadog, New Relic, CloudWatch, Dynatrace,

|

||||

InfluxDB and Stackdriver and for alerting it uses Slack, MS Teams, Discord, Rocket and Google Chat.

|

||||

|

||||

Flagger is a [Cloud Native Computing Foundation](https://cncf.io/) project

|

||||

and part of [Flux](https://fluxcd.io) family of GitOps tools.

|

||||

@@ -38,6 +38,8 @@ Flagger documentation can be found at [docs.flagger.app](https://docs.flagger.ap

|

||||

* [App Mesh](https://docs.flagger.app/tutorials/appmesh-progressive-delivery)

|

||||

* [Istio](https://docs.flagger.app/tutorials/istio-progressive-delivery)

|

||||

* [Linkerd](https://docs.flagger.app/tutorials/linkerd-progressive-delivery)

|

||||

* [Open Service Mesh (OSM)](https://docs.flagger.app/tutorials/osm-progressive-delivery)

|

||||

* [Kuma Service Mesh](https://docs.flagger.app/tutorials/kuma-progressive-delivery)

|

||||

* [Contour](https://docs.flagger.app/tutorials/contour-progressive-delivery)

|

||||

* [Gloo](https://docs.flagger.app/tutorials/gloo-progressive-delivery)

|

||||

* [NGINX Ingress](https://docs.flagger.app/tutorials/nginx-progressive-delivery)

|

||||

@@ -70,7 +72,7 @@ metadata:

|

||||

namespace: test

|

||||

spec:

|

||||

# service mesh provider (optional)

|

||||

# can be: kubernetes, istio, linkerd, appmesh, nginx, skipper, contour, gloo, supergloo, traefik

|

||||

# can be: kubernetes, istio, linkerd, appmesh, nginx, skipper, contour, gloo, supergloo, traefik, osm

|

||||

# for SMI TrafficSplit can be: smi:v1alpha1, smi:v1alpha2, smi:v1alpha3

|

||||

provider: istio

|

||||

# deployment reference

|

||||

@@ -182,34 +184,46 @@ For more details on how the canary analysis and promotion works please [read the

|

||||

|

||||

**Service Mesh**

|

||||

|

||||

| Feature | App Mesh | Istio | Linkerd | SMI | Kubernetes CNI |
| ------------------------------------------ | ------------------ | ------------------ | ------------------ | ----------------- | ----------------- |
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |
| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: |
| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Blue/Green deployments (traffic mirroring) | :heavy_minus_sign: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: |
| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |
| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |
| Custom metric checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

|

||||

For SMI compatible service mesh solutions like Open Service Mesh, Consul Connect or Nginx Service Mesh,

|

||||

[Prometheus MetricTemplates](https://docs.flagger.app/usage/metrics#prometheus) can be used to implement

|

||||

the request success rate and request duration checks.

|

||||

| Feature | App Mesh | Istio | Linkerd | Kuma | OSM | Kubernetes CNI |
|--------------------------------------------|--------------------|--------------------|--------------------|--------------------|--------------------|--------------------|
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |
| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: |
| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Blue/Green deployments (traffic mirroring) | :heavy_minus_sign: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: | :heavy_minus_sign: |
| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |
| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: |
| Custom metric checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

|

||||

**Ingress**

|

||||

|

||||

| Feature | Contour | Gloo | NGINX | Skipper | Traefik |
| ------------------------------------------ | ------------------ | ------------------ | ------------------ | ------------------ | ------------------ |
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |
| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_check_mark: |
| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_check_mark: |
| Custom metric checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

| Feature | Contour | Gloo | NGINX | Skipper | Traefik |
|-------------------------------------------|--------------------|--------------------|--------------------|--------------------|--------------------|
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_minus_sign: |
| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_check_mark: |
| Request duration check (L7 metric) | :heavy_check_mark: | :heavy_check_mark: | :heavy_minus_sign: | :heavy_check_mark: | :heavy_check_mark: |
| Custom metric checks | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: | :heavy_check_mark: |

|

||||

|

||||

**Networking Interface**

|

||||

|

||||

| Feature | Gateway API | SMI |
|-----------------------------------------------|--------------------|--------------------|
| Canary deployments (weighted traffic) | :heavy_check_mark: | :heavy_check_mark: |
| A/B testing (headers and cookies routing) | :heavy_check_mark: | :heavy_minus_sign: |
| Blue/Green deployments (traffic switch) | :heavy_check_mark: | :heavy_check_mark: |
| Blue/Green deployments (traffic mirroring) | :heavy_minus_sign: | :heavy_minus_sign: |
| Webhooks (acceptance/load testing) | :heavy_check_mark: | :heavy_check_mark: |
| Manual gating (approve/pause/resume) | :heavy_check_mark: | :heavy_check_mark: |
| Request success rate check (L7 metric) | :heavy_minus_sign: | :heavy_minus_sign: |
| Request duration check (L7 metric) | :heavy_minus_sign: | :heavy_minus_sign: |
| Custom metric checks | :heavy_check_mark: | :heavy_check_mark: |

|

||||

|

||||

For all [Gateway API](https://gateway-api.sigs.k8s.io/) implementations like [Contour](https://projectcontour.io/guides/gateway-api/), [Istio](https://istio.io/latest/docs/tasks/traffic-management/ingress/gateway-api/) and [SMI](https://smi-spec.io) compatible service mesh solutions like [Consul Connect](https://www.consul.io/docs/connect) or [Nginx Service Mesh](https://docs.nginx.com/nginx-service-mesh/), [Prometheus MetricTemplates](https://docs.flagger.app/usage/metrics#prometheus) can be used to implement the request success rate and request duration checks.
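
A request success rate check of this kind could be sketched roughly as follows. The metric name `http_requests_total` and its `service` and `status` labels are hypothetical stand-ins for whatever the mesh or gateway actually exports, and the Prometheus address is an assumption; `{{ namespace }}`, `{{ target }}` and `{{ interval }}` are template variables that Flagger fills in at query time.

```yaml
# Hypothetical success-rate template; metric and label names depend on the proxy in use.
apiVersion: flagger.app/v1beta1
kind: MetricTemplate
metadata:
  name: success-rate
  namespace: test
spec:
  provider:
    type: prometheus
    address: http://prometheus.monitoring:9090
  # Percentage of requests that did not return a 5xx status.
  query: |
    sum(rate(http_requests_total{namespace="{{ namespace }}", service="{{ target }}", status!~"5.."}[{{ interval }}]))
    /
    sum(rate(http_requests_total{namespace="{{ namespace }}", service="{{ target }}"}[{{ interval }}]))
    * 100
---
# Fragment of a Canary spec referencing the template above.
analysis:
  metrics:
    - name: success-rate
      templateRef:
        name: success-rate
        namespace: test
      thresholdRange:
        min: 99
      interval: 1m
```

A request duration check would follow the same pattern, with a latency quantile query and a `thresholdRange.max` bound instead of `min`.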

|

||||

|

||||

### Roadmap

|

||||

|

||||

@@ -222,7 +236,6 @@ the request success rate and request duration checks.

|

||||

|

||||

#### Integrations

|

||||

|

||||

* Add support for Kubernetes [Ingress v2](https://github.com/kubernetes-sigs/service-apis)

|

||||

* Add support for ingress controllers like HAProxy and ALB

|

||||

* Add support for metrics providers like InfluxDB, Stackdriver, SignalFX

|

||||

|

||||

@@ -246,8 +259,8 @@ If you have any questions about Flagger and progressive delivery:

|

||||

* Read the Flagger [docs](https://docs.flagger.app).

|

||||

* Invite yourself to the [CNCF community slack](https://slack.cncf.io/)

|

||||

and join the [#flagger](https://cloud-native.slack.com/messages/flagger/) channel.

|

||||

* Check out the [Flux talks section](https://fluxcd.io/community/#talks) and to see a list of online talks,

|

||||

hands-on training and meetups.

|

||||

* Check out the **[Flux events calendar](https://fluxcd.io/#calendar)** for upcoming talks, events and meetings you can attend.

|

||||

* Or view the **[Flux resources section](https://fluxcd.io/resources)** for videos of past events.

|

||||

* File an [issue](https://github.com/fluxcd/flagger/issues/new).

|

||||

|

||||

Your feedback is always welcome!

|

||||

|

||||

50

artifacts/examples/kuma-canary.yaml

Normal file

@@ -0,0 +1,50 @@

|

||||

apiVersion: flagger.app/v1beta1

|

||||

kind: Canary

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

annotations:

|

||||

kuma.io/mesh: default

|

||||

spec:

|

||||

targetRef:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

progressDeadlineSeconds: 60

|

||||

service:

|

||||

port: 9898

|

||||

targetPort: 9898

|

||||

apex:

|

||||

annotations:

|

||||

9898.service.kuma.io/protocol: "http"

|

||||

canary:

|

||||

annotations:

|

||||

9898.service.kuma.io/protocol: "http"

|

||||

primary:

|

||||

annotations:

|

||||

9898.service.kuma.io/protocol: "http"

|

||||

analysis:

|

||||

interval: 15s

|

||||

threshold: 15

|

||||

maxWeight: 50

|

||||

stepWeight: 10

|

||||

metrics:

|

||||

- name: request-success-rate

|

||||

threshold: 99

|

||||

interval: 1m

|

||||

- name: request-duration

|

||||

threshold: 500

|

||||

interval: 30s

|

||||

webhooks:

|

||||

- name: acceptance-test

|

||||

type: pre-rollout

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 30s

|

||||

metadata:

|

||||

type: bash

|

||||

cmd: "curl -sd 'test' http://podinfo-canary.test:9898/token | grep token"

|

||||

- name: load-test

|

||||

type: rollout

|

||||

url: http://flagger-loadtester.test/

|

||||

metadata:

|

||||

cmd: "hey -z 2m -q 10 -c 2 http://podinfo-canary.test:9898/"

|

||||

42

artifacts/examples/osm-canary-steps.yaml

Normal file

@@ -0,0 +1,42 @@

|

||||

apiVersion: flagger.app/v1beta1

|

||||

kind: Canary

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

spec:

|

||||

provider: osm

|

||||

targetRef:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

progressDeadlineSeconds: 600

|

||||

service:

|

||||

port: 9898

|

||||

targetPort: 9898

|

||||

analysis:

|

||||

interval: 15s

|

||||

threshold: 10

|

||||

stepWeights: [5, 10, 15, 20, 25, 30, 35, 40, 45, 50, 55]

|

||||

metrics:

|

||||

- name: request-success-rate

|

||||

thresholdRange:

|

||||

min: 99

|

||||

interval: 1m

|

||||

- name: request-duration

|

||||

thresholdRange:

|

||||

max: 500

|

||||

interval: 30s

|

||||

webhooks:

|

||||

- name: acceptance-test

|

||||

type: pre-rollout

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 15s

|

||||

metadata:

|

||||

type: bash

|

||||

cmd: "curl -sd 'test' http://podinfo-canary.test:9898/token | grep token"

|

||||

- name: load-test

|

||||

type: rollout

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 5s

|

||||

metadata:

|

||||

cmd: "hey -z 1m -q 10 -c 2 http://podinfo-canary.test:9898/"

|

||||

43

artifacts/examples/osm-canary.yaml

Normal file

@@ -0,0 +1,43 @@

|

||||

apiVersion: flagger.app/v1beta1

|

||||

kind: Canary

|

||||

metadata:

|

||||

name: podinfo

|

||||

namespace: test

|

||||

spec:

|

||||

provider: osm

|

||||

targetRef:

|

||||

apiVersion: apps/v1

|

||||

kind: Deployment

|

||||

name: podinfo

|

||||

progressDeadlineSeconds: 600

|

||||

service:

|

||||

port: 9898

|

||||

targetPort: 9898

|

||||

analysis:

|

||||

interval: 15s

|

||||

threshold: 10

|

||||

maxWeight: 50

|

||||

stepWeight: 5

|

||||

metrics:

|

||||

- name: request-success-rate

|

||||

thresholdRange:

|

||||

min: 99

|

||||

interval: 1m

|

||||

- name: request-duration

|

||||

thresholdRange:

|

||||

max: 500

|

||||

interval: 30s

|

||||

webhooks:

|

||||

- name: acceptance-test

|

||||

type: pre-rollout

|

||||

url: http://flagger-loadtester.test/

|

||||

timeout: 15s

|

||||

metadata:

|

||||

type: bash

|

||||

cmd: "curl -sd 'test' http://podinfo-canary.test:9898/token | grep token"

|

||||

- name: load-test

|

||||

type: rollout

|