Mirror of https://github.com/fluxcd/flagger.git, synced 2026-04-09 20:27:21 +00:00

Compare commits

29 Commits

| Author | SHA1 | Date |
|---|---|---|
| | 4463d41744 | |
| | 66663c2aaf | |
| | d34a504516 | |
| | 9fc5f9ca31 | |
| | 3cf9fe7cb7 | |
| | 6d57b5c490 | |
| | 7254a5a7f6 | |
| | 671016834d | |
| | 41dc056c87 | |
| | 6757553685 | |
| | 1abceb8334 | |
| | 0939a1bcc9 | |
| | d91012d79c | |
| | 6e9fe2c584 | |
| | ccad05c92f | |
| | 8b9929008b | |
| | a881446252 | |
| | 183c73f2c4 | |
| | 3e3eaebbf2 | |
| | bcc5bbf6fa | |
| | 42c3052247 | |
| | 3593e20ad5 | |
| | 1f0819465b | |
| | 7e48d7537f | |
| | 45c69ce3f7 | |
| | 8699a07de9 | |
| | fb92d31744 | |
| | bb7c4b1909 | |
| | 84d74d1eb5 | |

@@ -1,256 +0,0 @@

version: 2.1
jobs:

  build-binary:
    docker:
      - image: circleci/golang:1.12
    working_directory: ~/build
    steps:
      - checkout
      - restore_cache:
          keys:
            - go-mod-v3-{{ checksum "go.sum" }}
      - run:
          name: Run go fmt
          command: make test-fmt
      - run:
          name: Build Flagger
          command: |
            CGO_ENABLED=0 GOOS=linux go build \
              -ldflags "-s -w -X github.com/weaveworks/flagger/pkg/version.REVISION=${CIRCLE_SHA1}" \
              -a -installsuffix cgo -o bin/flagger ./cmd/flagger/*.go
      - run:
          name: Build Flagger load tester
          command: |
            CGO_ENABLED=0 GOOS=linux go build \
              -a -installsuffix cgo -o bin/loadtester ./cmd/loadtester/*.go
      - run:
          name: Run unit tests
          command: |
            go test -race -coverprofile=coverage.txt -covermode=atomic $(go list ./pkg/...)
            bash <(curl -s https://codecov.io/bash)
      - run:
          name: Verify code gen
          command: make test-codegen
      - save_cache:
          key: go-mod-v3-{{ checksum "go.sum" }}
          paths:
            - "/go/pkg/mod/"
      - persist_to_workspace:
          root: bin
          paths:
            - flagger
            - loadtester

  push-container:
    docker:
      - image: circleci/golang:1.12
    steps:
      - checkout
      - setup_remote_docker:
          docker_layer_caching: true
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/container-push.sh

  push-binary:
    docker:
      - image: circleci/golang:1.12
    working_directory: ~/build
    steps:
      - checkout
      - setup_remote_docker:
          docker_layer_caching: true
      - restore_cache:
          keys:
            - go-mod-v3-{{ checksum "go.sum" }}
      - run: test/goreleaser.sh

  e2e-istio-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-istio.sh
      - run: test/e2e-tests.sh

  e2e-kubernetes-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-kubernetes.sh
      - run: test/e2e-kubernetes-tests.sh

  e2e-smi-istio-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-smi-istio.sh
      - run: test/e2e-tests.sh canary

  e2e-supergloo-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh 0.2.1
      - run: test/e2e-supergloo.sh
      - run: test/e2e-tests.sh canary

  e2e-gloo-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-gloo.sh
      - run: test/e2e-gloo-tests.sh

  e2e-nginx-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-nginx.sh
      - run: test/e2e-nginx-tests.sh

  e2e-linkerd-testing:
    machine: true
    steps:
      - checkout
      - attach_workspace:
          at: /tmp/bin
      - run: test/container-build.sh
      - run: test/e2e-kind.sh
      - run: test/e2e-linkerd.sh
      - run: test/e2e-linkerd-tests.sh

  push-helm-charts:
    docker:
      - image: circleci/golang:1.12
    steps:
      - checkout
      - run:
          name: Install kubectl
          command: sudo curl -L https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/linux/amd64/kubectl -o /usr/local/bin/kubectl && sudo chmod +x /usr/local/bin/kubectl
      - run:
          name: Install helm
          command: sudo curl -L https://storage.googleapis.com/kubernetes-helm/helm-v2.14.2-linux-amd64.tar.gz | tar xz && sudo mv linux-amd64/helm /bin/helm && sudo rm -rf linux-amd64
      - run:
          name: Initialize helm
          command: helm init --client-only --kubeconfig=$HOME/.kube/kubeconfig
      - run:
          name: Lint charts
          command: |
            helm lint ./charts/*
      - run:
          name: Package charts
          command: |
            mkdir $HOME/charts
            helm package ./charts/* --destination $HOME/charts
      - run:
          name: Publish charts
          command: |
            if echo "${CIRCLE_TAG}" | grep -Eq "[0-9]+(\.[0-9]+)*(-[a-z]+)?$"; then
              REPOSITORY="https://weaveworksbot:${GITHUB_TOKEN}@github.com/weaveworks/flagger.git"
              git config user.email weaveworksbot@users.noreply.github.com
              git config user.name weaveworksbot
              git remote set-url origin ${REPOSITORY}
              git checkout gh-pages
              mv -f $HOME/charts/*.tgz .
              helm repo index . --url https://flagger.app
              git add .
              git commit -m "Publish Helm charts v${CIRCLE_TAG}"
              git push origin gh-pages
            else
              echo "Not a release! Skip charts publish"
            fi

workflows:
  version: 2
  build-test-push:
    jobs:
      - build-binary:
          filters:
            branches:
              ignore:
                - gh-pages
      - e2e-istio-testing:
          requires:
            - build-binary
      - e2e-kubernetes-testing:
          requires:
            - build-binary
#      - e2e-supergloo-testing:
#          requires:
#            - build-binary
      - e2e-gloo-testing:
          requires:
            - build-binary
      - e2e-nginx-testing:
          requires:
            - build-binary
      - e2e-linkerd-testing:
          requires:
            - build-binary
      - push-container:
          requires:
            - build-binary
            - e2e-istio-testing
            - e2e-kubernetes-testing
            #- e2e-supergloo-testing
            - e2e-gloo-testing
            - e2e-nginx-testing
            - e2e-linkerd-testing

  release:
    jobs:
      - build-binary:
          filters:
            branches:
              ignore: /.*/
            tags:
              ignore: /^chart.*/
      - push-container:
          requires:
            - build-binary
          filters:
            branches:
              ignore: /.*/
            tags:
              ignore: /^chart.*/
      - push-binary:
          requires:
            - push-container
          filters:
            branches:
              ignore: /.*/
            tags:
              ignore: /^chart.*/
      - push-helm-charts:
          requires:
            - push-container
          filters:
            branches:
              ignore: /.*/
            tags:
              ignore: /^chart.*/

11

.codecov.yml


@@ -1,11 +0,0 @@

coverage:
  status:
    project:
      default:
        target: auto
        threshold: 50
        base: auto
    patch: off

comment:
  require_changes: yes

@@ -1 +0,0 @@

root: ./docs/gitbook

1

.github/CODEOWNERS

vendored


@@ -1 +0,0 @@

* @stefanprodan

17

.github/_main.workflow

vendored


@@ -1,17 +0,0 @@

workflow "Publish Helm charts" {
  on = "push"
  resolves = ["helm-push"]
}

action "helm-lint" {
  uses = "stefanprodan/gh-actions/helm@master"
  args = ["lint charts/*"]
}

action "helm-push" {
  needs = ["helm-lint"]
  uses = "stefanprodan/gh-actions/helm-gh-pages@master"
  args = ["charts/*","https://flagger.app"]
  secrets = ["GITHUB_TOKEN"]
}

53

.github/workflows/website.yaml

vendored

Normal file


@@ -0,0 +1,53 @@

name: website

on:
  push:
    branches:
      - website

jobs:
  vuepress:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout
        uses: actions/checkout@v5
      - uses: actions/setup-node@v5
        with:
          node-version: '12.x'
      - id: yarn-cache-dir-path
        run: echo "::set-output name=dir::$(yarn cache dir)"
      - uses: actions/cache@v4
        id: yarn-cache
        with:
          path: ${{ steps.yarn-cache-dir-path.outputs.dir }}
          key: ${{ runner.os }}-yarn-${{ hashFiles('**/yarn.lock') }}
          restore-keys: |
            ${{ runner.os }}-yarn-
      - name: Build
        run: |
          yarn add -D vuepress
          yarn docs:build
          mkdir $HOME/site
          rsync -avzh docs/.vuepress/dist/ $HOME/site
      - name: Publish
        env:
          REPOSITORY: "https://x-access-token:${{ secrets.BOT_GITHUB_TOKEN }}@github.com/fluxcd/flagger.git"
        run: |
          tmpDir=$(mktemp -d)
          pushd $tmpDir >& /dev/null

          git clone ${REPOSITORY}
          cd flagger
          git config user.name ${{ github.actor }}
          git config user.email ${{ github.actor }}@users.noreply.github.com
          git remote set-url origin ${REPOSITORY}
          git checkout gh-pages
          rm -rf assets
          rsync -avzh $HOME/site/ .
          rm -rf node_modules
          git add .
          git commit -m "Publish website"
          git push origin gh-pages

          popd >& /dev/null
          rm -rf $tmpDir

76

.gitignore

vendored


@@ -1,19 +1,65 @@

|

|||||||

# Binaries for programs and plugins

|

# Logs

|

||||||

*.exe

|

logs

|

||||||

*.exe~

|

*.log

|

||||||

*.dll

|

npm-debug.log*

|

||||||

*.so

|

yarn-debug.log*

|

||||||

*.dylib

|

yarn-error.log*

|

||||||

|

|

||||||

# Test binary, build with `go test -c`

|

# Runtime data

|

||||||

*.test

|

pids

|

||||||

|

*.pid

|

||||||

|

*.seed

|

||||||

|

*.pid.lock

|

||||||

|

|

||||||

# Output of the go coverage tool, specifically when used with LiteIDE

|

# Directory for instrumented libs generated by jscoverage/JSCover

|

||||||

*.out

|

lib-cov

|

||||||

.DS_Store

|

|

||||||

|

# Coverage directory used by tools like istanbul

|

||||||

|

coverage

|

||||||

|

|

||||||

|

# nyc test coverage

|

||||||

|

.nyc_output

|

||||||

|

|

||||||

|

# Grunt intermediate storage (http://gruntjs.com/creating-plugins#storing-task-files)

|

||||||

|

.grunt

|

||||||

|

|

||||||

|

# Bower dependency directory (https://bower.io/)

|

||||||

|

bower_components

|

||||||

|

|

||||||

|

# node-waf configuration

|

||||||

|

.lock-wscript

|

||||||

|

|

||||||

|

# Compiled binary addons (https://nodejs.org/api/addons.html)

|

||||||

|

build/Release

|

||||||

|

|

||||||

|

# Dependency directories

|

||||||

|

node_modules/

|

||||||

|

jspm_packages/

|

||||||

|

|

||||||

|

# TypeScript v1 declaration files

|

||||||

|

typings/

|

||||||

|

|

||||||

|

# Optional npm cache directory

|

||||||

|

.npm

|

||||||

|

|

||||||

|

# Optional eslint cache

|

||||||

|

.eslintcache

|

||||||

|

|

||||||

|

# Optional REPL history

|

||||||

|

.node_repl_history

|

||||||

|

|

||||||

|

# Output of 'npm pack'

|

||||||

|

*.tgz

|

||||||

|

|

||||||

|

# Yarn Integrity file

|

||||||

|

.yarn-integrity

|

||||||

|

|

||||||

|

# dotenv environment variables file

|

||||||

|

.env

|

||||||

|

|

||||||

|

# next.js build output

|

||||||

|

.next

|

||||||

|

|

||||||

bin/

|

bin/

|

||||||

_tmp/

|

docs/.vuepress/dist/

|

||||||

|

Makefile.dev

|

||||||

artifacts/gcloud/

|

|

||||||

.idea

|

|

||||||

@@ -1,18 +0,0 @@

builds:
  - main: ./cmd/flagger
    binary: flagger
    ldflags: -s -w -X github.com/weaveworks/flagger/pkg/version.REVISION={{.Commit}}
    goos:
      - linux
    goarch:
      - amd64
    env:
      - CGO_ENABLED=0
archives:
  - name_template: "{{ .Binary }}_{{ .Version }}_{{ .Os }}_{{ .Arch }}"
    files:
      - none*
changelog:
  filters:
    exclude:
      - '^CircleCI'

386

CHANGELOG.md


@@ -1,386 +0,0 @@

# Changelog

All notable changes to this project are documented in this file.

## 0.18.2 (2019-08-05)

Fixes multi-port support for Istio

#### Fixes

- Fix port discovery for multiple port services [#267](https://github.com/weaveworks/flagger/pull/267)

#### Improvements

- Update e2e testing to Istio v1.2.3, Gloo v0.18.8 and NGINX ingress chart v1.12.1 [#268](https://github.com/weaveworks/flagger/pull/268)

## 0.18.1 (2019-07-30)

Fixes Blue/Green style deployments for Kubernetes and Linkerd providers

#### Fixes

- Fix Blue/Green metrics provider and add e2e tests [#261](https://github.com/weaveworks/flagger/pull/261)

## 0.18.0 (2019-07-29)

Adds support for [manual gating](https://docs.flagger.app/how-it-works#manual-gating) and pausing/resuming an ongoing analysis

#### Features

- Implement confirm rollout gate, hook and API [#251](https://github.com/weaveworks/flagger/pull/251)

#### Improvements

- Refactor canary change detection and status [#240](https://github.com/weaveworks/flagger/pull/240)
- Implement finalising state [#257](https://github.com/weaveworks/flagger/pull/257)
- Add gRPC load testing tool [#248](https://github.com/weaveworks/flagger/pull/248)

#### Breaking changes

- Due to the status sub-resource changes in [#240](https://github.com/weaveworks/flagger/pull/240), when upgrading Flagger the canaries status phase will be reset to `Initialized`
- Upgrading Flagger with Helm will fail due to Helm poor support of CRDs, see [workaround](https://github.com/weaveworks/flagger/issues/223)

## 0.17.0 (2019-07-08)

Adds support for Linkerd (SMI Traffic Split API), MS Teams notifications and HA mode with leader election

#### Features

- Add Linkerd support [#230](https://github.com/weaveworks/flagger/pull/230)
- Implement MS Teams notifications [#235](https://github.com/weaveworks/flagger/pull/235)
- Implement leader election [#236](https://github.com/weaveworks/flagger/pull/236)

#### Improvements

- Add [Kustomize](https://docs.flagger.app/install/flagger-install-on-kubernetes#install-flagger-with-kustomize) installer [#232](https://github.com/weaveworks/flagger/pull/232)
- Add Pod Security Policy to Helm chart [#234](https://github.com/weaveworks/flagger/pull/234)

## 0.16.0 (2019-06-23)

Adds support for running [Blue/Green deployments](https://docs.flagger.app/usage/blue-green) without a service mesh or ingress controller

#### Features

- Allow blue/green deployments without a service mesh provider [#211](https://github.com/weaveworks/flagger/pull/211)
- Add the service mesh provider to the canary spec [#217](https://github.com/weaveworks/flagger/pull/217)
- Allow multi-port services and implement port discovery [#207](https://github.com/weaveworks/flagger/pull/207)

#### Improvements

- Add [FAQ page](https://docs.flagger.app/faq) to docs website
- Switch to go modules in CI [#218](https://github.com/weaveworks/flagger/pull/218)
- Update e2e testing to Kubernetes Kind 0.3.0 and Istio 1.2.0

#### Fixes

- Update the primary HPA on canary promotion [#216](https://github.com/weaveworks/flagger/pull/216)

## 0.15.0 (2019-06-12)

Adds support for customising the Istio [traffic policy](https://docs.flagger.app/how-it-works#istio-routing) in the canary service spec

#### Features

- Generate Istio destination rules and allow traffic policy customisation [#200](https://github.com/weaveworks/flagger/pull/200)

#### Improvements

- Update Kubernetes packages to 1.14 and use go modules instead of dep [#202](https://github.com/weaveworks/flagger/pull/202)

## 0.14.1 (2019-06-05)

Adds support for running [acceptance/integration tests](https://docs.flagger.app/how-it-works#integration-testing) with Helm test or Bash Bats using pre-rollout hooks

#### Features

- Implement Helm and Bash pre-rollout hooks [#196](https://github.com/weaveworks/flagger/pull/196)

#### Fixes

- Fix promoting canary when max weight is not a multiple of step [#190](https://github.com/weaveworks/flagger/pull/190)
- Add ability to set Prometheus url with custom path without trailing '/' [#197](https://github.com/weaveworks/flagger/pull/197)

## 0.14.0 (2019-05-21)

Adds support for Service Mesh Interface and [Gloo](https://docs.flagger.app/usage/gloo-progressive-delivery) ingress controller

#### Features

- Add support for SMI (Istio weighted traffic) [#180](https://github.com/weaveworks/flagger/pull/180)
- Add support for Gloo ingress controller (weighted traffic) [#179](https://github.com/weaveworks/flagger/pull/179)

## 0.13.2 (2019-04-11)

Fixes for Jenkins X deployments (prevent the jx GC from removing the primary instance)

#### Fixes

- Do not copy labels from canary to primary deployment [#178](https://github.com/weaveworks/flagger/pull/178)

#### Improvements

- Add NGINX ingress controller e2e and unit tests [#176](https://github.com/weaveworks/flagger/pull/176)

## 0.13.1 (2019-04-09)

Fixes for custom metrics checks and NGINX Prometheus queries

#### Fixes

- Fix promql queries for custom checks and NGINX [#174](https://github.com/weaveworks/flagger/pull/174)

## 0.13.0 (2019-04-08)

Adds support for [NGINX](https://docs.flagger.app/usage/nginx-progressive-delivery) ingress controller

#### Features

- Add support for nginx ingress controller (weighted traffic and A/B testing) [#170](https://github.com/weaveworks/flagger/pull/170)
- Add Prometheus add-on to Flagger Helm chart for App Mesh and NGINX [79b3370](https://github.com/weaveworks/flagger/pull/170/commits/79b337089294a92961bc8446fd185b38c50a32df)

#### Fixes

- Fix duplicate hosts Istio error when using wildcards [#162](https://github.com/weaveworks/flagger/pull/162)

## 0.12.0 (2019-04-29)

Adds support for [SuperGloo](https://docs.flagger.app/install/flagger-install-with-supergloo)

#### Features

- Supergloo support for canary deployment (weighted traffic) [#151](https://github.com/weaveworks/flagger/pull/151)

## 0.11.1 (2019-04-18)

Move Flagger and the load tester container images to Docker Hub

#### Features

- Add Bash Automated Testing System support to Flagger tester for running acceptance tests as pre-rollout hooks

## 0.11.0 (2019-04-17)

Adds pre/post rollout [webhooks](https://docs.flagger.app/how-it-works#webhooks)

#### Features

- Add `pre-rollout` and `post-rollout` webhook types [#147](https://github.com/weaveworks/flagger/pull/147)

#### Improvements

- Unify App Mesh and Istio builtin metric checks [#146](https://github.com/weaveworks/flagger/pull/146)
- Make the pod selector label configurable [#148](https://github.com/weaveworks/flagger/pull/148)

#### Breaking changes

- Set default `mesh` Istio gateway only if no gateway is specified [#141](https://github.com/weaveworks/flagger/pull/141)

## 0.10.0 (2019-03-27)

Adds support for App Mesh

#### Features

- AWS App Mesh integration
    [#107](https://github.com/weaveworks/flagger/pull/107)
    [#123](https://github.com/weaveworks/flagger/pull/123)

#### Improvements

- Reconcile Kubernetes ClusterIP services [#122](https://github.com/weaveworks/flagger/pull/122)

#### Fixes

- Preserve pod labels on canary promotion [#105](https://github.com/weaveworks/flagger/pull/105)
- Fix canary status Prometheus metric [#121](https://github.com/weaveworks/flagger/pull/121)

## 0.9.0 (2019-03-11)

Allows A/B testing scenarios where instead of weighted routing, the traffic is split between the
primary and canary based on HTTP headers or cookies.

#### Features

- A/B testing - canary with session affinity [#88](https://github.com/weaveworks/flagger/pull/88)

#### Fixes

- Update the analysis interval when the custom resource changes [#91](https://github.com/weaveworks/flagger/pull/91)

## 0.8.0 (2019-03-06)

Adds support for CORS policy and HTTP request headers manipulation

#### Features

- CORS policy support [#83](https://github.com/weaveworks/flagger/pull/83)
- Allow headers to be appended to HTTP requests [#82](https://github.com/weaveworks/flagger/pull/82)

#### Improvements

- Refactor the routing management
    [#72](https://github.com/weaveworks/flagger/pull/72)
    [#80](https://github.com/weaveworks/flagger/pull/80)
- Fine-grained RBAC [#73](https://github.com/weaveworks/flagger/pull/73)
- Add option to limit Flagger to a single namespace [#78](https://github.com/weaveworks/flagger/pull/78)

## 0.7.0 (2019-02-28)

Adds support for custom metric checks, HTTP timeouts and HTTP retries

#### Features

- Allow custom promql queries in the canary analysis spec [#60](https://github.com/weaveworks/flagger/pull/60)
- Add HTTP timeout and retries to canary service spec [#62](https://github.com/weaveworks/flagger/pull/62)

## 0.6.0 (2019-02-25)

Allows for [HTTPMatchRequests](https://istio.io/docs/reference/config/istio.networking.v1alpha3/#HTTPMatchRequest)
and [HTTPRewrite](https://istio.io/docs/reference/config/istio.networking.v1alpha3/#HTTPRewrite)
to be customized in the service spec of the canary custom resource.

#### Features

- Add HTTP match conditions and URI rewrite to the canary service spec [#55](https://github.com/weaveworks/flagger/pull/55)
- Update virtual service when the canary service spec changes
    [#54](https://github.com/weaveworks/flagger/pull/54)
    [#51](https://github.com/weaveworks/flagger/pull/51)

#### Improvements

- Run e2e testing on [Kubernetes Kind](https://github.com/kubernetes-sigs/kind) for canary promotion
    [#53](https://github.com/weaveworks/flagger/pull/53)

## 0.5.1 (2019-02-14)

Allows skipping the analysis phase to ship changes directly to production

#### Features

- Add option to skip the canary analysis [#46](https://github.com/weaveworks/flagger/pull/46)

#### Fixes

- Reject deployment if the pod label selector doesn't match `app: <DEPLOYMENT_NAME>` [#43](https://github.com/weaveworks/flagger/pull/43)

## 0.5.0 (2019-01-30)

Track changes in ConfigMaps and Secrets [#37](https://github.com/weaveworks/flagger/pull/37)

#### Features

- Promote configmaps and secrets changes from canary to primary
- Detect changes in configmaps and/or secrets and (re)start canary analysis
- Add configs checksum to Canary CRD status
- Create primary configmaps and secrets at bootstrap
- Scan canary volumes and containers for configmaps and secrets

#### Fixes

- Copy deployment labels from canary to primary at bootstrap and promotion

## 0.4.1 (2019-01-24)

Load testing webhook [#35](https://github.com/weaveworks/flagger/pull/35)

#### Features

- Add the load tester chart to Flagger Helm repository
- Implement a load test runner based on [rakyll/hey](https://github.com/rakyll/hey)
- Log warning when no values are found for Istio metric due to lack of traffic

#### Fixes

- Run webhooks before the metrics checks to avoid failures when using a load tester

## 0.4.0 (2019-01-18)

|

|

||||||

|

|

||||||

Restart canary analysis if revision changes [#31](https://github.com/weaveworks/flagger/pull/31)

|

|

||||||

|

|

||||||

#### Breaking changes

|

|

||||||

|

|

||||||

- Drop support for Kubernetes 1.10

|

|

||||||

|

|

||||||

#### Features

|

|

||||||

|

|

||||||

- Detect changes during canary analysis and reset advancement

|

|

||||||

- Add status and additional printer columns to CRD

|

|

||||||

- Add canary name and namespace to controller structured logs

|

|

||||||

|

|

||||||

#### Fixes

|

|

||||||

|

|

||||||

- Allow canary name to be different to the target name

|

|

||||||

- Check if multiple canaries have the same target and log error

|

|

||||||

- Use deep copy when updating Kubernetes objects

|

|

||||||

- Skip readiness checks if canary analysis has finished

|

|

||||||

|

|

||||||

## 0.3.0 (2019-01-11)

Configurable canary analysis duration [#20](https://github.com/weaveworks/flagger/pull/20)

#### Breaking changes

- Helm chart: flag `controlLoopInterval` has been removed

#### Features

- CRD: canaries.flagger.app v1alpha3
- Schedule canary analysis independently based on `canaryAnalysis.interval`
- Add analysis interval to Canary CRD (defaults to one minute)
- Make autoscaler (HPA) reference optional

## 0.2.0 (2019-01-04)

Webhooks [#18](https://github.com/weaveworks/flagger/pull/18)

#### Features

- CRD: canaries.flagger.app v1alpha2
- Implement canary external checks based on webhooks HTTP POST calls
- Add webhooks to Canary CRD
- Move docs to gitbook [docs.flagger.app](https://docs.flagger.app)

## 0.1.2 (2018-12-06)

Improve Slack notifications [#14](https://github.com/weaveworks/flagger/pull/14)

#### Features

- Add canary analysis metadata to init and start Slack messages
- Add rollback reason to failed canary Slack messages

## 0.1.1 (2018-11-28)

Canary progress deadline [#10](https://github.com/weaveworks/flagger/pull/10)

#### Features

- Rollback canary based on the deployment progress deadline check
- Add progress deadline to Canary CRD (defaults to 10 minutes)

## 0.1.0 (2018-11-25)

First stable release

#### Features

- CRD: canaries.flagger.app v1alpha1
- Notifications: post canary events to Slack
- Instrumentation: expose Prometheus metrics for canary status and traffic weight percentage
- Autoscaling: add HPA reference to CRD and create primary HPA at bootstrap
- Bootstrap: create primary deployment, ClusterIP services and Istio virtual service based on CRD spec

## 0.0.1 (2018-10-07)

Initial semver release

#### Features

- Implement canary rollback based on failed checks threshold
- Scale up the deployment when canary revision changes
- Add OpenAPI v3 schema validation to Canary CRD
- Use CRD status for canary state persistence
- Add Helm charts for Flagger and Grafana
- Add canary analysis Grafana dashboard

@@ -1,72 +0,0 @@

# How to Contribute

Flagger is [Apache 2.0 licensed](LICENSE) and accepts contributions via GitHub
pull requests. This document outlines some of the conventions on development
workflow, commit message formatting, contact points and other resources to make
it easier to get your contribution accepted.

We gratefully welcome improvements to documentation as well as to code.

## Certificate of Origin

By contributing to this project you agree to the Developer Certificate of
Origin (DCO). This document was created by the Linux Kernel community and is a
simple statement that you, as a contributor, have the legal right to make the
contribution.

## Chat

The project uses Slack. To join the conversation, join the
[Weave community](https://slack.weave.works/) Slack workspace.

## Getting Started

- Fork the repository on GitHub
- If you want to contribute as a developer, continue reading this document for further instructions
- If you have questions, concerns, get stuck or need a hand, let us know
  on the Slack channel. We are happy to help and look forward to having
  you as part of the team, in whatever capacity.
- Play with the project, submit bugs, submit pull requests!

## Contribution workflow

This is a rough outline of how to prepare a contribution:

- Create a topic branch from where you want to base your work (usually branched from master).
- Make commits of logical units.
- Make sure your commit messages are in the proper format (see below).
- Push your changes to a topic branch in your fork of the repository.
- If you changed code:
  - add automated tests to cover your changes
- Submit a pull request to the original repository.

## Acceptance policy

These things will make a PR more likely to be accepted:

- a well-described requirement
- new code and tests follow the conventions in old code and tests
- a good commit message (see below)
- All code must abide by [Go Code Review Comments](https://github.com/golang/go/wiki/CodeReviewComments)
- Names should abide by [What's in a name](https://talks.golang.org/2014/names.slide#1)
- Code must build on both Linux and Darwin, via plain `go build`
- Code should have appropriate test coverage and tests should be written
  to work with `go test`

In general, we will merge a PR once one maintainer has endorsed it.
For substantial changes, more people may become involved, and you might
get asked to resubmit the PR or divide the changes into more than one PR.

### Format of the Commit Message

For Flagger we prefer the following rules for good commit messages:

- Limit the subject to 50 characters and write as the continuation
  of the sentence "If applied, this commit will ..."
- Explain what and why in the body, if more than a trivial change;
  wrap it at 72 characters.

The [following article](https://chris.beams.io/posts/git-commit/#seven-rules)
has some more helpful advice on documenting your work.
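
As a rough illustration of the two rules above, the following Python sketch checks a commit message against the 50-character subject limit and the 72-character body wrap. It is not part of the project's tooling; the function name `check_commit_message` and the wording of the returned messages are assumptions made for this example.

```python
def check_commit_message(message):
    """Return a list of rule violations for a commit message (empty if none)."""
    lines = message.splitlines()
    if not lines or not lines[0].strip():
        return ["subject line is missing"]

    problems = []
    subject = lines[0]
    if len(subject) > 50:
        problems.append(f"subject is {len(subject)} characters, limit is 50")

    # Every line after the subject counts as body text and should wrap at 72.
    for number, line in enumerate(lines[1:], start=2):
        if len(line) > 72:
            problems.append(f"line {number} is {len(line)} characters, wrap at 72")
    return problems
```

A check like this could be called from a local `commit-msg` hook, although the rules are conventions for reviewers rather than hard gates.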


This doc is adapted from the [Weaveworks Flux](https://github.com/weaveworks/flux/blob/master/CONTRIBUTING.md) contributing guide.

16

Dockerfile

@@ -1,16 +0,0 @@

FROM alpine:3.9

RUN addgroup -S flagger \
    && adduser -S -g flagger flagger \
    && apk --no-cache add ca-certificates

WORKDIR /home/flagger

COPY /bin/flagger .

RUN chown -R flagger:flagger ./

USER flagger

ENTRYPOINT ["./flagger"]

@@ -1,27 +0,0 @@

FROM bats/bats:v1.1.0

RUN addgroup -S app \
    && adduser -S -g app app \
    && apk --no-cache add ca-certificates curl jq

WORKDIR /home/app

RUN curl -sSLo hey "https://storage.googleapis.com/jblabs/dist/hey_linux_v0.1.2" && \
    chmod +x hey && mv hey /usr/local/bin/hey

RUN curl -sSL "https://get.helm.sh/helm-v2.12.3-linux-amd64.tar.gz" | tar xvz && \
    chmod +x linux-amd64/helm && mv linux-amd64/helm /usr/local/bin/helm && \
    rm -rf linux-amd64

RUN curl -sSL "https://github.com/bojand/ghz/releases/download/v0.39.0/ghz_0.39.0_Linux_x86_64.tar.gz" | tar xz -C /tmp && \
    mv /tmp/ghz /usr/local/bin && chmod +x /usr/local/bin/ghz && rm -rf /tmp/ghz-web

RUN ls /tmp

COPY ./bin/loadtester .

RUN chown -R app:app ./

USER app

ENTRYPOINT ["./loadtester"]

2

LICENSE

@@ -186,7 +186,7 @@

 same "printed page" as the copyright notice for easier
 identification within third-party archives.

-Copyright 2018 Weaveworks. All rights reserved.
+Copyright 2019 Weaveworks

 Licensed under the Apache License, Version 2.0 (the "License");
 you may not use this file except in compliance with the License.

@@ -1,5 +0,0 @@

The maintainers are generally available in Slack at
https://weave-community.slack.com/messages/flagger/ (obtain an invitation
at https://slack.weave.works/).

Stefan Prodan, Weaveworks <stefan@weave.works> (Slack: @stefan Twitter: @stefanprodan)

127

Makefile

@@ -1,127 +0,0 @@

TAG?=latest
VERSION?=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"')
VERSION_MINOR:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"' | rev | cut -d'.' -f2- | rev)
PATCH:=$(shell grep 'VERSION' pkg/version/version.go | awk '{ print $$4 }' | tr -d '"' | awk -F. '{print $$NF}')
SOURCE_DIRS = cmd pkg/apis pkg/controller pkg/server pkg/canary pkg/metrics pkg/router pkg/notifier
LT_VERSION?=$(shell grep 'VERSION' cmd/loadtester/main.go | awk '{ print $$4 }' | tr -d '"' | head -n1)
TS=$(shell date +%Y-%m-%d_%H-%M-%S)

run:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=istio -namespace=test \
	-metrics-server=https://prometheus.istio.weavedx.com \
	-enable-leader-election=true

run2:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=istio -namespace=test \
	-metrics-server=https://prometheus.istio.weavedx.com \
	-enable-leader-election=true \
	-port=9092

run-appmesh:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=appmesh \
	-metrics-server=http://acfc235624ca911e9a94c02c4171f346-1585187926.us-west-2.elb.amazonaws.com:9090

run-nginx:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=nginx -namespace=nginx \
	-metrics-server=http://prometheus-weave.istio.weavedx.com

run-smi:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=smi:istio -namespace=smi \
	-metrics-server=https://prometheus.istio.weavedx.com

run-gloo:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=gloo -namespace=gloo \
	-metrics-server=https://prometheus.istio.weavedx.com

run-nop:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=none -namespace=bg \
	-metrics-server=https://prometheus.istio.weavedx.com

run-linkerd:
	GO111MODULE=on go run cmd/flagger/* -kubeconfig=$$HOME/.kube/config -log-level=info -mesh-provider=smi:linkerd -namespace=demo \
	-metrics-server=https://linkerd-prometheus.istio.weavedx.com


build:
	GIT_COMMIT=$$(git rev-list -1 HEAD) && GO111MODULE=on CGO_ENABLED=0 GOOS=linux go build -ldflags "-s -w -X github.com/weaveworks/flagger/pkg/version.REVISION=$${GIT_COMMIT}" -a -installsuffix cgo -o ./bin/flagger ./cmd/flagger/*
	docker build -t weaveworks/flagger:$(TAG) . -f Dockerfile

push:
	docker tag weaveworks/flagger:$(TAG) weaveworks/flagger:$(VERSION)
	docker push weaveworks/flagger:$(VERSION)

fmt:
	gofmt -l -s -w $(SOURCE_DIRS)

test-fmt:
	gofmt -l -s $(SOURCE_DIRS) | grep ".*\.go"; if [ "$$?" = "0" ]; then exit 1; fi

test-codegen:
	./hack/verify-codegen.sh

test: test-fmt test-codegen
	go test ./...

helm-package:
	cd charts/ && helm package ./*
	mv charts/*.tgz bin/
	curl -s https://raw.githubusercontent.com/weaveworks/flagger/gh-pages/index.yaml > ./bin/index.yaml
	helm repo index bin --url https://flagger.app --merge ./bin/index.yaml

helm-up:
	helm upgrade --install flagger ./charts/flagger --namespace=istio-system --set crd.create=false
	helm upgrade --install flagger-grafana ./charts/grafana --namespace=istio-system

version-set:
	@next="$(TAG)" && \
	current="$(VERSION)" && \
	sed -i '' "s/$$current/$$next/g" pkg/version/version.go && \
	sed -i '' "s/flagger:$$current/flagger:$$next/g" artifacts/flagger/deployment.yaml && \
	sed -i '' "s/tag: $$current/tag: $$next/g" charts/flagger/values.yaml && \
	sed -i '' "s/appVersion: $$current/appVersion: $$next/g" charts/flagger/Chart.yaml && \
	sed -i '' "s/version: $$current/version: $$next/g" charts/flagger/Chart.yaml && \
	sed -i '' "s/newTag: $$current/newTag: $$next/g" kustomize/base/flagger/kustomization.yaml && \
	echo "Version $$next set in code, deployment, chart and kustomize"


version-up:
	@next="$(VERSION_MINOR).$$(($(PATCH) + 1))" && \
	current="$(VERSION)" && \
	sed -i '' "s/$$current/$$next/g" pkg/version/version.go && \
	sed -i '' "s/flagger:$$current/flagger:$$next/g" artifacts/flagger/deployment.yaml && \
	sed -i '' "s/tag: $$current/tag: $$next/g" charts/flagger/values.yaml && \
	sed -i '' "s/appVersion: $$current/appVersion: $$next/g" charts/flagger/Chart.yaml && \
	echo "Version $$next set in code, deployment and chart"

dev-up: version-up
	@echo "Starting build/push/deploy pipeline for $(VERSION)"
	docker build -t quay.io/stefanprodan/flagger:$(VERSION) . -f Dockerfile
	docker push quay.io/stefanprodan/flagger:$(VERSION)
	kubectl apply -f ./artifacts/flagger/crd.yaml
	helm upgrade -i flagger ./charts/flagger --namespace=istio-system --set crd.create=false

release:
	git tag $(VERSION)
	git push origin $(VERSION)

release-set: fmt version-set helm-package
	git add .
	git commit -m "Release $(VERSION)"
	git push origin master
	git tag $(VERSION)
	git push origin $(VERSION)

reset-test:
	kubectl delete -f ./artifacts/namespaces
	kubectl apply -f ./artifacts/namespaces
	kubectl apply -f ./artifacts/canaries

loadtester-run: loadtester-build
	docker build -t weaveworks/flagger-loadtester:$(LT_VERSION) . -f Dockerfile.loadtester
	docker rm -f tester || true
	docker run -dp 8888:9090 --name tester weaveworks/flagger-loadtester:$(LT_VERSION)

loadtester-build:
	GO111MODULE=on CGO_ENABLED=0 GOOS=linux go build -a -installsuffix cgo -o ./bin/loadtester ./cmd/loadtester/*

loadtester-push:
	docker build -t weaveworks/flagger-loadtester:$(LT_VERSION) . -f Dockerfile.loadtester
	docker push weaveworks/flagger-loadtester:$(LT_VERSION)

198

README.md

@@ -1,198 +1,4 @@

|

|||||||

# flagger

|

# flagger website

|

||||||

|

|

||||||

[](https://circleci.com/gh/weaveworks/flagger)

|

[flagger.app](https://flagger.app)

|

||||||

[](https://goreportcard.com/report/github.com/weaveworks/flagger)

|

|

||||||

[](https://codecov.io/gh/weaveworks/flagger)

|

|

||||||

[](https://github.com/weaveworks/flagger/blob/master/LICENSE)

|

|

||||||

[](https://github.com/weaveworks/flagger/releases)

|

|

||||||

|

|

||||||

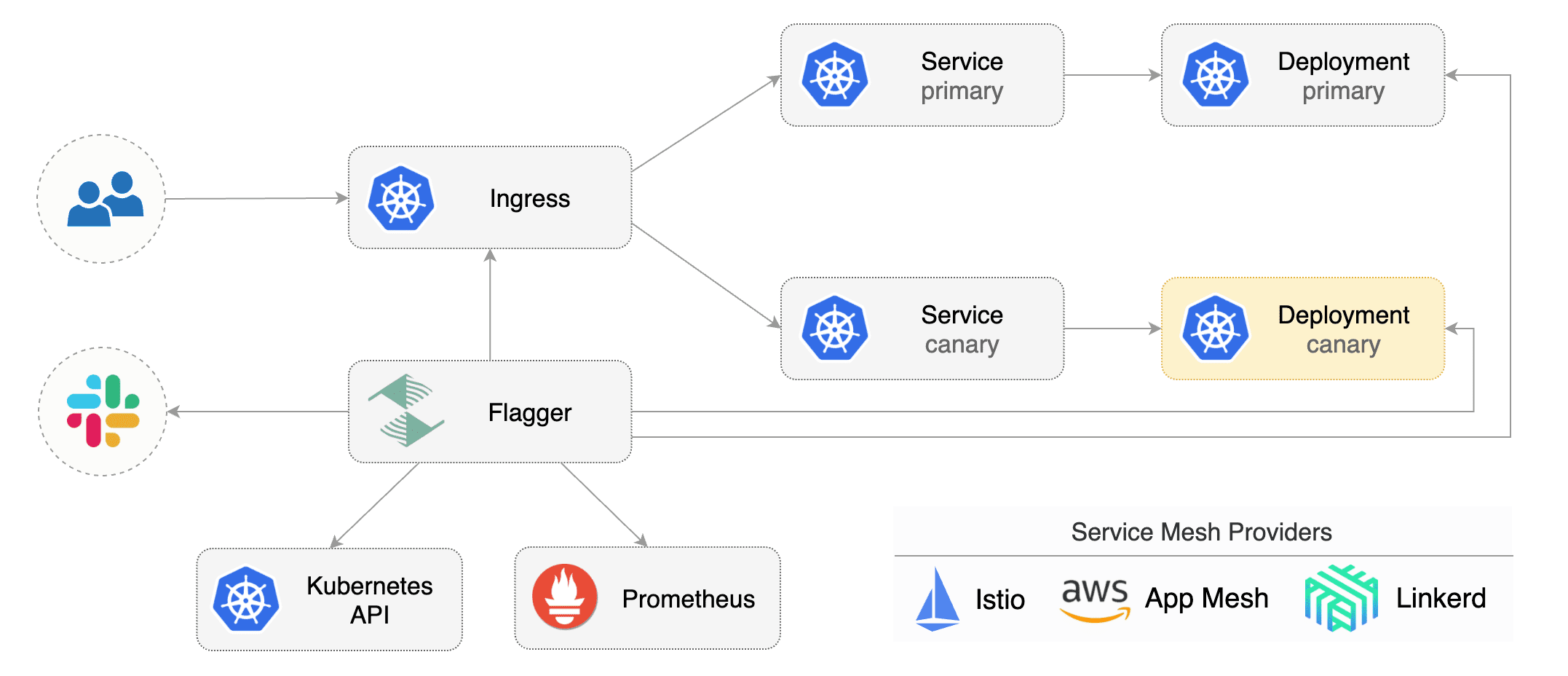

Flagger is a Kubernetes operator that automates the promotion of canary deployments
using Istio, Linkerd, App Mesh, NGINX or Gloo routing for traffic shifting and Prometheus metrics for canary analysis.
The canary analysis can be extended with webhooks for running acceptance tests,
load tests or any other custom validation.

Flagger implements a control loop that gradually shifts traffic to the canary while measuring key performance
indicators like HTTP requests success rate, requests average duration and pods health.
Based on analysis of the KPIs a canary is promoted or aborted, and the analysis result is published to Slack or MS Teams.
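
The control loop described above can be sketched roughly as follows. This is an illustration only, not Flagger's implementation: the function `run_canary`, the `check_metrics` callback and the parameters `step_weight`, `max_weight` and `threshold` are names assumed for this example, and a real controller would wait for an analysis interval between checks.

```python
def run_canary(check_metrics, step_weight=10, max_weight=50, threshold=5):
    """Shift traffic to the canary in steps; abort after too many failed checks.

    check_metrics(weight) is a caller-supplied function that returns True
    when the KPIs look healthy at the given canary traffic weight.
    Returns (outcome, history), where outcome is "promoted" or "aborted"
    and history lists the canary weights that were applied.
    """
    weight = 0
    failed_checks = 0
    history = []
    while True:
        if not check_metrics(weight):
            # A failed check halts the advancement at the current weight.
            failed_checks += 1
            if failed_checks >= threshold:
                # Too many failures: route all traffic back to the primary.
                history.append(0)
                return "aborted", history
            continue
        if weight >= max_weight:
            return "promoted", history
        # KPIs are healthy: move one more step of traffic to the canary.
        weight = min(weight + step_weight, max_weight)
        history.append(weight)
```

For example, `run_canary(lambda weight: True)` walks through every step up to `max_weight` and reports a promotion, while a callback that always fails leads to an abort once `threshold` checks have failed.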

## Documentation

Flagger documentation can be found at [docs.flagger.app](https://docs.flagger.app)

* Install
    * [Flagger install on Kubernetes](https://docs.flagger.app/install/flagger-install-on-kubernetes)
    * [Flagger install on GKE Istio](https://docs.flagger.app/install/flagger-install-on-google-cloud)
    * [Flagger install on EKS App Mesh](https://docs.flagger.app/install/flagger-install-on-eks-appmesh)
    * [Flagger install with SuperGloo](https://docs.flagger.app/install/flagger-install-with-supergloo)
* How it works
    * [Canary custom resource](https://docs.flagger.app/how-it-works#canary-custom-resource)
    * [Routing](https://docs.flagger.app/how-it-works#istio-routing)
    * [Canary deployment stages](https://docs.flagger.app/how-it-works#canary-deployment)
    * [Canary analysis](https://docs.flagger.app/how-it-works#canary-analysis)
    * [HTTP metrics](https://docs.flagger.app/how-it-works#http-metrics)
    * [Custom metrics](https://docs.flagger.app/how-it-works#custom-metrics)
    * [Webhooks](https://docs.flagger.app/how-it-works#webhooks)
    * [Load testing](https://docs.flagger.app/how-it-works#load-testing)
    * [Manual gating](https://docs.flagger.app/how-it-works#manual-gating)
    * [FAQ](https://docs.flagger.app/faq)
* Usage
    * [Istio canary deployments](https://docs.flagger.app/usage/progressive-delivery)
    * [Istio A/B testing](https://docs.flagger.app/usage/ab-testing)
    * [Linkerd canary deployments](https://docs.flagger.app/usage/linkerd-progressive-delivery)
    * [App Mesh canary deployments](https://docs.flagger.app/usage/appmesh-progressive-delivery)
    * [NGINX ingress controller canary deployments](https://docs.flagger.app/usage/nginx-progressive-delivery)
    * [Gloo ingress controller canary deployments](https://docs.flagger.app/usage/gloo-progressive-delivery)
    * [Blue/Green deployments](https://docs.flagger.app/usage/blue-green)
    * [Monitoring](https://docs.flagger.app/usage/monitoring)
    * [Alerting](https://docs.flagger.app/usage/alerting)
* Tutorials
    * [Canary deployments with Helm charts and Weave Flux](https://docs.flagger.app/tutorials/canary-helm-gitops)

## Canary CRD

Flagger takes a Kubernetes deployment and optionally a horizontal pod autoscaler (HPA),
then creates a series of objects (Kubernetes deployments, ClusterIP services and Istio or App Mesh virtual services).
These objects expose the application on the mesh and drive the canary analysis and promotion.

Flagger keeps track of ConfigMaps and Secrets referenced by a Kubernetes Deployment and triggers a canary analysis if any of those objects change.
When promoting a workload in production, both code (container images) and configuration (config maps and secrets) are being synchronised.
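
One way to detect such configuration changes is to compare a checksum of the referenced ConfigMap and Secret data between reconciliations. The Python sketch below illustrates the idea under that assumption; it does not describe how Flagger tracks these objects, and `config_checksum` and `needs_analysis` are hypothetical helpers introduced only for this example.

```python
import hashlib
import json


def config_checksum(objects):
    """Hash a mapping of object name -> data dict in a key-order-independent way."""
    # sort_keys gives a canonical form, so key order does not affect the hash.
    canonical = json.dumps(objects, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_analysis(last_checksum, objects):
    """Return (changed, new_checksum) for the currently referenced config objects."""
    current = config_checksum(objects)
    return current != last_checksum, current
```

A caller would store the returned checksum after each pass and start a new analysis whenever `changed` is true.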


For a deployment named _podinfo_, a canary promotion can be defined using Flagger's custom resource:

```yaml
apiVersion: flagger.app/v1alpha3
kind: Canary
metadata:
  name: podinfo
  namespace: test
spec:
  # service mesh provider (optional)
  # can be: kubernetes, istio, linkerd, appmesh, nginx, gloo, supergloo
  # use the kubernetes provider for Blue/Green style deployments
  provider: istio