mirror of

https://github.com/fluxcd/flagger.git

synced 2026-04-15 06:57:34 +00:00

Compare commits

294 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

1e5d83ad21 | ||

|

|

68f0920548 | ||

|

|

0d25d84230 | ||

|

|

15a6f742e0 | ||

|

|

495a5b24f4 | ||

|

|

956daea9dd | ||

|

|

7b17286b96 | ||

|

|

e535b01de1 | ||

|

|

d151a1b5e4 | ||

|

|

7242fa7d5c | ||

|

|

9d4ebd9ddd | ||

|

|

b2e713dbc1 | ||

|

|

8bcc7bf9af | ||

|

|

3078f96830 | ||

|

|

8708e35287 | ||

|

|

a8b96f053d | ||

|

|

a487357bd5 | ||

|

|

e8aba087ac | ||

|

|

5b7a679944 | ||

|

|

8229852585 | ||

|

|

f1def19f25 | ||

|

|

44363d5d99 | ||

|

|

f3f62667bf | ||

|

|

3d8615735b | ||

|

|

d1b6b36bcd | ||

|

|

e4755a4567 | ||

|

|

2ced721cf1 | ||

|

|

cf267d0bbd | ||

|

|

49d59f3b45 | ||

|

|

699ea2b8aa | ||

|

|

064d867510 | ||

|

|

5f63c4ae63 | ||

|

|

4ebb38743d | ||

|

|

01a7f3606c | ||

|

|

699c577fa6 | ||

|

|

6879038a63 | ||

|

|

cc2f9456cf | ||

|

|

7994989b29 | ||

|

|

1206132e0c | ||

|

|

74cfbda40c | ||

|

|

1266ff48d8 | ||

|

|

b1315679b8 | ||

|

|

859fb7e160 | ||

|

|

32077636ff | ||

|

|

e263d6a169 | ||

|

|

8d517799b5 | ||

|

|

a89cd6d3ba | ||

|

|

4c2de0c716 | ||

|

|

059a304a07 | ||

|

|

317d53a71f | ||

|

|

202b6e7eb1 | ||

|

|

e59e3aedd4 | ||

|

|

2b45c2013c | ||

|

|

6786668684 | ||

|

|

27eb21ecc8 | ||

|

|

e7d8adecb4 | ||

|

|

aa574d469e | ||

|

|

5ba20c254a | ||

|

|

bb2cf39393 | ||

|

|

05d08d3ff1 | ||

|

|

7ec3774172 | ||

|

|

e9ffef29f6 | ||

|

|

64035b4942 | ||

|

|

925cc37c8f | ||

|

|

1574e29376 | ||

|

|

4a34158587 | ||

|

|

534196adde | ||

|

|

57bf2ab7d1 | ||

|

|

c65d072249 | ||

|

|

a50d7de86d | ||

|

|

e365c21322 | ||

|

|

7ce679678f | ||

|

|

685c816a12 | ||

|

|

5d3ab056f0 | ||

|

|

2587a3d3f1 | ||

|

|

58270dd4b9 | ||

|

|

86081708a4 | ||

|

|

686e8a3e8b | ||

|

|

0aecddb00e | ||

|

|

26518cecbf | ||

|

|

9d1db87592 | ||

|

|

e352010bfd | ||

|

|

58267752b1 | ||

|

|

2dd48c6e79 | ||

|

|

6c29c21184 | ||

|

|

85fe251991 | ||

|

|

69861e0c8a | ||

|

|

e440be17ae | ||

|

|

ce52408bbc | ||

|

|

badf7b9a4f | ||

|

|

3e9fe97ba3 | ||

|

|

ec7066b31b | ||

|

|

ec74dc5a33 | ||

|

|

cbdc2c5a7c | ||

|

|

228fbeeda4 | ||

|

|

b0d9825afa | ||

|

|

7509264d73 | ||

|

|

f08725d661 | ||

|

|

f2a9a8d645 | ||

|

|

e799030ae3 | ||

|

|

ec0657f436 | ||

|

|

fce46e26d4 | ||

|

|

e015a409fe | ||

|

|

82ff90ce26 | ||

|

|

63edc627ad | ||

|

|

c2b4287ce1 | ||

|

|

5b61f15f95 | ||

|

|

9c815f2252 | ||

|

|

d16c9696c3 | ||

|

|

14ccda5506 | ||

|

|

a496b99d6e | ||

|

|

882286dce7 | ||

|

|

8aa9ca92e3 | ||

|

|

52dd8f8c14 | ||

|

|

4d28b9074b | ||

|

|

381c19b952 | ||

|

|

50f9255af2 | ||

|

|

a7df3457ad | ||

|

|

647f624554 | ||

|

|

3d3e051f03 | ||

|

|

4c0b2beb63 | ||

|

|

ec44f64465 | ||

|

|

19d4e521a3 | ||

|

|

85a3b7c388 | ||

|

|

26ec719c67 | ||

|

|

66364bb2c9 | ||

|

|

f9f8d7e71e | ||

|

|

bdbd1fb1f0 | ||

|

|

b3112a53f1 | ||

|

|

f1f4e68673 | ||

|

|

9b56445621 | ||

|

|

f5f3d92d3d | ||

|

|

4d074799ca | ||

|

|

d38a2406a7 | ||

|

|

25ccfca835 | ||

|

|

487b6566ee | ||

|

|

14caeb12ad | ||

|

|

cf8fcd0539 | ||

|

|

d8387a351e | ||

|

|

300cd24493 | ||

|

|

fb66d24f89 | ||

|

|

f1fc8c067e | ||

|

|

da1ee05c0a | ||

|

|

57099ecd43 | ||

|

|

8c5b41bbe6 | ||

|

|

7bc716508c | ||

|

|

d82d9765e1 | ||

|

|

74e570c198 | ||

|

|

6adf51083e | ||

|

|

a5be82a7d3 | ||

|

|

83693668ed | ||

|

|

c2929694a6 | ||

|

|

82db9ff213 | ||

|

|

5e853bb589 | ||

|

|

9e1fad3947 | ||

|

|

a4f5a983ba | ||

|

|

08d7520458 | ||

|

|

283de16660 | ||

|

|

5e47ae287b | ||

|

|

e7e155048d | ||

|

|

8197073cf0 | ||

|

|

310111bb8d | ||

|

|

3dd667f3b3 | ||

|

|

e06334cd12 | ||

|

|

8d8b99dc78 | ||

|

|

3418488902 | ||

|

|

b96f6f0920 | ||

|

|

e593f2e258 | ||

|

|

7b6c37ea1f | ||

|

|

4dbeec02c8 | ||

|

|

1b2df99799 | ||

|

|

6d72050e81 | ||

|

|

b97a87a1b4 | ||

|

|

89b0487376 | ||

|

|

0ae53e415c | ||

|

|

915c200c7b | ||

|

|

a4941bd764 | ||

|

|

5123cbae00 | ||

|

|

135f96d507 | ||

|

|

aa08ea9160 | ||

|

|

fb80eea144 | ||

|

|

bebcf1c7d4 | ||

|

|

f39f0ef101 | ||

|

|

f2f4c8397d | ||

|

|

ae4613fa76 | ||

|

|

8b1155123d | ||

|

|

e65dfbb659 | ||

|

|

fe37bdd9c7 | ||

|

|

f449ee1878 | ||

|

|

47b6807471 | ||

|

|

f93708e90f | ||

|

|

5285b76746 | ||

|

|

1a4d8b965a | ||

|

|

11209fe05d | ||

|

|

09c1eec8f3 | ||

|

|

d3373447c3 | ||

|

|

d4e54fe966 | ||

|

|

a5c284cabb | ||

|

|

80bae41df4 | ||

|

|

f5c267144e | ||

|

|

25a33fe58f | ||

|

|

bab12dc99b | ||

|

|

1abb1f16d4 | ||

|

|

7cf843d6f4 | ||

|

|

a8444a6328 | ||

|

|

ca044d3577 | ||

|

|

76bac5d971 | ||

|

|

f68f291b3d | ||

|

|

b108672fad | ||

|

|

377a8f48e2 | ||

|

|

a098d04d64 | ||

|

|

5e4b70bd51 | ||

|

|

9ce931abb4 | ||

|

|

072d9b9850 | ||

|

|

1bb4afaeac | ||

|

|

4dd6102a0f | ||

|

|

4f64377480 | ||

|

|

31856a2f46 | ||

|

|

358391bfde | ||

|

|

7b2c005d9b | ||

|

|

c31ef8a788 | ||

|

|

e1bd004683 | ||

|

|

0cecab530f | ||

|

|

844090f842 | ||

|

|

aa48ad45b7 | ||

|

|

1967e4857b | ||

|

|

21923d6f87 | ||

|

|

a5912ccd89 | ||

|

|

e4252d8cbd | ||

|

|

b01e4cf9ec | ||

|

|

703cfd50b2 | ||

|

|

6a1b765a77 | ||

|

|

b2dc762937 | ||

|

|

498f065dea | ||

|

|

9d8941176b | ||

|

|

4d2a03c0b2 | ||

|

|

e0e2d5c0e6 | ||

|

|

9b97bff7b1 | ||

|

|

f23be1d0ec | ||

|

|

fa595e160c | ||

|

|

4ea5a48f43 | ||

|

|

6dd8a755c8 | ||

|

|

063d38dbd2 | ||

|

|

165c953239 | ||

|

|

a0fae153cf | ||

|

|

bfcf288561 | ||

|

|

560f884cc0 | ||

|

|

d79898848e | ||

|

|

c03d138cd0 | ||

|

|

22d192e7e3 | ||

|

|

a4babd6fc4 | ||

|

|

edd5515bd7 | ||

|

|

00dde2358a | ||

|

|

8e84262a32 | ||

|

|

541696f3f7 | ||

|

|

8051d03f08 | ||

|

|

a78d273aeb | ||

|

|

07bd3563cd | ||

|

|

8c690d1b21 | ||

|

|

a8b4e9cc6d | ||

|

|

30ed9fb75c | ||

|

|

0382d9c1ca | ||

|

|

95381e1892 | ||

|

|

7df1beef85 | ||

|

|

a1e519b352 | ||

|

|

e7f16a8c06 | ||

|

|

a3adae4af0 | ||

|

|

c7c0c76bd3 | ||

|

|

67cc965d31 | ||

|

|

d09969e3b4 | ||

|

|

41904b42f8 | ||

|

|

f638410782 | ||

|

|

48cc7995d7 | ||

|

|

793b93c665 | ||

|

|

e0186cbe2a | ||

|

|

2cc2b5dce8 | ||

|

|

ccdbbdb0ec | ||

|

|

13483321ac | ||

|

|

5547533197 | ||

|

|

c68998d75e | ||

|

|

20f2d3f2f9 | ||

|

|

cc7b35b44a | ||

|

|

67a2cd6a48 | ||

|

|

08deddc4fe | ||

|

|

77b2eb36a5 | ||

|

|

ab84ac207a | ||

|

|

8957d91e01 | ||

|

|

c7cbb729b7 | ||

|

|

eca6fa7958 | ||

|

|

ee535afcb9 | ||

|

|

18b64910d7 | ||

|

|

3ca75140d0 | ||

|

|

960f924448 | ||

|

|

eed128a8b4 |

3

.clomonitor.yml

Normal file

@@ -0,0 +1,3 @@

|

||||

exemptions:

|

||||

- check: analytics

|

||||

reason: "We don't track people"

|

||||

@@ -1,50 +0,0 @@

|

||||

# Flagger signed releases

|

||||

|

||||

Flagger releases published to GitHub Container Registry as multi-arch container images

|

||||

are signed using [cosign](https://github.com/sigstore/cosign).

|

||||

|

||||

## Verify Flagger images

|

||||

|

||||

Install the [cosign](https://github.com/sigstore/cosign) CLI:

|

||||

|

||||

```sh

|

||||

brew install sigstore/tap/cosign

|

||||

```

|

||||

|

||||

Verify a Flagger release with cosign CLI:

|

||||

|

||||

```sh

|

||||

cosign verify -key https://raw.githubusercontent.com/fluxcd/flagger/main/cosign/cosign.pub \

|

||||

ghcr.io/fluxcd/flagger:1.13.0

|

||||

```

|

||||

|

||||

Verify Flagger images before they get pulled on your Kubernetes clusters with [Kyverno](https://github.com/kyverno/kyverno/):

|

||||

|

||||

```yaml

|

||||

apiVersion: kyverno.io/v1

|

||||

kind: ClusterPolicy

|

||||

metadata:

|

||||

name: verify-flagger-image

|

||||

annotations:

|

||||

policies.kyverno.io/title: Verify Flagger Image

|

||||

policies.kyverno.io/category: Cosign

|

||||

policies.kyverno.io/severity: medium

|

||||

policies.kyverno.io/subject: Pod

|

||||

policies.kyverno.io/minversion: 1.4.2

|

||||

spec:

|

||||

validationFailureAction: enforce

|

||||

background: false

|

||||

rules:

|

||||

- name: verify-image

|

||||

match:

|

||||

resources:

|

||||

kinds:

|

||||

- Pod

|

||||

verifyImages:

|

||||

- image: "ghcr.io/fluxcd/flagger:*"

|

||||

key: |-

|

||||

-----BEGIN PUBLIC KEY-----

|

||||

MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEST+BqQ1XZhhVYx0YWQjdUJYIG5Lt

|

||||

iz2+UxRIqmKBqNmce2T+l45qyqOs99qfD7gLNGmkVZ4vtJ9bM7FxChFczg==

|

||||

-----END PUBLIC KEY-----

|

||||

```

|

||||

@@ -1,11 +0,0 @@

|

||||

-----BEGIN ENCRYPTED COSIGN PRIVATE KEY-----

|

||||

eyJrZGYiOnsibmFtZSI6InNjcnlwdCIsInBhcmFtcyI6eyJOIjozMjc2OCwiciI6

|

||||

OCwicCI6MX0sInNhbHQiOiIvK1MwbTNrU3pGMFFXdVVYQkFoY2gvTDc3NVJBSy9O

|

||||

cnkzUC9iMkxBZGF3PSJ9LCJjaXBoZXIiOnsibmFtZSI6Im5hY2wvc2VjcmV0Ym94

|

||||

Iiwibm9uY2UiOiJBNEFYL2IyU1BsMDBuY3JUNk45QkNOb0VLZTZLZEluRCJ9LCJj

|

||||

aXBoZXJ0ZXh0IjoiZ054UlJweXpraWtRMUVaRldsSnEvQXVUWTl0Vis2enBlWkIy

|

||||

dUFHREMzOVhUQlAwaWY5YStaZTE1V0NTT2FQZ01XQmtSZWhrQVVjQ3dZOGF2WTZa

|

||||

eFhZWWE3T1B4eFdidHJuSUVZM2hwZUk1M1dVQVZ6SXEzQjl0N0ZmV1JlVGsxdFlo

|

||||

b3hwQmxUSHY4U0c2azdPYk1aQnJleitzSGRWclF6YUdMdG12V1FOMTNZazRNb25i

|

||||

ZUpRSUJpUXFQTFg5NzFhSUlxU0dxYVhCanc9PSJ9

|

||||

-----END ENCRYPTED COSIGN PRIVATE KEY-----

|

||||

@@ -1,4 +0,0 @@

|

||||

-----BEGIN PUBLIC KEY-----

|

||||

MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEST+BqQ1XZhhVYx0YWQjdUJYIG5Lt

|

||||

iz2+UxRIqmKBqNmce2T+l45qyqOs99qfD7gLNGmkVZ4vtJ9bM7FxChFczg==

|

||||

-----END PUBLIC KEY-----

|

||||

@@ -16,3 +16,4 @@ redirects:

|

||||

usage/osm-progressive-delivery: tutorials/osm-progressive-delivery.md

|

||||

usage/kuma-progressive-delivery: tutorials/kuma-progressive-delivery.md

|

||||

usage/gatewayapi-progressive-delivery: tutorials/gatewayapi-progressive-delivery.md

|

||||

usage/apisix-progressive-delivery: tutorials/apisix-progressive-delivery.md

|

||||

|

||||

7

.github/dependabot.yml

vendored

Normal file

@@ -0,0 +1,7 @@

|

||||

version: 2

|

||||

|

||||

updates:

|

||||

- package-ecosystem: "github-actions"

|

||||

directory: "/"

|

||||

schedule:

|

||||

interval: "weekly"

|

||||

16

.github/workflows/build.yaml

vendored

@@ -10,31 +10,33 @@ on:

|

||||

- main

|

||||

|

||||

permissions:

|

||||

contents: read # for actions/checkout to fetch code

|

||||

contents: read

|

||||

|

||||

jobs:

|

||||

container:

|

||||

build-flagger:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v2

|

||||

uses: actions/checkout@v3

|

||||

- name: Restore Go cache

|

||||

uses: actions/cache@v1

|

||||

uses: actions/cache@v3.3.1

|

||||

with:

|

||||

path: ~/go/pkg/mod

|

||||

key: ${{ runner.os }}-go-${{ hashFiles('**/go.sum') }}

|

||||

restore-keys: |

|

||||

${{ runner.os }}-go-

|

||||

- name: Setup Go

|

||||

uses: actions/setup-go@v2

|

||||

uses: actions/setup-go@v4

|

||||

with:

|

||||

go-version: 1.17.x

|

||||

go-version: 1.19.x

|

||||

- name: Download modules

|

||||

run: |

|

||||

go mod download

|

||||

go install golang.org/x/tools/cmd/goimports

|

||||

- name: Run linters

|

||||

run: make test-fmt test-codegen

|

||||

- name: Verify CRDs

|

||||

run: make verify-crd

|

||||

- name: Run tests

|

||||

run: go test -race -coverprofile=coverage.txt -covermode=atomic $(go list ./pkg/...)

|

||||

- name: Check if working tree is dirty

|

||||

@@ -45,7 +47,7 @@ jobs:

|

||||

exit 1

|

||||

fi

|

||||

- name: Upload coverage to Codecov

|

||||

uses: codecov/codecov-action@v1

|

||||

uses: codecov/codecov-action@v3

|

||||

with:

|

||||

file: ./coverage.txt

|

||||

- name: Build container image

|

||||

|

||||

16

.github/workflows/e2e.yaml

vendored

@@ -10,10 +10,10 @@ on:

|

||||

- main

|

||||

|

||||

permissions:

|

||||

contents: read # for actions/checkout to fetch code

|

||||

contents: read

|

||||

|

||||

jobs:

|

||||

kind:

|

||||

e2e-test:

|

||||

runs-on: ubuntu-latest

|

||||

strategy:

|

||||

fail-fast: false

|

||||

@@ -22,7 +22,6 @@ jobs:

|

||||

# service mesh

|

||||

- istio

|

||||

- linkerd

|

||||

- osm

|

||||

- kuma

|

||||

# ingress controllers

|

||||

- contour

|

||||

@@ -32,14 +31,17 @@ jobs:

|

||||

- skipper

|

||||

- kubernetes

|

||||

- gatewayapi

|

||||

- keda

|

||||

- apisix

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v2

|

||||

uses: actions/checkout@v3

|

||||

- name: Setup Kubernetes

|

||||

uses: engineerd/setup-kind@v0.5.0

|

||||

uses: helm/kind-action@v1.5.0

|

||||

with:

|

||||

version: "v0.11.1"

|

||||

image: kindest/node:v1.21.1@sha256:fae9a58f17f18f06aeac9772ca8b5ac680ebbed985e266f711d936e91d113bad

|

||||

version: v0.18.0

|

||||

cluster_name: kind

|

||||

node_image: kindest/node:v1.24.12@sha256:0bdca26bd7fe65c823640b14253ea7bac4baad9336b332c94850f84d8102f873

|

||||

- name: Build container image

|

||||

run: |

|

||||

docker build -t test/flagger:latest .

|

||||

|

||||

10

.github/workflows/helm.yaml

vendored

@@ -4,15 +4,17 @@ on:

|

||||

workflow_dispatch:

|

||||

|

||||

permissions:

|

||||

contents: write # needed to push chart

|

||||

contents: read

|

||||

|

||||

jobs:

|

||||

build-push:

|

||||

release-charts:

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

contents: write

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

- uses: actions/checkout@v3

|

||||

- name: Publish Helm charts

|

||||

uses: stefanprodan/helm-gh-pages@v1.3.0

|

||||

uses: stefanprodan/helm-gh-pages@v1.7.0

|

||||

with:

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

charts_url: https://flagger.app

|

||||

|

||||

31

.github/workflows/push-ld.yml

vendored

@@ -6,41 +6,44 @@ env:

|

||||

IMAGE: "ghcr.io/fluxcd/flagger-loadtester"

|

||||

|

||||

permissions:

|

||||

contents: write # needed to write releases

|

||||

packages: write # needed for ghcr access

|

||||

contents: read

|

||||

|

||||

jobs:

|

||||

build-push:

|

||||

release-load-tester:

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

id-token: write

|

||||

packages: write

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

- uses: actions/checkout@v3

|

||||

- uses: sigstore/cosign-installer@v2.8.1

|

||||

- name: Prepare

|

||||

id: prep

|

||||

run: |

|

||||

VERSION=$(grep 'VERSION' cmd/loadtester/main.go | head -1 | awk '{ print $4 }' | tr -d '"')

|

||||

echo ::set-output name=BUILD_DATE::$(date -u +'%Y-%m-%dT%H:%M:%SZ')

|

||||

echo ::set-output name=VERSION::${VERSION}

|

||||

echo "BUILD_DATE=$(date -u +'%Y-%m-%dT%H:%M:%SZ')" >> $GITHUB_OUTPUT

|

||||

echo "VERSION=${VERSION}" >> $GITHUB_OUTPUT

|

||||

- name: Setup QEMU

|

||||

uses: docker/setup-qemu-action@v1

|

||||

uses: docker/setup-qemu-action@v2

|

||||

- name: Setup Docker Buildx

|

||||

id: buildx

|

||||

uses: docker/setup-buildx-action@v1

|

||||

uses: docker/setup-buildx-action@v2

|

||||

- name: Login to GitHub Container Registry

|

||||

uses: docker/login-action@v1

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: fluxcdbot

|

||||

password: ${{ secrets.GHCR_TOKEN }}

|

||||

- name: Generate image meta

|

||||

id: meta

|

||||

uses: docker/metadata-action@v3

|

||||

uses: docker/metadata-action@v4

|

||||

with:

|

||||

images: |

|

||||

${{ env.IMAGE }}

|

||||

tags: |

|

||||

type=raw,value=${{ steps.prep.outputs.VERSION }}

|

||||

- name: Publish image

|

||||

uses: docker/build-push-action@v2

|

||||

uses: docker/build-push-action@v4

|

||||

with:

|

||||

push: true

|

||||

builder: ${{ steps.buildx.outputs.name }}

|

||||

@@ -51,6 +54,8 @@ jobs:

|

||||

REVISION=${{ github.sha }}

|

||||

tags: ${{ steps.meta.outputs.tags }}

|

||||

labels: ${{ steps.meta.outputs.labels }}

|

||||

- name: Check images

|

||||

- name: Sign image

|

||||

env:

|

||||

COSIGN_EXPERIMENTAL: 1

|

||||

run: |

|

||||

docker buildx imagetools inspect ${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||

cosign sign ${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||

|

||||
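Note on the `Prepare` step above: the diff swaps the `::set-output` workflow command for `key=value` lines appended to the file named by `$GITHUB_OUTPUT`. The following is a minimal local sketch of that mechanism, not the workflow itself. It assumes a temporary file in place of the runner-provided one, a hard-coded `VERSION`, and a hypothetical `read_output` helper for reading a value back.

```shell
#!/bin/sh
# Stand-in for the file the Actions runner exposes through $GITHUB_OUTPUT
# (assumption: on a real runner the variable is already set for you).
GITHUB_OUTPUT="$(mktemp)"

# Hypothetical value; the workflow extracts it from cmd/loadtester/main.go.
VERSION="0.28.0"

# Each step output is one key=value line appended to the file.
echo "BUILD_DATE=$(date -u +'%Y-%m-%dT%H:%M:%SZ')" >> "$GITHUB_OUTPUT"
echo "VERSION=${VERSION}" >> "$GITHUB_OUTPUT"

# Hypothetical helper: return the last value written for a key, roughly
# what an expression such as steps.prep.outputs.VERSION would resolve to.
read_output() {
  grep "^$1=" "$GITHUB_OUTPUT" | tail -n 1 | cut -d= -f2-
}

read_output VERSION
```

Quoting `"$GITHUB_OUTPUT"` is a small hardening over the unquoted form in the diff; it only matters if the path contains spaces.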

84

.github/workflows/release.yml

vendored

@@ -3,51 +3,66 @@ on:

|

||||

push:

|

||||

tags:

|

||||

- 'v*'

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

tag:

|

||||

description: 'image tag prefix'

|

||||

default: 'rc'

|

||||

required: true

|

||||

|

||||

permissions:

|

||||

contents: write # needed to write releases

|

||||

id-token: write # needed for keyless signing

|

||||

packages: write # needed for ghcr access

|

||||

contents: read

|

||||

|

||||

env:

|

||||

IMAGE: "ghcr.io/fluxcd/${{ github.event.repository.name }}"

|

||||

|

||||

jobs:

|

||||

build-push:

|

||||

release-flagger:

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

contents: write # needed to write releases

|

||||

id-token: write # needed for keyless signing

|

||||

packages: write # needed for ghcr access

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

- uses: sigstore/cosign-installer@main

|

||||

- uses: actions/checkout@v3

|

||||

- uses: fluxcd/flux2/action@main

|

||||

- uses: sigstore/cosign-installer@v2.8.1

|

||||

- name: Prepare

|

||||

id: prep

|

||||

run: |

|

||||

VERSION=$(grep 'VERSION' pkg/version/version.go | awk '{ print $4 }' | tr -d '"')

|

||||

if [[ ${GITHUB_EVENT_NAME} = "workflow_dispatch" ]]; then

|

||||

VERSION="${{ github.event.inputs.tag }}-${GITHUB_SHA::8}"

|

||||

else

|

||||

VERSION=$(grep 'VERSION' pkg/version/version.go | awk '{ print $4 }' | tr -d '"')

|

||||

fi

|

||||

CHANGELOG="https://github.com/fluxcd/flagger/blob/main/CHANGELOG.md#$(echo $VERSION | tr -d '.')"

|

||||

echo "[CHANGELOG](${CHANGELOG})" > notes.md

|

||||

echo ::set-output name=BUILD_DATE::$(date -u +'%Y-%m-%dT%H:%M:%SZ')

|

||||

echo ::set-output name=VERSION::${VERSION}

|

||||

echo "BUILD_DATE=$(date -u +'%Y-%m-%dT%H:%M:%SZ')" >> $GITHUB_OUTPUT

|

||||

echo "VERSION=${VERSION}" >> $GITHUB_OUTPUT

|

||||

- name: Setup QEMU

|

||||

uses: docker/setup-qemu-action@v1

|

||||

uses: docker/setup-qemu-action@v2

|

||||

- name: Setup Docker Buildx

|

||||

id: buildx

|

||||

uses: docker/setup-buildx-action@v1

|

||||

uses: docker/setup-buildx-action@v2

|

||||

- name: Login to GitHub Container Registry

|

||||

uses: docker/login-action@v1

|

||||

uses: docker/login-action@v2

|

||||

with:

|

||||

registry: ghcr.io

|

||||

username: fluxcdbot

|

||||

password: ${{ secrets.GHCR_TOKEN }}

|

||||

- name: Generate image meta

|

||||

id: meta

|

||||

uses: docker/metadata-action@v3

|

||||

uses: docker/metadata-action@v4

|

||||

with:

|

||||

images: |

|

||||

${{ env.IMAGE }}

|

||||

tags: |

|

||||

type=raw,value=${{ steps.prep.outputs.VERSION }}

|

||||

- name: Publish image

|

||||

uses: docker/build-push-action@v2

|

||||

uses: docker/build-push-action@v4

|

||||

with:

|

||||

sbom: true

|

||||

provenance: true

|

||||

push: true

|

||||

builder: ${{ steps.buildx.outputs.name }}

|

||||

context: .

|

||||

@@ -58,26 +73,43 @@ jobs:

|

||||

tags: ${{ steps.meta.outputs.tags }}

|

||||

labels: ${{ steps.meta.outputs.labels }}

|

||||

- name: Sign image

|

||||

env:

|

||||

COSIGN_EXPERIMENTAL: 1

|

||||

run: |

|

||||

echo -n "${{secrets.COSIGN_PASSWORD}}" | \

|

||||

cosign sign -key ./.cosign/cosign.key -a git_sha=$GITHUB_SHA \

|

||||

${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||

- name: Check images

|

||||

run: |

|

||||

docker buildx imagetools inspect ${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||

- name: Verifiy image signature

|

||||

run: |

|

||||

cosign verify -key ./.cosign/cosign.pub \

|

||||

${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||

cosign sign ${{ env.IMAGE }}:${{ steps.prep.outputs.VERSION }}

|

||||

- name: Publish Helm charts

|

||||

uses: stefanprodan/helm-gh-pages@v1.3.0

|

||||

if: startsWith(github.ref, 'refs/tags/v')

|

||||

uses: stefanprodan/helm-gh-pages@v1.7.0

|

||||

with:

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

charts_url: https://flagger.app

|

||||

linting: off

|

||||

- uses: fluxcd/pkg/actions/helm@main

|

||||

with:

|

||||

version: 3.10.1

|

||||

- name: Publish signed Helm chart to GHCR

|

||||

if: startsWith(github.ref, 'refs/tags/v')

|

||||

env:

|

||||

COSIGN_EXPERIMENTAL: 1

|

||||

run: |

|

||||

helm package charts/flagger

|

||||

helm push flagger-${{ steps.prep.outputs.VERSION }}.tgz oci://ghcr.io/fluxcd/charts

|

||||

cosign sign ghcr.io/fluxcd/charts/flagger:${{ steps.prep.outputs.VERSION }}

|

||||

rm flagger-${{ steps.prep.outputs.VERSION }}.tgz

|

||||

- name: Publish signed manifests to GHCR

|

||||

if: startsWith(github.ref, 'refs/tags/v')

|

||||

env:

|

||||

COSIGN_EXPERIMENTAL: 1

|

||||

run: |

|

||||

flux push artifact oci://ghcr.io/fluxcd/flagger-manifests:${{ steps.prep.outputs.VERSION }} \

|

||||

--path="./kustomize" \

|

||||

--source="$(git config --get remote.origin.url)" \

|

||||

--revision="${{ steps.prep.outputs.VERSION }}/$(git rev-parse HEAD)"

|

||||

cosign sign ghcr.io/fluxcd/flagger-manifests:${{ steps.prep.outputs.VERSION }}

|

||||

- uses: anchore/sbom-action/download-syft@v0

|

||||

- name: Create release and SBOM

|

||||

uses: goreleaser/goreleaser-action@v2

|

||||

uses: goreleaser/goreleaser-action@v4

|

||||

if: startsWith(github.ref, 'refs/tags/v')

|

||||

with:

|

||||

version: latest

|

||||

args: release --release-notes=notes.md --rm-dist --skip-validate

|

||||

|

||||
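Note on the `Prepare` step in this workflow: after the change, a manual `workflow_dispatch` run tags the image as `<prefix>-<first 8 characters of the commit SHA>`, while a tag push still reads the version from `pkg/version/version.go`. The sketch below restates that branch as a POSIX shell function so it can be tried locally. The function name `resolve_version`, its argument order, and the one-line stand-in for the version file are assumptions made for illustration.

```shell
#!/bin/sh
# resolve_version EVENT_NAME TAG_PREFIX GIT_SHA VERSION_FILE
# (hypothetical helper mirroring the if/else in the Prepare step)
resolve_version() {
  if [ "$1" = "workflow_dispatch" ]; then
    # Manual runs: "<prefix>-<first 8 characters of the commit SHA>".
    printf '%s-%s\n' "$2" "$(printf '%s' "$3" | cut -c1-8)"
  else
    # Tag pushes: fourth whitespace-separated field of the VERSION line,
    # with the surrounding double quotes removed.
    grep 'VERSION' "$4" | awk '{ print $4 }' | tr -d '"'
  fi
}

# Assumed layout of the VERSION line in pkg/version/version.go.
version_file="$(mktemp)"
printf 'var VERSION = "1.31.0"\n' > "$version_file"

resolve_version workflow_dispatch rc 0123456789abcdef "$version_file"
resolve_version push "" 0123456789abcdef "$version_file"
```

`cut -c1-8` is used instead of the bash-only `${GITHUB_SHA::8}` expansion so that the sketch also runs under a plain `sh`.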

26

.github/workflows/scan.yml

vendored

@@ -9,33 +9,33 @@ on:

|

||||

- cron: '18 10 * * 3'

|

||||

|

||||

permissions:

|

||||

contents: read # for actions/checkout to fetch code

|

||||

security-events: write # for codeQL to write security events

|

||||

contents: read

|

||||

|

||||

jobs:

|

||||

fossa:

|

||||

name: FOSSA

|

||||

scan-fossa:

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

security-events: write

|

||||

steps:

|

||||

- uses: actions/checkout@v2

|

||||

- uses: actions/checkout@v3

|

||||

- name: Run FOSSA scan and upload build data

|

||||

uses: fossa-contrib/fossa-action@v1

|

||||

uses: fossa-contrib/fossa-action@v2

|

||||

with:

|

||||

# FOSSA Push-Only API Token

|

||||

fossa-api-key: 5ee8bf422db1471e0bcf2bcb289185de

|

||||

github-token: ${{ github.token }}

|

||||

|

||||

codeql:

|

||||

name: CodeQL

|

||||

scan-codeql:

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

security-events: write

|

||||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v2

|

||||

uses: actions/checkout@v3

|

||||

- name: Initialize CodeQL

|

||||

uses: github/codeql-action/init@v1

|

||||

uses: github/codeql-action/init@v2

|

||||

with:

|

||||

languages: go

|

||||

- name: Autobuild

|

||||

uses: github/codeql-action/autobuild@v1

|

||||

uses: github/codeql-action/autobuild@v2

|

||||

- name: Perform CodeQL Analysis

|

||||

uses: github/codeql-action/analyze@v1

|

||||

uses: github/codeql-action/analyze@v2

|

||||

|

||||

@@ -15,3 +15,16 @@ sboms:

|

||||

artifacts: source

|

||||

documents:

|

||||

- "{{ .ProjectName }}_{{ .Version }}_sbom.spdx.json"

|

||||

|

||||

signs:

|

||||

- cmd: cosign

|

||||

env:

|

||||

- COSIGN_EXPERIMENTAL=1

|

||||

certificate: '${artifact}.pem'

|

||||

args:

|

||||

- sign-blob

|

||||

- '--output-certificate=${certificate}'

|

||||

- '--output-signature=${signature}'

|

||||

- '${artifact}'

|

||||

artifacts: checksum

|

||||

output: true

|

||||

|

||||

482

CHANGELOG.md

@@ -2,6 +2,488 @@

|

||||

|

||||

All notable changes to this project are documented in this file.

|

||||

|

||||

## 1.31.0

|

||||

|

||||

**Release date:** 2023-05-10

|

||||

|

||||

⚠️ __Breaking Changes__

|

||||

|

||||

This release adds support for Linkerd 2.12 and later. Due to changes in Linkerd

|

||||

the default namespace for Flagger's installation had to be changed from

|

||||

`linkerd` to `flagger-system` and the `flagger` Deployment is now injected with

|

||||

the Linkerd proxy. Furthermore, installing Flagger for Linkerd will result in

|

||||

the creation of an `AuthorizationPolicy` that allows access to the Prometheus

|

||||

instance in the `linkerd-viz` namespace. To upgrade your Flagger installation,

|

||||

please see the below migration guide.

|

||||

|

||||

If you use Kustomize, then follow these steps:

|

||||

* `kubectl delete -n linkerd deploy/flagger`

|

||||

* `kubectl delete -n linkerd serviceaccount flagger`

|

||||

* If you're on Linkerd >= 2.12, you'll need to install the SMI extension to enable

|

||||

support for `TrafficSplit`s:

|

||||

```bash

|

||||

curl -sL https://linkerd.github.io/linkerd-smi/install | sh

|

||||

linkerd smi install | kubectl apply -f -

|

||||

```

|

||||

* `kubectl apply -k github.com/fluxcd/flagger//kustomize/linkerd`

|

||||

|

||||

Note: If you're on Linkerd < 2.12, this will report an error about missing CRDs.

|

||||

It is safe to ignore this error.

|

||||

|

||||

If you use Helm and are on Linkerd < 2.12, then you can use `helm upgrade` to do

|

||||

a regular upgrade.

|

||||

|

||||

If you use Helm and are on Linkerd >= 2.12, then follow these steps:

|

||||

* `helm uninstall flagger -n linkerd`

|

||||

* Install the Linkerd SMI extension:

|

||||

```bash

|

||||

helm repo add l5d-smi https://linkerd.github.io/linkerd-smi

|

||||

helm install linkerd-smi l5d-smi/linkerd-smi -n linkerd-smi --create-namespace

|

||||

```

|

||||

* Install Flagger in the `flagger-system` namespace

|

||||

and create an `AuthorizationPolicy`:

|

||||

```bash

|

||||

helm repo update flagger

|

||||

helm install flagger flagger/flagger \

|

||||

--namespace flagger-system \

|

||||

--set meshProvider=linkerd \

|

||||

--set metricsServer=http://prometheus.linkerd-viz:9090 \

|

||||

--set linkerdAuthPolicy.create=true

|

||||

```
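For Helm users, the choice between the two upgrade paths above depends only on whether the installed Linkerd is 2.12 or later. A minimal sketch of that check follows. The function name `linkerd_at_least_2_12` and the hard-coded version string are hypothetical, and only plain `MAJOR.MINOR[.PATCH]` versions with an optional `stable-` prefix are handled.

```shell
#!/bin/sh
# linkerd_at_least_2_12 VERSION
# Succeeds when VERSION (for example "2.13.4" or "stable-2.12.0") is 2.12 or later.
linkerd_at_least_2_12() {
  ver="${1#stable-}"     # drop an optional "stable-" prefix
  major="${ver%%.*}"     # text before the first dot
  rest="${ver#*.}"       # text after the first dot
  minor="${rest%%.*}"    # text before the next dot, if any
  [ "$major" -gt 2 ] || { [ "$major" -eq 2 ] && [ "$minor" -ge 12 ]; }
}

# Hypothetical input; in practice the version comes from your Linkerd installation.
if linkerd_at_least_2_12 "2.13.4"; then
  echo "uninstall Flagger from linkerd, install the SMI extension, reinstall in flagger-system"
else
  echo "a regular helm upgrade is enough"
fi
```

Edge-channel version strings are not parsed by this sketch and would need their own handling.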

|

||||

|

||||

Furthermore, a bug which led the `confirm-rollout` webhook to be executed at

|

||||

every step of the Canary instead of only being executed before the canary

|

||||

Deployment is scaled up, has been fixed.

|

||||

|

||||

#### Improvements

|

||||

|

||||

- Add support for Linkerd 2.13

|

||||

[#1417](https://github.com/fluxcd/flagger/pull/1417)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Fix the loadtester install with flux documentation

|

||||

[#1384](https://github.com/fluxcd/flagger/pull/1384)

|

||||

- Run `confirm-rollout` checks only before scaling up deployment

|

||||

[#1414](https://github.com/fluxcd/flagger/pull/1414)

|

||||

- e2e: Remove OSM tests

|

||||

[#1423](https://github.com/fluxcd/flagger/pull/1423)

|

||||

|

||||

## 1.30.0

|

||||

|

||||

**Release date:** 2023-04-12

|

||||

|

||||

This release fixes a bug related to the lack of updates to the generated

|

||||

object's metadata according to the metadata specified in `spec.service.apex`.

|

||||

Furthermore, a bug where labels were wrongfully copied over from the canary

|

||||

deployment to primary deployment when no value was provided for

|

||||

`--include-label-prefix` has been fixed.

|

||||

This release also makes Flagger compatible with Flux's helm-controller drift

|

||||

detection.

|

||||

|

||||

#### Improvements

|

||||

|

||||

- build(deps): bump actions/cache from 3.2.5 to 3.3.1

|

||||

[#1385](https://github.com/fluxcd/flagger/pull/1385)

|

||||

- helm: Added the option to supply additional volumes

|

||||

[#1393](https://github.com/fluxcd/flagger/pull/1393)

|

||||

- build(deps): bump actions/setup-go from 3 to 4

|

||||

[#1394](https://github.com/fluxcd/flagger/pull/1394)

|

||||

- update Kuma version and docs

|

||||

[#1402](https://github.com/fluxcd/flagger/pull/1402)

|

||||

- ci: bump k8s to 1.24 and kind to 1.18

|

||||

[#1406](https://github.com/fluxcd/flagger/pull/1406)

|

||||

- Helm: Allow configuring deployment `annotations`

|

||||

[#1411](https://github.com/fluxcd/flagger/pull/1411)

|

||||

- update dependencies

|

||||

[#1412](https://github.com/fluxcd/flagger/pull/1412)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Enable updates for labels and annotations

|

||||

[#1392](https://github.com/fluxcd/flagger/pull/1392)

|

||||

- Update flagger-install-with-flux.md

|

||||

[#1398](https://github.com/fluxcd/flagger/pull/1398)

|

||||

- avoid copying canary labels to primary on promotion

|

||||

[#1405](https://github.com/fluxcd/flagger/pull/1405)

|

||||

- Disable Flux helm drift detection for managed resources

|

||||

[#1408](https://github.com/fluxcd/flagger/pull/1408)

|

||||

|

||||

## 1.29.0

|

||||

|

||||

**Release date:** 2023-02-21

|

||||

|

||||

This release comes with support for template variables for analysis metrics.

|

||||

A canary analysis metric can reference a set of custom variables with

|

||||

`.spec.analysis.metrics[].templateVariables`. For more info see the [docs](https://fluxcd.io/flagger/usage/metrics/#custom-metrics).

|

||||

Furthermore, a bug related to Canary releases with session affinity has been

|

||||

fixed.

|

||||

|

||||

#### Improvements

- update dependencies
  [#1374](https://github.com/fluxcd/flagger/pull/1374)
- build(deps): bump golang.org/x/net from 0.4.0 to 0.7.0
  [#1373](https://github.com/fluxcd/flagger/pull/1373)
- build(deps): bump fossa-contrib/fossa-action from 1 to 2
  [#1372](https://github.com/fluxcd/flagger/pull/1372)
- Allow custom affinities for flagger deployment in helm chart
  [#1371](https://github.com/fluxcd/flagger/pull/1371)
- Add namespace to namespaced resources in helm chart
  [#1370](https://github.com/fluxcd/flagger/pull/1370)
- build(deps): bump actions/cache from 3.2.4 to 3.2.5
  [#1366](https://github.com/fluxcd/flagger/pull/1366)
- build(deps): bump actions/cache from 3.2.3 to 3.2.4
  [#1362](https://github.com/fluxcd/flagger/pull/1362)
- build(deps): bump docker/build-push-action from 3 to 4
  [#1361](https://github.com/fluxcd/flagger/pull/1361)
- modify release workflow to publish rc images
  [#1359](https://github.com/fluxcd/flagger/pull/1359)
- build: Enable SBOM and SLSA Provenance
  [#1356](https://github.com/fluxcd/flagger/pull/1356)
- Add support for custom variables in metric templates
  [#1355](https://github.com/fluxcd/flagger/pull/1355)
- docs(readme.md): add additional tutorial
  [#1346](https://github.com/fluxcd/flagger/pull/1346)

#### Fixes

- use regex to match against headers in istio
  [#1364](https://github.com/fluxcd/flagger/pull/1364)

## 1.28.0

**Release date:** 2023-01-26

This release comes with support for setting a different autoscaling
configuration for the primary workload.
The `.spec.autoscalerRef.primaryScalerReplicas` field is useful in the
situation where the user does not want to scale the canary workload
to the exact same size as the primary, especially when opting for a
canary deployment pattern where only a small portion of traffic is
routed to the canary workload pods.
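
A minimal sketch of the override, assuming a Canary named `podinfo` that references an HPA of the same name; the names and replica counts are illustrative only.

```yaml
# Fragment of a Canary spec; the HPA name and replica counts are assumptions.
apiVersion: flagger.app/v1beta1
kind: Canary
metadata:
  name: podinfo
  namespace: test
spec:
  autoscalerRef:
    apiVersion: autoscaling/v2
    kind: HorizontalPodAutoscaler
    name: podinfo
    # Replica bounds applied to the primary's autoscaler only;
    # the canary keeps the bounds defined in the referenced HPA.
    primaryScalerReplicas:
      minReplicas: 2
      maxReplicas: 5
```

With a layout like this, the referenced HPA can keep small bounds for the canary while the primary, which serves most of the traffic, scales within its own range.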

#### Improvements

- Support for overriding primary scaler replicas
  [#1343](https://github.com/fluxcd/flagger/pull/1343)
- Allow access to Prometheus in OpenShift via SA token
  [#1338](https://github.com/fluxcd/flagger/pull/1338)
- Update Kubernetes packages to v1.26.1
  [#1352](https://github.com/fluxcd/flagger/pull/1352)

## 1.27.0

|

||||

|

||||

**Release date:** 2022-12-15

|

||||

|

||||

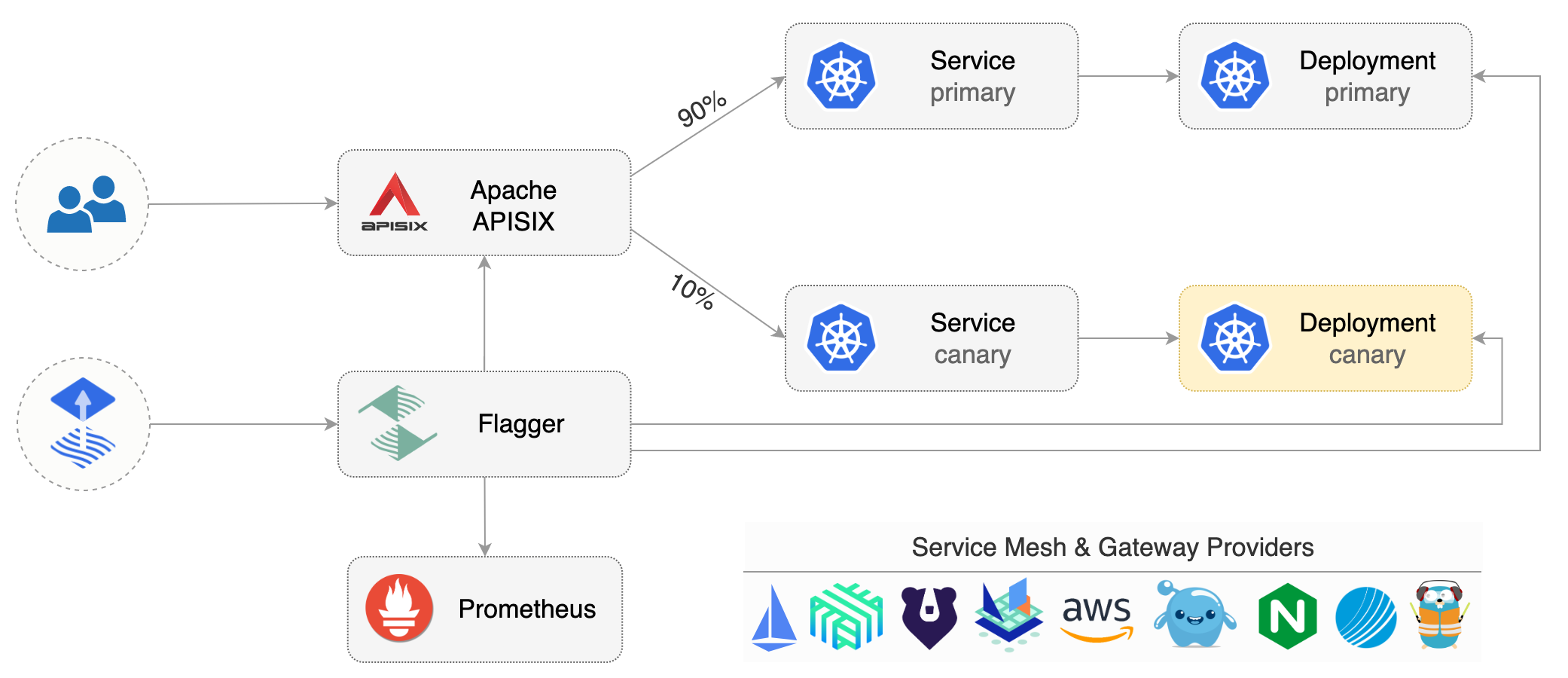

This release comes with support for Apachae APISIX. For more details see the

|

||||

[tutorial](https://fluxcd.io/flagger/tutorials/apisix-progressive-delivery).

|

||||

|

||||

#### Improvements

|

||||

|

||||

- [apisix] Implement router interface and observer interface

|

||||

[#1281](https://github.com/fluxcd/flagger/pull/1281)

|

||||

- Bump stefanprodan/helm-gh-pages from 1.6.0 to 1.7.0

|

||||

[#1326](https://github.com/fluxcd/flagger/pull/1326)

|

||||

- Release loadtester v0.28.0

|

||||

[#1328](https://github.com/fluxcd/flagger/pull/1328)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Update release docs

|

||||

[#1324](https://github.com/fluxcd/flagger/pull/1324)

|

||||

|

||||

## 1.26.0

|

||||

|

||||

**Release date:** 2022-11-23

|

||||

|

||||

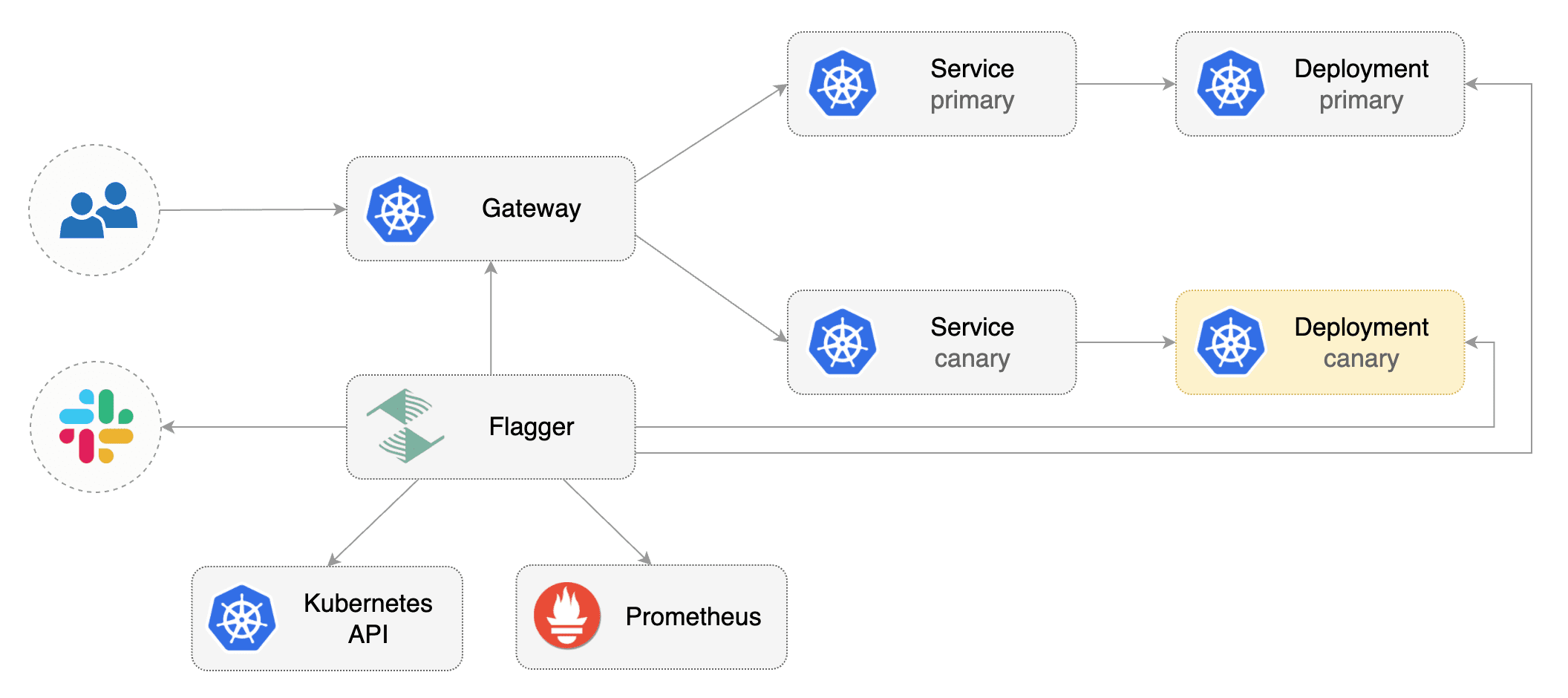

This release comes with support Kubernetes [Gateway API](https://gateway-api.sigs.k8s.io/) v1beta1.

|

||||

For more details see the [Gateway API Progressive Delivery tutorial](https://docs.flagger.app/tutorials/gatewayapi-progressive-delivery).

|

||||

|

||||

Please note that starting with this version, the Gateway API v1alpha2 is considered deprecated

|

||||

and will be removed from Flagger after 6 months.

|

||||

|

||||

#### Improvements:

|

||||

|

||||

- Updated Gateway API from v1alpha2 to v1beta1

|

||||

[#1319](https://github.com/fluxcd/flagger/pull/1319)

|

||||

- Updated Gateway API docs to v1beta1

|

||||

[#1321](https://github.com/fluxcd/flagger/pull/1321)

|

||||

- Update dependencies

|

||||

[#1322](https://github.com/fluxcd/flagger/pull/1322)

|

||||

|

||||

#### Fixes:

|

||||

|

||||

- docs: Add `linkerd install --crds` to Linkerd tutorial

|

||||

[#1316](https://github.com/fluxcd/flagger/pull/1316)

|

||||

|

||||

## 1.25.0

**Release date:** 2022-11-16

This release introduces a new deployment strategy combining Canary releases with session affinity
for Istio.

Furthermore, it contains a regression fix regarding metadata in alerts introduced in
[#1275](https://github.com/fluxcd/flagger/pull/1275)
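
A minimal sketch of a weighted canary with session affinity on Istio. The resource names, cookie name, cookie lifetime and step values are illustrative assumptions; the fragment omits the target and service sections a complete Canary needs.

```yaml
# Fragment of a Canary spec for Istio; cookie name, max age and
# step values are illustrative assumptions.
apiVersion: flagger.app/v1beta1
kind: Canary
metadata:
  name: podinfo
  namespace: test
spec:
  provider: istio
  analysis:
    interval: 1m
    threshold: 5
    maxWeight: 50
    stepWeight: 10
    # Users routed to the canary receive this cookie and keep
    # hitting the canary for the cookie's lifetime (in seconds).
    sessionAffinity:
      cookieName: flagger-cookie
      maxAge: 21600
```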

#### Improvements:

- Add support for session affinity during weighted routing with Istio
  [#1280](https://github.com/fluxcd/flagger/pull/1280)

#### Fixes:

- Fix cluster name inclusion in alerts metadata
  [#1306](https://github.com/fluxcd/flagger/pull/1306)
- fix(faq): Update FAQ about zero downtime with correct values
  [#1302](https://github.com/fluxcd/flagger/pull/1302)

## 1.24.1

**Release date:** 2022-10-26

This release comes with a fix to Gloo routing when a custom service name is used.

In addition, the Gloo ingress end-to-end testing was updated to Gloo Helm chart v1.12.31.

#### Fixes:

- fix(gloo): Use correct route table name in case service name was overwritten
  [#1300](https://github.com/fluxcd/flagger/pull/1300)

## 1.24.0

**Release date:** 2022-10-23

Starting with this version, the Flagger release artifacts are published to
GitHub Container Registry, and they are signed with Cosign and GitHub OIDC.

OCI artifacts:

- `ghcr.io/fluxcd/flagger:<version>` multi-arch container images
- `ghcr.io/fluxcd/flagger-manifests:<version>` Kubernetes manifests
- `ghcr.io/fluxcd/charts/flagger:<version>` Helm charts

To verify an OCI artifact with Cosign:

```shell
export COSIGN_EXPERIMENTAL=1
cosign verify ghcr.io/fluxcd/flagger:1.24.0
cosign verify ghcr.io/fluxcd/flagger-manifests:1.24.0
cosign verify ghcr.io/fluxcd/charts/flagger:1.24.0
```

To deploy Flagger from its OCI artifacts the GitOps way,
please see the [Flux installation guide](docs/gitbook/install/flagger-install-with-flux.md).
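
As a rough sketch of what such a GitOps setup could look like, the manifests below point a Flux `HelmRepository` of type `oci` at the chart registry listed above and install the chart with a `HelmRelease`. The namespace, intervals, version range and API versions are assumptions that depend on the Flux version in use; treat the linked installation guide as the authoritative source.

```yaml
# Assumed namespace for the Flagger installation.
apiVersion: v1
kind: Namespace
metadata:
  name: flagger-system
---
# OCI Helm repository pointing at the registry path of the Flagger chart.
apiVersion: source.toolkit.fluxcd.io/v1beta2
kind: HelmRepository
metadata:
  name: flagger
  namespace: flagger-system
spec:
  interval: 1h
  url: oci://ghcr.io/fluxcd/charts
  type: oci
---
apiVersion: helm.toolkit.fluxcd.io/v2beta1
kind: HelmRelease
metadata:
  name: flagger
  namespace: flagger-system
spec:
  interval: 1h
  releaseName: flagger
  chart:
    spec:
      chart: flagger
      version: 1.x        # assumed version range
      sourceRef:
        kind: HelmRepository
        name: flagger
      verify:
        provider: cosign  # check the chart signature before installing
```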

#### Improvements:

- docs: Add guide on how to install Flagger with Flux OCI
  [#1294](https://github.com/fluxcd/flagger/pull/1294)
- ci: Publish signed Helm charts and manifests to GHCR
  [#1293](https://github.com/fluxcd/flagger/pull/1293)
- ci: Sign release and containers with Cosign and GitHub OIDC
  [#1292](https://github.com/fluxcd/flagger/pull/1292)
- ci: Adjust GitHub workflow permissions
  [#1286](https://github.com/fluxcd/flagger/pull/1286)
- docs: Add link to Flux governance document
  [#1286](https://github.com/fluxcd/flagger/pull/1286)

## 1.23.0

**Release date:** 2022-10-20

This release comes with support for Slack bot token authentication.

#### Improvements:

- alerts: Add support for Slack bot token authentication
  [#1270](https://github.com/fluxcd/flagger/pull/1270)
- loadtester: logCmdOutput to logger instead of stdout
  [#1267](https://github.com/fluxcd/flagger/pull/1267)
- helm: Add app.kubernetes.io/version label to chart
  [#1264](https://github.com/fluxcd/flagger/pull/1264)
- Update Go to 1.19
  [#1264](https://github.com/fluxcd/flagger/pull/1264)
- Update Kubernetes packages to v1.25.3
  [#1283](https://github.com/fluxcd/flagger/pull/1283)
- Bump Contour to v1.22 in e2e tests
  [#1282](https://github.com/fluxcd/flagger/pull/1282)

#### Fixes:

- gatewayapi: Fix reconciliation of nil hostnames
  [#1276](https://github.com/fluxcd/flagger/pull/1276)
- alerts: Include cluster name in all alerts
  [#1275](https://github.com/fluxcd/flagger/pull/1275)

## 1.22.2

**Release date:** 2022-08-29

This release fixes a bug related to scaling up the canary deployment when a
reference to an autoscaler is specified.

Furthermore, it contains updates to packages used by the project, including
updates to Helm and grpc-health-probe used in the loadtester.

CVEs fixed (originating from dependencies):

* CVE-2022-37434
* CVE-2022-27191
* CVE-2021-33194
* CVE-2021-44716
* CVE-2022-29526
* CVE-2022-1996

#### Fixes:

- If HPA is set, it uses HPA minReplicas when scaling up the canary
  [#1253](https://github.com/fluxcd/flagger/pull/1253)

#### Improvements:

- Release loadtester v0.23.0
  [#1246](https://github.com/fluxcd/flagger/pull/1246)
- Add target and script to keep crds in sync
  [#1254](https://github.com/fluxcd/flagger/pull/1254)
- docs: add knative support to roadmap
  [#1258](https://github.com/fluxcd/flagger/pull/1258)
- Update dependencies
  [#1259](https://github.com/fluxcd/flagger/pull/1259)
- Release loadtester v0.24.0
  [#1261](https://github.com/fluxcd/flagger/pull/1261)

## 1.22.1

**Release date:** 2022-08-01

This minor release fixes a bug related to the use of HPA v2beta2 and updates
the KEDA ScaledObject API to include `MetricType` for `ScaleTriggers`.

Furthermore, the project has been updated to use Go 1.18 and Alpine 3.16.

#### Fixes:

- Update KEDA ScaledObject API to include MetricType for Triggers
  [#1241](https://github.com/fluxcd/flagger/pull/1241)
- Fix fallback logic for HPAv2 to v2beta2
  [#1242](https://github.com/fluxcd/flagger/pull/1242)

#### Improvements:

- Update Go to 1.18 and Alpine to 3.16
  [#1243](https://github.com/fluxcd/flagger/pull/1243)
- Clarify HPA API requirement
  [#1239](https://github.com/fluxcd/flagger/pull/1239)
- Update README
  [#1233](https://github.com/fluxcd/flagger/pull/1233)

## 1.22.0

**Release date:** 2022-07-11

This release comes with support for KEDA ScaledObjects as an alternative to HPAs. Check the
[tutorial](https://docs.flagger.app/tutorials/keda-scaledobject) to understand its usage
with Flagger.
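
A minimal sketch of a Canary that points its autoscaler reference at a KEDA `ScaledObject` instead of an HPA. The Deployment and ScaledObject names are illustrative assumptions, and the `ScaledObject` itself must be defined separately as described in the tutorial.

```yaml
# Fragment of a Canary spec; resource names are assumptions.
apiVersion: flagger.app/v1beta1
kind: Canary
metadata:
  name: podinfo
  namespace: test
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: podinfo
  # Reference a KEDA ScaledObject where an HPA would otherwise go.
  autoscalerRef:
    apiVersion: keda.sh/v1alpha1
    kind: ScaledObject
    name: podinfo-so
```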

The `.spec.service.appProtocol` field can now be used to specify the [`appProtocol`](https://kubernetes.io/docs/concepts/services-networking/service/#application-protocol)
of the services that Flagger generates.
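
For illustration, a Canary service section setting the field might look like the fragment below; the port number and protocol value are assumptions.

```yaml
# Fragment of a Canary spec; port and protocol value are assumptions.
spec:
  service:
    port: 9898
    # Propagated to the appProtocol of the generated Kubernetes services.
    appProtocol: http
```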

In addition, a bug related to the Contour Prometheus query used when the service name is overwritten,
along with a bug related to Contour `HTTPProxy` annotations, have been fixed.

Furthermore, the installation guide for Alibaba ServiceMesh has been updated.

#### Improvements:

- feat: Add an optional `appProtocol` field to `spec.service`
  [#1185](https://github.com/fluxcd/flagger/pull/1185)
- Update Kubernetes packages to v1.24.1
  [#1208](https://github.com/fluxcd/flagger/pull/1208)
- charts: Add namespace parameter to parameters table
  [#1210](https://github.com/fluxcd/flagger/pull/1210)
- Introduce `ScalerReconciler` and refactor HPA reconciliation
  [#1211](https://github.com/fluxcd/flagger/pull/1211)
- e2e: Update providers and Kubernetes to v1.23
  [#1212](https://github.com/fluxcd/flagger/pull/1212)
- Add support for KEDA ScaledObjects as an auto scaler
  [#1216](https://github.com/fluxcd/flagger/pull/1216)
- include Contour retryOn in the sample canary
  [#1223](https://github.com/fluxcd/flagger/pull/1223)

#### Fixes:

- fix contour prom query for when service name is overwritten
  [#1204](https://github.com/fluxcd/flagger/pull/1204)
- fix contour httproxy annotations overwrite
  [#1205](https://github.com/fluxcd/flagger/pull/1205)
- Fix primary HPA label reconciliation
  [#1215](https://github.com/fluxcd/flagger/pull/1215)
- fix: add finalizers to canaries
  [#1219](https://github.com/fluxcd/flagger/pull/1219)
- typo: boostrap -> bootstrap
  [#1220](https://github.com/fluxcd/flagger/pull/1220)
- typo: controller
  [#1221](https://github.com/fluxcd/flagger/pull/1221)
- update guide for flagger on aliyun ASM
  [#1222](https://github.com/fluxcd/flagger/pull/1222)
- Reintroducing empty check for metric template references.
  [#1224](https://github.com/fluxcd/flagger/pull/1224)

## 1.21.0

**Release date:** 2022-05-06

This release comes with an option to disable cross-namespace references to Kubernetes
custom resources such as `AlertProviders` and `MetricProviders`. When running Flagger
in multi-tenant environments it is advised to set the `-no-cross-namespace-refs=true` flag.
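
One way to set the flag is through the container arguments of the Flagger Deployment. The fragment below is a sketch only: the Deployment name, namespace and container name are assumptions about the installation, and any arguments the installation already passes must be kept alongside the new one.

```yaml
# Fragment of the Flagger Deployment; names and namespace are assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: flagger
  namespace: flagger-system
spec:
  template:
    spec:
      containers:
        - name: flagger
          args:
            # ...keep the existing arguments of your install here...
            - -no-cross-namespace-refs=true
```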

In addition, this version enables Flagger to target Istio and Kuma multi-cluster setups.
When installing Flagger with Helm, the service mesh control plane kubeconfig secret
can be specified using `--set controlplane.kubeconfig.secretName`.
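
The same setting can be kept in a Helm values file instead of a `--set` argument. In this sketch the secret name `istio-kubeconfig` is hypothetical, and the secret is assumed to exist already and to contain the kubeconfig of the service mesh control plane cluster.

```yaml
# values.yaml fragment, equivalent to
#   --set controlplane.kubeconfig.secretName=istio-kubeconfig
# The secret name is hypothetical.
controlplane:
  kubeconfig:
    secretName: istio-kubeconfig
```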

#### Improvements

|

||||

|

||||

- Add flag to disable cross namespace refs to custom resources

|

||||

[#1181](https://github.com/fluxcd/flagger/pull/1181)

|

||||

- Rename kubeconfig section in helm values

|

||||

[#1188](https://github.com/fluxcd/flagger/pull/1188)

|

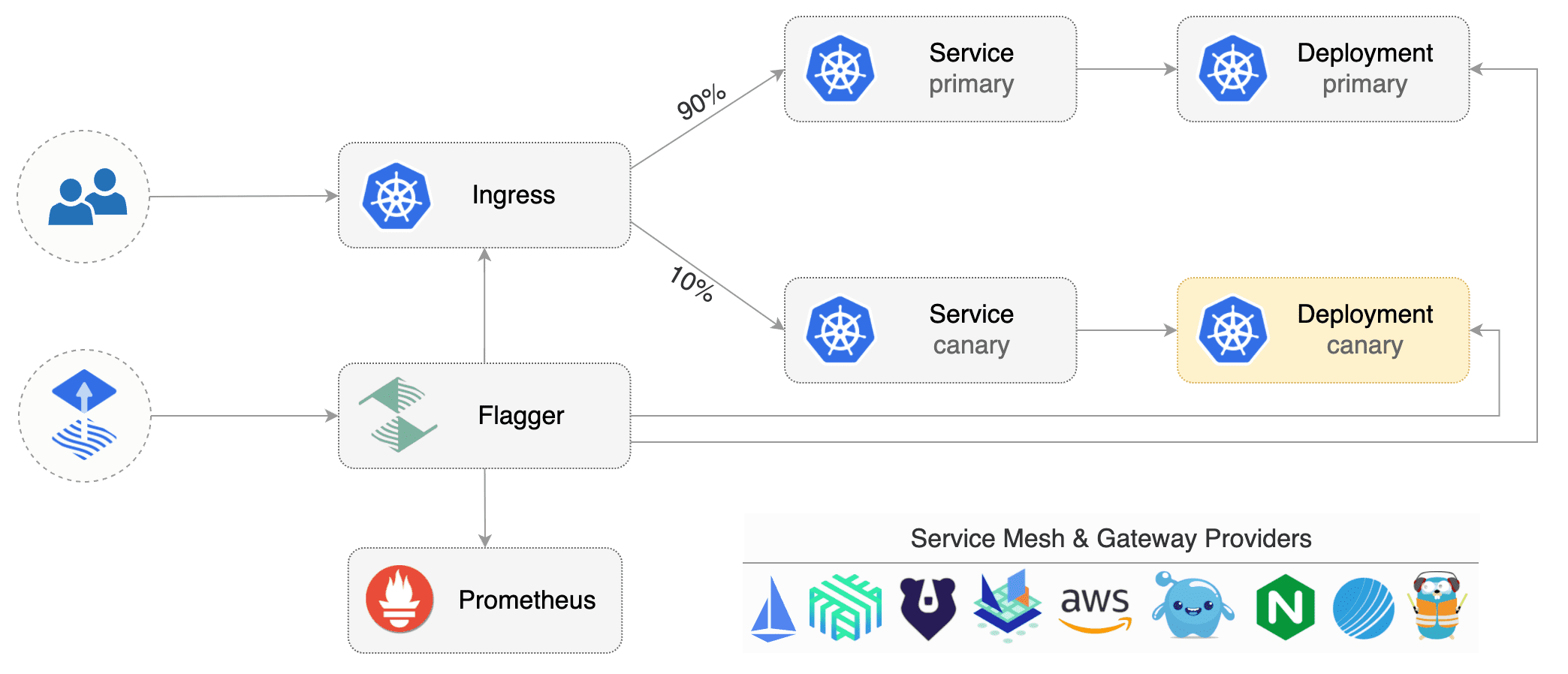

||||

- Update Flagger overview diagram

|

||||

[#1187](https://github.com/fluxcd/flagger/pull/1187)

|

||||

|

||||

#### Fixes

|

||||

|

||||

- Avoid setting owner refs if the service mesh/ingress is on a different cluster

|

||||

[#1183](https://github.com/fluxcd/flagger/pull/1183)

|

||||

|

||||

## 1.20.0

**Release date:** 2022-04-15

This release comes with improvements to the AppMesh, Contour and Istio integrations.

#### Improvements

- AppMesh: Add annotation to enable Envoy access logs
  [#1156](https://github.com/fluxcd/flagger/pull/1156)
- Contour: Update the httproxy API and enable RetryOn
  [#1164](https://github.com/fluxcd/flagger/pull/1164)
- Istio: Add destination port when port discovery and delegation are true
  [#1145](https://github.com/fluxcd/flagger/pull/1145)
- Metrics: Add canary analysis result as Prometheus metrics
  [#1148](https://github.com/fluxcd/flagger/pull/1148)

#### Fixes

- Fix canary rollback behaviour
  [#1171](https://github.com/fluxcd/flagger/pull/1171)
- Shorten the metric analysis cycle after confirm promotion gate is open
  [#1139](https://github.com/fluxcd/flagger/pull/1139)
- Fix unit of time in the Istio Grafana dashboard
  [#1162](https://github.com/fluxcd/flagger/pull/1162)
- Fix the service toggle condition in the podinfo helm chart
  [#1146](https://github.com/fluxcd/flagger/pull/1146)

## 1.19.0

**Release date:** 2022-03-14

@@ -1,4 +1,4 @@

|

||||

FROM golang:1.17-alpine as builder

|

||||

FROM golang:1.19-alpine as builder

|

||||

|

||||

ARG TARGETPLATFORM

|

||||

ARG REVISON

|

||||

@@ -21,7 +21,7 @@ RUN CGO_ENABLED=0 go build \

|

||||

-ldflags "-s -w -X github.com/fluxcd/flagger/pkg/version.REVISION=${REVISON}" \

|

||||

-a -o flagger ./cmd/flagger

|

||||

|

||||

FROM alpine:3.15

|

||||

FROM alpine:3.17

|

||||

|

||||

RUN apk --no-cache add ca-certificates

|

||||

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

FROM golang:1.17-alpine as builder

|

||||

FROM golang:1.19-alpine as builder

|

||||

|

||||

ARG TARGETPLATFORM

|

||||

ARG TARGETARCH

|

||||

@@ -6,15 +6,15 @@ ARG REVISION

|

||||

|

||||

RUN apk --no-cache add alpine-sdk perl curl bash tar

|

||||

|

||||

RUN HELM3_VERSION=3.7.2 && \

|

||||

RUN HELM3_VERSION=3.11.0 && \

|

||||

curl -sSL "https://get.helm.sh/helm-v${HELM3_VERSION}-linux-${TARGETARCH}.tar.gz" | tar xvz && \

|

||||

chmod +x linux-${TARGETARCH}/helm && mv linux-${TARGETARCH}/helm /usr/local/bin/helm

|

||||

|

||||

RUN GRPC_HEALTH_PROBE_VERSION=v0.4.6 && \

|

||||

RUN GRPC_HEALTH_PROBE_VERSION=v0.4.12 && \

|

||||

wget -qO /usr/local/bin/grpc_health_probe https://github.com/grpc-ecosystem/grpc-health-probe/releases/download/${GRPC_HEALTH_PROBE_VERSION}/grpc_health_probe-linux-${TARGETARCH} && \

|

||||

chmod +x /usr/local/bin/grpc_health_probe

|

||||

|

||||

RUN GHZ_VERSION=0.105.0 && \

|

||||

RUN GHZ_VERSION=0.109.0 && \

|

||||

curl -sSL "https://github.com/bojand/ghz/archive/refs/tags/v${GHZ_VERSION}.tar.gz" | tar xz -C /tmp && \

|

||||

cd /tmp/ghz-${GHZ_VERSION}/cmd/ghz && GOARCH=$TARGETARCH go build . && mv ghz /usr/local/bin && \

|

||||

chmod +x /usr/local/bin/ghz

|

||||

|

||||

5

GOVERNANCE.md

Normal file


@@ -0,0 +1,5 @@

|

||||

# Flagger Governance

|

||||

|

||||

The Flagger project is governed by the [Flux governance document](https://github.com/fluxcd/community/blob/main/GOVERNANCE.md),

|

||||

involvement is defined in the [Flux community roles document](https://github.com/fluxcd/community/blob/main/community-roles.md),

|

||||

and processes can be found in the [Flux process document](https://github.com/fluxcd/community/blob/main/PROCESS.md).

|

||||

@@ -6,3 +6,4 @@ In alphabetical order:

|

||||

|

||||

Stefan Prodan, Weaveworks <stefan@weave.works> (github: @stefanprodan, slack: stefanprodan)

|

||||

Takeshi Yoneda, Tetrate <takeshi@tetrate.io> (github: @mathetake, slack: mathetake)

|

||||

Sanskar Jaiswal, Weaveworks <sanskar.jaiswal@weave.works> (github: @aryan9600, slack: aryan9600)

|

||||

|

||||

9

Makefile


@@ -5,6 +5,12 @@ LT_VERSION?=$(shell grep 'VERSION' cmd/loadtester/main.go | awk '{ print $$4 }'

|

||||

build:

|

||||

CGO_ENABLED=0 go build -a -o ./bin/flagger ./cmd/flagger

|

||||

|

||||

tidy:

|

||||

rm -f go.sum; go mod tidy -compat=1.19

|

||||

|

||||

vet:

|

||||

go vet ./...

|

||||

|

||||

fmt:

|

||||

go mod tidy

|

||||

gofmt -l -s -w ./

|

||||

@@ -27,6 +33,9 @@ crd:

|

||||

cat artifacts/flagger/crd.yaml > charts/flagger/crds/crd.yaml

|

||||

cat artifacts/flagger/crd.yaml > kustomize/base/flagger/crd.yaml

|

||||

|

||||

verify-crd:

|

||||

./hack/verify-crd.sh

|

||||

|

||||

version-set:

|

||||

@next="$(TAG)" && \

|

||||

current="$(VERSION)" && \

|

||||

|

||||

148

README.md


@@ -1,10 +1,11 @@

|

||||

# flagger

|

||||

# flagger

|

||||

|

||||

[](https://bestpractices.coreinfrastructure.org/projects/4783)

|

||||

[](https://github.com/fluxcd/flagger/actions)

|

||||

[](https://goreportcard.com/report/github.com/fluxcd/flagger)

|

||||

[](https://github.com/fluxcd/flagger/blob/main/LICENSE)

|

||||

[](https://github.com/fluxcd/flagger/releases)

|

||||

[](https://bestpractices.coreinfrastructure.org/projects/4783)

|

||||

[](https://goreportcard.com/report/github.com/fluxcd/flagger)

|

||||

[](https://app.fossa.com/projects/custom%2B162%2Fgithub.com%2Ffluxcd%2Fflagger?ref=badge_shield)

|

||||

[](https://artifacthub.io/packages/search?repo=flagger)

|

||||

[](https://clomonitor.io/projects/cncf/flagger)

|

||||

|

||||

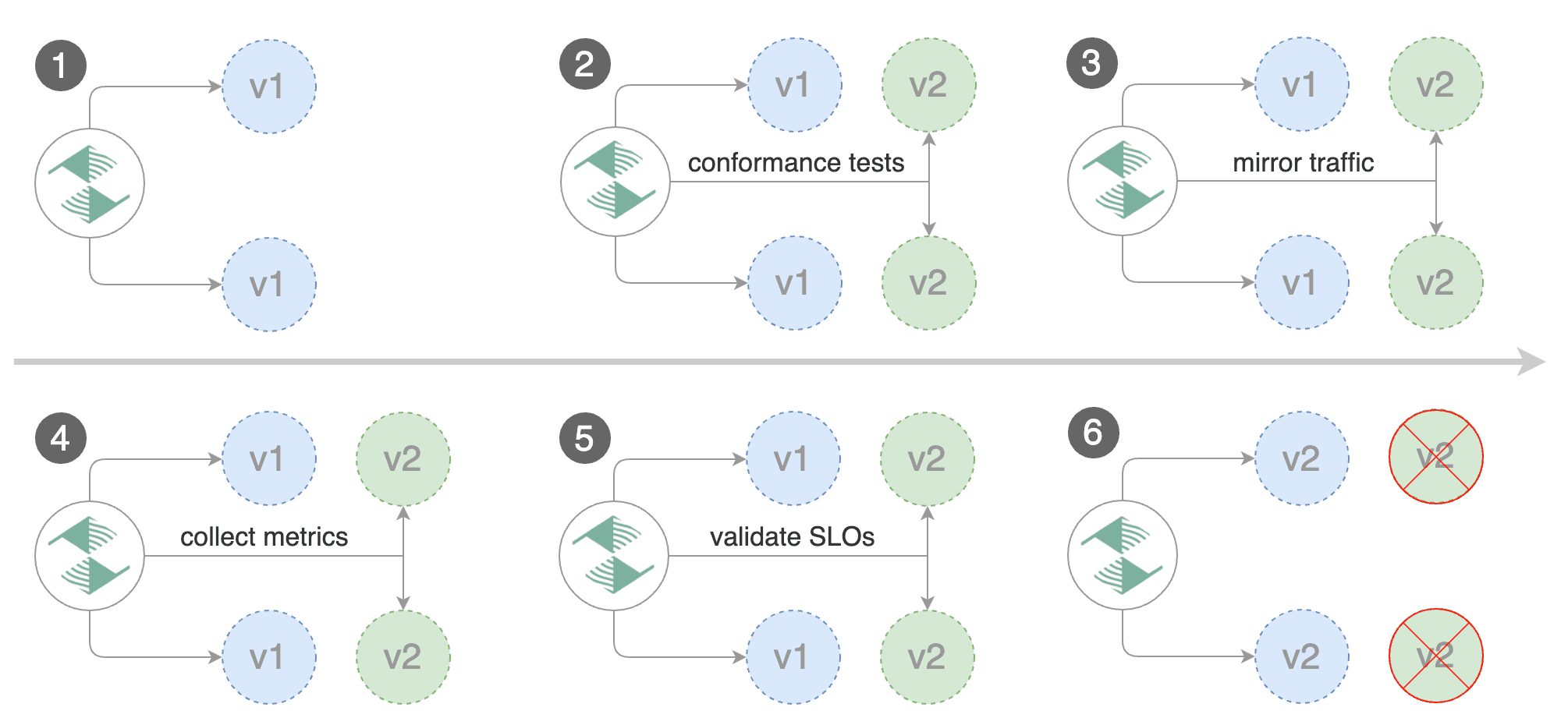

Flagger is a progressive delivery tool that automates the release process for applications running on Kubernetes.

|

||||

It reduces the risk of introducing a new software version in production

|

||||

@@ -13,54 +14,53 @@ by gradually shifting traffic to the new version while measuring metrics and run

|

||||

|

||||

|

||||

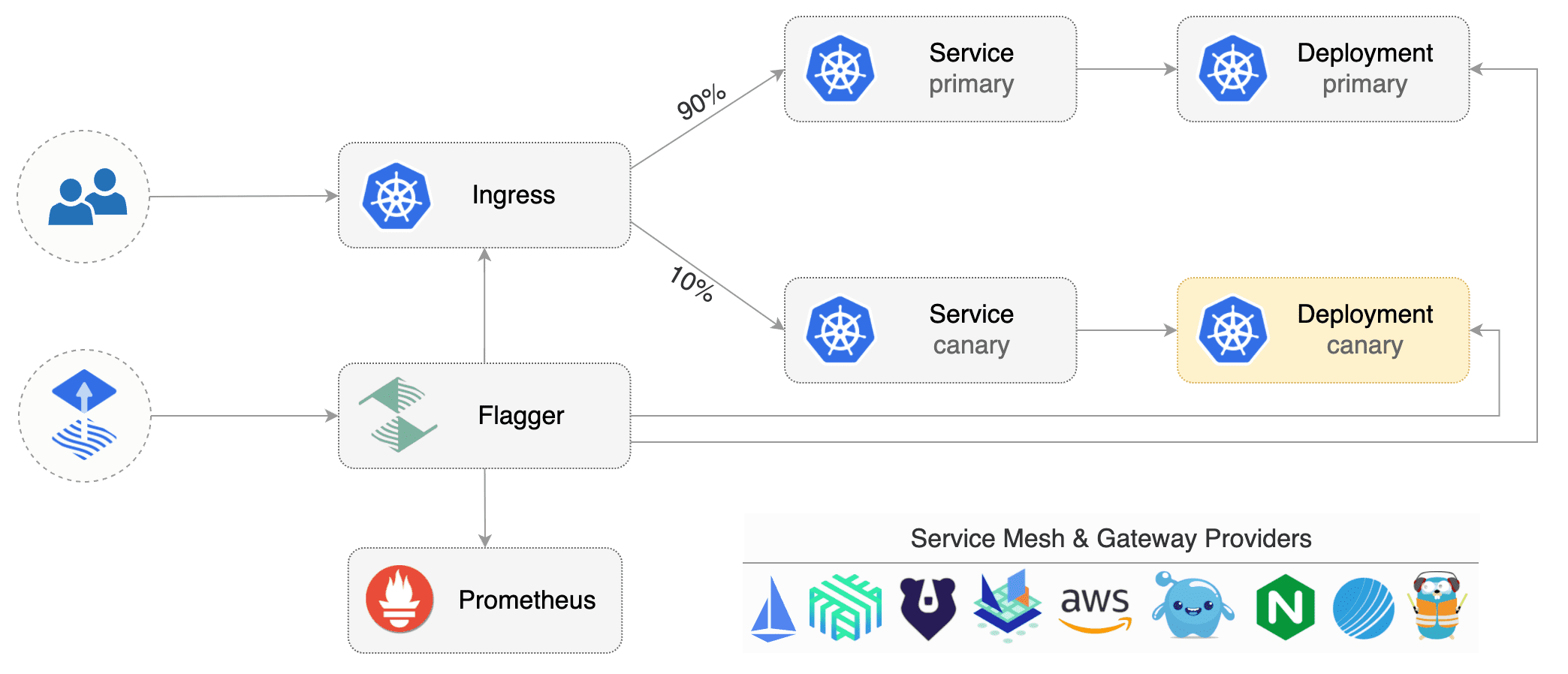

Flagger implements several deployment strategies (Canary releases, A/B testing, Blue/Green mirroring)

|

||||

using a service mesh (App Mesh, Istio, Linkerd, Open Service Mesh, Kuma)

|

||||

or an ingress controller (Contour, Gloo, NGINX, Skipper, Traefik) for traffic routing.

|

||||

For release analysis, Flagger can query Prometheus, Datadog, New Relic, CloudWatch, Dynatrace,

|

||||

InfluxDB and Stackdriver and for alerting it uses Slack, MS Teams, Discord, Rocket and Google Chat.

|

||||

and integrates with various Kubernetes ingress controllers, service mesh, and monitoring solutions.

|

||||

|

||||

Flagger is a [Cloud Native Computing Foundation](https://cncf.io/) project

|

||||

and part of [Flux](https://fluxcd.io) family of GitOps tools.

|

||||

and part of the [Flux](https://fluxcd.io) family of GitOps tools.

|

||||

|

||||

### Documentation

|

||||

|

||||

Flagger documentation can be found at [docs.flagger.app](https://docs.flagger.app).

|

||||

Flagger documentation can be found at [fluxcd.io/flagger](https://fluxcd.io/flagger/).

|

||||

|

||||

* Install

|

||||

* [Flagger install on Kubernetes](https://docs.flagger.app/install/flagger-install-on-kubernetes)

|

||||

* [Flagger install on Kubernetes](https://fluxcd.io/flagger/install/flagger-install-on-kubernetes)

|

||||

* Usage

|

||||

* [How it works](https://docs.flagger.app/usage/how-it-works)

|

||||

* [Deployment strategies](https://docs.flagger.app/usage/deployment-strategies)

|

||||

* [Metrics analysis](https://docs.flagger.app/usage/metrics)

|

||||

* [Webhooks](https://docs.flagger.app/usage/webhooks)

|

||||

* [Alerting](https://docs.flagger.app/usage/alerting)

|

||||

* [Monitoring](https://docs.flagger.app/usage/monitoring)

|

||||

* [How it works](https://fluxcd.io/flagger/usage/how-it-works)

|

||||

* [Deployment strategies](https://fluxcd.io/flagger/usage/deployment-strategies)

|

||||

* [Metrics analysis](https://fluxcd.io/flagger/usage/metrics)

|

||||

* [Webhooks](https://fluxcd.io/flagger/usage/webhooks)

|

||||

* [Alerting](https://fluxcd.io/flagger/usage/alerting)

|

||||

* [Monitoring](https://fluxcd.io/flagger/usage/monitoring)

|

||||

* Tutorials

|

||||

* [App Mesh](https://docs.flagger.app/tutorials/appmesh-progressive-delivery)

|

||||

* [Istio](https://docs.flagger.app/tutorials/istio-progressive-delivery)

|

||||

* [Linkerd](https://docs.flagger.app/tutorials/linkerd-progressive-delivery)

|

||||

* [Open Service Mesh (OSM)](https://docs.flagger.app/tutorials/osm-progressive-delivery)

|

||||

* [Kuma Service Mesh](https://docs.flagger.app/tutorials/kuma-progressive-delivery)

|

||||

* [Contour](https://docs.flagger.app/tutorials/contour-progressive-delivery)

|

||||

* [Gloo](https://docs.flagger.app/tutorials/gloo-progressive-delivery)

|

||||

* [NGINX Ingress](https://docs.flagger.app/tutorials/nginx-progressive-delivery)

|

||||

* [Skipper](https://docs.flagger.app/tutorials/skipper-progressive-delivery)

|

||||

* [Traefik](https://docs.flagger.app/tutorials/traefik-progressive-delivery)

|

||||

* [Kubernetes Blue/Green](https://docs.flagger.app/tutorials/kubernetes-blue-green)

|

||||

* [App Mesh](https://fluxcd.io/flagger/tutorials/appmesh-progressive-delivery)

|

||||

* [Istio](https://fluxcd.io/flagger/tutorials/istio-progressive-delivery)

|

||||

* [Linkerd](https://fluxcd.io/flagger/tutorials/linkerd-progressive-delivery)

|

||||

* [Open Service Mesh (OSM)](https://fluxcd.io/flagger/tutorials/osm-progressive-delivery)

|

||||

* [Kuma Service Mesh](https://fluxcd.io/flagger/tutorials/kuma-progressive-delivery)

|

||||

* [Contour](https://fluxcd.io/flagger/tutorials/contour-progressive-delivery)

|

||||

* [Gloo](https://fluxcd.io/flagger/tutorials/gloo-progressive-delivery)

|

||||

* [NGINX Ingress](https://fluxcd.io/flagger/tutorials/nginx-progressive-delivery)

|

||||

* [Skipper](https://fluxcd.io/flagger/tutorials/skipper-progressive-delivery)

|

||||

* [Traefik](https://fluxcd.io/flagger/tutorials/traefik-progressive-delivery)

|

||||

* [Gateway API](https://fluxcd.io/flagger/tutorials/gatewayapi-progressive-delivery/)

|

||||

* [Kubernetes Blue/Green](https://fluxcd.io/flagger/tutorials/kubernetes-blue-green)

|

||||

|

||||

### Who is using Flagger

|

||||

### Adopters

|

||||

|

||||

**Our list of production users has moved to <https://fluxcd.io/adopters/#flagger>**.

|

||||

|

||||

If you are using Flagger, please [submit a PR to add your organization](https://github.com/fluxcd/website/tree/main/adopters#readme) to the list!

|

||||