mirror of

https://github.com/fluxcd/flagger.git

synced 2026-04-15 06:57:34 +00:00

Set Kubernetes min version to 1.16

Signed-off-by: Stefan Prodan <stefan.prodan@gmail.com>


@@ -1,10 +1,16 @@
 # App Mesh Canary Deployments
 
-This guide shows you how to use App Mesh and Flagger to automate canary deployments. You'll need an EKS cluster configured with App Mesh, you can find the installion guide [here](https://docs.flagger.app/install/flagger-install-on-eks-appmesh).
+This guide shows you how to use App Mesh and Flagger to automate canary deployments.
+You'll need an EKS cluster (Kubernetes >= 1.16) configured with App Mesh,
+you can find the installation guide [here](https://docs.flagger.app/install/flagger-install-on-eks-appmesh).
 
 ## Bootstrap
 
-Flagger takes a Kubernetes deployment and optionally a horizontal pod autoscaler \(HPA\), then creates a series of objects \(Kubernetes deployments, ClusterIP services, App Mesh virtual nodes and services\). These objects expose the application on the mesh and drive the canary analysis and promotion. The only App Mesh object you need to create by yourself is the mesh resource.
+Flagger takes a Kubernetes deployment and optionally a horizontal pod autoscaler (HPA),
+then creates a series of objects (Kubernetes deployments, ClusterIP services,
+App Mesh virtual nodes and services).
+These objects expose the application on the mesh and drive the canary analysis and promotion.
+The only App Mesh object you need to create by yourself is the mesh resource.
 
 Create a mesh called `global`:
 
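The `Kubernetes >= 1.16` requirement introduced in the hunk above could be checked before following the guide. The snippet below is a minimal sketch and not part of the commit: `version_ge` is a hypothetical helper, `sort -V` assumes GNU coreutils (or another `sort` with version ordering), and `server_version` is a made-up example value that would normally be read from the cluster.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeeds when version $1 is greater than or equal to version $2.
# Relies on `sort -V` (version sort), so 1.9 correctly sorts before 1.16.
version_ge() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# Assumed example value; in practice this would come from the cluster itself.
server_version="1.18.9"

if version_ge "$server_version" "1.16"; then
  echo "Kubernetes ${server_version} meets the 1.16 minimum"
else
  echo "Kubernetes ${server_version} is older than 1.16" >&2
fi
```

A plain string or numeric comparison would treat `1.9` as newer than `1.16`, which is why the sketch leans on version ordering instead.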

@@ -37,7 +43,7 @@ EOF
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Deploy the load testing service to generate traffic during the canary analysis:
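The new URL in the hunk above follows the kustomize remote-target shape `<repository>//<path>?ref=<git-ref>`. As a rough sketch (not part of the commit), the hypothetical helper `flagger_overlay_url` below assembles that URL for a given overlay directory and Git ref. The function name and the default ref are assumptions made for illustration.

```bash
#!/usr/bin/env bash
# Hypothetical helper: prints a kustomize remote target for a Flagger overlay.
# $1 is the overlay directory under kustomize/, $2 is the Git ref (defaults to main).
flagger_overlay_url() {
  local overlay="$1"
  local ref="${2:-main}"
  printf 'https://github.com/fluxcd/flagger//kustomize/%s?ref=%s\n' "$overlay" "$ref"
}

# Prints the same URL the updated docs use for podinfo.
flagger_overlay_url podinfo

# Applying it needs cluster access, so it is left as a comment here:
#   kubectl apply -k "$(flagger_overlay_url podinfo)"
```

Passing a second argument would select a different ref than `main`, which can make a walkthrough repeatable.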

@@ -162,9 +168,13 @@ virtualservice.appmesh.k8s.aws/podinfo
 virtualservice.appmesh.k8s.aws/podinfo-canary
 ```
 
-After the boostrap, the podinfo deployment will be scaled to zero and the traffic to `podinfo.test` will be routed to the primary pods. During the canary analysis, the `podinfo-canary.test` address can be used to target directly the canary pods.
+After the boostrap, the podinfo deployment will be scaled to zero and the traffic to `podinfo.test`
+will be routed to the primary pods.
+During the canary analysis, the `podinfo-canary.test` address can be used to target directly the canary pods.
 
-App Mesh blocks all egress traffic by default. If your application needs to call another service, you have to create an App Mesh virtual service for it and add the virtual service name to the backend list.
+App Mesh blocks all egress traffic by default.
+If your application needs to call another service, you have to create an App Mesh virtual service for it
+and add the virtual service name to the backend list.
 
 ```yaml
 service:

@@ -174,7 +184,7 @@ App Mesh blocks all egress traffic by default. If your application needs to call
 - arn:aws:appmesh:eu-west-1:12345678910:mesh/my-mesh/virtualService/backend2
 ```
 
-## Setup App Mesh Gateway \(optional\)
+## Setup App Mesh Gateway (optional)
 
 In order to expose the podinfo app outside the mesh you can use the App Mesh Gateway.
 

@@ -225,7 +235,7 @@ Open your browser and navigate to the ingress address to access podinfo UI.

|

||||

|

||||

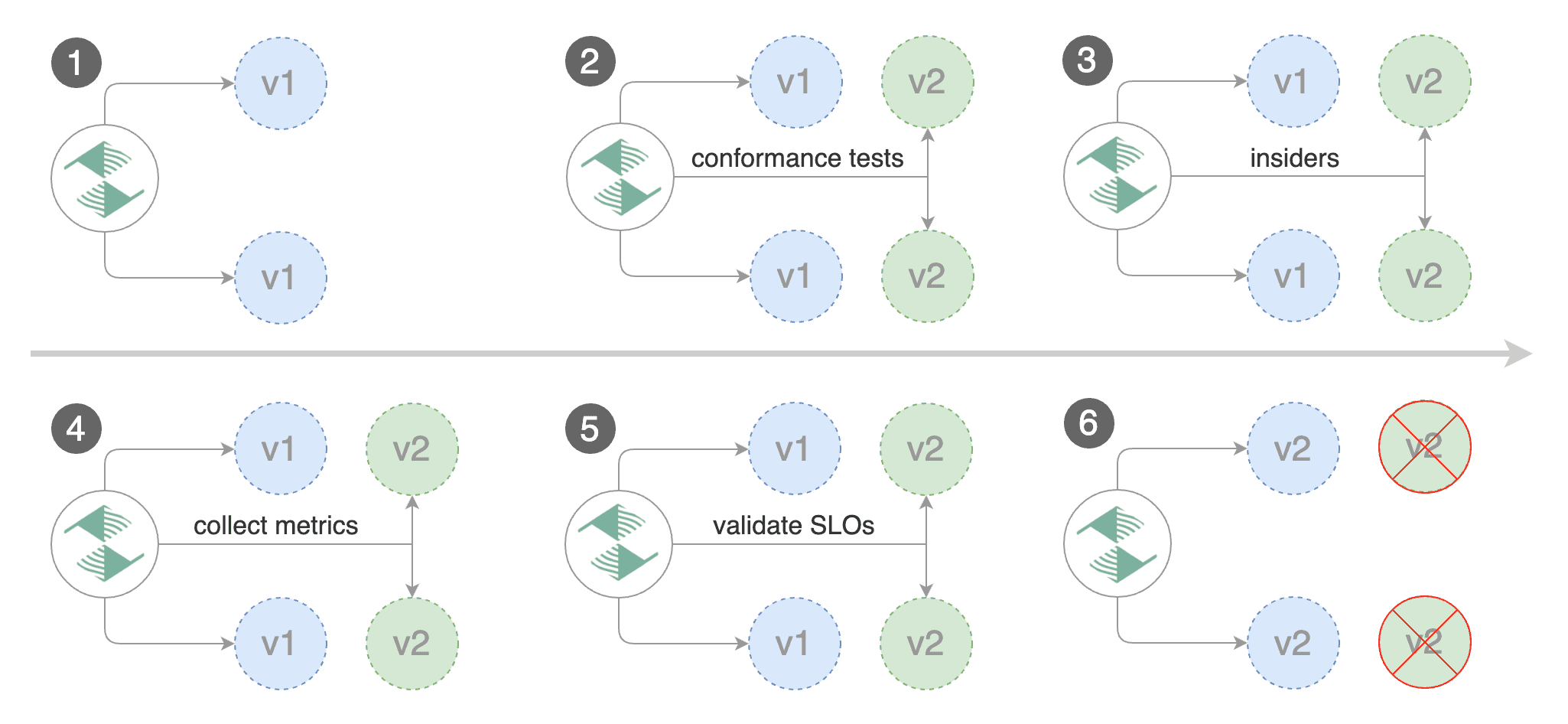

A canary deployment is triggered by changes in any of the following objects:

|

||||

|

||||

* Deployment PodSpec \(container image, command, ports, env, resources, etc\)

|

||||

* Deployment PodSpec (container image, command, ports, env, resources, etc)

|

||||

* ConfigMaps and Secrets mounted as volumes or mapped to environment variables

|

||||

|

||||

Trigger a canary deployment by updating the container image:

|

||||

@@ -268,7 +278,9 @@ When the canary analysis starts, Flagger will call the pre-rollout webhooks befo

|

||||

|

||||

**Note** that if you apply new changes to the deployment during the canary analysis, Flagger will restart the analysis.

|

||||

|

||||

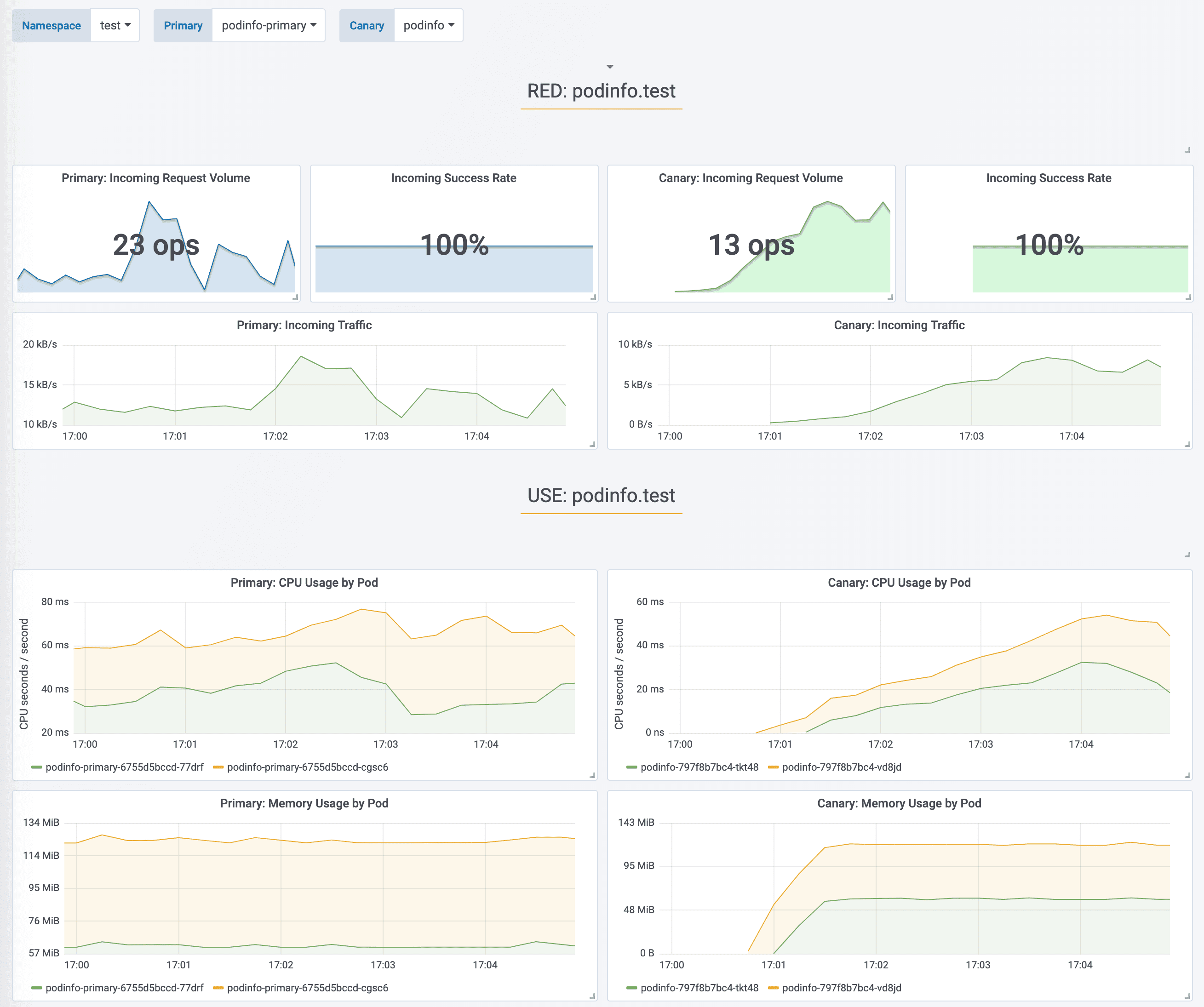

During the analysis the canary’s progress can be monitored with Grafana. The App Mesh dashboard URL is [http://localhost:3000/d/flagger-appmesh/appmesh-canary?refresh=10s&orgId=1&var-namespace=test&var-primary=podinfo-primary&var-canary=podinfo](http://localhost:3000/d/flagger-appmesh/appmesh-canary?refresh=10s&orgId=1&var-namespace=test&var-primary=podinfo-primary&var-canary=podinfo).

|

||||

During the analysis the canary’s progress can be monitored with Grafana.

|

||||

The App Mesh dashboard URL is

|

||||

[http://localhost:3000/d/flagger-appmesh/appmesh-canary?refresh=10s&orgId=1&var-namespace=test&var-primary=podinfo-primary&var-canary=podinfo](http://localhost:3000/d/flagger-appmesh/appmesh-canary?refresh=10s&orgId=1&var-namespace=test&var-primary=podinfo-primary&var-canary=podinfo).

|

||||

|

||||

|

||||

|

||||

@@ -316,7 +328,8 @@ Generate latency:

|

||||

watch -n 1 curl http://podinfo-canary.test:9898/delay/1

|

||||

```

|

||||

|

||||

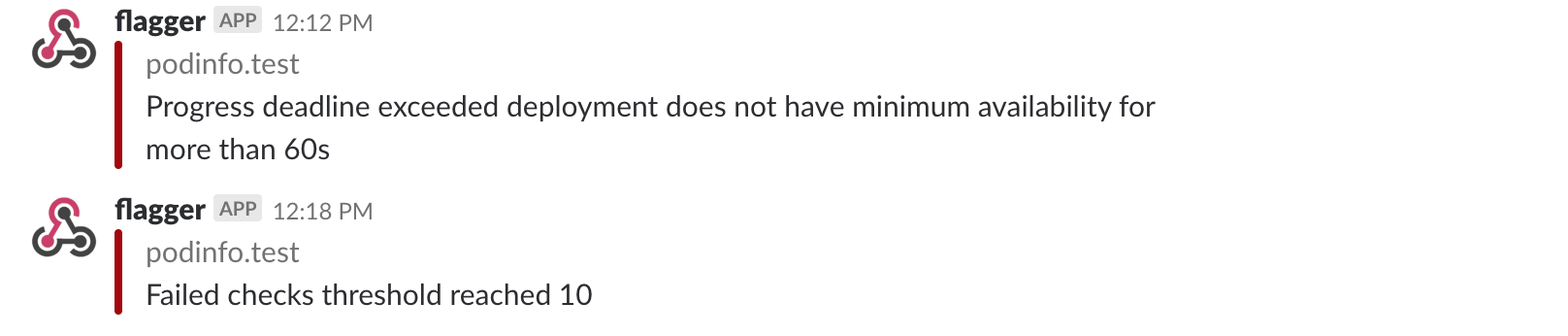

When the number of failed checks reaches the canary analysis threshold, the traffic is routed back to the primary, the canary is scaled to zero and the rollout is marked as failed.

|

||||

When the number of failed checks reaches the canary analysis threshold, the traffic is routed back to the primary,

|

||||

the canary is scaled to zero and the rollout is marked as failed.

|

||||

|

||||

```text

|

||||

kubectl -n appmesh-system logs deploy/flagger -f | jq .msg

|

||||

@@ -335,13 +348,16 @@ Rolling back podinfo.test failed checks threshold reached 5
 Canary failed! Scaling down podinfo.test
 ```
 
-If you’ve enabled the Slack notifications, you’ll receive a message if the progress deadline is exceeded, or if the analysis reached the maximum number of failed checks:
+If you’ve enabled the Slack notifications, you’ll receive a message if the progress deadline is exceeded,
+or if the analysis reached the maximum number of failed checks:
 
 
 
 ## A/B Testing
 
-Besides weighted routing, Flagger can be configured to route traffic to the canary based on HTTP match conditions. In an A/B testing scenario, you'll be using HTTP headers or cookies to target a certain segment of your users. This is particularly useful for frontend applications that require session affinity.
+Besides weighted routing, Flagger can be configured to route traffic to the canary based on HTTP match conditions.
+In an A/B testing scenario, you'll be using HTTP headers or cookies to target a certain segment of your users.
+This is particularly useful for frontend applications that require session affinity.
 
 
 
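The rollback rule described in this file's hunks (traffic returns to the primary once the number of failed checks reaches the threshold, 5 in the log line quoted above) can be pictured with a toy model. This is only an illustrative sketch, not Flagger's implementation: the `analyse` function is hypothetical, and it assumes the failed-check counter is cumulative over the analysis run.

```bash
#!/usr/bin/env bash
# Toy model of a failed-checks counter; NOT Flagger's actual code.
# $1 is the threshold, the remaining arguments are check results ("ok" or "fail").
# Assumption: failures accumulate over the whole analysis and are not reset by a success.
analyse() {
  local threshold="$1"
  shift
  local failed=0
  local result
  for result in "$@"; do
    if [ "$result" = "fail" ]; then
      failed=$((failed + 1))
    fi
    if [ "$failed" -ge "$threshold" ]; then
      echo "rollback"
      return 0
    fi
  done
  echo "continue"
}

analyse 5 ok fail fail ok fail fail fail   # five failures in total: prints "rollback"
analyse 5 ok fail ok ok                    # one failure: prints "continue"
```

In the real system the outcome of each check comes from metric queries and webhooks rather than a fixed argument list.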

@@ -408,5 +424,8 @@ Routing all traffic to primary
 Promotion completed! Scaling down podinfo.test
 ```
 
-For an in-depth look at the analysis process read the [usage docs](../usage/how-it-works.md).
-
+The above procedure can be extended with
+[custom metrics](../usage/metrics.md) checks,
+[webhooks](../usage/webhooks.md),
+[manual promotion](../usage/webhooks.md#manual-gating) approval and
+[Slack or MS Teams](../usage/alerting.md) notifications.

@@ -6,7 +6,7 @@ This guide shows you how to use [Contour](https://projectcontour.io/) ingress co
 
 ## Prerequisites
 
-Flagger requires a Kubernetes cluster **v1.11** or newer and Contour **v1.0** or newer.
+Flagger requires a Kubernetes cluster **v1.16** or newer and Contour **v1.0** or newer.
 
 Install Contour on a cluster with LoadBalancer support:
 

@@ -16,10 +16,10 @@ kubectl apply -f https://projectcontour.io/quickstart/contour.yaml
 
 The above command will deploy Contour and an Envoy daemonset in the `projectcontour` namespace.
 
-Install Flagger using Kustomize \(kubectl 1.14\) in the `projectcontour` namespace:
+Install Flagger using Kustomize (kubectl 1.14) in the `projectcontour` namespace:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/contour
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/contour?ref=main
 ```
 
 The above command will deploy Flagger and Prometheus configured to scrape the Contour's Envoy instances.

@@ -51,13 +51,13 @@ kubectl create ns test
 Install the load testing service to generate traffic during the canary analysis:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/tester
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/tester?ref=main
 ```
 
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Create a canary custom resource \(replace `app.example.com` with your own domain\):

@@ -6,7 +6,7 @@ Crossover is a minimal Envoy xDS implementation supports [Service Mesh Interface
 
 ## Prerequisites
 
-Flagger requires a Kubernetes cluster **v1.11** or newer and Envoy paired with [Crossover](https://github.com/mumoshu/crossover) sidecar.
+Flagger requires a Kubernetes cluster **v1.16** or newer and Envoy paired with [Crossover](https://github.com/mumoshu/crossover) sidecar.
 
 Create a test namespace:
 

@@ -58,7 +58,7 @@ Flagger takes a Kubernetes deployment and optionally a horizontal pod autoscaler
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Deploy the load testing service to generate traffic during the canary analysis:

@@ -9,7 +9,7 @@ This guide shows you how to use the [Gloo Edge](https://gloo.solo.io/) ingress c
 This guide was written for Flagger version **1.5.0** or higher. Prior versions of Flagger used Gloo upstream groups to handle
 canaries, but newer versions of Flagger use Gloo route tables to handle canaries as well as A/B testing.
 
-Flagger requires a Kubernetes cluster **v1.11** or newer and Gloo Edge ingress **1.6.0** or newer.
+Flagger requires a Kubernetes cluster **v1.16** or newer and Gloo Edge ingress **1.6.0** or newer.
 
 Install Gloo with Helm v3:
 

@@ -44,13 +44,13 @@ kubectl create ns test
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl -n test apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl -n test apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Deploy the load testing service to generate traffic during the canary analysis:
 
 ```bash
-kubectl -n test apply -k github.com/weaveworks/flagger//kustomize/tester
+kubectl -n test apply -k https://github.com/fluxcd/flagger//kustomize/tester?ref=main
 ```
 
 Create a virtual service definition that references a route table that will be generated by Flagger \(replace `app.example.com` with your own domain\):

@@ -8,7 +8,7 @@ Besides weighted routing, Flagger can be configured to route traffic to the cana
 
 ## Prerequisites
 
-Flagger requires a Kubernetes cluster **v1.14** or newer and Istio **v1.0** or newer.
+Flagger requires a Kubernetes cluster **v1.16** or newer and Istio **v1.0** or newer.
 
 Install Istio with telemetry support and Prometheus:
 

@@ -21,7 +21,7 @@ kubectl apply -f https://raw.githubusercontent.com/istio/istio/release-1.8/sampl
 Install Flagger in the `istio-system` namespace:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/istio
+kubectl apply -k github.com/fluxcd/flagger//kustomize/istio
 ```
 
 Create an ingress gateway to expose the demo app outside of the mesh:

@@ -56,13 +56,13 @@ kubectl label namespace test istio-injection=enabled
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Deploy the load testing service to generate traffic during the canary analysis:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/tester
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/tester?ref=main
 ```
 
 Create a canary custom resource \(replace example.com with your own domain\):

@@ -6,7 +6,7 @@ This guide shows you how to use Istio and Flagger to automate canary deployments
 
 ## Prerequisites
 
-Flagger requires a Kubernetes cluster **v1.14** or newer and Istio **v1.5** or newer.
+Flagger requires a Kubernetes cluster **v1.16** or newer and Istio **v1.5** or newer.
 
 Install Istio with telemetry support and Prometheus:
 

@@ -19,7 +19,7 @@ kubectl apply -f https://raw.githubusercontent.com/istio/istio/release-1.8/sampl
 Install Flagger in the `istio-system` namespace:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/istio
+kubectl apply -k github.com/fluxcd/flagger//kustomize/istio
 ```
 
 Create an ingress gateway to expose the demo app outside of the mesh:

@@ -56,13 +56,13 @@ kubectl label namespace test istio-injection=enabled
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Deploy the load testing service to generate traffic during the canary analysis:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/tester
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/tester?ref=main
 ```
 
 Create a canary custom resource \(replace example.com with your own domain\):

@@ -8,7 +8,7 @@ For applications that are not deployed on a service mesh, Flagger can orchestrat
 
 ## Prerequisites
 
-Flagger requires a Kubernetes cluster **v1.11** or newer.
+Flagger requires a Kubernetes cluster **v1.16** or newer.
 
 Install Flagger and the Prometheus add-on:
 

@@ -53,13 +53,13 @@ kubectl create ns test
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Deploy the load testing service to generate traffic during the analysis:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/tester
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/tester?ref=main
 ```
 
 Create a canary custom resource:

@@ -6,12 +6,12 @@ This guide shows you how to use Linkerd and Flagger to automate canary deploymen
 
 ## Prerequisites
 
-Flagger requires a Kubernetes cluster **v1.11** or newer and Linkerd **2.4** or newer.
+Flagger requires a Kubernetes cluster **v1.16** or newer and Linkerd **2.4** or newer.
 
 Install Flagger in the linkerd namespace:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/linkerd
+kubectl apply -k github.com/fluxcd/flagger//kustomize/linkerd
 ```
 
 Note that you'll need kubectl 1.14 or newer to run the above command.

@@ -30,13 +30,13 @@ kubectl annotate namespace test linkerd.io/inject=enabled
 Install the load testing service to generate traffic during the canary analysis:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/tester
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/tester?ref=main
 ```
 
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Create a canary custom resource for the podinfo deployment:

@@ -6,7 +6,7 @@ This guide shows you how to use the NGINX ingress controller and Flagger to auto
 
 ## Prerequisites
 
-Flagger requires a Kubernetes cluster **v1.14** or newer and NGINX ingress **v0.41** or newer.
+Flagger requires a Kubernetes cluster **v1.16** or newer and NGINX ingress **v0.41** or newer.
 
 Install the NGINX ingress controller with Helm v3:
 

@@ -46,7 +46,7 @@ kubectl create ns test
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Deploy the load testing service to generate traffic during the canary analysis:

@@ -4,19 +4,22 @@ This guide show you how to use Prometheus Operator for canary analysis.
 
 ## Prerequisites
 
+Flagger requires a Kubernetes cluster **v1.16** or newer.
+
 Install Prometheus Operator with Helm v3:
 
 ```bash
-helm repo add stable https://kubernetes-charts.storage.googleapis.com
+helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
 
 kubectl create ns monitoring
-helm upgrade -i prometheus stable/prometheus-operator \
+helm upgrade -i prometheus prometheus-community/kube-prometheus-stack \
 --namespace monitoring \
 --set prometheus.prometheusSpec.serviceMonitorSelectorNilUsesHelmValues=false \
 --set fullnameOverride=prometheus
 ```
 
-The `prometheus.prometheusSpec.serviceMonitorSelectorNilUsesHelmValues=false` option allows Prometheus operator to watch serviceMonitors outside of his namespace.
+The `prometheus.prometheusSpec.serviceMonitorSelectorNilUsesHelmValues=false`
+option allows Prometheus operator to watch serviceMonitors outside of his namespace.
 
 Install Flagger by setting the metrics server to Prometheus:
 

@@ -84,7 +87,8 @@ spec:
 app: podinfo-canary
 ```
 
-We are setting `interval: 5s` to have a more aggressive scraping. If you do not define it, you must to use a longer interval in the Canary object.
+We are setting `interval: 5s` to have a more aggressive scraping.
+If you do not define it, you must to use a longer interval in the Canary object.
 
 ## Metric templates
 

@@ -191,5 +195,6 @@ spec:
 
 Based on the above specification, Flagger creates the primary and canary Kubernetes ClusterIP service.
 
-During the canary analysis, Prometheus will scrape the canary service and Flagger will use the HTTP error rate and latency queries to determine if the release should be promoted or rolled back.
+During the canary analysis, Prometheus will scrape the canary service and Flagger will use the HTTP error rate
+and latency queries to determine if the release should be promoted or rolled back.
 

@@ -6,7 +6,7 @@ This guide shows you how to use the [Skipper ingress controller](https://opensou
 
 ## Prerequisites
 
-Flagger requires a Kubernetes cluster **v1.14** or newer and Skipper ingress **0.11.40** or newer.
+Flagger requires a Kubernetes cluster **v1.16** or newer and Skipper ingress **0.11.40** or newer.
 
 Install Skipper ingress-controller using [upstream definition](https://opensource.zalando.com/skipper/kubernetes/ingress-controller/#install-skipper-as-ingress-controller).
 

@@ -31,7 +31,7 @@ Certain arguments are relevant:
 Install Flagger using kustomize:
 
 ```bash
-kustomize build https://github.com/weaveworks/flagger/kustomize/kubernetes | kubectl apply -f -
+kustomize build https://github.com/fluxcd/flagger/kustomize/kubernetes | kubectl apply -f -
 ```
 
 ## Bootstrap

@@ -47,7 +47,7 @@ kubectl create ns test
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Deploy the load testing service to generate traffic during the canary analysis:

@@ -4,7 +4,7 @@ This guide shows you how to use the [Traefik](https://doc.traefik.io/traefik/) a
 
 ## Prerequisites
 
-Flagger requires a Kubernetes cluster **v1.14** or newer and Traefik **v2.3** or newer.
+Flagger requires a Kubernetes cluster **v1.16** or newer and Traefik **v2.3** or newer.
 
 Install Traefik with Helm v3:
 

@@ -40,7 +40,7 @@ kubectl create ns test
 Create a deployment and a horizontal pod autoscaler:
 
 ```bash
-kubectl apply -k github.com/weaveworks/flagger//kustomize/podinfo
+kubectl apply -k https://github.com/fluxcd/flagger//kustomize/podinfo?ref=main
 ```
 
 Deploy the load testing service to generate traffic during the canary analysis:
